Embedded Motion Control 2019 Group 2



<div style="width:calc(80vw)">

== Group members ==


| Bob Clephas || 1271431
|-
| Tom van de Laar || 1265938
|-
| Job Meijer || 1268155


= Introduction =

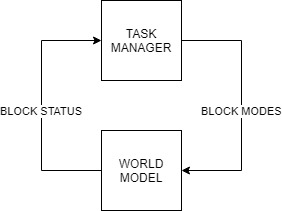

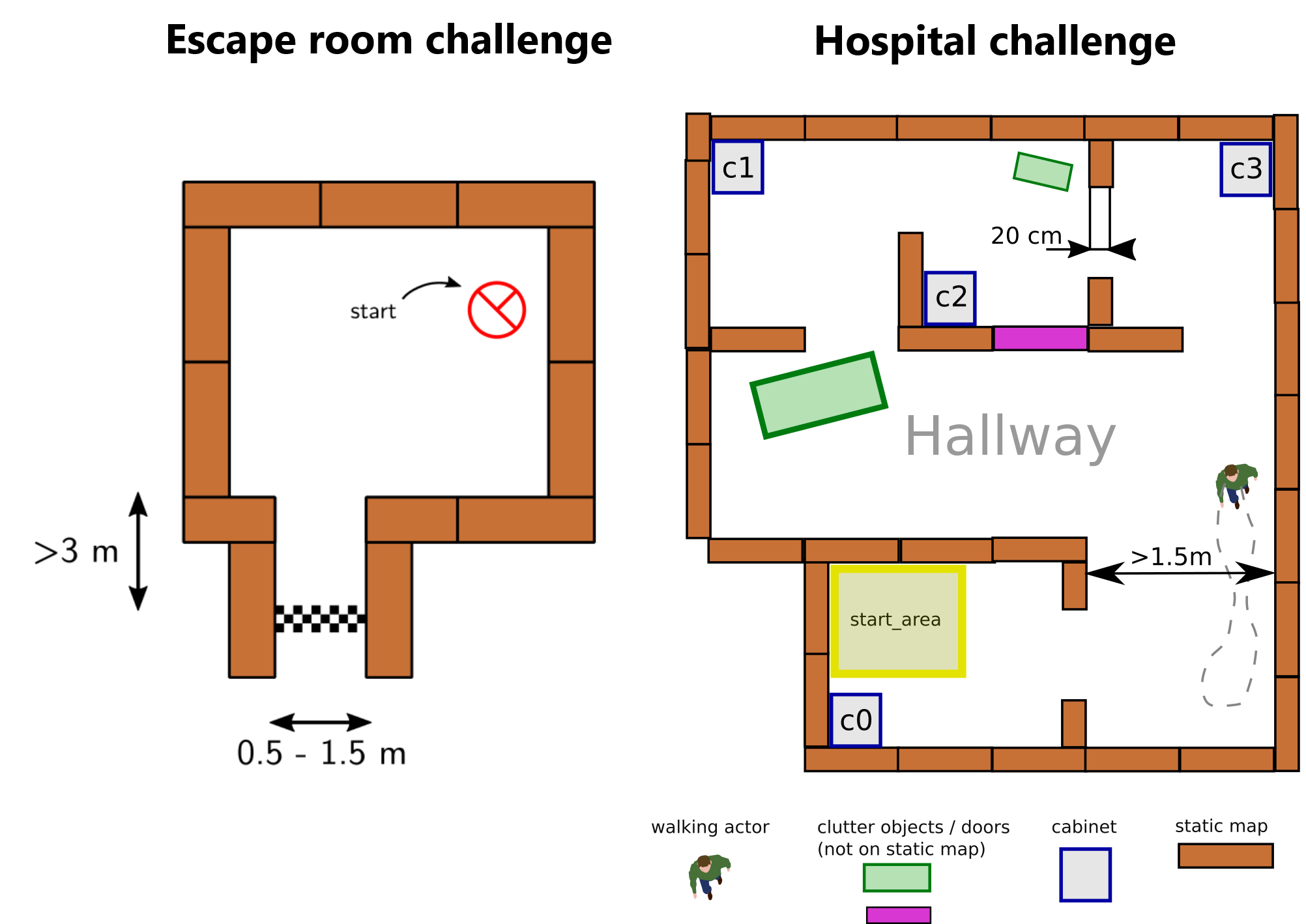

[[File:challenges.png|right|thumb|600px|Figure 1: The two challenges during the course. On the left the escape room challenge where PICO must drive out of the room autonomously. On the right the hospital challenge where PICO must visit multiple cabinets autonomously]]

Welcome to the wiki of group 2 of the 2019 Embedded Motion Control course. During this course the group designed and implemented its own software, which allows a PICO robot to complete two different challenges autonomously. The first challenge is the "Escape room challenge", in which the PICO robot must drive out of a room from a given initial position inside that room. In the second challenge, the "Hospital challenge", the goal is to visit an unknown number of cabinets, placed in different rooms, in a specific order. For both challenges the group designed one generic software structure that can complete either challenge without changing the overall structure of the program. The example maps of both challenges are shown in Figure 1 to give a general idea of the challenges. The full details of both challenges are given on the general wiki page of the 2019 Embedded Motion Control course ([http://cstwiki.wtb.tue.nl/index.php?title=Embedded_Motion_Control_2019#Escape_Room_Competition link]).


=== Escape room challenge ===

The main goal of the challenge was to exit the room as fast as possible, given an arbitrary initial position and orientation of PICO. The robot had to deal with various constraints, such as the distance of the finish line from the door, the width of the hallway, the time limit and the required accuracy.

The PICO robot was first placed in a rectangular room with unknown dimensions and one door/opening towards the corridor. The corridor was perpendicular to the room, with an opening at its far end as well. The initial position and orientation of the PICO robot were completely arbitrary. PICO had to cross the finish line, placed at a distance of at least 3 metres from the door of the corridor. The walls of the room were also not straight, which posed a challenge when mapping the room from the laser data. The algorithms used, their implementation, the program flow and the results of the challenge are discussed in detail in the following sections.

=== Hospital challenge ===

The main goal of this challenge was to visit the cabinets in a particular order, given by the user, as fast as possible. The global map, consisting of the rooms and cabinets, and the starting room of PICO were given beforehand.

The hospital challenge contained multiple rooms with doors that could be open or closed. It tested the ability of PICO to avoid static and dynamic objects and to plan an optimal path dynamically. The static objects included clutter objects and doors, and the dynamic objects included people moving through the rooms while PICO was performing its task; none of these were specified on the given global map. Lastly, PICO had to visit the cabinets in the order specified by the user before the start of the challenge. The following sections explain the algorithms used, show how they are implemented, discuss the program flow and present the results of the challenge.

= Design document =

The group started by creating a design document in order to arrive at a well-thought-out software design. This document describes the starting point of the project: it gives an overview of the given constraints and hardware, and lists the requirements and specifications of the software. The last part of the design document illustrates the overall software architecture that the group intended to build. The design document provided a solid basis on which the remaining part of the project was built. The full document can be found [[Media:4SC020_DesignDocument_Group2.pdf|here]]. Important requirements and specifications are listed in Table 1, and the general software architecture is explained in full detail in the [[#General software architecture and interface | General software architecture and interface]] chapter.

''' Table 1: Requirements and specifications '''


= General software architecture and interface =

<div id="General software architecture and interface"></div>

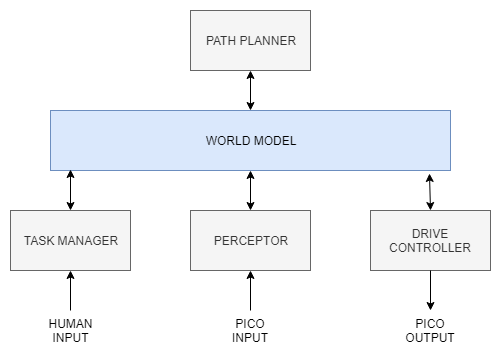

A generic software architecture was implemented, as discussed in the lectures of the course. The software is split into several building blocks: the task manager, world model, perceptor, path planner and drive controller. The data flow between these building blocks is shown in Figure 2. Splitting the software into building blocks keeps it structured and separates the different functionalities of the robot.

<br />

[[File:GeneralOverview.png|center|thumb|600px|Figure 2: Global overview of the software architecture used]]

The [[#Task manager | task manager]] structures the [[#program flow | program flow]] by setting a mode for each software block (block mode). Based on the statuses it receives from the software blocks (block status), it decides which task is performed next. It mainly focuses on the behavior of the program and divides the challenge into a step-by-step sequence of tasks, taking fallback scenarios into account. It manages the high-level tasks, which are discussed in more detail later. The [[#Perceptor | perceptor]] converts the incoming sensor data into information usable by PICO. It creates a local map, fits the local and global maps onto each other and keeps them aligned when required. The [[#Path planner | path planner]] plans an optimal path through nodes, such that PICO can reach the required destination. The [[#Drive controller | drive controller]] drives PICO to the required destination by sending motor setpoints in terms of translational and rotational velocity. The [[#World model | world model]] stores all the information and acts as the medium of communication between the other blocks. Detailed explanations of each block can be found in the [[#Software blocks | software blocks]] section.

'''Block modes & Block Statuses'''

Block statuses and modes are primarily used for communication between the task manager and the other blocks. They also make the segmentation into tasks and phases easy to understand. In each iteration of the software, the perceptor, path planner and drive controller receive a block mode from the task manager, describing the functionality required from that block during that iteration. After executing the task, the software block returns a block status describing the outcome of the task, i.e. whether it was successful or not. The block diagram below shows how block modes and statuses are passed between the task manager and the other software blocks. The exact block modes and statuses are listed below as well.

[[File:blockdiag.jpg]]


The following block modes were defined:

*MODE_EXECUTE - defined for all the blocks, to execute their main functionality
*MODE_INIT - defined for all the blocks, to initialize the block
*MODE_IDLE - defined for all the blocks, to temporarily disable the block
*PP_MODE_ROTATE - defined for the path planner; directs the drive controller to change the orientation of PICO
*PP_MODE_PLAN - defined for the path planner, to plan an optimal path
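The handshake between the task manager and a block can be illustrated with a minimal C++ sketch. It is not the group's code: only the mode names come from the list above, while the status values (`STATUS_BUSY`, `STATUS_DONE`, `STATUS_FAILED`), the `SoftwareBlock` interface, the `ToyPathPlanner` and the fallback rule in `nextPlannerMode` are hypothetical.

```cpp
#include <cassert>

// Block modes as listed in the text.
enum class BlockMode { MODE_INIT, MODE_EXECUTE, MODE_IDLE, PP_MODE_ROTATE, PP_MODE_PLAN };

// Hypothetical block statuses; the group's actual status list may differ.
enum class BlockStatus { STATUS_BUSY, STATUS_DONE, STATUS_FAILED };

// Hypothetical interface: each iteration a block receives a mode from the
// task manager and returns a status describing the outcome.
class SoftwareBlock {
public:
    virtual ~SoftwareBlock() = default;
    virtual BlockStatus run(BlockMode mode) = 0;
};

// Toy path planner that needs two iterations to finish planning.
class ToyPathPlanner : public SoftwareBlock {
public:
    BlockStatus run(BlockMode mode) override {
        switch (mode) {
        case BlockMode::MODE_INIT:
            iterations_ = 0;
            return BlockStatus::STATUS_DONE;
        case BlockMode::PP_MODE_PLAN:
            ++iterations_;
            return iterations_ >= 2 ? BlockStatus::STATUS_DONE : BlockStatus::STATUS_BUSY;
        default:
            return BlockStatus::STATUS_DONE;  // other modes finish immediately in this toy
        }
    }

private:
    int iterations_ = 0;
};

// Hypothetical task manager rule: keep planning while busy, fall back to a
// rotation when planning failed, and idle the planner once it is done.
BlockMode nextPlannerMode(BlockStatus status) {
    switch (status) {
    case BlockStatus::STATUS_BUSY:   return BlockMode::PP_MODE_PLAN;
    case BlockStatus::STATUS_FAILED: return BlockMode::PP_MODE_ROTATE;
    case BlockStatus::STATUS_DONE:   return BlockMode::MODE_IDLE;
    }
    return BlockMode::MODE_IDLE;  // unreachable; silences missing-return warnings
}
```

In such a design the task manager would call `run` on each block once per iteration and feed the returned status into rules like `nextPlannerMode` to choose the mode for the next iteration.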


These statuses and modes are used to execute the appropriate phases and cases discussed in the [[#Overall program flow | overall program flow]] section.

<div id="program flow"></div>

= Overall program flow =

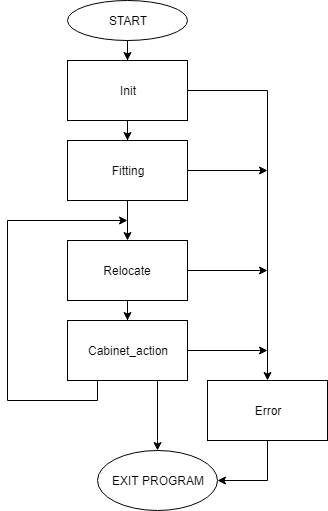

[[File:Flowchart_phases.png|right|thumb|400px|Figure 3: Overall program structure with phases]]

The overall software behavior is divided into five clearly distinguished phases. During each phase, all actions lead to one specific goal; when that goal is reached, a transition is made to the next phase.

The following five phases are identified; they are shown in Figure 3.

''' Initialization phase '''

The software always starts in this phase. During this phase all the inputs and outputs of the robot are initialized and checked. Moreover, all the required variables in the software are set to their correct values. At the end of the initialization phase the software is ready to perform the desired tasks, and it switches to the fitting phase.

''' Fitting phase '''

During the fitting phase PICO tries to determine its initial position relative to the given global map. It determines its location with the help of the laser range finder data, by fitting the environment around the robot to the given map. If the obtained laser data is insufficient for a good, unique fit, the robot starts to rotate. If there is still no unique fit after the rotation, the robot tries to drive to a location inside the room and rotates again at that location. The full details of the fitting algorithm are described in the [[#Perceptor | perceptor]] section of this wiki. As soon as there is a good, unique fit, the location of PICO is known and the software switches to the relocate phase.

''' Relocate phase '''

During the relocate phase the goal is to move the PICO robot to the desired cabinet. To do this, the [[#Path planner | path planner]] calculates a path from the current location to the desired cabinet. The [[#Drive controller | drive controller]] follows this path and avoids obstacles on its way. When the path is found to be blocked, a new path is calculated around the blockage. As soon as the PICO robot has arrived at the desired cabinet, the software switches to the cabinet action phase.

''' Cabinet action phase '''

During the cabinet action phase the PICO robot executes the required actions at the cabinet. These include saying 'I arrived at cabinet #' and taking a snapshot of the current laser data to prove that the robot has arrived at the correct location. After performing the required actions, the software determines whether the PICO robot should visit another cabinet, and if so, it switches back to the relocate phase. If all the cabinets have been visited, the software stops and the challenge is successfully completed.

''' Error phase '''

The error phase differs from the other phases in that ending up in it is never desired. The software only switches to the error phase when something is different than expected. This can happen, for example, when a required file is missing during the initialization phase, or when the fitting phase has gone through all the fallback mechanisms and has still not succeeded in finding a unique fit. In all cases something unforeseen has happened and the software is out of options to recover by itself. The PICO robot is then switched to a safe state, e.g. with all motors disabled, and all information useful for debugging is displayed to the operator. Finally, the software is terminated so that the possible cause can be found and fixed.
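The phase transitions described above can be condensed into a single transition function. The sketch below is a hypothetical illustration, not the group's task manager: three booleans stand in for the block statuses, and the extra `Done` state (not one of the five phases) represents the software stopping after the last cabinet.

```cpp
#include <cassert>

// The five phases from the text, plus a hypothetical terminal Done state
// for when all cabinets have been visited and the software stops.
enum class Phase { Initialization, Fitting, Relocate, CabinetAction, Error, Done };

// ok:           false if something unexpected happened (e.g. a missing file,
//               or all fitting fallbacks exhausted)
// goalReached:  the goal of the current phase has been reached
// cabinetsLeft: more cabinets remain (only used in CabinetAction)
Phase nextPhase(Phase current, bool ok, bool goalReached, bool cabinetsLeft) {
    if (current == Phase::Error || current == Phase::Done) return current;  // terminal
    if (!ok) return Phase::Error;
    if (!goalReached) return current;  // keep working towards the phase goal
    switch (current) {
    case Phase::Initialization: return Phase::Fitting;
    case Phase::Fitting:        return Phase::Relocate;
    case Phase::Relocate:       return Phase::CabinetAction;
    case Phase::CabinetAction:  return cabinetsLeft ? Phase::Relocate : Phase::Done;
    default:                    return Phase::Error;
    }
}
```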

''' Detailed descriptions of the different phases '''

Detailed flowcharts and descriptions were made for all the phases. These flowcharts show all the fallback mechanisms and explain the exact behavior in full detail. While writing the software, the flowcharts were used to create the code and to find bugs. They also helped the group come to a clear agreement on what the software architecture should look like. Finally, they helped the group discuss how to handle unforeseen situations and how a solution found could be fitted into the existing software.


== World model ==

The world model is the block that stores all the data that needs to be transferred between the different blocks. It acts as the medium of communication between the perceptor, task manager, path planner and drive controller, and contains all the get and set functions these blocks use to perform their tasks.

A detailed overview of the input and output data exchanged between the software blocks and the world model is shown in Figure 4. A description of what each data line represents is given in Table 3.

[[File:Worldmodeloverview.png|center|thumb|800px|Figure 4: Detailed overview of software structure with data flow between the blocks]]


! scope="col" | '''Description'''
|-
| LRF Sensor input || Laser range finder data is used to create local map objects such as walls and to detect corners
|-
| ODO Sensor input || Odometry data gives the current position and orientation of PICO
|-
| Global map || This is the given map with the positions of the cabinets and rooms specified
|-
| Close proximity region || This is the region defined around PICO in order to avoid obstacles

|}

== Task manager ==

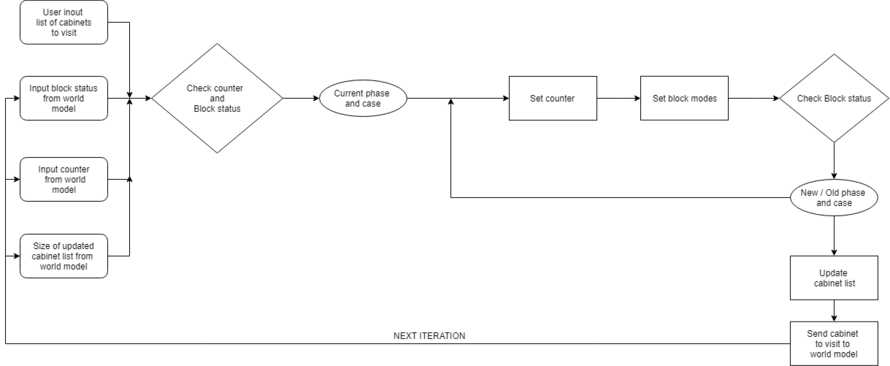

[[File:Escaperoomtask.png|right|thumb|800px|Figure 5: Task manager state machine for the escape room challenge]]

[[File:Escaperoomtask.png|right|thumb|800px|Figure | |||

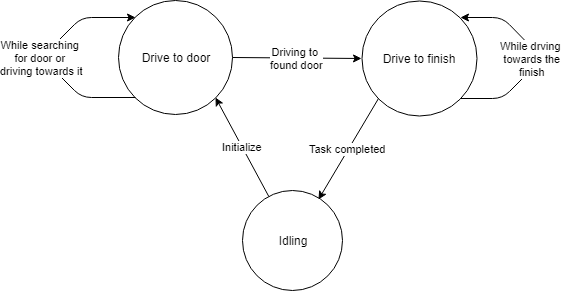

The task manager functions as a finite state machine that switches between different tasks/states. It focuses mainly on the behavior of the whole program rather than on the execution. Based on the current operation mode, the current block statuses and counters, it determines the next operation mode and sets the corresponding block modes of the perceptor, path planner and drive controller for the next task execution. It communicates with the other blocks via the world model.

Since the "Escape room challenge" and the "Hospital challenge" require a completely different cooperation between the blocks, the task manager was rewritten for the "Hospital challenge" with a different program structure, while keeping the same framework.

'''ESCAPE ROOM CHALLENGE''' <br>

In this challenge, the task manager initially sets the modes of the different blocks such that PICO finds the door and drives to it. Based on the received block statuses, it either stays in the same state or switches to driving to the exit. If PICO is driving to a possible door or is searching for a door, the task manager stays in the state of driving to the door. Once PICO has driven to the found door, the task manager sends a command to drive PICO to the finish. If PICO is driving to a possible exit or is searching for an exit, the task manager keeps commanding PICO to drive to the finish. Lastly, when PICO crosses the finish line, the task manager sets the mode of all the blocks to idle. The functions of the task manager for the escape room challenge are described in the state machine diagram in Figure 5.
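The escape room behavior described above amounts to a three-state machine. In the hypothetical sketch below, the status names are invented to mirror the cases in the text and are not the group's actual block statuses; the `DoorReached` status is an assumed stand-in for PICO having driven to the found door.

```cpp
#include <cassert>

enum class EscapeState { DriveToDoor, DriveToFinish, Finished };

// Hypothetical statuses mirroring the cases described in the text.
enum class EscapeStatus {
    SearchingDoor, DrivingToPossibleDoor, DoorReached,
    SearchingExit, DrivingToPossibleExit, FinishCrossed
};

EscapeState nextEscapeState(EscapeState state, EscapeStatus status) {
    switch (state) {
    case EscapeState::DriveToDoor:
        // Stay while searching for or driving to a possible door.
        return status == EscapeStatus::DoorReached ? EscapeState::DriveToFinish
                                                   : EscapeState::DriveToDoor;
    case EscapeState::DriveToFinish:
        // Stay while searching for or driving to a possible exit.
        return status == EscapeStatus::FinishCrossed ? EscapeState::Finished
                                                     : EscapeState::DriveToFinish;
    case EscapeState::Finished:
        break;
    }
    return EscapeState::Finished;  // all block modes are set to idle here
}
```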

'''HOSPITAL ROOM CHALLENGE''' <br>

In this challenge, the task manager keeps the same framework with a different program structure. It functions as a state machine and handles the behavior of the program in a well-structured manner, taking into account various fallback scenarios that can be edited whenever needed. The list of cabinets to visit is first read by the task manager and sent to the world model. The task manager primarily sets the appropriate block modes and counter variables, and switches from one phase and case of the program to another to perform the required task, based on the block statuses it receives. The various phases and cases are discussed further in the [[#Overall program flow | overall program flow]] section. The functions of the task manager for the hospital challenge are described in the flowchart in Figure 6.

The function description can be found here: [[Media:Task_manager_document.pdf|Task manager description]]

[[File:Taskhospital.png|center|thumb|800px|Figure 6: Task manager general state machine for the Hospital challenge]]


== Visualization ==

To make debugging of the program faster and more effective, a visualization class was created. This class draws an empty canvas of a given size, to which the defined objects can be added with one line of code. Each loop iteration the canvas is cleared and can be filled again with updated or new objects. The visualization class is deliberately excluded from the software architecture, because it has no real functionality other than debugging. Any software block can use the visualization to quickly start debugging; however, it is mainly used in the main function, to keep the program structured.

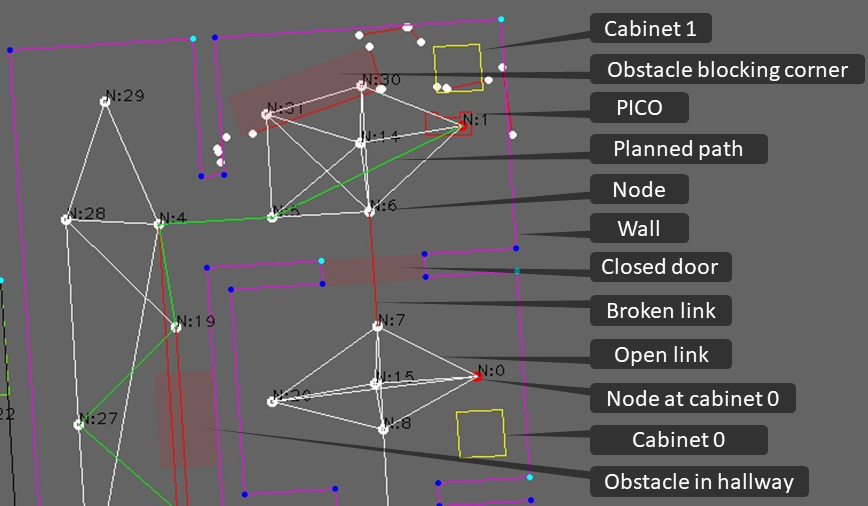

The visualization uses the OpenCV library and a few of its basic functionalities, such as imshow, newcanvas, Point2d, circle, line and putText. The struct "object", which is further explained in [[Media:Perceptor_used_data_structures.pdf|Perceptor data structures]] and defined in the [[#code snippets | code snippet]] "class definition", can be added to the visualization. For example, the visualization shows whether points are connected (blue) or not (white). Moreover, dark blue points indicate concave corners and light blue points indicate convex corners with respect to the robot. The maps defined in the world model can also be visualized. The map struct contains a vector of objects, such as walls, cabinets, nodes, doors and the path. If needed, the object struct can easily be extended to include new objects, which can then be visualized in the same manner.

Figure 7 shows example objects being visualized. The global map is created by the perceptor from the JSON file; its walls are visualized with purple lines. The walls of the room in which PICO starts are drawn as black lines. The laser range finder data can be visualized as red dots. The close proximity region is visualized using red and green dots: a green dot indicates a direction that contains no objects, and a red dot indicates that an object is within the defined close proximity region. Furthermore, arrows are used to visualize how close the object is to the robot. This region is used by the drive controller for the potential field algorithm. The current scan is created from the LRF data: the data points from the LRF are converted into lines, which are visualized as red lines. The local map is stored in the world model and contains multiple current scan lines merged over time. The local map is visualized as green lines, with corner points colored according to their properties, such as convex, concave or not connected. Once a path is planned by the path planner block, it can be added to the global map, so the planned path is visualized together with the global map. The node set as the current destination of PICO is visualized in dark blue. The final destination of a path, in this case always a cabinet node, is visualized in red. If needed, the nodes and the links between nodes can be visualized as well (normally in white). Often only the nodes of the planned path are visualized, to prevent a chaotic canvas. If two types of objects are drawn in the same color, the color of one of them can easily be changed.

[[File:Visualisation.gif|frame|border|center|600px|Figure 7: The simulation objects]]
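Before any object can be drawn on the canvas, its coordinates in metres have to be mapped to pixels. The sketch below shows one way to do this; the canvas layout (world origin at the canvas centre, y pointing up), the fixed scale in pixels per metre and the function names are assumptions, not details of the group's visualization class.

```cpp
#include <cassert>
#include <cmath>

// Pixel position on the canvas: u = column (right), v = row (down).
struct Pixel { int u; int v; };

// Maps a point (x, y) in metres to canvas pixels, with the world origin at the
// canvas centre, x pointing right, y pointing up and a fixed scale.
Pixel worldToCanvas(double x, double y, int width, int height, double pxPerMetre) {
    assert(pxPerMetre > 0.0);
    const long u = std::lround(width / 2.0 + x * pxPerMetre);
    const long v = std::lround(height / 2.0 - y * pxPerMetre);  // image rows grow downwards
    return {static_cast<int>(u), static_cast<int>(v)};
}

// True if the pixel lies on the canvas, so it is worth drawing.
bool onCanvas(const Pixel& p, int width, int height) {
    return p.u >= 0 && p.u < width && p.v >= 0 && p.v < height;
}
```

The resulting pixel can then be passed to OpenCV drawing functions such as `cv::circle` or `cv::line` as `cv::Point(p.u, p.v)`.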

== Perceptor ==

| Line 306: | Line 226: | ||

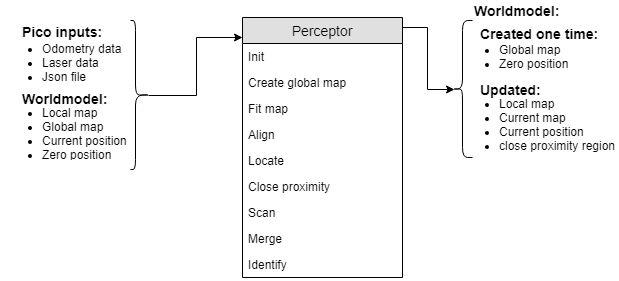

The perceptor receives all the incoming data from the PICO robot and converts it into useful data for the world model. The incoming data consists of odometry data obtained from the wheel encoders of the PICO robot and laser data obtained from the laser scanner. In addition, the JSON file containing the global map and the locations of the cabinets, which is provided a week before the hospital challenge, is read and processed. The output of the perceptor to the world model consists of the global map, a local map, a current map, the current and zero positions, and a close proximity region. The incoming data is handled within the perceptor by the functions described below.

The inputs, functions and outputs of the perceptor are shown in the following figure:

[[File:Perceptor.png]]


The data structures used in the perceptor can be viewed in the following document: [[Media:Perceptor_used_data_structures.pdf|Perceptor data structures]].

[[File:Fitting 2.gif|right|frame|550px|Figure 8: Visualization of the local map fitted onto the global map (fitting)]]

[[File:LineFitAlgorithm.gif|right|frame|550px|Figure 9: Visualization of the line fit algorithm]]

Each function of the perceptor is described in more detail below.


''' Init '''<br>

The PICO robot keeps track of the absolute distance driven since its startup. Therefore, when the software starts, it needs to reinitialize the current position of the robot. When the robot receives its first odometry dataset, it saves this position and sets it as the zero position in the world model. This zero position can later be subtracted from new odometry data to obtain the position of PICO with respect to the position where it was initialized. The initialization of the odometry data happens only once, when the software is started.

''' Create global map '''<br>

A JSON file containing the information of the global map is read by the perceptor once, when the program is started, and the information is directly transformed into the data structure used by the program. The global map contains all the walls of the hospital challenge, as well as the nodes and the links between the nodes. Furthermore, the starting area of the robot is defined on the given map; this area is needed to make the fit of the global map onto the local map more accurate and faster.

''' Close proximity '''<br>

Dynamic objects are not captured by the local map. To prevent collisions between the robot and other objects (dynamic or static), a close proximity region is determined. This region is a circle with a configurable radius around the robot. The function writes a vector with the measured distances of a filtered set of laser range finder data points to the world model, from which the drive controller can request it when needed.
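A possible way to compute such a close proximity vector from a laser scan is sketched below. The scan layout (ranges at equally spaced angles), the stride used as filter, the treatment of non-finite or non-positive readings as free space and all names are assumptions. Each sample is marked as blocked when an object lies inside the radius, which corresponds to the red and green dots of the visualization.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// One filtered laser direction in the close proximity region.
struct ProximitySample {
    double angle;     // [rad] direction relative to the robot
    double distance;  // [m] measured range, clamped to the region radius
    bool blocked;     // true if an object lies inside the region
};

// Builds the close proximity vector from a laser scan. ranges[i] is measured
// at angleMin + i * angleIncrement; only every `stride`-th beam is kept.
std::vector<ProximitySample> closeProximity(const std::vector<double>& ranges,
                                            double angleMin, double angleIncrement,
                                            double radius, std::size_t stride) {
    assert(stride >= 1 && radius > 0.0);
    std::vector<ProximitySample> region;
    for (std::size_t i = 0; i < ranges.size(); i += stride) {
        const double angle = angleMin + static_cast<double>(i) * angleIncrement;
        const double r = ranges[i];
        // Non-finite or non-positive readings are treated as "no return" (free).
        const bool valid = std::isfinite(r) && r > 0.0;
        const bool blocked = valid && r < radius;
        region.push_back({angle, blocked ? r : radius, blocked});
    }
    return region;
}
```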

''' Locate '''<br>

This function uses the zero frame determined by the init function. Once the odometry data is read, a transformation is used to determine the position of the robot with respect to the initialized position. Other functions need the position of the robot to navigate through the maps; therefore, this current position is written to the world model.
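Subtracting the zero position gives the displacement in the odometry frame; to express the pose in the frame in which PICO was initialized, the difference also has to be rotated by the initial heading theta0, i.e. `p_rel = R(-theta0) * (p - p0)` and `theta_rel = theta - theta0`. The sketch below assumes that this is the transformation used by the locate function; the `Pose` struct and function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

struct Pose { double x; double y; double theta; };  // [m], [m], [rad]

// Wraps an angle to (-pi, pi].
double wrapAngle(double a) {
    const double pi = std::acos(-1.0);
    while (a > pi) a -= 2.0 * pi;
    while (a <= -pi) a += 2.0 * pi;
    return a;
}

// Expresses the raw odometry pose in the frame of the zero pose saved at
// startup: p_rel = R(-theta0) * (p - p0), theta_rel = theta - theta0.
Pose locate(const Pose& zero, const Pose& odom) {
    const double dx = odom.x - zero.x;
    const double dy = odom.y - zero.y;
    const double c = std::cos(zero.theta);
    const double s = std::sin(zero.theta);
    return {c * dx + s * dy, -s * dx + c * dy, wrapAngle(odom.theta - zero.theta)};
}
```

For a zero pose of (1, 1, pi/2) and odometry (1, 2, pi/2), `locate` returns approximately (1, 0, 0): PICO has driven 1 m straight ahead in its initial frame.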

''' Fit map '''<br>

For the PICO robot to know where it is located on the given global map, a fit function was created. This fit function is used when the perceptor receives the 'PC_MODE_FIT' command from the task manager, which only happens at the beginning of the program, as described in the task manager section. The function picks one object (with two points, i.e. a line) from the global map and one from the local map. It then rotates the global map such that the two lines are parallel (this is done twice for each pair of objects) and translates the global map such that the midpoints of the two lines coincide. Next, the number of lines and points that lie 'on top' of each other is determined. Two lines are on top of each other when their angle difference is smaller than a defined bound and the distance between their midpoints is smaller than a defined bound. To make the function more robust, point properties such as convex and concave are also taken into account and must match. A fit score is calculated from the combined angle and distance errors of the matched walls. If static objects are placed inside the initial room, only parts of the walls can be observed in the local map. Therefore, the shorter walls of the local map are extended to the length of the global map walls, so that they can be tested against them. Furthermore, the fit score is only based on walls that are matched; together with the extension of the shorter local walls, this makes the fit robust to static objects in the initial room. When the number of matching walls is greater than or equal to that of the previously saved iteration, the transformation, the number of matching walls and the fit score are saved. The global map is then transformed back to its original pose and another pair of objects is tested.
Once all the combinations of objects from the local map and the global map have been tested, the best transformation is used to transform the global map. This transformation is only applied if the global map matches the local map correctly. The fitting of the map can be seen in Figure 8. Initially the robot cannot match enough walls with a good fit score, so it starts rotating. Once enough walls have been added to the local map to match the global map, the transformation is saved. Lastly, this transformation is executed and the global map is transformed onto the local map, even with the two static objects in the initial room.
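The core of the fit search can be sketched as follows. This is a simplified reconstruction under stated assumptions, not the group's implementation: walls are plain segments, the convex/concave check and the extension of shorter local walls are left out, each global wall is matched at most once, and the error simply adds the angle error (rad) and the midpoint distance (m). It is also assumed that the two attempts per pair correspond to the two opposite parallel orientations. All names are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

struct Vec2 { double x; double y; };
struct Line { Vec2 a; Vec2 b; };
struct Transform { double phi; Vec2 t; };  // rotate by phi about the origin, then translate by t

double lineAngle(const Line& l) { return std::atan2(l.b.y - l.a.y, l.b.x - l.a.x); }
Vec2 midpoint(const Line& l) { return {(l.a.x + l.b.x) / 2.0, (l.a.y + l.b.y) / 2.0}; }

// Smallest angle between two undirected lines, in [0, pi/2].
double undirectedAngleDiff(double a, double b) {
    const double pi = std::acos(-1.0);
    const double d = std::fmod(std::fabs(a - b), pi);
    return std::min(d, pi - d);
}

Vec2 transformPoint(const Transform& T, const Vec2& p) {
    const double c = std::cos(T.phi), s = std::sin(T.phi);
    return {c * p.x - s * p.y + T.t.x, s * p.x + c * p.y + T.t.y};
}
Line transformLine(const Transform& T, const Line& l) {
    return {transformPoint(T, l.a), transformPoint(T, l.b)};
}

// Rotates global line g parallel to local line l (flipped by pi if `flip`),
// then translates it so that both midpoints coincide.
Transform candidate(const Line& g, const Line& l, bool flip) {
    const double pi = std::acos(-1.0);
    Transform T{lineAngle(l) - lineAngle(g) + (flip ? pi : 0.0), {0.0, 0.0}};
    const Vec2 mg = transformPoint(T, midpoint(g));
    const Vec2 ml = midpoint(l);
    T.t = {ml.x - mg.x, ml.y - mg.y};
    return T;
}

struct FitResult { Transform T; int matches; double error; };

// Tries every (global, local) pair in both orientations and keeps the transform
// with the most matched walls, breaking ties by the lower summed error.
FitResult fitMap(const std::vector<Line>& global, const std::vector<Line>& local,
                 double maxAngle, double maxDist) {
    FitResult best{{0.0, {0.0, 0.0}}, -1, 0.0};
    for (const Line& g : global)
        for (const Line& l : local)
            for (int k = 0; k < 2; ++k) {
                const Transform T = candidate(g, l, k == 1);
                int matches = 0;
                double error = 0.0;
                for (const Line& gg : global) {
                    const Line tg = transformLine(T, gg);
                    for (const Line& ll : local) {
                        const double da = undirectedAngleDiff(lineAngle(tg), lineAngle(ll));
                        const Vec2 m1 = midpoint(tg), m2 = midpoint(ll);
                        const double dm = std::hypot(m1.x - m2.x, m1.y - m2.y);
                        if (da < maxAngle && dm < maxDist) {
                            ++matches;
                            error += da + dm;
                            break;  // match each global wall at most once
                        }
                    }
                }
                if (matches > best.matches || (matches == best.matches && error < best.error))
                    best = {T, matches, error};
            }
    return best;
}
```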

''' Align '''<br>

Once the global map is fitted onto the local map, the align function keeps the two maps on top of each other. It does so by determining the average angle difference and the average offset between the current scan map and the global map. To do this, it first checks which lines of the current scan map are 'on top' of lines of the global map. Here, 'on top' is defined slightly differently than in the fit function: two lines should be roughly parallel, their parallel distance should be small and there should be no gap between them. The exact definitions of these terms are explained in Figure 10. When two lines are on top of each other, their angle error and parallel distance are added to the total angle error and total distance error. After all the objects have been checked against each other, these total errors are divided by the number of matching walls and used to rotate and translate the global map to match the current scan.
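The align step can be sketched in the same way. In this hypothetical version the 'no gap' condition is simplified to the midpoint of the scan line projecting onto the global wall, and the offset of each matching pair is turned into a translation along the normal of the global wall; all names and thresholds are assumptions.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct P2 { double x; double y; };
struct Seg { P2 a; P2 b; };

struct AlignCorrection {
    double dTheta;  // [rad] average rotation to apply to the global map
    P2 shift;       // [m] average translation to apply to the global map
    int matches;    // number of (scan, global) wall pairs that are "on top"
};

AlignCorrection alignMaps(const std::vector<Seg>& scan, const std::vector<Seg>& global,
                          double maxAngle, double maxOffset) {
    const double pi = std::acos(-1.0);
    AlignCorrection c{0.0, {0.0, 0.0}, 0};
    for (const Seg& s : scan) {
        const double as = std::atan2(s.b.y - s.a.y, s.b.x - s.a.x);
        const P2 ms{(s.a.x + s.b.x) / 2.0, (s.a.y + s.b.y) / 2.0};
        for (const Seg& g : global) {
            const double dx = g.b.x - g.a.x, dy = g.b.y - g.a.y;
            const double len = std::hypot(dx, dy);
            if (len == 0.0) continue;
            const P2 u{dx / len, dy / len};  // unit direction of the global wall
            const P2 n{-u.y, u.x};           // unit normal of the global wall
            // Signed angle between the undirected lines, in [-pi/2, pi/2].
            const double dTheta = std::remainder(as - std::atan2(dy, dx), pi);
            // Scan midpoint expressed along and across the global wall.
            const double along = (ms.x - g.a.x) * u.x + (ms.y - g.a.y) * u.y;
            const double offset = (ms.x - g.a.x) * n.x + (ms.y - g.a.y) * n.y;
            const bool parallel = std::fabs(dTheta) < maxAngle;
            const bool isClose = std::fabs(offset) < maxOffset;
            const bool noGap = along >= 0.0 && along <= len;  // simplified "no gap" test
            if (parallel && isClose && noGap) {
                c.dTheta += dTheta;
                c.shift.x += offset * n.x;  // moves the global wall onto the scan line
                c.shift.y += offset * n.y;
                ++c.matches;
            }
        }
    }
    if (c.matches > 0) {  // total errors divided by the number of matching walls
        c.dTheta /= c.matches;
        c.shift.x /= c.matches;
        c.shift.y /= c.matches;
    }
    return c;
}
```

Dividing by the number of matching walls follows the description above. When the matches include walls of different orientations, each wall only contributes along its own normal, so the averaged shift corrects only part of the offset per call; since align runs every iteration, the remaining offset is reduced in the following iterations.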

''' Scan '''<br>

To turn the laser rangefinder data into usable information, a scan function is created. Since this data is in polar coordinates, it is first transformed to Cartesian coordinates. These transformed datapoints are then used in a fit algorithm, whose aim is to create wall objects from the datapoints. The first step of the fit algorithm is to create a new wall object with the first and second datapoints as its corner points. Second, a fit error is determined by measuring the projection error of all datapoints between the corner points of the wall object, which in the first case are none. The fit error is split into a maximum fit error and an average fit error; for this explanation only the maximum fit error is used. Third, this fit error is compared with a threshold value. If the maximum fit error does not exceed the threshold, the second corner of the wall object is moved to the next datapoint. When the maximum fit error exceeds the threshold value, the wall object is added to the current map, provided it is fitted through enough datapoints. The minimum number of datapoints needed can be configured. Before the wall object is added, its last corner point is set to the previously evaluated datapoint. If there are unchecked datapoints left, a new wall object is created. Depending on the distance between the previous and the currently evaluated datapoint, the new wall object starts from the previous or from the currently evaluated datapoint. In Figure 9 the algorithm is explained graphically and a code snippet of this algorithm can be found in [[#code snippets |code snippets]].
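The scan steps above can be sketched as follows, assuming the laser data is given as a list of ranges with a start angle and a constant angle increment. This is an illustrative reimplementation under those assumptions, not the snippet from the code snippets section: the names `toCartesian`, `fitWalls`, `maxError`, `minPoints` and `maxJump` are hypothetical, and only the maximum fit error is used, as in the explanation.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct Point { double x, y; };
// Hypothetical wall object: two corner points and the number of datapoints it was fitted through.
struct Wall { Point a, b; int nPoints; };

// Converts laser ranges (polar, robot frame) to Cartesian points.
std::vector<Point> toCartesian(const std::vector<double>& ranges,
                               double angleMin, double angleIncrement) {
    std::vector<Point> pts;
    pts.reserve(ranges.size());
    for (std::size_t i = 0; i < ranges.size(); ++i) {
        const double angle = angleMin + static_cast<double>(i) * angleIncrement;
        pts.push_back({ranges[i] * std::cos(angle), ranges[i] * std::sin(angle)});
    }
    return pts;
}

// Perpendicular (projection) distance from p to the line through a and b.
double projectionError(const Point& p, const Point& a, const Point& b) {
    const double dx = b.x - a.x, dy = b.y - a.y;
    const double len = std::hypot(dx, dy);
    if (len == 0.0) return std::hypot(p.x - a.x, p.y - a.y);
    return std::fabs(dx * (p.y - a.y) - dy * (p.x - a.x)) / len;
}

// Maximum projection error of the points strictly between pts[first] and pts[last].
double maxFitError(const std::vector<Point>& pts, std::size_t first, std::size_t last) {
    double worst = 0.0;
    for (std::size_t k = first + 1; k < last; ++k)
        worst = std::max(worst, projectionError(pts[k], pts[first], pts[last]));
    return worst;
}

// Grows a wall from pts[start] one datapoint at a time while the maximum fit error
// stays within maxError. Walls fitted through fewer than minPoints datapoints are
// dropped. The next wall starts at the last fitted point, or at the next point if
// the gap between the two is larger than maxJump.
std::vector<Wall> fitWalls(const std::vector<Point>& pts, double maxError,
                           int minPoints, double maxJump) {
    std::vector<Wall> walls;
    std::size_t start = 0;
    while (start + 1 < pts.size()) {
        std::size_t end = start + 1;  // first and second datapoint as corner points
        while (end + 1 < pts.size() && maxFitError(pts, start, end + 1) <= maxError) ++end;
        const int n = static_cast<int>(end - start + 1);
        if (n >= minPoints) walls.push_back({pts[start], pts[end], n});
        if (end + 1 >= pts.size()) break;  // no unchecked datapoints left
        const double jump = std::hypot(pts[end + 1].x - pts[end].x, pts[end + 1].y - pts[end].y);
        start = (jump > maxJump) ? end + 1 : end;
    }
    return walls;
}
```

For an L-shaped set of points the sketch returns two walls that share the corner point, because the gap between the last point of the first wall and the next point is small.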


''' Merge '''<br>

In this function the new laser data is merged with an existing map to form a more robust and complete map of the environment. To do so, laser data in the form of the current map created by the scan function is imported. The previously created output of the merge function, called the local map, is imported as well. First, the previously created map is transformed to the current position of the robot. Second, similar walls are merged. Walls are considered similar if they are parallel to each other, have a small difference in angle or are split into two pieces. If two walls are close to each other but one is shorter, they are merged as well. The merge settings are stored in the configuration file. The different merge cases can be seen in Figure 10. Once similar walls are merged, the endpoints of walls are connected to form the corner points of the room. Each endpoint of a wall has a given radius, and if another endpoint lies within this radius, the two points are connected. To improve the robustness of the local map, the location of the connected corner point is based on the relative weight of the two walls, where the weight of a wall is the number of datapoints it is fitted through. Finally, wall objects that are not connected at the end of the function are removed. Therefore, the local map only consists of walls that are connected to each other.
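The corner-connection step of the merge function can be sketched as below. The weighted average of two endpoints uses the number of fitted datapoints as the weight, as described above; the `Wall` layout, the `connectCorners` name and the `radius` parameter are hypothetical, and the sketch assumes that a wall is kept as long as at least one of its endpoints is connected. It needs C++14 or later (aggregate with default member initializer).

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Point { double x, y; };

// Hypothetical wall layout used only for this sketch.
struct Wall {
    Point end[2];                        // the two endpoints of the wall
    int nPoints;                         // datapoints the wall was fitted through (weight, assumed > 0)
    bool connected[2] = {false, false};  // whether each endpoint was joined to another wall
};

// Joins endpoints of different walls that lie within `radius` of each other.
// The shared corner is the weighted average of both endpoints, so the wall fitted
// through more datapoints pulls the corner towards itself. Walls without any
// connected endpoint are removed afterwards.
void connectCorners(std::vector<Wall>& walls, double radius) {
    for (std::size_t i = 0; i < walls.size(); ++i) {
        for (std::size_t j = i + 1; j < walls.size(); ++j) {
            for (int ei = 0; ei < 2; ++ei) {
                for (int ej = 0; ej < 2; ++ej) {
                    Point& p = walls[i].end[ei];
                    Point& q = walls[j].end[ej];
                    if (std::hypot(p.x - q.x, p.y - q.y) > radius) continue;
                    const double wi = walls[i].nPoints, wj = walls[j].nPoints;
                    const Point corner{(wi * p.x + wj * q.x) / (wi + wj),
                                       (wi * p.y + wj * q.y) / (wi + wj)};
                    p = corner;
                    q = corner;
                    walls[i].connected[ei] = true;
                    walls[j].connected[ej] = true;
                }
            }
        }
    }
    std::vector<Wall> kept;
    for (const Wall& w : walls)
        if (w.connected[0] || w.connected[1]) kept.push_back(w);
    walls.swap(kept);
}
```

Weighting by datapoints means a long, well-observed wall barely moves while a short wall fitted through a few points snaps onto it.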


''' Identify '''<br>

This function identifies the properties of the points in the local map. For instance, corner points can be convex or concave. This property is later used to help identify doors and to improve the fit function. Whether a corner point is convex or concave depends on the position of the robot. With this property information, the map is scanned for doors. For the escape room challenge, a door is identified as two convex points close to each other. A door is defined between two walls, and these walls should be approximately in line with each other. To further increase robustness, the two corner points cannot belong to the same wall. It is unlikely that the local map immediately contains two convex points that can form a door. Therefore, a possible door is defined, so the robot can drive to that location and check whether there is a real door at this position. A possible door can be formed in multiple scenarios, such as one convex point and one loose end, two loose ends, or a loose end facing a wall. Concave points can never form a door and are therefore excluded. When forming a possible door, the length of the door and the orientation of the walls are important as well.
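One way to implement the convex/concave test is with 2D cross products, as sketched below. This is an assumption about how the classification could be done, not the group's implementation: a corner is treated as concave when the robot lies inside the (less than 180 degree) wedge spanned by the two walls, and as convex otherwise. `classifyCorner`, `Corner` and `formsDoor` are hypothetical names, and the door check only covers the basic case of two convex corners from different walls within a width range; the collinearity check and the possible-door cases are left out.

```cpp
#include <cmath>

struct Point { double x, y; };

enum class CornerType { Convex, Concave };

// z-component of the 2D cross product a x b.
double cross(const Point& a, const Point& b) { return a.x * b.y - a.y * b.x; }

// Classifies corner c where two walls meet, as seen from the robot at r.
// a and b are the far endpoints of the two walls. If the robot lies inside the
// wedge spanned by the walls it looks into the corner (concave, e.g. a room
// corner); otherwise the corner points towards the robot (convex, e.g. a door post).
CornerType classifyCorner(const Point& c, const Point& a, const Point& b, const Point& r) {
    const Point u{a.x - c.x, a.y - c.y};
    const Point v{b.x - c.x, b.y - c.y};
    const Point w{r.x - c.x, r.y - c.y};
    const double s = cross(u, v);
    const bool inside = cross(u, w) * s > 0.0 && cross(w, v) * s > 0.0;
    return inside ? CornerType::Concave : CornerType::Convex;
}

// Hypothetical corner record: position, index of the wall it belongs to, and its type.
struct Corner { Point p; int wall; CornerType type; };

// Basic door test: two convex corners from different walls, a door width apart.
bool formsDoor(const Corner& c1, const Corner& c2, double minWidth, double maxWidth) {
    if (c1.type != CornerType::Convex || c2.type != CornerType::Convex) return false;
    if (c1.wall == c2.wall) return false;
    const double width = std::hypot(c1.p.x - c2.p.x, c1.p.y - c2.p.y);
    return width >= minWidth && width <= maxWidth;
}
```

Because the test uses the robot position, the same corner can change type when the robot moves to the other side of a wall, which is consistent with the dependence on robot position described above.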


[[File:Lines on top.gif|center|frame|550px|Figure 10: Line matching]]

== Path planner ==

<div id="Path planner"></div>

[[File:Link broken2.gif|right|frame|550px|Figure 11: Visualization of an obstacle creating broken links]]

For path planning, nodes are placed at the following locations: <br>


- Distributed over each room in order to plan around objects, e.g. in the middle <br>

Gridding the map was also considered, but was not chosen because of its higher complexity; separate nodes also make debugging easier. The path planner determines the path for the PICO robot based on the list of available nodes and the links between these nodes. For planning the optimal path, [https://en.wikipedia.org/wiki/Dijkstra%27s_algorithm Dijkstra's] algorithm is used with the distance between the nodes as cost. Dijkstra was chosen because an algorithm was needed that plans the shortest route from one node to another, and it is sufficient for this application: the extra complexity of the [https://en.wikipedia.org/wiki/A*_search_algorithm A*] algorithm was not needed. The planned path is a set of positions that the PICO robot drives towards, called the ‘next set of positions’. This next set of positions is saved in the world model and used by the task manager to send destination points to the drive controller. Figure 11 shows how the path planner behaves in case of a closed door: the link is broken and a new path around the closed door is planned.
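A minimal sketch of Dijkstra's algorithm on the node graph, with the Euclidean distance between nodes as cost and support for breaking and resetting links (for example at a closed door). The `Graph` structure and the `planPath`, `breakLink` and `resetBrokenLinks` names are hypothetical; the sketch requires C++17 for structured bindings.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <functional>
#include <limits>
#include <queue>
#include <set>
#include <utility>
#include <vector>

struct Node { double x, y; };

// Hypothetical node graph: positions, undirected links and the links marked as broken.
struct Graph {
    std::vector<Node> nodes;
    std::vector<std::pair<int, int>> links;
    std::set<std::pair<int, int>> broken;  // stored as (smaller index, larger index)

    void breakLink(int a, int b) { broken.insert({std::min(a, b), std::max(a, b)}); }
    void resetBrokenLinks() { broken.clear(); }
    bool isBroken(int a, int b) const { return broken.count({std::min(a, b), std::max(a, b)}) > 0; }
};

// Dijkstra with the Euclidean node distance as cost; broken links are skipped.
// Returns node indices from start to goal, or an empty vector if no path exists.
std::vector<int> planPath(const Graph& g, int start, int goal) {
    const std::size_t n = g.nodes.size();
    std::vector<std::vector<std::pair<int, double>>> adj(n);
    for (const auto& [a, b] : g.links) {
        if (g.isBroken(a, b)) continue;
        const double cost = std::hypot(g.nodes[a].x - g.nodes[b].x, g.nodes[a].y - g.nodes[b].y);
        adj[a].push_back({b, cost});
        adj[b].push_back({a, cost});
    }
    const double inf = std::numeric_limits<double>::infinity();
    std::vector<double> dist(n, inf);
    std::vector<int> prev(n, -1);
    using Item = std::pair<double, int>;  // (distance from start, node)
    std::priority_queue<Item, std::vector<Item>, std::greater<Item>> queue;
    dist[start] = 0.0;
    queue.push({0.0, start});
    while (!queue.empty()) {
        const auto [d, u] = queue.top();
        queue.pop();
        if (d > dist[u]) continue;  // stale entry, a shorter route was already found
        if (u == goal) break;
        for (const auto& [v, w] : adj[u]) {
            if (dist[u] + w < dist[v]) {
                dist[v] = dist[u] + w;
                prev[v] = u;
                queue.push({dist[v], v});
            }
        }
    }
    if (dist[goal] == inf) return {};
    std::vector<int> path;
    for (int v = goal; v != -1; v = prev[v]) path.push_back(v);
    std::reverse(path.begin(), path.end());
    return path;
}
```

Breaking a link and calling `planPath` again reproduces the closed-door behaviour of Figure 11: the planner falls back to the next-shortest route, and resetting the broken links restores the original graph.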

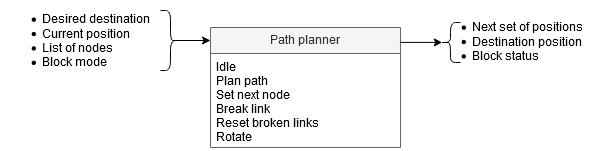

The path planner takes the desired destination, the current position, the list of all available nodes and the path planner block mode as inputs. The functions of the path planner are idling, planning a path, setting the next node of the planned path as the current destination position, breaking a link between nodes, resetting all broken links and rotating the PICO robot. This results in the planned path (next set of positions), the destination position and the path planner block status. The inputs, functions and outputs of the path planner are visualized in the following figure:

[[File:PathPlanner3.png]]


<div id="Drive controller"></div>

The drive controller software block ensures that the PICO robot drives to the desired location. It receives the current and desired location and automatically determines the shortest path towards the desired location. While driving, it also avoids small obstacles on its path. If an object is too large, or the path is blocked by a door for example, the drive controller signals this to the path planner, which then calculates an alternative path.

To avoid objects and ensure the PICO robot does not hit a wall or object, two algorithms were considered. The first algorithm takes a fixed radius around the robot and checks for each laser data point on that radius whether it is blocked or not. Of all free directions, it then selects the one closest to the direction of the actual goal. The second algorithm considered is the potential field algorithm. In this algorithm the distance to objects close to the robot is also taken into account, instead of only whether a direction is blocked or not. This second algorithm is more complex but ensures smoother driving of the PICO robot. In the end, the potential field algorithm was implemented because of this smoother driving behavior.

The potential field algorithm uses two types of forces, which are added together and used to find a free direction. The first is an attractive force towards the desired location. Second, all objects (walls, static and dynamic objects) close to the robot exert a repellent force away from them; the closer the object, the larger the repellent force. All forces are added together and the resulting direction vector is determined. This new direction is used as the desired direction at that time instance. In the gif shown in Figure 12 the repellent forces are visualized, and it can be seen that PICO uses them to drive around an object in its path. The green points show the free directions and the red points the directions towards an object, together with an arrow visualizing the repellent force.
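The force summation can be sketched as below. All positions are assumed to be given in the robot frame (laser points and goal relative to the robot), and the repellent magnitude `(1/d - 1/R) / d^2` is a common repulsive-potential form assumed here, not necessarily the one used in the group's potential field code. `FieldParams`, its default gains and `potentialFieldDirection` are hypothetical.

```cpp
#include <cmath>
#include <vector>

struct Vec2 { double x, y; };

// Hypothetical tuning parameters, not taken from the original software.
struct FieldParams {
    double attractGain = 1.0;      // pull towards the goal
    double repelGain = 0.05;       // push away from nearby obstacles
    double influenceRadius = 0.6;  // obstacles further away than this are ignored [m]
};

// Returns the desired driving direction (radians, robot frame) as the direction of
// the sum of an attractive force towards `goal` and repellent forces from obstacles.
double potentialFieldDirection(const Vec2& goal, const std::vector<Vec2>& obstacles,
                               const FieldParams& p) {
    Vec2 force{0.0, 0.0};
    const double goalDist = std::hypot(goal.x, goal.y);
    if (goalDist > 0.0) {
        // Unit vector towards the goal, scaled by the attractive gain.
        force.x += p.attractGain * goal.x / goalDist;
        force.y += p.attractGain * goal.y / goalDist;
    }
    for (const Vec2& o : obstacles) {
        const double d = std::hypot(o.x, o.y);
        if (d <= 0.0 || d > p.influenceRadius) continue;
        // Grows quickly as the obstacle gets closer and is zero at the influence radius.
        const double mag = p.repelGain * (1.0 / d - 1.0 / p.influenceRadius) / (d * d);
        force.x -= mag * o.x / d;  // points away from the obstacle
        force.y -= mag * o.y / d;
    }
    return std::atan2(force.y, force.x);
}
```

With the goal straight ahead and an obstacle slightly to the left, the resulting direction turns to the right; obstacles beyond the influence radius do not change the direction at all.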

More details of the Drive Controller, including function descriptions and a detailed flowchart, can be found in the Drive Controller functionality description document [http://cstwiki.wtb.tue.nl/images/DriveController_functionality.pdf here]. The actual potential field algorithm can be found in the [[#code snippets | code snippet]] section of this wiki.

[[File:Close_prx.gif|frame|border|center|600px|Figure 12: visualization of the PICO driving around an object with the potential field algorithm.]]

= Challenges =

== Escape Room Challenge ==

[[File:escaperoom3.gif|right|frame|550px|Figure 13: Simulation of the escape room]]

Our strategy for the escape room challenge was to reuse the software structure for the hospital challenge as much as possible. Therefore, the room is scanned from its initial position. From this location the perceptor creates a local map of the room, including convex and concave corner points, doors and possible doors (if it is not fully certain that the door is real). Based on this local map, the task manager gives commands to the drive controller and path planner to position the robot in front of the door. Once the robot is in front of the possible door and it is verified as a real door, the path planner sends the next position to the world model. In this case that is the end of the finish line, which is detected by two loose ends of the walls. The robot is also able to detect objects in front of it so that they can be avoided.


===Simulation and testing===

Multiple possible maps were created and tested. In most cases the robot was able to escape the room. However, in some cases, such as the room in the escape room challenge, the robot could not escape. These cases were analyzed, but there was not enough time to implement solutions for them. Furthermore, the software was only partly tested in the real environment at the time of the escape room challenge. However, each separate function worked, such as driving to destinations, making a local map with walls, doors and corner points, driving through a corridor and avoiding obstacles, and this created a solid basis for the hospital challenge.

===What went well during the escape room challenge:===

The software basis was made robust: it could detect the walls even though a few walls were placed under a small angle and not straight next to each other. Furthermore, graphical feedback in the form of a local map was implemented on the “face” of the PICO robot. The PICO robot even drove to a possible door and later realized that it was not a real door. Most importantly, the software basis was set for the final challenge, and most of the software written and tested for the escape room challenge can be reused for the final challenge.

===Improvements for the escape room challenge:===

Doors can only be detected if they consist of convex corners or two loose ends facing each other. In the challenge the robot was therefore not able to detect a possible door: the loose ends were not facing each other, as can be seen in Figure 13. Furthermore, there was no backup strategy when no doors were found, other than scanning the map again. PICO should have repositioned itself somewhere else in the room, or it could have followed a wall. However, we did not intend to use a wall follower in the hospital challenge, so this did not correspond with our chosen strategy. Another point that can be improved is the creation of walls. Currently, walls can only be detected with a minimum number of laser points. Therefore, in the challenge the robot was not able to detect the small wall next to the corridor straight away. This minimum was chosen to create a robust map, but it also excluded some essential parts of the map.

In the simulation environment the map is recreated, including the roughly placed walls. As expected, PICO did not succeed in finding the exit in this simulation of the escape room, for the reasons explained above.

== Hospital Challenge ==

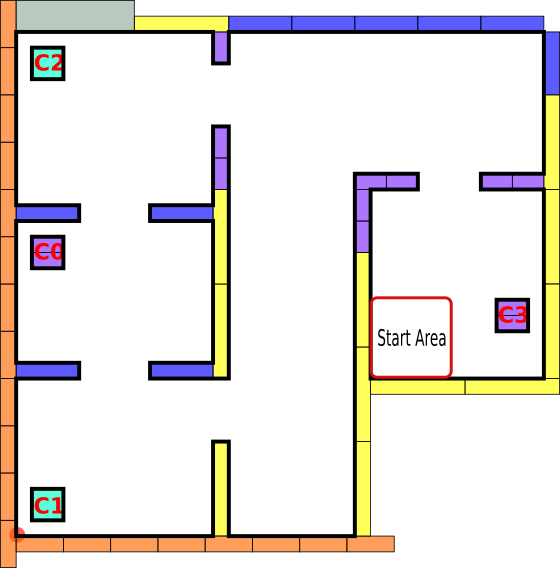

[[File:MapImageWcommentsCut.png|thumb|right|upright=3|Figure 14: Global map during hospital challenge]]

[[File:Finalmap_emc_inkscape 2019.png|thumb|right|500px|upright=3|Figure 15: The given final map]]

During the hospital challenge the PICO robot must visit multiple cabinets in a hospital to pick and place medicine. One week before this challenge a global map of the hospital was given, which contained the coordinates of the cabinets, walls and doors. This map is shown in Figure 15. However, during the actual challenge one door was closed and extra static objects were added to the environment. Finally, one person was walking around in the hospital environment, and the PICO robot had to deal with these differences compared to the map. At the beginning of the challenge the cabinets to visit and their order were announced: C0, C1 and finally C3. During the challenge the door between C0 and C1 was closed, an extra object was added in the room of C1, and another one in the hallway between the rooms.

Out of the nine groups competing in the challenge, only two groups completed it, and we are one of them. We visited all the required cabinets in just under 5 minutes, which placed us second! The video below shows the first part of the challenge; the full video [https://www.youtube.com/watch?v=WREYyta6aR4 Hospital challenge - video group 2 - 2019] can be found on YouTube in high quality. This video also explains what happens and shows how the PICO robot with our software handles those situations.

[[File:HospitalChallengeGif.gif|border|upright=4|center|600px|Hospital challenge video]]

<br>

=== What went well ===

Since we successfully accomplished the challenge, the overall program and approach of the group worked as desired. The software was able to deal with uncertainties such as the walking person and the unknown objects in the hospital. In particular, we are very proud of how the PICO robot dealt with the person walking in the hospital. The PICO robot avoided the person when needed, and the localization was robust in the sense that it still correctly located the PICO robot while the person was walking. We are also proud of how the PICO robot dealt with the closed door and the object in the hallway. It tried to drive through the closed door but quickly realized that it was closed, and immediately planned a new path around it. An unknown object was positioned on that new path, and the PICO robot drove around it as soon as it realized that the path was blocked. Figure 14 shows a snapshot of the map in purple with the planned path in green. The white lines are the possible paths between the nodes and the red lines are the broken links at the location of the closed door and the unknown object. This snapshot was taken during the hospital challenge while the PICO robot was standing in front of cabinet 1. The last thing we are particularly proud of is the localization on the map during the challenge: it was robust against the several unknown objects, it fitted correctly at the beginning of the challenge and it was able to deal with the person walking in the hospital.

=== Improvements for the hospital challenge ===

During the challenge we needed one restart. In the first trial the fitting of the map at the beginning of the challenge was not fully correct, and the software was not able to correct it while driving. In the second trial the fitting was correct and the software made small corrections while driving, so the localization stayed correct during the whole challenge. During the second trial the PICO slightly hit the obstacle in the hallway, which was unexpected since a protection mechanism to avoid running into objects is implemented. Although we extensively tested the protection mechanism, it still happened. We are not fully certain about the exact cause, but we expect that it was a combination of the person walking around and the size of the object. If the software is used in the future, this exact cause should be investigated in new testing sessions. The last improvement is reducing the total time to finish the challenge. The driving speed was lowered during the challenge to improve the accuracy of the localization, because the detected walls and doors were slightly off. If the robustness of the localization is improved, the driving speed can be increased and the finish time will be reduced.

= Code snippets =


== What went well ==

Looking back at the project, several things went well. Firstly, a good structure was set up before we started coding. Once the whole structure was clear to everyone, parts could more easily be divided amongst the team members. Secondly, the data flow (input and output) was defined before coding of a specific part started, which ensured easy coupling of the different parts. Lastly, larger algorithms and challenges were discussed amongst the team members to ensure the algorithms were thought through and worked as specified. The cooperation between the group members was good, and the way the process was structured led to successfully completing the hospital challenge.

== What could be improved ==


== Overall conclusion ==

Although there are some things that could be improved, the overall conclusion is that the project was successful. We completed the hospital challenge, and the overall software is clearly written and easy to improve and adjust. The largest improvements can be made in the robustness of the overall software. In the beginning of the project, more focus could have been put on the escape room challenge. Nevertheless, the created software has a good structure, and when implemented on the PICO robot it is able to finish the hospital challenge.

Latest revision as of 12:10, 21 June 2019

Group members

| Name | Student number |
|---|---|
| Bob Clephas | 1271431 |
| Tom van de Laar | 1265938 |
| Job Meijer | 1268155 |
| Marcel van Wensveen | 1253085 |
| Anish Kumar Govada | 1348701 |

Introduction

Welcome to the wiki of group 2 of the 2019 Embedded Motion Control course. During this course the group designed and implemented their own software which allows a PICO robot to complete two different challenges autonomously. The first challenge is the "Escape room challenge" where the PICO robot must drive out of the room from a given initial position inside the room. In the second challenge called the "Hospital challenge" the goal is to visit an unknown number of cabinets in a specific order, placed in different rooms. For both challenges the group designed one generic software structure that is capable of completing both challenges without changing the complete structure of the program. The example maps of both challenges are shown in Figure 1 to give a general idea about the challenges. The full details of both challenges are given on the general wiki page of the 2019 Embedded Motion Control course (link).

Escape room challenge

The main goal of the challenge was to exit the room as fast as possible, given an arbitrary initial position and orientation of PICO. The robot faced various constraints, such as the distance of the finish line from the door, the width of the hallway, time constraints and accuracy. The PICO robot was first placed in a rectangular room with unknown dimensions and one door/opening towards the corridor. The corridor was perpendicular to the room, with an opening at its far end as well. The initial position and orientation of the PICO robot were completely arbitrary. PICO had to cross the finish line, placed at a distance of at least 3 metres from the door of the corridor. The walls of the room were also not straight, which posed a challenge when mapping the room from the laser data. The algorithms used, their implementation, the program flow and the results of the challenge are discussed in detail in the following sections.

Hospital challenge

The main goal of this challenge was to visit the cabinets in a particular order, given by the user, as fast as possible. The global map, consisting of rooms and cabinets, and the starting room of PICO were given beforehand. The hospital contained multiple rooms with doors that could be open or closed. The challenge tested the ability of PICO to avoid static and dynamic objects and to plan an optimal path dynamically. The static objects included clutter objects and doors, and the dynamic objects included people moving around while PICO was performing its task; these were not specified on the given global map. Lastly, PICO had to visit the cabinets in the order specified by the user before the start of the challenge. In the following sections the algorithms used are explained, their implementation is shown, the program flow is discussed and the results of the challenge are presented.

Design document

The group started by creating a design document to arrive at a well-thought-out design of the software. This document describes the starting point of the project. It gives an overview of the given constraints and hardware, together with the requirements and specifications of the software. The last part of the design document illustrates the overall software architecture the group intended to design. The design document provided a solid basis on which the rest of the project was built. The full document can be found here. Important requirements and specifications are listed in Table 1, and the general software architecture is explained in full detail in the General software architecture and interface section.

Table 1: Requirements and specifications

| Requirements | Specifications |
|---|---|
| Accomplish predefined high-level tasks | 1. Find the exit during the "Escape room challenge"; 2. Reach a predefined cabinet during the "Hospital challenge" |
| Knowledge of the environment | 1. The robot should be able to identify the following objects:; 2. The map is at 2D level; 3. Overall accuracy of < 0.1 m |
| Knowing where the robot is in the environment | 1. Know the location at 2D level; 2. XY with < 0.1 m accuracy; 3. Orientation (alpha) with < 10 degree accuracy |
| Being able to move | 1. Max. 0.5 m/s translational speed; 2. Max. 1.2 rad/s rotational speed; 3. Able to reach the desired position with < 0.1 m accuracy; 4. Able to reach the desired orientation with < 0.1 rad accuracy |
| Avoid obstacles | 1. Never bump into an object; 2. Be able to drive around an object when it is partially blocking the desired path |
| Standing still | 1. Never stand still for longer than 30 seconds |
| Finish as fast as possible | 1. Within 5 minutes (Escape room challenge); 2. Within 10 minutes (Hospital challenge) |
| Coding language | 1. Only C++ may be used; 2. Git version control must be used |
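The numeric limits in Table 1 translate directly into checks that the software has to respect. The following is a minimal C++ sketch, assuming a pose expressed as (x, y, theta); the names `kMaxTransVel`, `saturate`, `atTarget`, `wrapAngle` and `Pose` are hypothetical and not taken from the group's code.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Limits from Table 1 (constant names are hypothetical).
constexpr double kMaxTransVel = 0.5;  // [m/s]
constexpr double kMaxRotVel   = 1.2;  // [rad/s]
constexpr double kPosTol      = 0.1;  // [m]
constexpr double kAngleTol    = 0.1;  // [rad]

struct Pose { double x, y, theta; };  // theta in [rad]

// Wrap an angle to the interval (-pi, pi].
double wrapAngle(double a) {
    const double pi = std::acos(-1.0);
    a = std::fmod(a + pi, 2.0 * pi);  // now in (-2pi, 2pi)
    if (a <= 0.0) a += 2.0 * pi;      // now in (0, 2pi]
    return a - pi;
}

// Saturate a velocity command to the symmetric range [-limit, limit].
double saturate(double value, double limit) {
    assert(limit >= 0.0);
    return std::max(-limit, std::min(value, limit));
}

// True when the robot is within both the position and orientation tolerance.
bool atTarget(const Pose& robot, const Pose& target) {
    const double dist = std::hypot(target.x - robot.x, target.y - robot.y);
    const double dAngle = std::fabs(wrapAngle(target.theta - robot.theta));
    return dist < kPosTol && dAngle < kAngleTol;
}
```

Wrapping the orientation error matters because headings of +3.1 rad and -3.1 rad are only about 0.08 rad apart, even though their raw difference is 6.2 rad.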

General software architecture and interface

A generic software architecture was implemented, as discussed in the lectures of the course. The software is split into five building blocks: the Task manager, World model, Perceptor, Path planner and Drive controller. The data flow between these building blocks is shown in Figure 2. Splitting the software into building blocks keeps it structured and separates the functionalities of the robot.

The task manager structures the program flow by setting a mode for each software block (block mode). Based on the statuses it receives from the software blocks (block status), it decides which task is performed next. It focuses mainly on the behavior of the program and divides the challenge into a step-by-step sequence of tasks, taking fallback scenarios into account. The high-level tasks it manages are discussed in more detail later. The perceptor converts the input sensor data into information that PICO can use: it creates a local map, fits it onto the global map and aligns it when required. The path planner plans an optimal path through nodes, such that PICO can reach the required destination. The drive controller drives PICO to the required destination by giving a motor set point in terms of translational and rotational velocity. The world model stores all the information and acts as the medium of communication between the other blocks. Detailed explanations of each block can be found in the Software blocks section.
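To illustrate how a drive controller could turn the next node into a translational and rotational velocity set point, here is a minimal rotate-then-drive proportional controller in C++. It is a sketch under stated assumptions, not the group's implementation: the gains `kGainV` and `kGainW`, the heading threshold `kHeadingGate`, and the names `Pose2D`, `Setpoint` and `driveTowards` are hypothetical; only the velocity limits come from Table 1.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

struct Pose2D { double x, y, theta; };  // robot pose, theta in [rad]
struct Setpoint { double v, w; };       // [m/s], [rad/s]

// Hypothetical tuning values; the limits follow Table 1.
constexpr double kMaxV = 0.5, kMaxW = 1.2;
constexpr double kGainV = 1.0, kGainW = 2.0;
constexpr double kHeadingGate = 0.3;    // [rad] turn in place above this error

double clampAbs(double value, double limit) {
    assert(limit >= 0.0);
    return std::max(-limit, std::min(value, limit));
}

// Compute a velocity set point that steers PICO towards the next node.
Setpoint driveTowards(const Pose2D& robot, double nodeX, double nodeY) {
    const double dx = nodeX - robot.x;
    const double dy = nodeY - robot.y;
    const double dist = std::hypot(dx, dy);
    // Heading error, wrapped to [-pi, pi] via atan2 of its sine and cosine.
    const double raw = std::atan2(dy, dx) - robot.theta;
    const double err = std::atan2(std::sin(raw), std::cos(raw));

    Setpoint sp{0.0, clampAbs(kGainW * err, kMaxW)};
    // Only translate when roughly facing the node.
    if (std::fabs(err) < kHeadingGate) {
        sp.v = clampAbs(kGainV * dist, kMaxV);
    }
    return sp;
}
```

Turning in place before translating keeps PICO close to the straight line between two nodes; obstacle avoidance based on the close proximity region is deliberately left out of this sketch.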

Block modes & Block Statuses

Block statuses and modes are primarily used for communication between the task manager and the other blocks. They also make the segmentation of each task or phase easy to understand. In each iteration of the software the perceptor, path planner and drive controller receive a block mode from the task manager, describing the functionality required from that block during that iteration. After executing the task, the software block returns a block status describing the outcome of the task, i.e. whether it was successful or not. The block diagram shown below illustrates the transmission of block modes and statuses between the task manager and the other software blocks. The exact block modes and statuses are listed below.

The following block statuses were defined:

- STATE_BUSY - Set by a block while it is still performing its task

- STATE_DONE - Set by a block when its task is completed

- STATE_ERROR - Set by a block when its task results in an error

The following block modes were defined:

- MODE_EXECUTE - defined for all the blocks to execute their main functionality

- MODE_INIT - defined for all the blocks to initialize

- MODE_IDLE - defined for all the blocks to temporarily disable the block

- PP_MODE_ROTATE - defined for the path planner to direct the drive controller to change the orientation of PICO

- PP_MODE_PLAN - defined for the path planner to plan an optimal path

- PP_MODE_NEXTNODE - defined for the path planner to send the next node in the path

- PP_MODE_FOLLOW - defined for the path planner to follow the path

- PP_MODE_BREAKLINKS - defined for the path planner to break the link between two nodes when an object blocks the path between them

- PP_MODE_RESETLINKS - defined for the path planner to reset the links between nodes that were broken due to an object between the two nodes

- PC_MODE_FIT - defined for the perceptor to fit the local map onto the global map

These statuses and modes are used in the execution of appropriate phases and cases discussed in the overall program flow section.
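The block modes and statuses above map naturally onto C++ enumerations. The sketch below assumes the type names `BlockMode` and `BlockStatus` and a hypothetical stub class `PathPlannerStub`; only the enumerator names come from the lists above. It shows the contract between the task manager and a block: one mode in per iteration, one status out.

```cpp
#include <cassert>

// Enumerator names as listed above; the enum type names are assumptions.
enum BlockStatus { STATE_BUSY, STATE_DONE, STATE_ERROR };

enum BlockMode {
    MODE_EXECUTE, MODE_INIT, MODE_IDLE,
    PP_MODE_ROTATE, PP_MODE_PLAN, PP_MODE_NEXTNODE, PP_MODE_FOLLOW,
    PP_MODE_BREAKLINKS, PP_MODE_RESETLINKS,
    PC_MODE_FIT
};

// Hypothetical example block that only handles a few modes.
class PathPlannerStub {
public:
    // Called once per iteration with the mode chosen by the task manager.
    BlockStatus run(BlockMode mode) {
        switch (mode) {
        case MODE_IDLE:
            return STATE_DONE;               // nothing to do
        case MODE_INIT:
            rotateSteps_ = 0;
            return STATE_DONE;
        case PP_MODE_ROTATE:
            // Pretend the rotation takes three iterations to complete.
            ++rotateSteps_;
            return rotateSteps_ < 3 ? STATE_BUSY : STATE_DONE;
        default:
            return STATE_ERROR;              // mode not supported by this stub
        }
    }

private:
    int rotateSteps_ = 0;
};
```

Returning STATE_BUSY for several iterations, instead of blocking inside the block, keeps the main loop responsive so the task manager can react every iteration.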

Overall program flow

The overall software behavior is divided into five clearly distinguished phases. During each phase all actions lead to one specific goal, and when that goal is reached a transition is made to the next phase. The five phases are described below and shown in Figure 3.

Initialization phase

The software always starts in this phase. During this phase all the inputs and outputs of the robot are initialized and checked, and all the required variables in the software are set to their correct values. At the end of the initialization phase the software is ready to perform the desired tasks, and it switches to the fitting phase.

Fitting phase

During the fitting phase PICO tries to determine its initial position relative to the given global map. It does so by using the laser range finder data to fit the environment around the robot onto the given map. If the obtained laser data is insufficient for a good, unique fit, the robot starts to rotate. If there is still no unique fit after the rotation, the robot drives to a location inside the room and rotates again at this location. The full details of the fitting algorithm are described in the Perceptor section of this wiki. As soon as there is a good and unique fit, the location of PICO is known and the software switches to the relocate phase.
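The fallback order of the fitting phase can be summarised as a short decision sequence. This C++ sketch is hypothetical: the callbacks `tryFit`, `rotate` and `driveIntoRoom` stand in for perceptor and drive functionality, and, unlike the real software, which spreads this work over many iterations using block modes, it runs each step as a blocking call for clarity.

```cpp
#include <cassert>
#include <functional>

// Outcome of the fitting phase (hypothetical names).
enum class FitResult { Unique, Failed };

// Fallback sequence: fit, rotate and refit, then drive to a location inside
// the room, rotate and refit again; otherwise give up.
FitResult runFittingPhase(const std::function<bool()>& tryFit,
                          const std::function<void()>& rotate,
                          const std::function<void()>& driveIntoRoom) {
    assert(tryFit && rotate && driveIntoRoom);  // all callbacks must be set
    if (tryFit()) return FitResult::Unique;
    rotate();
    if (tryFit()) return FitResult::Unique;
    driveIntoRoom();
    rotate();
    if (tryFit()) return FitResult::Unique;
    return FitResult::Failed;  // the software would switch to the error phase
}
```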

Relocate phase

During the relocate phase the goal is to move PICO to the desired cabinet. To do this, the path planner calculates a path from the current location to the desired cabinet. The drive controller follows this path and avoids obstacles on its way. When the path is found to be blocked, a new path around the blockage is calculated. As soon as PICO has arrived at the desired cabinet, the software switches to the cabinet action phase.
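The relocate phase relies on the path planner to replan around blockages. Table 3 states that the optimal path is computed with Dijkstra's algorithm; the following C++ sketch shows one way to combine Dijkstra with breakable links, in the spirit of PP_MODE_BREAKLINKS and PP_MODE_RESETLINKS. The class `NodeGraph` and its methods are hypothetical, and link costs are assumed to be non-negative distances.

```cpp
#include <cassert>
#include <functional>
#include <limits>
#include <queue>
#include <set>
#include <utility>
#include <vector>

// Hypothetical node graph for the path planner with breakable links.
class NodeGraph {
public:
    explicit NodeGraph(int n) : adj_(n) {}

    // Add an undirected link with a non-negative cost (e.g. distance in m).
    void addLink(int a, int b, double cost) {
        adj_[a].push_back(std::make_pair(b, cost));
        adj_[b].push_back(std::make_pair(a, cost));
    }
    // Mark the link a-b as blocked (cf. PP_MODE_BREAKLINKS).
    void breakLink(int a, int b) { broken_.insert(key(a, b)); }
    // Restore all blocked links (cf. PP_MODE_RESETLINKS).
    void resetLinks() { broken_.clear(); }

    // Dijkstra's algorithm; returns the node sequence, or an empty vector
    // when the goal cannot be reached over the unblocked links.
    std::vector<int> shortestPath(int start, int goal) const {
        const int n = static_cast<int>(adj_.size());
        assert(start >= 0 && start < n && goal >= 0 && goal < n);
        const double inf = std::numeric_limits<double>::infinity();
        std::vector<double> dist(n, inf);
        std::vector<int> prev(n, -1);
        typedef std::pair<double, int> Item;  // (distance, node)
        std::priority_queue<Item, std::vector<Item>, std::greater<Item> > pq;
        dist[start] = 0.0;
        pq.push(Item(0.0, start));
        while (!pq.empty()) {
            const Item top = pq.top();
            pq.pop();
            const int u = top.second;
            if (top.first > dist[u]) continue;  // stale queue entry
            if (u == goal) break;               // goal settled
            for (const auto& edge : adj_[u]) {
                const int v = edge.first;
                if (broken_.count(key(u, v))) continue;  // skip blocked links
                const double alt = dist[u] + edge.second;
                if (alt < dist[v]) {
                    dist[v] = alt;
                    prev[v] = u;
                    pq.push(Item(alt, v));
                }
            }
        }
        if (dist[goal] == inf) return std::vector<int>();
        std::vector<int> path;
        for (int v = goal; v != -1; v = prev[v]) path.insert(path.begin(), v);
        return path;
    }

private:
    static std::pair<int, int> key(int a, int b) {
        return a < b ? std::make_pair(a, b) : std::make_pair(b, a);
    }
    std::vector<std::vector<std::pair<int, double> > > adj_;
    std::set<std::pair<int, int> > broken_;
};
```

Keeping blocked links in a separate set, rather than deleting them from the graph, makes resetting them a single `clear()` once the obstacle may have moved.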

Cabinet action phase

During the cabinet action phase PICO executes the required actions at the cabinet. This includes saying 'I arrived at cabinet #' and taking a snapshot of the current laser data to prove that the robot has arrived at the correct location. After performing the required actions, the software determines whether PICO should visit another cabinet; if so, it switches back to the relocate phase. If all the cabinets have been visited, the software stops and the challenge is successfully completed.

Error phase

The error phase differs from the other phases in that it is never desired to end up in it. The software only switches to the error phase when something is different than expected. This can, for example, happen when a required file is missing during the initialization phase, or when the fitting phase has gone through all the fallback mechanisms and still has not found a unique fit. In all cases something unforeseen has happened and the software is out of options to recover. When that happens, PICO is switched to a safe state (e.g. all motors are disabled) and all information useful for debugging is displayed to the operator. Finally, the software is terminated so that the possible cause can be found and fixed.
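The phase transitions described above can be written as a single transition function. This C++ sketch is hypothetical: `Phase`, `PhaseOutcome` and `nextPhase` are illustrative names, and the `Finished` value is an extra terminal state added only for this sketch to represent stopping after the last cabinet.

```cpp
#include <cassert>

// The phases described above, plus a terminal state used only in this sketch.
enum class Phase { Init, Fitting, Relocate, CabinetAction, Error, Finished };

// Outcome of the current phase as reported by the blocks (hypothetical).
struct PhaseOutcome {
    bool done = false;          // goal of the current phase reached
    bool error = false;         // unrecoverable problem, e.g. a missing file
    bool moreCabinets = false;  // only used after the cabinet action phase
};

// Transition function for the phases described above.
Phase nextPhase(Phase current, const PhaseOutcome& out) {
    if (out.error) return Phase::Error;
    if (!out.done) return current;  // keep working on the current goal
    switch (current) {
    case Phase::Init:          return Phase::Fitting;
    case Phase::Fitting:       return Phase::Relocate;
    case Phase::Relocate:      return Phase::CabinetAction;
    case Phase::CabinetAction: return out.moreCabinets ? Phase::Relocate : Phase::Finished;
    case Phase::Error:         return Phase::Error;  // never left; software terminates
    case Phase::Finished:      return Phase::Finished;
    }
    return Phase::Error;  // unreachable; keeps compilers from warning
}
```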

Detailed descriptions of the different phases

Detailed flowcharts and descriptions were made for all the phases. These flowcharts show all the fallback mechanisms and explain the exact behavior in full detail. While writing the software, the group used the flowcharts to create the code and to find bugs. They also helped the group reach a clear agreement on what the software architecture should look like. Finally, they helped the group discuss how to handle unforeseen situations and how a solution could be fitted into the existing software.

The flowcharts with all the required details are grouped in this document.

Software blocks

World model

The world model is the block that stores all the data that needs to be transferred between the different blocks. It acts as the medium of communication between the perceptor, task manager, path planner and drive controller, and it contains all the get and set functions that the blocks use to perform their tasks.

The detailed overview of the input and output data from the software blocks to the world model is shown in Figure 4. A description of what each data line represents is stated in Table 3.

Table 3: Description of data that is transmitted

| Data | Description |
|---|---|
| LRF sensor input | Laser range finder data, used to create the local map (objects such as walls) and to detect corners |
| ODO sensor input | Odometry data, giving the current position and orientation of PICO |
| Local map | The map created from the LRF data |
| Global map | The given map, with the positions of the cabinets and rooms specified |
| Close proximity region | The region defined around PICO in order to avoid obstacles |
| Current position | The current position of PICO, which is kept up to date |
| Next node | The next node to visit in the planned optimal path |
| Optimal path | The shortest path, computed with Dijkstra's algorithm |
| Desired destination / next cabinet node | The number of the cabinet that currently needs to be visited by PICO |
| Block modes | Used by every block to make PICO perform a certain action |
| Block status | The status of each block (done, busy or error), based on which the block modes are set and the required task is performed |
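A minimal C++ sketch of the world model as a passive data store with get and set functions, covering only the current position, the optimal path with its next node, and one block status from Table 3. All names are hypothetical, and deriving the next node from an index into the optimal path is a design choice of this sketch, not necessarily how the group stored it.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

enum BlockStatus { STATE_BUSY, STATE_DONE, STATE_ERROR };

struct Pose { double x, y, theta; };

// Hypothetical minimal world model: a passive store with get/set functions.
class WorldModel {
public:
    void setCurrentPosition(const Pose& p) { currentPosition_ = p; }
    Pose getCurrentPosition() const { return currentPosition_; }

    // Storing a new optimal path restarts at its first node.
    void setOptimalPath(const std::vector<int>& path) {
        optimalPath_ = path;
        nextIndex_ = 0;
    }
    // Next node to visit, or -1 when the path has been completed.
    int getNextNode() const {
        return nextIndex_ < optimalPath_.size() ? optimalPath_[nextIndex_] : -1;
    }
    void advanceNode() {
        if (nextIndex_ < optimalPath_.size()) ++nextIndex_;
    }

    void setPathPlannerStatus(BlockStatus s) { ppStatus_ = s; }
    BlockStatus getPathPlannerStatus() const { return ppStatus_; }

private:
    Pose currentPosition_{};             // value-initialized to zeros
    std::vector<int> optimalPath_;
    std::size_t nextIndex_ = 0;
    BlockStatus ppStatus_ = STATE_BUSY;  // arbitrary initial value
};
```

Because the world model holds no behavior of its own, every block can be tested in isolation by filling a world model with known data.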

Task manager

The task manager functions as a finite state machine that switches between different tasks (states). It focuses mainly on the behavior of the whole program rather than on the execution. Based on the current operation mode, the current block statuses and counters, it determines the next operation mode and sets the corresponding block modes of the perceptor, path planner and drive controller for the next task execution. It communicates with the other blocks via the world model. Since the "Escape room challenge" and the "Hospital challenge" require a completely different cooperation between the blocks, the task manager was rewritten for the "Hospital challenge" with a different program structure, while keeping the same framework.

ESCAPE ROOM CHALLENGE

In this challenge, the task manager initially sets the modes of the different blocks so that PICO finds the door and drives to it. Based on the received block statuses, it either stays in the same state or proceeds to drive to the exit. While PICO is searching for a door or driving to a possible door, the task manager stays in the state of driving to the door. When PICO has reached the found door, the task manager sends a command to drive PICO to the finish. While PICO is searching for an exit or driving to a possible exit, the task manager keeps commanding PICO to drive to the finish. Finally, when PICO crosses the finish line, the task manager sets the mode of all the blocks to idle. The functions of the task manager for the escape room challenge are described in the state machine diagram in Figure 5.
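The escape room behavior described above reduces to three states. The following C++ sketch is hypothetical: the states `DriveToDoor`, `DriveToFinish` and `Idle` and the `Feedback` values are illustrative names chosen to mirror the paragraph, not the group's identifiers.

```cpp
#include <cassert>

// Hypothetical names for the escape room states and the block feedback.
enum class EscapeState { DriveToDoor, DriveToFinish, Idle };
enum class Feedback { Searching, DrivingToCandidate, TargetReached };

// One step of the escape room task manager.
EscapeState escapeStep(EscapeState state, Feedback fb) {
    switch (state) {
    case EscapeState::DriveToDoor:
        // Keep searching for, or driving to, a possible door until it is reached.
        return fb == Feedback::TargetReached ? EscapeState::DriveToFinish
                                             : EscapeState::DriveToDoor;
    case EscapeState::DriveToFinish:
        // Keep heading for the exit until the finish line is crossed.
        return fb == Feedback::TargetReached ? EscapeState::Idle
                                             : EscapeState::DriveToFinish;
    case EscapeState::Idle:
        return EscapeState::Idle;  // all blocks are set to idle
    }
    return EscapeState::Idle;      // unreachable; keeps compilers from warning
}
```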

HOSPITAL ROOM CHALLENGE

In this challenge, the task manager keeps the same framework with a different program structure. It functions as a state machine and handles the behavior of the program in a structured manner, taking into account various fallback scenarios that can be edited whenever needed. The task manager first reads the list of cabinets to visit and sends it to the world model. It then works primarily by setting the appropriate block modes, setting counter variables and switching from one phase and case of the program to another, based on the block statuses it receives, in order to perform the required task. The various phases and cases are discussed in the Overall program flow section. The functions of the task manager for the hospital challenge are described in the flowchart in Figure 6.
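Reading the cabinet list is the first step of the hospital task manager. This C++ sketch assumes the cabinet numbers arrive as strings, for example as command-line arguments; that input format and the function name `parseCabinetList` are assumptions. It validates every entry and keeps the order given by the user.

```cpp
#include <cassert>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical parser for the user-supplied cabinet list. Every entry must be
// a non-negative integer; on any invalid entry `out` is cleared and false is
// returned, so the caller can, for example, switch to the error phase.
bool parseCabinetList(const std::vector<std::string>& args, std::vector<int>& out) {
    out.clear();
    for (const std::string& a : args) {
        bool valid = !a.empty();
        for (char c : a) {
            if (c < '0' || c > '9') valid = false;
        }
        if (!valid) {
            out.clear();
            return false;
        }
        try {
            out.push_back(std::stoi(a));
        } catch (const std::out_of_range&) {  // number too large for int
            out.clear();
            return false;
        }
    }
    return true;
}
```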

The function description can be found here: Task manager description

Visualization