PRE2017 4 Groep7


== Classic Naive Bayes ==

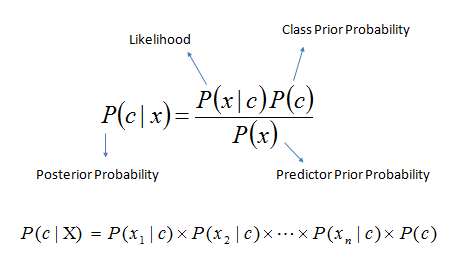

In order to design a new technique to classify the relevance of messages, it is necessary to first look at established techniques that approximate the goal of a WhatsApp spam filter. The first technique that comes to mind is the use of Bayes classifiers.

Naïve Bayes classifiers are a popular technique for e-mail filtering. Typically, spam is filtered using a bag-of-words technique, in which words are used as tokens to calculate, according to Bayes' theorem, the probability that an e-mail is spam or not spam (ham).

[[File:Bayes_rule.png]]

To demonstrate how a naïve Bayes spam filter might work, consider a database containing some number X of spam messages and 2X ham messages. The task is to classify new e-mails as they arrive, based on the messages already in the database.

Since there are twice as many ham messages as spam messages, a new (still unobserved) message is twice as likely to be ham as to be spam. In Bayesian terms, this is known as the prior probability. Priors are based solely on previous observations.

With the priors formulated, the program is ready to classify a new message. The message is broken up into words, and each word is run through the conditional probability table of all words in the database. Through this process the likelihood of the message being spam or ham is calculated.

Finally, the posterior probability of the message belonging to each class is calculated, and the message is assigned to the class with the higher posterior.
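For the example above, the priors and the decision rule can be written out explicitly. The symbols w_1, ..., w_n for the words of a message and c for the class are notation introduced here for illustration; the product form relies on the "naïve" assumption that words are conditionally independent given the class.

```latex
P(\text{ham}) = \frac{2X}{X + 2X} = \frac{2}{3}, \qquad
P(\text{spam}) = \frac{X}{X + 2X} = \frac{1}{3}

P(c \mid w_1, \dots, w_n) \;\propto\; P(c) \prod_{i=1}^{n} P(w_i \mid c),
\qquad c \in \{\text{spam}, \text{ham}\}
```

The message is assigned to the class c for which the right-hand side is largest.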

Words have a certain probability of occurring in either spam or ham. The filter does not know these probabilities in advance; it needs to be trained first so they can be built up. For instance, the spam probability of words like “Sex” or “Nigerian” is generally higher than that of the names of family members and friends.

Once the agent is trained, the likelihood functions are used to compute the chance that an e-mail with a particular set of words belongs to either the spam or the ham class.
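The training and classification steps described in this section could look roughly like the following Python sketch. It is not the project's implementation: the function names `train` and `classify`, the whitespace tokenisation and the add-one (Laplace) smoothing are assumptions made here so that unseen words do not produce a zero probability.

```python
import math
from collections import Counter


def train(messages):
    """Build word counts per class from (text, label) pairs.

    Labels are 'spam' or 'ham'. Assumes both classes occur at least once.
    """
    class_counts = Counter()
    word_counts = {"spam": Counter(), "ham": Counter()}
    for text, label in messages:
        class_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    return class_counts, word_counts, vocabulary


def classify(text, model):
    """Return the class with the highest (log) posterior score."""
    class_counts, word_counts, vocabulary = model
    total = sum(class_counts.values())
    scores = {}
    for label in ("spam", "ham"):
        # Log prior: share of training messages with this label.
        score = math.log(class_counts[label] / total)
        n_words = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one smoothing (an assumption of this sketch).
            count = word_counts[label][word]
            score += math.log((count + 1) / (n_words + len(vocabulary)))
        scores[label] = score
    return max(scores, key=scores.get)
```

Logarithms are used so that multiplying many small probabilities does not underflow; the comparison between classes is unaffected.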

One of the biggest advantages of Bayesian spam filtering is that the filter can be trained for each user, creating a personal spam filter. This training is possible because the spam a user receives correlates with that user's activities. Over time, a Bayesian spam filter assigns probabilities that reflect the user’s patterns. This property makes a Bayesian classifier particularly attractive for WhatsApp spam filtering, as the types of messages received vary widely between users of the app. A Bayesian classifier might also assign accurate probabilities to messages received from different groups, as the group name can be used as a token as well.


== Research questions ==

Revision as of 13:41, 12 June 2018

0LAUK0 - Group 7

Group Members

- Bas Voermans | 0957153

- Julian Smits | 0995642

- Tijn Centen | 1006867

- Bart van Schooten | 0999971

- Jodi Grooteman | 1006743

- Emre Aydogan | 0902742

Problem Statement

A personal assistant (PA) works closely with a person to provide administrative support, usually on a one-to-one basis. A PA helps a person make the best use of their time by limiting the time spent on secretarial and administrative tasks. Unfortunately, the luxury of a personal assistant is reserved for the rich and successful, because of the one-to-one nature of the work and the extensive knowledge usually required to perform PA tasks successfully. This study focuses on one aspect of a PA: scanning incoming messages and notifying the person only of noteworthy ones. The users in this “USE” study are defined as students in the Netherlands. Because the main means of communication between students is WhatsApp Messenger, it is a good starting point for relieving students of the growing expectation of accessibility that is imposed on them. Currently WhatsApp Messenger uses a notification system that lets you turn notifications on or off for a certain group or person. In most cases this is far from ideal: if a group has relatively few relevant messages, one is inclined to switch off its notifications altogether, but a message in that group that is directly relevant to the user would then probably be missed. The goal of this study is to design a software agent that can distinguish which messages are relevant to a student's academic exploits and notify the user accordingly. The student would effectively have a personal assistant whose role is to manage their WhatsApp.

Users

Who are the users?

This research is meant for users who have to weed through countless notifications while deciding what is important to them and what is not. Users who deal with many such notifications are therefore the main target. The research focuses mainly on students, which makes it easier to define the needs and requirements of the group, since the researchers are familiar with it.

Requirements of the users

- The system should run on pre-owned devices

- The system should filter important information out of incoming messages.

- The system should tune its intrusiveness based on the user's feedback.

USE Aspects

This chapter looks at the potential impact of the product of this research. If the product works fully and solves the problem described in the problem statement, it can have a great impact on its users and on society as a whole. Below, the impact the product can have on users, society, relevant enterprises and the economy is described. Lastly, it is discussed whether or not certain features are desirable.

Users

The users of the product will, as described above, primarily be students, but the product can be extended to anybody with a smartphone who receives more messages than desired but does not want to miss any potentially important ones. When a person no longer has to spend time reading all seemingly unimportant messages, or scanning through them for important ones, they have more time for the things they want to spend it on. This is a positive effect of the product, as it allows the user to focus on their core business. However, the product might also have other effects on the user. Scanning text messages, or text in general, for relevant information can be a valuable skill, as it also applies in other scenarios, such as scanning scientific articles or reports for important information. When an AI takes care of this task, users might lose the skill. This could hinder them in those other scenarios, where the AI possibly cannot help them find the important information. Another negative consequence might occur when the AI does not work perfectly but the user trusts it to. In this scenario the user might miss an important message, which can have considerable consequences. In a work environment this can mean that the user is not informed about a (changed) deadline or meeting. In a social environment it can lead to irritation or even a quarrel.

Society

Society here means all people, users and non-users of the product combined, and everything that comes with that. To assess the impact the product might have on society, it is examined how relations between individuals change, as well as how society as a whole behaves. The consequences for users described above can be extended to the level of society. If people become more productive, this would certainly benefit society, as more can be accomplished. The fact that people might lose the ability to quickly scan text for important information can also have an impact on society. If an entire generation grows up like this, there will be nobody to teach the skill to younger generations, meaning that society as a whole will lose it. It can be questioned how relevant such a skill will still be in a future society, but it is a loss nonetheless. Another thing that might occur when a large public uses the product is that nobody reads the seemingly unimportant messages any longer. If nobody reads them anymore, those who write them will probably stop doing so, removing the purpose of the product.

Enterprise

Relevant enterprises are those interested in buying the product. This could be a company like WhatsApp itself, which would want to integrate it into its own application, or a third party that wants to publish it as a standalone application. The companies, especially a third party, would want to make a profit from such an application. Companies like WhatsApp could offer it as a free service to keep users on their application and possibly attract new users. Third-party companies cannot do this and would need to find another way to profit from the application. An easy solution seems to be to charge for the application.

Economy

The product will reduce costs for users. Many people do not have the time, or do not want, to filter out the most important information themselves. They can hire a personal assistant to take over this task, but the agent will be less expensive than a personal assistant, which saves money. A disadvantage is that personal assistants will have less work: if people use the product of this research instead of a personal assistant for this particular task, personal assistants are no longer needed for it.

Desirability of possible features of the agent

In this section it is researched how desirable certain possible features of the agent are. These features would probably improve the performance of the agent, but might have negative ethical consequences.

First, the effect of giving the agent access to the university infrastructure or to other applications the user uses, such as their agenda, is analysed. When the agent can use the information available on those platforms, such as which courses the user currently follows or when the next meeting is scheduled, it can make a better decision on whether or not a message is relevant at that moment. But is it desirable that the agent has access to this type of information? It could be seen as an infringement of the user's privacy. This argument can be countered by the fact that the user would have to give consent before the agent can access the information, and by the fact that no human other than the user would have access to the information when the agent uses it, as the agent operates locally on the user's smartphone. The agent could spread personal information to third parties if it automatically responded to some messages. If these responses contained personal information, the privacy of the user could be lost. However, it is not planned to add such features to the agent, and therefore the privacy of the user will be guaranteed.

Next, it is analysed whether or not the agent could be seen as censorship. By hiding certain messages, the agent could influence the user's opinion and behaviour. If the algorithm of the agent could be manipulated by third parties to always block or show certain messages, it could be seen as a form of censorship. This would certainly not be desirable. Therefore it should be impossible for third parties to influence the agent's algorithm. When the application works locally on the user's phone, this should be the case. Furthermore, the agent only blocks or shows the notification about a message, not the message itself. When the user opens the chat application, such as WhatsApp, they can still read all the messages they received, including those for which they did not receive a notification. Therefore, even if a third party could abuse the application to censor certain messages, it would only be partial censorship. Thus it can be concluded that the application will not lead to censorship.

Approach

To start off, research into the state of the art will be done to acquire the knowledge needed to study what the desired product should be. Next, an analysis will be made of the User, Society and Enterprise (USE) aspects with their associated advantages and disadvantages. At this point the description of the prototype will be worked out in detail and building of the prototype will start. At the same time, research will be done to analyse the different approaches to filtering incoming messages and the impact they have. The results of this research will be implemented in the prototype. When the prototype is complete, the goal of the project will be reflected upon and further improvements to the prototype can be made.

Used resources

The used resources can be found here: Used resources

State of the art

Personal assistants

Personal assistants already exist to a certain degree in many different forms, from really simple ones that collect and summarize important information for small-scale fishers to automatic email filtering and voice-controlled physical robots. Below, some of the existing personal assistants are highlighted, with a brief explanation of how they work.

Firstly, there are the email-based personal assistants. An example of this is GmailValet, a service that manages your inbox to reduce the amount of (spam) email that you receive. Another example is SwiftFile, an intelligent assistant that classifies emails and sorts them into different folders. The user can easily switch between folders, viewing the different categories of emails. RADAR, yet another email filter agent, uses a different approach based on machine learning. Experiments showed that this approach worked well and that the agent improved really fast. This also led to an increase in the productivity of the user. There has also been some work on personal email assistants that can respond automatically to certain emails, for example with a notification when the user is on vacation, with great success.

Personal assistants can also be used to solve other problems common in an office environment. Planning a meeting with multiple people can be really time-consuming, as all participants need to agree on the final date and time. A personal assistant built for this problem could plan such a meeting for 10 participants in around 5 seconds, far faster than any human could. Other personal assistants use machine learning to learn the user's scheduling preferences and make appointments based on them.

Research has also been performed on personal assistants for other tasks, such as a module-based agent that can interact with files and other programs and handle databases. There is also a patent for a personal assistant that can answer a phone call when the user is unable to do so. Based on previous conversations it can learn how to respond and predict what the user wants. Next there is the intelligent personal assistant robot BoBi, a kind of secretary that can handle tasks normally performed by a secretary, mainly intelligent meeting recording, multilingual interpretation and reading papers.

Some better-known personal assistants are those built into current smartphones, such as Siri and Cortana. One research paper crowns Cortana as currently the best-working agent in assisting the user.

There also exist personal assistants with a focus on a more specific target audience. To make sure visually impaired people can also make optimal use of current technology, a speech-based personal assistant was designed. The communication is bi-directional, meaning that the user can talk to the agent and the agent can respond as well. The agent could be used to open programs on a computer, perform calculations or run a Google search. Another variant of this is voice-controlled physical robots. Commands can be given via a smartphone to the robot, which can perform various tasks in the real world.

To help small-scale fishermen, a personal assistant named JarPi was designed that can run on cheap technology. JarPi would be used to collect information about the current location and the weather conditions, and present it in a comprehensible manner. Normally such technology is quite expensive, rendering it unavailable to small-scale fishermen. A more advanced agent is a socially-aware robot assistant, or SARA for short. By analysing the user through visual, vocal and verbal inputs, the agent is able to create its own visual, vocal and verbal behaviours. This can be used to create an appealing robot agent, for example one that gives recommendations at an event.

Some general research on certain elements of a personal assistant has also been performed. One of the big problems in creating a personal assistant is that a user model needs to be built in order to really personalize the agent. A solution to this could be a cognitive user model, which comprises a user interest model, a user behaviour model, an inference component and a collaboration component. Another problem occurs when analysing text messages, as abbreviations are often used in this medium. This can be solved by creating a dictionary of the abbreviations used, so they can be converted into normal text.

Lastly, some research has been done on the impact and effects of personal assistant agents on the user and society. Research has shown that for a user to like a personal assistant, it has to be “human-like” and “professional”. The agent should be able to recognize the user’s voice and answer in a natural manner. It is also important to create a physically attractive interface for the user. When other stimuli are added, it works best to use an immersive 3D visual display.

For enterprises, personal assistants also bring change. AI personal assistants are being integrated into more and more aspects of our lives, and can be used, for example, to shop or to book a vacation. A company without such a service might lose customers to a competitor that does have it. At the same time, when for example a travel agency starts using a personal assistant agent, it might need fewer employees to plan and book vacations for its customers. Also, certain roles, such as management, might see drastic changes. Currently managers spend a lot of their time on administrative tasks, such as making schedules for the employees and fixing holes in the planning when somebody calls in sick. When these tasks can be carried out by an AI agent, being a manager would become a different job.

Text classification and filtering

Much research has also already been done on text classification and spam filtering. Most of these studies focus on filtering spam using different algorithms. Below, some of the existing spam filters and text classification algorithms are highlighted.

Firstly, many different algorithms can be used to filter spam. A method using an artificial neural network trained with the scaled conjugate gradient backpropagation algorithm showed great success, combining short classification time with high accuracy. Another study showed that popular binary classification algorithms such as NB, SVM, LDA and NMF, combined with a non-binary classification algorithm such as K-means or NMF, also lead to great results. Yet another study showed that a recurrent neural network can be used to filter pre-processed spam. Pre-processing here means converting all letters to lower case and removing all special characters and stop words, since they contain no semantic information. With an accuracy of up to 98%, this method also works well. Spam can also be filtered using machine learning and the calculation of word weights, although this becomes more difficult as spam starts to look more like real text. Next, instead of a global discrimination model, a local discrimination model could be built, personalized for the user. Although this is more challenging, it would certainly be useful. Another method is filtering based on keywords, using both a whitelist and a blacklist to calculate the probability that a message is spam. When filtering e-mail spam, one could look not only at the message itself but also at its header and any attachments. When a mail contains, for instance, an .exe file, which is mainly used in spam e-mail, it could automatically be flagged as spam.

While researchers try to develop better spam detection, spammers try to find new ways to elude spam filters. As a result, it keeps getting harder to make a fully functional spam detection algorithm.

In the field of text categorization and classification, much research has also already been done. First, naive Bayes can be used in different variants to classify text messages. Adding preprocessing or incorporating additional features did not drastically increase or decrease the efficiency. However, it does reduce the feature space of the classification algorithm, which is beneficial when working with limited resources. Discriminative or generative recurrent neural networks can also be used for text classification. Each has its own uses and performs better depending on the scenario. The generative model is especially effective for so-called zero-shot learning, which is about applying knowledge from different tasks to tasks that the model did not see before. The discriminative model, however, is more effective on larger datasets. These kinds of text classification can also be used to find recommendations for users, or to filter messages on their relevance. A learning personal agent can be used to find new relevant information. The agent both learns from the user what they deem relevant and classifies text to find whether or not it is indeed relevant to that user. A different approach to text classification is a keyword-based approach. By filtering text messages on relevant keywords, the number of notifications that need to be sent to the user can be greatly reduced, sending notifications only for those messages that are marked urgent or important. This method is also quite effective. Lastly, preprocessing can be done to ease the work of the text classification algorithms. Removing words that are seemingly irrelevant for determining the class makes classification faster and reduces the feature space. Different techniques can be used to remove the irrelevant words, all with their pros and cons.

Prototype description

To get a good understanding of what kind of prototype is required for the described problem and the given user, a concrete goal needs to be described that will fulfill a good selection of the user requirements described in the section above. After a concrete goal is described a prototype design needs to be created to solve the problem described in the problem statement.

Goal

The goal that the prototype should fulfill is dependent on the user requirements that have been described. Since it is not possible to create a prototype that is able to achieve all requirements in the current planning, a selection of important requirements will be chosen that are to be implemented in the prototype. The rest of the requirements are going to be analysed and researched in a written manner to still be able to give insights in their importance to the user.

The requirements that are chosen for the prototype are the following:

- The system should run on pre-owned devices

- The system should filter important information out of incoming messages

- The system should tune its intrusiveness based on the user's feedback

The prototype will therefore become a software module that can be integrated into existing messaging applications such as WhatsApp, Telegram or other applications used to send and receive messages within a large group of people. The module will output a binary value depending on whether the message is important or unimportant. To determine the importance, the system should base its reasoning on feedback that the user gives during setup or usage of the application.

Design

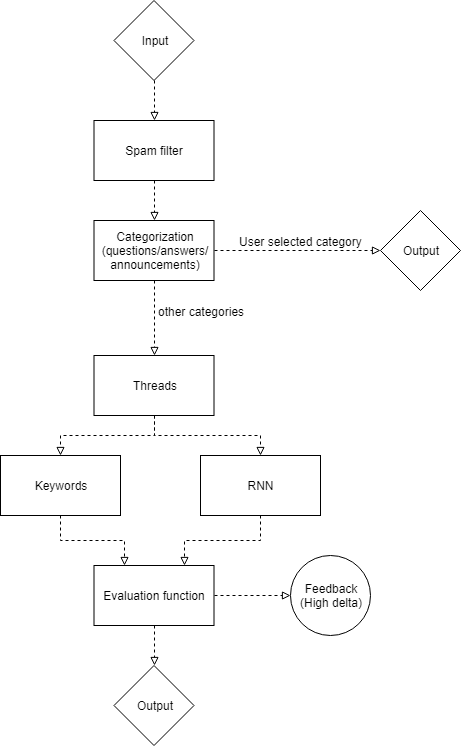

To achieve the goal described above, two prototype design variations will be created to be able to analyse their effectiveness. The first variation will be using keyword based filtering which has the advantage of having an understandable filtering process, since the keywords support the reasoning. The second variation will be using machine learning in the form of a recurrent neural network (RNN), which is often used for text based machine learning. These two subsystems will be integrated in a larger system that also involves the removal of clearly identifiable spam and the coupling of closely related messages in the form of threads.

Input Output interface

The required input for the filtering module should be as abstract as possible to support as many different messaging applications as possible. However, there should be consistency in the input format. Not only the message itself is important, but also the metadata like the date and time, the sender, whether a message is a response to a different message and whether any media like images is coupled with the message. The prototype will not be able to analyse any coupled media but the information of media being present can still be useful for filtering. Messages are inputted in batches, just like they are for unread notifications. The messages in a batch should all come from the same group chat since the messages could be coupled with each other. The module will then process this batch without taking other batches into account. The output of the filtering module will be a boolean value indicating for every individual message, whether the message should be shown to the user or should be discarded.
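The interface described above could be captured in a small data structure. The following Python sketch is only an illustration of the described input and output; the field names, the `Message` class and the `filter_batch` function are assumptions made here, not the prototype's actual (Java) types.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, List, Optional


@dataclass
class Message:
    """One incoming message plus the metadata the text mentions (names are hypothetical)."""
    message_id: str
    group_id: str
    sender: str
    sent_at: datetime
    text: str
    reply_to: Optional[str] = None  # id of the message this one replies to, if any
    has_media: bool = False         # media is not analysed, only its presence is recorded


def filter_batch(batch: List[Message],
                 is_important: Callable[[Message], bool]) -> List[bool]:
    """Return one boolean per message: True means show, False means discard."""
    group_ids = {m.group_id for m in batch}
    if len(group_ids) > 1:
        raise ValueError("a batch must come from a single group chat")
    return [bool(is_important(m)) for m in batch]
```

Passing the decision function in as a parameter keeps the interface independent of which filter (keyword-based, neural network, or a combination) makes the decision.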

Spam filter

The first step in analysing the messages is to filter out the spam. The purpose of this is to cut out the messages that do not really influence the context. Smileys, for example, are mostly not important. Therefore, when a message contains only smileys, the program can categorize it as spam and filter it out. In this step the program looks only at the literal text of a message. To give an example, a message with a strange combination of letters would be filtered out. The program thus pays attention not to the meaning of a message but to its literal content. Filtering out the spam before further analysis is important because the program then does not have to analyse messages that have no influence in the first place.
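A minimal sketch of such a content-only check is given below. Both heuristics are assumptions chosen for illustration: a message without any letters or digits is treated as smiley-only, and a "strange combination of letters" is approximated by a very low share of vowels. The thresholds are arbitrary and would need tuning on real chat data.

```python
VOWELS = set("aeiouy")


def has_no_text(text):
    """True when the message has no letters or digits (e.g. only smileys)."""
    return not any(ch.isalnum() for ch in text)


def looks_like_gibberish(text, min_letters=6, min_vowel_ratio=0.2):
    """Crude heuristic: long enough runs of letters with very few vowels."""
    letters = [ch for ch in text.lower() if ch.isalpha()]
    if len(letters) < min_letters:
        return False  # too short to judge, e.g. "thx"
    vowels = sum(1 for ch in letters if ch in VOWELS)
    return vowels / len(letters) < min_vowel_ratio


def is_spam(text):
    """Content-only spam check; the meaning of the message is not considered."""
    return has_no_text(text) or looks_like_gibberish(text)
```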

Categorization

The messages will also be categorized in groups that are concerned with the structural meaning of the sentence. These are for example questions, answers or announcements. By extracting this information from the messages the program can give the user even more options to filter the incoming messages. A certain user might only be interested in announcements and not in questions. This can be indicated and the appropriate messages can be shown or discarded without going through the next layers of the program. When a user does not give a preference for a certain category the messages will be propagated to the next layer, which is the thread layer.
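As a rough illustration of this layer, the sketch below assigns one of the three example categories and then applies the user's preference. The rules are assumptions made here (a question is recognised by a question mark or a typical opening word, a reply to a question counts as an answer, and everything else is treated as an announcement); the prototype's own detection may differ.

```python
# Hypothetical list of words that often open a question.
QUESTION_STARTERS = ("who", "what", "when", "where", "why", "how",
                     "do", "does", "is", "are", "can")


def categorize(text, replies_to_question=False):
    """Return 'question', 'answer' or 'announcement' using simple rules."""
    stripped = text.strip().lower()
    words = stripped.split()
    if stripped.endswith("?") or (words and words[0] in QUESTION_STARTERS):
        return "question"
    if replies_to_question:
        return "answer"
    return "announcement"  # fallback category, an assumption of this sketch


def apply_preferences(category, preferences):
    """preferences maps a category to 'show' or 'discard'.

    Categories without a preference are passed on to the next (thread) layer.
    """
    return preferences.get(category, "next_layer")
```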

After the clearly identifiable spam messages have been discarded and the categories have been detected, the remaining messages can be coupled together in so-called threads. This is done to retain important information that could be spread over multiple messages. One factor that could indicate a thread is the time at which the messages were sent, since messages sent within a short timespan will most likely concern the same subject. Another factor is the person who sends the messages, since information mostly comes from one person and is intended for all the others. The last factor is whether a message is a reply to a different message. This is a feature that some messaging applications support; it links the message that is being replied to with the new message. Two messages linked in this way most likely need to be coupled together.

These coupled messages are then combined in such a way that the filtering in the next step will take the combined messages into account before determining the importance of the message.
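The three factors (time, sender and reply links) can be combined in a simple single-pass grouping, sketched below. This is a deliberately simplified stand-in and not a clustering algorithm; the dictionary keys and the two-minute gap are assumptions for illustration.

```python
from datetime import datetime, timedelta


def build_threads(messages, max_gap=timedelta(minutes=2)):
    """Group messages into threads.

    messages: list of dicts with keys 'id', 'sender', 'sent_at', 'reply_to'.
    A message joins the thread of the message it replies to; otherwise it
    joins the most recent thread if the sender matches and the time gap is
    small; otherwise it starts a new thread.
    """
    threads = []     # list of threads, each a list of messages
    thread_of = {}   # message id -> index of its thread
    for msg in sorted(messages, key=lambda m: m["sent_at"]):
        target = None
        if msg.get("reply_to") in thread_of:
            target = thread_of[msg["reply_to"]]
        elif threads:
            last = threads[-1][-1]
            same_sender = last["sender"] == msg["sender"]
            close_in_time = msg["sent_at"] - last["sent_at"] <= max_gap
            if same_sender and close_in_time:
                target = len(threads) - 1
        if target is None:
            threads.append([])
            target = len(threads) - 1
        threads[target].append(msg)
        thread_of[msg["id"]] = target
    return threads
```

The text of the messages in each thread can then be concatenated before it is handed to the filtering step.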

Filtering

Now the program categorizes the coupled messages into two groups: important messages and unimportant messages. There are multiple ways of doing this, but the prototype will involve only two of them, namely keyword-based filtering and recurrent neural networks.

Keyword based filtering

The first method is keyword based filtering. This method makes use of a predefined list of important keywords. Every message is checked and given a score on how many important keywords are in that message. When a message has a higher score than a certain threshold the message will be placed in the group important messages.

Evaluation of messages is done in a few steps. First the program checks whether the message contains one of the following words: who, what, when, where. By checking for these words the program already gains a lot of information about the message. The next step is to analyse what kind of word follows one of the W-words. For example, a sentence that ends with "who" might not be as important as a sentence that starts with "who". This is because the sentence that ends with "who" is not a question and thus might not carry as much meaning as the other one. A message that ends with "who" is also not grammatically correct, which indicates that it has a low priority. In addition, the length of the message is taken into account: most of the time, the longer the message, the more important it is.
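The scoring steps above can be sketched as follows. The weights, the threshold and the function names are hypothetical values picked for illustration; only the ingredients (keyword hits, the position of a W-word, and message length) come from the description.

```python
W_WORDS = {"who", "what", "when", "where"}


def keyword_score(text, keywords, w_bonus=1.0, length_weight=0.05):
    """Score a message by keyword hits, W-word position and length."""
    words = [w.strip(".,!?;:").lower() for w in text.split()]
    words = [w for w in words if w]
    if not words:
        return 0.0
    # One point per occurrence of an important keyword.
    score = float(sum(1 for w in words if w in keywords))
    # A W-word at the start suggests a question; at the end it counts less.
    if words[0] in W_WORDS:
        score += w_bonus
    elif words[-1] in W_WORDS:
        score += 0.25 * w_bonus
    # Longer messages are assumed to be slightly more important.
    score += length_weight * len(words)
    return score


def is_important(text, keywords, threshold=1.5):
    """A message is important when its score exceeds the threshold."""
    return keyword_score(text, keywords) > threshold
```

For example, with the keywords `{"deadline", "exam"}`, the message "When is the exam deadline?" scores well above the threshold, while "lol ok" does not.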

Recurrent neural networks

The second method is recurrent neural networks. This method uses learning to categorize messages and therefore needs training data. There are two ways of obtaining this data. The first is to label messages by hand and use them to train the neural network. The second is to give a set of messages to the user of the product and let the user categorize them. This creates personalized training data for every user, so that a neural network trained on it is also personalized to that user. Combining these two sources of training data is the best option, because the neural network then has more training data and is not fully personalized. That it is not fully personalized is a good thing, because the program would otherwise rely entirely on the user's own categorization: if the user were not able to categorize the messages well, the program would perform badly. Using both sources of training data avoids this. A neural network categorizes a certain percentage of messages correctly. One option is to let the user specify a target percentage and have the program keep learning until this percentage is reached.

Both options of filtering the messages can be used separately or combined. An analysis will be performed when both filters are finished and based on that analysis the evaluation function will be created, which is explained in the next section.

Evaluation function

The evaluation subsystem evaluates incoming messages using the results of the different filtering options. Based on these results a different evaluation function can be chosen. Some ideas for the evaluation function are: choosing the result of only one of the filters, taking the average, taking the maximum or minimum, or looking at the magnitude of the difference. The evaluation subsystem also allows for personalization, since users can indicate how many messages need to be filtered out. This can be transformed into a threshold against which the result of the evaluation function is compared. Furthermore, personalization can be applied by asking for feedback. Users will most likely not want to give feedback on every filtered message, so the results of the two filtering options could be used to estimate how certain the network is about filtering that message. If, for example, the difference between the two filtering options exceeds a value that can be indirectly set by the user, the program can show the message and ask whether it is useful.
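The candidate evaluation functions listed above could look roughly like this in Java. The class and method names are hypothetical, and the sketch assumes that both filters return a score between 0 and 1.

```java
import java.util.function.DoubleBinaryOperator;

final class EvaluationFunction {
    // Candidate ways of merging the scores of two filters into one value.
    static final DoubleBinaryOperator FIRST_ONLY = (a, b) -> a;
    static final DoubleBinaryOperator AVERAGE = (a, b) -> (a + b) / 2.0;
    static final DoubleBinaryOperator MAXIMUM = Math::max;
    static final DoubleBinaryOperator MINIMUM = Math::min;

    /** A message counts as important when the merged score reaches the user threshold. */
    static boolean isImportant(DoubleBinaryOperator combine, double s1, double s2, double threshold) {
        return combine.applyAsDouble(s1, s2) >= threshold;
    }

    /** Ask the user for feedback when the two filters disagree by more than the allowed amount. */
    static boolean needsFeedback(double s1, double s2, double maxDisagreement) {
        return Math.abs(s1 - s2) > maxDisagreement;
    }
}
```

For example, with scores 0.9 and 0.3 and a threshold of 0.5, the average marks the message as important while the minimum does not, so the choice of function directly controls how strict the filter is.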

Prototype progress

The following section will show the progress of the prototype over multiple iterations. Each iteration is approximately one week and will contain the actions done in bullet points as well as a written summary of the implementation with occasional images.

Iteration 1

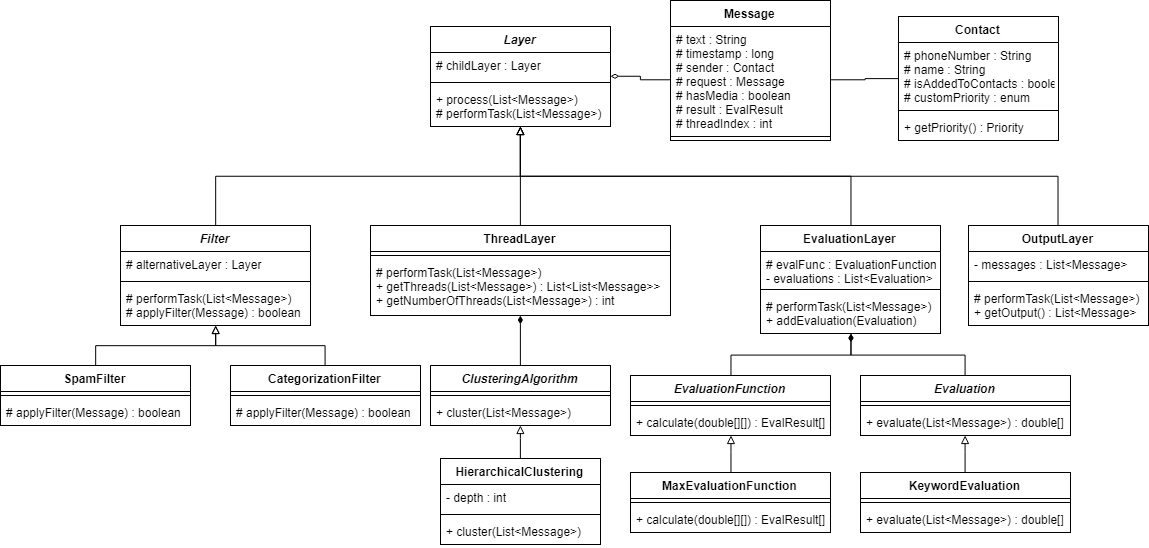

- Created structure with class diagram

- Implemented the base structure from the class diagram in Java

- Started on question sentence detection for categorization

- Started on thread layer with a hierarchical clustering algorithm

- Started on UI

This iteration is the start of the prototype, so the first step was to create a structure that is flexible enough to change the order of filters and other layers later on. For this, a class diagram was made that is based on the structure from the prototype design. The class diagram shows that an abstract layer class is the parent of all layers in the prototype, which makes it possible to restructure the layers on the go when necessary. A layer class is very basic: it only has a child layer and some methods for processing messages and propagating them to the child layer. The filter layer extends the layer class and has an extra ‘alternative layer’ that receives the messages that were filtered out. It declares an abstract method that handles the filtering, which is implemented by the subclasses; for now these are the spam filter and the categorization filter. Next there is a thread layer that can use different clustering algorithms for coupling related messages. For now only the hierarchical clustering algorithm is implemented, using the properties time and sender, but other algorithms could be implemented to see which works best. The evaluation layer provides an abstract structure for plugging in multiple evaluation methods that determine the degree of importance. The keyword evaluation is the evaluation method that will be implemented next. After all evaluation methods have processed the messages, an evaluation function merges the results into a single value and determines whether the message is important or not. The last layer is the output layer, which catches all messages that are output at different layers, such as the spam filter or the evaluation layer, and returns the collected messages in order together with an importance result.
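A minimal Java sketch of the layer structure described above is given here. The class and method names (`Layer`, `FilterLayer`, `DummySpamFilter`, `OutputLayer`, `isFilteredOut`) are illustrative guesses and not the actual names from the class diagram; messages are reduced to plain strings to keep the example short.

```java
import java.util.ArrayList;
import java.util.List;

/** Parent of all layers: handles messages, then passes the result to its child. */
abstract class Layer {
    private Layer child;

    void setChild(Layer child) {
        this.child = child;
    }

    final void process(List<String> messages) {
        List<String> result = handle(messages);
        if (child != null) {
            child.process(result);
        }
    }

    protected abstract List<String> handle(List<String> messages);
}

/** A filter keeps some messages and feeds the rest to an alternative layer. */
abstract class FilterLayer extends Layer {
    private Layer alternative;

    void setAlternative(Layer alternative) {
        this.alternative = alternative;
    }

    protected abstract boolean isFilteredOut(String message);

    @Override
    protected List<String> handle(List<String> messages) {
        List<String> kept = new ArrayList<>();
        List<String> rejected = new ArrayList<>();
        for (String m : messages) {
            if (isFilteredOut(m)) {
                rejected.add(m);
            } else {
                kept.add(m);
            }
        }
        if (alternative != null && !rejected.isEmpty()) {
            alternative.process(rejected);
        }
        return kept;
    }
}

/** Dummy implementation: anything containing the word "spam" is filtered out. */
class DummySpamFilter extends FilterLayer {
    @Override
    protected boolean isFilteredOut(String message) {
        return message.contains("spam");
    }
}

/** Collects whatever reaches the end of a chain. */
class OutputLayer extends Layer {
    final List<String> collected = new ArrayList<>();

    @Override
    protected List<String> handle(List<String> messages) {
        collected.addAll(messages);
        return messages;
    }
}
```

Because every layer only knows its child (and, for filters, its alternative), layers can be reordered by changing the `setChild` calls without touching the layer implementations.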

The complete structure that can be seen in the image is already implemented in the programming language Java. This language is chosen since Java is also used for the Android operating system which is very open and could allow the prototype to be inserted and read from the incoming notifications. Furthermore Java is well known by the team. The prototype is already able to process messages created by hand, since dummy implementations have been made for all the layers. This allows implementation of some layers while the other layers might not work as intended yet. The layers that do have some implementation are the categorization filter and the thread layer.

Categorization filter

For the categorization filter, work has started on detecting questions as the first category. For now, question categorization uses a list of words that often indicate a question when they are placed at the beginning of a sentence. Different words receive a different number of points, since some words always indicate a question and other words only occasionally. Furthermore, a message can consist of multiple sentences of which only one is a question. To detect this, each sentence is processed individually and checked for a question-indicating word at its start. This works better than only looking at the first word of the message, since a question is sometimes preceded by a short sentence that introduces it. An example can be seen in the results table for the message sent at time 6. The last feature that is detected is a question mark at the end of a sentence, which also adds some points because the sentence then resembles a question more closely. After the detection is done, the points are compared with a threshold, and if the number of points is greater than the threshold the sentence is classified as a question. In the next iterations the classification will be improved to work with other forms of question sentences, and other categories will be added. Below are the results for fifteen messages, five of which are questions. Four out of five questions are correctly classified as a question.

| Time | Sender | Message | Question categorization | Expected answer |

|---|---|---|---|---|

| 0 | John | test | No | No |

| 1 | John | spam | No | No |

| 2 | Jane | this is spam | No | No |

| 3 | Jan | real message | No | No |

| 4 | Henk | real good message | No | No |

| 5 | John | Is this good? | Yes | Yes |

| 6 | Henk | hello! shall we go to the beach? | Yes | Yes |

| 10 | John | Are you attending the lecture? | Yes | Yes |

| 11 | Jane | Yes, I am! | No | No |

| 12 | Henk | Yes, I am too! | No | No |

| 14 | Jan | No, I am on holiday | No | No |

| 16 | John | When will you be back? | Yes | Yes |

| 19 | Jan | I will be back tomorrow | No | No |

| 21 | Jane | Any of you know the answer to question 5? | No | Yes |

| 30 | Jane | ??? | No | No |
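The point-based question detection described above can be sketched as follows. The word list, the point values and the threshold are assumptions chosen for illustration; the actual values used in the prototype are not given in the text.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;

final class QuestionDetector {
    // Assumed weights: 2 points for words that nearly always open a question,
    // 1 point for words that only sometimes do, 1 point for a question mark.
    private static final Map<String, Integer> START_WORD_POINTS = new HashMap<>();
    private static final int QUESTION_MARK_POINTS = 1;
    private static final int THRESHOLD = 1; // a sentence needs MORE points than this

    static {
        for (String w : new String[] {"who", "what", "when", "where", "why", "how"}) {
            START_WORD_POINTS.put(w, 2);
        }
        for (String w : new String[] {"is", "are", "shall", "will", "can", "do"}) {
            START_WORD_POINTS.put(w, 1);
        }
    }

    /** A message is a question if at least one of its sentences scores above the threshold. */
    static boolean isQuestion(String message) {
        // Split after sentence-ending punctuation that is followed by whitespace.
        for (String sentence : message.trim().split("(?<=[.!?])\\s+")) {
            if (points(sentence) > THRESHOLD) {
                return true;
            }
        }
        return false;
    }

    private static int points(String sentence) {
        String trimmed = sentence.trim();
        if (trimmed.isEmpty()) {
            return 0;
        }
        String firstWord = trimmed.split("\\s+")[0].toLowerCase(Locale.ROOT).replaceAll("[^a-z]", "");
        int points = START_WORD_POINTS.getOrDefault(firstWord, 0);
        if (trimmed.endsWith("?")) {
            points += QUESTION_MARK_POINTS;
        }
        return points;
    }
}
```

Under these assumed weights the sketch behaves like the table: “hello! shall we go to the beach?” is detected through its second sentence, while “Any of you know the answer to question 5?” is missed because a question mark alone does not exceed the threshold.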

Thread layer

Work has also started on the layer responsible for coupling related messages, with the addition of a clustering algorithm called hierarchical clustering. The hierarchical clustering algorithm starts with each message as a separate cluster; in each iteration it looks for the two clusters with the smallest ‘distance’ between them and combines them into one cluster. This distance is determined by the Euclidean distance function over the properties time and sender. The time distance is the difference in time between each pair of messages, while the sender distance is determined by the distance function described in Distance function for clustered categories. The hierarchical clustering algorithm expects a depth that indicates the number of iterations used to cluster the messages. If this value is too low, very few messages will be clustered, which adds no extra information, while a high value will put many messages in the same cluster, which is practically the same as not using clustering at all. The entropy is low both when the depth is too high and when it is too low, so a good depth is required to ensure a high entropy. Testing on the dataset below showed that expressing the depth as a fraction of the number of messages works better than using a fixed value, and that a factor of three fourths worked best. However, further refinement is required on different datasets and when extra properties are added to the distance function. The results in the table show that two threads are created. The thread with index 2 is especially interesting: the time differences between its messages are larger than those between other messages, which shows that the sender distance function also does its work.

| Time | Sender | Message | Thread id |

|---|---|---|---|

| 0 | John | test | 0 |

| 1 | John | spam | -1 |

| 2 | Jane | this is spam | -1 |

| 3 | Jan | real message | 0 |

| 4 | Henk | real good message | 0 |

| 5 | John | Is this good? | 0 |

| 6 | Henk | hello! shall we go to the beach? | 0 |

| 10 | John | Are you attending the lecture? | 2 |

| 11 | Jane | Yes, I am! | 2 |

| 12 | Henk | Yes, I am too! | 2 |

| 14 | Jan | No, I am on holiday | 2 |

| 16 | John | When will you be back? | 2 |

| 19 | Jan | I will be back tomorrow | 2 |

| 21 | Jane | Any of you know the answer to question 5? | -1 |

| 30 | Jane | ??? | -1 |

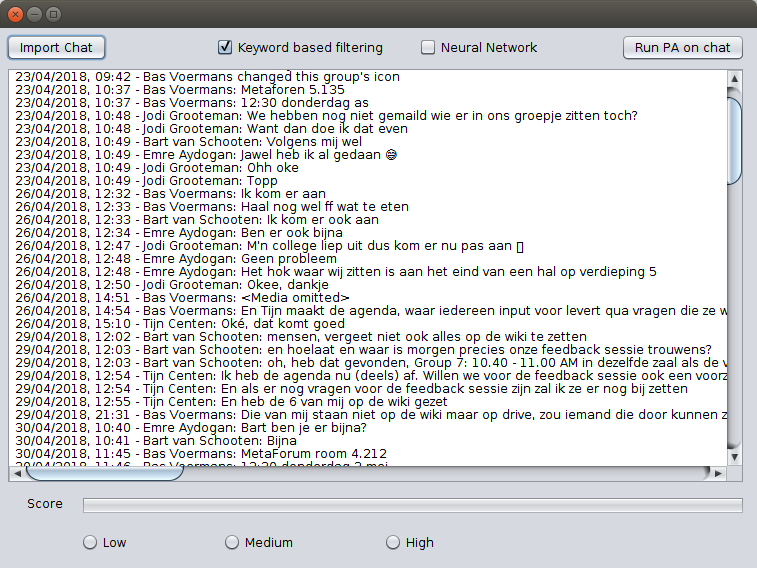
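The hierarchical clustering of the thread layer can be illustrated with the simplified Java sketch below. It is an assumption-laden reduction of the description: it clusters on time only (the sender distance is left out), it measures the distance between clusters as the difference of their mean times, and the class name `TimeClustering` is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

final class TimeClustering {
    /**
     * Agglomerative clustering on timestamps. The number of merge steps (the depth)
     * is expressed as a fraction of the number of messages.
     */
    static List<List<Double>> cluster(double[] times, double depthFactor) {
        List<List<Double>> clusters = new ArrayList<>();
        for (double t : times) {
            List<Double> single = new ArrayList<>();
            single.add(t);
            clusters.add(single); // every message starts as its own cluster
        }
        int depth = (int) (depthFactor * times.length);
        for (int step = 0; step < depth && clusters.size() > 1; step++) {
            int bestA = 0;
            int bestB = 1;
            double best = Double.MAX_VALUE;
            // Find the pair of clusters whose mean times are closest.
            for (int a = 0; a < clusters.size(); a++) {
                for (int b = a + 1; b < clusters.size(); b++) {
                    double d = Math.abs(mean(clusters.get(a)) - mean(clusters.get(b)));
                    if (d < best) {
                        best = d;
                        bestA = a;
                        bestB = b;
                    }
                }
            }
            // Merge the closest pair; bestA < bestB, so removing bestB keeps bestA valid.
            clusters.get(bestA).addAll(clusters.remove(bestB));
        }
        return clusters;
    }

    private static double mean(List<Double> cluster) {
        double sum = 0.0;
        for (double t : cluster) {
            sum += t;
        }
        return sum / cluster.size();
    }
}
```

With a low depth factor most messages stay in their own cluster, and with a factor close to 1 almost everything ends up in one cluster, which is the trade-off described for the depth parameter.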

GUI Design

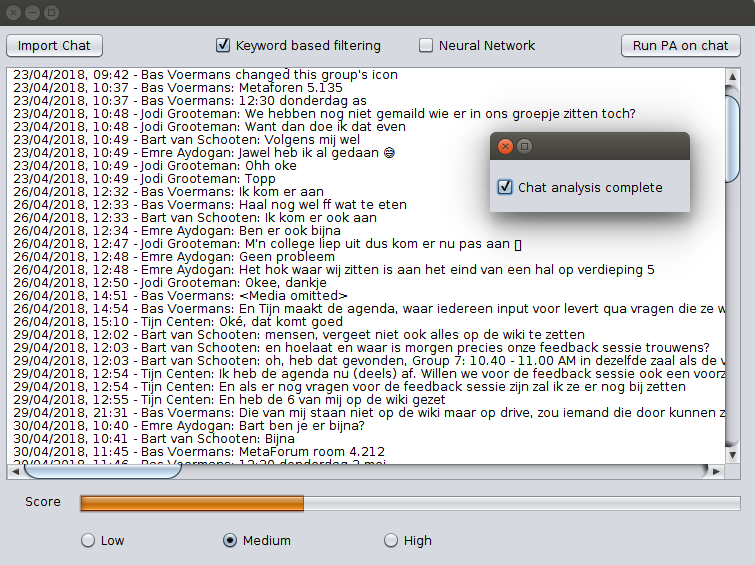

To make for a pleasant way of interacting with the PA prototype a GUI was designed in parallel with the actual implementation of the PA.

For the first iteration the functionality of the GUI was kept fairly limited. The user is able to import chats as .txt files through the file manager of the OS, which the GUI then shows in a text area. The user is then given the choice of which filters to apply to this chat. The last bit of interactivity this GUI offers is the button to run the PA on the imported chat with the selected filters. The actual run has yet to be implemented in a future version of the prototype.

What follows then is a pop up dialog that notifies the user that the analysis has been completed successfully. Furthermore, a random score is generated and shown to the user to further give an idea of how the GUI should function.

Iteration 2

- Start on user preferences

- Preprocessing and normalization

- Bayesian network evaluation

- Recurrent Neural Network evaluation

- Prototype structure improvements

- Create results summary

- Reading of chat data

User preferences

To give users the ability to express their own preferences regarding the degree of blocking notifications, creating threads and receiving feedback, a user preferences object was created that stores all preferences of the user. These preferences and settings can either be set by the user or be altered by learning from feedback. The thread depth factor is an example of the latter, since the depth itself does not mean anything to the user. The user can however indicate that messages are coupled wrongly, in the sense of too little or too much coupling, and with this answer the depth factor can be fine-tuned. Furthermore, the preferences of the categorization layer are already present. These indicate, for example, whether a question always needs to be blocked, always needs to be allowed, or needs to be processed automatically by the evaluation layer. This preference can be useful when a lot of questions are asked in a group that are not aimed at the user. The user preferences object can keep track of further preferences of other layers in the future.

Preprocessing and normalization

The preprocessing that is done in the program consists of several different parts. Each part is described below. Some of the parts have to be done after classifying what kind of sentence it is, for example the removal of punctuation since it is important for question classification. Because of this the preprocessing is split up into two layers. The first one is the preprocessing layer and the second one is the normalization layer. The normalization layer is executed after the classification layer is done. This makes it so that the preprocessing is still done before determining the importance of a message but after the classification of what kind of sentence it actually is.

Messages contain a lot of meaningless words. They give the sentence structure but they do not have any influence on the meaning of the message. These words can thus be removed from the sentence before analyzing the importance of the message. The words that are meant here are for example: “the”, “a”, “an” or “to”. All these words are put into an array, then the sentence is checked whether or not it contains words of this array. When the sentence contains one or more of these words then they are deleted from the sentence and the leftover of the sentence is propagated to the next step of the preprocessing.
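The stopword removal described above could be sketched like this. The class name `StopwordRemover` is hypothetical, a set is used instead of an array for faster lookup, and the list only contains the four example words from the text.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;

final class StopwordRemover {
    // Only the example words from the text; a real list would be longer.
    private static final Set<String> STOPWORDS = new HashSet<>(Arrays.asList("the", "a", "an", "to"));

    /** Returns the sentence without stopwords, keeping the original word order. */
    static String remove(String sentence) {
        StringBuilder out = new StringBuilder();
        for (String word : sentence.trim().split("\\s+")) {
            if (word.isEmpty() || STOPWORDS.contains(word.toLowerCase(Locale.ROOT))) {
                continue; // drop stopwords and empty tokens
            }
            if (out.length() > 0) {
                out.append(' ');
            }
            out.append(word);
        }
        return out.toString();
    }
}
```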

Translating verbs to their base form is part of the normalization and makes evaluation a lot easier. The first step is to split the sentence into words; each word is then checked to see whether it is a verb, and each verb is put in its base form. For this a library named JAWS is used. In combination with the WordNet dictionary, the JAWS library is able to convert a verb to its base form. The next step was finding the verbs in a sentence. This could be done using the Stanford POS tagger, which tags every word in a sentence with its word type, for example noun or verb. However, this requires a lot of computation time because the tagger's database is very big, so it is not implemented in the prototype. The alternative turned out to be simple: when the program passes every word in a sentence through the JAWS library, only the words that are actually verbs are changed and the other words are left untouched. When the processing is done, the only thing left to do is to join the words back together so that they form a message again.

Translating numbers to words is also part of the preprocessing and is done with an existing class that translates numbers to words. The only thing left to do is to detect where the numbers are in a sentence and replace them by calling the function in the existing class. The numbers in the sentence are found with a regular expression in Java, a tool that makes it easy to find special characters or numbers. When the numbers are replaced, the sentence is returned and passed to the next step of the preprocessing.

Most punctuation in a sentence does not indicate the importance of a message, so it is good to remove the punctuation before evaluating the message. Punctuation is however important for, for example, determining whether a message is a question. This step is therefore done after classifying the message but before evaluating its importance. Removing the punctuation is a very simple task, because regular expressions in Java can easily remove all punctuation. When this is done, the sentence is propagated to the next step of the program.
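The two regular-expression steps, replacing numbers and removing punctuation, might look as follows in Java. The class name `RegexPreprocessing` is hypothetical, and the existing number-to-words class is not shown in the text, so it is represented here by a `LongFunction<String>` parameter that the caller supplies.

```java
import java.util.function.LongFunction;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class RegexPreprocessing {
    private static final Pattern NUMBER = Pattern.compile("\\d+");

    /**
     * Replaces every run of digits with the text returned by toWords.
     * Assumes the digit runs fit in a long.
     */
    static String replaceNumbers(String sentence, LongFunction<String> toWords) {
        Matcher m = NUMBER.matcher(sentence);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            String words = toWords.apply(Long.parseLong(m.group()));
            // quoteReplacement keeps '$' and '\' in the words from being interpreted.
            m.appendReplacement(out, Matcher.quoteReplacement(words));
        }
        m.appendTail(out);
        return out.toString();
    }

    /** Removes ASCII punctuation; run this only after question classification. */
    static String removePunctuation(String sentence) {
        return sentence.replaceAll("\\p{Punct}", "");
    }
}
```

Note that `\p{Punct}` only covers ASCII punctuation, so curly quotes or emoji in chat messages would need additional handling.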

Bayesian network evaluation

The first evaluation method that has been created is the naïve Bayesian network evaluation. The Bayesian network is created with the Classifier4j library and is implemented as follows. The evaluation class consists of a Bayesian classifier and a word data source. While training, the text of each message is taught to the classifier as either spam or non-spam. The classifier then processes the text and keeps track of the number of occurrences of each word in spam and in non-spam. Messages are evaluated by computing the probability of the message being spam from the occurrences saved during training. Storing and loading the word data source is not supported by the library and was therefore implemented separately. The storing, loading and training functionalities are elaborated on below. The results of the Bayesian network are shown in the table below. They seem very promising, since all but one message is classified correctly. This is however still on the dummy messaging data; in the next iteration real data from a Whatsapp group that is classified by hand will be used. The results are generated by a network with the following structure: first a preprocessing layer, followed by a thread layer, a categorization filter and a normalization layer, and then the evaluation layer with the Bayesian evaluation method. The sentence that is wrongly classified as spam is “Is this good?”, which is similar to the sentence “real good message”. More training data would resolve the issue, but could also cause the network to perform worse, since Whatsapp messages are generally very short and lack good grammatical structure.

| Time | Sender | Message | Classified answer | Expected answer |

|---|---|---|---|---|

| 0 | John | test | spam | spam |

| 1 | John | spam | spam | spam |

| 2 | Jane | this is spam | spam | spam |

| 3 | Jan | real message | spam | spam |

| 4 | Henk | real good message | spam | spam |

| 5 | John | Is this good? | spam | good |

| 6 | Henk | hello! shall we go to the beach? | spam | spam |

| 10 | John | Are you attending the lecture? | good | good |

| 11 | Jane | Yes, I am! | good | good |

| 12 | Henk | Yes, I am too! | good | good |

| 14 | Jan | No, I am on holiday | good | good |

| 16 | John | When will you be back? | good | good |

| 19 | Jan | I will be back tomorrow | good | good |

| 21 | Jane | Any of you know the answer to question 5? | good | good |

| 30 | Jane | ??? | spam | spam |

| TP | 7 | FP | 0 |

| FN | 1 | TN | 7 |

| Total | 15 | ||

| Precision | 1.0 | ||

| Recall | 0.88 | ||

| Specificity | 1.0 | ||

| Accuracy | 0.93 |
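To illustrate the idea of counting word occurrences per class and turning them into a spam probability, a minimal self-contained naive Bayes sketch is given below. It does not use the Classifier4j API and its scoring may differ from that library; it is a plain multinomial naive Bayes with add-one smoothing, and all names are hypothetical.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Locale;
import java.util.Map;
import java.util.Set;

final class NaiveBayesSketch {
    private final Map<String, Integer> spamCounts = new HashMap<>();
    private final Map<String, Integer> goodCounts = new HashMap<>();
    private final Set<String> vocabulary = new HashSet<>();
    private int spamWords;
    private int goodWords;
    private int spamMessages;
    private int goodMessages;

    /** Teaches one message to the classifier as spam or non-spam. */
    void train(String text, boolean spam) {
        if (spam) {
            spamMessages++;
        } else {
            goodMessages++;
        }
        for (String w : tokenize(text)) {
            vocabulary.add(w);
            if (spam) {
                spamCounts.merge(w, 1, Integer::sum);
                spamWords++;
            } else {
                goodCounts.merge(w, 1, Integer::sum);
                goodWords++;
            }
        }
    }

    /** Posterior probability that the text is spam, computed in log space. */
    double spamProbability(String text) {
        int total = spamMessages + goodMessages;
        double logSpam = Math.log((spamMessages + 1.0) / (total + 2.0)); // smoothed prior
        double logGood = Math.log((goodMessages + 1.0) / (total + 2.0));
        double v = vocabulary.size() + 1.0; // one extra slot for unseen words
        for (String w : tokenize(text)) {
            logSpam += Math.log((spamCounts.getOrDefault(w, 0) + 1.0) / (spamWords + v));
            logGood += Math.log((goodCounts.getOrDefault(w, 0) + 1.0) / (goodWords + v));
        }
        return 1.0 / (1.0 + Math.exp(logGood - logSpam));
    }

    private static String[] tokenize(String text) {
        String cleaned = text.toLowerCase(Locale.ROOT).replaceAll("[^a-z0-9]+", " ").trim();
        return cleaned.isEmpty() ? new String[0] : cleaned.split(" ");
    }
}
```

A message is then classified as spam when `spamProbability` exceeds a chosen threshold such as 0.5. With very little training data, words shared between both classes can easily tip a short message to the wrong side, which is the kind of error seen for “Is this good?”.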

Recurrent Neural Network evaluation

Furthermore, Recurrent Neural Networks (RNN) have been assessed for viability as the second evaluation method. While training might be time consuming, neural networks have proven able to analyze sequences of text or music very well. For this iteration a library for neural networks has been chosen and a partial implementation has been made. The chosen library is DeepLearning4j, which supports a wide variety of neural networks for the Java programming language. While the library has a steep learning curve, there are some good examples, including an implementation of an RNN that categorizes reviews as positive or negative. The library works by setting up a network that expects so-called word vectors. From these vectors the network is able to train and to evaluate whether a message is spam or not. To feed messages into the network, they first need to be transformed into word vectors and then mapped onto the data structure that the library expects. The example uses a database of word vectors pre-trained by Google, called the Google News dataset, which ‘contains 300-dimensional vectors for 3 million words and phrases.’ This file is however 1.5GB in size and also requires a lot of memory to run the prototype. During testing, 3GB of memory resulted in an out-of-memory exception, and since it is desirable that the prototype can run locally on mobile devices, this option is not feasible. The next option was to create a custom word-to-vector database aimed at the grammatical structure of messages. Since the structure is simpler and the vocabulary is much smaller in these messages, this custom database can be much smaller. For now the database is trained on a piece of text called ‘warpiece’, since the reading and classifying of chats is not done yet. With this custom dataset the network is able to run, and only one of the fifteen messages could not be mapped to vectors, which is probably the message with only question marks. The network can now also be trained and evaluated, but further work is needed to get results out of the network.

Prototype structure improvements

In this iteration the prototype structure has again been improved, since it was previously difficult to read and change the structure of the layers because they needed to be written out from output to input. To solve this, a class has been created that receives the layers in processing order and then links the individual layers itself. The class also has easy-to-use methods that make tinkering with the structure very easy. This last improvement also comes into play when looking at how to save, load and train the complete prototype. All layers need to be able to process messages to produce output, but saving, loading and training might differ from layer to layer. To solve this, layers can implement interfaces that indicate the storing feature or the training feature. When one of these methods is then called on the prototype, only the layers that support saving, loading or training will actually perform it.
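The linking class and the capability interfaces described above can be sketched like this. All names (`Pipeline`, `PipelineLayer`, `Trainable`, `PlainLayer`, `LearningLayer`) are hypothetical stand-ins, and only the training capability is shown; storing and loading would follow the same pattern with their own interface.

```java
import java.util.ArrayList;
import java.util.List;

interface PipelineLayer {
    void setNext(PipelineLayer next);
}

/** Marker for layers that can learn from messages. */
interface Trainable {
    void train(List<String> messages);
}

/** Receives layers in processing order and links each one to its successor. */
final class Pipeline {
    private final List<PipelineLayer> layers = new ArrayList<>();

    Pipeline add(PipelineLayer layer) {
        if (!layers.isEmpty()) {
            layers.get(layers.size() - 1).setNext(layer);
        }
        layers.add(layer);
        return this; // allows chained add(...) calls
    }

    /** Trains only the layers that implement Trainable; returns how many were trained. */
    int trainAll(List<String> messages) {
        int trained = 0;
        for (PipelineLayer layer : layers) {
            if (layer instanceof Trainable) {
                ((Trainable) layer).train(messages);
                trained++;
            }
        }
        return trained;
    }
}

/** Layer without any special capability. */
class PlainLayer implements PipelineLayer {
    PipelineLayer next;

    @Override
    public void setNext(PipelineLayer next) {
        this.next = next;
    }
}

/** Layer that supports training; here it just counts the messages it has seen. */
class LearningLayer extends PlainLayer implements Trainable {
    int seen;

    @Override
    public void train(List<String> messages) {
        seen += messages.size();
    }
}
```

A network is then written in reading order, for example `new Pipeline().add(preprocessing).add(evaluation)`, and calling `trainAll` skips layers that have nothing to train.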

Results summary

To be able to easily analyze the results of a chat evaluation some important numbers are calculated that express the performance of the prototype depending on the amount of true and false positives and negatives. These include the precision, recall, specificity and accuracy for now but can easily be extended to gain extra information.
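The four measures follow directly from the counts of true and false positives and negatives. A minimal sketch, with the hypothetical class name `ResultsSummary`, is shown here; it does not guard against empty denominators, which would yield `NaN`.

```java
final class ResultsSummary {
    private final int tp;
    private final int fp;
    private final int fn;
    private final int tn;

    ResultsSummary(int tp, int fp, int fn, int tn) {
        this.tp = tp;
        this.fp = fp;
        this.fn = fn;
        this.tn = tn;
    }

    /** Fraction of messages classified positive that really are positive. */
    double precision() {
        return tp / (double) (tp + fp);
    }

    /** Fraction of the actual positives that were found. */
    double recall() {
        return tp / (double) (tp + fn);
    }

    /** Fraction of the actual negatives that were classified negative. */
    double specificity() {
        return tn / (double) (tn + fp);
    }

    /** Fraction of all messages that were classified correctly. */
    double accuracy() {
        return (tp + tn) / (double) (tp + fp + fn + tn);
    }
}
```

For the dummy data result with TP 7, FP 0, FN 1 and TN 7, this gives a recall of 7/8 and an accuracy of 14/15, which round to the 0.88 and 0.93 reported for the Bayesian evaluation.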

Reading of chat data

Iteration 3

- Preprocessing and Normalization

- Recurrent Neural Networks Evaluation

- Integrate chat file parser

- Intermediate results

Preprocessing and Normalization

In this iteration the preprocessing is extended with a feature that replaces abbreviations with their full form. The abbreviations that are used in Whatsapp are stored in an Excel file, which the program reads using the Apache POI library. Incoming messages are split into words, and every word is checked against the abbreviation list. When a word is in the list, it is replaced by its full text. All the words of the message are then put back together and the full message is propagated to the next part.
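The replacement step itself can be sketched as follows. Reading the Excel file with Apache POI is left out; the sketch assumes the abbreviations have already been loaded into a `Map` with lower-case keys, and the class name `AbbreviationExpander` is hypothetical.

```java
import java.util.Locale;
import java.util.Map;

final class AbbreviationExpander {
    /**
     * Replaces every word that appears in the abbreviation map by its full text.
     * The map is assumed to use lower-case abbreviations as keys.
     */
    static String expand(String message, Map<String, String> abbreviations) {
        StringBuilder out = new StringBuilder();
        for (String word : message.trim().split("\\s+")) {
            String full = abbreviations.get(word.toLowerCase(Locale.ROOT));
            if (out.length() > 0) {
                out.append(' ');
            }
            out.append(full != null ? full : word); // unknown words are kept as-is
        }
        return out.toString();
    }
}
```

Because words are matched as whole tokens, an abbreviation followed directly by punctuation (such as “brb!”) would not be found; this is one reason to think about the order of this step relative to punctuation removal.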

Recurrent Neural networks evaluation

The recurrent neural network evaluation method has also been improved so that the dummy data used earlier can be processed and gives correct results as output. However, when reading in data from real chats the evaluation method does not work flawlessly. This probably has to do with unstructured or unexpected messages that the prototype cannot cope with yet. The neural network itself can now also be stored and loaded. On the dummy data the neural network reaches an accuracy of 100%, which means that all 15 messages could be classified correctly after training. This is of course a small dataset, but it is an improvement on the Bayesian network results.

Feedback structure

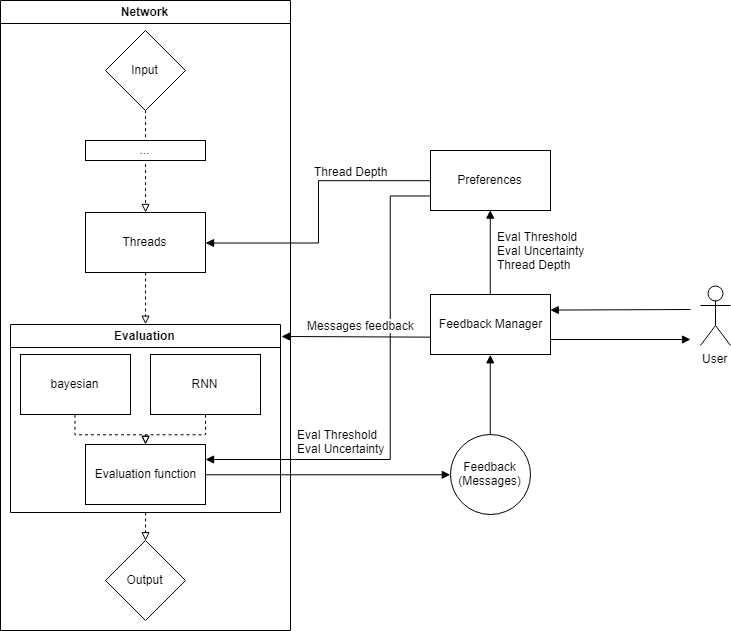

A way of giving feedback on the choices made by the network has also been created in this iteration. For message feedback, there is a certain ‘uncertainty’ range around the threshold that separates messages that are notification worthy from messages that are not. If the score of a message falls in this uncertainty range, the message is included in a feedback request that is sent to the user. There are multiple ways for the user to receive the feedback request, such as through a notification, through a provided application on the phone, or together with a batch of other feedback requests. Each layer in the network can listen for answered feedback requests of different types and only takes action when a predefined type is received. The types of feedback implemented for now are: message importance, number of feedback requests, number of blocked messages and number of threads. Some of these feedback requests can be sent out autonomously by a layer in the network, while others might be sent out periodically. The feedback structure can be seen in the figure to the right. If, for example, a message falls in the uncertainty range during the evaluation, it is sent to the feedback manager, which in turn propagates the message feedback request to the user. After the user has given feedback, the answer is received by the feedback manager and sent to the evaluation layer, where all evaluation methods can train on and process the given feedback. When feedback other than message importance is received by the feedback manager, it propagates the feedback results to the preferences. The preferences contain all hyperparameters that can be improved by learning from the user.
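The decision around the threshold and its uncertainty range can be expressed in a few lines of Java. The names `FeedbackDecision` and `Outcome` are hypothetical, and the sketch assumes the range is symmetric around the threshold.

```java
final class FeedbackDecision {
    enum Outcome { NOTIFY, BLOCK, ASK_FEEDBACK }

    /**
     * Scores within 'uncertainty' of the threshold trigger a feedback request;
     * all other scores are decided by the threshold alone.
     */
    static Outcome decide(double score, double threshold, double uncertainty) {
        if (Math.abs(score - threshold) < uncertainty) {
            return Outcome.ASK_FEEDBACK;
        }
        return score >= threshold ? Outcome.NOTIFY : Outcome.BLOCK;
    }
}
```

With an uncertainty of 0.0 the strict comparison never holds, so no feedback requests are generated and every message is decided by the threshold.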

Integrate chat file parser

The Whatsapp chat file parser has also been integrated into the prototype. The parser can read Whatsapp chats that are exported from Whatsapp through the ‘send chat by email’ option. This will send a chat in a text (.txt) format containing the date and time, the sender of the message and the message itself. Since the date format is different for different languages and regions the parser should also be able to cope with the different formats. For now the parser supports the United States, United Kingdom and the Dutch format which are the most common formats for the group chats that are analyzed for this prototype. Furthermore the parser also keeps track of a ‘contact book’ since the prototype wants to know which messages are coming from the same contact. This is especially important for the Thread layer.
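As a rough illustration of the parsing and the contact book, the sketch below splits one exported line into timestamp, sender and message. It assumes that each line has the layout `<timestamp> - <sender>: <message>`, which is an assumption about the export format and not taken from the text; the timestamp is kept as a raw string, so the region-specific date parsing is not shown. The class name `ChatLineParser` is hypothetical.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class ChatLineParser {
    // Contact book: maps each sender name to a stable numeric id.
    private final Map<String, Integer> contactBook = new LinkedHashMap<>();

    /**
     * Returns {timestamp, senderId, message}, or null when the line does not
     * match the assumed layout (for example a continuation of a multi-line message).
     */
    String[] parse(String line) {
        int dash = line.indexOf(" - ");
        if (dash < 0) {
            return null;
        }
        int colon = line.indexOf(": ", dash + 3);
        if (colon < 0) {
            return null;
        }
        String sender = line.substring(dash + 3, colon);
        Integer id = contactBook.get(sender);
        if (id == null) {
            id = contactBook.size(); // next free id for a new contact
            contactBook.put(sender, id);
        }
        return new String[] {line.substring(0, dash), String.valueOf(id), line.substring(colon + 2)};
    }
}
```

Giving every sender a stable id is what lets the thread layer compare senders across messages without relying on display names.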

Intermediate results

In this section the intermediate results of the current iteration of the prototype are shown. For now there are two English chats that have been classified by hand and can thus be used to train and evaluate the prototype. The first dataset consists of 178 messages, of which 146 are notification worthy and 32 are not. The second and larger dataset consists of 1505 messages, of which 959 are notification worthy and 546 are not. More chats are available, but these need to be classified by hand first before anything can be said about the results.

The network that is used to generate the following results is the following:

Preprocessing -> Threads -> Categorization -> Normalization -> Evaluation (Bayesian) -> Output

The following hyperparameters are used:

| Batch Size | 200 |

| Thread depth | 0.75 |

| Evaluation Threshold | 0.5 |

| Evaluation Uncertainty | 0.0 |

The first small dataset processed on a network trained on the same dataset took 5.3 seconds to train and process:

| TP | 145 | FP | 5 |

| FN | 1 | TN | 27 |

| Total | 178 | ||

| Precision | 0.97 | ||

| Recall | 0.99 | ||

| Specificity | 0.84 | ||

| Accuracy | 0.97 |

Although the network is evaluated on the same dataset it was trained on, these results show that the Bayesian network can distinguish between messages. The relatively high number of false positives is not too worrying, since a high percentage of false positives is preferable to a high percentage of false negatives: people would rather receive messages that are not too important than miss out on important messages.

The larger dataset processed on a network trained on the same dataset took 24.3 seconds to train and process:

| TP | 929 | FP | 171 |

| FN | 30 | TN | 375 |

| Total | 1505 | ||

| Precision | 0.84 | ||

| Recall | 0.97 | ||

| Specificity | 0.69 | ||

| Accuracy | 0.87 |

These results show that the network performs slightly worse on the larger dataset, even though it has been trained on that same dataset. It is however still more desirable to have more false positives than false negatives.

The small dataset processed on a network trained on the large dataset took 3.9 seconds to process:

| TP | 132 | FP | 7 |

| FN | 14 | TN | 25 |

| Total | 178 | ||

| Precision | 0.95 | ||

| Recall | 0.90 | ||

| Specificity | 0.78 | ||

| Accuracy | 0.88 |

Compared to the results of the network trained on the small dataset, this test performed slightly worse, which is understandable since the network had never seen these 178 messages before. For this reason the results are still very promising, and with more fine-tuning they could be improved further. The data shows that especially the number of false negatives increased, which is not desirable for the reasons described earlier in this subsection.

Preprocessing

The input the program gets is usually very raw. When analyzing e-mails, the input is typically a clean piece of text without typos or strange, unimportant messages in between. In contrast, the program that is being built has to take into account that Whatsapp messages contain many typos and many strange text messages that do not seem to mean anything at first sight. Users who are more familiar with Whatsapp language get to know abbreviations that do not exist in normal spoken language. Preprocessing is therefore necessary to make it much easier for the program to “read” and interpret the messages.

Removing words like “the”, “is”, “was” and “where” might be a good idea to implement. Generally these words, called stopwords, are not important to the meaning of a message. This would be implemented with a list of stopwords, the stoplist: all messages are scanned for stopwords, which are then removed from the message. Removing stopwords would benefit the program because it leaves less clutter and fewer unimportant words to analyze. However, it would make it harder to identify questions, as it removes one of the most important parts of the message that identifies it as a question. This preprocessing step therefore fits better after the messages have been categorized, as a processing step somewhere in the middle of the program.

An addition to the preprocessing that other research papers suggested is to translate all verbs back to their root form. This is called stemming. For example, in a sentence containing the word “talking”, it would be replaced with “talk”, and all other forms of the verb would be replaced with “talk” as well. The set of words that has to be checked then becomes a lot smaller, because the list only needs one word instead of, for example, five different forms of that verb.

Threads

To get more out of single messages, multiple messages can be coupled into threads. With this the program can analyze a conversation instead of a single message. Within a conversation the topic does not change much, so a single message may address a topic that is not literally stated in that message. Looking at the context, that is the other messages, usually reveals the topic that the message addresses. This is why threads are a great tool for analyzing messages.

The next question is when messages should be coupled together in a thread. The most important property that the program takes into account is time: when messages fall in the same time interval they are coupled. This is a very basic but good implementation. The second property that will be implemented is the sender of a message. When the same person sends several messages right after each other, chances are high that those messages address the same topic, so they should be put into one thread.

The paper suggests the use of K-means or NMF to cluster the messages. K-means works well when the clusters are hyper-spherical, which is not the case for the message clusters in this implementation. Also, for both K-means and NMF the number of clusters has to be predefined, while in the case of clustering messages the number of clusters is not known beforehand. A third clustering algorithm is hierarchical clustering. This algorithm starts by giving every instance its own cluster and then keeps combining clusters until it converges. This works well for this implementation because hierarchical clustering does not need a predefined number of clusters. However, a depth limit has to be specified, which could be a disadvantage for this implementation.

Clustering based on time is fairly easy because every timestamp is a number, so the program can cluster on the distance between messages using the Euclidean distance function or another distance function, where the distance is the difference in time between the means of two clusters. Clustering based on who sent the message is harder. Euclidean and similar distance measures cannot be applied to contacts, so a different method was needed that works with categories instead of numbers. It is described below.
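A minimal sketch of hierarchical (agglomerative) clustering on timestamps, using the difference between cluster means as the distance, could look like this. The function name and the `max_distance` stopping threshold are assumptions made for the example; the threshold stands in for whatever stopping rule the real implementation uses.

```python
def mean(values):
    return sum(values) / len(values)


def cluster_timestamps(timestamps, max_distance):
    """Agglomerative clustering of timestamps on the distance between cluster means.

    Starts with one cluster per timestamp and keeps merging the closest pair
    until the closest pair is further apart than `max_distance`.
    """
    clusters = [[t] for t in sorted(timestamps)]
    while len(clusters) > 1:
        # Clusters stay contiguous in sorted order, so their means are sorted
        # and the closest pair is always a pair of neighbours.
        gaps = [mean(clusters[i + 1]) - mean(clusters[i]) for i in range(len(clusters) - 1)]
        i = min(range(len(gaps)), key=gaps.__getitem__)
        if gaps[i] > max_distance:
            break
        clusters[i:i + 2] = [clusters[i] + clusters[i + 1]]
    return clusters


clusters = cluster_timestamps([0, 10, 20, 500, 510, 2000], max_distance=60)
print(clusters)
```

No number of clusters is given in advance; the result depends only on the timestamps and the stopping threshold.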

Distance function for clustered categories

To compute a reasonable distance measure for categories that are uniformly distributed, meaning that the individual differences are equal, inspiration was taken from the Levenshtein distance. The Levenshtein distance itself is not quite what is desired for categories such as the sender, because it also takes order and length into account. If the order or length differs between two clusters containing the same two senders, their distance could be greater than the distance between two equal-length clusters with different senders.

The next step was to sketch multiple clusters with different lengths and sender configurations. Some general rules were established to get an idea of which cluster pairs should receive a higher distance than others. For example, two equal clusters should have a distance of 0 and two completely different clusters should have a distance of 1. Then the cluster ABB was compared with the clusters AB, AC and C, with the desired order of increasing distance being AB, AC, C. To establish the distance, a fraction is formed whose denominator is the total number of messages in the two clusters together and whose numerator is the number of messages in either cluster whose sender does not occur in the other cluster. As can be seen in the table below, this gives the desired result. To balance this distance function against the other possible distance functions, a multiplication factor has been added that scales the distance depending on the importance of the clustering categories.

clusterDistance(c1, c2):
    diffNumber = #{c1 - c2} + #{c2 - c1}
    totalNumber = #{c1} + #{c2}
    return diffNumber / totalNumber

|     | A | B | C | AB  | AC  | ABB |
|-----|---|---|---|-----|-----|-----|
| A   | 0 | 1 | 1 | 1/3 | 1/3 | 2/4 |
| B   | x | 0 | 1 | 1/3 | 1   | 1/4 |
| C   | x | x | 0 | 1   | 1/3 | 4/4 |
| AB  | x | x | x | 0   | 2/4 | 0/5 |
| AC  | x | x | x | x   | 0   | 3/5 |
| ABB | x | x | x | x   | x   | 0   |
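The distance function can be sketched in Python as follows. The representation of a cluster as a sequence of sender labels (one per message) and the `weight` parameter name for the multiplication factor are assumptions made for this example; clusters are assumed to be non-empty.

```python
from fractions import Fraction


def cluster_distance(c1, c2, weight=1):
    """Sender-based distance between two non-empty clusters of messages.

    Each cluster is a sequence of sender labels, one per message.
    The numerator counts the messages whose sender does not occur in the
    other cluster; the denominator is the total number of messages.
    """
    senders1, senders2 = set(c1), set(c2)
    diff_number = sum(s not in senders2 for s in c1) + sum(s not in senders1 for s in c2)
    total_number = len(c1) + len(c2)
    return weight * Fraction(diff_number, total_number)


# Compare ABB with AB, AC and C: the distances should increase in that order.
for other in ("AB", "AC", "C"):
    print(other, cluster_distance("ABB", other))
```

Using `Fraction` keeps the results exact, so they can be compared directly with fractions worked out by hand.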

Classic Naive Bayes

In order to design a new technique to classify the relevance of messages, it is necessary to first look at established techniques that approximate the goal of a WhatsApp spam filter. The first technique that comes to mind is the use of Bayes classifiers. Naïve Bayes classifiers are a popular technique for e-mail filtering. Typically, spam is filtered using a bag-of-words technique, in which words are used as tokens to calculate, according to Bayes' theorem, the probability that an e-mail is spam or not spam (ham).
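For reference, Bayes' theorem in this setting can be written as follows, where $C$ stands for a class (spam or ham) and $M$ for the observed message; these symbols are notation chosen here for illustration:

$$P(C \mid M) = \frac{P(M \mid C)\,P(C)}{P(M)}$$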

To demonstrate how a naïve Bayes spam filter might work, consider a database of some number X of spam messages and 2X ham messages. The task is to classify new e-mails as they arrive, based on the existing messages.

Since there are twice as many ham messages as spam messages, a new (still unobserved) message is twice as likely to be ham as to be spam. In Bayesian terms, this probability is known as the prior probability. Priors are based solely on previous observations.

With the priors formulated, the program is ready to classify a new message. The message is broken up into words and each word is looked up in the conditional probability table of all words in the database. Through this process the likelihood of the message under the spam class and under the ham class is calculated.

Finally, the posterior probability of the message belonging to each class is calculated, and the message is assigned to the class with the higher posterior.
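As a worked version of this example (the symbols $w_1, \dots, w_n$ for the words of a message and $C$ for the class are notation chosen here), the priors follow directly from the message counts:

$$P(\text{spam}) = \frac{X}{X + 2X} = \frac{1}{3}, \qquad P(\text{ham}) = \frac{2X}{X + 2X} = \frac{2}{3}$$

Under the naive assumption that words occur independently given the class, the posterior is proportional to the prior times the per-word likelihoods, and the message is assigned to the class with the larger value:

$$P(C \mid w_1, \dots, w_n) \propto P(C) \prod_{i=1}^{n} P(w_i \mid C)$$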

Words have a certain probability of occurring in either spam or ham. The filter does not know these probabilities in advance; it needs to be trained first so that they can be built up. For instance, the spam probability of words like “Sex” or “Nigerian” is generally higher than that of the names of family members and friends. Once the agent is trained, the likelihood functions are used to compute the chance that an e-mail with a particular set of words belongs to either the spam or the ham class. One of the biggest advantages of Bayesian spam filtering is that the filter can be trained per user, creating a personal spam filter. This training is possible because the spam a user receives correlates with that user’s activities. Eventually a Bayesian spam filter will assign higher probabilities based on the user’s patterns. This property makes a Bayesian classifier particularly attractive for WhatsApp spam filtering, as the types of messages received vary widely between users of the app. Bayesian classifiers might also assign accurate probabilities to messages received from different groups, as the group name can be used as a token as well.
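The training and classification steps can be sketched with a small bag-of-words classifier. This is an illustrative sketch, not the project's implementation: the class name `NaiveBayesFilter`, the whitespace tokenization and the Laplace (add-one) smoothing for unseen words are assumptions made here, and both classes are assumed to have at least one training message.

```python
import math
from collections import Counter


class NaiveBayesFilter:
    """Bag-of-words naive Bayes with Laplace (add-one) smoothing."""

    def __init__(self):
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.message_counts = Counter()

    def train(self, text, label):
        """Update the per-class message and word counts with one labelled message."""
        self.message_counts[label] += 1
        self.word_counts[label].update(text.lower().split())

    def log_posterior(self, text, label):
        """Log of prior times word likelihoods; the shared evidence term is omitted."""
        vocabulary = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_words = sum(self.word_counts[label].values())
        score = math.log(self.message_counts[label] / sum(self.message_counts.values()))
        for word in text.lower().split():
            count = self.word_counts[label][word]
            score += math.log((count + 1) / (total_words + len(vocabulary)))
        return score

    def classify(self, text):
        """Return the class with the higher posterior."""
        return max(("spam", "ham"), key=lambda label: self.log_posterior(text, label))


spam_filter = NaiveBayesFilter()
spam_filter.train("win free money now", "spam")
spam_filter.train("are we meeting for lunch tomorrow", "ham")
spam_filter.train("free lunch with the group tomorrow", "ham")

print(spam_filter.classify("win money now"))
print(spam_filter.classify("lunch tomorrow"))
```

Because the counts are updated per labelled message, the same structure could be trained separately for each user, and a group name could be added to the token list of a message in the same way as a word.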

Research questions

Feedback before using the prototype

The easiest and probably most obvious way to get personal data from users is to give them a form to fill in. This form would consist of some general questions such as: “Are you a student?”, “If so, where are you studying?”, “Do you consider positive enforcing messages important? (think of a confirmation or a compliment)” and so on. Such a form has the advantage that the program can be personalized from the moment it starts doing its job. The program would also need less feedback from the user while it improves during use, because it has already received a lot of information. The disadvantage of such a form is that the program makes an assumption based on each answer. For example, when users say that football is their hobby, the program takes a list of football keywords and notifies the user whenever one of those keywords is sent. But a user who plays football as a hobby may not be interested at all in what happens in the Champions League. Another disadvantage of a form is that the user might not want to take the time to fill it in. Furthermore, when the user indicates that he or she likes everything on the form, the program will not do anything. Considering this, the choice has been made not to include a form at the beginning.

Contact biases

Considering the user’s contacts is a very important aspect of making the program more personal. This can be done in a couple of different ways.

The first option is to label each contact with a tag, for example “Peer”, “Teacher” or “Brother”. In reality there would be many different tags. When a contact does not fit any existing tag, the user would be able to create a new tag and give it an importance rating. The user would assign a tag to a sender upon receiving a message from him or her. This tagging would be done only once, the first time the user receives a message from that sender. The advantage is that the program can use the information from the tag to get a better view of the importance of a message. The biggest disadvantage is that the user would have to do a lot of tagging in the beginning.

The other option is to consider whether the user has the contact in his or her phone and to assign a priority to the contact accordingly. There are three priorities for a contact: low, medium and high. When the contact’s number is already in the user’s phone, the contact is set to medium priority. When the contact’s number is not in the user’s phone, the contact is set to low. The user can manually adjust the priority of a contact in the user interface. This solution is attractive because the user does not have to do a lot of work in the beginning. In addition, the program is able to consider the contact when determining the importance of a message, and the user can customize the priorities when desired.
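The priority rule described above can be sketched as a small lookup with manual overrides. The class and method names and the phone numbers are hypothetical, made up for this example.

```python
from enum import IntEnum


class Priority(IntEnum):
    LOW = 0
    MEDIUM = 1
    HIGH = 2


class ContactPriorities:
    """Default priority from the phone's contact list, with manual overrides."""

    def __init__(self, phone_contacts):
        self.phone_contacts = set(phone_contacts)
        self.overrides = {}  # manual adjustments made by the user

    def set_priority(self, number, priority):
        """Record a manual adjustment for one contact."""
        self.overrides[number] = priority

    def priority_of(self, number):
        """Manual override if present, else medium for known numbers and low otherwise."""
        if number in self.overrides:
            return self.overrides[number]
        return Priority.MEDIUM if number in self.phone_contacts else Priority.LOW


priorities = ContactPriorities(phone_contacts={"0600000001", "0600000002"})
priorities.set_priority("0600000002", Priority.HIGH)

for number in ("0600000001", "0600000002", "0600000003"):
    print(number, priorities.priority_of(number).name)
```

Keeping the overrides separate from the contact list means the defaults require no work from the user, while manual adjustments always take precedence.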

Taking both options into consideration, the choice has been made to implement the second option, because the advantages of being able to customize when desired and not having to do a lot of work up front are decisive.

Feedback while using prototype

Getting feedback from the user is always important to consider. Users are usually more satisfied when something or someone cares about their opinion. From a use perspective, a feature that takes the user’s opinion into account would be a great addition. The question then arises of how best to do this.

The easiest approach would be to let users indicate after every message whether or not it was useful. This option is easier to implement but rather annoying for the user. The program would get a lot of information to learn from and would probably be able to filter better. On the other hand, the user would have to give a lot of feedback. Imagine getting 100 messages in an hour in a group chat: the user would then be asked 100 times whether or not the program did well. This is a huge disadvantage that outweighs the advantages of this solution.

The next solution requires significantly less feedback from the user. The program uses different algorithms to classify messages. When the different algorithms do not give matching answers, the user is asked for feedback. In this way the program can learn from the cases that are unclear to it, and the user does not have to give a lot of feedback. Also, because the program improves itself, it asks for less feedback over time. In theory this solution should work much better than the first one, so the choice has been made to implement it. It fits the use aspect well, as the user gives feedback without being annoyed by the amount of feedback required.
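The disagreement rule can be sketched as follows. The function names, the label strings and the two stand-in classifiers are hypothetical; each classifier is assumed to be a function that maps a message to a label, and at least one classifier is assumed to be present.

```python
def needs_feedback(predictions):
    """Return True when the classifiers do not all give the same label."""
    return len(set(predictions)) > 1


def classify_with_feedback(message, classifiers, ask_user):
    """Ask the user only when the classifiers disagree about a message."""
    predictions = [classifier(message) for classifier in classifiers]
    if needs_feedback(predictions):
        return ask_user(message), True   # label from the user, feedback was requested
    return predictions[0], False         # unanimous label, no feedback needed


# Two stand-in classifiers, used only to demonstrate the control flow.
def always_relevant(message):
    return "relevant"


def keyword_based(message):
    return "relevant" if "exam" in message.lower() else "irrelevant"


asked = []


def ask_user(message):
    asked.append(message)  # in a real app this would prompt the user
    return "irrelevant"


print(classify_with_feedback("Exam moved to Friday", [always_relevant, keyword_based], ask_user))
print(classify_with_feedback("lol", [always_relevant, keyword_based], ask_user))
```

The label returned by `ask_user` could then be fed back into the classifiers as training data, which is what would make the number of feedback requests drop over time.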

Communicate with the university infrastructure

In the designed prototype, the only action the agent can take that affects the user is to show or hide messages. However, it could prove advantageous to have the agent operate in more ways than just that. If, for example, the user receives a lot of messages about an upcoming group meeting and the agent has access to the user’s timetable, the agent could easily filter these messages into one category. Conversely, if a few group members schedule an appointment and invite the user over WhatsApp, the agent could add an event to the user’s calendar. This would be a very useful tool for people who tend to forget to actually schedule planned meetings. These new ways to act could also have a downside, because they introduce complexity into the agent. For example, each course a user takes would have different relevant keywords, and the dataset would then have to contain keywords for each course, which could slow the agent down. This challenge will not be tackled in this study, but research into it could be useful for future studies.

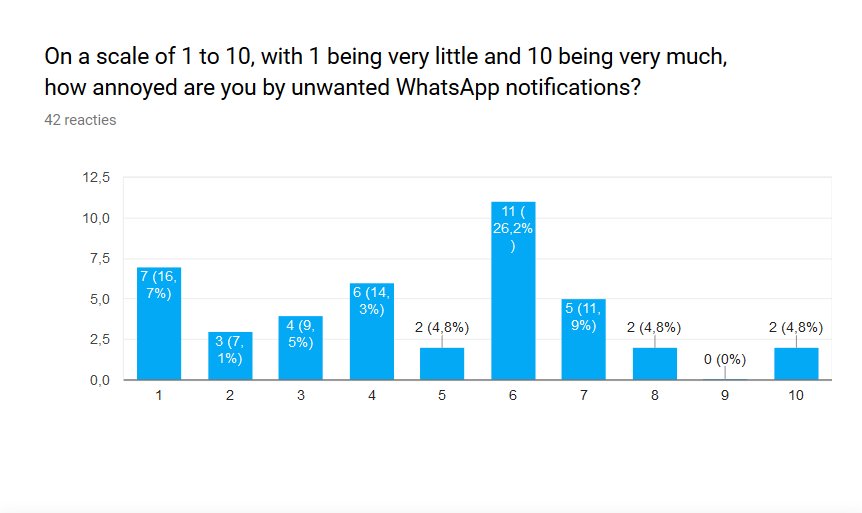

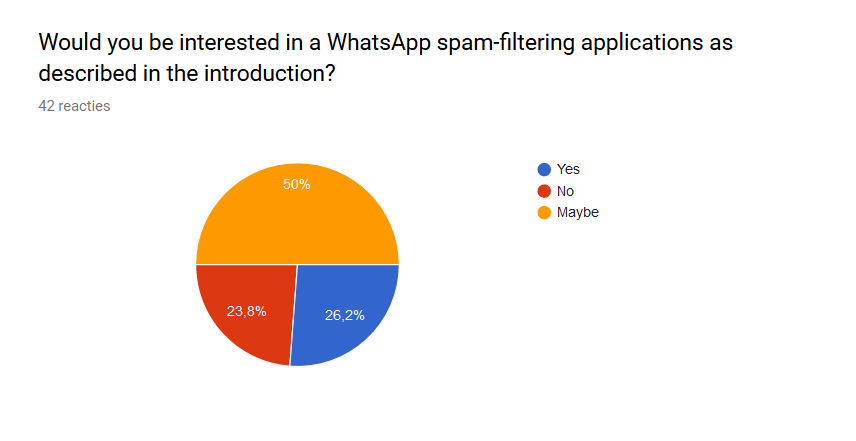

User Survey

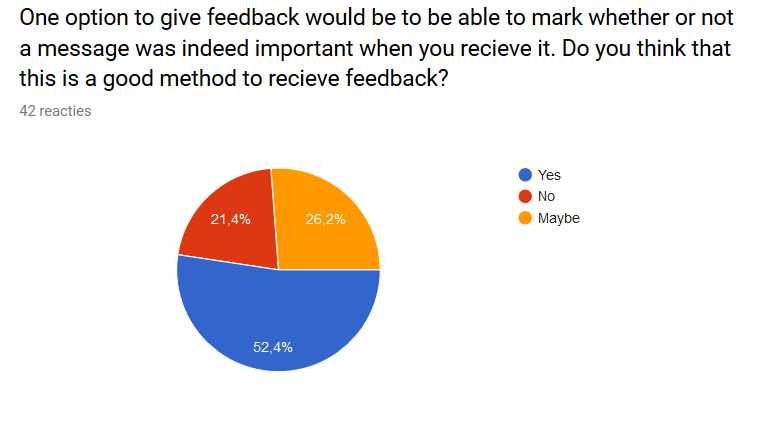

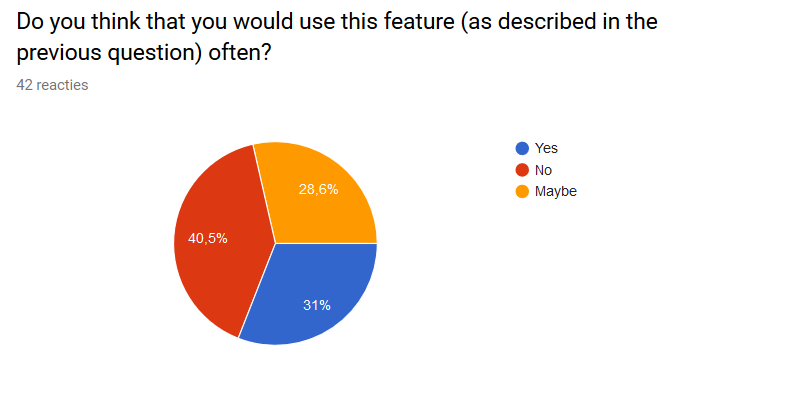

Since the team members are themselves potential users of the product, they already have a general idea of what the user wants. However, the team consists of only 6 people, and opinions may vary greatly. To get a better idea of what other users would want from the product, a survey was created. The survey was sent to other students of TU/e, so almost all responses are from students. This can influence the results, but since the product is also targeted at these students, the responses still seem representative of the potential users. 42 people filled in the survey. The survey itself can be found here: Survey Regarding WhatsApp Spam Filtering

The goal of the first question was to see how big the problem that the team tries to solve actually is. The number of people who are not very annoyed by WhatsApp notifications is quite large: 20 out of the 42 people (47.6%) answered with a 4 or lower. This is the same number of people that answered with a 6 or higher. Overall, it can be concluded that the problem, although smaller than initially thought, is indeed present to a certain degree among students of the TU/e. The full result can be seen below: