PRE2024 3 Group17


Revision as of 12:00, 24 February 2025

Students

| Name | Student number | Email |
|---|---|---|
| Bridget Ariese | 1670115 | b.ariese@student.tue.nl |
| Sophie de Swart | 2047470 | s.a.m.d.swart@student.tue.nl |
| Mila van Bokhoven | 1754238 | m.m.v.bokhoven1@student.tue.nl |
| Marie | 1739530 | m.a.a.bellemakers@student.tue.nl |
| Maarten | 1639439 | m.g.a.v.d.loo@student.tue.nl |
| Bram van der Heijden | 1448137 | b.v.d.heijden1@student.tue.nl |

Task division

| Name | Week 1 | Week 2 | Total time spent |
|---|---|---|---|
| Bridget | Lecture + group meeting + state of the art (6 articles) | Group meeting + tutor meeting + write up two use cases | 12 hours |
| Sophie | Lecture + group meeting + state of the art (3-4 articles) + writing the problem statement | Group meeting + tutor meeting + specify deliverables and approach + make a concise planning | 15 hours |
| Mila | State of the art (3-4 articles) + users, milestones, deliverables, task division | Create survey | |
| Marie | State of the art (3-4 articles) + approach | Write up state of the art | 3 hours |
| Maarten | State of the art (6 articles) | Research how to train a GPT + start building one | 10 hours |
| Bram | State of the art (3-4 articles) + user requirements | Write up problem statement | |

After the tutor meeting on Monday, we’ll meet as a group to go over the tasks for the coming weeks. Based on any feedback or new insights from the meeting, we’ll divide the work in a way that makes sense for everyone. Each week, we’ll check in, see how things are going, and adjust if needed to keep things on track. This way, we make sure the workload is shared fairly and everything gets done on time.

Problem statement

In this project, we will research mental health challenges, focusing specifically on stress and loneliness, and explore how social robots and Artificial Intelligence (AI) could assist people facing these problems. Mental health concerns are on the rise, and stress and loneliness are particularly prevalent in today's society. Factors such as the rapid rise of social media and the increasing use of technology in everyday life contribute to higher levels of emotional distress. Loneliness in particular is increasing, due to both societal changes and technological advancements. First, social media and technology are increasingly replacing real-life contact with superficial interactions that do not fulfill deep emotional needs. Second, the shift to remote working and online learning means fewer face-to-face interactions, leading to weaker social bonds.

Seeking professional help can be a difficult step to take due to stigma, accessibility issues and financial constraints. Long waiting times for psychologists make it even harder for individuals to access professional help. Both the stigma and the rise in stress and loneliness are especially apparent among adolescents and young adults. With the ever-increasing amount of study material that the education system expects students and children to master, study-related stress is becoming a larger problem by the day.

Many students struggling with mental health challenges such as loneliness and stress feel that their issues are not ‘serious enough’ to seek professional support, even though they might need it. And even when their problems are serious enough to consult a professional, they often have to wait a long time before therapy actually starts, as waiting lists have grown significantly over the past years. Robots and AI technologies might help bridge this gap by offering accessible mental health support that does not carry the same stigma as therapy. The main benefit is that AI-based support can be accessed at any time, anywhere.

This paper will focus on a literature review of the use of social robots and Large Language Models (LLMs) to support students and young adults with loneliness and stress, two common and relatively minor mental health issues. The study will start by reviewing the stigma, needs and expectations of users with regard to AI in mental health, as well as the current state of the art and its limitations. Based on this information, a framework for a mental health LLM will be constructed, either in the form of a GPT or as a set of guidelines that mental health GPTs should follow. Finally, a user study will be conducted to analyze the effectiveness of the proposed framework.

(Old problem statement: In this project we will be researching mental health challenges, specifically focusing on stress and loneliness, and exploring how social robots and Artificial Intelligence (AI) could assist people with such problems. Mental health concerns are on the rise, with stress and loneliness being particularly prevalent in today's society. Factors such as the rapid rise of social media channels and the increasing use of technology in our everyday life contribute to higher levels of emotional distress. Additionally, loneliness is increasing in modern society, due to both societal changes and technological advancements. First, the increasing use of social media and technology is replacing real-life interaction with superficial interactions that don’t fulfill deep emotional needs. Second, the shift to remote working and online learning means fewer face-to-face interactions, leading to weaker social bonds. Also, with increasing life expectancy, there are more elderly people. Elderly people are at a higher risk of loneliness since they might live alone after losing partners, friends and family.

Seeking professional help can be a difficult step to take due to stigma, accessibility issues and financial constraints. There are long waiting times for psychologists, making it difficult for individuals to access professional help. Many students, as well as other individuals, struggling with mental health challenges such as loneliness, depression, anxiety or stress, often feel that their issues aren’t ‘serious enough’ to seek professional support, even though they might be in need of some help. Robots as well as Artificial Intelligence (AI) technologies might be solutions to bridge this gap between those who require help and the availability of mental health resources. In this project, we will specifically focus on the use of social robots and Large Language Models (LLMs) and their potential role in providing mental health support.

Beyond individual use, robots could be introduced in the therapeutic field, assisting professionals by monitoring patients' well-being over time, collecting data, or providing guided therapy sessions in structured environments. They could provide emotional support in a way that is more accessible and cost-effective. However, this raises critical ethical considerations, particularly regarding data privacy and emotional dependence. Users may share sensitive personal experiences with these robots or technological applications, raising concerns about how this data is stored and used. Additionally, there is the risk that individuals may form emotional attachments to AI-based companions.

Our research will mainly focus on conducting a literature review and gathering insight through qualitative or quantitative user studies to understand the needs and expectations of the users. Additionally, if it aligns with our research objectives, we may build and train some form of GPT as a prototype product to explore its feasibility in providing mental health support.

Through this project, we aim to explore the potential benefits, limitations and ethical considerations of integrating robots and LLMs into the mental health support system. By analyzing existing technologies, exploring user needs and potentially addressing the limitations of existing solutions in new prototypes, we hope to gain insights into how robotics can positively impact mental well-being in an increasingly technology-driven world.)

Users

Our target users are students who are struggling with mental health challenges, specifically loneliness and stress. We focus on three groups: students who feel their problems are not 'serious' enough to see a therapist, students who have to wait a long time to see a therapist and need something to bridge the wait, and students who struggle to seek help and would benefit from an easier alternative.

Personas and use cases

Joshua just started his second year in Applied Physics. Last year was stressful for him because he had to obtain his BSA (binding study advice), and now that this pressure has decreased, he knows he wants to enjoy his student life more. But he doesn’t know where to start. All his classmates have already formed groups and friendships, and he starts feeling lonely. It's hard for him to step out of his comfort zone and go to an association alone, and his insecurities make him feel even more alone. He feels like he has nowhere to go, which makes him isolate himself even more and deepens his low mood.

He knows this is not what he wants, and he wants to find something to help him. It's hard to admit this to anyone, and hard to put into words. Therapy would be a big step, and getting an appointment with a therapist would take too long. He needs a solution that doesn’t feel like a big step and is easily accessible.

Olivia, a 21-year-old Sustainable Innovation student, has been very busy with her bachelor end project for the past few months. The project has often been very stressful and left her feeling overwhelmed. She has always struggled with planning and asking for help, and this has been a major source of stress during this project.

It is currently 13:00, and she has already been working on her project for four hours today, taking only a 15-minute break to quickly get some lunch. Olivia has to work tonight, so there are several tasks she wants to finish before dinner. Without really realizing it, she has been powering through her stress, working relentlessly on all kinds of things without a clear structure in mind, and has become quite overwhelmed.

With her busy schedule and strict budget, seeing a therapist is an option she has never explored. Olivia did not grow up in an environment where stress and mental problems were discussed openly and respectfully, and she has always struggled to ask for help with these problems. However, last week she found an online help tool and has used it a few times to calm down when things get too intense. On the screen is an AI therapist. This therAIpist made it easier for Olivia to accept that she needed help and to look for it. She finds it increasingly easy to formulate her problems, and the additional stress of talking to someone about them has decreased. The tool gives Olivia a way to talk about her problems and get advice.

When she is done explaining her problems to the AI tool, it applauds Olivia for taking care of herself and asks whether she might also benefit from talking to a human therapist. Olivia realizes this would really help her and decides to seek help from a professional institution. While she waits for an appointment, she can keep using the AI tool in situations where help is needed quickly and discreetly.

User requirements

- Help users achieve personal/emotional progress on their mental health struggles.

- Encourage people to open up and talk about their issues with family or friends.

- Make people feel comfortable chatting with and opening up to the AI.

- Handle user data with care, for example by not sharing or leaking personal data and by asking for consent when collecting data.

Existing mental health chatbots

There are already some existing mental health chatbots. Some examples are:

- Woebot: According to its website: Woebot is a fully-automated mental health ally you can chat with through an app on your smartphone or tablet, anytime day or night. Woebot invites you to monitor and manage your mood using tools such as mood tracking, progress reflection, gratitude journaling, and mindfulness practice. Woebot is intended to help you manage mood and anxiety symptoms and can be used as a mental health support tool to supplement treatments, therapies, or self-care practices. https://woebothealth.com/referral/

How it works: Woebot starts a conversation by asking you how you’re feeling and, based on what you share, Woebot suggests tools and content to help you identify and manage your thoughts and emotions and offers techniques you can try to help you feel better. Woebot’s conversations are written by conversational writers using elements from evidence-based approaches like Cognitive Behavioral Therapy (CBT), Interpersonal Psychotherapy (IPT), and Dialectical Behavioral Therapy (DBT), along with collaboration from our Clinical experts. https://woebothealth.com/referral/

- Wysa: This AI chatbot employs evidence-based therapeutic techniques, including CBT, mindfulness, and dialectical behavior therapy (DBT), to assist users in managing stress, anxiety, and depression. Wysa offers a safe space for users to express their emotions and learn coping mechanisms.

Positive reviews: An article on Wysa reports positive signs from users, describing themes such as “a trusting environment promotes wellbeing” and “ubiquitous access offers real-time support”. https://pmc.ncbi.nlm.nih.gov/articles/PMC11304096/

- Psychologist: this AI chatbot is focused on students and was created on character.ai, a platform where users can create chatbots based on fictional or real people. It was created by a psychology student and has been widely praised, having already received millions of messages. A BBC article reports that it helps users handle the pressures of daily life. https://www.bbc.com/news/technology-67872693?utm_source=substack&utm_medium=email

General positive comments on mental health robots:

https://www.nature.com/articles/s44184-024-00097-4

- "The most amazing feature of these tools is how they are able to understand you… This still blows my mind. – Sandro, 48, Switzerland"

- "It’s really nice. It’s sympathetic and kind – Philip, 58, United Kingdom"

- "Compared to like friends and therapists, I feel like it’s safer – Jane, 24, United States"

Our own GPT

Typically, developing a chatbot requires extensive training on data sets, refining models and implementing natural language processing (NLP) techniques. This involves large-scale data collection, model training and repeated updates to the natural language understanding (NLU) component.

However, with OpenAI's “Create Your Own GPT” feature, much of this technical work is abstracted away. Instead of training a model from scratch, this tool lets users customize an already trained GPT-4 model through instructions, behavioral settings and uploaded knowledge bases. This enables users without coding or AI expertise to create a tailored AI assistant, such as our mental health chatbot.

To set up our GPT, the following needs to be done:

- Behavior and design: Knowing how to guide conversations effectively and ensuring responses match what our users want (empathetic, ethical, engaging).

- User centric thinking: Defining the needs of students seeking mental health support and structuring conversations accordingly.

- Prompt engineering: Determining how the GPT should respond (less solution-focused and more person-focused, asking questions).

- Testing.
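The GPT builder is configured with plain-text instructions rather than code, so the requirements above end up as written rules. As a minimal sketch, assuming we keep those rules in one place and paste the generated text into the builder's instructions field, the Python below composes such an instruction text and adds one crude check for the testing step. The class `ChatbotGuidelines`, its example rules and the question-mark heuristic in `asks_a_question` are hypothetical illustrations, not part of OpenAI's tooling or of our final GPT.

```python
from dataclasses import dataclass, field


@dataclass
class ChatbotGuidelines:
    """Hypothetical container for the behavior we want the custom GPT to follow."""
    target_users: str = "university students dealing with stress or loneliness"
    tone: list[str] = field(default_factory=lambda: ["empathetic", "ethical", "engaging"])
    # Example rules only, derived from the requirements listed above.
    rules: list[str] = field(default_factory=lambda: [
        "Ask open questions before offering solutions.",
        "Keep the focus on the student's own experience.",
        "Never present yourself as a replacement for a therapist.",
        "Suggest professional help when problems seem serious.",
    ])

    def to_instructions(self) -> str:
        """Render the guidelines as plain text for the GPT builder's instructions field."""
        lines = [
            f"You are a supportive chat partner for {self.target_users}.",
            f"Tone: {', '.join(self.tone)}.",
            "Rules:",
        ]
        lines += [f"- {rule}" for rule in self.rules]
        return "\n".join(lines)


def asks_a_question(response: str) -> bool:
    """Crude test heuristic: a person-focused reply should invite the user to say more."""
    return "?" in response


if __name__ == "__main__":
    print(ChatbotGuidelines().to_instructions())
    print(asks_a_question("That sounds exhausting. What has been weighing on you most?"))
```

Keeping the rules in one structure makes it easier to change a rule after the interviews and regenerate the full instruction text, rather than editing a long prompt by hand.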

Our current GPT:

https://chatgpt.com/g/g-67b84edc9194819182a10a0dff7371c5-your-mental-health-chat-partner

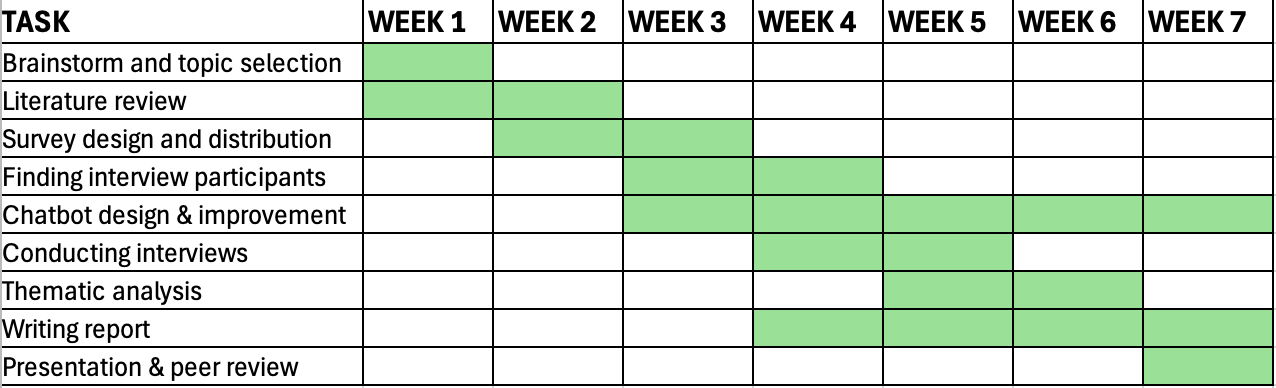

Milestones

Week 1

- Brainstorm topics and pick one

- Communicate topic and group to course coordinator

- Start the problem statement

- Conduct literature review (5 articles per person)

- Formulate planning, deliverables and approach

- Users – user requirements

- Identify technical possibilities

Week 2

- Improving and writing the problem statement, including the following:

- What exactly do we want to research?

- How are we going to research this?

- Context and background

- Which problem are we addressing?

- Importance and significance

- Research question/research aim (problem definition)

- Current gaps or limitations

- Desired outcomes – what is the goal of this study?

- Scope and constraints – who is our target group?

- Formulating questions for the survey and setting the survey up – this survey should include questions that help us determine what we should and should not include in our chatbot, in addition to the insights found in the literature.

- Demographic information

- Short questions about levels/experiences of loneliness and stress

- Short questions about the current use of AI – what types of resources do students already use to support possible mental health problems such as loneliness and/or stress?

- Sending out the survey (we aim for at least 50 participants for this survey)

- Formulating two use cases – one for loneliness and one for stress. These use cases should include:

- Clear and concise user requirements

- A description of our user target group – who are they? What do they value? What does their daily life look like?

- Problems they might have related to our topic

- Reliability?

- Ethical concerns? Privacy?

- How useful are such platforms?

- Exploring and working out the possibilities/challenges in how to develop the chatbot/AI.

- Which chatbots already exist? How do they work? Is there any literature about the user experience of these platforms?

- How does it technically work to create a chatbot?

- What do we need for it? Knowledge? Applications/platforms?

- How much time will it take?

- What might be some challenges that we could encounter?

- How should we create the manual, and in what form?

- Updating the state of the art, including:

- Which chatbots already exist? How do they work? Is there any literature about the user experience of these platforms?

- Overview of psychological theories that could apply

- What research has been done relating to our topic? What of this can we use? How?

- A clear and comprehensive planning for the rest of the quartile, including concise points on what to do each week and all intermediate steps.

Week 3

- Starting to find participants for the interviews

- How many participants?

- Determine inclusion and exclusion criteria

- Process and analyze survey data –

- Create a prioritized list of pain points and user needs, and cross-check it against the literature findings

- Map user pain points to potential chatbot features

- Where do the users experience problems? How might we be able to solve these within our design?

- Writing the manual for the chatbot – this should incorporate the literature findings found in week 2 and should be separate for loneliness and stress.

- Translate theoretical insights into practical chatbot dialogues

- Research what good language for an AI chatbot looks like

- ERB forms and sending them to the ethics committee (still to be confirmed)

- Making consent forms for the interviews

- Exploring and working out the ethical considerations

- Working on the design of the chatbot

- Defining chatbot objectives – what are the key functionalities?

- Map out conversation trees for different user scenarios

- Incorporate feedback loops

- If time permits, start working on the interview guide, to leave more time for conducting the interviews.
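The survey-processing steps above (prioritizing pain points and mapping them to chatbot features) could be sketched in Python as follows. This assumes the open survey answers have first been coded into short pain-point labels; the example responses and the `FEATURES` mapping are invented placeholders based on the problems named in our problem statement, not survey data.

```python
from collections import Counter

# Invented placeholder answers: each respondent's pain points, already coded as short labels.
responses = [
    ["long waiting list", "not serious enough"],
    ["not serious enough", "no one to talk to"],
    ["no one to talk to", "study stress"],
    ["study stress", "not serious enough"],
]

# Hypothetical mapping from each pain point to a candidate chatbot feature.
FEATURES = {
    "long waiting list": "support while waiting, plus a pointer to professional help",
    "not serious enough": "low-threshold, anonymous check-in conversation",
    "no one to talk to": "encouragement to reach out to friends, family or associations",
    "study stress": "help with planning and splitting tasks into steps",
}


def prioritize(responses: list[list[str]]) -> list[tuple[str, int]]:
    """Rank pain points by the number of respondents mentioning them (ties alphabetical)."""
    # set() makes sure a respondent counts at most once per pain point.
    counts = Counter(point for answer in responses for point in set(answer))
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


def map_to_features(ranked: list[tuple[str, int]]) -> list[tuple[str, int, str]]:
    """Attach a candidate feature to each ranked pain point; unmapped ones are flagged."""
    return [(point, n, FEATURES.get(point, "no feature yet")) for point, n in ranked]


for point, n, feature in map_to_features(prioritize(responses)):
    print(f"{n}x {point}: {feature}")
```

Pain points flagged with "no feature yet" are the ones that still need a design decision, which makes them a natural input for the conversation trees and the literature cross-check.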

Week 4

- Finalizing chatbot (COMPLETE BEFORE WEDNESDAY) –

- Finalizing design and features

- Testing and debugging

- Formulating interview guide (COMPLETE BEFORE WEDNESDAY)

- Develop a semi-structured guide – balance open-ended questions to encourage deeper insights, organize questions into key themes.

- Finalizing participants –

- Ensure participants fit user category criteria

- Informed consent – provide clear explanations of the study purpose, data use and confidentiality in advance of the interview.

- Scheduling and logistics – finalize interview schedules, locations and materials

- Prepare a backup plan in case of many dropouts

- Write introduction – use problem statement as starting point and include it in the introduction

- Provide context, research motivation and objectives.

- Short overview approach

- Write section about survey, interview questions, chatbot manual and approach

- Survey structure, explain questions, and findings (using graphs?)

- Interview structure, explain interview guide and rationale for chosen approach

- Explain ethical considerations and study design

- Elaborate on the informed consent and ERB form

- Start conducting interviews

Week 5

- Conducting the last interviews (ideally done before Wednesday)

- Ensure a quiet, neutral space (if real-life)

- Securely record interviews (using Microsoft?)

- Start to process and analyze the interviews

- Transcription – clean version

- Thematic analysis, steps:

- Familiarization with the data – reading and rereading through transcripts to identify key patterns, note initial impressions and recurring topics.

- Coding the data – descriptive (basic concepts) and interpretative codes (meanings behind responses)

- Identifying themes and subthemes – group similar codes into broader themes

- Reviewing and refining themes – check for coherence, ensure each theme is distinct and meaningful

- Defining and naming themes – assign clear and concise names that reflect each theme's core meaning.

- Start writing findings section – organize interview insights by theme

- Present each theme with supporting participant quotes

- Discuss variations in responses (different user demographics?)
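Once the transcripts are coded, grouping codes into themes and collecting supporting quotes per theme is largely bookkeeping. Below is a minimal Python sketch, assuming each excerpt is tagged with one code and a hand-made codebook maps codes to themes; the excerpts, codes and theme names are hypothetical placeholders, not interview data. The interpretive work of choosing codes and themes stays manual.

```python
from collections import defaultdict

# Invented placeholder excerpts after the coding step: (participant, quote, code).
coded_excerpts = [
    ("P1", "<quote about the bot asking how they felt>", "asks questions"),
    ("P2", "<quote about feeling safe typing>", "feels safe"),
    ("P3", "<quote about long answers>", "long responses"),
    ("P1", "<quote about receiving lists of tips>", "too solution-focused"),
]

# Hypothetical codebook: which code belongs to which broader theme.
CODEBOOK = {
    "asks questions": "Conversation style",
    "too solution-focused": "Conversation style",
    "long responses": "Conversation style",
    "feels safe": "Trust and safety",
}


def group_by_theme(excerpts, codebook):
    """Collect (participant, code, quote) tuples under their theme, keeping supporting quotes together."""
    themes = defaultdict(list)
    for participant, quote, code in excerpts:
        theme = codebook.get(code, "Uncategorized")  # code not yet assigned to a theme
        themes[theme].append((participant, code, quote))
    return dict(themes)


def participants_per_theme(themes):
    """Count distinct participants per theme, a rough check on how widely a theme is shared."""
    return {theme: len({p for p, _, _ in items}) for theme, items in themes.items()}


themes = group_by_theme(coded_excerpts, CODEBOOK)
counts = participants_per_theme(themes)
for theme, items in themes.items():
    print(theme, counts[theme], [code for _, code, _ in items])
```

An "Uncategorized" group is a quick signal during the review step that some codes have not yet been placed in a theme.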

Week 6

- Completing thematic analysis – clearly highlighting what can/should be changed in the design of the chatbot.

- Cross-checking and validating themes

- Extracting key insights for chatbot improvement

- Highlight what should be changed or optimized in the chatbot design

- Categorize findings into urgent, optional and future improvements, depending on time constraints.

- Finish writing findings section

- Write results section

- Present main findings concisely with visual aids (tables, graphs, theme maps, etc.)

- Write discussion section

- Compare findings of the interviews to existing literature and to the results of the survey study

- Address unexpected insights and research limitations

- Write conclusion section

- Summarize key takeaways and propose future research possibilities

- Updating the chatbot based on insights of the interviews
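The triage of findings into urgent, optional and future improvements could be made explicit with a simple time-budget rule. The sketch below assumes each finding gets a hypothetical impact score (1-3) and an effort estimate in hours; findings are taken in order of impact, and anything that no longer fits in the remaining hours becomes future work. The scoring scale, the greedy rule and the example findings are our own illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    description: str
    impact: int          # hypothetical scale: 1 = nice to have, 3 = critical for users
    effort_hours: float  # rough estimate of the work needed in the chatbot


def triage(findings: list[Finding], hours_available: float) -> dict[str, list[str]]:
    """Greedily fill the time budget with the highest-impact findings first."""
    buckets: dict[str, list[str]] = {"urgent": [], "optional": [], "future": []}
    remaining = hours_available
    # Highest impact first; among equal impact, cheapest first.
    for finding in sorted(findings, key=lambda f: (-f.impact, f.effort_hours)):
        if finding.effort_hours > remaining:
            buckets["future"].append(finding.description)  # does not fit this quartile
            continue
        remaining -= finding.effort_hours
        bucket = "urgent" if finding.impact == 3 else "optional"
        buckets[bucket].append(finding.description)
    return buckets


findings = [
    Finding("add planning exercises", impact=2, effort_hours=4),
    Finding("voice interface", impact=1, effort_hours=20),
    Finding("shorter responses", impact=3, effort_hours=2),
]
print(triage(findings, hours_available=8))
```

With 8 hours available, this example classifies `shorter responses` as urgent, `add planning exercises` as optional and `voice interface` as future work.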

Week 7

Space for potential catch-up work

- Finish and complete chatbot

- Finalize report

- Prepare presentation

- Give final presentation

- Fill in peer review

Approach

Each week, there will be a meeting with the tutors on Monday morning to ask questions and get feedback. Furthermore, we meet as a group before and after each tutor meeting to prepare for the meeting, evaluate the feedback, and make a new plan and task division for the upcoming week.

On a weekly basis, we will evaluate what tasks need to be done and assign the tasks according to skills and interests of the group members.

We will start the process by finding a topic, doing literature research and exploring the options for our self-created chatbot. After this process is complete, we will start by exploring potential user needs through a simple and short Microsoft Forms survey. We aim to have around 50 responses to be able to draw some insights from it. We will then create a chatbot that incorporates the findings from the survey as well as the findings from existing literature. At the same time, we will create an interview guide to be able to evaluate our prototype with users that fall within our target group. After conducting the interviews, we will perform a thematic analysis to identify themes and subthemes and formulate improvements for our chatbot. The final step is to change the design of the chatbot according to the findings of the interviews.

Deliverables

The wiki will be updated by Friday afternoon at the latest; this is our weekly deliverable.

The final deliverable is the final presentation together with the final report. The report consists of our entire process, including all intermediate steps with explanation and justification, as well as theoretical background information, conclusions, discussion and implications about the findings for future research.

Literature Review

State of the art (25 articles; 3-4 per person, 6 each for Maarten and Bridget)

Mila:

- Zhang, J., & Chen, T. (2025). Artificial intelligence based social robots in the process of student mental health diagnosis. Entertainment Computing, 52, 100799. https://doi.org/10.1016/j.entcom.2024.100799

- Eltahawy, L., Essig, T., Myszkowski, N., & Trub, L. (2023). Can robots do therapy?: Examining the efficacy of a CBT bot in comparison with other behavioral intervention technologies in alleviating mental health symptoms. Computers in Human Behavior: Artificial Humans, 2(1), 100035. https://doi.org/10.1016/j.chbah.2023.100035

- Jeong, S., Aymerich-Franch, L., Arias, K., et al. (2023). Deploying a robotic positive psychology coach to improve college students’ psychological well-being. User Modeling and User-Adapted Interaction, 33, 571–615. https://doi.org/10.1007/s11257-022-09337-8

- Edwards, A., Edwards, C., Abendschein, B., Espinosa, J., Scherger, J., & Vander Meer, P. (2022). Using robot animal companions in the academic library to mitigate student stress. Library Hi Tech, 40(4), 878–893. https://doi.org/10.1108/LHT-07-2020-0148

Sophie:

Velastegui, D., Pérez, M. L. R., & Garcés, L. F. S. (2023). Impact of Artificial Intelligence on learning behaviors and psychological well-being of college students. Salud, Ciencia y Tecnologia-Serie de Conferencias, (2), 343.

This article assesses how interaction with technology affects college students’ well-being. It argues that educational technology designers must integrate psychological theories and principles into the development of AI tools to minimize risks to students’ mental well-being.

Lillywhite, B., & Wolbring, G. (2024). Auditing the impact of artificial intelligence on the ability to have a good life: Using well-being measures as a tool to investigate the views of undergraduate STEM students. AI & society, 39(3), 1427-1442.

This article investigates the impact of artificial intelligence on the ability to have a good life, focusing on undergraduate STEM students. The authors identified a set of questions that might be good starting points for developing an inventory of students’ perspectives on the implications of AI for the ability to have a good life.

Pittman, M., & Reich, B. (2016). Social media and loneliness: Why an Instagram picture may be worth more than a thousand Twitter words. Computers in human behavior, 62, 155-167.

This article examines if there is a difference between image-based social media use and text-based media use regarding loneliness. The results suggest that loneliness may decrease, while happiness and satisfaction with life may increase with the usage of image-based social media. Text-based media use appears ineffectual. The authors propose that this difference may be due to the fact that image-based social media offers enhanced intimacy.

O’Day, E. B., & Heimberg, R. G. (2021). Social media use, social anxiety, and loneliness: A systematic review. Computers in Human Behavior Reports, 3, 100070.

This article examines the broad aspects of social media use and its relation to social anxiety and loneliness. It provides a better understanding of how more socially anxious and lonely individuals use social media. Loneliness is a risk factor for problematic social media use, and social anxiety and loneliness both have the potential to put people at risk of experiencing negative consequences as a result of their social media use. More research is needed to examine the causal relations.

Bridget:

Socially Assistive Robotics combined with Artificial Intelligence for ADHD. (2021, January 9). IEEE Conference Publication. https://ieeexplore.ieee.org/abstract/document/9369633

This paper presents a patient-centered therapy approach using the Pepper humanoid robot to support children with attention deficit hyperactivity disorder (ADHD). Pepper integrates a tablet for interactive exercises and cameras to capture real-time emotional data, allowing for personalized therapeutic adjustments. The system, tested in collaboration with a diagnostic center, enhances children's engagement by providing a non-intimidating robotic intermediary.

BetterHelp - Get started & Sign-Up today. (n.d.). https://www.betterhelp.com/get-started/

BetterHelp is an online therapy platform that eases access to psychological help.

'Er zijn nog 80.000 wachtenden voor u' ('There are still 80,000 people waiting ahead of you'). Zorgvisie.

Fiske, A., Henningsen, P., & Buyx, A. (2019). Your Robot Therapist Will See You Now: Ethical Implications of Embodied Artificial Intelligence in Psychiatry, Psychology, and Psychotherapy. Journal Of Medical Internet Research, 21(5), e13216. https://doi.org/10.2196/13216

This paper assesses the ethical and social implications of translating embodied AI applications into mental health care across the fields of Psychiatry, Psychology and Psychotherapy. Building on this analysis, it develops a set of preliminary recommendations on how to address ethical and social challenges in current and future applications of embodied AI.

Kuhail, M. A., Alturki, N., Thomas, J., Alkhalifa, A. K., & Alshardan, A. (2024). Human-Human vs Human-AI Therapy: An Empirical Study. International Journal Of Human-Computer Interaction, 1–12. https://doi.org/10.1080/10447318.2024.2385001

This study examines mental health professionals' perceptions of Pi, a relational AI chatbot, in early-stage psychotherapy. Therapists struggled to distinguish between human-AI and human-human therapy transcripts, correctly identifying them only 53.9% of the time, while rating AI transcripts as higher quality on average. These findings suggest that AI chatbots could play a supportive role in mental healthcare, particularly for initial problem exploration when therapist availability is limited.

Holohan, M., & Fiske, A. (2021). “Like I’m Talking to a Real Person”: Exploring the Meaning of Transference for the Use and Design of AI-Based Applications in Psychotherapy. Frontiers in Psychology, 12. https://doi.org/10.3389/fpsyg.2021.720476

This article explores the evolving role of AI-enabled therapy in psychotherapy, particularly focusing on how AI-driven technologies reshape the concept of transference in therapeutic relationships. Using Karen Barad’s framework on human–non-human relations, the authors argue that AI-human interactions in psychotherapy are more complex than simple information exchanges. As AI-based therapy tools become more widespread, it is crucial to reconsider their ethical, social, and clinical implications for both psychotherapeutic practice and AI development.

Maarten:

Humanoid Robot Intervention vs. Treatment as Usual for Loneliness in Older Adults

This study investigates the effectiveness of humanoid robots in reducing loneliness among older adults. Findings suggest that interactions with social robots can positively impact mental health by decreasing feelings of loneliness.

Citation:

Bemelmans, R., Gelderblom, G. J., Jonker, P., & De Witte, L. (2022). Humanoid robot intervention vs. treatment as usual for loneliness in older adults: A randomized controlled trial. Journal of Medical Internet Research [1]

Enhancing University Students' Mental Health under Artificial Intelligence: A Narrative Review

This review discusses how AI-based interventions can be as effective as traditional therapy in managing stress and anxiety among students, while offering greater convenience and less stigma.

Citation:

Li, J., Wang, X., & Zhang, H. (2024). Enhancing university students' mental health under artificial intelligence: A narrative review. LIDSEN Neurobiology, 8(2), 225. [2]

Artificial Intelligence Significantly Facilitates Development in the Field of College Student Mental Health

The article explores key applications of AI in student mental health, including risk factor identification, prediction, assessment, clustering and digital health.

Citation:

Yang, T., Chen, L., & Huang, Y. (2024). Artificial intelligence significantly facilitates development in the field of college student mental health. Frontiers in Psychology, 14, 1375294. [3]

A Robotic Positive Psychology Coach to Improve College Students' Wellbeing

This study examines the use of a social robot coach to offer positive psychological interventions to students, finding significant improvements in psychological well-being and mood.

Citation:

Jeong, S., Aymerich-Franch, L., & Arias, K. (2020). A robotic positive psychology coach to improve college students' well-being. arXiv preprint arXiv:2009.03829. [4]

Potential Applications of Social Robots in Robot-Assisted Interventions

This research discusses how social robots can be integrated into interventions to alleviate symptoms of anxiety, stress and depression by improving users' ability to regulate their emotions.

Citation:

Winkle, K., Caleb-Solly, P., Turton, A., & Bremner, P. (2021). Potential applications of social robots in robot-assisted interventions. International Journal of Social Robotics, 13, 123–145. [5]

Exploring the Effects of User-Agent and User-Designer Similarity in Virtual Human Design to Promote Mental Health Intentions for College Students

The study examines how the design of virtual humans can affect their effectiveness in promoting conversations about mental health among students.

Citation:

Liu, Y., Chen, Z., & Wu, D. (2024). Exploring the effects of user-agent and user-designer similarity in virtual human design to promote mental health intentions for college students. arXiv preprint arXiv:2405.07418. [6]

Marie:

Artificial intelligence in mental health care: a systematic review of diagnosis, monitoring and intervention applications

This systematic review analyzes 85 studies on the application of AI in mental health care across three domains: diagnosis, monitoring and intervention. It presents the methods most frequently used in each domain, together with their performance.

Citation:

Cruz-Gonzalez, P., He, A. W.-J., Lam, E. P., Ng, I. M. C., Li, M. W., Hou, R., Chan, J. N.-M., Sahni, Y., Vinas Guasch, N., Miller, T., Lau, B. W.-M., & Sánchez Vidaña, D. I. (2025). Artificial intelligence in mental health care: a systematic review of diagnosis, monitoring, and intervention applications. Psychological Medicine, 55, e18, 1–52. https://doi.org/10.1017/S0033291724003295

An Overview of Tools and Technologies for Anxiety and Depression Management Using AI

This article evaluates the utilization and effectiveness of AI applications in managing symptoms of anxiety and depression by conducting a comprehensive literature review. It identifies current AI tools, analyzes their practicality and efficacy, and assesses their potential benefits and risks.

Citation:

Pavlopoulos, A., Rachiotis, T., & Maglogiannis, I. (2024). An Overview of Tools and Technologies for Anxiety and Depression Management Using AI. Applied Sciences, 14, 9068. https://doi.org/10.3390/app14199068

Harnessing AI in Anxiety Management: A Chatbot-Based Intervention for Personalized Mental Health Support

This study analyzes the effectiveness of an AI-powered chatbot, built with ChatGPT, in managing anxiety symptoms through evidence-based cognitive-behavioral therapy (CBT) techniques.

Citation:

Manole, A., Cârciumaru, R., Brînzaș, R., & Manole, F. (2024). Harnessing AI in Anxiety Management: A Chatbot-Based Intervention for Personalized Mental Health Support. Information, 15, 768. https://doi.org/10.3390/info15120768

Bram:

Child and Adolescent Therapy (2006)

P. C. Kendall, C. Suveg

This book covers the treatment of mental health issues in children and adolescents. Chapters 1–5 and 7 are especially relevant for a possible AI that assists people with mental health issues. Chapter 6 is not, as it deals with a more serious matter.

Psychotherapy and Artificial Intelligence: A Proposal for Alignment

Flávio Luis de Mello, Sebastião Alves de Souza

This article is about applying artificial intelligence to psychotherapy. The authors demonstrate their proposal by implementing their model of an AI for psychotherapy in a web application.

Perceptions and opinions of patients about mental health chatbots: scoping review

Alaa A Abd-Alrazaq, Mohannad Alajlani, Nashva Ali, Kerstin Denecke, Bridgette M Bewick, Mowafa Househ

This scoping review looks at chatbots in mental health care and summarizes what patients think about them.