PRE2018 4 Group8

Project Plan

Members

| Name | Student ID | Email | Study |

|---|---|---|---|

| Rik Hoekstra | 1262076 | r.hoekstra@student.tue.nl | Applied Mathematics |

| Wietske Blijjenberg | 1025111 | w.t.p.blijjenberg@student.tue.nl | Software Science |

| Kilian Cozijnsen | 1004704 | k.d.t.cozijnsen@student.tue.nl | Biomedical Engineering |

| Arthur Nijdam | 1000327 | c.e.nijdam@student.tue.nl | Biomedical Engineering |

| Selina Janssen | 1233328 | s.a.j.janssen@student.tue.nl | Biomedical Engineering |

Ideas

Surgery robots

The DaVinci surgery system has become a serious competitor to conventional laparoscopic surgery techniques. This is because the machine has more degrees of freedom, allowing the surgeon to carry out movements that are not possible with other techniques. The DaVinci system is controlled by the surgeon, who therefore has full control over, and responsibility for, the result. However, as robots become more advanced, they might become more autonomous as well. Mistakes can still occur, albeit perhaps less frequently than with human surgeons. In such cases, who is responsible: the robot manufacturer or the surgeon? In this research project, the ethical implications of autonomous robot surgery could be addressed.

Elderly care robots

The elderly population is growing rapidly in most developed countries, while vacancies in elderly care often remain unfilled. Elderly care robots could therefore be a solution, as they relieve pressure on the carers of elderly people. They can also offer more specialised care and aid the person in their social development. However, the information recorded by the sensors and the video images recorded by the cameras should be well protected, as the privacy of the elderly should be ensured. In addition, robot care should not infantilise the elderly and should respect their autonomy.

Facial emotion recognition

Facebook uses advanced Artificial Intelligence (AI) to recognise faces. This technology can be used or misused in many ways. Totalitarian governments can use such techniques to control the masses, but care robots could use facial recognition to read the emotional expression of the person they are taking care of. In this research project, facial emotion recognition can be explored, as this technology might have interesting technical and ethical implications for the field of robotic care.

Problem Statement

Research Question

We chose emotion recognition in the elderly as our subject of study. For this purpose, the following research question was defined:

In what way can Convolutional Neural Networks (CNNs) be used to perform emotion recognition in real-time video images of elderly people?

Sub-questions

Based on the research question, a set of sub-questions was identified. Together, these sub-questions serve to answer the research question.

- Technical sub-questions:

What are the requirements for the dataset that will be used?

What are the requirements for the training, validation and test sets?

What are the best features to use for facial expression analysis?

What is a suitable CNN architecture to analyse dynamic facial expressions?

- USE sub-questions:

What is a possible application of our CNN emotion recognition technology?

Which users and enterprises would benefit from our software?

What are the consequences of false-positives versus false-negatives?

Are there legal or moral issues that will impede the application of our technology?

Introduction

19.9% of the elderly report that they experience feelings of loneliness. A potential cause is that they have often lost a large part of their family and friends. A solution could be an assistive robot with a human-robot interaction component. It recognises the facial expression of the elderly person and deduces their needs from it. If the elderly person looks sad, the robot might suggest that they contact a family member or a friend via a Skype call. However, the technology for such interaction has not been developed thoroughly yet. While human-robot interaction using speech analysis is a relatively mature topic, facial expression recognition from a robot's camera images is far less explored. Research also shows that the combination of video images and recorded speech data is especially powerful and accurate in determining an elderly person's emotion. Therefore, this research project proposes a package for facial emotion recognition that can be used in the SocialRobot project, where facial recognition and speech analysis have already been implemented, but facial expression analysis has not.

If robots could accurately recognize human emotions, for example with the use of Convolutional Neural Networks on video images of the elderly person, the care that elderly care robots provide could be enhanced in many ways. However, the use of facial recognition does raise moral and legal questions, especially concerning privacy and autonomy of the elderly person. This project investigates in what way Convolutional Neural Networks (CNNs) can/should be used for the purposes of emotion recognition in elderly care robots.

Objectives

- Construct a CNN that can distinguish at least one emotion from other emotions.

- Analyze the technical possibilities of the CNN.

- Analyze the ethics of using a CNN for emotion recognition in elderly care robots.

Application examples

Case-study

Bart always describes himself as "pretty active back in his days". But now that he has reached the age of 83, he is not that active anymore. He lives in an apartment complex for elderly people, with an artificial companion named John. John is a care robot that, besides helping Bart around the house, also functions as an interactive conversation partner. Every morning after greeting Bart, the robot gives a weather forecast. This is a trick it learned from the analysis of casual human-human conversations, which almost always start with a chat about the weather. Today this approach seems to work fine, as after some time Bart reacts with: “Well, it promises to be a beautiful day, isn’t it?” If this robot had been equipped with simple emotion recognition software, however, it would have noticed that a sad expression appeared on Bart’s face after the weather forecast was mentioned. In fact, every time Bart hears the weather forecast, he thinks about how he used to go out to enjoy the sun and the fact that he cannot do that anymore. With emotion recognition, the robot could avoid this subject in the future. The robot could even try to arrange with the nurses that Bart goes outside more often.

In this example, Bart would benefit from the implementation of facial emotion recognition software in his care robot. At the same time, a value conflict might arise. On the one hand, emotion recognition software could seriously improve the quality of the care delivered by the care robot. On the other hand, we should seriously consider to what extent these robots may replace interaction with real humans. And when the robot decides to take action to get the nurses to let Bart go outside more often, this might conflict with his right to privacy and autonomy. It might feel to Bart as if he is being treated like a child when the robot calls his carers without his consent.

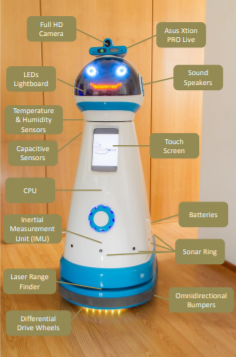

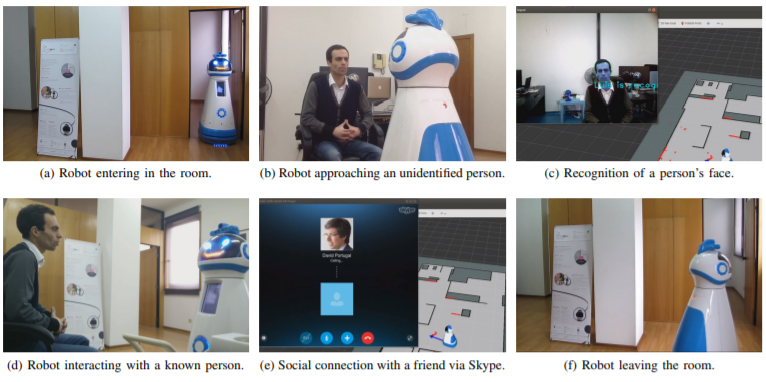

SocialRobot project

SocialRobot is a European project that aims to help elderly people live independently in their own homes and to improve their quality of life by helping them maintain social contacts. The robotic platform has two wheels and is 125 cm tall, so that it looks approachable. Its equipment includes, among other things, a camera, an infrared sensor, microphones, a programmable array of LEDs that can show facial expressions, and a computer. Its battery pack allows it to operate continuously for 5 hours.

The SocialRobot identifies the elderly person using face recognition and then reads their emotion from the response that they give to its questions, using voice recognition. The speech data is analysed and the emotion of the elderly person is derived from it. The accuracy of this system was 82%. The idea is that the robot uses the response as input for the actions it takes afterwards: if the person is sad, it will encourage them to initiate a Skype call with their friends, and if the person is bored, it will encourage them to play cards online with friends.

USE evaluation of the initial plan

User

Human-robot interaction : According to Kacperck[1], effective communication in elderly care is dependent on the nurse’s ability to listen and utilize non-verbal communication skills. Argyle[2] says there are 3 distinct forms of human non-verbal communication:

- Non-verbal communication as a replacement for language

- Non-verbal communication as a support and complement of verbal language, to emphasize a sentence or to clarify the meaning of a sentence

- Non-verbal communication of attitudes and emotions and manipulation of the immediate social situation, for example when sarcasm is used.

Facial expressions play an important role in these forms of non-verbal communication. However, robots do not have the natural ability to recognize emotions as well as humans do. This can lead to problems with elderly care robots. For example, a patient might consciously or subconsciously try to communicate something using facial expressions and the display of emotions, while the robot does not recognize this or recognizes it inaccurately. The elderly person may get frustrated because they have to put everything they feel and want into words, which may lead them to appreciate their care less. In the worst case, they may not accept the robot because it feels too inhuman and cold.

The SocialRobot project already uses emotion recognition based on tone of speech to deal with these problems, but we think this is not optimal. The first form of human non-verbal communication gives rise to problems: if non-verbal communication is used as a replacement for speech, tone recognition will not help to determine the current emotional state. Emotion recognition based on facial expression would work better in this case. On the other hand, when speech is used, analyzing the meaning of the words often already provides information about the emotional state of the speaker. Both facial emotion recognition and tone-based emotion recognition should be able to complement this information. The highest accuracy could be reached by combining image-based and tone-based emotion recognition, but such complicated software might not be necessary if facial emotion recognition already gives satisfactory results.

Misusers : Every technology is prone to misuse. Facial emotion recognition technology can be very dangerous if it falls into the wrong hands and is used for immoral purposes. Totalitarian societies can use facial recognition technology to continuously monitor how their inhabitants are behaving and whether they are speaking the truth. Applications closer to elderly care might also be misused: data on the emotions of a vulnerable elderly person, and the interpretation of that data, are stored in a robot. This information could be used against the wishes of the elderly person, for example when it is shared with their carers against their will.

Society

Combat loneliness : In most western societies, the elderly population keeps growing. This, in addition to the lack of healthcare personnel, poses a major problem to the future elderly care system. Robots could fill the vacancies and might even have the potential to outperform regular personnel: using smart neural networks, they can anticipate the elderly person's wishes and provide 24/7 specialized care, if required in the elderly person's own home. The use of care robots in this way is supported by (20), which reports that care robots can be used not only in a functional way, but also to promote the autonomy of the elderly person by assisting them to live in their own home, and to provide psychological support. The latter is necessary, as researchers from the Amsterdam Study of the Elderly (AMSTEL) found that about 20% of the Dutch elderly experience feelings of loneliness. They have often lost a significant part of their social contacts and possibly their partner, leading to loneliness. The research links loneliness to the onset of dementia. Therefore, the reduction of social isolation is crucial to both the quality of life and the mental state of the elderly.

While emotion recognition can be used on various kinds of target groups (see state-of-the-art section), the high levels of loneliness among the elderly are the motivation for choosing them as our target group. However, elderly people are still a broad target group with a wide range of needs, in which the following categories can be defined:

- Elderly people with regular mental and functional capacities.

- Elderly people with affected mental capacities but with decent physical capabilities.

- Elderly people with affected mental and physical capacities.

All of these categories of elderly people might struggle with loneliness, but categories 2 and 3 are more likely to need a care robot. They are also a vulnerable group of people, as they might not have the mental capacity to consent to the robot's actions. In this respect, interpreting the person's social cues is vital for their treatment, as they might not be able to put their feelings into words. For this group of elderly people, false negatives for unhappiness can have an especially large impact. To deduce what that impact can be, it is important to look at the possible applications of this technology in elderly care robots.

As the elderly, especially those of categories 2 and 3, are vulnerable, their privacy should be protected. Information regarding their emotions can be used for their benefit, but can also be used against them, for example to force psychological treatment if the patient does not score well enough on the 'happiness scale' as determined by the AI. Therefore, the system should be safe and secure. If possible, at least in the first stages, secondary users can play a large role as well. Examples of such secondary users are formal caregivers and social contacts. The elderly person should be able to consent to the information regarding their emotions being shared with these secondary users. Therefore, only elderly people of category 1 can be included in this study.

privacy and autonomy

Enterprise

- Enterprises who develop the robot

There already are enterprises developing robots and devices that can determine facial expressions to a certain extent. Examples are the smart doll (15), for which the Eyes of Things (EoT) platform is used, Google Glass (11), Microsoft Kinect and Microsoft HoloLens. The robot of the SocialRobot project is another example. Except for the SocialRobot, none of these applications was developed especially for elderly people. Nevertheless, these enterprises could be interested in software or a robot specialized in facial expression recognition of elderly people, since they already develop robots and designs that are relevant for a robot usable in elderly care. As there are currently no robots that can determine the facial expressions of elderly people specifically, and the demand for such robots could increase in the near future, these enterprises could be willing to invest in such software. Enterprises that develop care robots could likewise be interested, and makers of companion robots such as Paro could implement such software in their robots too.

- Enterprises who provide healthcare services

State-of-the-Art technology

To keep our literature study clear, the state-of-the-art section has been subdivided into three parts: sources that provide technical insight, sources that explain the implications our technology can have for USE stakeholders, and sources that describe possible applications of our technology.

Technical insight

Neural networks can be used for facial recognition and emotion recognition. The approaches in the literature can be classified based on the following elements:

- The database used for training the network

- The feature selection method

- The neural network architecture

An example of a database is the Extended Cohn-Kanade database (CK+, see fig. 1). It contains pictures of a diverse set of people: the participants were aged 18 to 50, 69% were female, 81% were Euro-American, 13% Afro-American, and 6% from other groups. The participants started out with a neutral face and were then instructed to take on several emotions, in which different muscular groups in the face (called action units or AUs by the researchers) were active. The database contains pictures with labels, classifying them into 7 different emotions: anger, disgust, fear, happiness, sadness, surprise and contempt.

Source 5 has used this database. To extract the features, they first cropped the image and then normalized the intensity (see fig. 2.). The researchers computed the local deviation of the normalised image with a structuring element that had a size of NxN.
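
The preprocessing steps described above (cropping, intensity normalisation, local deviation with an NxN structuring element) can be sketched roughly as follows. This is a minimal illustration in plain Python, not the implementation of source 5: it assumes that "local deviation" means the standard deviation inside an N x N window, that windows are clipped at the image border, and that images are lists of rows of grey values. All function names are hypothetical.

```python
import math


def crop(image, top, left, height, width):
    """Return the sub-image of the given size starting at (top, left)."""
    return [row[left:left + width] for row in image[top:top + height]]


def normalize_intensity(image):
    """Linearly rescale all pixel values to the range [0, 1]."""
    pixels = [p for row in image for p in row]
    lo, hi = min(pixels), max(pixels)
    if hi == lo:  # flat image: avoid division by zero
        return [[0.0 for _ in row] for row in image]
    return [[(p - lo) / (hi - lo) for p in row] for row in image]


def local_deviation(image, n):
    """Standard deviation inside an n x n window centred on each pixel.

    Assumption: windows are clipped at the image border.
    """
    rows, cols = len(image), len(image[0])
    half = n // 2
    result = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            window = [
                image[i][j]
                for i in range(max(0, r - half), min(rows, r + half + 1))
                for j in range(max(0, c - half), min(cols, c + half + 1))
            ]
            mean = sum(window) / len(window)
            variance = sum((v - mean) ** 2 for v in window) / len(window)
            out_row.append(math.sqrt(variance))
        result.append(out_row)
    return result


# Toy 4x4 "face" with a bright square in the middle.
face = [
    [10, 10, 10, 10],
    [10, 200, 200, 10],
    [10, 200, 200, 10],
    [10, 10, 10, 10],
]
features = local_deviation(normalize_intensity(crop(face, 0, 0, 4, 4)), 3)
```

In this sketch, high values in `features` mark pixels whose neighbourhood contains strong intensity changes, such as edges.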

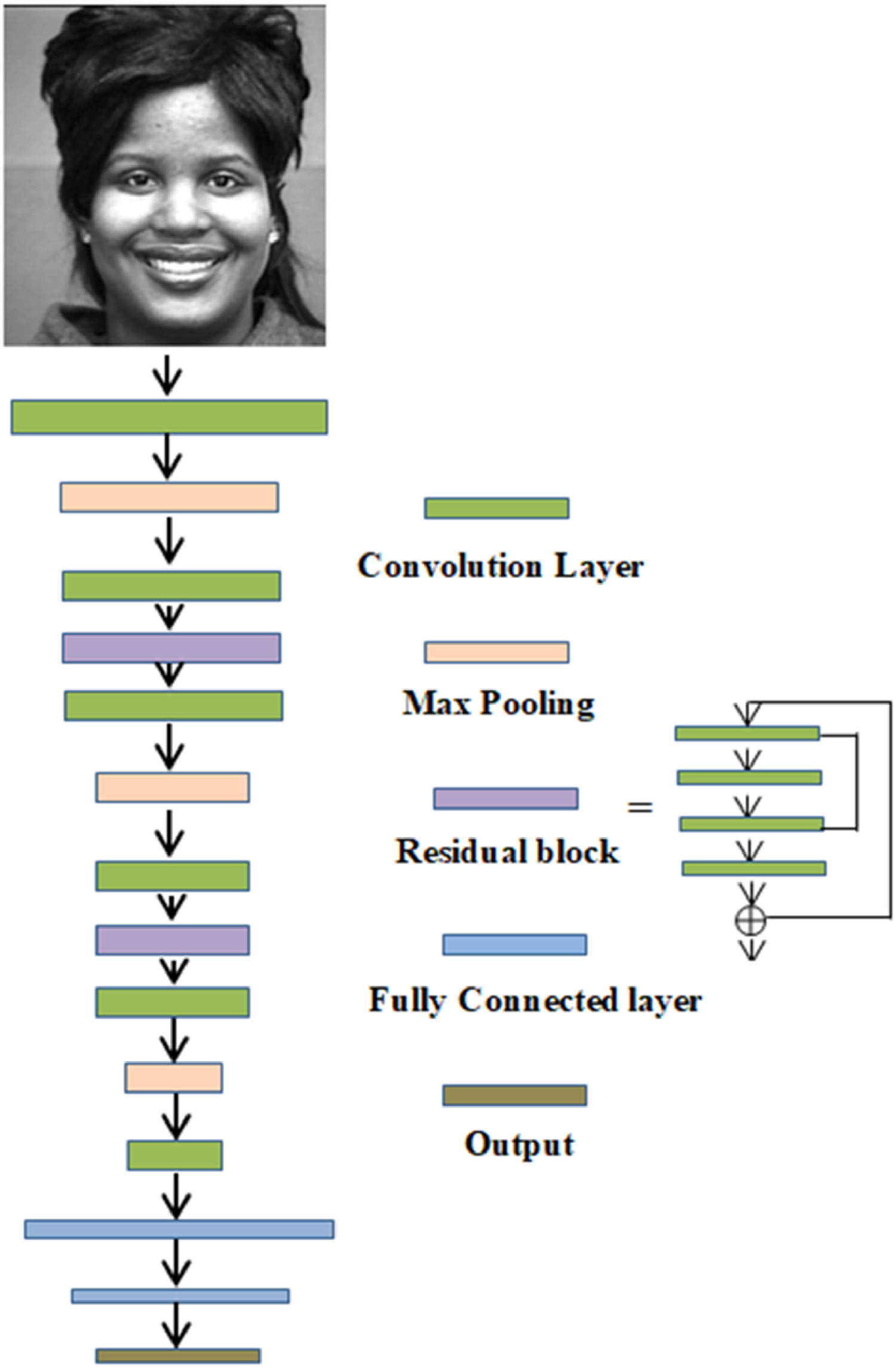

Then, as can be seen in fig. 3, the neural network architecture chosen by the researchers consisted of six convolution layers and two blocks of deep residual learning; because of its size it is called a 'deep convolutional neural network'. In addition, each convolution layer was followed by a max pooling layer, and there were 2 Fully Connected layers.

There are different ways of connecting neurons in a neural network. If we fully connect the neurons and the input is a small 200x200 pixel image, every single neuron in the first layer already has 40,000 weights. The convolutional layers instead apply a convolution operation to the input, in which a small set of weights is reused across the whole image, reducing the number of free parameters. The max pooling layers combine the outputs of several neurons of the previous layer into a single neuron in the next layer: the highest value is taken as the input value for the next layer, which shrinks the feature maps and thereby further reduces the number of parameters in later layers. The Fully Connected layers connect each neuron in one layer to each neuron in the next layer. After training the neural network, each connection has a learned weight.
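
To make the parameter argument concrete, the following sketch counts the weights of a fully connected layer and of a convolutional layer, and applies 2x2 max pooling to a small feature map. The layer sizes (100 neurons, 3x3 kernels, 32 filters) are illustrative assumptions, not values taken from any of the sources, and biases are ignored.

```python
def dense_weights(n_inputs, n_outputs):
    """Number of weights in a fully connected layer (biases ignored)."""
    return n_inputs * n_outputs


def conv_weights(kernel_size, in_channels, out_channels):
    """Number of weights in a convolutional layer (biases ignored).

    The same kernel is reused at every image position, so the count
    does not depend on the image size.
    """
    return kernel_size * kernel_size * in_channels * out_channels


def max_pool_2x2(feature_map):
    """Keep the highest value of every non-overlapping 2x2 block."""
    rows, cols = len(feature_map), len(feature_map[0])
    return [
        [
            max(
                feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1],
            )
            for c in range(0, cols - 1, 2)
        ]
        for r in range(0, rows - 1, 2)
    ]


pixels = 200 * 200
print(dense_weights(pixels, 1))    # one fully connected neuron: 40000 weights
print(dense_weights(pixels, 100))  # 100 such neurons: 4000000 weights
print(conv_weights(3, 1, 32))      # 32 filters of 3x3 on a grey image: 288 weights

pooled = max_pool_2x2([
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
])
print(pooled)  # [[6, 8], [14, 16]]
```

The pooled map has a quarter of the original values, so any layer that follows it needs correspondingly fewer weights.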

The other sources used a similar approach and have been classified in the same manner in the table below:

| Article number | Database used | Feature selection method | Neural Network architecture | Additional information |

|---|---|---|---|---|

| 1 | own database | It recognizes facial expressions in the following steps: division of the facial images in three regions of interest (ROI), the eyes, nose and mouth. Then, feature extraction happens using a 2D Gabor filter and LBP. PCA is adopted to reduce the number of features. | Extreme Learning Machine classifier | This article entails a robotic system that not only recognizes human emotions but also generates its own facial expressions in cartoon symbols |

| 3 | Karolinska Directed Emotional Face (KDEF) dataset | Their approach is a Deep Convolutional Neural Network (CNN) for feature extraction and a Support Vector Machine (SVM) for emotion classification. | Deep Convolutional Neural Network (CNN) | This approach reduces the number of layers required and it has a classification rate of 96.26% |

| 5 | Extended Cohn-Kanade (CK+) and the Japanese Female Facial Expression (JAFFE) dataset | Cropping, intensity normalisation and local deviation of the normalised image (see above) | Deep Convolutional Neural Network (DNN) | The researchers aimed to identify 6 different facial emotion classes. |

| 7 | own database | The human facial expression images were recorded and then segmented by using the skin color. Features were extracted using integral optic density (IOD) and edge detection. | SVM-based classification | In addition to the analysis of facial expressions, speech signals were also recorded. They aimed to classify 5 different emotions, which was achieved with 87% accuracy (5% more than with the images alone). |

| 8 | own database | unknown | Bayesian facial recognition algorithm | This article is from 1999 and stands at the basis of machine learning, using a Bayesian matching algorithm to predict which faces belong to the same person. |

| 9 | unknown | This article uses a 3D candidate face model, that describes features of face movement, such as 'brow raiser' and they have selected the most important ones according to them | CNN | The joint probability describes the similarity between the image and the emotion described by the parameters of the Kalman filter of the emotional expression as described by the features, and it is maximized to find the emotion corresponding to the picture. This article is an advancement of the methods described in 8. The system is more effective than other Bayesian methods like Hidden Markov Models, and Principal Component Analysis. |

| 10 | Cohn-Kanade database | unknown | Bayes optimal classifier | The tracking of the features was carried out with a Kalman Filter. The correct classification rate was almost 94%. |

| 22 | own database | unknown | CNN | A moving average is utilized to make up for the drawbacks of still image-based approaches, which is efficient for smoothing the real-time FER results |
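
The moving-average smoothing mentioned for source 22 can be illustrated with a short sketch. It assumes that a classifier produces one probability per emotion for every video frame; the class name `EmotionSmoother`, the window length and the example labels are hypothetical and not taken from the article.

```python
from collections import deque


class EmotionSmoother:
    """Average per-frame emotion probabilities over the last few frames."""

    def __init__(self, labels, window=5):
        self.labels = list(labels)
        # deque with maxlen drops the oldest frame automatically.
        self.history = deque(maxlen=window)

    def update(self, probabilities):
        """Add one frame and return (smoothed label, averaged probabilities)."""
        self.history.append(list(probabilities))
        n = len(self.history)
        averaged = [
            sum(frame[i] for frame in self.history) / n
            for i in range(len(self.labels))
        ]
        best = max(range(len(self.labels)), key=lambda i: averaged[i])
        return self.labels[best], averaged


smoother = EmotionSmoother(["happiness", "sadness"], window=3)
frames = [[0.9, 0.1], [0.9, 0.1], [0.2, 0.8], [0.2, 0.8]]
smoothed = [smoother.update(frame)[0] for frame in frames]
print(smoothed)  # ['happiness', 'happiness', 'happiness', 'sadness']
```

A single deviating frame does not change the reported emotion here; the label only switches once the new emotion dominates the window, at the cost of a short delay.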

USE implications

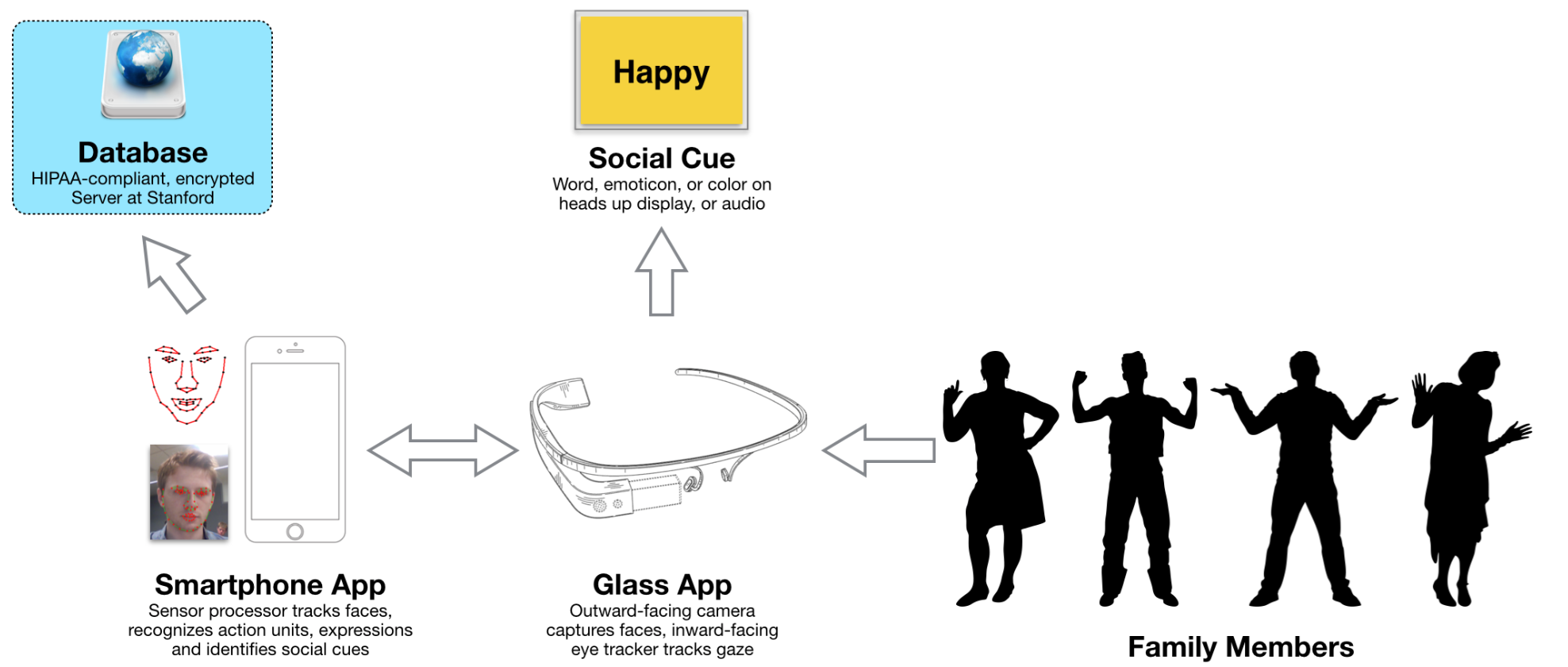

12) Some elderly people have problems recognizing emotions. This is problematic, as carers in primary care facilities for the elderly use their own emotional expressions in providing care, e.g. by smiling to cheer the elderly person up. It would be very useful for the elderly to have a device similar to the Autismglass in source 11. The Autismglass is in fact a Google Glass that was equipped with facial emotion recognition software (see fig. 4). It is currently used to help children diagnosed with autism recognise the emotions of the people surrounding them.

20) Assistive social robots have a variety of effects or functions, including (i) increased health by decreased level of stress, (ii) more positive mood, (iii) decreased loneliness, (iv) increased communication activity with others, and (v) rethinking the past. Most studies report positive effects. With regards to mood, companion robots are reported to increase positive mood, typically measured using evaluation of facial expressions of elderly people as well as questionnaires.

Privacy

“Amazon’s smart home assistant Alexa records private conversations” [3]. Headlines like this were all over the news less than a year ago and left Americans in shock about their privacy. But if you think about how this smart home assistant works, it should not come as a surprise that it captures every word you say. To be able to react to its wake word “Alexa”, it has to capture and analyze everything you say. This is where the difference between “recording” and “listening” enters the discussion. When the device is waiting for its wake word, it is “listening”: recordings are deleted immediately after a quick local scan. Once the wake word is said, the device starts recording. And here comes the shocking part: these recordings were not processed offline, nor were they deleted afterwards. After online processing of the recordings, which does not necessarily lead to a privacy violation when they are properly encrypted, the recordings were saved on Amazon’s servers to be used for further deep learning. The big problem was that Alexa would sometimes be triggered by words other than its wake word and start recording private conversations that were never meant to be stored in the cloud forever. These headlines affected the general public’s feeling of privacy. Amazon was even accused of using data from its assistant to make personalized advertisements for its clients.

Introducing a camera into people’s houses is a big invasion of their privacy, and if privacy isn’t seriously considered during development and implementation, problems similar to those in the Amazon case will arise.

Image processing

The question we would all ask when a camera is installed in our home is “What happens with the video recordings?”. In the case of Alexa, the recordings ended up on a server of Amazon. How will we prevent the videos that the robot makes from ending up online? There is a simple solution: our software will run completely offline, and the video recordings will be deleted immediately after processing. The processing itself is done by a neural network which will be trained in such a way that it only returns a string with the emotion. Internally, the neural network will gather more information than that, for example the position of the face in the picture, but this information can’t be obtained from outside the network. This is important because the robot will probably have other functions for which it needs to be connected to the internet, and these functionalities shouldn’t have access to any information other than the currently detected emotion. In this way we ensure that the only data which can be accessed outside the program is the current emotion.
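
The idea of exposing nothing but the emotion string can be sketched as a small wrapper around the classifier. This is only an illustration under stated assumptions: `classifier` is a hypothetical stand-in for the trained CNN that maps a frame to an index, the frame is a mutable buffer such as a `bytearray`, and the emotion list follows the reduced set discussed under "Emotion Classification". Clearing a buffer in Python does not guarantee that no copy remains in memory, so a real implementation would need more care.

```python
EMOTIONS = ("anger", "fear", "happiness", "sadness", "neutral")


class EmotionSensor:
    """Expose only the emotion label; never the frame or intermediate data."""

    def __init__(self, classifier):
        # Hypothetical callable: frame -> index into EMOTIONS.
        self._classifier = classifier

    def read_emotion(self, frame):
        """Classify one frame, erase it, and return only the label string."""
        try:
            index = self._classifier(frame)
            return EMOTIONS[index]
        finally:
            # Delete the recording immediately after processing,
            # even if classification failed.
            frame.clear()


# Stand-in classifier that always answers "sadness" (index 3).
sensor = EmotionSensor(lambda frame: 3)
frame = bytearray(b"\x01\x02\x03")
emotion = sensor.read_emotion(frame)
print(emotion)     # sadness
print(len(frame))  # 0
```

Other functions of the robot would then only receive the returned string, which matches the requirement that the current emotion is the only data available outside the program.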

Deduced information

A closed program does not guarantee privacy protection. In the case of Amazon, we see that Amazon is accused of using Alexa’s “normal output” to create personalized advertisements. In our case, a list of the emotional states of a person throughout the day would still be a big invasion of privacy. For local feedback, where the robot notices whether its action leads to happiness, this isn’t a problem. But let’s look at the situation of Bart in the case study, where the robot suggests to the homecare nurses that they take Bart outside more often. Here, personal information about Bart is shared with an external party. In this case the robot should first ask for permission to share the data. This could decrease the effectiveness of the robot, as Bart might decide not to share the information because he thinks that asking the nurses to take him outside more often is a burden for them. But this is the price we must pay for privacy, and it is Bart’s right to make this decision.

Feeling of safety

Would you like a camera recording you on the toilet? Even though this camera only recognizes your emotions and keeps that information to itself unless you agree to share it, the answer would probably be “no”. And it should be, because you wouldn’t feel safe if you were recorded during your visit to the toilet. When a robot with a camera is introduced into your house, your feeling of safety will be affected. To minimize this impact, the robot won’t be recording during the whole day. The camera will be integrated in the eye of the robot. When the robot is recording, the eyes are open. When it is not recording, the eyes will be closed and there will be a physical barrier over the camera. This should give the user a feeling of privacy when the eyes of the robot are closed. The robot will only record you when you interact with it. So as soon as you address it, its eyes will open and it will start monitoring your emotional state until the interaction stops. Besides that, the robot will have a checkup function. After consultation with the elderly person, this function can be enabled. It will make the robot wake every hour and check the emotional state of the elderly person. If this person is sad, the robot can start a conversation to cheer him or her up. If this person is in pain, the robot might suggest adjusting medication.
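
The recording rules above (eyes closed by default, open during an interaction, optional hourly checkup) can be written down as a small state holder. This is a sketch only: the class `CameraGate`, its method names and the use of seconds as time unit are assumptions made for illustration.

```python
class CameraGate:
    """Decide when the camera may record, following the rules above."""

    CHECKUP_INTERVAL_S = 3600  # "every hour"

    def __init__(self, checkup_enabled=False):
        # The checkup function is off unless the user agreed to it.
        self.checkup_enabled = checkup_enabled
        self.eyes_open = False  # closed eyes = physical barrier over camera
        self.last_checkup = 0.0

    def on_interaction_start(self):
        """The user addresses the robot: open the eyes."""
        self.eyes_open = True

    def on_interaction_end(self):
        """The interaction stops: close the eyes again."""
        self.eyes_open = False

    def may_record(self):
        """Recording is only allowed while the eyes are open."""
        return self.eyes_open

    def checkup_due(self, now):
        """Return True if an hourly checkup should start at time `now` (s)."""
        if not self.checkup_enabled or self.eyes_open:
            return False
        if now - self.last_checkup >= self.CHECKUP_INTERVAL_S:
            self.last_checkup = now
            return True
        return False


gate = CameraGate(checkup_enabled=True)
print(gate.may_record())       # False: eyes closed by default
gate.on_interaction_start()
print(gate.may_record())       # True: user addressed the robot
gate.on_interaction_end()
print(gate.checkup_due(3600))  # True: one hour has passed
```

When `checkup_due` returns True, the caller would open the eyes for the duration of the checkup in the same way as for a normal interaction.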

Privacy of third parties

There are situations in which there is another person in the house besides the user of the care robot. There are three cases in which this person might be recorded. The first case is when this third party starts interacting with the robot. We choose not to use face identification of the user, to protect his/her privacy, so the robot won’t know that it is recording a third party. But in our opinion, by starting a conversation with the robot the third party consents to being recorded. The second case is when the user interacts with the robot in the presence of a third party: the robot will focus on the user, and emotion recognition will be performed on the most prominent face in the video, which should be the user. The third case occurs when the checkup function is used. The robot might start a checkup routine while a third party is in the house. If it sees the face of this party, it will perform emotion recognition and could start a conversation. This can only be prevented by an option to switch the checkup function off during the visit. The implementation of such a switch should be discussed when the decision is made to use this checkup function.
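
Selecting "the most prominent face" could, for example, be done by taking the largest detected bounding box. That interpretation is an assumption of this sketch; the text does not define prominence, and the function name and the `(x, y, width, height)` box format are hypothetical.

```python
def most_prominent_face(boxes):
    """Return the face box with the largest area, or None if no face is seen.

    Each box is a tuple (x, y, width, height) in pixels.
    """
    if not boxes:
        return None
    return max(boxes, key=lambda box: box[2] * box[3])


detected = [(300, 40, 60, 60), (100, 80, 120, 140)]
print(most_prominent_face(detected))  # (100, 80, 120, 140)
```

Emotion recognition would then only be run on the returned box, so that a visitor standing further away is not analysed.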

Possible applications

The research team of (6) has developed an android which has facial tracking, expression recognition and eye tracking for the purposes of treatment of children with high functioning autism. During the sessions with the robot, they can enact social scenarios, where the robot is the 'person' they are conversing with.

Source (11) describes facial and emotion recognition with the Google Glass, for children with Autism Spectrum Disorder (ASD). See also https://www.youtube.com/watch?v=_kzfuXy1yMI for a demonstration of Stanford's 'autismglass'.

Source (15) has developed a doll which can recognize emotions and act accordingly using the Eyes of Things (EoT) platform. This is an embedded computer vision platform which allows the user to develop artificial vision and deep learning applications that analyse images locally. By using the EoT platform in the doll, they eliminate the need for the doll to connect to the cloud for emotion recognition, which reduces latency and removes some ethical issues. It has been tested on children.

Source (22) uses facial expression recognition to monitor the safety and health status of the elderly.

Impact on life

What is the robot's impact on the life of an elderly person?

Our robot proposal

Steps to be taken

First, a study of the state of the art was done to get familiar with the different techniques of using a CNN for facial recognition. It will also be crucial for deciding how our project will go beyond what has already been researched. Then a database with relevant photos will be constructed for training the CNN, as well as one to test it. A CNN will be constructed, implemented and trained to recognize emotions using the database with photos. After training, the CNN will be tested with the testing database and, if time allows, on real people. The usefulness of this CNN in elderly care robots will then be analysed, as well as the ethical aspects.

What can our robot do?

The SocialRobot platform can execute various actions. Based on the actions, a minimum standard for the accuracy of facial emotion recognition can be specified, as the actions can have varying degrees of invasiveness.

- facial recognition: The robot can recognise the face of the elderly person and their carers.

- emotion recognition: Using a combination of facial data and speech data, the robot classifies the emotions of the elderly person.

- navigate to: The robot can go to a specific place or room in the elderly person's environment.

- approach person: The robot can use the same principle to detect a person and approach them safely.

- monitoring: Monitoring of the elderly person's environment.

- docking/undocking: The robot can autonomously drive towards a docking station to charge its batteries.

- SoCoNet call: The robot can retrieve and manage the information of the elderly in a so-called SoCoNet database.

- speech synthesis: The robot can use predefined text-to-speech recordings to verbally interact with the user.

- information display: The robot can display information on a tablet interface.

- social connection: The robot has a Skype interface to establish communication between the elderly person and their carers/friends.

Emotion Classification

The current package of emotion recognition in the SocialRobot platform contains the following emotions: Anger, Fear, Happiness, Disgust, Boredom, Sadness, Neutral. This is because the researchers of the project have used openEAR software which employs the Berlin Speech Emotion Database (EMO-DB). However, not all of these emotions are equally important for our application. In addition to that, we do not recognise boredom as an emotion, as this is rather a state. The most important emotions for the treatment of elderly persons are probably anger, fear and sadness. For the purposes of our research project, boredom and disgust can be omitted, as the inclusion of unnecessary features may complicate the classification process.

Actions

The actions described under "What can our robot do?" can be combined for the robot to fulfill several tasks. We have chosen 3 tasks that are especially important for the care of lonely elderly people, given the privacy requirements described in the previous sub-section. These three tasks are associated with the emotion 'sadness', but of course many other tasks can be envisioned. For each of the other emotions, we have decided to illustrate possible actions, but in less detail than for the emotion 'sadness'. This approach is similar to that of (https://pdfs.semanticscholar.org/ca2e/d17131fb7fc132d59f171d57fed447835c4e.pdf). The difference is that that project investigates the application of speech emotion recognition specifically, whereas we mainly investigate the influence of facial emotion recognition.

anger

It is possible that the elderly person does not accept the SocialRobot in their house at times, and that they do not want to have the SocialRobot near them while they are angry. If a high anger score is reached, the SocialRobot could ask the elderly person whether they want the SocialRobot to move back to its docking station. If the docking station is inside the home of the elderly person, the SocialRobot could additionally alert a carer to ensure that no harm is inflicted on either the robot or the elderly person themselves.

fear

Many elderly people have mobility issues. If the elderly person under the surveillance of the SocialRobot falls, they will probably score high under fear. The SocialRobot can then carefully move towards the location of the elderly person and ask them whether they want the robot to request help. If the answer is yes, the robot can reassure the elderly person that help is on the way and alert their carer.

happiness

On the birthday of the elderly person, their grandchildren visit them. They will likely score high on the happiness score, as they are happy to see their family. In such a situation, the robot does not really have to carry out an action, as it is not immediately necessary. However, the robot could further facilitate the social connection between the elderly person and their grandchildren by letting them play a game together on the user interface.

neutral

Just like with happiness, if the facial expression of the elderly person is neutral, there is no direct need to carry out an action. However, it is important for the robot to keep monitoring the face of the elderly person. It is possible that the robot simply does not have enough information to determine a facial expression. If this is the case, the robot could ask the elderly person a question, for example whether they are happy. The answer and the facial expression could give the robot more information about the state of the person.

The three actions for sadness have been illustrated with a chronological overview of a potential situation where the person is sad. Based on the score for sadness, the SocialRobot chooses one of the three actions.

Get Carer

This situation is most useful in elderly care homes. As elderly care homes are becoming increasingly understaffed, a care robot could help out by entertaining the elderly person or by determining when they need additional help from a human carer. The latter is a complicated task, as the autonomy of the elderly person is at stake, and each elderly person will have a different way of communicating that they need help. It is possible to include a button in the information display of the robot which says 'get carer'. In this case, the elderly person can decide for themselves if they need help. This could be helpful if the elderly person is not able to open a can of beans or does not know how to control the central heating system. This option is well suited for our target group of elderly people that have full cognitive capabilities, for simple actions that have a low risk of violating the dignity of the elderly person.

However, if the elderly person feels depressed and lonely, it can be harder for them to admit that they need help. The robot could use emotion recognition to determine a day-by-day score (1-10) for each emotion. These scores can be monitored over time in the SoCoNet database. The elderly person should give consent for the robot to share this information with a carer. A less confronting approach might be to show the elderly person the decrease in happiness scores on the display and to ask them whether they would want to talk to a professional about how they are feeling. Then, a 'yes' and 'no' button might appear. If the elderly person presses 'yes', the robot can get a carer and inform them that the elderly person needs someone to talk to, without reporting the happiness scores or going into detail what is going on.

| SocialRobot | Elderly person | Carer |
|---|---|---|
| Moves to the elderly person's location | | |
| Asks the elderly person if they want to request help | Responds "yes" to the SocialRobot or presses the "yes" button on the display | |
| Turns camera toward the elderly person's face | Has a sad face | |
| Recognises the elderly person's face | | |
| Classifies the elderly person's 'sadness' emotion with a score above 7/10 | | Receives a notification of the elderly person's emotion score and that they need help |
| | | Goes towards the elderly person |
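The idea of monitoring day-by-day scores and noticing a decrease in happiness can be illustrated with a small sketch. This is not part of the SocialRobot software: the function name `happiness_dropped`, the 7-day window and the threshold of one point are all assumptions chosen for illustration, and the scores would in practice come from the SoCoNet database.

```python
from statistics import mean


def happiness_dropped(daily_scores, window=7, min_drop=1.0):
    """Return True if the mean of the last `window` daily scores is at least
    `min_drop` points lower than the mean of the `window` days before that.

    `daily_scores` is a chronological list of daily happiness scores (1-10).
    The window length and the drop threshold are illustrative assumptions.
    """
    if len(daily_scores) < 2 * window:
        return False  # not enough history to compare two full windows
    previous = daily_scores[-2 * window:-window]
    recent = daily_scores[-window:]
    return mean(previous) - mean(recent) >= min_drop


# Hypothetical scores: a stable week followed by a lower week.
scores = [7, 7, 8, 7, 7, 8, 7,
          6, 5, 6, 5, 5, 6, 5]

if happiness_dropped(scores):
    # The robot only offers help; the elderly person decides via yes/no.
    print("Show the trend and ask whether they want to talk to a professional.")
```

Comparing two windows rather than two single days makes the check less sensitive to one bad day, which fits the goal of not confronting the elderly person unnecessarily.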

Call a friend

Lonely elderly people often do have some friends or family, but these might live elsewhere and not visit often. The elderly person should not become socially isolated, as social isolation is an important contributor to loneliness. As mentioned before, loneliness is not only detrimental to their quality of life, but also to their physical health. Therefore, it is important for the care robot to provide a solution to this problem. The SocialRobot platform could monitor the elderly person's emotions over time, and if 'sad' scores higher than usual, the robot could encourage the elderly person to initiate a Skype call with one of their relatives/friends via the social connection function. The elderly person should still press a button on the display to actually start the call, to ensure autonomy.

| SocialRobot | Elderly person | Friend |
|---|---|---|
| Moves to the elderly person's location | | |
| Initiates a conversation about the weather | Responds to the SocialRobot: "I am sad that I cannot go outside" | |
| Recognises the elderly person's answer | | |
| Turns camera toward the elderly person's face | Has a sad face | |
| Recognises the elderly person's face | | |
| Classifies the elderly person's 'sadness' emotion with a score below 7/10, but above 4/10 | | |
| Asks the elderly person if they want to call a friend | Responds "yes" or pushes the "yes" button on the display | |
| Initiates a video call with the elderly person's favourite contact | | Receives a call notification |

Have a conversation

Sometimes, the lonely person just needs someone to talk to, but does not have available relatives or friends. In such cases, the robot might step in and have a basic conversation with the elderly person concerning the weather or one of their hobbies. When humans have a conversation, they tend to move closer to each other, if only to understand each other better. The SocialRobot can approach the elderly person by recognising their face and moving towards them. The SocialRobot is 120 cm tall, which is proven to be an approachable height that does not intimidate the elderly person.

The initiation of the conversation could be done by the elderly person themselves. For this purpose, it might be a good idea to give the SocialRobot a name, like 'Fred'. Then, the elderly person can call out Fred, so that the robot will undock itself and approach them. If the initiation of the conversation comes from the robot, it is important for the robot to know when the elderly person is in a mood to be approached. If the person is 'sad' or 'angry' when the robot is in the midst of approaching the person, then the robot should stop and ask the elderly person 'do you want to talk to me?'. If the elderly person says no, the robot will retreat.

| SocialRobot | Elderly person |
|---|---|
| Moves to the elderly person's location | |
| Initiates a conversation about the weather | Responds to the SocialRobot: "I am sad that I cannot go outside" |
| Recognises the elderly person's answer | |
| Turns camera toward the elderly person's face | Has a sad face |
| Recognises the elderly person's face | |
| Classifies the elderly person's 'sadness' emotion with a score below 4/10 | |
| Asks the elderly person whether they want to continue the conversation | Responds "yes" or pushes the "yes" button on the display |
| Asks a question about the person's favourite hobby | |
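The three sadness scenarios above amount to a threshold rule on the sadness score. The sketch below shows one possible reading of it. The function name and the action labels are hypothetical, and the handling of scores of exactly 4 or 7 is an assumption, since the scenarios only speak of scores "above" and "below" these values.

```python
def choose_sadness_action(sadness_score):
    """Map a sadness score on a 0-10 scale to one of the three actions.

    Thresholds follow the scenarios: above 7 -> get carer,
    between 4 and 7 -> call a friend, below 4 -> have a conversation.
    Assumption: a score of exactly 7 falls under 'call a friend' and a
    score of exactly 4 under 'have a conversation'.
    """
    if not 0 <= sadness_score <= 10:
        raise ValueError("sadness score must lie between 0 and 10")
    if sadness_score > 7:
        return "get_carer"
    if sadness_score > 4:
        return "call_a_friend"
    return "have_a_conversation"


for score in (8.5, 5.0, 2.0):
    print(score, "->", choose_sadness_action(score))
```

In every branch the selected action is only a proposal: in all three scenarios the elderly person still confirms with "yes" before the robot acts.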

Consequence analysis

The actions described in the previous section can have several consequences when the emotion classification results are corrupted by false positives or false negatives.

While most current literature on emotion classification is geared towards improving the reliability of the classification, it does not set a minimum classification accuracy per emotion. It therefore seems that this is a relatively unexplored field of technological ethics. Instead of setting a minimum value, we have thus decided to solely regard the consequences of false positives and false negatives for each emotion. For the purposes of answering our research question, false positives and negatives concerning the recognition of 'sadness' were found to be particularly important.

| Emotion | False Positive cost | False Negative cost |

|---|---|---|


anger

If the system has a false negative for anger, this might have immense consequences. Even though the robot is not able to physically touch the person, the robot might still unwillingly enter the elderly person's private space. If the elderly person is angry, this might result in harm to the robot or the person themselves, which is a great cost. A false positive might result in unnecessary workload for the carer.
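To make the terms concrete, the sketch below counts false positives and false negatives per emotion from a list of true and predicted labels. The labels and the example data are made up for illustration. They are not results from our network.

```python
def error_counts(true_labels, predicted_labels, emotion):
    """Return (false_positives, false_negatives) for one emotion.

    A false positive: the classifier predicts `emotion`, but the true
    label is something else. A false negative: the true label is
    `emotion`, but the classifier predicts something else.
    """
    pairs = list(zip(true_labels, predicted_labels))
    false_positives = sum(1 for t, p in pairs if p == emotion and t != emotion)
    false_negatives = sum(1 for t, p in pairs if t == emotion and p != emotion)
    return false_positives, false_negatives


# Hypothetical labels, purely for illustration.
true_labels = ["sadness", "neutral", "anger", "sadness", "happiness", "anger"]
predicted = ["sadness", "sadness", "neutral", "neutral", "happiness", "anger"]

for emotion in ("sadness", "anger", "neutral"):
    fp, fn = error_counts(true_labels, predicted, emotion)
    print(f"{emotion}: {fp} false positive(s), {fn} false negative(s)")
```

Counting the two error types separately matters here because their consequences differ: a missed 'anger' may lead to the robot entering the person's private space, while a wrongly detected 'anger' mainly costs the carer time.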

Deliverables

The deliverables of this project include:

- Software: a neural network trained to recognize emotion from pictures of facial expressions. This software should be able to distinguish between the 7 basic facial expressions: anger, disgust, joy, fear, surprise, sadness.

- A wiki page: this will describe the entire process the group went through during the research, as well as a technical and USE evaluation of the product.

- The analysed results of a survey, geared towards the acceptance of robots in their home by elderly people.

- The analysed results of an interview, conducted on several groups of carers in an elderly home.

Data sets

https://en.wikipedia.org/wiki/Facial_expression_databases

- Cohn-Kanade: http://www.consortium.ri.cmu.edu/data/ck/CK+/CVPR2010_CK.pdf

- RAVDESS: only videos.

- JAFFE: only Japanese women. Not relevant for our research.

- MMI

- Belfast Database

- MUG: 35 women and 51 men participated in this database, all of Caucasian origin and between 20 and 35 years of age. The men are with or without beards. The subjects are not wearing glasses, except for 7 subjects in the second part of the database. There are no occlusions, except for a few hairs falling on the face. The images of 52 subjects are available to authorized internet users. The data that can be accessed amounts to 38GB.

- RaFD: request for access has been sent (Rik). Access denied.

- FERG[4]: avatars with annotated emotions. Request for access has been sent (Rik). Access granted.

- AffectNet[5]: huge database (122GB) containing 1 million pictures collected from the internet, of which 400 000 are manually annotated. Access granted.

- IMPA-FACE3D: 36 subjects, 5 of them elderly. Open access.

- FEI: only neutral and smiling expressions, university employees.

- Aff-Wild: downloaded (Kilian).

Facial Emotion Recognition Network

There is also a technical aspect to our research. This involves creating a convolutional neural network (CNN) that can differentiate between seven emotions from facial expressions only. The seven emotions used are mentioned in the emotion classification section of our robot proposal.

This CNN needs to be trained before it can be used. Training is done by providing the CNN with the data to be analysed (in this case images of facial expressions) together with the correct label, i.e. the output the network should give for that input. The network iteratively optimizes its weights by minimising, for instance, the sum of the squared errors between the predicted values and the ground truth (the given labels). During training, a separate validation set is also used, and its accuracy and loss are shown during training. This validation set is used to see whether the network is overfitting on the training data. In that case the network would just be learning to recognize the specific images in the training set, instead of learning features that can be generalized to other data. Overfitting is detected by looking at how the network performs on the validation set. After training, another separate dataset called the test set is used to see how well the CNN performs.
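The two ingredients of this paragraph, the sum-of-squared-errors loss and the use of the validation loss to spot overfitting, can be sketched in plain Python. This is a minimal illustration, not our training code: the `patience` rule in `is_overfitting` is an assumed heuristic, and the loss values in the example are invented.

```python
def sum_squared_errors(predicted, target):
    """Sum of squared differences between network output and ground truth."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target))


def is_overfitting(train_losses, val_losses, patience=3):
    """Heuristic overfitting check on per-epoch loss histories.

    Returns True when the validation loss has not improved on its earlier
    best for `patience` epochs while the training loss kept decreasing.
    """
    if len(train_losses) != len(val_losses):
        raise ValueError("loss histories must have the same length")
    if len(val_losses) <= patience:
        return False  # too few epochs to judge
    best_before = min(val_losses[:-patience])
    val_stalled = min(val_losses[-patience:]) >= best_before
    train_improving = train_losses[-1] < train_losses[-patience - 1]
    return val_stalled and train_improving


# One-hot ground truth for a 3-class toy example and a network output.
loss = sum_squared_errors([0.7, 0.2, 0.1], [1.0, 0.0, 0.0])
print(f"loss = {loss:.2f}")

# Invented histories: training loss keeps falling, validation loss rises.
train_hist = [1.0, 0.8, 0.6, 0.5, 0.4, 0.3]
val_hist = [1.1, 0.9, 0.8, 0.85, 0.9, 0.95]
print("overfitting:", is_overfitting(train_hist, val_hist))
```

A training loss that keeps falling while the validation loss stalls or rises is exactly the situation in which the network memorises the training images instead of learning generalisable features.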

For the training, validation and initial testing, a dataset called FacesDB is used. There will be two test sets, one of people with estimated ages below 60, and one of people with estimated ages above 60. After this, the goal is to perform a test on elderly people, where images of their facial expressions are processed in real-time. The first thing to be tested is whether a network trained only on images of people with an estimated age below 60 will still predict the right emotions for elderly people. If this works, the real-time test will be performed.

Dataset

The dataset that is used for training, validation and initial testing is called FacesDB. This dataset consists of 38 participants, each acting out the seven different emotions. In this dataset, five of the 38 participants are considered to be “elderly”. These five will be excluded from all other sets and put into a separate test set. There will also be a validation set, consisting of 3 participants, and a “younger” test set, also consisting of 3 people.
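The split described above can be sketched as a split by participant, so that no person appears in more than one set. The participant IDs, the choice of which IDs are "elderly" and the random selection with a fixed seed are assumptions for illustration only. With 38 participants, 5 elderly, 3 for validation and 3 for the younger test set, 27 participants remain for training.

```python
import random


def split_participants(all_ids, elderly_ids, n_val=3, n_young_test=3, seed=0):
    """Split participant IDs into disjoint train/validation/test sets.

    Elderly participants go to their own test set. The validation and
    "younger" test sets are drawn at random from the remaining
    participants (the random draw is an assumption, not the wiki's method).
    """
    elderly = set(elderly_ids)
    younger = [pid for pid in all_ids if pid not in elderly]
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    rng.shuffle(younger)
    return {
        "elderly_test": sorted(elderly),
        "validation": sorted(younger[:n_val]),
        "younger_test": sorted(younger[n_val:n_val + n_young_test]),
        "train": sorted(younger[n_val + n_young_test:]),
    }


all_ids = list(range(1, 39))          # 38 participants, hypothetical IDs 1..38
elderly_ids = [34, 35, 36, 37, 38]    # hypothetical: which five are elderly

splits = split_participants(all_ids, elderly_ids)
for name, ids in splits.items():
    print(name, len(ids))
```

Splitting by participant rather than by image avoids the network being tested on a face it has already seen during training.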

The images in this dataset are 640x480 pixels, with the faces of the participants in the middle of the image. The background of all images is completely black.

Architecture

For a complicated problem like this, a simple CNN does not suffice. This is why, instead of building our own CNN, for now a well-known image classification model is used. This CNN is called VGG16. This network has been trained for the classification of 1000 different objects in images. Using transfer learning, we will be using this network for facial emotion classification.

Results

For now, one problem remains before we have actual results: VGG16 only works with images of 224x224 pixels. Three solutions have come to mind thus far:

1) Training the network fully, instead of just the last layer. This means we use the architecture of VGG16, but lose the advantage of VGG16 being trained on other images beforehand.

2) Downsampling all images. This would preserve the values of the weights gained from the previous training. The downside is that, by downsampling, information from the image will be lost, making training on the images more difficult.

3) Cropping the images, cutting away some of the background. This would be the optimal solution, but it would be difficult to implement for the real-time test.

Besides this, capturing footage, turning it into images and feeding these into the network also still needs to be coded.

Real time data cropping

For a real-time application of our software we use the built-in webcam of a laptop. Using OpenCV 4.1 [6], getting video footage is straightforward. However, the obtained video frames are not 224 by 224 pixels, which gives rise to a problem similar to the one mentioned above for the database pictures. Two possible solutions are downsampling the video frames or cropping the images. The images from the database are rectangles in portrait orientation, whereas the images from the webcam are rectangles in landscape orientation. Downsampling these to a square format would lead to wide and long faces respectively, which cannot be compared properly. We therefore decided it was necessary to crop the faces. The difficulty with cropping is that no part of the face should be cut away from the image. Using a pretrained face recognition CNN, the location of the face in the image can be determined. After that, a square image around this face is cut out, which can be downsampled if necessary to obtain a 224 by 224 pixel image. Based on the “Autocropper” application [7], we wrote code that performs the tasks described above.
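The geometric part of this cropping step can be sketched as follows. This is not the code we based on Autocropper: the function name, the `margin` parameter and the example bounding boxes are assumptions, and the face bounding box is taken as given (in practice it would come from the face detection network).

```python
def square_crop_box(face_box, frame_width, frame_height, margin=0.2):
    """Compute a square crop around a detected face.

    `face_box` is (x, y, w, h) in pixels, with (x, y) the top-left corner.
    The square is centred on the face, enlarged by `margin` on each side so
    that the face is not cut off, and shifted if needed to stay inside the
    frame. Returns (left, top, side).
    """
    x, y, w, h = face_box
    side = int(round(max(w, h) * (1 + 2 * margin)))
    side = min(side, frame_width, frame_height)  # square must fit the frame
    centre_x = x + w / 2
    centre_y = y + h / 2
    left = int(round(centre_x - side / 2))
    top = int(round(centre_y - side / 2))
    # Shift the square back inside the frame instead of shrinking it.
    left = max(0, min(left, frame_width - side))
    top = max(0, min(top, frame_height - side))
    return left, top, side


# Hypothetical detections in a 640x480 webcam frame.
print(square_crop_box((250, 120, 140, 180), 640, 480))  # face near the centre
print(square_crop_box((580, 400, 60, 60), 640, 480))    # face near a corner
```

Because the crop is square, scaling it to 224 by 224 pixels afterwards (for instance with OpenCV's `cv2.resize`) does not distort the proportions of the face, which was the problem with downsampling the full frame.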

Interview

This interview will be held with preferably 3-5 caretakers or other secondary parties who are involved in elderly care. Beforehand, we came up with some questions to steer the conversations in the same direction.

- Position in care (who are you):

  - What is your role?

  - What is your (in)direct contact with elderly people in this role?

- Professional relationship with robots

  - Have you worked with a social or companion robot before?

    - If so, what are your experiences?

- Opinion about the robot

  - Would it contribute positively to your work if there were a robot that, alongside you, also regularly checks how the elderly are doing?

  - Do you think that deploying these robots would reduce your workload, leaving you more time for patient-centred care?

  - Is it better for a robot to do these checks at set intervals, or for it to record 24/7?

  - How would you feel if such a robot came to this care home?

  - Do you feel safer when the robot's eyes are closed while it is not recording?

  - Does it improve care, in your opinion?

  - Do you think such a robot can make a good contribution to the lives of the elderly?

- Privacy

  - How do you feel about being recorded, knowing that the robot records 24/7?

  - How do you think the elderly will feel about being recorded continuously?

  - Are you fine with the robot recording you while you care for an elderly person, with the aim of checking whether this goes well and of improving the quality of care?

    - If so, may the footage be shared among colleagues, and who has access to the footage?

    - May footage only be shared (or even recorded) when a certain emotion is expressed?

    - If not, why not?

Survey

The Technology Acceptance Model (TAM) (source 1) states that two factors determine the elderly person's acceptance of a robot technology: perceived ease-of-use (PEOU) and perceived usefulness (PU).

The TAM has successfully been used before on 'telepresence' robot applications for the elderly (see source 2). Telepresence is when a robot monitors the elderly person using smart sensors, such as cameras and speech recorders in the case of the SocialRobot project. Just like in source 2, the TAM has been used to formulate a questionnaire, where the elderly person can indicate to what extent they agree with a statement on a 5-point Likert scale. This scale goes from "I totally agree", which is given the maximum score of 5, to "I totally disagree", which is given the minimum score of 1. As most elderly people in the Netherlands have Dutch as their mother tongue, the questionnaire was translated into Dutch. First follows an introduction to the questionnaire.

Questionnaire introduction (translated from the Dutch original)

We are conducting research into the social acceptance of a care robot that uses facial emotion recognition. We would like to hear your opinion on this. Your answers will be processed anonymously (without your name or personal details). The results will be used in a user case study. Below is an introduction to the robot technology, followed by the questionnaire.

We take the robot from the SocialRobot project as our basis. The SocialRobot project is a European project in which a care robot for elderly care has been developed. This robot can move through the house independently. It can interact with the user through both speech and a touchscreen. It also has internet access and can therefore contact third parties such as home care services or family. We want to extend this robot with a camera that is used for facial emotion recognition. When the robot interacts with the user, the camera is activated to read the user's facial emotion. In this way, conversations, for example, can be better attuned to the user's emotional state. To safeguard the user's privacy, the camera images are deleted immediately after analysis. The information obtained about the user's emotion is only shared with others if the robot has been given permission to do so. For example, if the robot notices that the user is often in pain, it will suggest that the user share this information with their doctor, so that the doctor can prescribe better painkillers. The robot could be fitted with a cover that slides over the camera when it is not recording, to enhance the user's sense of privacy. Finally, there is an optional “check-up” function. When this function is switched on, the robot will seek out the user several times a day and determine their emotional state. If it sees that someone is in a melancholic mood, the robot can, for example, start a conversation to distract them.

A number of statements now follow. You can give your opinion on each statement by ticking whether you strongly agree / agree / are neutral / disagree / strongly disagree. Thank you in advance for your contribution.

Perceived Usefulness:

- Having the SocialRobot platform in my house would enable me to accomplish tasks more quickly. (Dutch version, translated: If I had Fred (the name of our SocialRobot) in my house, I could carry out tasks, such as household tasks, more quickly.)

- Having the SocialRobot platform in my house would enable me to get the most out of my day and be more productive. (Dutch version, translated: I would be more productive and get more done in a day if I had Fred in my house.)

- I would find the SocialRobot platform useful. (Dutch version, translated: I would find Fred useful.)

Perceived Ease of Use:

- Learning to operate the SocialRobot would be easy for me. (Dutch version, translated: I would be able to operate Fred easily.)

- I would find it easy to get the SocialRobot to do what I want it to do. (Dutch version, translated: I could easily get Fred to carry out the tasks I want him to do.)

- My interaction with the SocialRobot would be clearer and more understandable if it were able to read my emotions. (Dutch version, translated: I would be able to communicate better with Fred if he could see my emotions.)

For the sake of brevity and applicability, some of the questions from the TAM model were omitted. This was because the nuances between these questions and the questions listed above were unclear, or unclear in translation or because the questions were more relevant for secondary users than for elderly people. To investigate the effect of this robot on the feeling of privacy and loneliness, the following questions were added to the questionnaire:

- I would have a conversation with the SocialRobot about the weather. (Dutch version, translated: I would talk with Fred about the weather.)

- I would tell the SocialRobot how I'm feeling. (Dutch version, translated: I would tell Fred how I feel.)

- Automatic emotion recognition by the robot would help me interact with it. (Dutch version, translated: It would help me in interacting with the robot if it could automatically recognise my emotions.)

- I would appreciate it if the SocialRobot suggested actions (like calling a family member or going outside to buy groceries) when I feel lonely. (Dutch version, translated: I would appreciate it if Fred made suggestions (such as calling a friend or going out to buy groceries) when Fred notices that I am lonely or sad.)

- I would feel comfortable with video and audio recordings by the SocialRobot, as long as I know they are deleted afterwards and not sent to my carers without my permission. (Dutch version, translated: I would not mind Fred making audio and video recordings of me, as long as I am certain that these recordings are deleted afterwards and cannot be sent to my care providers/informal carers without my permission.)
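The Likert scoring described in this section can be sketched as follows. The scores of 5 for "totally agree" and 1 for "totally disagree" follow the text. The labels and scores for the three middle options, the function name and the example answers are assumptions for illustration, not survey results.

```python
# Score per answer option; the end points follow the text, the middle
# three labels are an assumed English rendering of the answer options.
LIKERT = {
    "totally agree": 5,
    "agree": 4,
    "neutral": 3,
    "disagree": 2,
    "totally disagree": 1,
}


def construct_score(responses):
    """Mean Likert score of one respondent over the items of one construct."""
    if not responses:
        raise ValueError("at least one response is required")
    return sum(LIKERT[answer] for answer in responses) / len(responses)


# Hypothetical answers of one respondent to the three PU and three PEOU items.
pu_answers = ["agree", "neutral", "totally agree"]
peou_answers = ["disagree", "agree", "agree"]

print("Perceived usefulness:", construct_score(pu_answers))
print("Perceived ease of use:", round(construct_score(peou_answers), 2))
```

Averaging per construct keeps perceived usefulness and perceived ease of use separate, so that the two TAM factors can be compared instead of being merged into one overall acceptance number.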

source 1: Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. https://www.jstor.org/stable/pdf/249008.pdf

source 2: Evaluation of an Assistive Telepresence Robot for Elderly Healthcare. https://link.springer.com/article/10.1007/s10916-016-0481-x

Planning

A detailed plan will be kept up to date in this Gantt Chart. The Gantt Chart will be updated during the course and will be used to visualise the tasks at hand and their deadlines, together with the people who are responsible for delivering each task. (The responsible person is not the only person working on a task, but is the person who makes sure that the task is finished on time.)

First Week

The wiki contains a subject (problem statement and objectives), the users and what they require, state-of-the-art, approach, planning, milestones, deliverables and who will do what. SotA: a literature study in which at least 25 relevant scientific papers and/or patents are studied, with a summary on the wiki!

Second Week

- Gather databases and request access + make a list of the databases: Rik + Selina (affectnet)

- Try the machine learning model on Cohn-Kanade database: Kilian

- Structure the wiki and write a detailed proposal for our research project: Arthur

- Second half of the sources in state-of-the art: Wietske

Third Week

- More concrete planning

- Contact the HTI psychologist, Raymond Cuijpers (first ask Weria about the form). It will probably not fall within Weria's research proposal. Which elderly care home is he approaching and what exactly does he plan to do?

- Email Weria: Rik

- Interviews with nurses/informal carers; create the questionnaire/interview.

- Proposal for using the program

- What influence does it have on the life of the elderly person? Wietske

- As an elderly person, you would rather not have a camera in your house. Privacy. What effect would it have if the robot looks at you? Would it be an idea to let the robot record only when the elderly person looks at it or calls it? Rik

- Accuracy in different contexts, for the different actions the robot can perform. Look at SocialRobot and the article from the first course. What actions can the robot perform? Arthur

- USE requirements. What about the requirements of the secondary users, namely the carers and informal carers? Enterprises? Selina

- Continue with the dataset and the program: Kilian

Week 4

- Arthur: make Survey for elderly + look at specific reactions of robot

- Wietske: finish part about "impact on elderly" + email Lambert with question about the interview + update planning

- Selina: contact elderly care home + finish part about enterprises

- Kilian: fix library issues + write progress on neural network

- Rik: Contact Cuijpers + work on questions for an interview with caretakers + write intro survey/interview

Week 5

- Arthur: work out the survey intro with what the robot can do and a user case

- Selina: schedule the interview and put the finishing touches on the interview questions

- Rik: program the camera for use in the program

- Kilian: work on the neural network

Sources

Sources for the second self-study: https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=7405084 This study is actually very close to the application we had in mind. The assistive care robot recognises the elderly person's face and then reads their emotion from the response that they give to questions (so, it is related to speech processing, not to facial expression analysis). The accuracy of this system was 82%. The idea is that the robot uses the response as input, e.g. if the person is sad, they will encourage them to initiate a skype call with their friends, if the person is bored, they will encourage them to play cards online with friends.

This is another example of such a system that uses voice analysis for interactive human-robot contacts: https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=7480174

https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=4755969 This robot has facial expressions, but it does not interact with its users.

1) https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=8039024 A Facial Expression Emotion Recognition Based Human-robot Interaction System

2) https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=956083

3) https://link.springer.com/content/pdf/10.1007%2Fs00521-018-3358-8.pdf A hybrid deep learning neural approach for emotion recognition from facial expressions for socially assistive robots.

4) https://link.springer.com/content/pdf/10.1007%2F978-94-007-3892-8.pdf

5) Extended deep neural network for facial emotion recognition, with extensive input data manipulation: https://reader.elsevier.com/reader/sd/pii/S016786551930008X?token=3E015F2B3E9E6290D0EA5A3C8CA42C6F7198698E6A17043ADA159C2A5106C4053CBDEE27E39196AE6C415A0DDAF711F4

6) https://ieeexplore.ieee.org/abstract/document/1556608 "An android for enhancing social skills and emotion recognition in people with autism"

7) https://pdfs.semanticscholar.org/e97f/4151b67e0569df7e54063d7c198c911edbdc.pdf "A New Information Fusion Method for Bimodal Robotic Emotion Recognition"

8) "Bayesian face recognition" https://www.sciencedirect.com/science/article/pii/S003132039900179X

Kalman filters for emotion recognition:

9) https://link.springer.com/chapter/10.1007/978-3-642-24600-5_53: Kalman Filter-Based Facial Emotional Expression Recognition. This article uses a 3D Candide face model that describes features of face movement, such as 'brow raiser', from which the authors have selected the ones they consider most important. The joint probability describes the similarity between the image and the emotion described by the parameters of the Kalman filter of the emotional expression as described by the features, and it is maximised to find the emotion corresponding to the picture. The system is more effective than other Bayesian methods like Hidden Markov Models and Principal Component Analysis.

10) https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=4658455: Kalman Filter Tracking for Facial Expression Recognition using Noticeable Feature Selection. This paper used conventional CNNs to recognise the facial expression, but the tracking of the features was carried out with a Kalman Filter.
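The feature tracking mentioned in sources 9 and 10 can be illustrated with a minimal sketch of a scalar Kalman filter that smooths one noisy facial-landmark coordinate over successive video frames. This is not the method of either paper: the random-walk motion model, the function name `kalman_smooth_1d`, and the noise variances are assumptions chosen purely for illustration.

```python
def kalman_smooth_1d(measurements, process_var=1e-3, meas_var=1.0):
    """Smooth a sequence of noisy 1D landmark positions (e.g. a brow y-coordinate).

    Assumed model (hypothetical, for illustration only):
      state:       x_k = x_{k-1} + w,  w ~ N(0, process_var)   (random walk)
      measurement: z_k = x_k + v,      v ~ N(0, meas_var)
    """
    if not measurements:
        return []

    # Initialise the state with the first measurement and its uncertainty.
    estimate = float(measurements[0])
    variance = meas_var
    estimates = [estimate]

    for z in measurements[1:]:
        # Predict: the landmark may have drifted, so uncertainty grows.
        variance += process_var

        # Update: blend prediction and measurement using the Kalman gain.
        gain = variance / (variance + meas_var)
        estimate += gain * (z - estimate)
        variance *= (1.0 - gain)

        estimates.append(estimate)

    return estimates


if __name__ == "__main__":
    # Noisy readings of a landmark that actually sits at y = 10.
    noisy = [11.0, 9.0, 11.0, 9.0, 11.0, 9.0]
    print(kalman_smooth_1d(noisy))
```

A small `process_var` makes the filter trust its prediction and smooth strongly; a larger value lets the estimate follow fast facial movements at the cost of more jitter.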

11) Facial recognition with Google Glass for children with Autism Spectrum Disorder (ASD): https://humanfactors.jmir.org/2018/1/e1/ "Second Version of Google Glass as a Wearable Socio-Affective Aid: Positive School Desirability, High Usability, and Theoretical Framework in a Sample of Children with Autism". See also https://www.youtube.com/watch?v=_kzfuXy1yMI for a demonstration of Stanford's autism class.

12) Autistic children are not the only target group that has difficulty recognizing emotions in others; this is also the case for the elderly: https://www.tandfonline.com/doi/pdf/10.1080/00207450490270901 "Emotion Recognition Deficits in the Elderly". This is problematic, as carers in primary care facilities for the elderly rely on their own emotional expressions when providing care, e.g. smiling to cheer an elderly person up.

13) https://www.researchgate.net/profile/Antonio_Fernandez-Caballero/publication/278707087_Improvement_of_the_Elderly_Quality_of_Life_and_Care_through_Smart_Emotion_Regulation/links/562e0bc808ae518e34825f40/Improvement-of-the-Elderly-Quality-of-Life-and-Care-through-Smart-Emotion-Regulation.pdf. This paper proposes that the quality of life of the elderly improves if smart sensors that recognize their emotions are installed in their environment. DOI: 10.1007/978-3-319-13105-4_50

14) https://tue.on.worldcat.org/oclc/4798799506 "Face recognition technology: security versus privacy". A good source for arguments about face recognition. Keep in mind that we plan to develop software that recognizes emotions, not software that identifies faces. The article also describes the state of face recognition technology as of 2004.

Discussed in this article: 1) outline a realistic understanding of the current state of the art in face recognition technology, 2) develop an understanding of fundamental technical tradeoffs inherent in such technology, 3) become familiar with some basic vocabulary used in discussing the performance of recognition systems, 4) be able to analyze the appropriateness of suggested analogies to the deployment of face recognition systems, 5) be able to assess the potential for misuse or abuse of such technology, and 6) identify issues to be dealt with in responsible deployment of such technology.

15) https://www.mdpi.com/2073-8994/10/9/387/htm "Smart Doll: Emotion Recognition Using Embedded Deep Learning" This article describes a doll which uses local emotion recognition software. Exactly the kind of software we want to develop. Cohn Kanade Extended is used as a database for facial expressions.

The article addresses the potential of deep learning in the form of CNN inference on an embedded device. The authors used the Eyes of Things (EoT) platform in a real use case: an emotional doll.

16) Neural network for emotion recognition, used because it can adapt to the user and context of the situation. (User and context adaptive neural networks for emotion recognition) https://www.sciencedirect.com/science/article/pii/S092523120800218X

17) With a technique called "transfer learning", a neural network is first trained on a set of images that need not be related to the network's final goal. Afterwards, the network is trained further on a small task-specific data set, so that it implements the needed functionality. https://www.researchgate.net/profile/Vassilios_Vonikakis/publication/298281143_Deep_Learning_for_Emotion_Recognition_on_Small_Datasets_Using_Transfer_Learning/links/56e7b18408ae4c354b1bc8d8/Deep-Learning-for-Emotion-Recognition-on-Small-Datasets-Using-Transfer-Learning.pdf
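The two-stage idea behind transfer learning in source 17 can be sketched in a few lines of plain Python. This is a toy illustration under strong assumptions, not the approach of the cited paper: the "pretrained" feature extractor is a hand-set stand-in (`PRETRAINED_WEIGHTS` is hypothetical), the inputs are small numeric vectors rather than images, and only a new logistic-regression head is trained on the small data set.

```python
import math

# Stand-in for a network pretrained on a large, unrelated image set.
# ASSUMPTION: real weights would be loaded from a trained model; these are hand-set.
PRETRAINED_WEIGHTS = [[1.0, 0.0], [0.0, 1.0]]


def frozen_features(x):
    """Frozen feature extractor: its weights are never updated below."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)))
            for row in PRETRAINED_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(samples, labels, epochs=200, lr=0.5):
    """Train only a new classification head on the small target data set."""
    feats = [frozen_features(x) for x in samples]
    weights = [0.0] * len(feats[0])
    bias = 0.0
    n = len(feats)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for f, y in zip(feats, labels):
            # Gradient of the logistic loss with respect to the head parameters.
            err = sigmoid(sum(w * fi for w, fi in zip(weights, f)) + bias) - y
            for j, fj in enumerate(f):
                grad_w[j] += err * fj / n
            grad_b += err / n
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


def predict(x, weights, bias):
    """Return class 1 or 0 for input x using frozen features plus trained head."""
    f = frozen_features(x)
    score = sum(w * fi for w, fi in zip(weights, f)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0


if __name__ == "__main__":
    # Tiny target data set: label 1 could stand for "happy", 0 for "sad".
    small_x = [[2.0, 2.0], [1.5, 1.0], [-2.0, -1.0], [-1.0, -2.0]]
    small_y = [1, 1, 0, 0]
    w, b = train_head(small_x, small_y)
    print([predict(x, w, b) for x in small_x])
```

Because the feature extractor stays fixed, only a handful of parameters have to be learned, which is why a small data set can suffice for the second stage.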

18) https://ieeexplore.ieee.org/abstract/document/5543262 About the "Cohn Kanade Extended" data set

19) https://s3.amazonaws.com/academia.edu.documents/43626411/Feelings_of_loneliness_but_not_social_is20160311-5371-cjo4vg.pdf?AWSAccessKeyId=AKIAIWOWYYGZ2Y53UL3A&Expires=1556914705&Signature=AVL61%2Fnb2bpN5xHwlTpHQ9nBTcw%3D&response-content-disposition=inline%3B%20filename%3DFeelings_of_loneliness_but_not_social_is.pdf Feelings of loneliness, but not social isolation, predict dementia onset: results from the Amsterdam Study of the Elderly (AMSTEL)

This study was carried out on a large group of elderly people from Amsterdam, of whom 20% experienced feelings of loneliness. The authors link feelings of loneliness to the onset of dementia.

20) https://www.researchgate.net/publication/229058790_Assistive_social_robots_in_elderly_care_A_review Assistive social robots in elderly care: a review

A variety of effects or functions of assistive social robots have been studied, including (i) increased health by decreased level of stress, (ii) more positive mood, (iii) decreased loneliness, (iv) increased communication activity with others, and (v) rethinking the past. Most studies report positive effects (Table 1). With regards to mood, companion robots are reported to increase positive mood, typically measured using evaluation of facial expressions of elderly people as well as questionnaires.

21) https://www.intechopen.com/download/pdf/12200 "Emotion Recognition through Physiological Signals for Human-Machine Communication" --> does not use facial expressions for emotion recognition

22) https://ieeexplore.ieee.org/abstract/document/8535710 "Real-time Facial Expression Recognition on Robot for Healthcare" --> facial expressions are used as an indicator of the health status of the elderly.

23) https://link.springer.com/content/pdf/10.1007%2Fs10676-014-9338-5.pdf --> Sharkey, A. (2014). Robots and human dignity: A consideration of the effects of robot care on the dignity of older people. Ethics and Information Technology, 16(1), 63-75.

24) A. Mollahosseini; B. Hasani; M. H. Mahoor, "AffectNet: A Database for Facial Expression, Valence, and Arousal Computing in the Wild," in IEEE Transactions on Affective Computing, 2017.

25) https://link.springer.com/content/pdf/10.1007%2Fs10676-016-9413-1.pdf --> Draper, H. & Sorell, T. (2017). Ethical values and social care robots for older people: an international qualitative study. Ethics and Information Technology, 19(1), 49-68.

- ↑ Lynn Kacperek. (2014, December). Non-verbal communication: the importance of listening. Retrieved May 5, 2019, from https://www.magonlinelibrary.com/doi/abs/10.12968/bjon.1997.6.5.275

- ↑ Argyle, M. (1972). Non-verbal communication in human social interaction. In R. A. Hinde, Non-verbal communication. Oxford, England: Cambridge U. Press. Retrieved May 5, 2019, from https://psycnet.apa.org/record/1973-24485-010

- ↑ https://www.washingtonpost.com/technology/2019/05/06/alexa-has-been-eavesdropping-you-this-whole-time/?utm_term=.03b81ccac280

- ↑ D. Aneja, A. Colburn, G. Faigin, L. Shapiro, and B. Mones. Modeling stylized character expressions via deep learning. In Proceedings of the 13th Asian Conference on Computer Vision. Springer, 2016.

- ↑ A. Mollahosseini; B. Hasani; M. H. Mahoor, "AffectNet: A Database for Facial Expression, Valence, and Arousal Computing in the Wild," in IEEE Transactions on Affective Computing, 2017.

- ↑ PyPI, "OpenCV", https://pypi.org/project/opencv-python/

- ↑ F. Leblanc, "Autocropper", GitHub, https://github.com/leblancfg/autocrop