Firefly Eindhoven - Three-Drone Visualization and Simulation

* Detail the Simulink simulation environment

Introduction

The drone simulation and visualization was initially built to obtain a visual simulation of the drone trajectories. Its purpose was to show how the drones behave when changes are made to the high-level controller. It also served as a proof of concept for the show when the drones were not available.

These visualizations became particularly important at the TMC Event, where the team plotted the movement of the actual drone in the simulation environment while the other two, virtual, drones acted as slaves and followed trajectories determined by the movement of the master (real) drone. The visualization aimed to replicate the environment of the show itself.

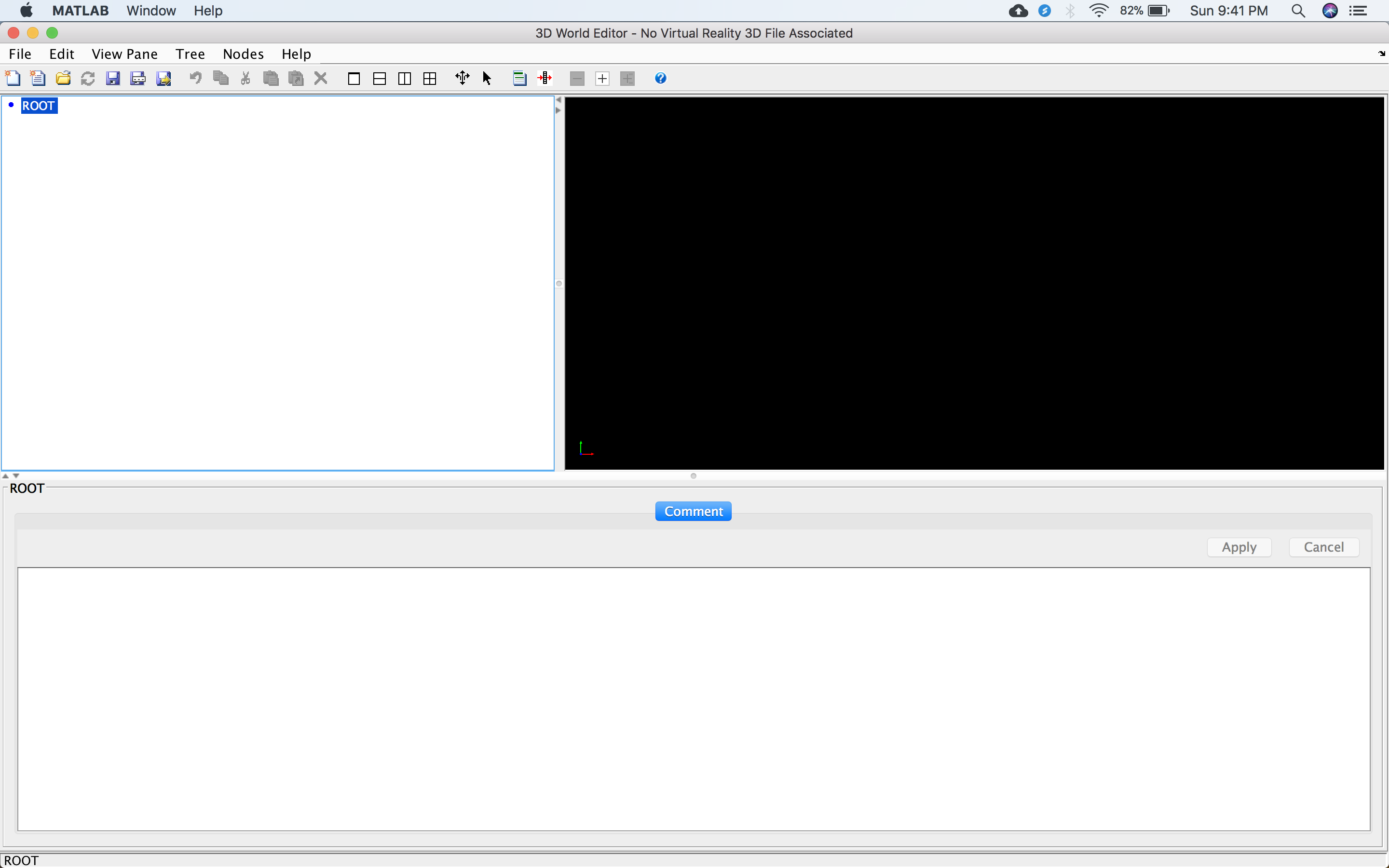

The 3D World Editor

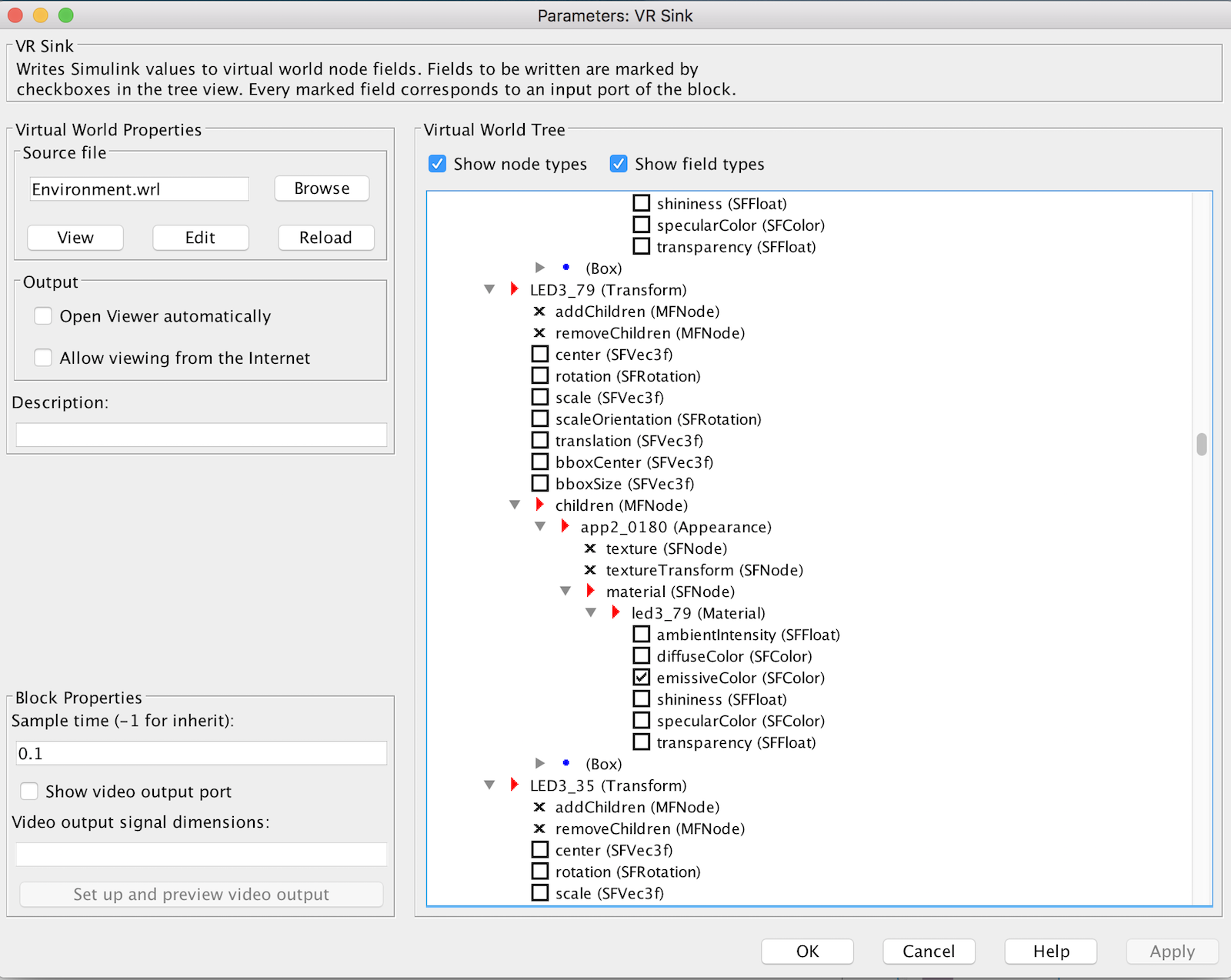

The three-dimensional visualization of the complete show was made using the 3D World Editor tool of MATLAB/Simulink. The 3D World Editor is a VRML (Virtual Reality Modeling Language) editor. The Simulink block used for 3D animations is called VR Sink. It can be found under Simulink 3D Animation in the Simulink Library Browser.

Clicking on the VR Sink block opens a dialog box. The 'New' button in this dialog opens the 3D World Editor, where the complete 3D animation can be created. Once the simulation environment has been created, it can be saved as a .wrl file, which can then be added as the source file of the VR Sink block. The world created in the editor is static; objects are moved by connecting other Simulink blocks to the VR Sink block.

Making the Simulation Environment

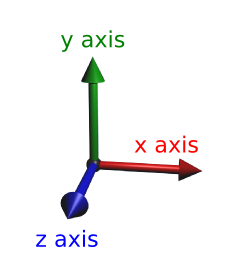

Colour codes for the axes:

1. Red Arrow: x-axis

2. Green Arrow: y-axis

3. Blue Arrow: z-axis

Under the Root node, any object can be added as a Transform. For instance, a block (box) can be added using the following hierarchy:

* Transform
  * children
    * Shape
      * appearance
        * Appearance
          * material
            * Material
          * texture
          * textureTransform
      * geometry
        * Box
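The hierarchy above corresponds directly to the text of a VRML97 (.wrl) file. As a minimal sketch, the following Python helper writes out such a Transform with a single Box child. The function name `box_transform`, the name `Ground`, and all sizes and colours are illustrative assumptions and are not taken from the team's world file.

```python
def box_transform(name, size, translation=(0.0, 0.0, 0.0),
                  diffuse_color=(0.8, 0.8, 0.8)):
    """Return VRML97 text for a named Transform holding a single Box.

    Hypothetical helper for illustration; the 3D World Editor normally
    writes this text itself when the world is saved.
    """
    def vec(values):
        # VRML separates vector components with spaces.
        return " ".join(f"{v:g}" for v in values)

    return (
        f"DEF {name} Transform {{\n"
        f"  translation {vec(translation)}\n"
        f"  children [\n"
        f"    Shape {{\n"
        f"      appearance Appearance {{\n"
        f"        material Material {{ diffuseColor {vec(diffuse_color)} }}\n"
        f"      }}\n"
        f"      geometry Box {{ size {vec(size)} }}\n"
        f"    }}\n"
        f"  ]\n"
        f"}}\n"
    )


if __name__ == "__main__":
    # A thin, wide slab such as a floor; the values are placeholders.
    world = "#VRML V2.0 utf8\n\n" + box_transform("Ground", (10, 0.01, 10))
    print(world)
```

Saving the printed text as a .wrl file should give a world that the 3D World Editor can open, which can be a quick way to inspect how the tree in the editor maps to the file contents.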

The group prepared two simulation environments: one representing the Robot Soccer Field, and one resembling the TMC location for the drone show at the TMC Event.

Robot Soccer Field Visualization

TMC Event Visualization

TMC Location Visualization

The visualization used for the TMC Event represents the environment of the location. The simulation consists of the following transforms:

1. UWB1

2. UWB2

3. UWB3

4. Ground

5. BackWall

6. RightNet

7. LeftNet

8. Quad1

9. Quad2

10. Quad3

11. Shadow1

12. Shadow2

13. Shadow3

14. CornerA (Viewpoint)

15. CornerB (Viewpoint)

16. CornerC (Viewpoint)

17. South (Viewpoint)

18. SouthLarge (Viewpoint)

19. West (Viewpoint)

20. WestLarge (Viewpoint)

21. East (Viewpoint)

22. EastLarge (Viewpoint)

23. North (Viewpoint)

24. NorthLarge (Viewpoint)

25. Top (Viewpoint)

The Ground and BackWall transforms are simply two blocks with a very small thickness but a very large width and height. Compared to the Ground, the BackWall is just rotated about the y-axis by 90 degrees.

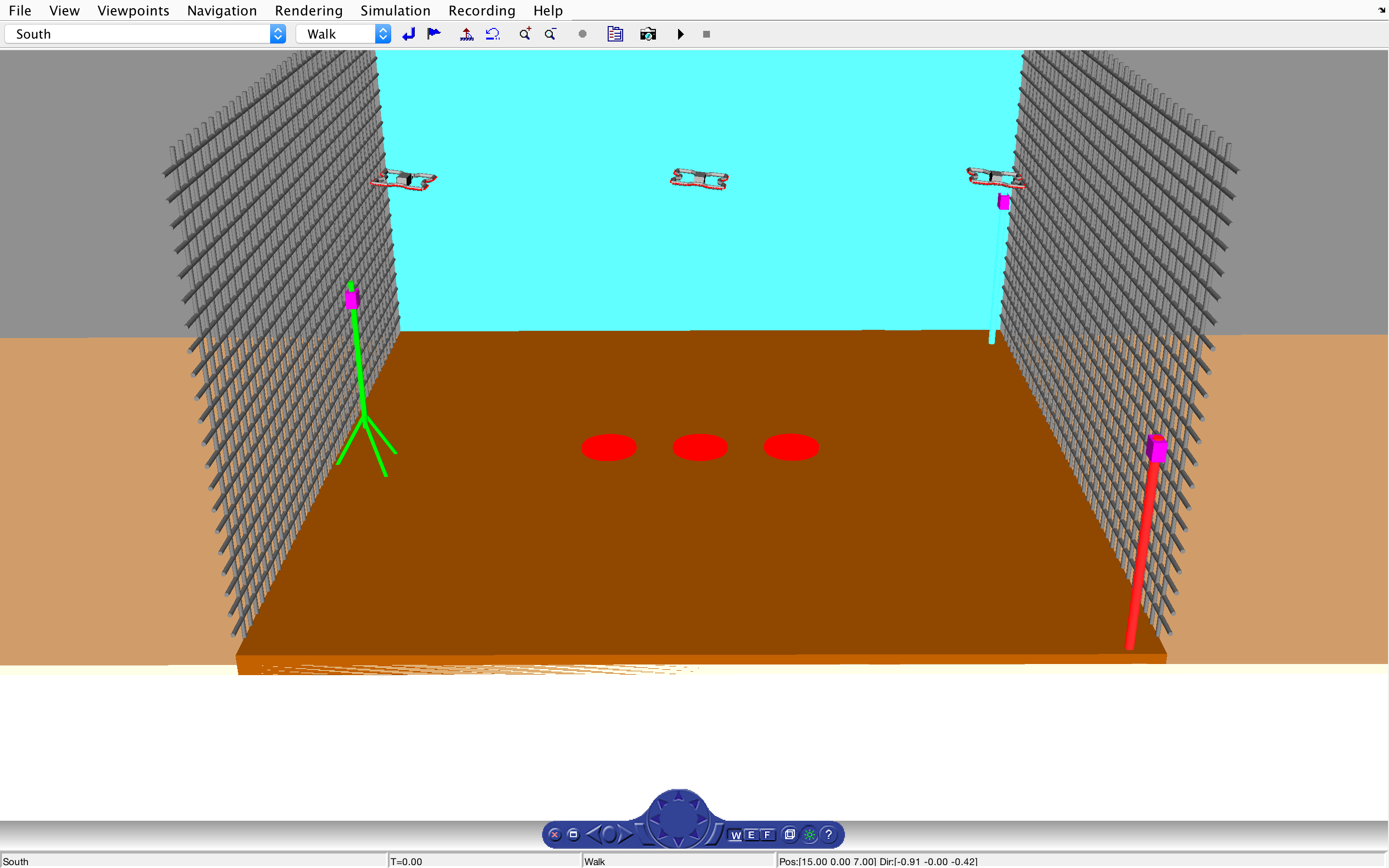

Setting up viewpoints manually by altering the values of the Pos and Dir vectors one by one is tedious and difficult. Therefore, once the objects are in place, the VR Sink block can be opened as a viewer in which the view can be adjusted with the mouse. Once the desired view is found, it can be saved as a viewpoint by clicking 'Create Viewpoint' in the Viewpoint menu in the top pane of the window.

VR Sink Simulation Window

The drones used in the simulation were built from cylinders and blocks. Each drone has four arms and a hull. Each arm consists of five cylinders, and the hull is just a box. In addition, each drone carries 88 LEDs, 22 per arm. These LEDs are also blocks, added manually.

The shadow of each drone is a thin cylinder that has the same translation input as the drone, but with the value of 'z' fixed to 0.4.

The nets are also cylinders with a very small radius and a large height, placed close to each other. The Ultra-Wide Band (UWB) units and beacons are also made from cylinders and blocks.

To receive signals from blocks external to the VR Sink block, open the VR Sink block and, in the Simulation menu in the top pane, click 'Block Parameters'.

This opens the parameters of the VR Sink block. In the virtual world tree, the checkbox next to each transform parameter determines whether the VR Sink block has an input port for that parameter. Checking a checkbox creates an input port on the VR Sink block, through which the value of that parameter can be varied during the simulation.

For instance, the LED patterns in the simulation are obtained by feeding in the RGB values of the LEDs from the LED pattern files; these RGB values change over simulation time. The translation and orientation of the drones and the shadows are driven in the same way.

To make the simulation as realistic as possible, the size ratio of the drones to the show area and the movements of the drones are set to match reality.

Parameters Block

The 3D World Editor also makes it possible to include images in the simulation/animation. The simulation prepared for the TMC Event contains the logos of the sponsors. These were added in the following way:

* BackWall
  * children
    * BackWallSmall
      * children
        * Shape
          * appearance
            * Appearance
              * textureTransform
              * texture

Then, on texture, right-click and insert an image texture that points to an image file on the hard disk of the computer; this can be a .png or .jpg file.

When working in the editor, it is important to understand the difference between the diffuse and the emissive color of a material. The diffuse color determines how the object reflects light from the light sources in the scene, so its apparent brightness depends on the angle between the surface and the light. The emissive color is the light the object appears to give off itself, and it is visible regardless of the lighting. When adding images to an object, take care that these colors do not interfere with the picture itself.

Overview of the Visualization Block

The VR Sink block for the simulation models is placed in a subsystem called Visualization. This subsystem takes four inputs: one signal that comprises all the states of the drones and the time vector, and three further signals, each containing the LED input values for one drone.

Inside the Visualization subsystem, the state signal is first demuxed into three signals, one per drone, and each of these is further demuxed into six signals: 'x', 'y', 'z', 'phi', 'theta' and 'psi'. For Drone1, these signals are commented out, because its values are obtained from the sensor system instead. For Drone2 and Drone3, the angles are converted to the world frame and then to the virtual reality frame before they are fed to the VR Sink block. Before being sent to the VR Sink block, the translation signals are multiplexed into a single 'translation' signal, and the angles are likewise multiplexed into a single 'rotation' signal.
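The demux step can be illustrated outside Simulink with a short Python sketch. It assumes that the state signal (without the time vector) is a flat vector ordered drone by drone, which the text does not specify. The axis mapping in `to_vr_translation` is also an assumption: it is the common conversion from a z-up frame to the y-up VRML frame, and the team's actual conversion blocks may differ.

```python
STATE_NAMES = ("x", "y", "z", "phi", "theta", "psi")


def demux_states(states, n_drones=3):
    """Split a flat state vector into one dict of six named values per drone.

    Assumes drone-major ordering: [x1, y1, z1, phi1, theta1, psi1, x2, ...].
    """
    width = len(STATE_NAMES)
    if len(states) != n_drones * width:
        raise ValueError(f"expected {n_drones * width} values, got {len(states)}")
    return [
        dict(zip(STATE_NAMES, states[i * width:(i + 1) * width]))
        for i in range(n_drones)
    ]


def to_vr_translation(x, y, z):
    """Map a z-up position to the y-up VRML convention (assumed mapping)."""
    return (x, z, -y)


if __name__ == "__main__":
    drones = demux_states([float(v) for v in range(18)])
    for number, state in enumerate(drones, start=1):
        translation = to_vr_translation(state["x"], state["y"], state["z"])
        print(f"Drone{number} translation in VR frame: {translation}")
```

The rotation signal is left out of the sketch on purpose: a VRML rotation field is an axis and an angle, so the three Euler angles would need an additional conversion that depends on the angle convention used by the drone model.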

Since the 'x' and 'y' coordinates of each drone and its shadow are identical, the translation signal is connected not only to the VR Sink block but also to the 'DronePosShadowPos' block, which discards the drone's 'z' value, because the 'z' coordinate of the shadow is fixed to the ground. The output of this block is also sent to the VR Sink block. This is repeated for all three drones.
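The behaviour described for the 'DronePosShadowPos' block amounts to keeping 'x' and 'y' and replacing 'z' with a constant. A minimal Python sketch follows, with the hypothetical function name `shadow_position` and a default height of 0.4, the shadow height mentioned earlier on this page:

```python
def shadow_position(drone_position, shadow_z=0.4):
    """Return the shadow translation for a drone translation (x, y, z).

    The shadow keeps the drone's x and y and uses a fixed z. The default
    of 0.4 is the value quoted in the text; the function itself is only
    an illustration of the Simulink block, not its implementation.
    """
    x, y, _ = drone_position
    return (x, y, shadow_z)


if __name__ == "__main__":
    print(shadow_position((1.5, -2.0, 3.2)))
```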

In addition, the LED signals are fed into the VR Sink block. Each LED input is first demuxed into 88 signals, one per LED, each carrying the colour values of that LED for the whole duration of the show.
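As a sketch of the LED demux for a single time step, the Python snippet below splits one flat vector into 88 RGB triples. The flat layout `[r0, g0, b0, r1, ...]` is an assumption for illustration; the actual layout of the LED pattern files is not described here.

```python
LEDS_PER_ARM = 22
ARMS = 4
N_LEDS = LEDS_PER_ARM * ARMS  # 88 LEDs per drone, as stated in the text


def demux_leds(led_input):
    """Split a flat [r0, g0, b0, r1, ...] vector into 88 (r, g, b) tuples.

    The flat RGB layout is assumed, not taken from the pattern files.
    """
    if len(led_input) != 3 * N_LEDS:
        raise ValueError(f"expected {3 * N_LEDS} values, got {len(led_input)}")
    return [tuple(led_input[3 * i:3 * i + 3]) for i in range(N_LEDS)]


if __name__ == "__main__":
    # All LEDs red for this time step (placeholder data).
    frame = [1.0, 0.0, 0.0] * N_LEDS
    leds = demux_leds(frame)
    print(len(leds), leds[0])
```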

The values for Drone1 are received via UDP in another subsystem and then passed to the Visualization subsystem via a 'Goto' tag.

Finally, a block is added that makes the simulation run in real time.

High Level Control and Supervisory

The Supervisory subsystem acts as the ground station for the simulation. The drones in the simulation are independent of each other: from the point of view of each drone, it is the only thing present in the simulation. The supervisor acts as the central control system that collects the positions of all drones and sends them as commands to each drone separately. It contains a state machine that divides the whole trajectory into subsections. It uses trajectory tracking to move the drones to the initial positions of each subsection, and path following to navigate them through the trajectory. This subdivision allows finer control as well as periodic re-synchronization of the drones.
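The alternation between trajectory tracking and path following can be pictured as a small state machine. The Python sketch below is an assumption-based illustration, not the team's Simulink/Stateflow implementation: the class `Supervisor`, the mode names, and the two boolean flags are hypothetical. It switches mode only when all drones have reached the relevant point, which is one possible way to obtain the re-synchronization mentioned above.

```python
from enum import Enum, auto


class Mode(Enum):
    TRACK_TO_START = auto()  # trajectory tracking towards the subsection start
    FOLLOW_PATH = auto()     # path following along the subsection
    DONE = auto()            # all subsections completed


class Supervisor:
    """Hypothetical supervisor stepping through trajectory subsections."""

    def __init__(self, n_subsections):
        self.n_subsections = n_subsections
        self.index = 0
        self.mode = Mode.TRACK_TO_START if n_subsections > 0 else Mode.DONE

    def step(self, all_at_start, all_at_end):
        """Advance one tick given two flags aggregated over all drones."""
        if self.mode is Mode.TRACK_TO_START and all_at_start:
            # Every drone is at its initial position: start the subsection.
            self.mode = Mode.FOLLOW_PATH
        elif self.mode is Mode.FOLLOW_PATH and all_at_end:
            # Every drone finished the subsection: move on to the next one.
            self.index += 1
            self.mode = (Mode.TRACK_TO_START
                         if self.index < self.n_subsections else Mode.DONE)
        return self.mode, self.index


if __name__ == "__main__":
    supervisor = Supervisor(n_subsections=2)
    for flags in [(False, False), (True, False), (False, True),
                  (True, False), (False, True)]:
        print(supervisor.step(*flags))
```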