PRE2019 4 Group6

Group Members

| Name | Student ID | Study | Email |
|---|---|---|---|
| Coen Aarts | 0963485 | Computer Science | c.p.a.aarts@student.tue.nl |
| Max van IJsseldijk | 1325930 | Mechanical Engineering | m.j.c.b.v.ijsseldijk@student.tue.nl |
| Rick Mannien | 1014475 | Electrical Engineering | r.mannien@student.tue.nl |
| Venislav Varbanov | 1284401 | Computer Science | v.varbanov@student.tue.nl |

TODO

Group chooses a subject (in the core of robotics, USE aspects are leading, can involve study, analysis, design, prototype etc.)

%% Facial/Emotional Recognition with Chatbot

Problem statement

Over the past few decades, the elderly population has grown rapidly, owing to advances in healthcare and education. This growth means that many more caretakers are needed to help these people through the day, not only physically but also mentally. Depression and loneliness are a real risk for many elderly people who do not have enough interaction with caretakers or family members. One study showed a significant relationship between depression and loneliness among the elderly: without enough social interaction, they are at high risk of developing severe depression, which in turn deepens the feeling of loneliness.[1] It is therefore vital that there are enough caretakers to prevent this.

Unfortunately, the shortage of caretakers is growing. To combat this, care robots are being developed quite extensively. These robots cannot yet replace the full physical help a real person can give, but they can offer some mental support by giving the elderly someone to talk to. This social interaction can include having a simple conversation with the person or routing a call to family members. To hold a more complex conversation, the robot needs to understand more about the state of the person. Knowing that state matters because humans are emotional creatures: if a robot could get a clue about how someone is feeling, the interaction could improve greatly. Increasing the general intelligence of the robot would solve this issue, but this proves to be very difficult. Therefore, the robot's world view can only be expanded in small steps.

Objective

The proposal for this project is to use real-time emotion detection to estimate how the person is feeling, so that the robot can use this information to help the person further. Reading emotions from the face will not be perfect, but neural networks have already produced some good results.[2] However, applying this emotion detection specifically to the elderly, in combination with a chatbot, has not been explored very deeply.
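Because per-frame emotion readings are expected to be imperfect, one simple way to stabilise them in real time is a majority vote over the most recent frames. The following is a minimal sketch under stated assumptions, not the project's implementation: the class name `EmotionSmoother`, the window size, and the example labels are all hypothetical, and the per-frame labels are assumed to come from some emotion classifier that is not shown here.

```python
from collections import Counter, deque


class EmotionSmoother:
    """Majority vote over the most recent per-frame emotion labels."""

    def __init__(self, window_size=5):
        # window_size is an assumed tuning parameter, not a project value.
        self.window = deque(maxlen=window_size)

    def update(self, label):
        """Add one per-frame label and return the current smoothed label."""
        self.window.append(label)
        # most_common(1) yields the label with the highest count in the window.
        return Counter(self.window).most_common(1)[0][0]


# Hypothetical classifier output with a single misread frame ("sad").
frames = ["neutral", "neutral", "sad", "neutral", "neutral"]
smoother = EmotionSmoother(window_size=5)
smoothed = [smoother.update(label) for label in frames]
```

A larger window suppresses more misread frames but makes the system slower to react to a genuine change in emotion, so the window size would need to be tuned against the camera frame rate.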

Previous Work

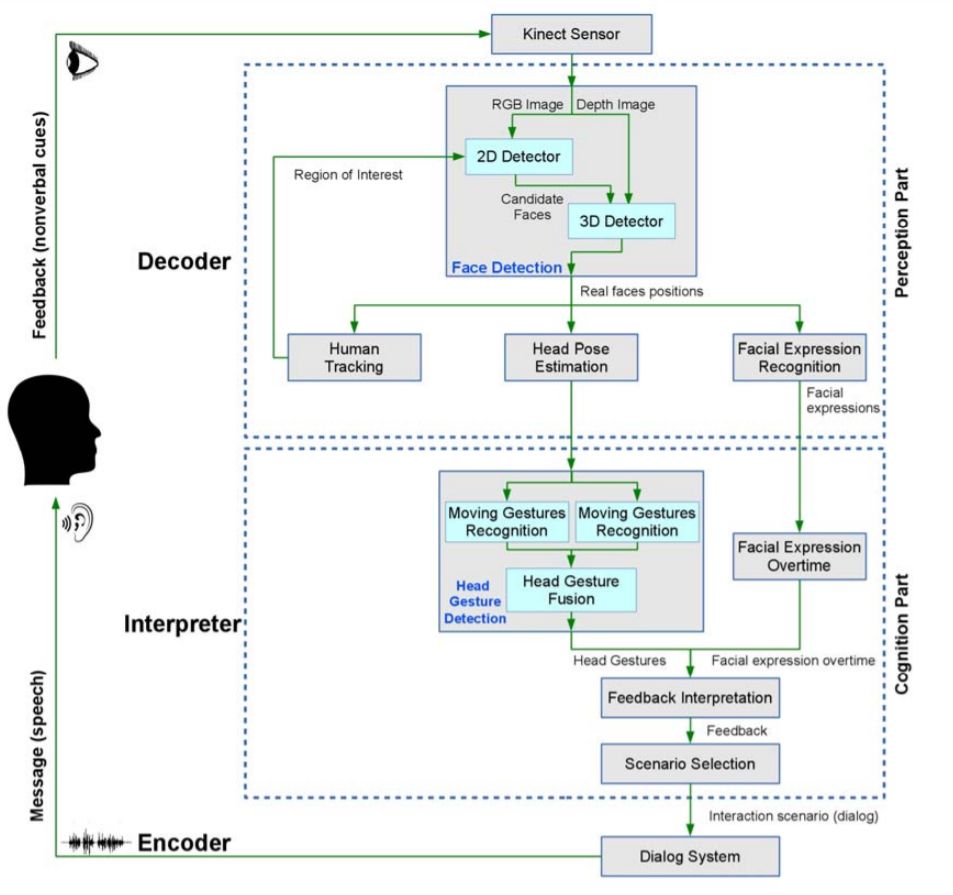

The facial recognition with emotion detection software has already been done before. One method that could be employed for facial recognition is done by the following block diagram:

Figure 1: Block diagram of facial recognition algorithm[5]

USE Aspects

Users

Homebound elderly people are the main users of the robot.

They will interact with the robot on a daily basis by talking to it and having their emotions checked, and through miscellaneous activities such as recharging and moving the robot.

Society

Society comprises three main stakeholders: the government, medical services and visitors.

First, as with all newly introduced technology, the government's priority is to regulate these new robots and introduce laws for them, ensuring that no privacy infringement or other malpractice occurs.

Second, robots may deliver feedback to hospitals or therapists in case of severe depression or other negative symptoms.

Finally, any visiting individual may be exposed to the robot indirectly. Laws and regulations must be set up to ensure that visitors' emotions are not measured against their will and that their privacy is not compromised.

Enterprise

Robots must be developed, built and dispatched to medical centers, which then pass them on to elderly people. The relevant enterprise stakeholders in this case are the developing companies, together with the hospitals and therapists, who ensure logistical and administrative validity.

%% Emotional Support

Approach(r), planning(v), milestones(v) and deliverables(v)

Who’s doing what?

- Max

- Rick

- Venislav

- Coen

SotA: literature study, at least 25 relevant scientific papers and/or patents studied, summary on the wiki!(all)

[1] Misra N., Singh A. Loneliness, depression and sociability in old age. June 2009. Referenced on 27/04/2020.

[2] Zhentao Liu, Min Wu, Weihua Cao, Luefeng Chen, Jianping Xu, Ri Zhang, Mengtian Zhou, Junwei Mao. A Facial Expression Emotion Recognition Based Human-robot Interaction System. IEEE/CAA Journal of Automatica Sinica, 2017, 4(4): 668-676 http://html.rhhz.net/ieee-jas/html/2017-4-668.htm

[3] Jonathan, Andreas Pangestu Lim, Paoline, Gede Putra Kusuma, Amalia Zahra. Facial Emotion Recognition Using Computer Vision. 2018. https://www.researchgate.net/publication/330780352_Facial_Emotion_Recognition_Using_Computer_Vision

[4] Amir Hossein Fghih Dinevari, Osmar Zaiane. A Software Agent for At-Home Elderly Care. University of Alberta. 2016. https://webdocs.cs.ualberta.ca/~zaiane/postscript/etelemed16.pdf

[5] Saleh, S.; Sahu, M.; Zafar, Z.; Berns, K. A multimodal nonverbal human-robot communication system. In Proceedings of the Sixth International Conference on Computational Bioengineering, ICCB, Belgrade, Serbia, 4–6 September 2015; pp. 1–10. http://html.rhhz.net/ieee-jas/html/2017-4-668.htm

Approach

This project has multiple problems that need to be solved in order to create a system or robot that is able to combat the emotional problems the elderly are facing. To categorize them, the problems are split into three main parts:

Technical

The main technical problem for our robot is to reliably read the emotional state of a person and to process that data. After processing, the robot should be able to choose an appropriate response from a set of possible actions.
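The step from a detected emotion to an action could be sketched as a simple lookup with a confidence threshold. This is only an illustration under assumptions: the emotion labels, the action names, the `MIN_CONFIDENCE` value and the function `choose_action` are all hypothetical, and the pairing of emotions with actions is a placeholder that the social/emotional research would have to determine.

```python
# Hypothetical mapping from a detected emotion to a robot action.
# Which action suits which emotion is an open research question.
ACTION_BY_EMOTION = {
    "sad": "start_conversation",
    "angry": "start_conversation",
    "neutral": "offer_audiobook",
    "happy": "no_action",
}

DEFAULT_ACTION = "no_action"
MIN_CONFIDENCE = 0.6  # assumed threshold, to be tuned experimentally


def choose_action(emotion, confidence):
    """Return an action name for a detected emotion.

    Falls back to DEFAULT_ACTION when the classifier is unsure or the
    emotion is not in the table, so a misreading does not trigger an
    unwanted response.
    """
    if confidence < MIN_CONFIDENCE:
        return DEFAULT_ACTION
    return ACTION_BY_EMOTION.get(emotion, DEFAULT_ACTION)


# Example: a confident "sad" reading triggers a conversation.
action = choose_action("sad", 0.9)
```

Keeping the mapping in a plain table separates the technical part (detecting the emotion) from the social part (deciding what to do), so either can be changed without touching the other.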

Social / Emotional

The robot should be able to act appropriately; therefore, research is needed into which types of actions the robot can perform to get positive results. For example, the robot could have a simple conversation with the person or start playing an audiobook to keep the person active during the day.

Physical

What type of physical presence is optimal for the robot? Is a more conventional robot with a somewhat humanoid look needed? Or does a system that interacts through speakers and screens distributed around the room get better results? Perhaps a combination of both works best.

The main focus of this project will be the technical problem stated above; however, for a more complete use case the other subjects should be researched as well.

Papers on Emotions, Creating the AI and creating the Chatbot

Planning

| Week | Task | Date | Deadline | Coen | Max | Rick | Venislav |
|---|---|---|---|---|---|---|---|
| 1 | | | | | | | |
| | Introduction meeting | 20.04 | 00:00 | | | | |
| | Group meeting: subject choice | 25.04 | 00:00 | 00:00 | 00:00 | | |
| 2 | | | | | | | |
| | Wiki: problem statement | 29.04 | 00:00 | | | | |
| | Wiki: objectives | 29.04 | 00:00 | | | | |
| | Wiki: users | 29.04 | 00:00 | | | | |
| | Wiki: user requirements | 29.04 | 00:00 | | | | |
| | Wiki: approach | 29.04 | 00:00 | | | | |
| | Wiki: planning | 29.04 | 00:00 | | | | |
| | Wiki: milestones | 29.04 | 00:00 | | | | |
| | Wiki: deliverables | 29.04 | 00:00 | | | | |
| | Wiki: SotA | 29.04 | 00:00 | 00:00 | 00:00 | 00:00 | |
| | Group meeting | 30.04 | 00:00 | | | | |
| | Tutor meeting | 30.04 | 00:00 | | | | |

Milestones

| Week | Milestone |
|---|---|
| 1 (20.04 - 26.04) | Subject chosen |
| 2 (27.04 - 03.05) | Project initialised |
| 3 (04.05 - 10.05) | Facial/Emotional recognition research finalised |
| 4 (11.05 - 17.05) | Facial/Emotional recognition software developed |
| 5 (18.05 - 24.05) | Chatbot research finalised |
| 6 (25.05 - 31.05) | Chatbot implemented |
| 7 (01.06 - 07.06) | Facial/Emotional recognition software integrated in Chatbot |
| 8 (08.06 - 14.06) | Wiki page completed |
| 9 (15.06 - 21.06) | Chatbot demo video and final presentation completed |
| 10 (22.06 - 28.06) | N/A |
| 11 (29.06 - 05.07) | N/A |

Deliverables

- Wiki page

- Chatbot with Facial/Emotional recognition software

- Chatbot demo video

- Final presentation

Log

meetings

Weekly meetings on Mondays (starting at 10:00) and Thursdays (starting at 13:30)

| Name | Time Spent (hrs) | Description |
|---|---|---|
| Coen | x | Introduction Lecture + Meeting 1 |
| Rick | x | Introduction Lecture + Meeting 1 |
| Venislav | x | Introduction Lecture + Meeting 1 |
| Max | 1 hour meeting + 4 hours research writing introduction | Introduction Lecture + Meeting 1 |