Mobile Robot Control 2024 Rosey

This wiki page describes the work undertaken by Team Rosey during the Mobile Robot Control course. The objective was to develop functional code for a robot to navigate a map, handling both known and unknown, static and dynamic obstacles, to reach specific waypoints efficiently and in a controlled manner.

The work is organized by weeks, showcasing the team's progress in solving the given assignments and advancing toward the Final Challenge. Design decisions are justified by evaluating potential advantages and disadvantages, providing a clear explanation of our approach.

Group members:

| Name | Student ID |
|---|---|
| Tessa Janssen | 1503782 |
| Jose Puig Talavera | 2011174 |
| Ruben Dragt | 1511386 |
| Thijs Beurskens | 1310909 |
| Pablo Ruiz Beltri | 2005611 |
| Riccardo Dalferro Nucci | 2030039 |

Week 1 - Don't crash code

Exercise 1 - The art of not crashing.

Overall approach

Every member of the group worked on this exercise separately, after which we combined and discussed the different approaches. Although each implementation is slightly different, they all share the same starting approach. The robot continuously loops through the laser data, and when the range returned by one of the laser points is within a predefined distance, the robot is commanded to act on this. Additionally, most approaches only loop through the front/middle part of the laser data, so that only obstacles that are actually in the robot's path are taken into account. The different methods are explained below.

Implementation 1: Stopping

Riccardo Dalferro Nucci & Ruben Dragt

In this implementation the instruction to the robot is the simplest. The robot is instructed to keep moving forward using the io.sendBaseReference function, and when the laser returns a distance within 0.3 meters, the robot is commanded to stop by setting the forward velocity in io.sendBaseReference back to 0. With this method the robot will not move for as long as the obstacle stays within 0.3 meters. If the robot is in front of a wall it will stay there forever, but with a moving obstacle, such as a human walking by, the robot will eventually move forward again until the next obstacle. Video of the simulation can be found here.
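The stopping rule can be sketched as a pure decision function, separate from the robot interface. This is a minimal sketch, not the team's code: the names `obstacleWithin` and `forwardVelocity`, the cruise speed of 0.2 m/s and the `range_min` filter for invalid readings are assumptions made for illustration. In the real program the returned velocity would be passed to io.sendBaseReference.

```cpp
#include <vector>

// Returns true when any valid laser reading lies within stop_distance.
// range_min discards readings that are too short to be real (assumed value).
bool obstacleWithin(const std::vector<float>& ranges, float stop_distance,
                    float range_min = 0.01f)
{
    for (float r : ranges) {
        if (r > range_min && r < stop_distance) {
            return true;
        }
    }
    return false;
}

// Forward velocity command: a hypothetical cruise speed, or 0 when blocked.
double forwardVelocity(const std::vector<float>& ranges,
                       double cruise_speed = 0.2, float stop_distance = 0.3f)
{
    return obstacleWithin(ranges, stop_distance) ? 0.0 : cruise_speed;
}
```

Keeping the decision in a function without side effects makes it easy to test without a robot or simulator.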

Implementation 2: Turning away

Using this method, the robot is not instructed to just stop but to also turn away from the obstacle. The added benefit of this approach is that the robot will be able to start moving forward again, as the laser will eventually stop returning obstacles in the set distance.

- Method 1 Tessa Janssen - In this code, the robot loops over part of the data by running the for loop from scan.ranges.size()/2 - range to scan.ranges.size()/2 + range (setting the variable range to 150 gives a view of approximately 1.2 rad). Then, when an obstacle is detected within a set distance, e.g. 0.5 m, the forward velocity is set to 0 and the angular velocity is given a positive value. This results in the robot turning left when it has reached an obstacle. When there are no longer any obstacles, the angular velocity is set back to 0 and the forward velocity is given a positive value. The downside of always turning left is that the robot can easily get stuck in corners and does not take efficiency into account. Video of the simulation can be found here.

- Method 2 Pablo Ruiz Beltri - In this approach, a safety distance and a cone size (in number of laser points) are set. The cone is centered in front of the robot. While the robot is properly connected, the laser data in this cone is checked against the safety distance. If every reading is greater than the safety distance, the robot moves forward; if not, the robot rotates. To keep a forward path, the direction of rotation is determined by assessing whether the safety distance violation was in the left or the right half of the cone. Testing showed that this approach is vulnerable to corners, where safety distance violations come equally from left and right, leaving the robot in a blocked position.

- Method 3 Thijs Beurskens - Here, the cone of vision of the robot is determined by setting the minimum and maximum angle, which is then translated to the number of points the for loop should cover. The turning direction of the robot is determined by checking on which side of the robot the obstacles are closer, and thus turning towards the side with more space. The code also keeps track of the direction in which it is turning, which prevents the robot from getting stuck in a corner. This approach again has the benefit of being able to keep moving around a map/obstacle course, while also being more clever about where it can go. It makes the robot less likely to get stuck in certain areas and more robust to changing environments.

- Method 4 Jose Puig Talavera - My implementation for the dont_crash() code initially checks whether the robot is receiving laser and odometry data. If so, a forward motion of 0.5 meters per second is commanded. Then the laser data is read through a cone of vision. If one of the laser range values is within or at 0.3 meters, the vehicle is stopped. The distance to the right is checked (i = 200), then the distance to the left (i = 800). If left > right, the robot turns at +0.3 radians per second (counter-clockwise). Inversely, if right > left, it turns at -0.3 radians per second (clockwise). A video of the simulation can be found here. I personally like the part where it gets stuck at a point where the distances to the left and right are really similar and there is an obstacle within 0.3 meters.

- Method 5 Riccardo Dalferro Nucci - After successfully implementing a dont_crash() that would only stop once the robot encountered an obstacle, a new updated version was created. Like the first version, this code initially checks whether the LaserData is received correctly; if so, a velocity of 0.5 meters per second in the x direction is given. While the robot moves, a for loop checks whether there is any obstacle in the cone of vision of the robot, which spans plus and minus one radian from the x axis. When this loop detects an object, it stops the forward motion. If the closest object is on the right the robot turns left, and vice versa. This code still showed some problems. For example, when the right and left obstacles are at the same distance, the robot starts to oscillate between the two directions and is unlikely to get out of that. It also showed problems when approaching a wall from a very skewed angle: sometimes it turned too slowly and slightly bumped into the obstacle. A video of the simulation can be found here.
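The turning methods above share one pattern: look at a cone in front of the robot, compare the free space on the left and right, and optionally remember the chosen direction so the robot does not oscillate in a corner. The sketch below illustrates that pattern under stated assumptions. The `Command` struct, the function names, and the speeds 0.2 m/s and 0.3 rad/s are hypothetical, and the laser index is assumed to increase counter-clockwise (lower indices on the right), consistent with Method 4.

```cpp
#include <algorithm>
#include <cstddef>
#include <limits>
#include <vector>

struct Command { double vx; double omega; };

// Smallest range in the index interval [first, last).
float minRange(const std::vector<float>& ranges, std::size_t first, std::size_t last)
{
    float m = std::numeric_limits<float>::infinity();
    for (std::size_t i = first; i < last && i < ranges.size(); ++i) {
        m = std::min(m, ranges[i]);
    }
    return m;
}

// last_turn: +1 while turning left, -1 while turning right, 0 while driving.
// It is updated in place so the turning direction is kept until the cone is clear.
Command avoid(const std::vector<float>& ranges, std::size_t half_cone,
              float safe_dist, int& last_turn)
{
    const std::size_t mid = ranges.size() / 2;
    const std::size_t lo = mid > half_cone ? mid - half_cone : 0;
    const std::size_t hi = std::min(ranges.size(), mid + half_cone);
    const float right = minRange(ranges, lo, mid);  // lower indices: right side
    const float left = minRange(ranges, mid, hi);   // higher indices: left side

    if (std::min(left, right) > safe_dist) {
        last_turn = 0;
        return {0.2, 0.0};  // cone is clear: drive forward
    }
    if (last_turn == 0) {
        last_turn = (left >= right) ? 1 : -1;  // choose the freer side once
    }
    return {0.0, 0.3 * last_turn};  // stop and rotate in the remembered direction
}
```

Remembering `last_turn` is what separates this from the purely reactive variants: once a direction is chosen it is kept until the cone is free, so equal distances on both sides cannot cause back-and-forth switching.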

Exercise 2 - Testing your don't crash

During the first testing session it became clear that the parameters that were used in simulation needed to be adjusted to the robot in real life. In particular, the velocity needed to be tuned down when using Coco/Bobo, as they do not have the speed limiter built in. When the robot moved too fast towards an obstacle, it did not have enough time to stop after detecting the obstacle within the safety range, and it hit the obstacle. Tuning the velocities and obstacle distances was a doable fix, and the code from method 3 (Thijs) ended up working very nicely in an environment with multiple different obstacles. The full video showing this approach can be seen here and a brief clip is shown in figure 1.

Vector field histogram:

Thijs Beurskens, Pablo Ruiz Beltri & Riccardo Dalferro Nucci

Concept

The main idea behind the Vector Field Histogram (VFH) method is to treat objects as vectors in a 2D cartesian histogram grid and create a polar histogram to determine possible "open spaces" to get to the goal. A polar histogram is created, centered at the robot's current position, using the LaserData and a number of sectors that can be given as an input. Every laser reading from min_angle to max_angle (the data behind the robot is considered not visible, even though it is defined in the polar histogram) is then treated as a vector, whose direction and magnitude are computed with the following formulas.

[math]\displaystyle{ \beta = \operatorname{atan2}(y - y_{current}, x - x_{current}) }[/math],

[math]\displaystyle{ m = a - b \cdot \mathrm{distance} }[/math],

where a is the product of b and the maximum distance, which needs to be given as input along with b. Moreover, distance is the magnitude of the vector from the robot's current position to the detected point. Using a sendPath command, a visual representation of the sectors was given. Every value of the histogram is normalized. Once the histogram is completely defined, the program finds every valley, that is every sector with a value below a threshold (also given as input), and chooses the best one, which is the one closest to the target direction. A control input is then given to the robot to make it go in that direction. Note that a PID controller has been added to control the rotational speed, in order to best align the robot with the goal direction.
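The histogram construction and valley selection can be sketched as follows. This is a simplified illustration, not the team's implementation: the function names are hypothetical, readings are taken directly in the robot frame (so the direction of a reading is just its laser angle), each free sector is treated as its own valley, and sectors behind the robot are simply left empty rather than marked as not visible.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

constexpr double kPi = 3.14159265358979323846;

// Wrap an angle to [-pi, pi).
double wrapAngle(double a)
{
    a = std::fmod(a + kPi, 2.0 * kPi);
    if (a < 0.0) a += 2.0 * kPi;
    return a - kPi;
}

// Polar histogram over [-pi, pi) built from laser readings in the robot frame.
// Each reading closer than d_max adds the magnitude m = a - b * d, with a = b * d_max.
std::vector<double> buildHistogram(const std::vector<double>& ranges, double angle_min,
                                   double angle_increment, std::size_t num_sectors,
                                   double b, double d_max)
{
    std::vector<double> hist(num_sectors, 0.0);
    if (num_sectors == 0) return hist;
    const double a = b * d_max;  // magnitude becomes zero at d_max
    const double sector_width = 2.0 * kPi / num_sectors;
    for (std::size_t i = 0; i < ranges.size(); ++i) {
        const double d = ranges[i];
        if (d <= 0.0 || d >= d_max) continue;  // invalid or too far away
        const double beta = wrapAngle(angle_min + i * angle_increment);
        std::size_t k = static_cast<std::size_t>((beta + kPi) / sector_width);
        k = std::min(k, num_sectors - 1);
        hist[k] += a - b * d;
    }
    const double peak = *std::max_element(hist.begin(), hist.end());
    if (peak > 0.0) {
        for (double& h : hist) h /= peak;  // normalize to [0, 1]
    }
    return hist;
}

// Writes the centre angle of the free sector closest to target_angle into out.
// Returns false when no sector is below the threshold.
bool pickDirection(const std::vector<double>& hist, double threshold,
                   double target_angle, double& out)
{
    const double sector_width = 2.0 * kPi / hist.size();
    double best = 1e9;
    bool found = false;
    for (std::size_t k = 0; k < hist.size(); ++k) {
        if (hist[k] >= threshold) continue;  // sector is blocked
        const double centre = -kPi + (k + 0.5) * sector_width;
        const double diff = std::fabs(wrapAngle(centre - target_angle));
        if (diff < best) { best = diff; out = centre; found = true; }
    }
    return found;
}
```

The selected angle would then serve as the reference for the rotational controller mentioned above.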

Simulation and Implementation

When simulating the code, the visual representation of the polar histogram was very useful, as it made it possible to debug errors in the code. The code was tested on the provided maps, with increasing difficulty. The simulations showed that with an increasing number of obstacles the robot was prone to cutting corners, up to the point where in map 4, due to a very narrow point of the map, the robot slightly bumped into a wall. This was caused by the fact that the robot has no knowledge of its dimensions. The problem was then solved by increasing the threshold. Screen recordings of the simulation can be found in map1 and map4.

Once the program was working well in the simulation, it was tested in the lab. At first, the Hero robot was moving very erratically and was cutting corners very aggressively. Upon closer inspection, it became clear that the code assumed the laser range finder to be at the center of the robot, which is not the case for Hero. The problem was resolved by translating all the laser points to compensate for the offset. As can be seen from Fig. 2, the code also showed good results on the real robot.
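The offset compensation amounts to re-expressing each laser reading relative to the robot center instead of the sensor. A minimal sketch, assuming the sensor sits a distance `offset_x` ahead of the center along the robot's x axis and is not rotated; the names and the offset parameter are hypothetical and no real Hero dimensions are implied.

```cpp
#include <cmath>

struct Polar { double range; double angle; };

// Convert a reading from the sensor frame to the robot-centre frame.
// offset_x: position of the sensor ahead of the robot centre along x, in meters.
Polar toRobotCentre(Polar p, double offset_x)
{
    const double x = p.range * std::cos(p.angle) + offset_x;
    const double y = p.range * std::sin(p.angle);
    return {std::hypot(x, y), std::atan2(y, x)};
}
```

Points straight ahead only get a longer range, while points to the side also shift towards smaller angles, which is why ignoring the offset distorts the histogram most for nearby obstacles.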

Reflection

Advantages of the approach

The first advantage of this approach is its computational efficiency. Moreover, the implementation on the real robot showed good robustness to noisy sensor data. Furthermore, the mathematical tools used, such as the polar histogram and the vectors, were quite familiar, which made it possible to implement the code without too many difficulties. Since the object density is computed from real-time LaserData, the program is quite robust with respect to moving objects or changing environments. Finally, since the code looks for the free valley closest to the goal direction, the VFH method does not usually suffer from local minima.

Disadvantages of the approach

The first disadvantage is that this method might struggle with narrow passages and sharp corners. Moreover, since this code uses real time LaserData the robot needs to have a relatively low speed, to be able to compute the new direction before bumping into an obstacle.

What could result in failure?/How would you prevent these scenarios from happening?

As said above, a narrow passage or a sharp corner could result in failure. This could be fixed by properly tuning the threshold. The problem was clear when testing on the real set-up, where the robot would hit some corners or struggle when trying to pass through a narrow passage. This could also be caused by the fact that the robot has no knowledge of its dimensions at all, and it could therefore be solved by adding this knowledge to the code.

After the test in the lab, when these problems arose, some modifications were made to the original code in order to try to solve them. First of all, the actual movement direction is now weighted based on the histogram values in the valley, which resulted in a smoother control input. Moreover, the eligibility of a valley, which was initially based on its angle, was made dependent on the actual width of the valley. By combining the distance to the object and the angle, it is possible to compute the actual width of the free valley, which partially solved the narrow passages problem. A video of this new version implemented in the lab can be found here.
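One way to turn an angular opening and a distance into a physical width is the chord length, 2 d sin(Δθ / 2). The sketch below uses that relation. It is an assumption about how such a check could look, not the formula used in the team's code, and the robot width and margin parameters are hypothetical.

```cpp
#include <cmath>

// Chord length spanned by an opening of angular width `opening` (rad)
// whose edges lie at distance d (m) from the robot.
double valleyWidth(double d, double opening)
{
    return 2.0 * d * std::sin(0.5 * opening);
}

// A valley is eligible when the robot plus a margin on both sides fits through it.
bool valleyIsPassable(double d, double opening, double robot_width, double margin)
{
    return valleyWidth(d, opening) >= robot_width + 2.0 * margin;
}
```

With this check, the same angular opening is accepted when the gap is far away and therefore physically wide, and rejected when the gap is close and narrow.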

Artificial potential fields:

Tessa Janssen, Jose Puig Talavera & Ruben Dragt

Concept

The main idea behind an artificial potential field is calculating both an attractive potential towards a target and a repulsive potential away from obstacles, which are summed together to form the potential field. The resulting force vector is calculated by taking the negative of the gradient of the potential field.

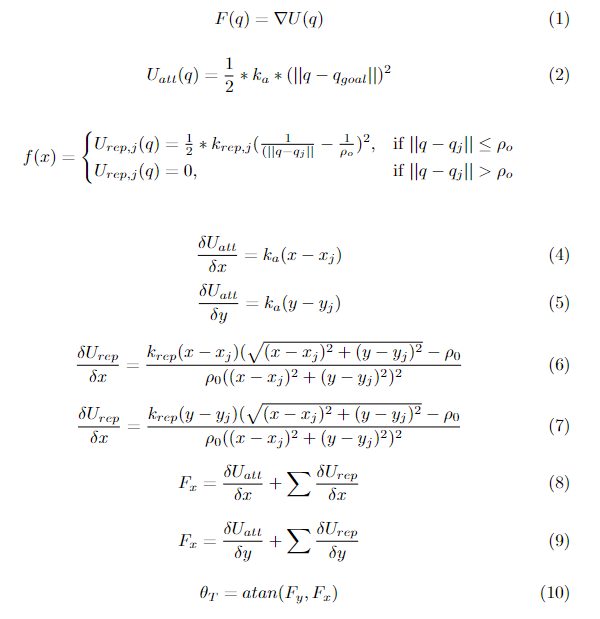

The attractive potential is determined by equation 2 and depends on the difference between the current position of the robot and the target position. The target position was set as a point 6 meters further in the y direction than the starting position. The current pose was calculated using odometry data from the robot (with starting coordinate (1.5, 0, 0)). Because the odometry is not perfect, this introduced a small error in the final position of the robot, which was not corrected for in this exercise. The repulsive potential is given by equation 3 and uses laser data to determine the distance from the robot to an obstacle. For this specific implementation, each laser point in the for-loop returning a distance that fell within the predefined space buffer ρo was registered as a separate obstacle, with the corresponding repulsive potential being added to the total potential field. The constants katt and krep were used to adjust the relative importance of attraction and repulsion.

The forces are broken down into [math]\displaystyle{ F_x }[/math] and [math]\displaystyle{ F_y }[/math] components after calculating the analytical partial derivatives of the attractive and repulsive potential energies. The partial derivatives of the attractive potential energy are shown in equations 4 and 5, and those of the repulsive potential energy in equations 6 and 7. The components of the resulting force vector at the current location of the robot are given in equations 8 and 9. This vector was used as input to the robot by calculating the corresponding angle: the commanded angle is the arctangent of the x and y components of the force (shown in equation 10). This was used as the reference for the angular velocity of the robot, while the y-velocity (world frame) was kept mostly constant. Mostly constant here means that in the original implementation the velocity of the robot was kept low to give the robot enough time to respond to all obstacles, but after testing, code was added that made the robot pick up speed when there were no obstacles in sight.
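The force computation can be illustrated with the common textbook form of the potentials: a quadratic attractive potential and a repulsive potential proportional to (1/ρ - 1/ρo)² inside the buffer. This form is an assumption, since the exact expressions are in the equations of the figure and may differ in detail. The struct and function names are hypothetical, and obstacles are given as points in the same frame as the robot position.

```cpp
#include <cmath>
#include <vector>

struct Vec2 { double x; double y; };

// F_att = -k_att * (q - q_goal): negative gradient of 0.5 * k_att * |q - q_goal|^2.
Vec2 attractiveForce(Vec2 q, Vec2 goal, double k_att)
{
    return {-k_att * (q.x - goal.x), -k_att * (q.y - goal.y)};
}

// Sum of repulsive forces from every obstacle point closer than rho0.
// Each term is the negative gradient of 0.5 * k_rep * (1/rho - 1/rho0)^2.
Vec2 repulsiveForce(Vec2 q, const std::vector<Vec2>& obstacles, double k_rep, double rho0)
{
    Vec2 f{0.0, 0.0};
    for (const Vec2& o : obstacles) {
        const double dx = q.x - o.x;
        const double dy = q.y - o.y;
        const double rho = std::hypot(dx, dy);
        if (rho <= 1e-6 || rho > rho0) continue;  // outside the buffer, or degenerate
        const double gain = k_rep * (1.0 / rho - 1.0 / rho0) / (rho * rho);
        f.x += gain * dx / rho;  // points away from the obstacle
        f.y += gain * dy / rho;
    }
    return f;
}

// Commanded heading: angle of the total force vector.
double headingFromForce(Vec2 f)
{
    return std::atan2(f.y, f.x);
}
```

The repulsive gain grows quickly as ρ approaches zero, which is one reason small changes in krep or ρo have a large effect on how close the robot gets to walls.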

Simulation and Implementation

Testing the code in simulation revealed that tuning katt, krep, ρo, and the forward and angular velocity was very important. Map 1 compared to map 4, for example, showed that when there are not a lot of obstacles, the robot can get away with a higher attraction to the target, making the path more efficient. With more obstacles, however, the robot would get too close to the walls, so krep had to be retuned and ρo increased. In the first few iterations of the code, the robot's movements were very jittery. This was due to the calculation of the angle of the force vector. When moving up in difficulty through the different maps, the forward velocity and angular velocity had to be reduced in order to get a smooth trajectory. Screen recordings of the simulation were made for both map 1 and map 4.

One of the first test sessions after the code was working well in simulation already showed a smooth trajectory, as can be seen in the full video here or in the snippet in figure 3. The robot moves around the objects to the target approximately 6 meters away, but at a pace that is quite slow and seems inefficient from a user's point of view. Code was then added to make the robot increase its speed when it no longer saw any objects within the buffer ρo. This helps slightly with efficiency, but only once the robot was already outside of the maze we set up. Another video including this speed increase can be found here.

Reflection

Advantages of the approach

One advantage of potential fields is that the method makes sense intuitively: walk away from obstacles and walk towards your goal. The mathematical components that are required to calculate the potentials, gradients and corresponding vectors are also familiar, making it a very doable method to implement from scratch. Another advantage is that the repulsion from obstacles is calculated on real-time laser data. This makes the robot quite robust to changing environments, as new obstacles (walls around the corner or people standing in the way) are incorporated into the navigation immediately.

Disadvantages of the approach

A disadvantage that is clear from both the simulation and the test sessions is that, in order for the robot to recognize all obstacles in time, its velocity needs to be quite low. This speed-accuracy trade-off is especially evident in more complex environments with many obstacles. And although it is an advantage that the robot constantly updates the obstacles around it, the computational speed is not always sufficient to recognize moving obstacles such as people. In addition, in case of local minima in the potential field, the robot will get stuck without having reached its target. Another disadvantage, best seen in the simulation, is that the robot moves from left to right a lot when passing through a narrow corridor, as the resulting force vector keeps shifting from left to right.

What could result in failure?/How would you prevent these scenarios from happening?

One situation that could lead to failure is a local minimum, as explained above. If the robot is faced with a wall directly between itself and the target, and the repulsive force is equal and exactly opposite to the attractive force, the net result will be zero and the robot will not move. This might be prevented by including some code that makes sure that if the input to the robot is zero but it has not yet reached its target, it will go in a certain direction. This correction should of course take nearby obstacles into account. Another potential failure is not recognizing a living obstacle passing by and crashing into it. Completely eliminating this is hard, but it can be mitigated by having a low speed and increasing the range in which obstacles are registered. Both of these give the algorithm more time to process the obstacle.

A* algorithm implementation:

Pablo Ruiz Beltri, Riccardo Dalferro Nucci & Ruben Dragt

Concept and Implementation

The A* algorithm is a widely used path finding and graph traversal algorithm. It combines the benefits of Dijkstra's Algorithm and Greedy Best-First Search to find the shortest path between nodes in a weighted graph. The computational effort is somewhat higher than for a greedy search, but an advantage of the A* algorithm is that it will always find the shortest route if one exists (provided the heuristic never overestimates the remaining cost). To do this, it uses a value for the cost to reach a node and a value for the estimated distance from that node to the target. The code that calculates the shortest path between the nodes to the end goal follows the structure below.

Initialization

The code starts with an open set called open_nodes containing the initial node _start_nodeID, and another set closed_nodes where the visited nodes will be stored, to make sure that they are only visited once. The next step is to initialize a heuristic map estimating the cost from each node to the goal, the "h-cost", using the function calculate_distance(). Then a cost map is initialized to keep track of the cost from the start node to each node, the "g-cost". These costs are initialized as infinity and are recalculated from each visited node for its surrounding nodes. This way, nodes with no edge connecting them to the current_node keep a cost that is infinitely large. The f-cost, which is the sum of the g-cost and the h-cost, is calculated for the initial node and the nodes connected to the initial node by edges.

Processing

While the open set is not empty, the node with the lowest f-cost is selected from the open nodes set. If this node is the goal, the path is found. Otherwise, the current node is moved from the open set to the closed set. While moving to different nodes, the parent node of each node is also stored. The final list path_node_IDs will contain the nodes that are part of the shortest path from the starting node to the goal.

Expansion and repeat

For each neighbor of the current node, calculate the tentative g-cost. If this tentative g-cost is lower than the previously recorded g-cost for that neighbor, update it and change the parent node to the current node. This also results in a new f-cost for the neighbor, namely f = new g + h. Finally, if the neighbor is not in the open set, add it. This process is continued until the goal is reached or the open set is empty, indicating that no path exists. To store the optimal path from the initial node to the goal in path_node_IDs, we start at the goal node and repeatedly move to the parent node. Because of this, every node has a chain of parent nodes of a certain length. If the current node is the goal node, the robot just has to follow the parent nodes of the goal node, in reverse order, to reach the goal.
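The three phases above (initialization, processing, expansion) can be condensed into a short sketch. This is an illustration under assumptions, not the course framework's code: the `Node` struct, `euclidean()` and `aStar()` are hypothetical names, edge costs and the heuristic are both Euclidean distances, and the open set is a priority queue in which outdated entries are skipped instead of being updated in place.

```cpp
#include <algorithm>
#include <cmath>
#include <functional>
#include <limits>
#include <queue>
#include <utility>
#include <vector>

struct Node { double x; double y; std::vector<int> neighbours; };

double euclidean(const Node& a, const Node& b)
{
    return std::hypot(a.x - b.x, a.y - b.y);
}

// Returns the node IDs from start to goal, or an empty vector when no path exists.
std::vector<int> aStar(const std::vector<Node>& nodes, int start, int goal)
{
    const double inf = std::numeric_limits<double>::infinity();
    std::vector<double> g(nodes.size(), inf);       // cost from start, initially infinite
    std::vector<int> parent(nodes.size(), -1);
    std::vector<bool> closed(nodes.size(), false);
    using Entry = std::pair<double, int>;           // (f-cost, node ID)
    std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> open;

    g[start] = 0.0;
    open.push({euclidean(nodes[start], nodes[goal]), start});
    while (!open.empty()) {
        const int current = open.top().second;      // lowest f-cost in the open set
        open.pop();
        if (closed[current]) continue;              // outdated duplicate entry
        if (current == goal) break;
        closed[current] = true;
        for (int nb : nodes[current].neighbours) {
            if (closed[nb]) continue;
            const double tentative = g[current] + euclidean(nodes[current], nodes[nb]);
            if (tentative < g[nb]) {                // better route to this neighbour
                g[nb] = tentative;
                parent[nb] = current;
                open.push({tentative + euclidean(nodes[nb], nodes[goal]), nb});
            }
        }
    }

    std::vector<int> path;
    if (g[goal] == inf) return path;                // goal was never reached
    for (int n = goal; n != -1; n = parent[n]) path.push_back(n);
    std::reverse(path.begin(), path.end());         // parents were followed goal -> start
    return path;
}
```

Because the straight-line distance never overestimates the remaining path length, the first time the goal is taken from the open set its g-cost is the shortest path cost.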

Probabilistic Road Map implementation:

Tessa Janssen, Jose Puig Talavera & Thijs Beurskens

Overall approach

For this exercise a framework of code to create a Probabilistic Road Map (PRM) was already given. We worked through the given exercises one by one to obtain the final result as is shown in figure 4. More detailed explanations of each sub-exercise are given below.

Inflate the map

The first exercise was to inflate the occupied spaces in a map to adjust for the size of the robot: inflatedMap(). This was done by looping over the pixels of the image by column and row and checking if the pixel has a value below the threshold that was previously defined in the assignment (thresh_occ = 130). If this is the case, the pixel is deemed occupied and a matrix of size radius x radius is looped through, centered around that pixel. Then every pixel in that square is given value 0. The variable radius can be set depending on the size of the robot that is used.
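A minimal sketch of this inflation step on a plain 2D grid is given below. It is an illustration under assumptions: the `Grid` alias stands in for the image type used by the framework, the function signature is hypothetical, and the square inflated around each occupied pixel extends `radius` pixels in every direction (side length 2 * radius + 1), which may differ from the exact square size used in our code.

```cpp
#include <vector>

using Grid = std::vector<std::vector<unsigned char>>;  // grid[row][col], low value = occupied

// Returns a copy of the map in which every occupied pixel is grown into a square.
Grid inflateMap(const Grid& map, int radius, unsigned char thresh_occ = 130)
{
    Grid inflated = map;  // occupancy is read from the original, so inflation does not cascade
    const int rows = static_cast<int>(map.size());
    for (int r = 0; r < rows; ++r) {
        const int cols = static_cast<int>(map[r].size());
        for (int c = 0; c < cols; ++c) {
            if (map[r][c] >= thresh_occ) continue;  // free pixel
            for (int dr = -radius; dr <= radius; ++dr) {
                for (int dc = -radius; dc <= radius; ++dc) {
                    const int rr = r + dr;
                    const int cc = c + dc;
                    if (rr < 0 || rr >= rows) continue;
                    if (cc < 0 || cc >= static_cast<int>(map[rr].size())) continue;
                    inflated[rr][cc] = 0;  // mark as occupied
                }
            }
        }
    }
    return inflated;
}
```

Reading occupancy from the original map while writing to a copy matters: writing into the same image that is being scanned would make freshly inflated pixels inflate their own neighbours in turn.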

Check vertices

The second exercise was writing a function that checked if the vertices generated into the map were at least a certain distance away from each other: checkIfVertexIsValid(). Our function loops through the existing vertices in the Graph, and for every vertex calculates the distance between this one and new_vertex that is given as an argument. The distance between the new vertex and the existing ones is calculated by applying the Pythagorean theorem on the distances between the first and second coordinates of the vertices. If the distance is smaller than some allowed distance (here we chose 0.25 meters), the function returns false, meaning the vertex is not added to the Graph.
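The distance check can be sketched as below. The `Vertex` alias and the function name `vertexIsValid` are hypothetical stand-ins for the framework's types and for checkIfVertexIsValid(). Only the logic, a Euclidean distance compared against a minimum spacing of 0.25 m, follows the description above.

```cpp
#include <cmath>
#include <utility>
#include <vector>

using Vertex = std::pair<double, double>;  // (first, second) coordinates in meters

// Returns false when new_vertex is closer than min_distance to any existing vertex.
bool vertexIsValid(const std::vector<Vertex>& graph, const Vertex& new_vertex,
                   double min_distance = 0.25)
{
    for (const Vertex& v : graph) {
        const double d = std::hypot(v.first - new_vertex.first,
                                    v.second - new_vertex.second);
        if (d < min_distance) {
            return false;
        }
    }
    return true;
}
```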

Check edges

The final exercise is about checking if an edge between two vertices is valid: checkIfEdgeIsValid().

First, this means checking that the two vertices are not too far away from each other (checkIfVerticesAreTooClose()). This function was filled with pretty much the exact same code used in checkIfVertexIsValid(), only now the function checks if the distance between two vertices is bigger than some allowed distance (here we chose 1 meter). If this is the case, the function returns false and no edge is created between these two vertices.

A second requirement is that the edge between two vertices cannot cross an obstacle: checkIfEdgeCrossedObstacle(). This function converts the coordinates (first and second) of two vertices into their pixel equivalents and arranges them to make sure that pixel 1 is always to the left of pixel 2. The function loops over all pixels that lie in the rectangle that can be drawn with the two pixels as opposite corners. For each pixel in this rectangle it is checked whether it is occupied, using the same threshold as before. If it is indeed occupied, the distance from this pixel to the line connecting the corner pixels is calculated. If this distance is within a 2 pixel range, the pixel is said to be in the 'edge' territory and the function returns true: the edge crosses an obstacle. This method was chosen because it only considers obstacles a problem when they are actually on the edge between two vertices. This makes efficient use of the map, as it does not remove edges only because they are close to obstacles.
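The obstacle check on an edge can be sketched with the perpendicular distance from a pixel to the line through the two end pixels. The `Grid` alias, the `Pixel` struct and the function signature are hypothetical, and both pixels are assumed to lie inside the map. Only the bounding-rectangle loop and the 2 pixel tolerance follow the description above.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

using Grid = std::vector<std::vector<unsigned char>>;  // grid[row][col], low value = occupied
struct Pixel { int col; int row; };

// Returns true when an occupied pixel inside the bounding rectangle of p1 and p2
// lies within `tolerance` pixels of the line through p1 and p2.
bool edgeCrossesObstacle(const Grid& map, Pixel p1, Pixel p2,
                         double tolerance = 2.0, unsigned char thresh_occ = 130)
{
    const int c_lo = std::min(p1.col, p2.col);
    const int c_hi = std::max(p1.col, p2.col);
    const int r_lo = std::min(p1.row, p2.row);
    const int r_hi = std::max(p1.row, p2.row);
    const double dx = p2.col - p1.col;
    const double dy = p2.row - p1.row;
    const double length = std::hypot(dx, dy);

    for (int r = r_lo; r <= r_hi; ++r) {
        for (int c = c_lo; c <= c_hi; ++c) {
            if (map[r][c] >= thresh_occ) continue;  // free pixel
            // |cross product| / |p2 - p1| is the distance from (c, r) to the line.
            const double dist = length > 0.0
                ? std::fabs(dy * (c - p1.col) - dx * (r - p1.row)) / length
                : 0.0;
            if (dist <= tolerance) return true;
        }
    }
    return false;
}
```

Restricting the loop to the bounding rectangle keeps the check cheap and also limits the infinite line to roughly the extent of the segment.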

Combining A* and PRM

Combining the two frameworks described above results in a planned path such as the one in figure **. The A* algorithm uses the vertices of the PRM graph as input to find the optimal path from the starting position to the target.

Combining Local and Global planning

For a first iteration of combining the local and global planning, the code from the local planners in week 2 - Local Navigation was used to replace the path planner in this assignment's framework. This meant that the global planner would provide the local navigation with its next target, as defined by the A* algorithm. In this version the Vector Field Histogram method was used. From the simulation it became clear that parameters, such as the distance at which a 'target' counts as reached, needed to be tuned. Due to the local navigation avoiding obstacles along the way, the robot would sometimes 'miss' a vertex in the planned path. To mitigate this and keep the robot from needlessly searching for this one vertex, the radius in which a target was marked as reached was increased to xx. This meant that the robot only had to pass nearby in order to continue to the next one. This first version of global+local planning was tested in the lab by building the map_compare to scale. The full video can be seen here and a brief snippet is shown in figure 5.

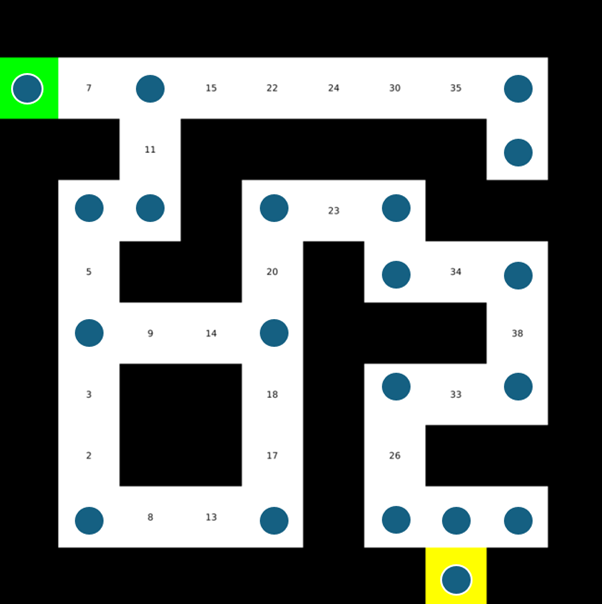

How could finding the shortest path through the maze using the A* algorithm be made more efficient by placing the nodes differently? Sketch the small maze with the proposed nodes and the connections between them. Why would this be more efficient?

Removing the nodes that are on a straight line would make the algorithm more efficient. We would still need nodes on all junctions, as these are potential locations where the robot could turn. The sketch in figure 6 shows an example of how the nodes can be placed more efficiently. This method only needs 20 nodes, compared to the 42 you would need with one node for each block of the grid.

Would implementing PRM in a map like the maze be efficient?

No, it would not be efficient, because there is no guarantee that all the nodes would connect, and if a corner is missed the algorithm would not find the turn.

What would change if the map suddenly changes (e.g. the map gets updated)?

If the map gets updated and the global navigation (A* and PRM) is not configured again on the new map, a node that was chosen by the A* algorithm may no longer be reachable. For example, a person or object could cover the node. In this case the local navigation will make sure that the robot does not crash, but the node will not be reached and the robot will therefore be stuck in a never-ending loop. One way to overcome this is to build in a timer, so that after a certain amount of time the robot moves on to the next node. Another is to use the laser data to update the map and configure the global navigation again on the new map with the obstacle in it.

How did you connect the local and global planner?

Connecting the local and global planner requires three main steps. First of all, a library for the local planner was made. Then this library was connected to the global planner via CMakeLists.txt. Finally an instance of the local planner and the pose tracker was made and the points created by the global planner were passed to it.

Test the combination of your local and global planner for a longer period of time on the real robot. What do you see that happens in terms of the calculated position of the robot? What is a way to solve this?

Running the local and global planner together for a longer period of time showed problems in the calculated position of the robot: it drifted more and more over time. The solution to this problem is to add localization using LRF data.

Run the A* algorithm using the gridmap (with the provided nodelist) and using the PRM. What do you observe? Comment on the advantage of using PRM in an open space.

The advantage of PRM in open space is that it can create diagonals which are more efficient than simply orthogonal directions.

Week 5 + Week 6 - Localization

Particle filters are a type of probabilistic algorithm used for estimating the state of a system that evolves over time. The main idea behind this concept is to approximate a probability density function representing a continuous random variable by a set of "particles", where each particle represents a possible value of the state. Particles are moved based on the robot's odometry, and the weight of each particle is adjusted based on the LRF data. Finally, particles are resampled based on their weights to focus on the more likely poses. Some advantages of this method are that the particles can be distributed over the map in any form and that the prediction and measurement models have minimal restrictions (i.e. the noise can be non-Gaussian and the model can be nonlinear). The main disadvantages are that the computational load is proportional to the number of particles and that the number of required particles scales poorly with the state dimension.

0. Exploring the code framework

How is the code structured?

The workable code is split into two folders called FilterComponents and Filter. The Particle and Resampler classes live in the FilterComponents folder, whereas the ParticleFilter and ParticleFilterBase classes live in the Filter folder. The Particle class holds all the meaningful information and methods regarding each particle. This class is called by methods in the other classes. Depending on the arguments passed to the constructor, a particle with a uniform distribution or a Gaussian distribution can be constructed. The other classes are in charge of predicting, updating and resampling the particles. The code is nested, with Particle being the lowest level and ParticleFilter being the highest level.

What is the difference between the ParticleFilter and ParticleFilterBase classes, and how are they related to each other?

The difference between the ParticleFilter and ParticleFilterBase classes is that ParticleFilterBase implements the estimation and propagation of the particles whereas the ParticleFilter class determines when and how particles should update and propagate. ParticleFilter is a public subclass of ParticleFilterBase, meaning all the methods and members from ParticleFilterBase are also available in ParticleFilter.

How are the ParticleFilter and Particle class related to each other?

The ParticleFilterBase class (and thus also the ParticleFilter class) contains a ParticleList, which is a vector of Particles. The Particle class contains methods for updating individual particles, and ParticleFilterBase contains methods for evaluating the complete ParticleList.

Both the ParticleFilterBase and Particle classes implement a propagation method. What is the difference between the methods?

The ParticleFilterBase and Particle propagation methods do two different things. The propagateSample method of the Particle class ensures a detailed pose update of one particle, incorporating the motion model and noise. On the other hand, the propagateSamples method of the ParticleFilterBase class calls the propagateSample method for every particle by looping through each one.
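The relation between the two methods can be sketched as follows. This is a simplified stand-in for the framework classes, not their actual code: the `Pose` struct, the method signatures, and the motion model (an odometry increment expressed in the robot frame plus additive Gaussian noise) are assumptions made for illustration.

```cpp
#include <array>
#include <cmath>
#include <random>
#include <vector>

struct Pose { double x; double y; double theta; };

class Particle {
public:
    explicit Particle(Pose pose) : pose_(pose) {}

    // Moves this single particle by the odometry increment (robot frame) plus noise.
    void propagateSample(const Pose& odom, const std::array<double, 3>& sigma,
                         std::mt19937& gen)
    {
        std::normal_distribution<double> unit(0.0, 1.0);  // scaled by sigma below
        const double dx = odom.x + sigma[0] * unit(gen);
        const double dy = odom.y + sigma[1] * unit(gen);
        const double dth = odom.theta + sigma[2] * unit(gen);
        // Rotate the increment from the particle's own frame into the map frame.
        const double c = std::cos(pose_.theta);
        const double s = std::sin(pose_.theta);
        pose_.x += c * dx - s * dy;
        pose_.y += s * dx + c * dy;
        pose_.theta += dth;
    }

    const Pose& pose() const { return pose_; }

private:
    Pose pose_;
};

// Filter-level method: applies the particle-level update to every particle.
void propagateSamples(std::vector<Particle>& particles, const Pose& odom,
                      const std::array<double, 3>& sigma, std::mt19937& gen)
{
    for (Particle& p : particles) {
        p.propagateSample(odom, sigma, gen);
    }
}
```

Each particle draws its own noise, so particles that start at the same pose spread out after propagation, which is how the cloud represents growing odometry uncertainty.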

1. Week 5

1.1 Initializing the particle filter

As explained above, the particle filter estimates the pose of the robot through a set of weighted particles, each of them representing a hypothesis of the current robot pose. The set of all the particles approximates the probability distribution over all possible robot poses. In this part of the assignment the methods which construct the set of particles are implemented. The particles can be drawn from either a uniform or a Gaussian distribution.

Inside the Particle class, specifically in its constructor, a random pose is generated. A pose consists of x and y coordinates and an orientation theta. Depending on the arguments given to the constructor, the pose is generated from either a uniform distribution (Particle::Particle(&world, &weight, *generatorPtr)) or a Gaussian one (Particle::Particle(&world, mean[3], sigma[3], &weight, *generatorPtr)).

The uniform distribution is easier to implement and does not require any a priori knowledge of the robot's initial position, but it is less precise and takes more iterations to converge to the actual robot pose. The Gaussian distribution, on the other hand, requires a good estimate of the initial position, but most particles then start close to the real pose, so the filter finds it faster.
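The two initialization strategies can be illustrated with the standard C++ random number facilities. The sketch below is an assumption-laden stand-in for the Particle constructors: the World struct with rectangular map bounds, the function names initUniform and initGaussian, and the choice to return bare poses are all hypothetical.

```cpp
#include <cstddef>
#include <random>
#include <vector>

struct Pose { double x, y, theta; };

// Hypothetical rectangular map bounds; the real World type may differ.
struct World { double xMin, xMax, yMin, yMax; };

constexpr double kPi = 3.14159265358979323846;

// Uniform initialization: no prior knowledge, so poses are spread over the
// whole map and over all headings.
std::vector<Pose> initUniform(const World& w, std::size_t n, std::mt19937& gen) {
    std::uniform_real_distribution<double> dx(w.xMin, w.xMax);
    std::uniform_real_distribution<double> dy(w.yMin, w.yMax);
    std::uniform_real_distribution<double> dtheta(-kPi, kPi);
    std::vector<Pose> poses;
    poses.reserve(n);
    for (std::size_t i = 0; i < n; ++i) poses.push_back({dx(gen), dy(gen), dtheta(gen)});
    return poses;
}

// Gaussian initialization: poses are concentrated around an initial guess
// (mean) with a per-component standard deviation (sigma, must be positive).
std::vector<Pose> initGaussian(const double mean[3], const double sigma[3],
                               std::size_t n, std::mt19937& gen) {
    std::normal_distribution<double> dx(mean[0], sigma[0]);
    std::normal_distribution<double> dy(mean[1], sigma[1]);
    std::normal_distribution<double> dtheta(mean[2], sigma[2]);
    std::vector<Pose> poses;
    poses.reserve(n);
    for (std::size_t i = 0; i < n; ++i) poses.push_back({dx(gen), dy(gen), dtheta(gen)});
    return poses;
}
```

With the same number of particles, the Gaussian variant places far more hypotheses near the initial guess, which is why it converges faster when that guess is good and fails when it is not.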

1.2 Calculating the pose estimate

Interpret the resulting filter average. What does it resemble? Is the estimated robot pose correct? Why?

The filter average resembles the center of mass of the particle cloud: the higher a particle's weight, the more influence it has on the estimated robot pose. At this stage the estimated robot pose is not correct, because no laser data is incorporated yet and the estimate relies solely on odometry.
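The "center of mass" interpretation corresponds to a weighted mean over the particle set. The sketch below shows one way to compute it, under assumptions: the WeightedPose struct and the averagePose function are hypothetical names, and averaging the heading through its sine and cosine is a choice made here so that angles near +pi and -pi do not cancel out. It is not necessarily what the course code does.

```cpp
#include <cmath>
#include <vector>

struct Pose { double x, y, theta; };

// Hypothetical flat particle representation used only for this sketch.
struct WeightedPose { double x, y, theta, weight; };

// Weighted mean of the particle set. Positions are averaged directly; the
// heading is averaged via its unit vector and recovered with atan2.
Pose averagePose(const std::vector<WeightedPose>& particles) {
    double sumW = 0.0, x = 0.0, y = 0.0, s = 0.0, c = 0.0;
    for (const WeightedPose& p : particles) {
        sumW += p.weight;
        x += p.weight * p.x;
        y += p.weight * p.y;
        s += p.weight * std::sin(p.theta);
        c += p.weight * std::cos(p.theta);
    }
    if (sumW <= 0.0) return {0.0, 0.0, 0.0};  // no usable weights
    return {x / sumW, y / sumW, std::atan2(s, c)};
}
```

For two particles at x = 0 (weight 1) and x = 2 (weight 3), the average lands at x = 1.5, closer to the heavier particle.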

Imagine a case in which the filter average is inadequate for determining the robot position.

The filter average is inadequate for determining the robot position when the odometry becomes unreliable, for example because of wheel slip or uneven terrain. Noisy odometry data likewise degrades the estimate of the robot pose.

1.3 Propagate the particles with odometry

Why do we need to inject noise into the propagation when the received odometry information already has an unknown noise component?

Injecting noise would be unnecessary if the odometry information didn't have any noise component. The unknown noise has to be compensated for using the laser data, and to do that we need to check multiple locations. Explicitly injecting noise distributes the particles across a wider area, which increases the chance that at least one of the particles is in the correct location. Moreover, noise maintains particle diversity, which ensures that particles do not converge too quickly to a narrow area, potentially missing the true robot pose. Thanks to this, the filter remains adaptable to new sensor data.
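The effect of injected noise on particle diversity can be made concrete with a deliberately simplified, one-dimensional experiment. In the sketch below all hypotheses start at the same position and receive the same odometry increment. The function name spreadAfterPropagation and its parameters are hypothetical, and the 1D motion model is an assumption made to keep the example short.

```cpp
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Propagate n one-dimensional position hypotheses (n > 0), all starting at 0,
// over `steps` odometry increments of size `step`. Each increment is perturbed
// with zero-mean Gaussian noise of standard deviation `sigma` (no noise if
// sigma <= 0). Returns the standard deviation of the final positions.
double spreadAfterPropagation(std::size_t n, int steps, double step, double sigma,
                              std::mt19937& gen) {
    std::vector<double> x(n, 0.0);
    for (int k = 0; k < steps; ++k) {
        for (double& xi : x) {
            const double noise =
                sigma > 0.0 ? std::normal_distribution<double>(0.0, sigma)(gen) : 0.0;
            xi += step + noise;
        }
    }
    double mean = 0.0;
    for (double xi : x) mean += xi;
    mean /= static_cast<double>(n);
    double var = 0.0;
    for (double xi : x) var += (xi - mean) * (xi - mean);
    return std::sqrt(var / static_cast<double>(n));
}
```

Without noise every hypothesis ends at the same position, so the cloud covers a single point and the correction step has nothing to choose between. With noise the spread grows roughly with the square root of the number of steps, which gives the laser-based correction a set of distinct candidates to weigh.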

What happens when we stop here, and do not incorporate a correction step?

Without incorporating the correction step, the particle filter will be no better than simply following the odometry data. By simply injecting noise, without checking the likelihood of the particles, no additional information is gained, and the error is likely bigger than when using just the odometry data.

2. Week 6

2.1 Correcting particle with LiDAR

What does each component of the measurement model represent, and why is each necessary?

In the implemented measurement model, each component contributes to an accurate representation of the likelihood of a measurement given a predicted particle pose. The Gaussian component models the probability that the actual measurement is close to the predicted one (normalizing the Gaussian integral ensures that the likelihood is properly scaled over the range of possible measurements). The exponential component accounts for cases in which the actual measurement is shorter than the predicted one, while the discrete component models the probability of a maximum-range reading, which usually indicates that no obstacle was detected within the sensor's range. Finally, the uniform component accounts for random measurements that do not fit the other components, assigning the same probability to any measurement.
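The four components can be combined into a single per-ray likelihood as a weighted mixture. The sketch below follows the common textbook form of this beam model and is an illustration under assumptions, not the team's implementation: the MeasurementModelParams struct, its field names, and the function names are hypothetical.

```cpp
#include <cmath>

// Hypothetical parameter set; names and values are assumptions for this sketch.
struct MeasurementModelParams {
    double hitProb;      // mixture weight of the Gaussian component
    double shortProb;    // mixture weight of the exponential component
    double maxProb;      // mixture weight of the maximum-range component
    double randProb;     // mixture weight of the uniform component
    double hitSigma;     // standard deviation of the Gaussian [m]
    double shortLambda;  // decay rate of the exponential [1/m]
    double maxRange;     // maximum sensor range [m]
};

// Cumulative distribution function of a Gaussian with mean mu and std dev sigma.
double gaussianCdf(double x, double mu, double sigma) {
    return 0.5 * (1.0 + std::erf((x - mu) / (sigma * std::sqrt(2.0))));
}

// Likelihood of one measured range given the range predicted from a particle pose.
double measurementLikelihood(double measured, double predicted,
                             const MeasurementModelParams& p) {
    const double kPi = std::acos(-1.0);
    double pHit = 0.0, pShort = 0.0, pMax = 0.0, pRand = 0.0;

    // Gaussian around the prediction, normalized over the valid range [0, maxRange].
    if (measured >= 0.0 && measured <= p.maxRange) {
        const double z = (measured - predicted) / p.hitSigma;
        const double density = std::exp(-0.5 * z * z) / (p.hitSigma * std::sqrt(2.0 * kPi));
        const double norm = gaussianCdf(p.maxRange, predicted, p.hitSigma) -
                            gaussianCdf(0.0, predicted, p.hitSigma);
        if (norm > 0.0) pHit = density / norm;
    }

    // Exponential for readings shorter than predicted, normalized over [0, predicted].
    if (measured >= 0.0 && measured <= predicted) {
        const double norm = 1.0 - std::exp(-p.shortLambda * predicted);
        if (norm > 0.0) pShort = p.shortLambda * std::exp(-p.shortLambda * measured) / norm;
    }

    // Discrete component: all probability mass sits on the maximum-range reading.
    if (measured >= p.maxRange) pMax = 1.0;

    // Uniform component: the same value for any reading below the maximum range.
    if (measured >= 0.0 && measured < p.maxRange) pRand = 1.0 / p.maxRange;

    return p.hitProb * pHit + p.shortProb * pShort + p.maxProb * pMax + p.randProb * pRand;
}
```

With these components a reading that matches the prediction scores highest, a reading that is too short still receives some likelihood through the exponential term, and a maximum-range reading is explained by the discrete term rather than being treated as a gross mismatch.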

With each particle having N >> 1 rays, and each likelihood being ∈ [0, 1], where could you see an issue given our current implementation of the likelihood computation?

The first issue comes from the number of rays per particle: since N is much larger than 1, evaluating the likelihood of every ray increases the computational cost, which could jeopardize the real-time performance of the program. It is also important to normalize the likelihoods correctly, in order to avoid inaccurate weight updates after the resampling.
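A related numerical point is that multiplying N values in [0, 1] drives the product toward zero, and for large N a double can underflow to exactly 0, after which normalization is no longer possible. The sketch below contrasts the plain product with a sum of logarithms followed by normalization. It is one common remedy shown under assumptions, not a description of the team's code, and the function names are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Naive combination: product of the per-ray likelihoods of one particle.
double productLikelihood(const std::vector<double>& rayLikelihoods) {
    double product = 1.0;
    for (double l : rayLikelihoods) product *= l;
    return product;
}

// Log-space combination: sum of the logarithms of the per-ray likelihoods.
double logLikelihood(const std::vector<double>& rayLikelihoods) {
    double sum = 0.0;
    for (double l : rayLikelihoods) sum += std::log(l);
    return sum;
}

// Turn per-particle log-likelihoods into weights that sum to 1. Subtracting
// the maximum before exponentiating keeps the largest term at exp(0) = 1.
// Assumes at least one log-likelihood is finite.
std::vector<double> normalizeFromLog(const std::vector<double>& logL) {
    std::vector<double> weights(logL.size());
    if (logL.empty()) return weights;
    const double maxLog = *std::max_element(logL.begin(), logL.end());
    double total = 0.0;
    for (std::size_t i = 0; i < logL.size(); ++i) {
        weights[i] = std::exp(logL[i] - maxLog);
        total += weights[i];
    }
    for (double& w : weights) w /= total;
    return weights;
}
```

For example, 400 rays with a likelihood of 0.1 each give a product of 1e-400, which is below the smallest representable double and is stored as 0, whereas the corresponding log-likelihood of about -921 is unproblematic.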

2.2 Re-sampling the particles

What are the benefits and disadvantages of both the multinomial resampling and the stratified resampling?

Multinomial resampling is simple to implement, since it only involves drawing samples according to the particle weights and requires little to no preprocessing of the data. On the other hand, it is known to yield a higher variance, since in some scenarios only high-weight particles are drawn from the set. Stratified resampling requires the set to be divided into strata, which makes it slightly more complex. In return, it reduces the variance and gives a higher sample diversity, because the draws are spread evenly over the strata.
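The difference between the two schemes comes down to how the random numbers used for selection are generated. The sketch below illustrates this under assumptions: the function names are hypothetical, the functions return the indices of the selected particles rather than the particles themselves, and the weights are assumed to be non-empty and non-negative with a positive sum.

```cpp
#include <algorithm>
#include <cstddef>
#include <random>
#include <vector>

// Normalized cumulative sum of the weights (the last entry equals 1).
std::vector<double> cumulativeWeights(const std::vector<double>& weights) {
    std::vector<double> cdf(weights.size());
    double total = 0.0;
    for (std::size_t i = 0; i < weights.size(); ++i) {
        total += weights[i];
        cdf[i] = total;
    }
    for (double& c : cdf) c /= total;
    return cdf;
}

// Index of the particle whose cumulative interval contains u in [0, 1).
std::size_t pickIndex(const std::vector<double>& cdf, double u) {
    auto it = std::upper_bound(cdf.begin(), cdf.end(), u);
    if (it == cdf.end()) --it;  // guard against rounding at the top end
    return static_cast<std::size_t>(it - cdf.begin());
}

// Multinomial resampling: n independent uniform draws on [0, 1).
std::vector<std::size_t> multinomialResample(const std::vector<double>& weights,
                                             std::size_t n, std::mt19937& gen) {
    const std::vector<double> cdf = cumulativeWeights(weights);
    std::uniform_real_distribution<double> uniform(0.0, 1.0);
    std::vector<std::size_t> indices(n);
    for (std::size_t i = 0; i < n; ++i) indices[i] = pickIndex(cdf, uniform(gen));
    return indices;
}

// Stratified resampling: [0, 1) is split into n equal strata and exactly one
// uniform draw is taken inside each stratum.
std::vector<std::size_t> stratifiedResample(const std::vector<double>& weights,
                                            std::size_t n, std::mt19937& gen) {
    const std::vector<double> cdf = cumulativeWeights(weights);
    std::uniform_real_distribution<double> uniform(0.0, 1.0);
    std::vector<std::size_t> indices(n);
    for (std::size_t i = 0; i < n; ++i) {
        const double u = (static_cast<double>(i) + uniform(gen)) / static_cast<double>(n);
        indices[i] = pickIndex(cdf, u);
    }
    return indices;
}
```

Because the stratified draws cannot cluster, the number of copies of each particle stays close to n times its weight, whereas the multinomial counts fluctuate from run to run. That fluctuation is the higher variance mentioned above.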