PRE2020 3 Group12

Group Members

| Name | Student ID |
|---|---|
| Bart Bronsgeest | 1370871 |
| Mihail Tifrea | 1317415 |
| Marco Pleket | 1295713 |
| Robert Scholman | 1317989 |
| Jeroen Sies | 0947953 |

Planning

| Week 2 | Finish literature study + prepare list of topics to be included in the wiki |

Everyone:

|

| Week 3 | Define our goals for simulating human behavior |

Bart:

Mihail:

Marco:

Jeroen:

Robert:

|

| Week 4 | Prepare footage for the questionnaire |

Bart:

Jeroen, Marco & Robert:

Marco:

Mihail:

|

| Week 5 | Send out questionnaire |

Everyone:

Jeroen, Marco & Robert:

Robert:

Bart:

Mihail:

Marco:

Jeroen:

Jeroen & Robert:

|

| Week 6 | Wiki filled as much as possible + start on data analyzing/results |

Mihail:

Jeroen:

Mihail, Marco & Robert:

Bart:

Everyone:

Marco:

Robert:

|

| Week 7 | All data processed + wiki finished |

Mihail:

Everyone:

Bart:

Jeroen:

Marco:

Robert:

|

| Week 8 | Final presentation |

Bart:

Jeroen:

Everyone:

Marco:

Mihail:

Robert:

Marco, Bart & Robert:

|

Introduction

B: entire introduction: sources.

Throughout history, humans have seized every opportunity to automate tasks and make their lives easier. A famous early example dates from the classical period: the Romans built large bridge-like waterways, aqueducts, that carried water from outlying areas to Rome, driven only by gravity and the natural water cycle. Today, advances in computational science, AI, and robotics have opened many new opportunities for automation. Think of the robots used in production to repeat the same programmed task over and over, of image classification with deep learning networks (often with better results than humans), and, more recently, of self-driving cars, which combine robotics and AI.

Slowly but surely, robots are taking over repetitive, task-specific jobs and doing them more efficiently than their human counterparts. Currently, however, such jobs are very concrete. For robots to become more versatile, they need to become more like us. Robots should be adaptive, able to learn from their mistakes, and able to handle anomalies efficiently; essentially, a robot should have a level of decision-making and freedom equivalent to that of a human being. For example, a robot tasked with picking up a football from the ground should find a way to get the football back when it is accidentally thrown into a tree. This situation raises another issue as well: the robot needs a certain flexibility of movement, since it should not only be able to move around and pick up a ball, but also be able to use tools that help it fulfill its "out of the ordinary" task. All in all, one thing becomes very clear: these tasks cannot be hardcoded; they have to be learned.

It stands to reason that building a robot with all the capabilities specified above, one suitable for a social environment, requires a humanoid design. This poses many challenges, the most notable of which fall under Electrical/Mechanical Engineering and Computer/Data Science. A human has many joints and muscles for various movements, expressions, and goals. The human face in particular is incredibly complex: it consists of 43 muscles, of which about 10 are used to smile and about 30 simply to laugh. All these joints and muscles have to work together perfectly, since the state of every joint influences the entire structure of joints and muscles. One major aspect of this cooperation is our sense of balance; for a robot, this means preventing itself from falling over. At every iteration, the robot has to check the state of its balance and decide which joints and muscles need to be given a task for the next iteration. Additionally, there is the Computer Science-related challenge of mapping such an unpredictable environment to binary code interpretable by the robot. Every signal from its surroundings has to be carefully processed and sorted accordingly. The robot also has to learn, which brings us to AI and, more specifically, Reinforcement Learning. Reinforcement Learning currently only offers solutions to very basic learning problems in which the rules are very concretely specified, and it suffers when the environment is too broad in terms of data. This is why it is still a very experimental field: many robot studies include abstract definitions of states and policies for environment data that heavily imply the use of Reinforcement Learning in theory, but lack a practical implementation. B: is this relevant for our research?

As a consequence, social/humanoid robots are still a widely researched topic. The research has, for example, huge potential in care facilities and other forms of social care B: social care? for the elderly and people with a disability, or even in the battle against loneliness and depression. Many papers cover how a robot could be taught certain skills with respect to its environment (Reinforcement Learning) and how it would communicate with humans. However, an often overlooked question is whether such a robot would be accepted by humans at all. Many studies have found that a robot in the shape of a cute animal has a positive impact on its environment. Such a robot is easier to model than a humanoid one, because humans expect less from it and the mimicked behavior is often repetitive and standard. People like engaging with the robot, as some see it as a kind of pet, and it successfully draws the attention of its audience. But, as we have established, such a robot would be very limited in the tasks it could fulfill. Modeling a humanoid robot is harder than modeling the behavior of an animal: being humanoid ourselves, we expect far more detail from a humanoid robot. What should such a robot look like to be appealing instead of scary?

R: Mention we will discuss this later This phenomenon, a robot becoming scary as it approaches human-likeness, is referred to as the Uncanny Valley. Humans are able to relate to objects that act and look human in certain ways (generally humanoid ones), but something strange happens as the likeness approaches reality. If a robot or on-screen character is made to look exactly like a human, we often feel scared or disgusted, and we perceive it as 'uncanny'. It is as if we resent that it tries to pass for human when the various red flags we pick up on tell us it is not. Because modeling a perfect human is impossible with the current state of the art, designers tend to refrain from creating a very human-like robot, even if it has to be humanoid to properly fulfill its tasks. However, in a social situation, a robot has to be appealing enough for us to appreciate, value, and even accept it in our environment. On the other hand, if the robot is too 'cute', we tend not to open up to it at all, but rather use our attention to watch it, actively 'awwh' at it, and help it in any way we can; not very useful when its purpose is to assist you, although this could be used in battling loneliness.

Since most of this technology is still very experimental, it is important that we start to define the variables involved in determining this Uncanny Valley with the examples currently on offer. Such examples are widely available, not only due to attempts at developing human-like robots, but also because of the art of recreating a human face in CGI (Computer Generated Imagery). By defining variables for various attributes of a robot's appearance and their effects on humans, or even just on the elderly, adults, and students, we can help designers avoid the pitfalls that others have already fallen into. Designers can then decide on the appearance of a robot based on what exactly they need the robot to be able to do: a center of appearance studies.

Relevance of Robot Appearance

B: Maybe move this part to theoretical exploration? In a social context, robots may be subject to judgement from humans based on their appearance [Walters, M.L., Syrdal, D.S., Dautenhahn, K. et al. Avoiding the uncanny valley: robot appearance, personality and consistency of behavior in an attention-seeking home scenario for a robot companion. (2008)]. The perceived intelligence of the robot is correlated with its attractiveness, since humans form a ‘mental model’ of the robot during social interaction and adjust their expectations accordingly:

- “If the appearance and the behavior of the robot are more advanced than the true state of the robot, then people will tend to judge the robot as dishonest as the (social) signals being emitted by the robot, and unconsciously assessed by humans, will be misleading. On the other hand, if the appearance and behavior of the robot are unconsciously signaling that the robot is less attentive, socially or physically capable than it actually is, then humans may misunderstand or not take advantage of the robot to its full abilities.”

It is thus very important to predict and attribute the correct level of attractiveness depending on the intellectual capabilities of a robot. Not only that, but humans attribute different levels of trust and satisfaction when dealing with robots, depending on how much they like them [Li, D., Rau, P.L.P. & Li, Y. A Cross-cultural Study: Effect of Robot Appearance and Task. (2010)]. Furthermore, an anthropomorphic robot is said to be better when tasks requiring high sociability are involved [Lohse M et al (2007) What can I do for you? Appearance and application of robots. In: Artificial intelligence and simulation of behaviour]. This statement is not without controversy, as some research did not find any conclusive evidence for it [Li, D., Rau, P.L.P. & Li, Y. A Cross-cultural Study: Effect of Robot Appearance and Task. (2010)].

Study Objective

Designing the appearance of a robot can be quite challenging. If it looks too different from a human, it might be difficult to create a sense of warmth, interaction and humanlikeness. If it is very humanlike, it runs the risk of falling into the uncanny valley.

In this study, we will attempt to assess how robot appearance and movement can impact the perception of the robot, and how this might differ among three different levels of humanlikeness. This will be investigated using data from surveys. The concrete results of this study will be used to set up a consultancy firm. This firm will help robot developers by giving them advice about how the robot should look. This advice will be based upon multiple things, like the environment the robot will be working in, how/how much it moves and what kind of people will interact with it.

J: I think it's a bit strange, because this says more or less the same thing as the problem statement in the enterprise right? So maybe expand on one and remove the other?

Theoretical Exploration

State of the Art

B: sources

Atlas

The Atlas robot, made by Boston Dynamics to accomplish the tasks necessary for search and rescue missions, has gained popularity in the public eye, especially for its similarity in appearance to a human. As showcased in the YouTube videos released by the manufacturer [1]J: link?, the robot can perform physical tasks such as jumping, running over uneven terrain, and acrobatic moves such as a handstand and a somersault. Standing 150 cm tall and weighing 80 kg, the robot is able to maneuver across obstacles with human-like mobility. In the past couple of years, the maneuverability of the robot has increased substantially and supposedly resembles the movements of professional athletes. The robot has 28 hydraulic joints, which it uses to move fluidly and gracefully. These joints are driven by complex algorithms that determine which joints to move, and by how much, to reach a target state. “First, an optimization algorithm transforms high-level descriptions of each maneuver into dynamically-feasible reference motions. Then Atlas tracks the motions using a model predictive controller that smoothly blends from one maneuver to the next,” Boston Dynamics wrote.

The similarity to the human body is thus only achieved by its structure and movement. It is worth mentioning, however, that defining human features such as a round head, a face, and hands are missing from this robot. This is why we estimate that this robot sits right before the uncanny valley.

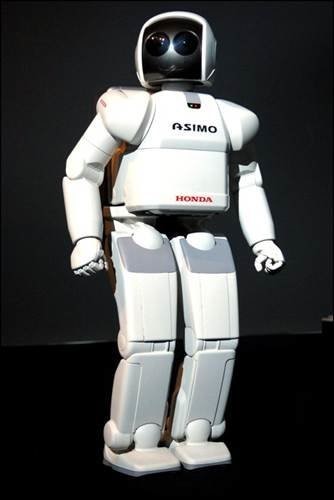

Honda ASIMO

The ASIMO (Advanced Step in Innovative Mobility) robot, produced by Honda, was developed to achieve the same physical abilities as humans, especially walking. This humanoid robot, unlike Atlas, comes equipped with hands and a head, and its structure is more like that of a human. ASIMO can also recognize the postures and gestures of humans, moving objects, sounds, faces, and its surrounding environment. Its surroundings are captured by two cameras located in the "eyes" of the robot, which allows ASIMO to interact with humans. It is capable of following or facing a person talking to it, of recognizing and shaking hands, and of waving and pointing. ASIMO can distinguish between different voices and sounds, so it is able to identify a person by their voice and face the person speaking in a conversation. ASIMO's advanced level of interaction with users is the reason why we think that this robot ranks higher than Atlas when it comes to user preference.

Geminoid DK

The Geminoid DK robot was conceived with the purpose of pushing the state of the art in robot imitation of humans. By creating a surrogate of himself, the creator was able to achieve remarkably human features that would otherwise be hard to create without a base model. With a facial structure almost indistinguishable from a human one, only movement remains as a factor in the uncanniness of this robot. Thus, we predict that analyzing this model will allow us to draw some conclusions regarding human movement in robots J: Maybe a bit vague. The robot mimics the external appearance and facial characteristics of the original: its creator, the Danish professor Henrik Schärfe. Apart from movements of its face and head, the robot is not able to move. The Geminoid DK does not possess any intelligence of its own and has to be remotely controlled by an operator. Pre-programmed sequences of movements can be executed for subtle motions such as blinking and breathing. Moreover, the operator's speech can be transmitted through the geminoid's computer network to a speaker located inside the robot. At this moment, the Geminoid DK is used to examine how the presence, appearance, behavior, and personality traits of an anthropomorphic robot affect communication with human partners.

Human Perception of Others

Human beings interact with each other on a very frequent basis. These interactions can be partially explained using psychological, social-psychological, and behavioral theories that have been developed over the years. It is of the utmost importance to understand how exactly humans interact, because many robot developers aim to create robots that are as close to an actual human as possible. Understanding how humans interact with computers and/or technology is not enough; there is an important human factor that needs investigation.

Human non-verbal perception of others is influenced by both bodily appearance and body language. Verbal communication is also very intricate and can change our perception of others through the messages that are communicated. For example, people who lie very often are harder to resonate with and can find themselves judged by others. However, the non-verbal category of perception is more meaningful to us. People are judged both by their looks and by their actions, and rightfully so. The ability to deduce others’ intentions, moods, and actions from their movement alone is one of the greatest skills that humans possess. Kinematics plays an especially important role in this: "human actions visually radiate social cues to which we are exquisitely sensitive" from [2], “when we observe another individual acting we strongly ‘resonate’ with his or her action” from [3].

Although movement greatly helps the detection of social cues, it is not strictly necessary. A static picture of a person is enough to detect the mood of the person, as well as to infer some features from their beauty. It even produces a physical response in the viewer, since angrier-looking people trigger dilation of the viewer’s pupils [Pupillary Responses to Robotic and Human Emotions: The Uncanny Valley and Media Equation Confirmed]. Furthermore, the level of intelligence of the observed individual can be inferred from their beauty [Looking Smart and Looking Good: Facial Cues to Intelligence and their Origins].

Human Robot Interaction (HRI)

B: SOURCES Robots integrate ideas from information technology with physical embodiment. They share the same physical spaces as people and manipulate some of the very same objects. Human-robot interaction therefore often involves pointers to spaces or objects that are meaningful to both robots and people. Also, many robots have to interact directly with people while performing their tasks. This raises a lot of questions regarding the 'right way' of interacting.

The United Nations (U.N.), in their most recent robotics survey (U.N. & I.F.R.R., 2002) B: can you find the actual authors of this research?, grouped robots into three major categories, primarily defined by their application domains: industrial robotics, professional service robotics, and personal service robotics. Industrial robotics is the earliest category and started in the early 1960s. Much later, the professional service robots category started growing, at a much faster pace than industrial robots. Robots in both categories manipulate and navigate their physical environments; professional service robots, however, assist people in the pursuit of their professional goals, largely outside industrial settings. Robots that clean up nuclear waste, for example, belong to that category. The last category, personal service robotics, promises the most growth. These robots assist or entertain people in domestic settings or in recreational activities.

The shift from industrial to service robotics and the increase in the number of robots that work in close proximity to people raise a number of challenges. One of them is the fact that these robots share the same physical space with people. These people could be professionals trained to operate robots, but they could also be children, the elderly, or people with disabilities, whose ability to adapt to robotic technology may be limited.

One of the key factors in solving these challenges B: what challenges? is autonomy. Industrial robots are mostly designed to do routine tasks over and over again and are therefore easily programmed. Sometimes they require environmental modifications, for example special paint on the floor that helps them navigate properly. This is no problem in the industrial sector, but for service robots such modifications are not always possible, which requires the robots to have a higher level of autonomy. Additionally, these robots tend to be targeted at low-cost markets, which makes endowing them with autonomy much more difficult. For example, the robot dog shown in the figure is equipped with a low-resolution CCD camera and an onboard computer whose processing power lags behind that of most professional service robots by orders of magnitude.

Robots require interfaces for interacting with people. Because industrial robots tend not to interact directly with people, their interfaces are very limited. Most service robots require richer interfaces. We can distinguish between two kinds of interface: those for indirect and those for direct interaction. In indirect interaction, a person operates the robot; in direct interaction, the robot acts on its own and the person responds, or vice versa. As a general rule of thumb, the interaction with professional service robots is usually indirect, whereas the interaction with personal service robots tends to be more direct.

There exists a range of interface technologies for indirect interaction. One example is the master-slave interface, in which a robot copies the motions of its operator. Direct interface options are much less established for service robots. Some robots are able to speak but do not understand spoken language, while others might understand spoken language or use a keyboard interface. However, a robot's ability to talk might create a false perception of human-level intelligence, which can result in miscommunication, since the robot's vocabulary will most likely be limited. <-- B: are these two sentences relevant? Some robots possess the ability to track what someone is looking at, which can be used to refer to physical objects, and a recent study has investigated modalities as diverse as head motion, breath expulsion, and electrooculographic signals as alternative interfaces.

Interface technologies that are unique to robots also exist. They require physical embodiment: a robot might, for example, need to exhibit facial expressions, and humanoid robots may appeal to people differently than other technological artifacts.

That being said, human-robot interaction is a field in flux. The field develops so fast that the types of interaction that can be performed today differ substantially from those that were possible even a decade ago. Furthermore, the interaction can only be studied based on the available technology, and because the field of robotics is still very young, there are still many unknown factors in human-robot interaction.

Uncanny Valley

B: SOURCES

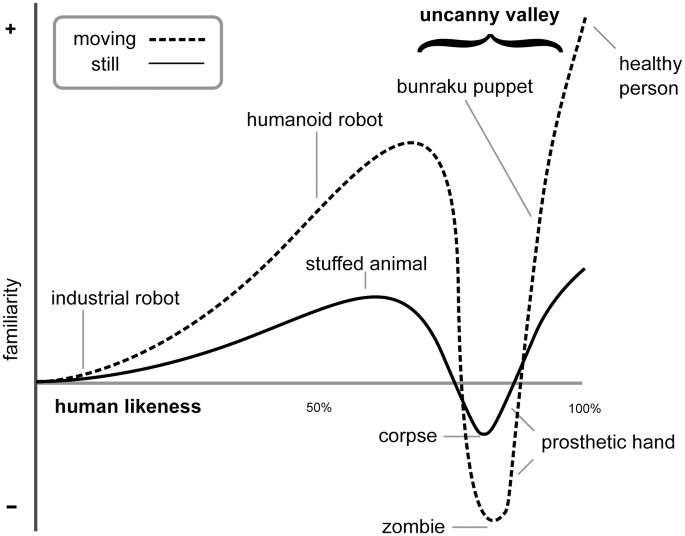

In 1970, Masahiro Mori, a Japanese roboticist, defined a scale for the human emotional response to non-human entities. A summary of the translation of the original paper is linked below. Mori describes how most phenomena in life can be described by monotonically increasing functions. However, when we climb towards the goal of making an entity seem more human, this is not the case. There is a certain feeling of disgust when an entity is almost human-like, but not exactly; it is as if our mind doesn't like being misled.

Mori defined this scale with examples available in his time period. He thought this might have something to do with our natural ability to recognize a corpse and tell it apart from a human in that it isn't alive, but we will explore more theories about the cause of the Uncanny Valley later. Consequently, the minimum of the uncanny valley is that of a corpse. It is also established that movement changes this scale drastically. Of course, we are particularly grossed out, or even terrified, by a walking corpse. The fact that exactly that which we find unappealing starts moving and acting like a human when we know it is not, scares us more than the still image. Below, you can find the scale and certain key examples that defined the first iteration of the Uncanny Valley. B: refer to the image as image x and put it underneath the image as well along with a description

Theories About the Uncanny Valley

There have been numerous attempts at explaining the cognitive mechanism that causes this phenomenon. Some of the most noteworthy theories are listed below.

- Pathogen Avoidance: Uncanny features of an entity might trigger our cognitive responses of disgust that evolved to protect our bodies from diseases. The more human an entity looks, the more we associate with it. Hence, every defect or small malfunction the entity shows compared to the human behavior they are trying to imitate/perform leads to an instinctual warning that we should keep our distance. This warning elicits a behavioral response that matches our response to a corpse or visibly diseased individual.

- Mate Selection: Humans constantly 'rate' other humans based on several instinctive, often bodily features. When we are able to perceive an entity as human-like, we automatically check all these features in the entity and can be easily disgusted when our expectations are not met.

- Mortality Salience: When we are almost fooled by an entity to pass as human, but we can still tell that something is off by minor mismatched traits, we end up in a state of fear. Knowing that a human model can be recreated by humans themselves not only elicits the less realistic fear of annihilation (a commonly discovered concept in Science-Fiction), but it also makes us realize we are nothing but soulless machines. Given that humans have a part of their brain dedicated to the belief of a higher entity, this leads to conflict. Furthermore, it makes us scared of our mortality. Not only does an almost human entity remind us of a human in a worsened state, seeing its limits and defects results in a fear of losing bodily control or even dying, given that we cognitively perceive the entity as human-like enough to relate to on an emotional level.

- Conflicting Perceptual Cues: Produced by the activation of conflicting cognitive representations, this theory suggests that the feeling of eeriness is caused by the mind having both reasons to believe the entity is human, and reasons to believe it isn't. Several studies support this possibility, and one particularly clear measure was that a negative impact is maximized when at the midpoint of a morph between fake and real (like the characters in the movie Cats). However, other research shows that this effect can only be responsible for a portion of the Uncanny Valley effect. Several mechanisms have been linked to this response, and there is still debate on which of these mechanisms, if not all, are responsible for this effect: Perceptual mismatch, categorization difficulty, frequency-based sensitization and inhibitory devaluation are all candidates.

Design Principles as a Consequence of the Uncanny Valley

There are a number of design principles many designers already follow in some form to avoid the Uncanny Valley altogether. While this often means staying in front of the Uncanny Valley, and thus making it clear that your character is not trying to be 'real', there are other principles that might help.

- All body parts of entities should match each other's detail: A robot may quickly look uncanny when real and fake elements are mixed to create a character. For example, a robot that moves perfectly like a human but is unable to match facial emotions can quickly be seen as uncanny. It is important that the realism of its appearance should perfectly match the realism of its behavior.

- Realism and expectation: When an entity looks very practical, people don't have too high expectations. In contrast, the opposite is true when an entity looks very human-like, where people often expect many additional, unnecessary features. It is important that your design is useful and even essential to what the entity is supposed to be doing, or its limitations will cause eeriness.

- Human facial proportions should always accompany photorealistic texture: Only when the texture of an entity is not too human-like is some leeway allowed in these proportions, which can even help lift your design out of the Uncanny Valley.

Critiques of the Uncanny Valley Theory

- Outdated: Mori defined his scale for the Uncanny Valley in 1970. While it is clear from many examples that Mori was indeed onto something, his definition is rather outdated. After all, a Bunraku puppet was the only entity that managed to 'pass' the Uncanny Valley and was therefore considered to be one of the most human-like figures Mori was aware of. Nowadays, we have managed to approach artificial humans or human-like entities much better than could ever be imagined in 1970, which has proven that the scale is indeed outdated and has thus gone through several 'iterations' to most accurately predict the Uncanny Valley with state of the art designs.

- Generation Specific: Younger generations are more used to robots, CGI, and the like, and are therefore potentially less affected by this more or less hypothetical phenomenon.

- The Uncanny Valley is a collection of distinct phenomena: Phenomena labeled as uncanny have very distinct causes and rely on various different stimuli. These stimuli sometimes even overlap and can vary based on cultural belief and its comfort as well as someone's state of mind and the goal of the entity in question.

- Not one degree of human-likeness: While the Uncanny Valley is perceived as this perfect diagram with one valley near the end, researchers such as David Hanson have pointed out that this valley may appear anywhere on the monotonically increasing line. This idea supports the view that the Uncanny Valley arises from conflicting categorization or even issues with categorical perception, which cause conditions such as Capgras Delusion (a condition in which a person believes that someone close to them has been replaced by an identical impostor).

- Good design can negate the Uncanny Valley: Using the idea that humans are programmed to find baby-like features cute, incorporating cartoon-ish features can help lift any design out of the Uncanny Valley. David Hanson further supported this by having participants rate photographs of robots on various points on the scale, where he could 'fix' the eerie robots by adding baby- and cartoon-like features. This is in conflict with the theoretical basis, which states that the human-likeness of an entity should perfectly match in any (body)part.

Godspeed and RoSAS Scale

Godspeed Scale

Because the aim is to determine how a robot is rated on a set of items, and how movement alters these ratings, it is of critical importance to find a scale that measures this accurately. Luckily, many different scales exist for these purposes. One such scale is the Godspeed scale, developed by Bartneck, C. et al. (2009). This scale aims to provide a way to assess how different robots score on the following dimensions: anthropomorphism (1), animacy (2), likeability (3), perceived intelligence (4) and perceived safety (5). Each of these dimensions has a set of associated items, on which participants can rate the robot in question. Each item is presented in a semantic differential format. Examples of these items are: artificial-lifelike, mechanical-organic, unkind-kind, irresponsible-responsible and agitated-calm. Participants are asked to evaluate to what extent each of these items applies to the robot using a 5-point Likert scale (Bartneck et al., 2009).
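As a rough illustration of how such ratings could be turned into dimension scores, the sketch below averages the 1 to 5 ratings of the items belonging to each dimension. The item-to-dimension mapping is deliberately partial and is our own assumption for illustration (only items named on this page are included); the function name `score_dimensions` and the example ratings are hypothetical, and averaging per dimension is one common way to score such a scale, not necessarily the exact procedure prescribed by the scale's authors.

```python
from statistics import mean

# Partial, illustrative mapping of semantic-differential items to dimensions.
# Assumption: only items mentioned on this page are listed; the full
# questionnaire contains more items per dimension.
GODSPEED_ITEMS = {
    "anthropomorphism": ["fake-natural", "artificial-lifelike"],
    "animacy": ["mechanical-organic"],
    "likeability": ["unkind-kind", "unpleasant-pleasant", "awful-nice"],
    "perceived_intelligence": ["irresponsible-responsible"],
    "perceived_safety": ["agitated-calm"],
}


def score_dimensions(ratings, items_by_dimension=GODSPEED_ITEMS):
    """Average one participant's 1-5 ratings per dimension.

    `ratings` maps an item name to an integer from 1 (left endpoint)
    to 5 (right endpoint). Dimensions without any rated item are skipped.
    """
    scores = {}
    for dimension, items in items_by_dimension.items():
        values = [ratings[item] for item in items if item in ratings]
        for value in values:
            if not 1 <= value <= 5:
                raise ValueError(f"rating {value} is outside the 1-5 range")
        if values:
            scores[dimension] = mean(values)
    return scores


# Hypothetical ratings from a single participant for a single robot.
example_ratings = {
    "fake-natural": 2,
    "artificial-lifelike": 4,
    "unkind-kind": 5,
    "unpleasant-pleasant": 4,
    "awful-nice": 3,
}
example_scores = score_dimensions(example_ratings)
```

Comparing such per-dimension averages between a still image and a moving clip of the same robot would be one simple way to see how movement alters the ratings.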

Godspeed Scale Critiques

Even though this is a widely used tool for assessing robot appearance, there has been some critique. Some scholars have argued that there are quite a few issues regarding this scale, and that it needs further investigation. Here we will go over two main issues with the Godspeed scale, as discussed by Carpinella (2017), as well as . J: as well as what?

First of all, the items do not always load onto the dimensions as expected. For example, according to the Godspeed scale the item fake-natural is supposed to load onto the anthropomorphism dimension, but research has shown that this is not always the case. This means that the fake-natural item might not contribute much to the score of the anthropomorphism dimension, and that it could be statistically useless. Conversely, items sometimes load onto factors that they are not supposed to load onto. An example is the item inert-interactive loading onto the perceived intelligence dimension: according to the Godspeed scale it does not belong there, yet research suggests that it might (Carpinella, 2017). Situations like these suggest that the dimensions and their corresponding items might need further investigation and/or tweaking.

Secondly, the scale uses a semantic differential response format, which means that each item used to rate the robots consists of two opposite endpoints instead of a single word. This makes sense when an item uses antonyms as endpoints, like unpleasant-pleasant, yet in some cases the endpoints are not true antonyms (Ho & MacDorman, 2010). It can be argued, for example, that the item awful-nice contains two endpoints that are not direct antonyms of each other. This suggests that the Godspeed scale may not be as exact as one might think.

RoSAS: Robotic Social Attributes Scale

Building on the Godspeed scale of Bartneck et al. (2009), Carpinella et al. (2017) developed a new scale called the Robotic Social Attributes Scale, or RoSAS for short. This scale is based on extensive research and has its roots in the Godspeed scale. There are three main differences between the Godspeed scale and the RoSAS.

| Discomfort | Competence | Warmth |

|---|---|---|

| Aggressive | Knowledgeable | Feeling |

| Awful | Interactive | Happy |

| Scary | Responsive | Organic |

| Awkward | Capable | Compassionate |

| Dangerous | Competent | Social |

| Strange | Reliable | Emotional |

Firstly, the RoSAS tests robot appearance on three dimensions instead of five: warmth, discomfort and competence. These three dimensions are derived from the Godspeed scale, but should be more reliable and robust (Carpinella et al., 2017). Each dimension is measured using six items, which can be seen in table 1.

Secondly, instead of presenting the items in a semantic differential response format, each item in the RoSAS scale is simply one word. Participants are asked to what extent they associate each word with the robot.

Lastly, the RoSAS scale has a total of 18 items, whereas the Godspeed scale has 24.
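
As an illustration of how 18 single-word ratings reduce to three dimension scores, the sketch below averages the six items of each dimension. The item-to-dimension mapping follows the RoSAS as published by Carpinella et al. (2017); taking the mean of the items as the dimension score is an assumption, and the function name `rosas_scores` and the example ratings are hypothetical.

```python
from statistics import mean

# Item-to-dimension mapping of the RoSAS (Carpinella et al., 2017).
ROSAS_ITEMS = {
    "warmth": ["happy", "feeling", "social", "organic", "compassionate", "emotional"],
    "competence": ["capable", "responsive", "interactive", "reliable", "competent", "knowledgeable"],
    "discomfort": ["scary", "strange", "awkward", "dangerous", "awful", "aggressive"],
}


def rosas_scores(ratings):
    """Return the mean rating per dimension for one participant and one robot.

    Assumption: a dimension score is the plain average of its six items.
    """
    missing = [item for items in ROSAS_ITEMS.values() for item in items if item not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    return {dim: mean(ratings[item] for item in items) for dim, items in ROSAS_ITEMS.items()}


# Hypothetical ratings, for illustration only (not data from the study).
example = {item: 3 for items in ROSAS_ITEMS.values() for item in items}
example.update({"happy": 5, "scary": 1})
scores = rosas_scores(example)
```

Keeping the mapping in one dictionary makes it easy to check that every dimension has exactly six items and that no item is counted twice.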

Method

All participants will be asked to fill out the questionnaire. In this questionnaire, subjects will see an image of a robot, and a video of the same robot moving, for three different anthropomorphic robots. These robots fall into three different categories: slightly humanlike, humanlike and extremely humanlike. These three categories will be illustrated by the following robots: Atlas (Boston Dynamics), Honda ASIMO (Honda), and Geminoid DK (Aalborg University, Osaka University, Kokoro & ATR). To ensure that confounding variables like habituation to the robot have a minimal effect on participant judgement, the order of presentation is counterbalanced. This means that half of all participants will first see the image, then the video (normal order), and the other half will first see the video, then the image (reversed order). The order in which the different robots are presented is randomized. For a visual representation, please refer to table 2.
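
The counterbalancing described above can be sketched as follows. This is only an assumed illustration of the design, not the procedure actually used to build the questionnaire: the function name `build_schedule`, the seed and the participant numbering are hypothetical.

```python
import random

ROBOTS = ["Atlas", "ASIMO", "Geminoid DK"]


def build_schedule(participant_ids, seed=0):
    """Assign each participant a media order and a randomized robot order.

    Half of the participants get image-then-video (normal order),
    the other half video-then-image (reversed order).
    """
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)  # random split into the two order groups
    half = len(ids) // 2
    schedule = {}
    for index, pid in enumerate(ids):
        media = ["image", "video"] if index < half else ["video", "image"]
        robots = ROBOTS[:]
        rng.shuffle(robots)  # robot order is randomized per participant
        schedule[pid] = [(robot, medium) for robot in robots for medium in media]
    return schedule


# Hypothetical participant numbers 1..40, matching the planned sample size.
schedule = build_schedule(range(1, 41))
```

Each participant ends up with six trials (three robots, two media each), and exactly half of the participants start every robot with the still image.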

Subject responses will be measured using the Robotic Social Attributes Scale (RoSAS), developed by Carpinella et al. (2017). Each robot will be rated on its warmth, discomfort and competence twice: once after seeing a still image and once after seeing a video, not necessarily in this order. Each of the three measurement dimensions (warmth, discomfort and competence) consists of six items that are meant to measure that dimension. Furthermore, after having rated a robot in both the image and video condition, participants will be asked whether their opinion about the robot changed after the second exposure, and if so, how and why. We are using a sample of 40 students. This is a convenience sample; during COVID it can be hard to find participants, so we chose students because they are the easiest for us to reach. We set the size at 40 because this seemed achievable while still being of decent size. The statistical software that will be used to analyze the data is Stata/IC 16.0.

Results

The data gathered by the survey were processed in two different ways. Firstly, the results corresponding to the RoSAS scale were analyzed using statistical tests such as t-tests and the Wilcoxon Rank Sum Test, to find mean differences for the dimensions between the moving and non-moving conditions. Besides the RoSAS data, participants were also asked, for each robot, whether their opinion on the robot changed due to the second exposure, and if so, how and why. This is qualitative data and was therefore processed using a simple thematic analysis.

RoSAS Data

For all three robots, the data was analyzed separately. For each robot, the 18 RoSAS items were grouped into the three RoSAS dimensions (warmth, discomfort and competence), and Cronbach's alpha was used to check the internal consistency of each dimension. Then, for each dimension, the difference in means between the still-image and video condition was computed. To decide which statistical test to perform, the distribution of each dimension was checked for normality using Shapiro-Wilk W tests and Skewness-Kurtosis tests. If, according to these tests, the distribution was normal, a simple two-sided t-test was performed. When this was not the case, we tried to transform the data. Lastly, if this did not yield a usable distribution, a Wilcoxon Rank Sum Test was performed. In all of these tests, H0: μmoving = μnotmoving, Ha: μmoving ≠ μnotmoving.

Atlas

For warmth, there was a significant difference in means between the movement conditions (μmoving = 3.6125, μnotmoving = 2.8542, p = 0.0090). For both discomfort (μmoving = 2.5375, μnotmoving = 2.9792, p = 0.0716) and competence (μmoving = 5.0208, μnotmoving = 4.7042, p = 0.2469), there was no statistically significant difference in means between the movement conditions. To compute these values, a two-sided t-test was used for warmth and discomfort, and a rank sum test was used for competence.

ASIMO

Both warmth (μmoving = 4.6708, μnotmoving = 3.5250, p = 0.0007) and competence (μmoving = 5.0958, μnotmoving = 4.1292, p = 0.0004) show significantly different means for the movement conditions. Just like for Atlas, there is no significant difference in means for discomfort (μmoving = 2.1292, μnotmoving = 2.5000, p = 0.0699). Again, we used a two-sided t-test to compute the values for warmth and discomfort, whereas a rank sum test was used for competence.

Geminoid DK

None of the dimensions had a statistically significant difference in means between the movement conditions (warmth: μmoving = 3.9083, μnotmoving = 4.1958, p = 0.3886; discomfort: μmoving = 3.8083, μnotmoving = 3.5417, p = 0.4148; competence: μmoving = 4.1125, μnotmoving = 4.3333, p = 0.4522). All values corresponding to Geminoid DK were computed using two-sided t-tests.

For a visual representation of these results, please refer to table 2:

| Robot | Warmth (moving) | Warmth (not moving) | Discomfort (moving) | Discomfort (not moving) | Competence (moving) | Competence (not moving) |

|---|---|---|---|---|---|---|

| Atlas | 3.6125 | 2.8542 | 2.5375 | 2.9792 | 5.0208 | 4.7042 |

| ASIMO | 4.6708 | 3.5250 | 2.1292 | 2.5000 | 5.0958 | 4.1292 |

| Geminoid DK | 3.9083 | 4.1958 | 3.8083 | 3.5417 | 4.1125 | 4.3333 |

Additionally, the differences in dimension ratings between the robots were analyzed. The results are shown in table 3. All results in this table were computed using rank sum tests, since the dimension data was never normally distributed for the different robots, and no transformations were possible.

| Comparison | Warmth | Discomfort | Competence |

|---|---|---|---|

| Atlas vs. ASIMO | p = 0.0003; m_atlas = 3.2333; m_asimo = 4.0979 | p = 0.0144; m_atlas = 2.7583; m_asimo = 2.3146 | p = 0.3993 (RS); m_atlas = 4.8625; m_asimo = 4.6125 |

| Atlas vs. Geminoid DK | p = 0.0003; m_atlas = 3.2333; m_gem = 4.0521 | p = 0.0001; m_atlas = 2.7583; m_gem = 3.6750 | p = 0.0011; m_atlas = 4.8625; m_gem = 4.2229 |

| ASIMO vs. Geminoid DK | p = 0.8084; m_gem = 4.0521; m_asimo = 4.0979 | p = 0.0000; m_gem = 3.6750; m_asimo = 2.3146 | p = 0.0337; m_gem = 4.2229; m_asimo = 4.6125 |
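
To make the rank sum comparisons more concrete, the sketch below shows one common form of the Wilcoxon rank sum test: pool both samples, rank them (ties get the average rank), sum the ranks of the first sample and compare that sum to its expectation under H0 using a normal approximation with a tie correction. The reported values were computed in Stata; this function is only an assumed, simplified stand-in, and the names `midranks` and `rank_sum_test` as well as the sample values are hypothetical.

```python
from collections import Counter
from math import sqrt
from statistics import NormalDist


def midranks(values):
    """Map each distinct value to its average (mid) rank in the pooled sample."""
    ordered = sorted(values)
    ranks = {}
    position = 0
    while position < len(ordered):
        end = position
        while end + 1 < len(ordered) and ordered[end + 1] == ordered[position]:
            end += 1
        # Ranks are 1-based; tied values share the mean of their ranks.
        ranks[ordered[position]] = (position + 1 + end + 1) / 2
        position = end + 1
    return ranks


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank sum test (normal approximation, tie-corrected).

    Returns the z statistic and the two-sided p-value.
    """
    n1, n2 = len(x), len(y)
    pooled = list(x) + list(y)
    n = n1 + n2
    ranks = midranks(pooled)
    w = sum(ranks[value] for value in x)  # rank sum of the first sample
    expected = n1 * (n + 1) / 2
    tie_term = sum(t**3 - t for t in Counter(pooled).values()) / (n * (n - 1))
    var = n1 * n2 / 12 * ((n + 1) - tie_term)
    if var == 0:
        raise ValueError("all observations are identical")
    z = (w - expected) / sqrt(var)
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p


# Hypothetical dimension scores for two robots (not data from the study).
z, p = rank_sum_test([1, 2, 3], [4, 5, 6])
```

Because the test only uses ranks, it does not require the dimension scores to be normally distributed, which is why it serves as the fallback when transformations fail.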


Qualitative Data

To analyze the qualitative data obtained from the open questions, we performed a thematic analysis. All answers were analyzed one by one, and in iterative fashion, five main themes were found. Almost all responses can be associated with one of these five themes:

- The video makes the robot look more friendly.

- The video makes the robot look more organic/humanlike.

- The video shows what the robot is capable of, and how it acts.

- The video makes the robot look creepy/awkward.

- The video makes the robot look less humanlike.

Conclusion

With the obtained results, there are multiple conclusions to be made.

First of all, it is apparent that subjects perceived Atlas to be warmer after having seen the video. This statement is based on the fact that the mean warmth rating for Atlas is significantly higher in the moving condition than in the still-image condition. This could be because of themes 1 and 2. These themes suggest that seeing the video makes the robot appear more friendly, organic and humanlike, which are all things that could contribute to the perceived warmth of a robot. On the other hand, neither the discomfort nor the competence dimension was rated significantly differently between the movement conditions. This suggests that seeing Atlas move has no measurable impact on how discomforting or competent it is perceived to be.

Secondly, the data on ASIMO suggests that seeing it move has an effect on how warm and competent it is perceived to be. Just like Atlas, ASIMO is seen as warmer in the video than in the image. However, the data suggests that it is also perceived as more competent in the video than in the picture. One possible explanation as to why the video raises perceived competence for ASIMO but not for Atlas is that ASIMO looks more humanlike and more interactive than Atlas (theme 2). When analyzing the qualitative data corresponding to ASIMO, one can see that many people mentioned it looked far more humanlike in the video. We do not see this response in the same quantity for Atlas.

Furthermore, for Geminoid DK, perceptions of warmth, discomfort and competence did not differ significantly between the movement conditions. In this research, there is no conclusive evidence as to why this might be the case. However, one can speculate. One explanation could be that Geminoid DK already looks so much like an actual human in the picture that the video does not change anything. Further research is required to back up this statement. Even though we did not find any significant results using the RoSAS scale, the open questions did give some insight into the way Geminoid DK was perceived. In most cases where the opinion changed, the reason falls into either theme 4 or 5. This response might be a strong showing of the uncanny valley effect, since themes 4 and 5 never showed up for Atlas and ASIMO. Because Geminoid DK is so humanlike, but not quite human, it is easily perceived as eerie, discomforting and awkward.

Lastly, the analysis of dimension ratings between the robots also provided some interesting results. These results are summed up below:

- Warmth

- ASIMO is perceived to be warmer than Atlas.

- Geminoid DK is perceived to be warmer than Atlas.

- ASIMO and Geminoid DK are perceived to be equally warm.

- Discomfort

- Atlas is perceived to be more discomforting than ASIMO.

- Geminoid DK is perceived to be more discomforting than Atlas.

- Geminoid DK is perceived to be more discomforting than ASIMO.

- Competence

- Atlas and ASIMO are perceived to be equally competent.

- Atlas is perceived to be more competent than Geminoid DK.

- ASIMO is perceived to be more competent than Geminoid DK.

Out of these results, some main inferences can be made:

- Geminoid DK is perceived to be the most discomforting of the three robots, by quite a margin. This can be explained using the uncanny valley. Since this robot is so humanlike, but not quite human, it falls into the valley and can be perceived as eerie or discomforting.

- Out of all robots, Atlas is perceived to be the least "warm". This might be because it is the least humanlike. It has the least in common with humans themselves, and therefore people might struggle to find warmth in such a robot.

- Geminoid DK is rated as the least competent robot. A possible reason for this finding is that, because it is so humanlike, it encourages people to compare it to an actual human, more so than the other two robots do. When (unfairly) comparing a robot against a human being, it is much easier to call it incompetent, whereas comparing robots to robots provides a more level playing field.

Discussion

Future Research

First and foremost, future research needs to be conducted on a random sample, so that the audience interviewed is broader and more general. For now, the convenience sample was a limitation caused by a lack of volunteers, but in the future, research done by the enterprise will consist of randomly chosen individuals. Given the broader audience of these future studies, we want to include questions that distinguish the individuals taking the test by ethnicity, culture, religion, sex, and age. This will hopefully reveal significant differences between groups, so that robot designs can be improved for each category individually. For example, if we find that the Asian market does not like Geminoid DK as much, but prefers a robot that is more appropriate to its culture, then future studies could use more examples to measure the influence that this factor has on likeability. Based on prior research, culture is already expected to influence people’s opinions, but the magnitude of this influence needs to be measured better. An international robot manufacturer would want to know for sure whether designing an entirely new robot look for the Asian market would be worthwhile in terms of production cost versus return. The same point can be made for elderly-looking robots, whose apparent age might influence likeability among different age groups.

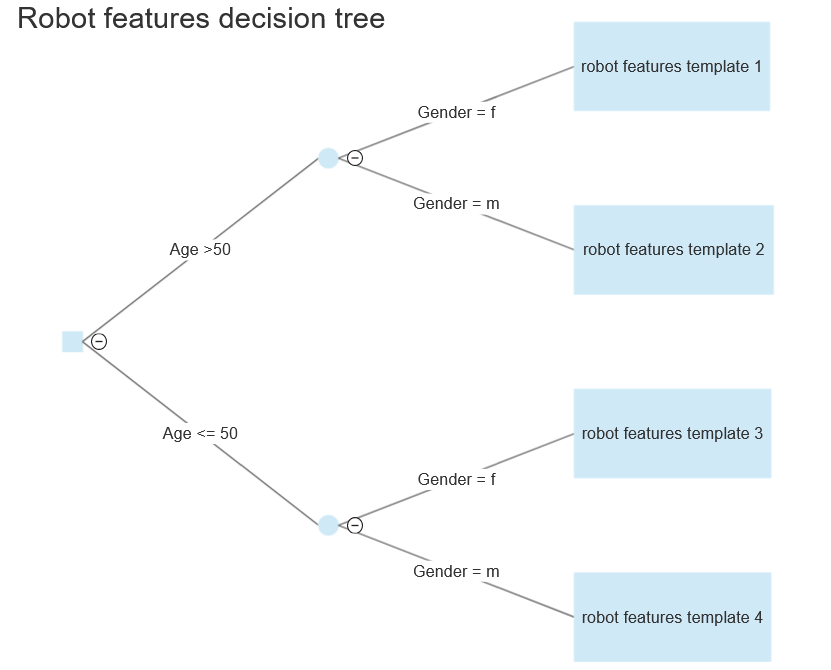

We would further want to construct a decision tree that captures the different dimensions as much as possible. This decision tree is meant to return the best robot parameters, in terms of features, for a given segment of the market. For example, using the tree shown here, for a manufacturer that intends to deploy its robots in the European market, for ages 60 to 80 and mostly female users, the best choice of robot would have the features represented in "template 1". It is worth mentioning that this example is simplistic in comparison to the true depth of a tree that could be reached using years’ worth of research.
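
The lookup such a decision tree would perform can be sketched as a small nested structure. Everything here is hypothetical and only mirrors the single example path from the text (Europe, ages 60 to 80, mostly female, "template 1"); the feature names, the `recommend_template` function and the tree layout are assumptions, not a model derived from data.

```python
# Hypothetical tree: each inner node is {feature_name: {feature_value: subtree}},
# each leaf is the name of a robot feature template.
TREE = {
    "region": {
        "Europe": {
            "age_band": {
                "60-80": {
                    "majority_gender": {
                        "female": "template 1",
                    }
                }
            }
        }
    }
}


def recommend_template(tree, segment):
    """Walk the tree using the segment's feature values.

    Returns the template name at the leaf, or None if the tree has no
    branch for this market segment.
    """
    node = tree
    while isinstance(node, dict):
        feature, branches = next(iter(node.items()))
        value = segment.get(feature)
        if value not in branches:
            return None
        node = branches[value]
    return node


segment = {"region": "Europe", "age_band": "60-80", "majority_gender": "female"}
choice = recommend_template(TREE, segment)
```

A real tree would have many more branches per node; returning None for unknown segments makes gaps in the research explicit instead of guessing a template.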

As the hypothesis proposed by Mori [cite] is applicable to the effect that movement had in our research (namely…), we further intend to break down the movement into multiple categories. This will start with the more human-like robots, where different facial movements will be compared to one another using a similar survey approach. Furthermore, facial micro-expressions and micro-facial movements (MFM) will be analyzed to check whether they have an influence on the robot’s ratings. Although this would be nice in theory, no state-of-the-art robot is able to perform such micro-facial movements. Thus, a new approach is needed to generate videos of robots making a certain set of MFMs. We propose the usage of a Generative Adversarial Network (GAN) to perform video synthesis, also known under the term “deepfake”. It has been shown that, given enough video data, a GAN can generate videos that mimic facial expressions with a very high level of realism. Such an approach can be used to create a deepfake video of the original robot that seemingly gives it the ability to perform certain micro-facial movements. After generation, such videos will be compared directly to their original counterpart, namely the video of the robot without MFM, using a survey approach like the one used in our study.

If videos with added MFM are rated more favorably than videos with normal movement, then the creation of a robot with this ability will be incentivized. By comparing the difference in likeability between an original video and the deepfake to the difference in likeability between the static image and the video, the significance of MFM can be deduced. To expand on this, it is necessary to understand that movement of very humanlike robots is generally considered to lower the likeability of the robot. Thus, there might be certain limitations to the realistic features that these robots can replicate, one of which could be MFMs. By looking at how much the likeability increases due to MFM presence, assuming it increases, a percentage of the total difference in likeability between a static image and the original video can be explained as expectations that humans have for MFMs.
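
The percentage mentioned above can be written as a simple ratio: the likeability gained by adding MFM, divided by the likeability lost when going from the static image to the original video. The sketch below assumes mean likeability ratings on a common scale; the function name `mfm_share` and the numbers are hypothetical and do not come from our data.

```python
def mfm_share(image_rating, video_rating, deepfake_rating):
    """Fraction of the image-to-video drop in likeability recovered by MFM.

    Assumes movement lowered likeability (image_rating > video_rating),
    as is generally reported for very humanlike robots.
    """
    movement_drop = image_rating - video_rating
    if movement_drop <= 0:
        raise ValueError("expected the video to be rated lower than the image")
    return (deepfake_rating - video_rating) / movement_drop


# Hypothetical mean ratings: image 4.0, original video 3.0, deepfake with MFM 3.5.
share = mfm_share(4.0, 3.0, 3.5)
```

With these made-up numbers, half of the drop caused by movement would be attributed to missing MFMs.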

Enterprise

Introduction

In the past years, researchers, movie makers and robot designers have tried to get closer to the 'manufactured human.' But, as we know, moving up the human-likeness scale does not automatically mean making progress; as we try to build a robot or other entity that imitates a human, we fall victim to our own technological limits. Especially in movie-making, designers can model, texture and rig characters to share the same joints as the actor, have them follow the actor's movements, and as a result, get a realistic character in the movie. One of the best examples of this is probably Avengers: Infinity War, a movie built around a character that does not exist in reality, named Thanos. The performance of the actor (Josh Brolin) was captured so well by the computer-generated model of Thanos that, even though the character is not real, people could understand him and, all in all, take him seriously.

However, even with the seemingly endless possibilities of movie-making, not all attempts are successful. A very good example of this, which shares many aspects with Thanos (namely a full CGI character that people needed to relate to), is the movie Cats. It is a movie that tried to merge a cat and a human, or rather a humanoid figure, into one. In many scenes of the movie, the highly accurate human face merged with the cat body is quite scary, especially from some specific camera angles. This is also why the trailer was received very badly, and ultimately why the movie failed with the public, resulting in a loss of several tens of millions of dollars. Another good example is Sonic the Hedgehog (2020), where filmmakers went back after a lot of criticism to remodel the CGI model of Sonic and make him more appealing. The biggest changes here were the size of Sonic's eyes and the general cartoonishness that the character conveys. Apart from models of characters that do not exist in the real world, there have also been various attempts at deepfakes. These are often used to bring an actor who has passed away 'back to life' for a movie, for example in the recent final episode of The Mandalorian. These deepfakes are very realistic recreations of human beings, but the viewer can nearly always tell that something is off. Sometimes the mouth doesn't move in a human enough way, the eyes have a weird gaze, the body doesn't make enough small movements or the lighting is off. There could be many reasons for the model not being perfect, but a viewer can almost always notice. Our brains know that they are being fooled.

Problem

Together with this ability to notice whether an entity is real or fake comes a repulsion towards such fake characters. People usually don't like being faced with something that is really close to a human, but isn't quite human. At the same time, people do like humanlike creatures: robots that are more humanoid will often be received better than robots that are not. Therefore it is of the utmost importance for robot designers to carefully select the appearance of their robot.

The problem with this is that there isn't much data on the topic. The field of robotics is fairly new, and especially on the topic of appearance there is not much information to be found. Teams that want to create a robot are therefore forced to do their own research, which costs them enormous amounts of money and resources, all while they are trying to focus on the implementation of the robot.

Vision

With our company we aim to fill the gap for organisations that want to develop a robot, but don't want to spend this much on researching its appearance. We will offer specialised, research-driven consultancy to stimulate the growth of robotics. Because we focus only on the appearance of these robots, our research will most likely be more specific and more elaborate. We plan to keep expanding our knowledge to guarantee the best advice to our customers, so they can focus on what they really want: creating the robot.


Users, Society and Enterprise

The following user groups are involved with the appearance of a robot and would benefit from a consultancy in robot appearance:

Researchers

Researchers will benefit from such a consultancy because they can specify their goals more carefully. Researchers generally push the state of the art and therefore approach human-like robots in their research. Having a clear view on what causes a robot to fall into the Uncanny Valley can help them focus on what prevents robots from falling into it. If the goal is better defined, it is easier to reach.

Clementine, 34 and researcher at TU/e, has made amazing progress with her research! She has successfully found a way for a robot to learn from its environment, much like humans do when they are young. She has created a successful reward and policy system by which the robot rates all actions it can take and potential better alternatives. Additionally, the robot has balance implemented and can effortlessly align all its joints for smooth movement. However, the robot is only able to do practical tasks and does not have any way of reading humans, nor the ability to match certain emotions in a social group. The robot would only be required to hold basic conversations with humans and is mainly meant for practical use, but it does need to be accepted and trusted in a social circle. While not complex, trying to have the robot look human in order to be accepted among humans opens the risk of falling into the Uncanny Valley and therefore a different approach has to be considered. Clementine finds it hard to determine what she could implement for facial expressions and face design so that communication goes smoothly, while the robot is also relatable or even cute enough from a human perspective. To finalize her research, however, she can't overthink it and Clementine is forced to finish her initial design, hoping the Uncanny Valley is completely avoided.

Designers

Designers will benefit from the various variables that are defined in the research on which the consultancy will be based. Because they generally stay in front of the Uncanny Valley, they have more freedom in what their robot will look like and could, with our help, very easily select the variables that matter based on the tasks their robot will be designed to fulfill. Designers can design their robots for their intended clients without risking finalizing a design that is unappealing to this audience.

Rory, 43 years old, is a lead designer in a team that develops a home buddy. Meant to fight loneliness, this robot does not require too many practical abilities, but it does need a lot of information on humans, their emotions and human language. Rory has managed to use image classification along with already developed AI for speech recognition to recognize human emotions and, in a way, understand what the person is saying. However, for humans to relate to robots on an emotional level, robots need to have a specific kind of human-ness and cuteness without frightening the owner. Avoiding the Uncanny Valley is hard, so several prototypes will have to be made and judged by his team and others before the product can go on the market. After a tiring performance test, which specifically tests how well the robot is received in social circles, he can finally decide which of the robots can go on the market. Once the correct design has been picked, Rory wants to mass-produce it by building production machines that will automatically assemble the robot, but for now he will have to design every single prototype separately.

Clients

Clients will no longer have to deal with uncanny robots that are designed to help them. This ensures that no client has to fear or feel uneasy around an experimental social robot. This is in their best interest, given the costs and manpower required to care for them, which can now be replaced by robots. With a robot that is well designed in terms of appearance, issues that cannot be dealt with very well in the present day, such as loneliness and depression, can be battled using these care robots.

Henry, 87 years old, has become mobility impaired over the years. Ever since his wife died he has loved walking over to his daughter and grandchildren, who live a few blocks away. Sadly, visiting them has become harder and harder and more often than not he chooses to stay at home rather than go out. Recently, his grandchildren started studying in the city and his daughter has made a career move that demands a lot of her time. Henry is very proud of his children and grandchildren, but struggles more and more to take care of himself and his house. He also misses visiting his relatives often and even though he still has old friends come over every once in a while, being alone with only the TV gets tiring very quickly. He has thought about getting a pet, but Henry is afraid that he can't take care of it as well as he would hope. Because he is still fit enough to live at home, the local care home could not help him much further; the best they could do is send a volunteer every once in a while to check up on him. Life doesn't get Henry down very fast, but even he is starting to realize that these last years have been getting more lonely. All Henry wishes for right now is a buddy who could stay with him for a while.

Social volunteers

Social volunteers will benefit greatly from this consultancy as a consequence of the state of the art being pushed forward. When care robots for certain more concrete situations can be developed sooner using the well-defined variables for appearance, they can take over 'smaller' tasks of a social volunteer. Social volunteers often like their work a lot, but with the current shortage it can take up a lot of time, which not all volunteers have much of. With care robots, social volunteers can spend their time elsewhere, maybe even by engaging with the people they care for more than before.


Implementation Details

We want to create an online platform on which users can receive feedback on the competence and likeability of a robot. There is much data on this topic available at the moment, for example on how the appearance of a robot affects human reactions towards it. The combined effect of appearance and actual movement, however, is much harder to determine. On our platform we offer two types of services. First, users can get assistance with the design of their robot: they will receive feedback during their design process, with multiple interaction points with our data analysts. Second, users can request a final analysis when the product is finished. The specific terms can then be negotiated: for example, they could send a finished design model for us to judge, or one of our analysts could travel to the actual robot to see its performance in real life.

Most of the work will be in setting up the platform and gathering the data. For the former we need an intuitive interface. It should be easy for customers to submit a request, and they should be able to share information beforehand that might help with the consultancy. We will support uploading video content along with some easy-to-answer questions, such as the purpose of the robot and the target audience. Then the first appointment can be planned, which will take the form of an online call. During this meeting the initial needs of the client will be assessed and initial feedback will be given on the design. Based on the users' needs the next actions will be set up to guide them as well as possible.

The data needed to perform such an assessment will be gathered beforehand. User research will be conducted among people of different age groups. In this research we aim to map the likeability of robots of various levels of humanness against their way of moving. At first we do not focus on a specific age group yet. We will transform the gathered data into a usable model that we can use to assess robots in general. Later we plan on expanding the research to increase accuracy. We would like to add the age group of the end user to our model, as well as the role the robot will fulfill, as we expect both to have a significant influence on how much a robot will be liked.

After this initial setup there are a couple of key factors in the whole process. First of all, we need to maintain the platform and employ enough consultants to serve our customers. Secondly, we need publicity: the service we provide is very specific, so it is very important that we are able to reach our target audience. Lastly, we would like to improve the current model. As said above, we would like to expand it to other age groups and tailor it to the different functions a robot can have. For this we need more research and more data.

Enterprise Structure

The enterprise itself will consist of just a few people and will mostly be located online. Clients will be able to send in their robot designs, which will be rated and evaluated online. Once the robot attractiveness scale has been created, it will not age quickly, and therefore we do not need full-time data analysts. We do need one or more employees to maintain the website/platform we will be using, and one or more employees to take care of advertising. Thus the body of employees will be relatively small.

Cost and profit

Since the enterprise will be mostly located online, we can decide against renting or buying an office. There are benefits to having an office, for example a central meeting point, so we only decide against getting one if the profits won't allow for it. If we do so, we might need to rent machines, which can be located anywhere, for hosting the website and running the evaluations. We should be able to rent only the amount of processing power we need, which would reduce costs. The evaluation service we offer should not be expensive, because we assume most anthropomorphic robot manufacturers already have a team dedicated to the design of the robot. We cannot ask too much money, since they would then rely solely on that team for design evaluation. However, making little profit is not necessarily bad, since the costs of maintaining the enterprise and performing the evaluation are also very low.

Aspect of the platform used by clients

As said above, the platform offers the user a way to receive feedback on the design of their robot. They will be able to receive guidance on multiple occasions during their design process, which results in a better performing robot. Organisations focussed on the implementation of such robots can stay focussed on these tasks.

Here you can see a mockup for our platform. We chose a clean design and used mostly bright colors to support the ease of the process. The platform will be relatively small, since it doesn't need much more than a way for people to sign up for our services. In the future we would like to expand the platform with a user account system, where all the data of a project can be stored so that people can easily access it later.

We offer customer service in case there are any questions or problems, though we assume this won't be needed much. Since our services involve many interaction moments, we expect to clear up most questions during those meetings. Still, in case customers do feel confused, we want to provide an easy way for them to state their problems.

When a user would like to use our services, they can initiate this on the platform. We require them to answer a couple of questions:

- What is the role your robot is going to fulfill?
- What is the target audience of your robot?

In addition, we allow them to add more information that might help us in our judgement. They could, for example, upload images, videos, or even a complete Blender or similar project. We then contact them to set up a first meeting. The initial information, as said, is used to make a first estimate of the situation and of the consultancy the customer might need. In the first meeting this is elaborated further together with the customer. The process will then vary depending on the needs and requests of the customer. They might only need some small advice, or they might need much more help during their whole design process. During this first meeting a price agreement will also be made according to the customer's needs.

For the final product analysis the process will vary even more. We could visit the robot in real life for the most accurate advice, or we could consult to the best of our abilities using the digital information we receive from the customer. As with the first service, the price depends on this as well. We expect that this service will be the most attractive for our customers, since it only requires them to upload their project and answer some questions to get a response. It also won't take much work on our part to complete such a review, so it will be relatively cheap. However, we do not guarantee the accuracy of the consult in that case, as we are limited in the information on which to base our judgement.

Funding campaign

To successfully start up the enterprise we would need to get some funding from other organizations. Organizations that might want to fund the enterprise are stakeholders that would benefit from the service it offers, like a robotics manufacturer or a care home that uses robots. This funding would mostly be used to hire data analysts to develop the robot attractiveness scales and to advertise the company. Both are necessary until the enterprise is able to survive on its own. A campaign plan shows which steps would help start up the enterprise, so we have laid out such a plan below.

Step 1: Approach to market. How do you intend to go about selling?

Since our service (evaluation of robot attractiveness) is quite niche, we have to specifically target large companies. Any large-scale advertising should be restricted to the robotics industry. A good way to get the attention of companies would be to ask for a visit so we can pitch the benefits of using our service.

Step 2: What are the expected business profits in the next 5 years?

In the first 5 years the enterprise would return little revenue, since the scale of attractiveness has to be created and we have to come to the attention of companies. We can offer little in these 5 years, and all income has to be spent on advertising and hiring data scientists. At the end of the 5 years, however, the enterprise should be able to provide more and better services, so we can promote the enterprise as an investment in the future.

Step 3: What are the relevant skills and qualifications you have to enable you to go into this particular business?

Robot attractiveness has its basis in human psychology, since beauty is in the eye of the beholder and the beholders are human. Knowing specifically which traits or aspects of robot and human appearance trigger which emotion is essential. It allows us to evaluate each of these aspects separately and to create a scale on which influential traits of human or robot appearance are ranked. Our team has members who are proficient and knowledgeable in creating this scale. Moreover, we have a few members who are experienced in processing data and finding patterns in it. This should also benefit the quality of the end result.

Step 4: What resources would you need to start-up the enterprise and run it consistently for at least one year?

First and foremost, we would need the resources that allow us to reach potential customers and show them the enterprise exists. Specifically, this entails contacting and visiting companies and pitching the benefits of using the service our enterprise offers. Beforehand we need some results we can show, so these need to be prepared. These results could very well be evaluations of the appearance of already existing robots. We can back the results of the evaluation with findings from research and thus validate our evaluation. Moreover, if we consider the possibility of physical consultation sessions, we would need an office. However, we can postpone this until we have properly started up the enterprise. Another resource the enterprise needs to run consistently is an online platform on which we can perform evaluations and on which companies can find information about the enterprise and contact us. Lastly, depending on how we create the scale that evaluates robot appearance, we might need some more funds to pay participants who take part in experiments or fill in extensive surveys.

Input from Experts

Talk with Richard Kuijpers

For the project we spoke with Richard Kuijpers, owner of Smartrobot.solutions, a company that focuses on solving problems by using robots. During the talk we asked multiple questions regarding the concept of our enterprise and whether, in his view, it would succeed and be profitable. In this section we discuss the results from that talk. The first question we asked Richard was whether it is difficult to gather information on how the appearance of a robot influences its likeability. Richard responded that it is; in fact, most robotics developers create a very general appearance so that the robot can suit multiple preferences. Keeping the robot small reduces possible intimidation of human users. Moreover, the eyes and voice are also very important, since humans tend to focus on these factors.

We pitched our enterprise idea and Richard segued into a story on the specifics of the robotics market. This market is still very small today and mostly centered in Asia. Not many robots are put on the market per year, and some robotics companies go bankrupt because the development costs of their robots are too high. The concept of our enterprise is viable, however, and in Richard's opinion would be profitable, just not yet. When the robotics market grows and robots are deployed in more fields, the number of robots put on the market will increase as well. He therefore estimates that our enterprise would be profitable in ten to twenty years, since by then there would be enough clients to offer our service to.

Moreover, we asked Richard what we could realistically charge for our services. Most expert consultants work at an hourly rate of 125 euros. He therefore advised us to keep to this rate and to estimate how many hours an individual consultation would take. From this we can calculate how much we can expect to earn per client.
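As a rough illustration of this calculation, the sketch below multiplies the hourly rate by an estimated consultation length. Only the 125 euro rate comes from the talk; the function name and the 6-hour example are assumptions made purely for illustration.

```python
HOURLY_RATE_EUR = 125  # hourly rate mentioned by Richard Kuijpers


def revenue_per_client(hours, hourly_rate=HOURLY_RATE_EUR):
    """Expected revenue for one client: billed hours times the hourly rate."""
    if hours < 0:
        raise ValueError("hours must be non-negative")
    return hours * hourly_rate


# Hypothetical example: a consultation estimated at 6 hours.
print(revenue_per_client(6))  # 750
```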

Last but not least, we asked whether Richard had any tips on gathering funds for starting up the enterprise. Some universities give subsidies to students for starting an enterprise, and there are also some government regulations that would allow us to receive funds. Companies that we can offer our service to might be interested in funding the startup, but only when the robotics industry becomes more profitable in the future. Therefore, starting the enterprise would be difficult today, but Richard believes that in a decade or two we could pull it off.

Talk with Haico Sandee

The second expert we spoke to was Haico Sandee, CTO and founder of Smart Robotics. Smart Robotics is a company that aims to solve mostly logistic problems with robotics. They specialize in robotic arms. As in our talk with Richard Kuijpers, we asked Haico whether our enterprise idea would be viable and profitable. First and foremost, we asked him whether he thinks the appearance of a robot is very relevant to its likeability. Haico said that it is, but that we should be cautious in defining one "best" appearance. Likeability of appearance is very subjective and heavily influenced by racial differences. Most Americans are more inclined to think robots should look strong, so a cute robot might be suboptimal there. Cute robots apparently work better in Asia, however, so there is no definitive best robot design, which, Haico notes, makes it very difficult to perfect robot appearance.

Moreover, there is little information on robot appearance at the moment, since the robotics field is still quite new and the importance of robot functionality has overshadowed appearance. A company that focuses solely on robot appearance would therefore fill a gap in the market, according to Haico. However, he also stresses that producing a robot is very costly nowadays, so robotics companies are less likely to want to pay for our services. He offered two solutions to this problem:

1) Wait 5 to 10 years. At the moment robots are mostly sold business-to-business. Not enough robots are produced for consumers (private use), and thus the robotics market stays small. Once robots can be used more in and around the house, in a few years, more will be sold. The appearance of the robots will then also be of greater importance.

2) Broaden our target audience by broadening our specialization. We could, for example, focus not only on robot appearance but also on the appearance of (robot) interfaces. This broadening would be very beneficial to us early on: it would bring in more customers and thereby help the enterprise build its profile. After a few years, we could narrow our specialization back to robot appearance only, if desired.

Haico also commented on the two versions of robot evaluation we would offer. The first type, as explained above, is the single evaluation, and the second type is a more guidance-like evaluation of the robot's appearance. Haico mentioned that the two services have different target audiences. By offering both we broaden our target audience, which is good in this case, since we might struggle to find enough customers. Moreover, the price of our services depends on which type of service the customer wants, so it would be wise to elaborate more on the two types. Haico estimates that we can ask about 110 to 120 euros per hour per person for the single evaluation and 60 to 70 euros per hour per person for the guidance-like evaluation.
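To make these estimates concrete, the sketch below turns the hourly ranges into a low and high price for a single engagement. The rate ranges are those quoted by Haico; the function name, the reading of "per person" as "per consultant", and the example hours are assumptions for illustration only.

```python
# Hourly rate ranges in euros per hour per person, as estimated by Haico Sandee.
RATE_RANGES_EUR = {
    "single": (110, 120),    # single evaluation
    "guidance": (60, 70),    # guidance-like evaluation
}


def price_range(service, hours, consultants=1):
    """Return the (low, high) price in euros for one engagement.

    Assumes 'per person' means per consultant working on the engagement.
    """
    low_rate, high_rate = RATE_RANGES_EUR[service]
    billed_hours = hours * consultants
    return low_rate * billed_hours, high_rate * billed_hours


# Hypothetical engagements: the hours and consultant counts are made up.
print(price_range("single", 4))        # (440, 480)
print(price_range("guidance", 20, 2))  # (2400, 2800)
```

Under these made-up hours, the guidance-like service has a lower hourly rate but a higher total price, because it runs over more hours.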

Future Expansions and Improvements

Appendices

Appendix A

Appendix B

Papers and summaries

Bart Bronsgeest

Bartneck, C. et al. (2009). My Robotic Doppelgänger - A Critical Look at the Uncanny Valley.

Breazeal, C. & Scassellati, B. (2002). Robots That Imitate Humans.