PRE2019 3 Group14: Difference between revisions

| Line 96: | Line 96: | ||

== Neural Network == | == Neural Network == | ||

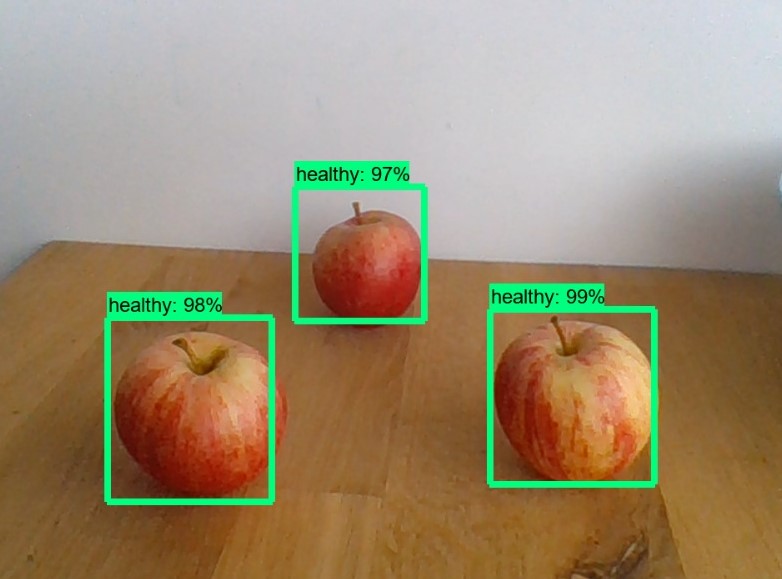

Our neural network is an adaption of the SSD MobileNet v2 quantized model, using images we labeled ourselves as training data. The | Our neural network is an adaption of the SSD MobileNet v2 quantized model, using images we labeled ourselves as training data. The reason why we use the SSD MobileNet v2 model is that it is specifically made to run on less powerful devices, like a Raspberry Pi. The quantized model uses 8-bit instead of 32-bit numbers, further lowering the memory requirements. | ||

[[File:NN1.jpeg|400px]] | [[File:NN1.jpeg|400px]] | ||

Revision as of 12:20, 9 March 2020

Team Members

Sven de Clippelaar 1233466

Willem Menu 1260537

Rick van Bellen 1244767

Rik Koenen 1326384

Beau Verspagen 1361198

Subject:

Due to climate change, temperatures in the Netherlands keep rising, and summers are getting hotter and drier. Because of this extreme weather, fruit farmers face more and more problems harvesting their fruit. In this project, we will develop a robot that helps apple farmers, who are hit especially hard by these hotter summers. Apples get sunburned, meaning that they get so hot inside that they start to rot. Fruit farmers can prevent this by sprinkling their apples with water and by pruning their apple trees in such a way that the leaves protect the apples against the sun.

This is where our robot, the Smart Orchard, comes in. Smart Orchard focuses on the imaging of apples. The robot will be used for two tasks: recognizing ripe apples and looking for sunburned apples. First, Smart Orchard will be trained with images of ripe, unripe and sunburned apples so that it learns the attributes that distinguish them. It will then use this knowledge to scan the apples in the orchard by taking pictures of its surroundings. The robot will process the data and show the number of ripe and sunburned apples via an app or desktop site to the user, who is the keeper of the orchard as well as the farmers that work there. The farmers can plan the harvest based on this data. Furthermore, the farmers cannot sprinkle their whole terrain at once, because most of the time they have just a few sprinkler installations. With the data collected by the robot, the farmers can optimize the sprinkling by looking for the location with the most apples that have suffered from the sun, and thus where they can most efficiently place their sprinkler.

Also, Smart Orchard can be used to find trees that have too many unripe apples. Trees that have too many apples must get pruned so that all of the energy of the tree can be used to produce bigger, tastier apples. Since the robot will notify the farmers how many ripe and unripe apples there are at a given location, the farmers can go to that location directly without having to look themselves. This also increases the efficiency with which they can do their job.
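The sprinkler placement described above can be sketched in a few lines. This is only an illustration under assumptions: the location labels, the counts and the function name `pick_sprinkler_locations` are hypothetical and not part of the actual system.

```python
import heapq


def pick_sprinkler_locations(sunburn_counts, n_sprinklers):
    """Return the n locations with the most sunburned apples, worst first.

    sunburn_counts maps a location label (hypothetical, e.g. a row name)
    to the number of sunburned apples counted there.
    """
    return heapq.nlargest(n_sprinklers, sunburn_counts, key=sunburn_counts.get)


# Made-up example data: four rows, two available sprinkler installations.
counts = {"row 1": 4, "row 2": 17, "row 3": 9, "row 4": 0}
print(pick_sprinkler_locations(counts, 2))
```

With only a few sprinkler installations available, ranking locations by sunburn count is the simplest possible strategy; a real system might also weigh travel distance or tree density.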

Objectives

The objectives of this project can be split into main objectives and secondary objectives.

Main

- The system, consisting of a drone equipped with a camera and a separate neural network, should be able to make a distinction between ripe and unripe apples.

- The neural network should be able to make this distinction with an accuracy of at least 87% whilst only having images of apple trees taken by the drone.

- The neural network must reach this accuracy with only limited data available, more specifically: when at least 30% of the apple tree and at least 10% of the fruit is visible in the image.

- The neural network should be able to recognize sunburn with an accuracy of at least 87% when at least 30% of the apple tree and at least 10% of the fruit is visible in the image.

- The data (classification of apples) obtained from the neural network should be processed into a model, particularly a coordinate grid of the orchard.

- The user must be able to interact with the system by means of a web application and through that make reliable agricultural decisions as well as being able to give feedback to the system.

- Depending on user preferences the system should be able to work autonomously to a certain degree. More specifically: as of boot up the drone should be able to start flying autonomously through the orchard and take images, the system must update its data and model, and the drone should return to its docking station.

- With the use of obtained data, the system should be able to create a list of recommended agricultural instructions/actions for the user like harvesting and watering.
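The objectives about the coordinate grid and the recommended actions could be sketched as follows. This is a minimal illustration, not the project's implementation: the cell size, the class labels, the thresholds and the function names `build_grid` and `recommend` are all assumptions.

```python
from collections import Counter, defaultdict

CELL_SIZE_M = 5.0  # assumed grid resolution in metres


def build_grid(detections, cell_size=CELL_SIZE_M):
    """Count classified apples per (column, row) grid cell.

    detections is a list of (x, y, label) tuples, with x and y in metres
    and label one of "ripe", "unripe" or "sunburned" (assumed labels).
    """
    grid = defaultdict(Counter)
    for x, y, label in detections:
        cell = (int(x // cell_size), int(y // cell_size))
        grid[cell][label] += 1
    return grid


def recommend(grid, ripe_fraction=0.8, sunburn_limit=3):
    """Turn per-cell counts into (cell, action) pairs using assumed thresholds."""
    actions = []
    for cell, counts in sorted(grid.items()):
        total = sum(counts.values())
        if counts["sunburned"] >= sunburn_limit:
            actions.append((cell, "water"))
        if total and counts["ripe"] / total >= ripe_fraction:
            actions.append((cell, "harvest"))
    return actions


# Made-up detections: two ripe apples near the origin, sunburn further along.
detections = [
    (1.0, 2.0, "ripe"), (3.5, 4.0, "ripe"),
    (7.0, 1.0, "sunburned"), (8.0, 2.0, "sunburned"),
    (9.0, 3.0, "sunburned"), (6.0, 4.5, "unripe"),
]
print(recommend(build_grid(detections)))
```

Keeping the grid as a plain mapping from cell to counts makes it easy to hand the same data to a web application for display on a map.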

Secondary

- The interface of the system should be clear and intuitive.

- The system should be an improvement in terms of efficiency and production of the apple orchard.

Users

The main users that will benefit from this project are keepers of apple orchards, who will be able to divide tasks more efficiently. The farmers that work at the orchard also benefit from the application, since it will tell them where to go, such that they do not have to look for bad apples themselves.

State-of-the-art

Artificial neural networks and deep learning have been applied in the agricultural sector many times because of their advantages over traditional systems. The main benefit of neural networks is that they can predict and forecast on the basis of parallel reasoning. Instead of being programmed explicitly, neural networks can be trained [1]. Examples include differentiating weeds from crops [2], forecasting water resources variables [3] and predicting the nutrition level in crops [4].

It is difficult for humans to identify the exact type of disease that occurs on a fruit or plant. Image processing and machine learning techniques can therefore help to identify fruit diseases accurately [5]. Deep learning image recognition has been used to track the growth of mango fruit: a dataset containing pictures of diseased mangoes was created and fed to a neural network, and transfer learning was used to train a deep Convolutional Neural Network (CNN) to recognize diseases and abnormalities during the growth of the fruit [6][7][8].

Approach

Regarding the technical aspect of the project, the group can be divided into three subgroups: one responsible for the image processing/machine learning, one responsible for the graphical user interface and one responsible for configuring the hardware. In the meetings, the subgroups will explain the progress they have made, discuss the difficulties they have encountered and specify the requirements for the other subgroups.

The USE aspect of the project is about gathering information about the user base that might be interested in using this technology. This means that we will need to set up meetings with owners of apple orchards to get a clear picture of what tasks the technology should fulfill. Furthermore, we shall need to inspect if the project is feasible from a business perspective. For this we will need to make an accurate production-cost approximation of the project and an approximation for the sale price of the project.

Interview with fruit farmer Jos van Assche

We interviewed a fruit farmer to get good insight into what the user wants. His answers are needed to guide the design of our product. The interview can be seen below:

Question 1: How many square meters of apple trees do you have in the orchard and how many ripe apples do you harvest on average per tree?

Answer 1: We have 130000 square meters of apple trees. There is one tree on every 2.75 square meters, so in total there are about 47000 apple trees.

Question 2: Which apple diseases could you easily scan with the help of image recognizing?

Answer 2: Mildew (in Dutch: meeldauw) and damage by lice (luizenschade) are also useful to scan for. However, it is more useful to check where the most ripe and colored apples are, to see where we can start harvesting.

Question 3: How do you currently determine when a row of apple trees is ready to be harvested?

Answer 3: We check the ripeness of apples by cutting them in half and sprinkling them with iodine. When the black color of the iodine changes to white, there is enough sugar inside the apple and it is ready to harvest. Furthermore, we check whether the color of the apple is red enough, or we simply taste it. It is also possible to detect ripe apples with sound waves, which measure the hardness of the apple.

Question 4: How many sprinkler installations do you have and how do you determine where to place them?

Answer 4: We do not have fixed sprinkler installations; instead, we drive around with water when needed. It would be useful to determine exactly which trees need water.

Question 5: In what kind of program would you like to view the data of the orchard: In a mobile app, a website or a computer program?

Answer 5: An app would be useful, however it would also be handy to see the overview/positioning of the ripe/sick apples on a computer (in the tractor) to determine where you have to be.

Question 6: Imagine our product were available to buy. Would it be an addition to the current way of working? Which additions/improvements would you suggest to our concept?

Answer 6: It would be a nice addition to the current way of working. There is already a computer program on the market called Agromanager (8000 euros), developed by Laurens Tack, a farmer's son from Vrasene. This app makes administration easier for farmers. It tracks the position of the water spraying machine and indicates where to dose more. However, it is more of an administrative application.

Conclusions after interview

After the interview with farmer Jos van Assche, the following conclusions and suggestions were considered. First, the farmer thought it would be useful to have the application on both mobile phone and computer. That is why we chose to make a web application with .NET, which can be opened in a web browser on both computer and mobile phone. Second, the farmer remarked that our concept would be quite useful for determining where to spray his apples with water in order to prevent sunburn. He also suggested looking at mildew and damage from lice. These two "diseases" are important for the farmer as well, as explained in the diseases section below. However, our main focus will first be on ripe and sunburned apples, because the farmer confirmed that these were the main issues. Third, the farmer decides when to start harvesting based on the color of the apples and an iodine test. With our application, he no longer needs to check the color of the apples in the orchard himself; he only has to check the sugar level with iodine if necessary. Finally, Jos suggested that we look at an existing application called Agromanager. This is more of an administrative application, but it would be useful to look at it for the design of our application.

Diseases to check for

In this section, the "diseases" that could be detected with our Smart Orchard are discussed. For each of them, the following questions are answered: what the disease is, why it is important for the farmer to check for it, and what can be done about it once the Smart Orchard has noticed it. The following three could be traced by the robot: sunburned apples, mildew and damage by lice.

Sunburned Apples

Apples can get sunburned by the hot sun on warm summer days. The surface of the apple gets too hot when it hangs in the sun for too long. This leads to the death of cells at the surface, and the color of the apple changes to brown. The apple then starts to rot and is no longer suitable to harvest and sell. If this problem is solved, the farmer has a bigger harvest. The Smart Orchard scans the apples for this problem, and when it sees a sunburned apple, the farmer is notified. The farmer can then place shade nets or move his water spraying installations to the most critical parts of his orchard.

Mildew

Mildew is a mold that lives on the leaves of the apple tree. It looks like a white powder on the leaves and is therefore easy to scan for. Mildew is unwanted because it spreads fast and takes nutrients from the tree. As a result, infected trees stop growing, bear no apples and their leaves dry out. Eventually the tree dies. With the Smart Orchard, mildew can be spotted so that the farmer can take action to stop it. The farmer can spray the tree with water when it is dry, set out earwigs, which eat this mold, or prune the infected parts of the tree.

Damage by lice

Blood lice and other lice species are a bit harder to scan for. The blood louse leaves a white "woolly" kind of dust behind on the leaves of the tree. When the tree is seriously attacked by the louse, galls can form on the trunk or on branches. This can cause irregular growth and tree shape, especially in young plant material. These galls can also form an entry point for other diseases and molds, such as fruit tree canker. If the Smart Orchard can detect this woolly dust, the trees can be saved from these diseases. The farmer can set out ichneumon wasps ("sluipwespen") or earwigs, which eat these parasites, or use chemical pesticides to rid the tree of the lice.

Neural Network

Our neural network is an adaptation of the SSD MobileNet v2 quantized model, using images we labeled ourselves as training data. We use the SSD MobileNet v2 model because it is specifically made to run on less powerful devices, like a Raspberry Pi. The quantized model uses 8-bit instead of 32-bit numbers, further lowering the memory requirements.
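To illustrate why 8-bit numbers lower the memory requirements, the sketch below applies a generic affine quantization (a scale and a zero point) to a handful of made-up weights. It is not the quantization code used by TensorFlow or by our model; the function names and the example values are assumptions chosen for illustration.

```python
from array import array


def quantize(values):
    """Map floats to 8-bit integers with an affine (scale, zero point) scheme."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for constant input
    zero_point = round(-lo / scale)
    q = [max(0, min(255, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Recover approximate float values from the 8-bit representation."""
    return [(x - zero_point) * scale for x in q]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]  # made-up example weights
q, scale, zero_point = quantize(weights)
restored = dequantize(q, scale, zero_point)

# Storage per value: a 32-bit float versus an unsigned 8-bit integer.
float_bytes = array("f", weights).itemsize * len(weights)
int_bytes = array("B", q).itemsize * len(q)
print(float_bytes, int_bytes)
print(max(abs(a - b) for a, b in zip(weights, restored)))
```

The stored values take a quarter of the space, at the cost of a small rounding error per weight that is bounded by the scale.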

Approach to get a realistic and valid count of the apples from the Raspberry Pi

This section is still in progress; for now, all collected information is gathered here.

In this section, the processing of the raw data obtained by the Raspberry Pi is discussed. This processing is important to get a realistic and valid view of the counted apples, and to avoid double counting and "forgotten" hidden apples. This data processing method is based on two papers.

Single Shot Detector (SSD). Though the SSD paper was published only recently (Liu et al., [26]), we use the term SSD to refer broadly to architectures that use a single feed-forward convolutional network to directly predict classes and anchor offsets without requiring a second stage per-proposal classification operation (Figure 1a). Under this definition, the SSD meta-architecture has been explored in a number of precursors to [26]. Both Multibox and the Region Proposal Network (RPN) stage of Faster R-CNN [40, 31] use this approach to predict class-agnostic box proposals. [33, 29, 30, 9] use SSD-like architectures to predict final (1 of K) class labels. And Poirson et al., [28] extended this idea to predict boxes, classes and pose.

Faster R-CNN. In the Faster R-CNN setting, detection happens in two stages (Figure 1b). In the first stage, called the region proposal network (RPN), images are processed by a feature extractor (e.g., VGG-16), and features at some selected intermediate level (e.g., "conv5") are used to predict class-agnostic box proposals. The loss function for this first stage takes the form of Equation 1 using a grid of anchors tiled in space, scale and aspect ratio. In the second stage, these (typically 300) box proposals are used to crop features from the same intermediate feature map which are subsequently fed to the remainder of the feature extractor (e.g., "fc6" followed by "fc7") in order to predict a class and class-specific box refinement for each proposal. The loss function for this second stage box classifier also takes the form of Equation 1 using the proposals generated from the RPN as anchors. Notably, one does not crop proposals directly from the image and re-run crops through the feature extractor, which would be duplicated computation. However there is part of the computation that must be run once per region, and thus the running time depends on the number of regions proposed by the RPN. Since appearing in 2015, Faster R-CNN has been particularly influential, and has led to a number of follow-up works [2, 35, 34, 46, 13, 5, 19, 45, 24, 47] (including SSD and R-FCN). Notably, half of the submissions to the COCO object detection server as of November 2016 are reported to be based on the Faster R-CNN system in some way.

The neural network gives us a count per image frame, composed of the sum of all patch counts. However, this in itself does not directly correspond to yield. To provide yield data we need to integrate counts of the viewed trees over the whole dataset. To avoid double counting of fruits we need to track the individual regions. In this section we provide a validation method for our approach on the application of yield estimation. However, we only use this method for empirical validation of the counting approach, not as a solution to the yield estimation problem which integrates the components shown in Figure 2. In cluttered environments, such as apple orchards, the appearance of any given cluster will change drastically between views. It is possible that in some frames, the apples are partially occluded or not visible at all. For this reason, we need to merge the counts across frames.

In addition to tracking fruits between images we need to avoid double counting when scanning a tree row from the front and from the back. For our test setup we first reconstruct both the front and back side of the tree row using the Agisoft software package. We merge both reconstructions, using an algorithm described in a parallel submission [23]. We use the method of [3] to detect all single instances of fruits in both video sequences. All of these detections are back-projected into the 3D reconstruction to obtain fruit locations in 3D. Fig. 8 shows an example of the merged 3D reconstruction and Fig. 9 the back-projection of a detected cluster. A connected component analysis is performed in 3D to get all apple clusters. Finally, image patches are generated by projecting each component into the frames from which they are visible. After this step all correspondences among image clusters over each video sequence are defined. The proposed counting network and the GMM based method are used to generate per patch counts. From all corresponding patch counts we take the three maximum predictions and report the mean count. In a post-processing step, all apples lying on the ground are removed for the single side counts. To merge the counts from both sides the counts were summed up over all the connected components. The intersection areas among the components are computed and we add/subtract the weighted parts accordingly. Fig 7 shows the results. The accuracy of the proposed method is 96.97% in dataset 1 and 96.72% in dataset 2. The performance of the Gaussian Mixture Model based approach is 94.81% in dataset 1 and 91.97% in dataset 2.

The most common type of error is the one seen in Figures 10a, 10c, 10e and 10g. Here the image clearly contains multiple apples, but due to occlusions by other fruit or leaves only a small portion of it is visible in the image. In all of these cases the second fruit is too small to be picked up.

The error shown in Figure 10b shows brown and yellow ground apples in the original image. This image patch is further located in the shadow in the background of the image. The network can only pick out the two most significant features, with the rest being treated as image noise. This failure case is prevalent in test set 1, but does not occur in the others. This type of error can be avoided by excluding all apples on the ground during the detection phase. For the error case shown in Figure 10d even human labelers cannot give an accurate count with 100% certainty. The fruits form a dense cluster with little to distinguish between them. Our method finds two of the three fruits. The error shown in Figure 10f shows a ground truth label which has been annotated incorrectly. Even when taking special care during the labeling process these cases occurred often. However, by using synthetically rendered data this error could be eliminated. Lastly, we see an example of strong occlusion effects together with shadows in Figure 10h. The fruits are occluded by leaves which cuts one of them in two halves that are counted independently by the network.

Fig. 10 (a)-(h): Some example failure cases of our method

Concluding remarks. In this paper we addressed the problem of accurately counting fruits directly from images. We presented a solution that uses AlexNet CNN which we modified and fine-tuned on our own training data. We presented results which show that the method is more accurate than the previous, GMM based approach in three out of our four test data sets. Our multi-class classification approach achieves accuracies between 80% and 94% without the need of any pre- or postprocessing steps. The deep learning network presented in this paper generalizes across different fruit colors, occlusions and varying illumination conditions. The method was further evaluated on two rows to test its suitability for yield estimation. The fruits were counted from the front and backside of the tree row individually before merging them. Our approach achieved 96.7 and 96.9% accuracy with respect to ground truth obtained by counting harvested apples.
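The step in which the three maximum predictions per cluster are averaged and the cluster counts are summed can be sketched as follows. This is a simplified illustration only: the cluster identifiers and per-frame counts are made up, the function names are hypothetical, and the weighting of overlapping front and back components is left out.

```python
from statistics import mean


def cluster_count(patch_counts, top_k=3):
    """Mean of the top_k largest per-frame counts for one apple cluster.

    Taking the largest counts favors frames in which the cluster is least
    occluded. Assumes at least one frame shows the cluster.
    """
    best = sorted(patch_counts, reverse=True)[:top_k]
    return mean(best)


def row_count(clusters):
    """Sum the merged counts of all clusters seen from one side of a row."""
    return sum(cluster_count(counts) for counts in clusters.values())


# Made-up per-frame counts for three clusters (hypothetical identifiers).
clusters = {
    "cluster 1": [2, 3, 4, 1, 0],  # partly occluded in some frames
    "cluster 2": [1, 1],           # visible in only two frames
    "cluster 3": [5, 5, 5, 4],
}
print(row_count(clusters))
```

Averaging only the highest counts means that frames in which apples are hidden behind leaves pull the estimate down less than a plain average over all frames would.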

Planning:

Week 2

Create datasets

Preliminary design of the app

First version of the neural network

Research everything necessary for the wiki

Update the wiki

Week 3

Find more data

Coding of app

Improve accuracy of neural network

Edit wiki to stay up to date

Get information about the possible robot

Setup of Raspberry Pi

Week 4

Finish map of user interface

Go to an orchard for interview

Implement user wishes into the rest of the design

Week 5

Implement the highest priority functions of the user interface

Week 6

Reflect on application by doing user tests

Reflect on whether the application conforms to the USE aspects

Week 7

Implement user feedback in the application

Week 8

Finish things that took longer than expected

Milestones

There are five clear milestones that will mark a significant point in the progress.

- After week 2, the first version of the neural network will be finished. After this, it can be improved by altering the layers and importing more data to learn from.

- After week 4, we will have interviewed a possible end-user. This feedback will be invaluable in defining the features and priorities of the app.

- After week 5, the highest priority functions of the user interface will be present. This means that we can let other people test the app and give more feedback.

- After week 7, all of the feedback will be implemented, so we will have a complete end product to show at the presentations.

- After week 8, this wiki will be finished, which will show a complete overview of this project and its results.

Deliverables

- A system prototype for orchard farmers that is able to recognize ripe apples in a dataset that also contains unripe apples and apples with diseases.

- The wiki page containing all information regarding the project.

- A presentation about the product.

Logbook

| Week | All | Sven | Willem | Rick | Rik | Beau |
|---|---|---|---|---|---|---|
| 1 (3-2 / 9-2) | Wiki page | Neural network training database [8], more in-depth defining/researching of the subject [4], wiki [2] | Looking for web app frameworks [4], learning C# [6], HTML/CSS [2] | Designing neural network [10], wiki [6] | Neural network training database [10], references regarding neural network and fruit recognition [4], wiki [1] | Specifying objectives [2], sketching ideas of desktop application [8] |
| 2 (10-2 / 16-2) | Meeting/working with the whole group [8] | Searching for and ordering necessary Raspberry Pi components [1], interview with farmer/user [4], wiki [4] | C# [4], building NetCore web app [10] | Object detection with TensorFlow [8] | References regarding neural network and fruit counting [4], wiki [4] | Following a tutorial for web application [12] |
| 3 (17-2 / 23-2) | Meeting/working with the whole group [5] | Helping Rik make the animation [4], writing the conclusion part after interview [2], writing diseases part [4] | Database [1], logo [1], website [3], C# [3], Bootstrap 4 [3] | Setup Raspberry Pi [6], wiki [2] | Animation [14], wiki [2] | Following a tutorial for web application [12] |
| 4 (2-3 / 8-3) | | Working on neural network database (pictures) [6], researching data processing part of counting apples [8] | Configuring database to site, implementing account creation services, Google Maps API [16] | Working on neural network [20] | Training neural network database [6], wiki [1], animation [2] | Training neural network database [7], updating wiki [2], finding references [3] |
| 5 (9-3 / 15-3) | | | | | | |
| 6 (16-3 / 22-3) | | | | | | |
| 7 (23-3 / 29-3) | | | | | | |
| 8 (30-3 / 2-4) | | | | | | |

[ ] = number of hours spent on task

References

- [1] https://www.sciencedirect.com/science/article/pii/S2589721719300182

- [2] https://elibrary.asabe.org/abstract.asp?aid=7425

- [3] https://www.sciencedirect.com/science/article/pii/S1364815299000079

- [4] https://ieeexplore.ieee.org/abstract/document/1488826

- [5] http://www.ijirset.com/upload/2019/january/61_Surveying_NEW.pdf

- [6] https://www.ijrte.org/wp-content/uploads/papers/v8i3s3/C10301183S319.pdf

- [7] Rahnemoonfar, M. & Sheppard, C. 2017, "Deep count: Fruit counting based on deep simulated learning", Sensors (Switzerland), vol. 17, no. 4.

- [8] Chen, S.W., Shivakumar, S.S., Dcunha, S., Das, J., Okon, E., Qu, C., Taylor, C.J. & Kumar, V. 2017, "Counting Apples and Oranges with Deep Learning: A Data-Driven Approach", IEEE Robotics and Automation Letters, vol. 2, no. 2, pp. 781-788.