PRE2019 3 Group12: Difference between revisions

TUe\20169341 (talk | contribs) |

|||

| Line 94: | Line 94: | ||

===Neural Networks=== | ===Neural Networks=== | ||

===='''What is a neural network:'''==== | |||

Neural networks are a set of algorithms, modeled loosely after the human brain, that are designed to recognize patterns. They interpret sensory data through a kind of machine perception, labeling or clustering raw input. The patterns they recognize are numerical, contained in vectors, into which all real-world data, be it images, sound, text or time series, must be translated. | |||

Neural networks help us cluster and classify. You can think of them as a clustering and classification layer on top of the data you store and manage. They help to group unlabeled data according to similarities among the example inputs, and they classify data when they have a labeled dataset to train on. (Neural networks can also extract features that are fed to other algorithms for clustering and classification; so you can think of deep neural networks as components of larger machine-learning applications involving algorithms for reinforcement learning, classification and regression.) | |||

The most important applications, in our case are: | |||

===='''Classification'''==== | |||

All classification tasks depend upon labeled datasets; that is, humans must transfer their knowledge to the dataset in order for a neural network to learn the correlation between labels and data. This is known as supervised learning. | |||

Detect faces, identify people in images, recognize facial expressions (angry, joyful) | |||

Identify objects in images (stop signs, pedestrians, lane markers…) | |||

Recognize gestures in video | |||

Detect voices, identify speakers, transcribe speech to text, recognize sentiment in voices | |||

Classify text as spam (in emails), or fraudulent (in insurance claims); recognize sentiment in text (customer feedback) | |||

Any labels that humans can generate, any outcomes that you care about and which correlate to data, can be used to train a neural network. | |||

===='''Clustering'''==== | |||

Clustering or grouping is the detection of similarities. Deep learning does not require labels to detect similarities. Learning without labels is called unsupervised learning. Unlabeled data is the majority of data in the world. One law of machine learning is: the more data an algorithm can train on, the more accurate it will be. Therefore, unsupervised learning has the potential to produce highly accurate models. | |||

Search: Comparing documents, images or sounds to surface similar items. | |||

Anomaly detection: The flipside of detecting similarities is detecting anomalies, or unusual behavior. In many cases, unusual behavior correlates highly with things you want to detect and prevent, such as fraud. | |||

===='''Model:'''==== | |||

Here’s a diagram of what one node might look like. | |||

A node layer is a row of those neuron-like switches that turn on or off as the input is fed through the net. Each layer’s output is simultaneously the subsequent layer’s input, starting from an initial input layer receiving your data. | |||

===='''Convolutional Neural Network :''' ==== | |||

===='''What is CNN:''' ==== | |||

For computer vision and image recognition, the most used type of Neural Network is a Convolutional Neural Network | |||

A Convolutional Neural Network (ConvNet/CNN) is a Deep Learning algorithm which can take in an input image, assign importance (learnable weights and biases) to various aspects/objects in the image and be able to differentiate one from the other. The pre-processing required in a ConvNet is much lower as compared to other classification algorithms. While in primitive methods filters are hand-engineered, with enough training, ConvNets have the ability to learn these filters/characteristics. | |||

===='''Model:'''==== | |||

The current state of the art library for AI and machine learning is OpenCv. OpenCV (Open Source Computer Vision Library) is an open source computer vision and machine learning software library. OpenCV was built to provide a common infrastructure for computer vision applications and to accelerate the use of machine perception in the commercial products. Being a BSD-licensed product, OpenCV makes it easy for businesses to utilize and modify the code. | The current state of the art library for AI and machine learning is OpenCv. OpenCV (Open Source Computer Vision Library) is an open source computer vision and machine learning software library. OpenCV was built to provide a common infrastructure for computer vision applications and to accelerate the use of machine perception in the commercial products. Being a BSD-licensed product, OpenCV makes it easy for businesses to utilize and modify the code. | ||

Revision as of 19:58, 9 February 2020

Group Members

| Name | ID | Major | |

|---|---|---|---|

Subject

A real-time camera-based recognition device using capable of identifying vacant seats in areas with dynamic workplaces. These include areas like workspaces at Metaforum for studying, seats at a library or empty desks at flexible workplaces at a company. This information can be communicated to the users which will minimize the time and effort it takes to find a place to work.

Problem Statement

During examination weeks many student want to work and study at the workplaces in the MetaForum. However, during the day it often very busy and it might take a while to find a place to sit, costing effort and precious study time. If student knew the locations of vacant seats it would significantly reduce the amount of time it takes to find them and will also reduce the distance that needs to be walked, decreasing the noise for the people already studying. This same issue could arrive at various other places such as public libraries or flexible workspaces. An automatic method to determine the vacant workplaces would be ideal to deal with situations like this.

Objectives

- Library or other flexible workplaces

The system should be able to tell the user whether there is an empty space (or more) in the area and if so, it should also be able to tell the user where this place is located. Additionally, it can also tell whether a person has left the workplace earlier than the end of the reserved time so that the workplace can be set to "available" sooner.

- Room reservation

It should be able to detect whether there are people in the room and if there is no one in the room within certain time window, it should set the room to available for reservation.

Users

The users we plan to target with our project include multiple groups of people, since it can be applied in various environments. Possible users include students, employees making use of flexible work spaces or library visitors. Each of these users can benefit from the technology in two situations. The first is in larger study or working halls, comparable to Metaforum, for checking if there are available places. The second is in smaller study rooms, comparable to booking rooms in Atlas for cancelling reservations if the student doesn't show up or leaves earlier.

Approach

Here a number of possible approaches are described with their advantages and disadvantages. Our goal is to use the "camera-based recognition software approach", but if that fails, we have some other possible methods to achieve our goal.

Camera-based recognition software: The system uses a camera to detect empty workplaces. It can tell whether there is a person sitting at the workplace or if it’s free for other people. The camera will be installed in higher places to decrease the chance of other objects obstructing the view of the camera.

Advantages:

- Not only can it tell whether a person is sitting in a certain workplace or not, it can also tell whether a person is only taking a break or leaving the workplace for someone else to take it (by looking if the person has also taken his/her stuff with him/her)

- (Relatively) easy to install

- Limited amount is needed, since one camera (if placed right) can cover a big area, also reducing the overall costs

Disadvantages:

- Visual data of people will get captured, this means that people might feel that their privacy is violated.

- Developing an algorithm that can accurately conclude everything might become difficult

Echo location-based:

The system is based on the reflection of sound waves emitted by a sound source. The reflected sound will be different based on whether a person is sitting on the chair or not. The distance the sound has to travel is shorter if there is a person (taller than the chair) sitting on the chair.

Advantages:

- It does not capture any visual data which people might feel their privacy violated by.

- It can have quite a lot of range for one sensor, since it measures the differences between signals over time and always have something to compare.

Disadvantages:

- Other objects can deteriorate the signal if they’re close to the chair or person, making the measuring of the sound waves less accurate.

- It can only detect if there is someone in the chair, it cannot tell if the person leaves stuff on the desk indicating he or she is coming back or not.

Movement-based:

Movement based cameras can work on multiple premises, passive infrared (PIR), microwave, ultrasonic, tomographic motion detector, video camera software, gesture detector. Many of the current movement-based sensors have a combination of the two but they use the different methods to detect a movement. In general a movement based sensor can also be divided into zones which detect the movement in that zones separately.

Advantages:

- A person studying will generally move while writing, typing etc so it is easy to detect a person sitting in a chair or at a location. Which is good to detect quiet spaces.

- It can not capture any visual data which people might feel their privacy violated by depending on which detection is use.

Disadvantages:

- It can only detect movement, so if there is no movement in a certain zone it does not know if there is an actual chair at that place and it can only see there is no movement.

- A movement based sensor must have a lot of different zones for movement detection, if it is one big zone then any movement in that zone will render it as busy which makes it hard to detect empty chairs.

- Since chairs are not fixed in one location they can sit in between two zones which could render two zones as busy while 1 chair in one of those zones might still be empty which cannot be seen.

Thermographic-based / Infrared:

Thermographic-based detection focuses on the premise of the different temperatures between objects to distinguish them. Since humans, chairs, tables and floor have different temperatures a thermographic visual should be able to distinguish them based upon their temperatures.

Advantages:

- A thermographic based camera detects heat, so when a person is sitting on a chair it will detect a higher temperature which indicates that the chair is occupied, while a colder object is more likely to be a chair.

- Heat lingers when someone leaves a chair, the chair will have a higher temperature than normal since a person just sat on it and transferred heat onto it. This could be used as a sort of buffer to make sure that the chair is empty for a bit before stating it as empty.

- While visual data is collected, a thermographic image will make it hard to actually identify a person which will decrease the violation of privacy people might feel.

Disadvantages:

- There probably will be a difference in the base temperature of the chair due to the difference in the weather over the year and even in a single day. A chair next to a window where the sun is shining on will probably have a higher temperature than a chair in the shadow. In the summer, unless the *temperature is really well regulated, the area in the view of the camera will have a general higher temperature than in the winter, which could make it harder to detect differences. So a lot of factors have to be incorporated into the design to even the effect

- Normal consumer thermographic cameras have a small viewing angle, and work precisely relatively close. If you want to detect objects further away the preciseness of the camera goes down so it means you must install more cameras on lower ceilings to have it accurate and it can become quite expensive.

State-of-the-art

Neural Networks

What is a neural network:

Neural networks are a set of algorithms, modeled loosely after the human brain, that are designed to recognize patterns. They interpret sensory data through a kind of machine perception, labeling or clustering raw input. The patterns they recognize are numerical, contained in vectors, into which all real-world data, be it images, sound, text or time series, must be translated. Neural networks help us cluster and classify. You can think of them as a clustering and classification layer on top of the data you store and manage. They help to group unlabeled data according to similarities among the example inputs, and they classify data when they have a labeled dataset to train on. (Neural networks can also extract features that are fed to other algorithms for clustering and classification; so you can think of deep neural networks as components of larger machine-learning applications involving algorithms for reinforcement learning, classification and regression.) The most important applications, in our case are:

Classification

All classification tasks depend upon labeled datasets; that is, humans must transfer their knowledge to the dataset in order for a neural network to learn the correlation between labels and data. This is known as supervised learning. Detect faces, identify people in images, recognize facial expressions (angry, joyful) Identify objects in images (stop signs, pedestrians, lane markers…) Recognize gestures in video Detect voices, identify speakers, transcribe speech to text, recognize sentiment in voices Classify text as spam (in emails), or fraudulent (in insurance claims); recognize sentiment in text (customer feedback) Any labels that humans can generate, any outcomes that you care about and which correlate to data, can be used to train a neural network.

Clustering

Clustering or grouping is the detection of similarities. Deep learning does not require labels to detect similarities. Learning without labels is called unsupervised learning. Unlabeled data is the majority of data in the world. One law of machine learning is: the more data an algorithm can train on, the more accurate it will be. Therefore, unsupervised learning has the potential to produce highly accurate models. Search: Comparing documents, images or sounds to surface similar items. Anomaly detection: The flipside of detecting similarities is detecting anomalies, or unusual behavior. In many cases, unusual behavior correlates highly with things you want to detect and prevent, such as fraud.

Model:

Here’s a diagram of what one node might look like.

A node layer is a row of those neuron-like switches that turn on or off as the input is fed through the net. Each layer’s output is simultaneously the subsequent layer’s input, starting from an initial input layer receiving your data.

Convolutional Neural Network :

What is CNN:

For computer vision and image recognition, the most used type of Neural Network is a Convolutional Neural Network A Convolutional Neural Network (ConvNet/CNN) is a Deep Learning algorithm which can take in an input image, assign importance (learnable weights and biases) to various aspects/objects in the image and be able to differentiate one from the other. The pre-processing required in a ConvNet is much lower as compared to other classification algorithms. While in primitive methods filters are hand-engineered, with enough training, ConvNets have the ability to learn these filters/characteristics.

Model:

The current state of the art library for AI and machine learning is OpenCv. OpenCV (Open Source Computer Vision Library) is an open source computer vision and machine learning software library. OpenCV was built to provide a common infrastructure for computer vision applications and to accelerate the use of machine perception in the commercial products. Being a BSD-licensed product, OpenCV makes it easy for businesses to utilize and modify the code.

The library has more than 2500 optimized algorithms, which includes a comprehensive set of both classic and state-of-the-art computer vision and machine learning algorithms. These algorithms can be used to detect and recognize faces, identify objects, classify human actions in videos, track camera movements, track moving objects, extract 3D models of objects, produce 3D point clouds from stereo cameras, stitch images together to produce a high resolution image of an entire scene, find similar images from an image database, remove red eyes from images taken using flash, follow eye movements, recognize scenery and establish markers to overlay it with augmented reality, etc. OpenCV has more than 47 thousand people of user community and estimated number of downloads exceeding 18 million. The library is used extensively in companies, research groups and by governmental bodies.

From those the current state of the heart in terms of object detections is an algorithm called Cascade Mask R-CNN. A multi-stage object detection architecture, the Cascade R-CNN, is proposed to address these problems. It consists of a sequence of detectors trained with increasing IoU thresholds, to be sequentially more selective against close false positives. Comparing it to the last year state of art algorithm , Cascade Mask R-CNN has an overall 15% increase in performance, and a 25% performance increase regarding the state of art of 2016.

This method/apporach can be found in the following papers:

They use a convolutional Neural Network to detect land use from satellite images with an accuracy of around 98,6%. https://ieeexplore.ieee.org/abstract/document/7447728

Hybrid Task Cascade for Instance Segmentation[1]

CBNet: A Novel Composite Backbone Network Architecture for Object Detection. https://arxiv.org/pdf/1909.03625v1.pdf

Cascade R-CNN: High Quality Object Detection And Instance Segmentation. https://arxiv.org/pdf/1906.09756.pdf

This is a paper which describes the workings, differences and origin of multiple InfraRed cameras and techniques. [2]

Real-time image-based parking occupancy detection using deep learning. http://ceur-ws.org/Vol-2087/paper5.pdf

Real-time Detection of Seat Occupancy and Hogging (Similar project) https://ink.library.smu.edu.sg/cgi/viewcontent.cgi?article=4118&context=sis_research More sources with seat occupancy detection and face recognition https://ieeexplore.ieee.org/document/4745783 http://www.nlpr.ia.ac.cn/2012papers/gnhy/nh14.pdf https://www.isprs.org/proceedings/XXXIII/congress/part5/230_XXXIII-part5.pdf

An app uses movement detectors to see which rooms are free https://universitybusiness.com/app-helps-students-find-empty-study-space/

Parking Space Vacancy Detection

Similar systems have already been implemented in real-life situation for parking spaces for cars.[3] Many parking environments have implemented ways to identify vacant parking spaces in an area using various methods: Counter-based systems, which only provide information on the number of vacant spaces, sensor-based systems, which requires ultrasound, infrared light or magnetic-based sensors to be installed on each parking spot, and image or camera-based systems. These camera systems are the most cost-efficient method as it only requires a few cameras in order to function and many areas already have cameras installed. This system is however rarely implemented as it doesn't work too well in outdoor situations.

Researchers from the University of Melbourne have created a parking occupancy detection framework using a deep convolutional neural network (CNN) to detect outdoor parking spaces from images with an accuracy of up to 99.7%.[4] This shows the potential that image based systems using CNN's have in these types of tasks. A major difference that our project has from this research however, is the movement of the spaces. Parking spaces of vehicles always stay on the same position. A CNN is therefore able to focus on a specific part of the camera and focus on one parking space. In our situation the chairs can move around and aren't in a constant position. The neural network will therefore first have to find and identify all the chairs in its vision before it can detect whether it is vacant or not, an additional challenge we need to overcome.

Another research group have performed a similar research using deep learning in combination with a video system for real-time parking measurement.[5] Their method combines information across multiple image frames in a video sequence to remove noise and achieves higher accuracy than pure image-based methods.

Seat or Workspace Occupancy Detection

A group students from the Singapore Management University have tried to tackle a similar problem.[6] In their research they proposed a method using capacitance and infrared sensors to solve the problem of seat hogging. Using this they can accurately determine whether a seat is empty, occupied by a human, or the table is occupied by items. This method does require a sensor to be placed underneath each table and since in our situation the chairs move around, this method can't be used everywhere.

Autodesk research also proposed a method for detecting seat occupancy, only this time for a cubicle or an entire room.[7] They used decision trees in combination with several types of sensors to determine what sensor is the most effective. The individual feature which best distinguished presence from absence was the root mean square of a passive infrared motion sensor. It had an accuracy of 98.4% but using multiple sensors only made the result worse, probably due to overfitting. This method could be implemented for rooms around the university but not for individual chairs.

Planning

Milestones

For our project we have decided upon the following milestones each week:

- Week 1: Decide on a subject, make a planning and do research on existing similar products and technologies.

- Week 2: Finish preparation research on USE aspects and subject and have a clear idea of the possibilities for our projects.

- Week 3: Start writing code for our device and finish the design of our prototype.

- Week 4: Create the dataset of training images and buy the required items to build our device.

- Week 5: Have a working neural network and train it using our dataset.

- Week 6: Test our prototype in a staged setting and gather results and possible improvements.

- Week 7: Finish our prototype based on the test results and do one more final test.

- Week 8: Completely finish the Wiki page and the presentation on our project.

Deliverables

This project plans to provide the following deliverables:

- A Wiki page containing all our research and summaries of our project.

- A prototype of our proposed device.

- A presentation showing the results of our project.

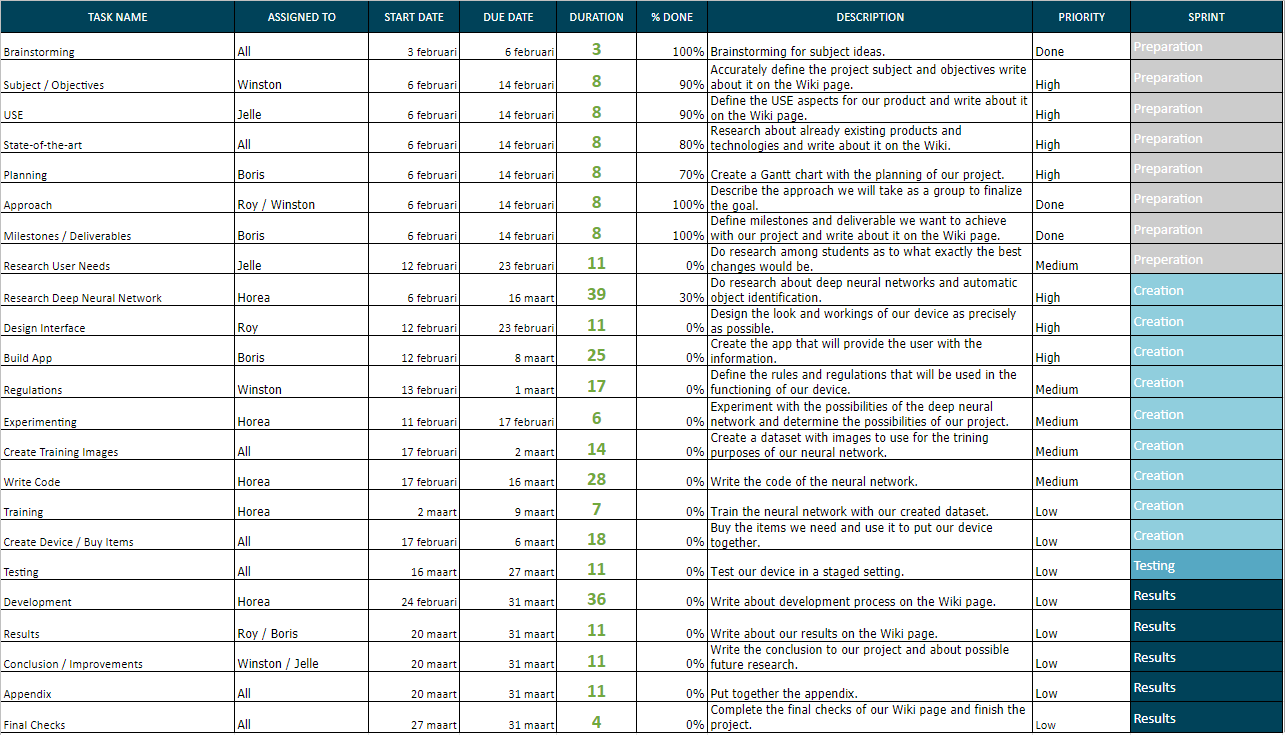

Schedule

Our current planning can be seen in the table below. This planning is not entirely finished and will be updated as the project goes on.

Logbook

Week 1

| Name | Activities (hours) | Total time spent |

|---|---|---|

| Boris | Brainstorming (1), Research Papers (3), Write Planning (2.5), Write State-of-the-art (3.5), Write Subject and Problem Statement (0.5), Other Wiki Writing (2) | 12.5 |

| Horea | Brainstorming (1), Look for relevant papers (2), Write subject, objectives, and approach (3),Clean up state of the art (1), clean up the references (1), Wiki writing (2) | 10 |

| Jelle | ... | 0 |

| Roy | ... | 0 |

| Winston | ... | 0 |

References

- ↑ Kai Chen et al.,http://openaccess.thecvf.com/content_CVPR_2019/papers/Chen_Hybrid_Task_Cascade_for_Instance_Segmentation_CVPR_2019_paper.pdf "Hybrid Task Cascade for Instance Segmentation"

- ↑ Carlo Corsi, "History highlights and future trends of infrared sensors", pages 1663-1686, April 2010

- ↑ Bong, D.B.L., "Integrated Approach in the Design of Car Park Occupancy Information System (COINS)", IAENG International Journal of Computer Science, 2008

- ↑ Acharya, Debaditya, "Real-time image-based parking occupancy detection using deep learning", CEUR Workshop Proceedings, 2018

- ↑ Cai, Bill Yang, "Deep Learning Based Video System for Accurate and Real-Time Parking Measurement", IEEE Internet of Things Journal, 2019

- ↑ Nguyen, Huy Hoang, "Real-time Detection of Seat Occupancy and Hogging", IoT-App, 2015

- ↑ Hailemariam, Ebenezer, "Real-Time Occupancy Detection using Decision Trees with Multiple Sensor Types", Autodesk Research, 2011