Embedded Motion Control 2012 Group 8: Difference between revisions


Revision as of 14:47, 25 June 2012

Group 8

Tutor: Sjoerd van den Dries

| Member |
|---|
| Alexandru Dinu |
| Thomas Theunisse |
| Alejandro Morales |
| Ambardeep Das |
| Mikolaj Kabacinski |

Progress

- Week 1

- Installed Ubuntu + ROS + Eclipse

- Working on the tutorials...

- Week 2

- Finished with the tutorials

- Try to find a solution for rviz crashing on one of the computers

- Have the first meeting with the tutor

- Discuss the possible strategies for resolving the maze

- Make a list of the names and structures of relevant topics that can be received from the simulated robot

- Try to practice a bit with subscribing to certain topics from the simulated robot and publish messages to make him navigate around the maze

- Progress

- resolved the issue with rviz

- did testing on the robot and we are now able to make him move, take turns, measure the distance in front of him and take decisions based on that

- made a node that subscribes to the scan topic and publishes on the /cmd_vel topic

- started testing code to include the odometry information in our strategy

- started thinking about the image recognition of the arrows

- connected to the svn server and successfully shared code

- Week 3

- Have second meeting with tutor on Wednesday (clear some questions about the maze and corridor contest)

- Redo the code based on last discussion (we came up with a new strategy for taking corners)

- Restructure the software's framework for ease of future improvements

- Try to use the messages from the camera with our arrow guiding system

- Progress week 3

- increased the modularity of the code

- we have a new approach to taking corners (there are still issues with the rotation transformation from the odometry, but they will be resolved in week 4)

- the robot still has issues with taking some corners, also to be resolved in week 4

- Week 4

- Have a final version of the navigational system

- Start work on the arrow recognition

- Prepare for next week's presentation of chapter 10

- Progress

- we've made a demo video of the robot going through the maze : http://www.youtube.com/watch?v=2SGSvnsFX9M&feature=youtu.be

- we have an algorithm in MATLAB for the arrow recognition; the code will be ported to C and tested locally

- Week 5

- Held the presentation for chapter 10

- Made the code compliant with the new simulator for the corridor

- tested on the robot on Friday, but it didn't work. On the first try, our node was sending the proper Twist messages on the /robot_in topic (they were visible with rostopic echo robot_in), but the robot didn't move. On the remaining attempts, the robot was no longer sending laser readings. Because our code waits for a synchronisation flag to be changed on the first arrival of a message, execution remained stuck in an endless while loop. We didn't find out whether a problem in our code made the robot unresponsive or whether it was simply a problem with the communication to and from the robot.

- We believe the robot will work on the real maze because :

- we did a lot of testing on the simulated corridor with different orientations and starting positions

- The decision making behind it is not based on any static points, so it will not be affected by a change in the laser readings or a change in the odometry readings (from simulator to robot)

- We will conduct a new test on Monday morning and it will work this time :)

- Updated the SVN to make it easy to use for testing. The previous time we experienced some problems when moving the code from one laptop to another, because until now we had used SVN only for fail-safe purposes, without having a proper package there.

- The strategy used is the following:

- Wait for the first reading from the laser scan before deciding what to do

- Check if it is inside the corridor or not. If not, continue advancing towards the corridor (without hitting the walls) until you have proper readings at +95 and -95 degrees.

- Once inside the corridor, check the relative position to the walls and if not parallel to them, rotate in the required direction until parallel.

- Next, go forward until a possible turn is detected and take it using our 3-step turning algorithm.
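The strategy above can be read as a small state machine. The following Python sketch only illustrates that reading and is not the group's ROS node: the state names, the `next_state` function and its boolean inputs are hypothetical, the 3-step turning algorithm is reduced to a single `TURN` state, and re-aligning after a turn is an assumption.

```python
from enum import Enum, auto


class State(Enum):
    WAIT_FOR_SCAN = auto()   # no laser reading received yet
    ENTER_CORRIDOR = auto()  # advance until both side readings are valid
    ALIGN = auto()           # rotate until parallel to the walls
    FORWARD = auto()         # drive ahead, watching for a possible turn
    TURN = auto()            # placeholder for the 3-step turning routine


def next_state(state, have_scan, inside_corridor, parallel, turn_possible, turn_done):
    """Return the next state from hypothetical boolean summaries of the sensors."""
    if state is State.WAIT_FOR_SCAN:
        return State.ENTER_CORRIDOR if have_scan else State.WAIT_FOR_SCAN
    if state is State.ENTER_CORRIDOR:
        return State.ALIGN if inside_corridor else State.ENTER_CORRIDOR
    if state is State.ALIGN:
        return State.FORWARD if parallel else State.ALIGN
    if state is State.FORWARD:
        return State.TURN if turn_possible else State.FORWARD
    if state is State.TURN:
        # Assumption: after finishing a turn the robot re-aligns with the walls.
        return State.ALIGN if turn_done else State.TURN
    raise ValueError(f"unknown state: {state}")
```

Keeping the transitions in one pure function makes it possible to check the decision logic without a simulator, since no laser or odometry messages are needed to call it.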

- Week 6

- tried the new version of the simulator -> didn't work with the first one; it showed really strange behavior at the end of the turning cycle. With the latest one, plus some modifications to the turning and going ahead, it seems to be working fine. We are still experiencing some problems with our algorithm in the simulator when starting at an angle or starting outside the maze. Because of the way the simulated robot turns, it sometimes takes a while to find the center-pointing position (in strange, rare cases it even goes into a loop, moving from left to right). We are now able to go through the maze.

- had the first hour with the robot -> unexpected problem with turning when really close to the wall. Jazz is not round, and when turning it touches the wall with its rear. We solved that (at least in the simulator) by changing how much the robot advances when taking a turn. Now it should stay farther from the walls. Because we didn't have a full hour of simulating, we only got a chance to try a couple of situations, but it looks promising.

- added some extra fail-safe measures to prevent the robot from going really close to the walls (may need some tuning)

- still working on the image processing. It works perfectly in the MATLAB code, but not in the C code (to be investigated for one more week, afterwards switch to OpenCV)

- experienced some strange behavior in the simulator when increasing the speed above 0.2 -> it receives messages with some kind of lag. It doesn't sense the turns immediately and initiates the turning procedure a bit late, leading to either crashing into the wall (fail-safe disabled) or taking a long time to recover (fail-safe enabled)

- Week 7

- To do :

- test on the robot again and in case something unexpected happens, bag everything :) (make a video to upload on wiki)

- take a decision about the image recognition (custom method or openCV)

- start making a full and detailed description of the strategy for the wiki.

- Week 8

- CONGRATULATIONS to TU/e team for winning in Mexico!!!!!!!!

- The testing from previous week was a success :

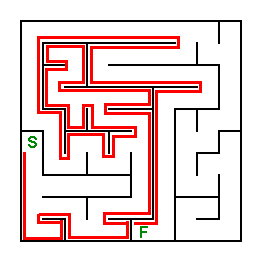

- First successful try : http://www.youtube.com/watch?v=cE1UBeHTDFI

- Robot with improvements going through the maze : http://www.youtube.com/watch?v=gCE-ouQsTF8

Algorithms and software implementation

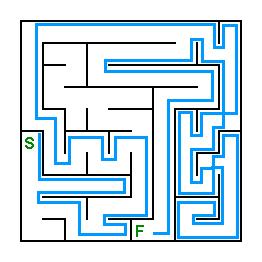

- Maze solving algorithms

- The wall follower (also known as the left-hand rule or the right-hand rule):

- If the maze is simply connected, that is, all its walls are connected together or to the maze’s outer boundary, then by keeping one hand in contact with one wall of the maze the player is guaranteed not to get lost and will reach a different exit if there is one; otherwise, it will return to the entrance. (Disjoint mazes can still be solved with the wall follower method, if the entrance and exit to the maze are on the outer walls of the maze. If however, the solver starts inside the maze, it might be on a section disjoint from the exit, and wall followers will continually go around their ring. The Pledge algorithm can solve this problem.)
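As a minimal sketch of the right-hand rule, the Python function below walks a grid maze while always preferring a right turn. The grid representation (`#` for walls), the function name `right_hand_walk` and the example maze are assumptions made for illustration; the real robot works from laser readings, not from a known grid.

```python
# Headings in clockwise order: north, east, south, west, as (row, col) steps.
DIRS = [(-1, 0), (0, 1), (1, 0), (0, -1)]


def right_hand_walk(grid, start, heading, goal, max_steps=10_000):
    """Follow the right-hand wall from start until goal is reached.

    grid: list of equal-length strings, '#' is a wall, anything else is free.
    heading: index into DIRS. Returns the list of visited cells, or None if
    the step budget runs out or the start cell is boxed in.
    """
    def free(r, c):
        return 0 <= r < len(grid) and 0 <= c < len(grid[0]) and grid[r][c] != "#"

    pos, path = start, [start]
    for _ in range(max_steps):
        if pos == goal:
            return path
        # Prefer turning right, then going straight, then left, then back.
        for turn in (1, 0, -1, 2):
            d = (heading + turn) % 4
            nxt = (pos[0] + DIRS[d][0], pos[1] + DIRS[d][1])
            if free(*nxt):
                heading, pos = d, nxt
                path.append(pos)
                break
        else:
            return None  # walls on all four sides
    return None


# Hypothetical simply connected maze: start at (1, 1), exit at (3, 4).
MAZE = [
    "######",
    "#..#.#",
    "#.##.#",
    "#....#",
    "######",
]
route = right_hand_walk(MAZE, start=(1, 1), heading=1, goal=(3, 4))
```

The step budget is there because, as noted above, a wall follower that starts on a section disjoint from the exit would otherwise circle forever.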

- Trémaux's algorithm

- Trémaux's algorithm is an efficient method to find the way out of a maze that requires drawing lines on the floor to mark a path, and is guaranteed to work for all mazes that have well-defined passages. A path is either unvisited, marked once or marked twice. Every time a direction is chosen it is marked by drawing a line on the floor (from junction to junction). When arriving at a junction that has not been visited before (no other marks), pick a random direction (and mark the path). When arriving at a marked junction, if the current path is marked only once, turn around and walk back (and mark the path a second time). If this is not the case, pick the direction with the fewest marks (and mark it). When the robot finally reaches the solution, paths marked exactly once will indicate a direct way back to the start.
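The marking rules can be tried out on a small graph. The sketch below is an illustration under assumptions, not the group's code: the maze is given as a hypothetical adjacency dictionary of junctions, marks are counted per passage in a dictionary instead of being drawn on the floor, and the name `tremaux` and its parameters are invented for the example.

```python
import random
from collections import defaultdict


def tremaux(graph, start, goal, seed=0, max_steps=10_000):
    """Walk from start to goal marking passages; return the once-marked path.

    graph: dict mapping each junction to a list of neighbouring junctions
    (at most one passage per junction pair). Returns None if no path is found.
    """
    rng = random.Random(seed)
    marks = defaultdict(int)  # number of marks on each undirected passage

    def edge(a, b):
        return frozenset((a, b))

    node, prev = start, None
    for _ in range(max_steps):
        if node == goal:
            break
        others = [n for n in graph[node] if n != prev]
        seen_before = any(marks[edge(node, n)] > 0 for n in others)
        if prev is not None and seen_before and marks[edge(node, prev)] == 1:
            # Known junction reached over a passage marked once: turn around.
            nxt = prev
        else:
            # Never enter a passage marked twice; otherwise take the fewest marks.
            open_ways = [n for n in graph[node] if marks[edge(node, n)] < 2]
            if not open_ways:
                return None  # everything explored, goal unreachable
            fewest = min(marks[edge(node, n)] for n in open_ways)
            nxt = rng.choice([n for n in open_ways if marks[edge(node, n)] == fewest])
        marks[edge(node, nxt)] += 1
        node, prev = nxt, node
    else:
        return None  # step budget exhausted

    # Passages marked exactly once form the direct route between start and goal.
    path, came = [start], None
    while path[-1] != goal:
        cur = path[-1]
        nxt = next(n for n in graph[cur] if n != came and marks[edge(cur, n)] == 1)
        path.append(nxt)
        came = cur
    return path
```

For example, with junctions `A` to `G` where `B`, `C`, `D` form a loop and `G` is a dead end, `tremaux(graph, "A", "F")` returns a loop-free route from `A` to `F`; which branch of the loop it uses depends on the random choices.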


- Relevant topics that can be received by the robot

- laser data provided by the laser scanner, will be used for wall detection;

- images captured by the monocular camera, will be used for detecting arrows;

- odometry provided by the base controller.


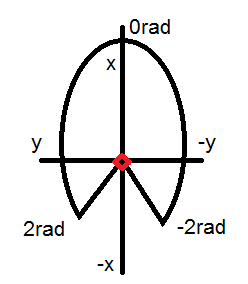

- Image processing

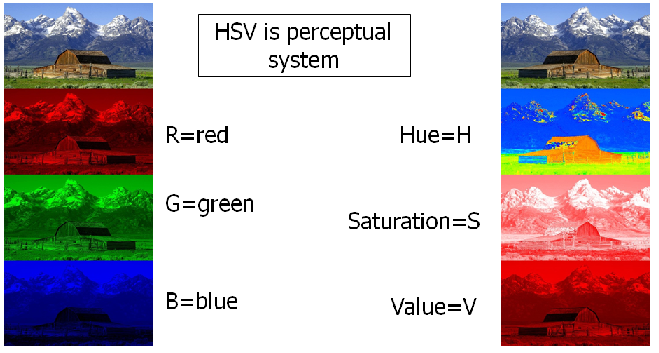

- The Jazz robot contains a monocular camera, which will be used to detect arrows so that the exit of the maze can be found faster. The camera captures images in the RGB (red, green, blue) color system. It is better to use HSV than RGB, however, because a single color can be selected across different illuminations by a hue (angle) range and a saturation range.
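To illustrate why a hue and saturation range is convenient, the sketch below classifies a pixel with Python's standard `colorsys` module. Red is chosen only as an example color, and the function name `is_red` and the thresholds `hue_tol` and `min_sat` are illustrative assumptions, not values tuned for the Jazz camera.

```python
import colorsys


def is_red(rgb, hue_tol=0.05, min_sat=0.5):
    """Return True if an 8-bit (r, g, b) pixel falls inside a red hue band.

    hue_tol and min_sat are illustrative thresholds on the 0..1 scales
    used by colorsys, not calibrated values.
    """
    r, g, b = (c / 255.0 for c in rgb)
    h, s, _v = colorsys.rgb_to_hsv(r, g, b)
    hue_dist = min(h, 1.0 - h)  # hue is circular; red sits at 0 (and 1)
    return hue_dist <= hue_tol and s >= min_sat


bright = is_red((255, 0, 0))    # fully lit red
dark = is_red((120, 0, 0))      # same hue, lower brightness
grey = is_red((128, 128, 128))  # no saturation, so not a color match
```

The brightness component is ignored on purpose, so the bright and the dark red pixel are both accepted, while an RGB threshold would need separate ranges for each. Real illumination changes also shift hue and saturation somewhat, so the tolerances would need tuning on camera images.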

- Algorithm

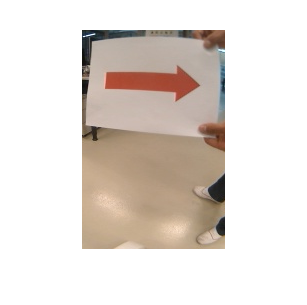

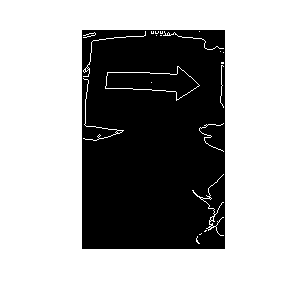

- The image processing algorithm uses the contrast between the arrow and its background as a starting point to transform the image into a set of white shapes over a black background. Then the edges of these white shapes are found and analyzed. The analysis consists of locating the closed contours and relating the points that form them to their centroid to obtain a direction criterion.

- First, the image is converted from RGB format to grayscale format,

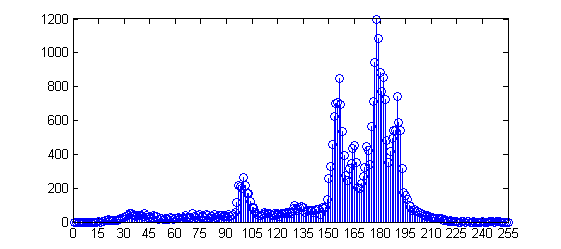

- The histogram of this grayscale image is determined,

- Using Otsu’s method a threshold is found to transform the grayscale image into a binary image,
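The first three steps (grayscale conversion, histogram, Otsu threshold) can be sketched in plain Python as follows. This is not the group's MATLAB or C code: the function names are hypothetical, the image is assumed to be a flat list of 8-bit pixels, the grayscale weights are the common ITU-R BT.601 luma coefficients, and treating pixels above the threshold as white is an assumption about the arrow's polarity.

```python
def to_gray(rgb):
    """Convert one 8-bit (r, g, b) pixel to a gray level (BT.601 weights)."""
    r, g, b = rgb
    return int(round(0.299 * r + 0.587 * g + 0.114 * b))


def histogram(gray_pixels):
    """Count how many pixels have each of the 256 gray levels."""
    hist = [0] * 256
    for v in gray_pixels:
        hist[v] += 1
    return hist


def otsu_threshold(hist):
    """Return the gray level that maximizes the between-class variance."""
    total = sum(hist)
    sum_all = sum(i * h for i, h in enumerate(hist))
    w_bg, sum_bg = 0, 0
    best_t, best_var = 0, -1.0
    for t in range(256):
        w_bg += hist[t]          # pixels with level <= t (background class)
        sum_bg += t * hist[t]
        if w_bg == 0:
            continue
        w_fg = total - w_bg      # pixels with level > t (foreground class)
        if w_fg == 0:
            break
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        var_between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


def binarize(gray_pixels, threshold):
    """Map pixels above the threshold to 1 (white) and the rest to 0 (black)."""
    return [1 if v > threshold else 0 for v in gray_pixels]
```

A typical use would be `gray = [to_gray(p) for p in pixels]`, followed by `t = otsu_threshold(histogram(gray))` and `binary = binarize(gray, t)`.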

- Then a simple edge algorithm is used. The edge image is mapped to find closed contours, for which the centroid is calculated,

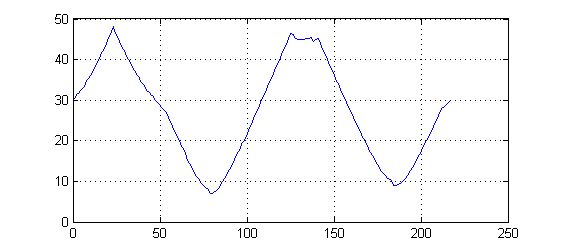

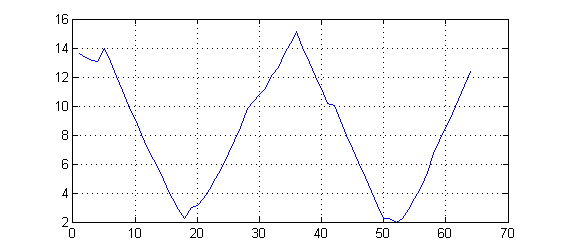

- The distance from the centroid to each point of the contour is calculated resulting in the next curve,

- The relation between the maximum value and the direction used to calculate the distances is used as the criterion to define the direction of the arrow.
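The centroid-distance step can be sketched as below. The sketch only computes the distance curve and reports where its maximum lies relative to the centroid. How that maps to the arrow direction is not specified here, and whether the farthest point is the tip or the tail depends on the arrow's proportions and on how densely the contour is sampled, so that mapping is left open. The function names and the example contour are hypothetical, and the centroid is taken as the mean of the contour points.

```python
import math


def centroid(points):
    """Mean of the contour points, used here as the contour's centroid."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def distance_curve(points):
    """Distance from the centroid to every contour point, in contour order."""
    cx, cy = centroid(points)
    return [math.hypot(x - cx, y - cy) for x, y in points]


def farthest_direction(points):
    """Return (side, angle) of the contour point farthest from the centroid.

    side is 'left' or 'right' of the centroid; angle is in radians from
    math.atan2(dy, dx), with y growing downwards as in image coordinates.
    """
    cx, cy = centroid(points)
    dists = distance_curve(points)
    x, y = points[dists.index(max(dists))]
    side = "left" if x < cx else "right"
    return side, math.atan2(y - cy, x - cx)


# Hypothetical 7-point arrow outline whose tip is at (10, 0).
ARROW = [(0, -1), (6, -1), (6, -3), (10, 0), (6, 3), (6, 1), (0, 1)]
side, angle = farthest_direction(ARROW)
```

For this particular outline the tip is the farthest point, so `side` is `'right'`; mirroring the outline horizontally flips the result.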

- An example of an arrow pointing in the opposite direction is depicted below,