PRE2015 4 Groep2


Revision as of 20:14, 5 June 2016

We are developing a neural network to determine fruit ripeness. The robot will initially be developed for quality control of strawberries. Because building a complete prototype in nine weeks is probably not feasible, we focus first on detection and sensing. To that end we will develop a system that scans fruits and determines their ripeness. It can also consider other factors, for example whether the fruit looks appealing.

Group 2 members

- Cameron Weibel (0883114)

- Maarten Visscher (0888263)

- Raomi van Rozendaal (0842742)

- Birgit van der Stigchel (0855323)

- Mark de Jong (0896731)

- Yannick Augustijn (0856560)

Project description

The goal is a robot that can classify fruits based on their ripeness and appeal. The fruits are detected either while on a conveyor belt or in the field.

Add problem description

Requirements

Functional requirements

- The robot should be able to detect fruit using a Kinect camera.

- It should be able to classify the ripeness of the fruit using a convolutional neural network.

- The robot will query an online database about the ripeness of a given fruit, and the database will return the ripeness percentile of that fruit, based on image sets of different fruits.

- The farmer should be able to take pictures of overripe/underripe fruit and add them to the training set as feedback to improve the robot.

- The farmer should be able to access the database, as well as various harvesting metrics, through a mobile device.
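The requirement that the database returns a ripeness percentile can be illustrated with a small percentile-rank lookup. This is a minimal sketch under assumptions. It assumes the database stores one numeric ripeness score per reference image of the same fruit type. The names `ripeness_percentile` and `reference_scores` are hypothetical. The function returns the share of reference fruits whose score is at or below the queried score.

```python
from bisect import bisect_right


def ripeness_percentile(score: float, reference_scores: list[float]) -> float:
    """Return the percentage of reference scores that are <= score.

    Hypothetical helper: assumes the database keeps one ripeness score
    (e.g. a network output in [0, 1]) per reference image of one fruit type.
    """
    if not reference_scores:
        raise ValueError("reference set is empty")
    ordered = sorted(reference_scores)
    # bisect_right counts how many reference scores are <= the query score
    rank = bisect_right(ordered, score)
    return 100.0 * rank / len(ordered)


print(ripeness_percentile(0.6, [0.1, 0.3, 0.5, 0.7, 0.9]))  # 60.0
```

A sorted list with binary search keeps each lookup cheap. In a real database the sorted scores would be cached rather than re-sorted per query.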

Non-functional requirements

- It should be relatively simple to add the Kinect+Raspberry Pi to an existing harvesting system.

- The farmer should be able to use the system with minimal prior knowledge

- The robot should perform better than a human quality controller

- The robot should have all safety features necessary to prevent critical failures.

USE aspects

User

Primary users are farmers and their workers, who directly use the robot. The following aspects hold:

- Their work becomes far less intensive and heavy. Instead of harvesting directly, farmers can let the robot do the work. They would only occasionally need to check the harvest and possibly adjust some parameters. This work is less heavy than harvesting, so fewer health problems caused by heavy work can be expected.

- More free time for other things, because the new work takes far less time. Also, there is no longer a need to train seasonal workers.

Secondary users are distributors that pick up the fruits from the farms. They use the robot occasionally, when they collect the fruits picked by the robot. Their work is mostly unaffected, but depending on how advanced the robot is, some parts of it can be left to the robot. One example is selecting fruits based on ripeness and appeal. The robot could also directly package and seal the fruits.

A tertiary user is the company that is developing and maintaining this robot. It indirectly uses the robot during development.

Another tertiary user, which arguably also falls under the societal aspects, is the harvesting worker. A worker who harvests fruit manually does not come into direct contact with the robot. The robot does, however, affect these workers, as it takes away their jobs. This aspect should be researched further. These harvesting workers are likely people with a low level of education and students wanting to earn some extra money. People with a low level of education can be expected to have a hard time finding a new job.

Society

There are several problems to which our solution can relate, such as:

- There will not be enough food in the near future for all the people

- There is an increasing shortage of workers due to aging

- Decrease in wage gap due to overabundance of food

- Post scarcity

Facts supporting these statements are:

The world population is predicted to grow from 6.9 billion in 2010 to 8.3 billion in 2030 and to 9.1 billion in 2050. By 2030, food demand is predicted to increase by 50% (70% by 2050). The main challenge facing the agricultural sector is not so much growing 70% more food in 40 years, but making 70% more food available on the plate.

Roughly 30% of the food produced worldwide – about 1.3 billion tons - is lost or wasted every year, which means that the water used to produce it is also wasted. Agricultural products move along extensive value chains and pass through many hands – farmers, transporters, store keepers, food processors, shopkeepers and consumers – as it travels from field to fork.

In 2008, the surge of food prices has driven 110 million people into poverty and added 44 million more to the undernourished. 925 million people go hungry because they cannot afford to pay for it. In developing countries, rising food prices form a major threat to food security, particularly because people spend 50-80% of their income on food.

Cited from: http://www.un.org/waterforlifedecade/food_security.shtml

[1] Figure: Evolution of the average diet composition of a human

[2] Figure: Places with space and conditions appropriate for food production

Graphs’ source: http://www.fao.org/fileadmin/templates/wsfs/docs/expert_paper/How_to_Feed_the_World_in_2050.pdf

Based on the above, our project can address several calls listed in the Horizon 2020 Work Programme 2016-2017. Some would require a slight shift of focus. They could also be addressed after our project is completed, since others can build on our knowledge to solve such issues in a similar way. These include:

- SFS-05-2017: Robotics Advances for Precision Farming

- SFS-26-2016: Legumes - transition paths to sustainable legume-based farming systems and agri-feed and food chains

- SFS-34-2017: Innovative agri-food chains: unlocking the potential for competitiveness and sustainability

Our solution helps because integrating the robots’ operating system into the distributors’ systems means less food will be wasted. More food will therefore be available for others, for instance when the system is developed so that distribution can reach further. Additionally, we can help reduce poverty, or limit the number of people driven into poverty, because robots can reduce prices significantly in the medium to long term (plausibly before 2050).

The procedure we use to make computer vision combined with machine learning identify strawberries in the field can be reused to make (semi-)automatic production of legumes easier, thus addressing the SFS-26-2016 call. Our solution also meets the expected impact mentioned in SFS-05-2017, namely: ‘increase in the safety, reliability and manageability of agricultural technology, reducing excessive human burden for laborious tasks’. It does so because our robotic solution aims at reducing the number of people needed at a farm site. Finally, it specifically meets one of the expected impacts of the SFS-34-2017 call, which says solutions should ‘enhance transparency, information flow and management capacity’. This is what the system behind the robot is intended to achieve.

Enterprise

Image sets

We are creating image sets of ripe and unripe strawberries using the format presented here:

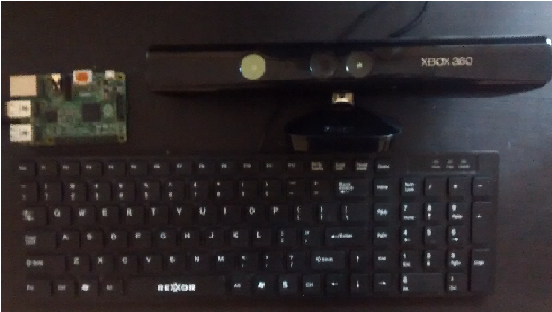

All images are 64x64 pixels, twice the height and width of the CIFAR-10 images (32x32), because we will use fewer but higher-quality pictures. The images will be converted into a binary format and split into training sets. They were taken with a Kinect camera attached to a Raspberry Pi. Uniform lighting conditions were used to match those of a quality control facility.
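The conversion to a binary format can be sketched by following the CIFAR-10 binary layout. In that layout, each record is one label byte followed by the red, green and blue planes, each stored row by row. This is a minimal sketch under assumptions. It assumes pixels are given as rows of (r, g, b) tuples with values 0-255 and an 80/20 train/test split. It also assumes labels such as 0 = unripe and 1 = ripe. The function names are hypothetical.

```python
import random

SIZE = 64  # image width and height in pixels (our 64x64 images)


def encode_record(label: int, pixels: list[list[tuple[int, int, int]]]) -> bytes:
    """Encode one image as a CIFAR-10-style record: 1 label byte, then the
    red, green and blue planes, each stored row by row."""
    if not 0 <= label <= 255:
        raise ValueError("label must fit in one byte")
    if len(pixels) != SIZE or any(len(row) != SIZE for row in pixels):
        raise ValueError(f"image must be {SIZE}x{SIZE}")
    out = bytearray([label])
    for channel in range(3):  # 0 = red, 1 = green, 2 = blue
        for row in pixels:
            out.extend(px[channel] for px in row)
    return bytes(out)


def split_records(records: list[bytes], train_fraction: float = 0.8, seed: int = 0):
    """Shuffle records reproducibly and join them into a training and a test blob."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return b"".join(shuffled[:cut]), b"".join(shuffled[cut:])
```

Each 64x64 record is 1 + 3 × 4096 = 12,289 bytes, compared with 3,073 bytes for a 32x32 CIFAR-10 record. A reader can therefore slice the files into fixed-size chunks.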

The following setup is being used for taking/uploading images:

Conversation with Cucumber Farmer

Trip to PicknPack workshop at Wageningen University

One of our mentors pointed us to the PicknPack project. This project researches solutions for easy packaging of food products. The goal of the research is to provide knowledge on possible solutions for picking food from (harvest) bins and packing it, while also performing quality control and adding traceability information. To that end they built a prototype industrial conveyor system with machines that provide this functionality. The system is designed to be flexible, so that the type or category of processed food can easily be changed. While looking into the project, we saw an announcement of a workshop where they planned to show the prototype and give information. The timing suited us and the content was interesting, so three of us (Mark, Cameron, Maarten) decided to attend. We went to the workshop at Wageningen University on May 26.

The quality assessment module is the most interesting for our project, and we were pleasantly surprised to learn that their approach used components that we were planning to use ourselves. In the quality assessment module, they set up an RGB camera, a hyperspectral camera, a 3D camera and a microwave sensor. The RGB camera is used for detecting ripeness, using a simple algorithm. Weight is estimated using the 3D camera. Features they measure with the hyperspectral camera are sugar content, raw/cooked state (for meat products) and chlorophyll. The microwave sensor is used for freshness and water content, among other things.
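We do not know which simple algorithm PicknPack used on its RGB images. Purely as a hypothetical illustration of what a simple RGB-based ripeness rule can look like, and not a description of their method, the sketch below labels a strawberry ripe when a large enough fraction of its pixels is clearly red. The `margin` and `threshold` values are arbitrary assumptions that would need tuning on real images.

```python
def red_fraction(pixels, margin=40):
    """Fraction of pixels whose red channel exceeds both green and blue by at
    least `margin` (pixels are rows of (r, g, b) tuples, values 0-255)."""
    total = 0
    red = 0
    for row in pixels:
        for r, g, b in row:
            total += 1
            if r - max(g, b) >= margin:
                red += 1
    return red / total if total else 0.0


def is_ripe(pixels, threshold=0.6, margin=40):
    """Hypothetical rule: ripe when at least `threshold` of the pixels are red."""
    return red_fraction(pixels, margin) >= threshold
```

A hand-tuned rule like this is transparent, which matches the concerns PicknPack raised about neural networks. It is, however, sensitive to lighting, which is one reason we use uniform lighting for our own images.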

TODO continue from here.

They chose not to use a neural network; a PhD student mentioned its black-box nature (you do not know exactly what happens inside) and overfitting as potential problems.

A major focus was transparency: every single product is traceable, and all information about it can be found in a large database that can be accessed with a QR code. It is also mandatory to be able to trace the product one step backward and one step forward in the supply chain. This is done through an RFID chip on the package.
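The "one step back, one step forward" traceability rule can be modeled with a very small data structure. This is a minimal sketch under assumptions. It assumes each package is identified by the ID stored on its RFID chip, and an in-memory dictionary stands in for the large database. All class and field names are hypothetical and not taken from PicknPack.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TraceRecord:
    """One package, keyed by the (hypothetical) ID read from its RFID chip."""
    package_id: str
    previous_party: str                        # one step back, e.g. the farm
    next_party: Optional[str] = None           # one step forward, set when shipped
    info: dict = field(default_factory=dict)   # product data behind the QR code


class TraceDatabase:
    """Hypothetical in-memory stand-in for the traceability database."""

    def __init__(self) -> None:
        self._records: dict[str, TraceRecord] = {}

    def register(self, package_id: str, previous_party: str, **info) -> None:
        self._records[package_id] = TraceRecord(package_id, previous_party, info=info)

    def ship(self, package_id: str, next_party: str) -> None:
        self._records[package_id].next_party = next_party

    def trace(self, package_id: str) -> tuple[str, Optional[str]]:
        """Return (one step back, one step forward) for a package."""
        rec = self._records[package_id]
        return rec.previous_party, rec.next_party
```

Each party only needs to record its direct neighbours. Chaining these records across parties gives full traceability without anyone holding the whole chain.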

There was an app that allowed the operator of the machine to fine-tune the algorithm. The program runs several differently tuned versions of the algorithm and lets the user select the best result. The machine then runs the selected algorithm on a few different products (e.g. tomatoes). If everything is okay, it adopts that algorithm; if not, the operator can go back to the first step. This is not really applicable to our solution, though. We are using a neural network rather than a statistical model, so we will probably have to rely on the shared database idea for adding more pictures and tuning.
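The tuning workflow of that app can be summarized as a loop. The sketch below is our own reconstruction under assumptions, not PicknPack's code. The function and parameter names are hypothetical. `choose` and `approve` stand in for the operator's decisions in the app, and `max_rounds` is an arbitrary limit we added.

```python
from typing import Callable, Optional, Sequence


def tune_interactively(
    candidates: Sequence[dict],
    run: Callable[[dict, object], object],
    sample: object,
    validation_products: Sequence[object],
    choose: Callable[[list], int],
    approve: Callable[[list], bool],
    max_rounds: int = 3,
) -> Optional[dict]:
    """Hypothetical operator-in-the-loop tuning: run several differently tuned
    versions, let the operator pick one, validate it on a few other products,
    and start over if the operator rejects the result."""
    for _ in range(max_rounds):
        # Step 1: run every candidate parameter set on the same sample
        results = [run(params, sample) for params in candidates]
        chosen = candidates[choose(results)]
        # Step 2: run the chosen parameters on a few different products
        checks = [run(chosen, product) for product in validation_products]
        # Step 3: keep the parameters if the operator approves, else retry
        if approve(checks):
            return chosen
    return None
```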

Cleanliness of the machine was also very important: they had a separate cleaning robot that could reach every part of the machine. We are not sure how important this is for us.

Some enterprise needs they mentioned are the growing market for personalized fresh food ordered online, more demand for food overall and the need to utilise advances in technology.

A collection of our own pictures can be found via this link.

Visit to Kwadendamme Farm

Overview

We learned a great deal from the Steijn farmers in Kwadendamme. These farmers grow Conference pears and Elstar apples, and they process over 900,000 kg of fruit every year across 15 hectares of land. As seen in the picture above, the trees are kept at a relatively low height (around 2 meters) and they are aligned neatly in even rows.

Farmer Problems

The main priority of the farmer is to maximize his revenue. In our conversations with Farmer Rene, he mentioned that labor was the biggest drain on their bottom line. The hourly cost of a Romanian/Polish harvester is 16 euros (including tax, healthcare and other employee benefits), of which only 7 euros goes directly to the worker. The farmers only hire farmhands during the 3-4 weeks of the year when they harvest their apples and pears. For the rest of the year, most of their work goes into pruning, fertilizing and maintaining the plants.
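The wage figures above imply the following split per hour worked. This is a simple worked calculation based only on the numbers the farmers gave. The 9 euros covers tax, healthcare and other employee benefits.

```latex
\begin{aligned}
\text{non-wage cost per hour} &= 16 - 7 = 9 \text{ euros},\\
\text{share paid to the worker} &= \frac{7}{16} = 43.75\% \approx 43.8\%.
\end{aligned}
```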

Another way for the farmer to improve his bottom line is to drive top-line growth. If the farmer can grow plants that yield 20% more fruit, this translates into a roughly proportional revenue increase. The farmers mentioned that a robotic system that continually keeps track of how much produce each row of trees (or each individual tree, if such accuracy is attainable) is bearing would be valuable to them. We discussed the concept of a density map of their land, and the idea resonated with them. At this point we decided to design a plan for a high-tech farm. In that plan, our image processing system serves as a proof of concept for one of the many technologies implemented across the farm. This allows us to take a broader perspective while still creating a technically viable solution.
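A density map like the one discussed with the farmers can be built by counting fruit detections per position. This is a minimal sketch under assumptions. It assumes the vision system reports one (row, tree) pair per detected fruit, with `None` as the tree index when only row-level accuracy is attainable. The 80% "below average" cut-off and the function names are hypothetical.

```python
from collections import Counter


def density_map(detections):
    """Count detected fruits per (row, tree) position.

    `detections` holds one (row, tree) pair per detected fruit; use None as
    the tree index when only row-level accuracy is attainable."""
    return Counter(detections)


def per_row_totals(counts):
    """Sum the per-position counts into one total per row."""
    totals = Counter()
    for (row, _tree), n in counts.items():
        totals[row] += n
    return dict(totals)


def below_average_rows(totals, fraction=0.8):
    """Rows bearing less than `fraction` times the average row count."""
    if not totals:
        return []
    mean = sum(totals.values()) / len(totals)
    return sorted(row for row, n in totals.items() if n < fraction * mean)
```

Rows flagged this way are where pruning or fertilizing effort could be focused. Whether that pays off is for the farmer to judge.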

Usefulness of Our System

The farmers recognized our system as being a necessary innovation within the quality assurance side of the produce supply chain, and they would benefit from a fruit-counting robot utilizing our machine vision software. The proof of concept we will demonstrate at the end of the year is an example implementation of our machine vision system as it applies to quality assurance, but from the farmers' comments it is clear there is plenty of room for innovation in computer vision within the agricultural field.

We also asked the farmers how they felt about certain technologies we saw at Delft, such as the 3D X-ray scanners for detecting the internal quality of fruit. Again, they said that such technologies have their place in quality assurance but are not useful to them. Proving that an apple that looks good on the outside has internal defects only reduces the number of apples they can sell to the intermediary trader between them and Albert Heijn.

App

Idea behind the app

One of the requirements of the system was for the user/farmer to be able to add pictures of unripe and ripe fruit to the database in order to improve the system. This was chosen because we hypothesized that more pictures of fruit in different classifications, taken under different lighting and other circumstances, would result in a better and faster neural network. That, in turn, would improve the overall performance of the system. Furthermore, we wanted to give the farmer an extra way to gain insight into his/her farm and the system in use. Examples include how much fruit was picked, the overall quality of this fruit, the temperature, and ways to connect to the farm's CCTV system and keep an eye on everything.

We chose an app because we cannot expect the user to know programming or other complicated ways of adding pictures to the database. With this app the farmer can add pictures to the database and label them, get more information about his/her farm, and go into the database to delete pictures or change their labels.

Different versions of the app

V1

The first version of the app was made during the 4th week of the course. It had the functionalities described in the "Idea behind the app" section. The user could log in to his/her personal account, take pictures, label them and add them to the database. Furthermore, the farmer could get insight into the performance of his/her farm and could change and delete pictures in the database.

The first version of the app can be found here: File:Use app.pdf

V2

The second version of the app was made during the 6th week. It differed from the first version in that the user could no longer take and upload pictures. During the meetings we found that taking a picture, labeling it and then uploading it would simply take too much of the farmer's time to be worthwhile. Therefore the picture upload feature was replaced by a rating feature. The system takes pictures of fruit whose quality it is not yet sure about. These pictures are stored and presented to the farmer, who can rate them as good or bad by swiping them to the right or to the left of the screen. This lets the user rate the pictures quickly and improve the system by feeding it more and more pictures every time.
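The rating feature amounts to a simple form of active learning known as uncertainty sampling: only pictures the network is unsure about are shown to the farmer. This is a minimal sketch under assumptions. It assumes the network outputs a probability of "good quality" per image, and that a right swipe means good and a left swipe means bad. The band limits 0.35/0.65 and all names are hypothetical.

```python
def select_uncertain(predictions, low=0.35, high=0.65):
    """Return the IDs of images whose predicted probability of 'good quality'
    lies in the uncertain band [low, high]; these are shown to the farmer."""
    return [image_id for image_id, p in predictions.items() if low <= p <= high]


def apply_swipes(swipes, training_set):
    """Add farmer ratings to the training set: a right swipe means 'good' (1),
    a left swipe means 'bad' (0)."""
    for image_id, direction in swipes.items():
        if direction not in ("right", "left"):
            raise ValueError(f"unknown swipe direction: {direction!r}")
        training_set.append((image_id, 1 if direction == "right" else 0))
    return training_set
```

Showing only uncertain pictures keeps the farmer's effort low. Each rating also lands where the network is weakest.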

The second version of the app can be found here: File:Use app v2.pdf

V3

The third version of the app was made during the 7th week of the course and also differed substantially from the earlier versions. The main difference was that the insights into the farm were now given per region of the farm. Using color coding, the farmer could now see which sector of his/her farm was performing best, which worst, and everything in between. The farmer could then click on a region to see more statistics about that sector and its performance. Furthermore, the farmer could also see an overall view of all his/her produce and the overall performance of the farm.
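The per-region color coding can be sketched as a mapping from a performance score to a color. This is a minimal sketch under assumptions. It assumes each sector has a single numeric performance score, such as kilograms harvested or average quality. The min-max scaling and the four-color palette are our own illustrative choices, not a description of the app's actual logic.

```python
def color_code(sector_scores, colors=("red", "orange", "yellow", "green")):
    """Map each sector's performance score to a color, from worst (first
    color) to best (last color), by scaling scores between the farm's
    minimum and maximum."""
    if not sector_scores:
        return {}
    lo, hi = min(sector_scores.values()), max(sector_scores.values())
    span = hi - lo
    result = {}
    for sector, score in sector_scores.items():
        # if all sectors score the same, show them all in the best color
        level = 1.0 if span == 0 else (score - lo) / span
        index = min(int(level * len(colors)), len(colors) - 1)
        result[sector] = colors[index]
    return result
```

Scaling relative to the farm itself always highlights the best and worst sectors. An absolute scale would instead show whether the whole farm is doing well.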

The third version of the app can be found here: File:Use app v3.pdf

V4

The fourth version of the app was also made during the 7th week of the course and included multiple color-coded screens. The farmer could now also use the previously mentioned segments to see the following stats: Processed today, Average Quality, Revenue, Time to harvest and Kgs left.
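The overall farm view can be derived from these per-sector stats. This is a minimal sketch under assumptions. The `SectorStats` class and its field names are hypothetical. It assumes "Time to harvest" is measured in days. It also assumes average quality should be weighted by the kilograms processed, so that large sectors count more than small ones.

```python
from dataclasses import dataclass


@dataclass
class SectorStats:
    """Hypothetical per-sector statistics, mirroring the stats shown in app v4."""
    processed_today_kg: float
    average_quality: float  # e.g. a 0-100 quality score
    revenue_eur: float
    days_to_harvest: int
    kg_left: float


def farm_overview(sectors: dict[str, SectorStats]) -> dict:
    """Combine per-sector stats into one farm-wide summary; average quality
    is weighted by the kilograms processed today."""
    if not sectors:
        raise ValueError("no sectors")
    stats = list(sectors.values())
    processed = sum(s.processed_today_kg for s in stats)
    weighted = sum(s.average_quality * s.processed_today_kg for s in stats)
    return {
        "processed_today_kg": processed,
        "average_quality": weighted / processed if processed else 0.0,
        "revenue_eur": sum(s.revenue_eur for s in stats),
        "days_to_next_harvest": min(s.days_to_harvest for s in stats),
        "kg_left": sum(s.kg_left for s in stats),
    }
```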

Furthermore, the database tab was changed to an overall system performance overview, since we concluded that changing one or two pictures at a time would not contribute significantly to the performance of the system.

The fourth version of the app can be found here: File:Use app v4.pdf

V5

The fifth version of the app was also made during the 7th week and included some minor changes with respect to the fourth version. The names of the buttons in the main menu were changed, and numbers were added to the different segments in the overview. This gives the farmer a better overview, so that he/she does not have to press any buttons to check the numbers of each sector.

The fifth version of the app can be found here: File:Use app v5.pdf

Screenflows

ZIP file of the app

Planning

Week 2

Clarifying our project goals

Working on USE aspects

Finalize planning and technical plan

Sketch a prototype

Week 3

Preliminary design for app

First implementation of app

Database/Server setup

CNN, and basics of neural networks

Week 4

App v1.0 with design fully implemented

Kinect interfacing to Raspberry Pi completed

USEing intensifies

Finish back-end design and choose frameworks

Week 5

App v2.0 with design fully implemented and tested

Further training of CNN

Working database classification (basic)

Casing (with studio lighting LED shining on fruit)

Week 6

Improve CNN

Expand training sets beyond strawberries (if possible)

User testing on app

Reflect on USE aspects and determine if we still preserve our USE values

Week 7

Implement feedback from testing app

Improve aesthetic appeal detection (if time)

Week 8

Finish everything

Buffer period

Final reflection on USE value preservation

Week 9

Improve wiki for evaluation

Peer review

Fallback:

App for user to report feedback in the form of images of high/low quality fruit.

Have Rpi take pictures using Kinect and send to database

Choose a more binary classification (below 50%/above 50% quality)

Flesh out the design more (if implementation fails)
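The fallback step of having the Raspberry Pi send pictures to the database can be sketched with Python's standard library. This is a minimal sketch under assumptions. The endpoint URL, the JSON field names and the farm ID are hypothetical placeholders, not an existing API. The function only builds the HTTP request, so it can be checked without a server.

```python
import base64
import json
import urllib.request


def build_upload_request(url, image_bytes, label=None, farm_id="farm-1"):
    """Build (but do not send) an HTTP POST that uploads one picture as JSON.

    Hypothetical: `url`, the JSON field names and `farm_id` are placeholders."""
    payload = {
        "farm_id": farm_id,
        "label": label,  # None when the picture still has to be rated
        "image": base64.b64encode(image_bytes).decode("ascii"),
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

On the Raspberry Pi, the request would then be sent with `urllib.request.urlopen(request)`. Base64 inside JSON is simple but adds about a third to the size; a multipart upload would avoid that overhead.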

Technical aspects

Database

Application

Application design (User interface)

Research

Harvesting robots

- Description of an autonomous cucumber harvesting robot, designed and tested in 2001

- Paper from 1993 describing the then state-of-the-art and economic aspects. It has a chapter on economic evaluation.

- Recent TU/e paper discussing the state-of-the-art on tomato harvesting. It focuses on the mechanical part and does not include sensing and detecting.

Sensing technology

(Older)

- Yamamoto, S., et al. "Development of a stationary robotic strawberry harvester with picking mechanism that approaches target fruit from below (Part 1)-Development of the end-effector." Journal of the Japanese Society of Agricultural Machinery 71.6 (2009): 71-78. Link

- Corbett-Davies, Sam, Tom Botterill, Richard Green, and Valerie Saxton. "An Expert System for Automatically Pruning Vines." Proceedings of the 27th Conference on Image and Vision Computing New Zealand, Dunedin, New Zealand, November 26-28, 2012. Link

- Hayashi, Shigehiko, Katsunobu Ganno, Yukitsugu Ishii, and Itsuo Tanaka. "Robotic Harvesting System for Eggplants." Japan Agricultural Research Quarterly: JARQ 36.3 (2002): 163-68. Web. Link

- Blasco, J., N. Aleixos, and E. Moltó. "Machine Vision System for Automatic Quality Grading of Fruit." Biosystems Engineering 85.4 (2003): 415-23. Web. Link

- Cubero, Sergio, Nuria Aleixos, Enrique Moltó, Juan Gómez-Sanchis, and Jose Blasco. "Advances in Machine Vision Applications for Automatic Inspection and Quality Evaluation of Fruits and Vegetables." Food and Bioprocess Technology 4.4 (2010): 487-504. Web. Link

- Tanigaki, Kanae, et al. "Cherry-harvesting robot." Computers and Electronics in Agriculture 63.1 (2008): 65-72. Direct Dianus

- Evaluation of a cherry-harvesting robot. It picks by grabbing the peduncle and lifting it upwards.

- Hayashi, Shigehiko, et al. "Evaluation of a strawberry-harvesting robot in a field test." Biosystems Engineering 105.2 (2010): 160-171. Direct Dianus

- Evaluation of a strawberry-harvesting robot.

State of the art

Only a few tests have been done with machines for harvesting strawberries. These are large, bulky and expensive machines such as Agrobot, with cost prices on the order of 50,000 dollars. Todo: add citations.

Much research has been done on inspection by means of machine vision. Todo: add citations and continue.

Further reading

- Aeroponics (we most likely won’t use this as an irrigation method)

- Why to avoid monoculture

Manual strawberry harvesting process

Meetings

Moved to Talk:PRE2015_4_Groep2.