PRE2023 3 Group5

Revision as of 17:54, 10 April 2024

Fall Guard

Group Members

| Name | Student ID | Department |

|---|---|---|

| Yuting Dong | 1546104 | Computer Science |

| Jie Liu | 1799525 | Computer Science |

| Zhiyang Zhang | 1841734 | Mechanical Engineering |

| Samir Saidi | 1548735 | Computer Science |

| Feiyang Yan | 1812076 | Computer Science |

| Nikola Mitsev | 1679759 | Computer Science |

Introduction

Problem Statement

A growing number of elderly or impaired people have health conditions or disabilities that prevent them from taking care of themselves. Many of them nevertheless have no choice but to live alone, either entirely or for most of the time. For some people, especially those with limited mobility, even walking in their own home is a struggle. They can easily get hurt if they are not cared for properly, and their family may remain unaware that harm has occurred. There have been cases where individuals fell at home without anyone noticing, which can lead to severe, or even fatal, consequences. Caretakers are therefore necessary to ensure the well-being and quality of life of individuals facing these challenges. However, contemporary families often struggle both to find time for caregiving and to afford professional caregivers. Consequently, a significant portion of the population in need is left without the care they require.

Objectives

Considering the situation that individuals with limited mobility face, we have decided to design a home robot with a primary focus on safety monitoring that is easily accessible to individuals. The robot should be able to keep an eye on the individuals while they are walking and take action when they fall over and cannot get up on their own. The main goal of the robot is to ensure the safety of the user and reach out for help whenever needed. On the one hand, the robot closely monitors the individual's mobility at home and swiftly responds to falls. On the other hand, it enhances the overall safety of individuals living at home and provides peace of mind for both users and their caregivers or family. Moreover, the robot could also be deployed in shared settings such as hospitals or nursing homes for the same purpose. However, it may raise privacy concerns if the data recorded by the robot is not kept secure.

As an initial idea, the design of the robot covers these features:

1. Built-in program: The robot should be designed as a digital program that can be easily installed and uses cameras as its primary sensor.

2. Fall Detection and Response System: The robot uses high-accuracy human detection and fall detection algorithms to detect falls, together with decision-making logic to react to each fall appropriately.

3. Emergency Communication: The robot incorporates a communication system that allows it to alert family members, caregivers, or emergency services in case of a fall that the users cannot manage by themselves.

4. User-Friendly Interface: A user-friendly interface should be designed for the caregivers or family members, preferably an application or a portal that can be installed on smartphones. Alerts and updates can be sent to the smartphones remotely. It is also possible to access the settings of the robot through these platforms, for example, to remotely control the camera or to input room-specific information to ensure comprehensive camera coverage throughout the designated areas.

5. Privacy Protection Measures: The robot is integrated with robust data encryption protocols and privacy features to address concerns related to the recording and storage of personal information, ensuring the user's privacy is maintained.

State-of-the-art

Literature review

Dementia is a syndrome primarily defined by the deterioration of one's cognitive ability beyond what would be expected of biological aging, affecting one's learning and memory, attention, executive function, language, motor perception and social function (Shin, 2022[1]). As a result, this condition can make it difficult, or even impossible, for one with dementia to live independently, often requiring the assistance of a professional caretaker or an informal caretaker (family) in the later stages of the condition. As one would expect, this puts strain on a country's healthcare system, both in terms of personnel and finances; in 2023, the WHO (World Health Organization) estimated that 55 million people worldwide were living with dementia, and they expect this number to nearly triple to 155 million by 2050 (World Health Organization, 2023[2]). The WHO (2023[2]) additionally estimated that the cost of dementia care in 2019 amounted to $1.9 trillion. That is why there is currently a growing demand for robotic caretakers, with a wide range of assistive functionalities, for example, keeping the patient company, reminding them of various tasks, or calling emergency services or other trusted people when the need arises. In 2023, the market for elderly care assistive robots was valued at $2.5 billion, and was projected to grow at a rate of 12.4% to $8.1 billion by 2033 (Saha, 2023[3]).

The growing aging population faces many challenges, but people with dementia in particular, due to their deteriorating cognitive function, often have difficulty identifying fall risks and can accidentally trip and fall over objects in their home (Canadian Institute for Health Information, n.d.[4], Dementia UK, n.d.[5], Fernando et al., 2017[6]). It is well known that falling is highly dangerous for elderly people: it carries a risk of injury and hospitalization, and can even lead to death. In fact, such accidental falls are one of the leading causes of hospitalization and death in elderly populations (Stinchcombe et al., 2014[7], Kakara, 2023[8], Centers for Disease Control and Prevention, n.d.[9]). Moreover, there are challenges involving emergency response coordination, which can lead to delays in the patient being given treatment after falling (Hesselink et al., 2019[10]). Therefore, there is certainly a need to protect elderly people living alone at home from such risks, both via proactive measures that identify fall risks and notify the user (and possibly remove fall risks autonomously, with a sufficiently advanced robot design), and by notifying emergency services and the caretaker(s) of the patient if they have fallen and need assistance or hospital care. Since slower EMS responses have been shown to be associated with increased mortality (Adeyemi et al., 2023[11]), a robot capable of preventing falls, or of summoning help promptly after one, would help relieve some of the burden on the healthcare system and caretaker(s), and allow the patient to live more independently.

Naturally, a user-centered approach must be taken when designing solutions, with a focus on making the human-computer interaction (HCI) as user-friendly as possible. In fact, one of the major challenges impeding the large-scale adoption of elderly care social robots in care homes and in independent living is that the complexity of many current robots causes more trouble for caregivers and patients rather than relieving them of it; according to Koh (2021[12]), the multiple visual, auditory and tactile interactions of social robots present challenges and confusion for people with dementia. This makes the focus on user-friendly HCI even more important. Part of this disconnect between the expected and actual relief of burden seems to stem from how current solutions focus on too many things at once and attempt to be a general care robot, rather than specializing in one area. A complex user interface is a further pitfall because, as discussed above, increased complexity creates added confusion for people living with dementia. Despite these pitfalls and challenges, many case studies and state-of-the-art reviews have shown the effectiveness of a variety of elderly care robots (Carros et al., 2020[13], Raß et al., 2023[14], D'Onofrio et al., 2017[15], Søraa et al., 2023[16], Johnson et al. 2014[17], Vercelli et al., 2018[18]). Therefore, by identifying the needs of our user and the stakeholders, it should be possible to create a simple-to-use, yet effective, robot that addresses our problem.

Due to gaps in the literature, and the inherent difficulty of this problem, existing solutions can also fall short. For example, Igual et al. (2013[19]) identify several challenges and issues regarding current fall detection systems. The challenges they name include decreased performance in real-life conditions, the usability of the system, and users' reluctance to accept it. The issues they identify include the limitations of smartphones in smartphone-based detection systems, privacy concerns, the difficulty of comparing performance between systems that use different data-gathering methods, and the testability of the system. As such, there are many opportunities for improvement, and our robot should aim to be such an improvement.

Despite the evidence supporting the effectiveness of such robotics in the field of elderly care, papers have also shown its benefits to be overstated. For example, Broekens et al. (2009[20]) showed that although there is some evidence that companion type robots have positive effects in healthcare for elderly people with respect to at least mood, loneliness and social connections with others, the strength of this evidence is limited, due to a variety of factors. However, this mainly applies to companion social robots, which our robot is not. So, we expect that our robot should still provide many benefits to our user group as long as it is designed with them in mind.

As a result of the above, we highlight the need for our proposed solution, because it aims to address some of the challenges and issues that were previously raised, and provides something new that current products do not. With this in mind, we give a brief overview of such current products below, discussing their advantages and, where applicable, where they fall short. We later provide an analysis of our user group, as well as address various ethical concerns that must be taken into account while designing the robot.

Current products

2D-Lidar-Equipped Robot

The LiDAR system continuously scans the environment, creating a map of the surroundings. The robot is programmed to recognize the typical shapes and positions of a person standing, sitting, or lying down. If the LiDAR system detects a shape that matches the profile of a person lying on the ground, the robot can interpret this as a person having fallen. The paper does not specify what the robot does once a fallen person is detected; it is more concerned with the detection itself, which was tested in simulations discussed in the paper.[21]

Mobile robot with Microsoft Kinect

The robot includes 3 main components: a Microsoft Kinect sensor, a simple mobile robot, and a PC. The Kinect sensor is mounted on top of the robot and rotates to scan the environment. The sensor data is sent to the connected PC, which processes the information. The PC is programmed to recognize patterns in the sensor data that indicate a person has fallen. This could be based on the shape, position, or movement of the detected objects. If the system detects a fallen person, it sends notifications to a medical expert, authorities, and family members. The main issues with this design are the re-detection of a person who has already been detected as fallen, and differences in detection performance between different types of robots.[22]

Sensors embedded in the house

Infrared sensors are embedded into the floor throughout the area where fall detection is needed. The system is programmed to recognize the patterns of infrared radiation that indicate a person has fallen. If the system detects a fallen person, it sends a signal to a robot that is equipped with features to assist a fallen person. The robot navigates to the location of the fallen person. It can do this because the system knows which sensors detected the fall, and therefore where the person is located. Once the robot reaches the person, it asks if help is needed. This could be done using a built-in speaker and speech recognition technology. The robot can provide assistance in two ways: by providing the fallen person with a mobile phone, or by helping the person stand up using the handles on the robot itself.[23]

Image recognition robots

In this scenario, a LOLA companion robot is repurposed for fall detection. The LOLA robot was originally designed to provide companionship and assistance to people, especially the elderly or those with special needs. A camera is attached to the robot, which allows it to visually monitor its environment. The robot uses two algorithms to interpret the images taken by the camera: a Convolutional Neural Network (CNN) and a Support Vector Machine (SVM). The CNN is a type of deep learning algorithm that is especially good at processing images. It can be trained to recognize complex patterns in the images, such as the shape and posture of a human body. The SVM is a type of machine learning algorithm that is used for classification and regression tasks. In this case, the SVM could be used to classify the output of the CNN. The paper does not specify how the robot reacts when a fallen person is detected, as it focuses on the detection itself.[24]

Wearable Fall Detection Devices

One existing scheme: Apple Watch [25]. If the Apple Watch detects a hard fall, it taps the user on the wrist, sounds an alarm, and displays an alert. The user can choose to contact emergency services or dismiss the alert. If the user's Apple Watch detects that they are moving, it waits for them to respond to the alert and will not automatically call emergency services. After that, if the watch detects that they have been immobile and unresponsive for about a minute, it will call emergency services automatically.

USE analysis

The design of the robot must be developed in coordination with a variety of stakeholders, and not just our target user (whom we have defined to be elderly people with mild memory loss due to dementia, living alone at home). Examples of such stakeholders are: formal/professional caregivers, informal caregivers (such as family members), and doctors. Each of these stakeholders has different needs and priorities regarding the care of our target user, and thus will have a different perspective that will ultimately influence how we design our robot.

Users

Target group 1: Elderly and impaired individuals living alone. According to the World Health Organization (WHO), the number of people aged 65 or older is expected to more than double by 2050, reaching 1.6 billion. The number of people aged 80 years or older is growing even faster[26]. According to statistics from the Centers for Disease Control and Prevention (CDC), one out of four older adults in the United States falls each year, making falls a public health concern, particularly among the aging population[27]. Each year, about 36 million falls are reported among older adults, resulting in more than 32,000 deaths, and one out of every five falls causes an injury, such as broken bones or a head injury[28].

Needs:

- In-time fall detection: This is the primary concern for this group. A fall can have serious consequences, and they need a solution that can quickly detect falls and request help if needed.

- Ease of use: As this group is over 65, learning how to use a complicated technological product is unrealistic. A simple user interface is thus required for this design.

- Automatic and accurate: Monitoring the user and requesting help should be done automatically and accurately; otherwise, it would place a burden on users and caregivers.

- Privacy and safety: As this robot needs to monitor the activities of users, their data should either be stored safely or not stored at all.

- Interference with the user: This robot should not restrict or interfere with the activities of the user group.

Target group 2: Informal/formal caregivers and doctors. In developing countries, some family members do not have enough time to look after their elderly relatives, for reasons such as excessive overtime at work, while developed countries are experiencing a shortage of caregivers. These groups are potential users, as they are looking for a way to lighten their burden.

Needs:

- Reliable assistant: It is stressful and tiring for them to keep an eye on the activities of the elderly. Even professional caregivers cannot focus all their attention on their patients, so they prefer a reliable assistant robot to help them while they are doing other tasks, like preparing medicine. The notifications from the robot must also be reliable; otherwise, they would become another burden.

- Remote monitoring and real-time information: They may want to use the robot to check the situation of the user when an emergency happens, such that they can take the next step immediately.

Another consideration for both major target groups: The product should be cheap enough that most families can afford it.

Analysis on interviewee 1

Background information: The interviewee works in the customer data analysis department of a bank. His children are working in the US. He exercises regularly and mainly plays tennis. A family member of his experienced a severe fall which caused a bone fracture. The interviewee has experience with using a service robot and generative AI tools.

Feedback from the interviewee: He is interested in using a robot to monitor the user for falls via camera, as it is more convenient than other methods, like wearable devices. He prefers that user interactions with the robot happen through voice commands, as he wants to keep the robot at a safe distance. The interviewee clearly pointed out that he wanted the robot to reach the emergency contact via a phone call or a similar method, as other methods are easily ignored. The interviewee also mentioned that he prefers the robot to have a non-human-like face, ideally a cute one, as he did not want a human-like robot following him everywhere, which he finds unnerving, especially at night. The interviewee raised several concerns about the robot. The first is safety; he is afraid that the robot will block, hit or collide with him, causing more falls. The second is privacy; he fears that the company might misuse the data. As most of the data comes from the user's daily life, he did not want his data to be used by a company, even for research purposes.

Analysis on interviewee 2

Background information: She is retired; she used to be a government department officer. She has one child who works in the same city where she lives, but only visits her once a week. She likes to play tennis. She has experienced several falls at home but not very often, and she does not have too much experience with interacting with robots.

Feedback from the interviewee: She is quite concerned about falling because she lives alone and no one can help her immediately when she falls. She is positive towards the idea and interested in having a robot which uses a camera to detect her falls. However, she is worried about privacy, and she hopes that her photos will not be stored or used for other purposes. She expects the robot to be able to make a phone call to her son and also to the emergency services, because she thinks other methods, like SMS messages, are neither quick nor efficient. Moreover, she does not want the robot following her around all the time. She hopes she can control the robot and set when it is allowed to follow her. As for the appearance of the robot, she does not want a huge object at home which occupies too much space. She is also concerned about the accuracy of the detection and whether or not the robot can avoid obstacles.

Analysis on interviewee 3

Background information: The interviewee is a middle school teacher, around 40 years old, who lives with her mother and a caregiver. She has a severe leg illness and her mother has Parkinson's disease, so she has hired a caregiver to look after her mother and do housework for her. Her mother experienced a fall many years ago, while she herself falls once or twice a year; these falls are not very severe and she has become used to them.

Feedback from the interviewee: She was open to the robot, as she hoped to see an increasing variety of devices for detecting falls. She preferred the robot to have a non-human-like appearance and to be controlled by voice commands when an emergency happens, although she was skeptical about the accuracy of voice control. She did not object to collecting user data and sending it to the company, as long as this is fully compliant with the law and the company only uses the data to improve accuracy. Her main concern, however, is the accuracy and reliability of the robot. She does not believe that a robot with a camera can detect falls accurately. She has experience using Siri, a voice assistant made by Apple, but its accuracy disappointed her, so she has doubts about detecting falls with a camera. The second concern, which she mentioned frequently after seeing our prototype, was that the robot itself could fall. She was afraid that the robot was too tall and heavy, as many obstacles at home could make the robot fall, and once it falls, it would be troublesome to help the robot up.

Society

As living standards keep rising and more medical resources become publicly available, life expectancy in many countries increases. This results in a larger aging population, especially in more developed countries, and this population needs more care. However, the number of caregivers does not meet the needs of the aging population. A report shows that by 2030, there will be a shortage of 151,000 caregivers in the US [29]. Therefore, governments are interested in investing in automated care robots that can take over some of the responsibilities of caring for the elderly. In addition, the elderly are increasingly willing to live independently at home instead of needing someone to take care of them all the time. They would like to have a robot that can automate the job of a caregiver, but at the same time, they are concerned about potential problems such as data leaks and acceptance by society. These problems need to be addressed in the design of the robot.

Needs:

- Social acceptance: This robot should be accepted by the public, so that most elderly people will be willing to use it. Some people might think that installing a robot at home that monitors them every day is not safe and is an invasion of privacy.

- Data privacy: The potential problem of data breaches should be considered by the developers of the robot and the government; data privacy measures must be taken into account, and data storage methods must be in full compliance with the law.

Enterprise

Robotic companies are the main stakeholders. As the elderly population grows, there will be more and more potential customers. Fall detection robots suit the needs of these customers. Hence, the robotic companies are interested in developing such robots. Hospitals and nursing/care homes will also be interested in fall detection robots to some extent; lack of caregivers is also affecting the revenue of hospitals. If there are fall detection robots that can detect the fall of patients, hospitals can spend less time monitoring the patients.

Needs:

- No failure case: If the robot consistently fails to detect falls, it will have a large negative impact on the developer companies and hospitals, because society will lose trust in such robots. Therefore, the companies would like to ensure that the robot is always able to detect falls.

- Price: As there are fewer human caregivers, companies are starting to invest in robots as a replacement. However, if a robot costs more than hiring a human caregiver, there will be no reason to purchase it. Therefore, the price must be affordable for users.

Requirements

Design specifications

Rae et al. (2013[30]) found that a telepresence robot was less persuasive when it was shorter than the user. Thus, for requirement 1.1, we decided that the robot should be about the same height as, or slightly taller than, the user, to minimize the loss of persuasiveness that may occur when the robot is shorter than the user. Requirement 1.2 is merely an estimate based on the Atlas robot made by Boston Dynamics, which weighs 75-85 kilograms depending on its version; we took the average across the 3 versions (75, 80 and 85 kg respectively), which is 80 kg. For requirement 1.3, we would like the robot to be able to move (Req 1.8), and in order to do so it needs wheels, which, ideally, can also be rotated (Req. 1.9). Normally, 2 wheels are sufficient, but 3 or 4 wheels would also work. The design of our robot also has a body containing a microphone, speaker and camera at its front (Req 1.5 + 1.7) and a charging port at the back to recharge its battery (Req 1.6), with the inside of the body housing all of the electronics and wiring (Req. 1.4). Since we would like the robot to be able to make a video call to the caretaker or family members upon fall detection, the robot needs to have at least those elements.

| Index | Description | Priority |

|---|---|---|

| 1.1 | The robot shall have a height of at most 1.7 meters. | Must |

| 1.2 | The robot shall have a weight of at most 80 kilograms. | Must |

| 1.3 | The robot shall be mounted on a base containing at least two wheels. | Must |

| 1.4 | The robot shall have a body casing to house its electronics. | Must |

| 1.5 | The robot shall be equipped with a camera on its body. | Must |

| 1.6 | The robot shall have a rechargeable battery as a power source. | Must |

| 1.7 | The robot shall have a screen, microphone and speaker capable of making video calls. | Must |

| 1.8 | The robot shall be able to move using its wheels. | Must |

| 1.9 | The robot shall be able to rotate its wheels. | Should |

The test plan is as follows:

| Index | Precondition | Action | Expected Output |

|---|---|---|---|

| 1.1 | None | Measure the height of the robot. | The height of the robot is at most 1.7 meters. |

| 1.2 | None | Measure the weight of the robot. | The weight of the robot is at most 80 kilograms. |

| 1.3 | None | Inspect the robot's structure. | The robot has a base containing at least two wheels. |

| 1.4 | None | Inspect the robot's structure. | The robot has a body casing and electronics inside of it. |

| 1.5 | None | Inspect the robot's structure. | The robot has a camera on its body. |

| 1.6 | The robot is powered on. | Charge the robot using the provided charger. | The robot begins to charge its battery with no issues. |

| 1.7 | The robot is powered on. | Pretend to fall down, such that the robot calls the designated contact. | The designated contact responds. You can see, hear, and talk to them with no issues, and vice versa. |

| 1.8 | The robot is powered on. | Set up the robot and move in any direction. | The robot starts to move. |

| 1.9 | The robot is powered on. | Set up the robot and move in one direction, then turn in any angle and continue moving. | The robot starts to move, then turns, and moves in a new direction. |
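The measurable checks in this test plan (1.1 to 1.3) could be automated once the robot has been measured. The following is a minimal sketch, not part of the actual design; `RobotMeasurements` and `failed_requirements` are hypothetical names introduced only for illustration, and the limits are copied from the requirements table.

```python
from dataclasses import dataclass

# Limits taken from the design specifications table.
MAX_HEIGHT_M = 1.7     # Req 1.1
MAX_WEIGHT_KG = 80.0   # Req 1.2
MIN_WHEELS = 2         # Req 1.3


@dataclass
class RobotMeasurements:
    """Hypothetical record of values measured during testing."""
    height_m: float
    weight_kg: float
    wheel_count: int


def failed_requirements(m: RobotMeasurements) -> list[str]:
    """Return the indices of the requirements that the measurements violate."""
    failed = []
    if m.height_m > MAX_HEIGHT_M:
        failed.append("1.1")
    if m.weight_kg > MAX_WEIGHT_KG:
        failed.append("1.2")
    if m.wheel_count < MIN_WHEELS:
        failed.append("1.3")
    return failed
```

An empty list means that the measured robot satisfies requirements 1.1 to 1.3; the remaining tests (1.4 to 1.9) still require manual inspection.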

Functionalities

In terms of core functionality, we would like the robot to be able to continuously monitor the user (Req 2.10) to detect falls (Req 2.1) and notify caretakers or family members as soon as possible. We set the notification timeframe to 30 seconds (Req 2.2): after researching other devices on the market, we found that, on average, they contact emergency services or caretakers within 30 seconds of detecting a fall if the user does not respond. We would like the user to be able to cancel the notification if desired (Req. 2.8), in case the fall was not serious. However, if the robot detects that the user is completely immobile for 10 seconds, it must immediately notify the caretakers or family members without waiting for the full 30 seconds (Req 2.9). We chose this 10-second timeframe because similar devices on the market use similar time ranges; we simply took the average of these times.
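The timing rules described above can be summarized in a small decision function. This is only a sketch under our own assumptions: the names `FallState` and `next_action` are hypothetical, and we assume that the notification is sent exactly when the 30-second window expires unless the user cancels first.

```python
from dataclasses import dataclass

NOTIFY_DEADLINE_S = 30.0  # Req 2.2: notify within 30 seconds of the fall
IMMOBILE_LIMIT_S = 10.0   # Req 2.9: notify early after 10 seconds of immobility


@dataclass
class FallState:
    """Hypothetical snapshot of the situation after a detected fall."""
    seconds_since_fall: float
    seconds_immobile: float
    cancelled_by_user: bool = False


def next_action(state: FallState) -> str:
    """Decide what the robot does next: 'cancel', 'notify' or 'wait'."""
    # Req 2.8: the user may cancel the notification (assumed to take priority).
    if state.cancelled_by_user:
        return "cancel"
    # Req 2.9: an immobile user triggers the notification early.
    if state.seconds_immobile >= IMMOBILE_LIMIT_S:
        return "notify"
    # Req 2.2: otherwise notify once the 30-second window has passed.
    if state.seconds_since_fall >= NOTIFY_DEADLINE_S:
        return "notify"
    return "wait"
```

For example, `next_action(FallState(12.0, 10.0))` yields `"notify"` because the user has been immobile for 10 seconds, even though the 30-second window has not yet elapsed.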

We decided to make the notification a VOIP/Wi-Fi video call to the designated emergency contact, or a SIM-card based call if the robot does not have access to the Internet (Req 2.3). A video call makes the notification more reliable and allows for a more dynamic two-way interaction between the user and their emergency contact. However, if the contact is unavailable, the robot will immediately call local emergency services and request help (Req 2.4). In this case, it will also send a text message to the contact (Req 2.5) and attach a photo (Req 2.7). In any case, the information relayed must contain at least the name, date of birth, and location of the user (Req 2.6) to allow emergency services or a professional caretaker to identify the user and assist them. We included these three attributes because in most medical settings in the Netherlands, one's name and date of birth (and possibly address) are required to access the services of the clinic the user is registered with. In some cases the BSN (citizen service number) and the name of the huisarts (general practitioner) may additionally be required for emergency services, but we could not find a definitive answer about this protocol. If it turns out that they are required, we would need to add this information to Req. 2.6.
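The choice of call channel (Req 2.3), the escalation rule (Req 2.4) and the contents of the message (Req 2.6) can be sketched as follows. All function names are hypothetical and chosen for illustration; the case of an empty contact list is not covered by the requirements, so this sketch simply rejects it.

```python
from typing import List


def choose_call_channel(internet_available: bool) -> str:
    """Req 2.3: prefer a VOIP video call, fall back to a SIM-card based call."""
    return "voip_video_call" if internet_available else "sim_call"


def should_call_emergency_services(contact_responded: List[bool]) -> bool:
    """Req 2.4: escalate when the contacts have not answered.

    contact_responded holds one entry per emergency contact,
    True if that contact answered the call.
    """
    if not contact_responded:
        # Assumption: at least one contact is configured.
        raise ValueError("at least one emergency contact is required")
    unanswered = contact_responded.count(False)
    if len(contact_responded) == 1:
        return unanswered == 1
    return unanswered >= 2


def notification_text(name: str, date_of_birth: str, location: str) -> str:
    """Req 2.6: every notification carries name, date of birth and location."""
    return (
        f"Fall detected. Name: {name}; "
        f"date of birth: {date_of_birth}; location: {location}."
    )
```

Note that under Req 2.4, as written, a user with two contacts of whom one has answered does not trigger a call to emergency services.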

In terms of processing and data storage, the robot should not send data online to a server or keep it longer than necessary, due to privacy concerns raised by the user base during the interviews: data is only stored locally (Req. 2.11), and video data is deleted shortly after it has been processed (Req. 2.12).

| Index | Description | Priority |

|---|---|---|

| 2.1 | The robot shall be able to detect falls. | Must |

| 2.2 | When the robot detects a fall, the robot shall notify the designated emergency contact within 30 seconds. | Must |

| 2.3 | The notification shall be in the form of a VOIP video call to the emergency contact, or a SIM-card based call if an Internet connection is unavailable. | Must |

| 2.4 | If there is only one emergency contact which has not responded to the call, or if there are two or more emergency contacts and at least two have not responded to the call, the robot shall instead call local emergency services (112, 911, etc.). | Should |

| 2.5 | When the robot detects a fall, and the emergency contact has not responded (as described in 2.4), the robot shall send a text message to the emergency contact. | Could |

| 2.6 | The notification (text or call) that the robot sends shall contain the name, date of birth, and location of the user. | Must |

| 2.7 | When the robot detects a fall, and the emergency contact has not responded (as described in 2.4), the robot shall take a picture of the fall and send the picture to the designated emergency contact via SMS. | Could |

| 2.8 | When the robot detects a fall, the robot shall have a mechanism to allow the user to cancel the notification within 30 seconds. | Should |

| 2.9 | When the robot detects a fall, the robot shall additionally detect if the user is immobile, and if they are immobile for at least 10 seconds after the fall detection, it shall send the notification to the emergency contact within 1 second of determining the user is immobile. | Should |

| 2.10 | The robot shall monitor the user continuously using the camera mounted on its body. | Must |

| 2.11 | All data that the robot processes or sends shall be stored locally on a hard drive. | Must |

| 2.12 | The robot shall delete any video data within 1 minute after it has been processed. | Must |
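The escalation rule in Req 2.4 can be read as a small decision function. The sketch below is one interpretation of that requirement, not the implemented logic; the function and parameter names are hypothetical.

```python
def should_call_emergency_services(total_contacts: int, unresponsive: int) -> bool:
    """One reading of Req 2.4 (hypothetical helper, not the project's code).

    total_contacts: number of configured emergency contacts (at least 1).
    unresponsive:   number of contacts that did not answer the call.
    """
    if total_contacts < 1:
        raise ValueError("at least one emergency contact is required")
    if not 0 <= unresponsive <= total_contacts:
        raise ValueError("unresponsive must be between 0 and total_contacts")
    if total_contacts == 1:
        # A single contact that does not answer triggers escalation.
        return unresponsive == 1
    # With two or more contacts, escalate once at least two have not answered.
    return unresponsive >= 2
```

With three contacts, for instance, one missed call does not escalate under this reading, but a second missed call does.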

The test plan is as follows:

| Index | Precondition | Action | Expected Output |

|---|---|---|---|

| 2.1 | The robot is powered on. | Pretend to fall down. | The robot detects the fall and notifies you of it via voice. The robot asks you if you are okay and if you need help. |

| 2.2 | The robot is powered on. | Pretend to fall down. | The robot detects the fall and notifies the designated contact within 30 seconds. The notification is either a VOIP video call or a SIM card based call, if the internet is not available. The robot in the call says that the user has fallen and requires assistance. The robot says the name, date of birth, and the address of the user. |

| 2.3 | The robot has detected a fall. | To test the video call, simply wait until the robot notifies the contact. To test the SIM call, turn off the internet, repeat the fall, and wait until the robot notifies the contact. | The video call and SIM call both successfully connect to the emergency contact with no issues. The robot in the call says that the user has fallen and requires assistance. The robot says the name, date of birth, and the address of the user. |

| 2.4 | The robot has detected a fall. | Wait until the robot notifies the contact. Make sure the emergency contact(s) has/have not responded. | The robot notifies local emergency services. The robot in the call says that the user has fallen and requires assistance from an ambulance. The robot says the name, date of birth, and the address of the user. |

| 2.5 | The robot has detected a fall. | Wait until the robot notifies the contact. Make sure the emergency contact(s) has/have not responded. | The emergency contact(s) receive(s) a text message from the robot. The text message says that the user has fallen and requires assistance. The text message contains the name, date of birth, and the address of the user. |

| 2.6 | The robot has sent a text message (2.5) | Inspect the text message. | The text message contains the name, date of birth, and location of the user. |

| 2.7 | The robot has sent a text message (2.5) | Inspect the text message. | The text message contains a picture of the fall. |

| 2.8 | The robot has detected a fall. | Tell the robot to cancel the notification. | The robot cancels the notification process and goes back to idly following you around. |

| 2.9 | The robot has detected a fall. | Stay immobile for 10 seconds. | The robot sends a notification to the emergency contact within 1 second. The robot in the call says that the user has fallen and requires assistance. The robot says the name, date of birth, and the address of the user. |

| 2.10 | The robot is powered on. | Move or pretend to fall down. If you pretend to fall down, when the robot asks you if you need assistance, say no. | If the user moves, the robot follows the user around. If the user pretends to fall down, the robot will notify the user of it via voice, asking them if they need assistance. After replying no, the robot goes back to following the user around. |

| 2.11 | None | Inspect the robot's internal hard drive. | Any data that the robot has processed or sent is found on the hard drive. |

| 2.12 | None | Inspect the robot's internal hard drive. | The only video data remaining is from 1 minute before the robot was turned off in order to inspect it. |

User Interface

The user interface for the robot should be very light and focused mainly on achieving its core functionality. Thus, we have decided to use a voice-controlled system (Req 3.1), because our user group has difficulties with complex technology, which would otherwise make interacting with the robot challenging. This is also supported by our interviews. The voice-controlled system has a predefined set of commands, and it responds to a command only if the command is preceded by the robot's name (Req 3.2). This prevents the robot from accidentally acting on something the user did not ask for, for example, if it hears something from another source (such as the TV) that it interprets as a command. Speech data is interpreted by a natural language processing algorithm and classified into one of the predefined commands from Req 3.1. This does not mean the robot will only respond to these exact commands; it should be able to interpret nuance in speech. For example, "Yeah no, I'm fine" must be interpreted as "Do not need help" by the robot. The robot should also allow emergency contacts to be changed, added, and deleted so that it can perform its function (Req 3.3, 3.4, 3.5).

| Index | Description | Priority |

|---|---|---|

| 3.1 | The robot's interface shall be controlled using voice commands classified as follows: "Need help", "Do not need help", "change emergency contact", "add emergency contact", "delete emergency contact", "end call", "mute microphone", "mute camera". | Must |

| 3.2 | The robot shall only respond to commands beginning with its name. | Must |

| 3.3 | The robot shall have a mechanism for changing the designated emergency contact, such as a family member or caretaker. | Must |

| 3.4 | The robot shall have a mechanism for adding multiple emergency contacts. | Must |

| 3.5 | The robot shall have a mechanism for deleting emergency contacts (but not if there is only one contact) | Must |
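The name-prefix rule of Req 3.2 can be sketched as a filter applied to the transcribed speech before any command classification. This is a minimal illustration that assumes a single-word robot name; the function name and the example name "Robo" are hypothetical.

```python
from typing import Optional


def extract_command(utterance: str, robot_name: str) -> Optional[str]:
    """Return the text after the robot's name, or None if the name does not come first.

    Hypothetical helper: assumes the robot's name is a single word.
    """
    words = utterance.strip().split()
    if not words:
        return None
    # Ignore case and trailing punctuation on the first word ("Robo," -> "robo").
    first = words[0].strip(",.!?:;").lower()
    if first != robot_name.lower():
        return None  # Speech not addressed to the robot is ignored.
    rest = " ".join(words[1:]).strip()
    return rest or None


# Usage: only the first utterance is passed on for classification.
print(extract_command("Robo, end call", "Robo"))
print(extract_command("end call", "Robo"))
```

Speech that does not start with the name is dropped without changing the robot's state, so the user can retry by saying the name first.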

The test plan is as follows:

| Index | Precondition | Action | Expected Output |

|---|---|---|---|

| 3.1 | The robot is powered on. | Go through each action and check that it matches the expected output. | Check the output based on the corresponding action. |

| 3.2 | The robot is powered on. | Go through each action and check that it matches the expected output. | In every case, the robot does not respond to the user. The state that the robot was previously in remains in all cases, so it should be possible to retry the command by saying its name first. |

| 3.3 | The robot is powered on. | Tell the robot to change an emergency contact. | The robot responds by asking you which contact you would like to change. |

| 3.4 | The robot is powered on. | Tell the robot to add a new emergency contact. | The robot responds by asking you information about the contact. |

| 3.5 | The robot is powered on. | Tell the robot to delete an emergency contact. | The robot responds by asking you which contact you would like to delete, or, if there is only one contact, that it cannot delete the contact. |

Safety

The safety of the user is important, so we have included requirements to ensure it. The most important is the prevention of false negatives (Req 4.1), that is, falls that are not detected. Because it is impossible to make a system that is 100% reliable, we have elected to require a 99% reliability rate, meaning that the rate of false negatives in the system cannot be more than 1%. We believe that with a higher rate the system becomes too unreliable for our users. (Further safety requirements are pending until we have figured out how the robot will follow the user.)

| Index | Description | Priority |

|---|---|---|

| 4.1 | During training, the rate of false negative falls shall be at most 1%. | Should |
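To make the 1% threshold in Req 4.1 concrete, the false negative rate can be computed as the number of missed falls divided by the number of actual falls. The sketch below assumes that this is the intended definition; the function names are hypothetical.

```python
def false_negative_rate(true_positives: int, false_negatives: int) -> float:
    """Share of actual falls that were missed: FN / (TP + FN)."""
    actual_falls = true_positives + false_negatives
    if actual_falls == 0:
        raise ValueError("no actual falls in the evaluation set")
    return false_negatives / actual_falls


def meets_req_4_1(true_positives: int, false_negatives: int, limit: float = 0.01) -> bool:
    """Check the rate against the 1% limit of Req 4.1."""
    return false_negative_rate(true_positives, false_negatives) <= limit
```

For example, 1 missed fall out of 100 actual falls gives a rate of exactly 1%, which just satisfies the requirement, while 2 missed falls out of 100 does not.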

The test plan is as follows:

| Index | Precondition | Action | Expected Output |

|---|---|---|---|

| 4.1 | The robot is powered on. | Inspect the training statistics of the robot. | The rate of false negative falls is no more than 1%. |

Performance

The below requirements are gathered from various estimates of specifications that a computer would need to have in order to efficiently perform the various algorithms and functionalities described in this section. While sensor fusion is currently a "should have", we suspect it will become a "must have" seeing as camera and accelerometer data is typically combined using sensor fusion and the resulting output is used to identify falls. However, this will become more clear in the implementation phase.

| Index | Description | Priority |

|---|---|---|

| 5.1 | The robot shall have a CPU which is at least quad-core and has a minimum clock speed of 2.5 GHz. | Must |

| 5.2 | The robot shall have an integrated or dedicated GPU with at least 700 CUDA cores (or equivalent). | Must |

| 5.3 | The robot shall have at least 16 GB of RAM. | Must |

| 5.4 | The robot shall have a hard drive with at least 250 GB of available space. | Must |

| 5.5 | The hard drive that the robot uses shall be a solid-state drive (SSD). | Should |

| 5.6 | The robot shall have a dedicated sensor fusion processing unit to support tasks requiring sensor fusion. | Should |

| 5.7 | The robot shall have a network card with support for Wi-Fi. | Must |

| 5.8 | The robot shall be powered with a rechargeable battery with a battery life of at least 16 hours before needing to recharge. | Must |

The test plan for the above is fairly straightforward, only needing simple inspection of the hardware specifications for all components specified above. Therefore, we will not display a full test plan.

Algorithm

The robot uses various algorithms to accomplish its functionalities. It must use an object detection algorithm with functionality that allows it to identify falls (Req 6.1, 6.2) and a pathfinding algorithm to move and monitor the user (Req 6.3). Furthermore, for the voice interface, it needs a voice processing algorithm, a text-to-speech algorithm, and a natural language processing algorithm (Req 6.4, 6.5, 6.6) to be able to receive commands from, and interact with, the user.

| Index | Description | Priority | Notes |

|---|---|---|---|

| 6.1 | The robot shall use an object detection algorithm in order to identify objects and people in its environment. | Must | Algorithms 6.1 - 6.6 could additionally have constraints based off time/memory complexity, as well as considerations to be taken based off the environment the robot is to operate in. |

| 6.2 | The robot shall use the algorithm in 6.1 to identify when an object or person has fallen. | Must | |

| 6.3 | The robot shall use a pathfinding algorithm in order to move from one location to another. | Must | |

| 6.4 | The robot shall use a natural language processing algorithm in order to interpret and classify commands. | Could | |

| 6.5 | The robot shall use a text-to-speech algorithm in order to talk to the user. | Could | |

| 6.6 | The robot shall use a voice processing algorithm in order to recognize speech. | Could | |

Completing the test plans of the previous sections means that the test plans for this section should all work correctly. In particular, most requirements of section 2 will test 6.1 and 6.2, requirements 1.8 and 1.9 will test 6.3, all requirements of section 3 will test 6.4, 6.5 and 6.6.

Further requirements on these algorithms are shown below:

Object detection and fall detection

The algorithm must be a real-time algorithm. That is, it must be able to continuously track and identify the user. Furthermore, the algorithm should be robust in detecting people, for example, under different lighting conditions, with different people, or with obstacles that could obscure its view. False positives are not too harmful, as the user can cancel the notification easily, but false negatives must be avoided as much as possible, as mentioned previously. The algorithm itself would need to be trained, as it will likely be neural network based, but there should be little to no calibration required on the user's side, because users may have difficulty understanding how to work with the robot.

Pathfinding

The algorithm must be able to make a safe, efficient path from point A to point B for the robot to take. It must be able to integrate itself with the object detection algorithm to identify obstacles and path around them. Furthermore, it must be able to dynamically adjust the path as needed, because of our safety requirements previously listed. In terms of efficiency, there should be some method to split the search space into smaller, more manageable clusters, such that the time and space complexity do not become too high.
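As an illustration of pathing around obstacles, the sketch below runs a breadth-first search on a small occupancy grid. Breadth-first search is only a simple stand-in here; the requirements do not name a specific pathfinding algorithm, and the grid representation and function name are assumptions.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def shortest_path(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Breadth-first search on a 4-connected occupancy grid (0 = free, 1 = obstacle).

    Returns the cells from start to goal, or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])
    parents: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None
```

A simple way to adjust the path dynamically under these assumptions is to mark newly detected obstacles in the grid and call the function again from the robot's current cell.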

Natural language processing

Accuracy is the most important part here: the algorithm should be able to accurately interpret the input it is given and classify it into one of the given commands. The robot interface will be developed in English, so the algorithm should at least be able to understand English text, but in the future, if the robot is to be localized, it should also be able to understand other languages. It should be robust enough to understand different English dialects and the differences between them (for example, figures of speech can differ between American English, British English, and other dialects). The algorithm could also have the ability to learn from the user, so that it is able to classify commands faster.

Text-to-speech

The algorithm should be able to convert text into clear, understandable speech for the user. Correct intonation, stress, emphasis, and enunciation are highly valued, but not an absolute requirement. As with the previous requirements, the algorithm should work for English text.

Voice processing

The algorithm must be able to recognize speech and translate it into text for the NLP algorithm to process. The algorithm should still work in the presence of noise, for example, speech coming from the TV should be ignored or suppressed. To do this, it could distinguish the user's voice from others' voices.

Specification

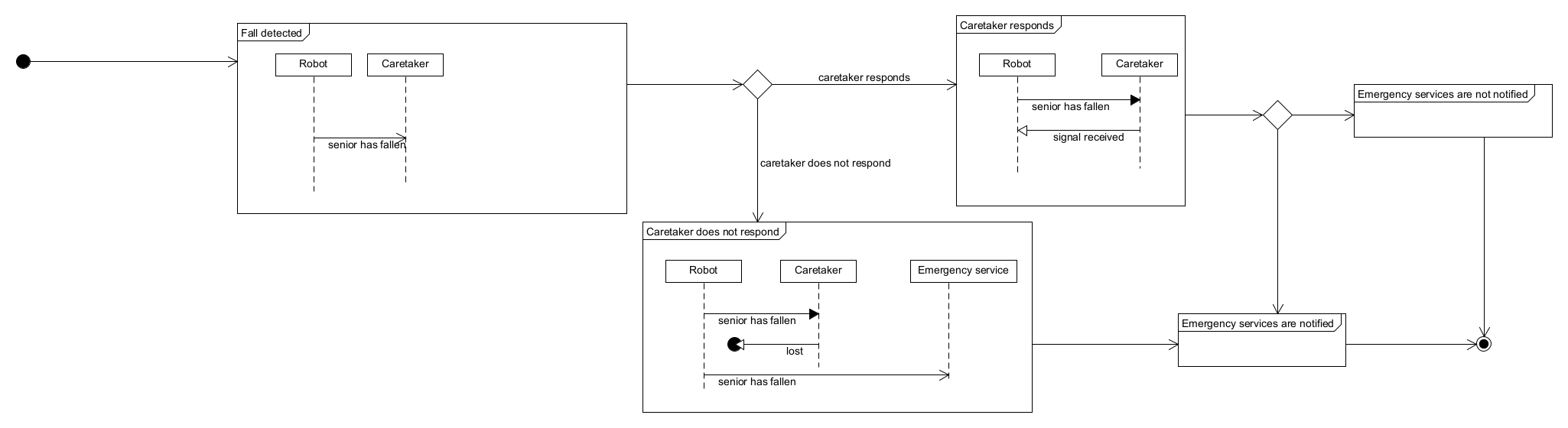

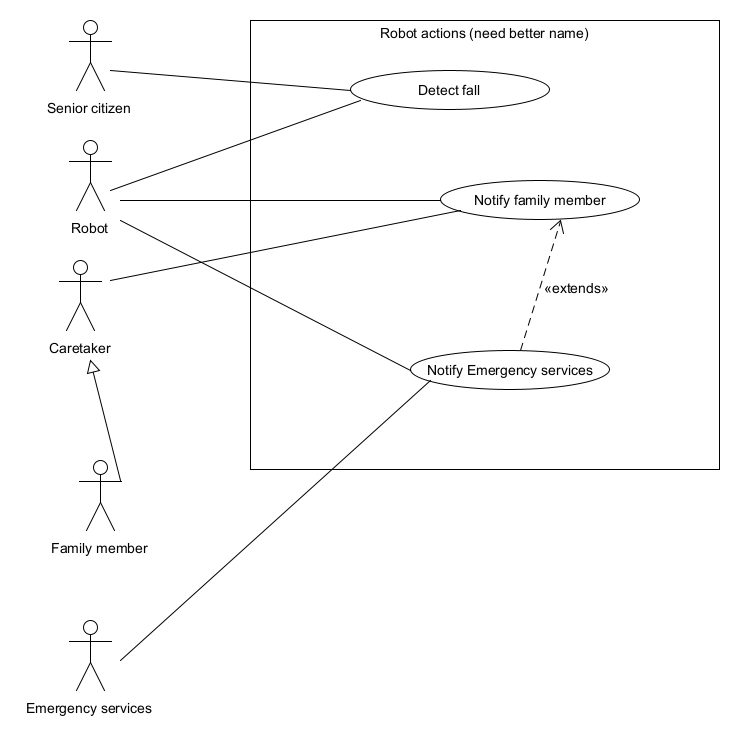

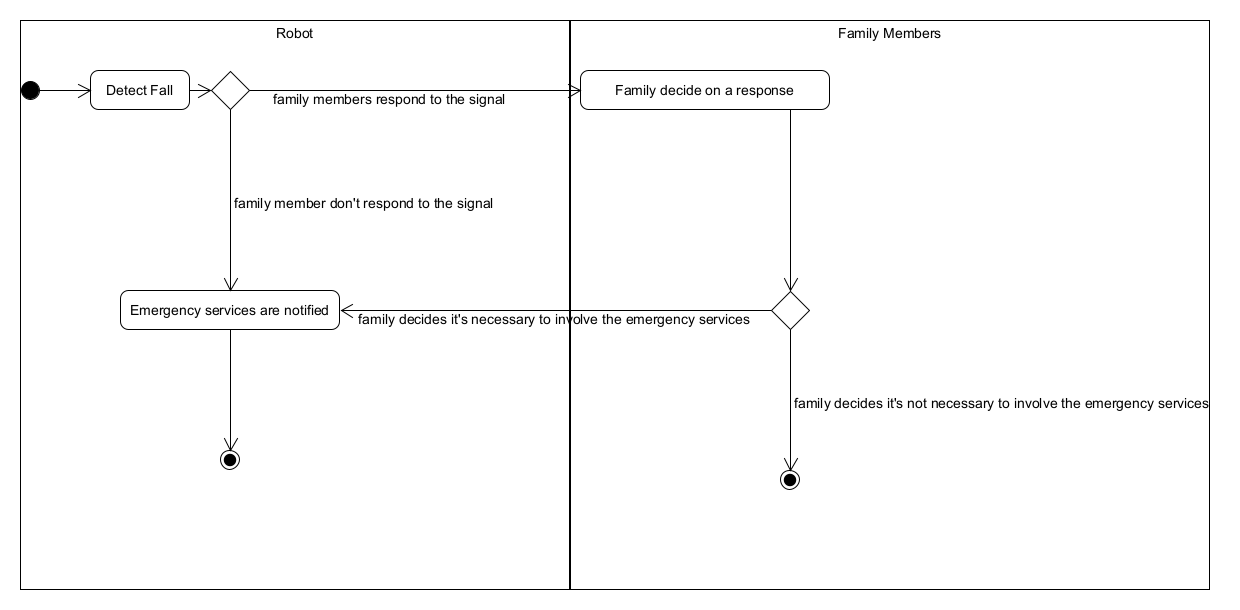

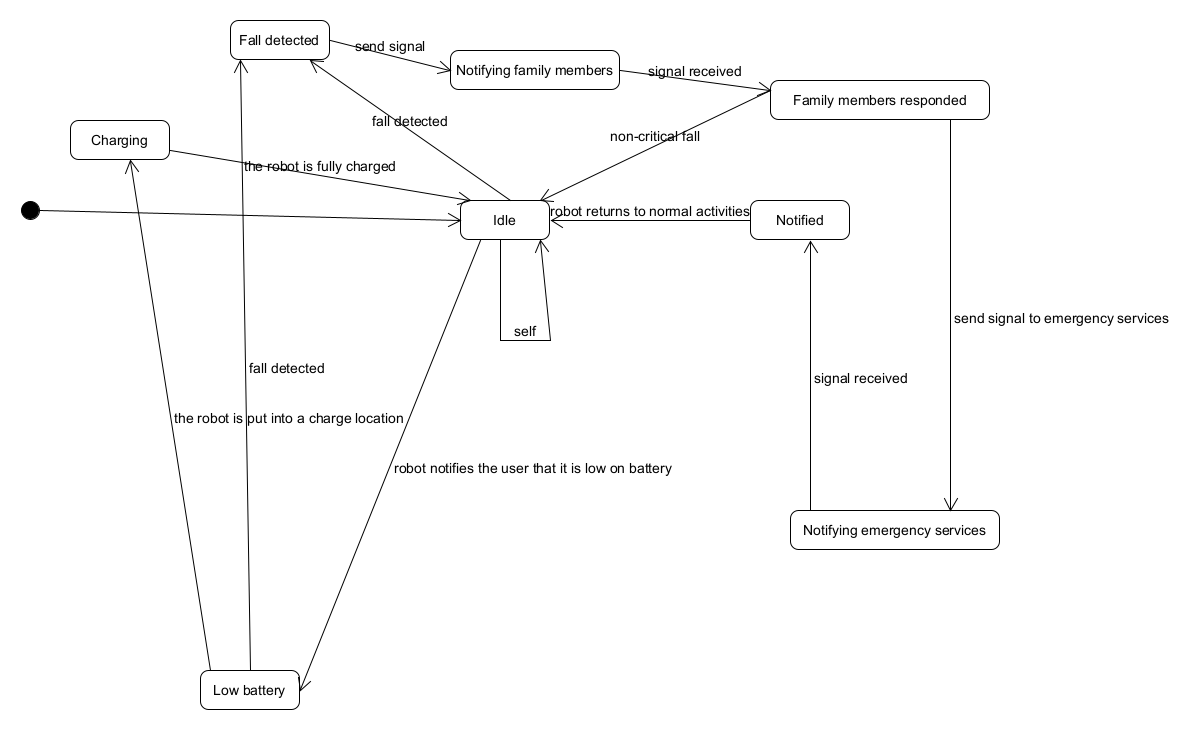

The robot is specified by four different types of UML diagrams: sequence, use case, state machine, and activity. These diagrams were chosen because they cover all the necessary aspects of the robot, from interaction with actors to the robot's internal implementation.

1. Sequence diagram: This models the communication between different interaction partners. A sequence diagram contains different types of messages (synchronous, asynchronous, response message, create message). For this particular sequence diagram we show all possible sequences of interactions. We show synchronous messages with a normal arrow, and asynchronous messages with a dotted arrow.

2. Use case diagram: The use case diagram describes the behavior of a system from the view of the user. For our implementation we show the view points of every actor, without addressing the internal implementation.

3. Activity diagram: The activity diagram focuses on modeling procedural processing aspects of a system. It specifies the control flow and data flow between the various steps (actions) required to implement an activity.

4. State machine diagram: The state machine diagram can be used to show the states in which a system or an object can find itself during its “life cycle”, that is, from its creation to its destruction. The diagram also shows the conditions under which the transitions between these states occur.

Ethical analysis

The introduction of this home robot designed for safety monitoring, particularly for individuals with limited mobility, raises several ethical considerations that must be carefully addressed. As mentioned previously, privacy and data security are two of the main concerns when information is collected using cameras and shared remotely. Beyond that, the users (mainly the individuals being monitored) may feel a loss of independence and autonomy under monitoring. The introduction of these kinds of robots may also result in excessive reliance on technology, which can reduce people's sense of responsibility for their loved ones. The issues and possible solutions are discussed below:

1. Privacy concerns: The primary ethical concern is related to privacy. The built-in camera, while essential for safety monitoring, may intrude on the personal privacy of individuals within their homes since it involves the collection and processing of sensitive data involving their private lives. It is crucial to implement robust privacy protection features, ensuring that users have control over camera access and that visual data is securely stored. Clear communication and transparency regarding data usage and storage are essential and the users should be well-informed about every aspect of the technology regarding privacy before opting to use it.

2. Program performance: It is essential for the program to be sufficiently accurate especially when it is dealing with health-related tasks. Any misjudgments or mistakes in decision-making may result in severe consequences. It may raise the problem of accountability and responsibility, as the program developer in this case is supposed to take full responsibility for any unexpected failure of the program. Therefore, the program must undergo strict testing protocols to ensure its precision and reliability.

3. Compromised autonomy: While the robot aims to enhance safety and well-being, the autonomy and privacy of the user are to some extent compromised. Being constantly monitored, even by their loved ones, deprives individuals of their personal space. Striking a balance between providing necessary care and preserving personal freedom, and obtaining full, informed consent from individuals before deploying the robot in their homes, are crucial.

4. Dependence on technology: With the help of the robot, it is no longer necessary for family members to be physically present most of the time to attend to the user. Dependence on the robot may grow to the point that people no longer take responsibility for their loved ones. Providing companionship, particularly for elderly individuals, holds equal importance. Moreover, if most of the caretaker jobs have been taken over by robots, then most human caretakers will have lost their jobs.

5. Affordability and continuous improvement: It is critical to ensure that the home robot is affordable and accessible to a broad range of individuals. The technology aims to solve health-related problems for the entire society rather than the privileged few. The developers hold the responsibility to control the investment in the product and to limit its cost. At the same time, it would be good practice for the developers to gather feedback and stay responsive. Continuous improvement is desired to address any unforeseen ethical challenges.

Design

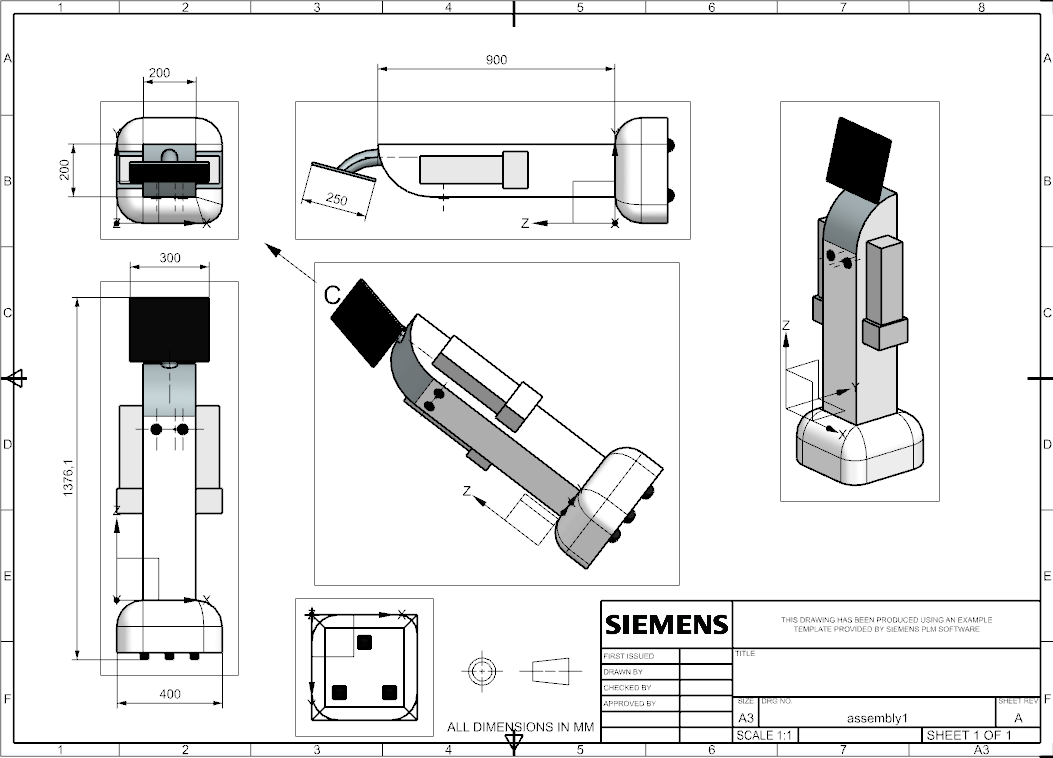

After making sketches, we decided that although the robot's design serves its purpose, a more user-friendly design could be created. Thus, we adjusted it so that the screen is now the "head" of the robot, which now has arms. The wheels are still attached to the base of the robot, but they are now hidden underneath it. We decided on this as our design for the time being, and created CAD models to demonstrate what the robot might look like. The technical drawings have been generated from the CAD model as shown below.

Prototype

Unfortunately, due to the restrictions of the project, it is not possible for us to implement all of the requirements we have developed. As a prototype, we have picked some significant features and functions that will be implemented by the end of this project.

| Index | Description |

|---|---|

| 2.1 | The robot shall be able to detect falls. |

| 2.2 | When the robot detects a fall, the robot shall notify the designated emergency contact within 30 seconds. |

| 2.3 | The notification shall be in the form of a VOIP video call to the emergency contact, or a SIM-card based call if an Internet connection is unavailable. |

| 2.5 | When the robot detects a fall, and the emergency contact has not responded (as described in 2.4), the robot shall send a text message to the emergency contact. |

| 2.6 | The notification (text or call) that the robot sends shall contain the name, date of birth, and location of the user. |

| 2.7 | When the robot detects a fall, and the emergency contact has not responded (as described in 2.4), the robot shall take a picture of the fall and send the picture to the designated emergency contact via SMS. |

| 2.8 | When the robot detects a fall, the robot shall have a mechanism to allow the user to cancel the notification within 30 seconds. |

| 2.10 | The robot shall monitor the user continuously using the camera mounted on its body. |

| 3.1 | The robot's interface shall be controlled using voice commands classified as follows: "Need help", "Do not need help". |

| 4.1 | During training, the rate of false negative falls shall be at most 1%. |

| 6.1 | The robot shall use an object detection algorithm in order to identify objects and people in its environment. |

| 6.2 | The robot shall use the algorithm in 6.1 to identify when an object or person has fallen. |

| 6.4 | The robot shall use a natural language processing algorithm in order to interpret and classify commands. |

| 6.5 | The robot shall use a text-to-speech algorithm in order to talk to the user. |

| 6.6 | The robot shall use a voice processing algorithm in order to recognize speech. |

As can be seen from the selected requirements in the table above, most of the elements belong to functionalities, which will be the major part of our implementation. We have omitted sections 1 and 5 for brevity, because we do not yet know if we will be able to use an actual robot or if we will have to simulate it using a laptop. In either case, both would satisfy those requirements by default (most if not all of them). We will be using pre-trained models to realize the functionalities related to fall detection, natural language processing, voice processing and text-to-speech. The other functionalities will be implemented by ourselves.

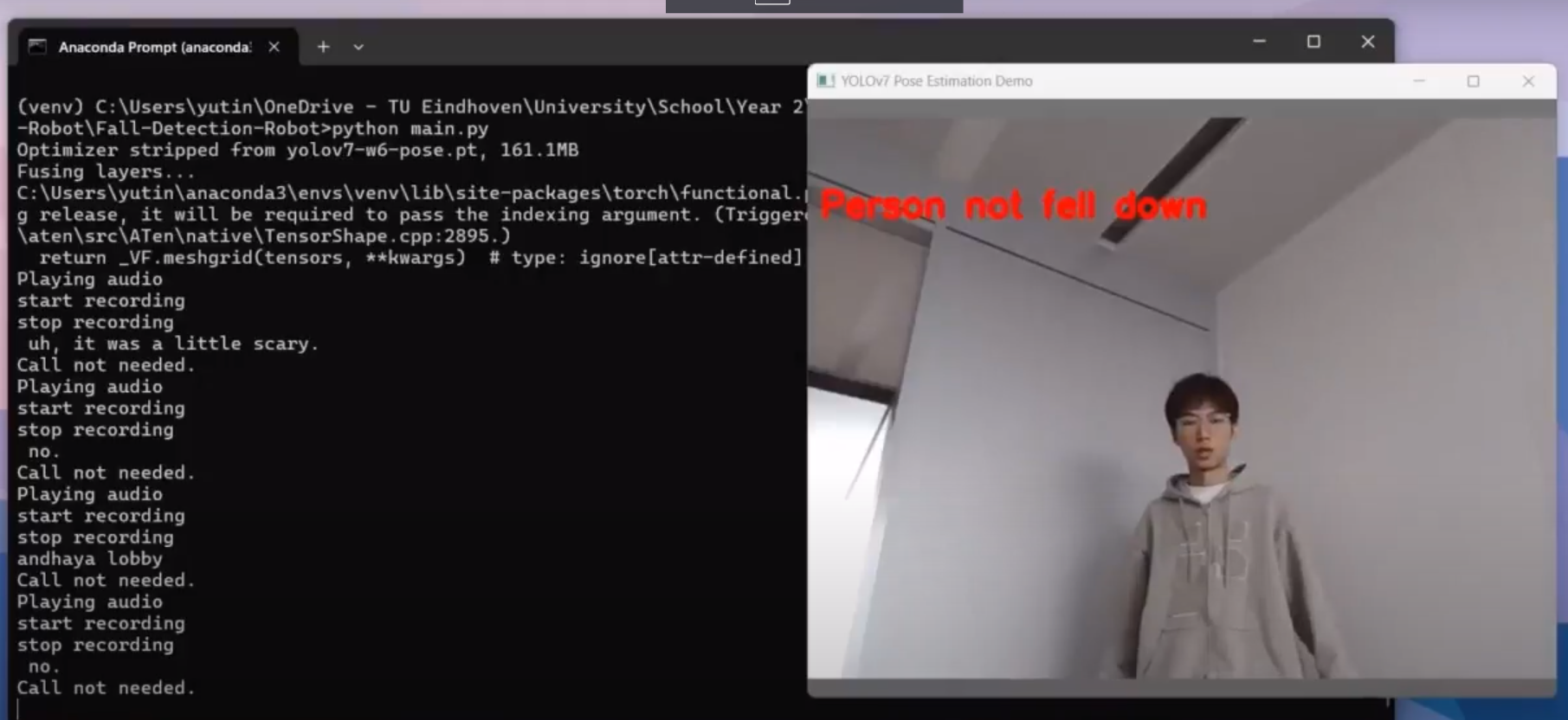

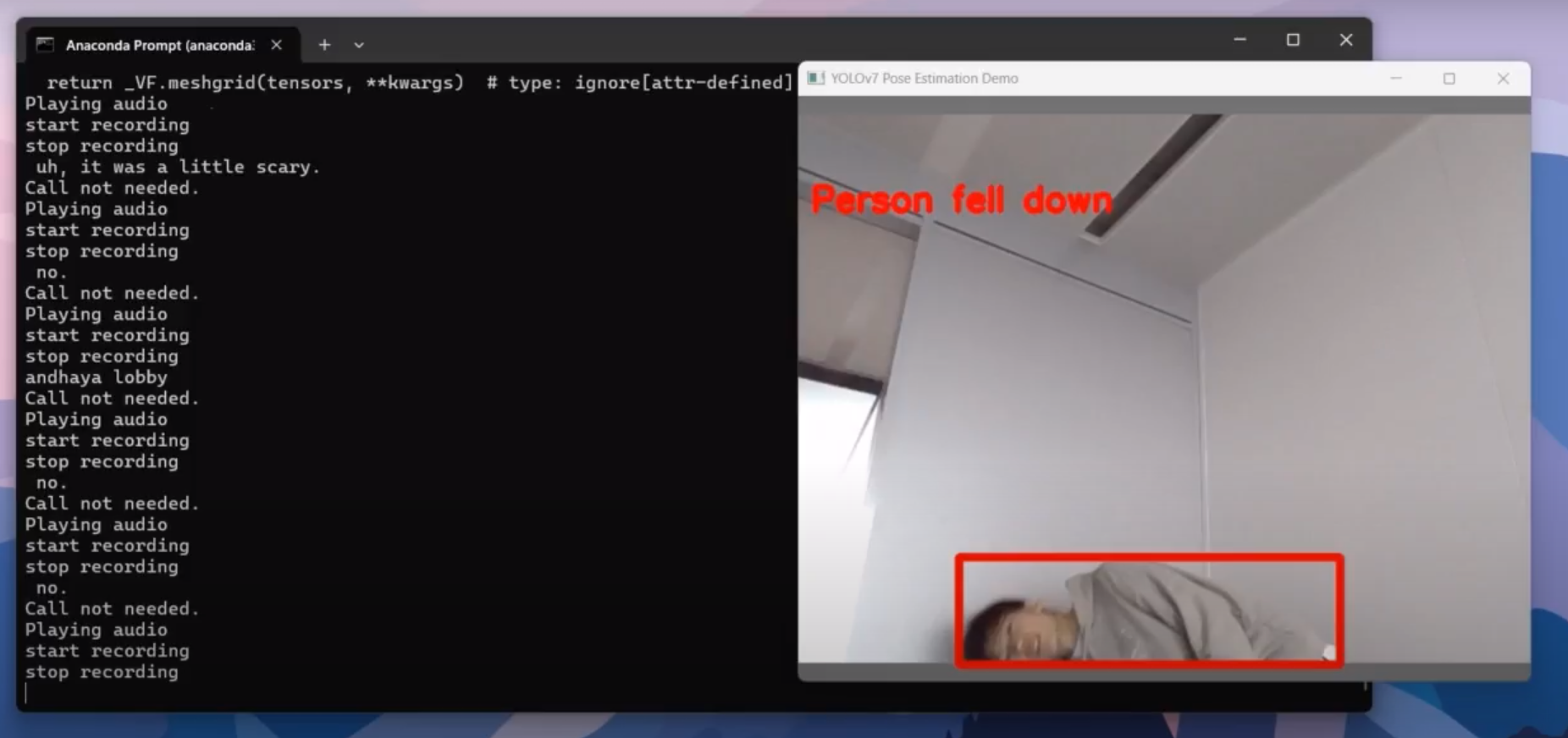

Fall detection Algorithm

We used two libraries built on the yolov7[31] object detection model. The first one[32] is a package for human pose estimation. It can use the laptop camera to detect a human pose in real time as a skeleton. The second one[33] is a package that detects a person falling down in a video. We combined these two packages to detect human falls in real time and show them on screen.

1. yolov7[31]

Yolov7 is an object detection model whose authors report that, at the time of its release, it surpassed other real-time object detectors in both speed and accuracy. Its GitHub repository provides several branches for object recognition, pose estimation, Lidar, and other functionalities. It can perform detection on videos and images provided by users, and it leaves room for users to customize the algorithm. For example, users can train the model on their own datasets, or modify some key points to bias the object recognition differently. Therefore, it is a good model for us to use in this project to detect human falls. However, out of the box it only detects poses in images and video files, whereas our robot needs to detect a person falling in real time. Hence, some integration with other algorithms is needed.

2. yolov7-pose-estimation[32]

This library is a modification of yolov7. The main reason we use it is that it can apply the yolov7 pose detection to a webcam feed in real time. This suits our needs well, because with this library we can detect the pose of the user in real time. The remaining step is to determine when the user should be recognized as falling down.

3. yolov7_keypoints[33]

This library is another modification of yolov7. It uses a fall detection algorithm that extracts some human body key points and calculates the y-coordinate differences between the shoulder, hip, and foot. When the height differences between shoulder and hip, hip and foot, and shoulder and foot are small enough, it classifies the pose as a fall.

Therefore, we integrated the second and third libraries to solve the problem of detecting a human fall in real time. We are now able to use a webcam to detect whether a person falls down.
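The key-point heuristic described for the third library can be sketched as follows. This is not the library's actual code: it assumes that all three vertical gaps must fall below a single threshold, and the function name and the `max_gap` parameter (in pixels) are hypothetical.

```python
def looks_like_fall(shoulder_y: float, hip_y: float, foot_y: float, max_gap: float) -> bool:
    """Flag a pose as a fall when shoulder, hip, and foot are at nearly the same height.

    Coordinates are image y-values of the key points; max_gap is an assumed
    threshold that would have to be tuned for the camera and resolution.
    """
    gaps = (
        abs(shoulder_y - hip_y),
        abs(hip_y - foot_y),
        abs(shoulder_y - foot_y),
    )
    # A lying person has all three key points close together vertically.
    return all(gap <= max_gap for gap in gaps)
```

A standing person has large vertical gaps between these key points, so the check fails, whereas a person lying on the floor passes it. This also illustrates why lying down to rest can be mistaken for a fall by a purely pose-based check.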

Voice Recognition Algorithm

When an emergency happens, the user might not be able to move, and interviewees also mentioned that they preferred to use voice commands to call emergency contacts in this situation, so we decided to add voice recognition functions to our robot. When a possible fall has been detected, the robot shall ask the user whether they need help. If the user gives a positive response or does not reply, the robot shall call the emergency contact immediately. To analyze the user's response, a suitable voice recognition API is needed.

1. Whisper[34] is an automatic speech recognition (ASR) system developed by OpenAI and trained on 680,000 hours of multilingual and multitask supervised data collected from the web. Whisper is built on the Transformer architecture, which is also commonly used in large language models. It is free, open-source, capable of handling accents, and supports multiple languages. The downside of Whisper is that transcribing speech into text can take a long time. However, it offers models of different sizes that trade transcription speed against accuracy, and if we can restrict the user's reply to a few simple words, like yes or no, the processing time is still acceptable.

2. Google Speech-to-Text AI[35]. Like Whisper, it can transcribe speech into text. As it is a cloud-based service, it requires no local setup, but the downside is that it is not free to use. After the first 60 minutes each month, it charges users 0.024 dollars per minute, which could affect users' purchasing decisions.
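Whichever speech recognition service produces the transcript, the decision that follows can be sketched as a keyword check: a positive reply or no reply leads to a call, a negative reply cancels it. The sketch below is illustrative only. The keyword sets and the function name are hypothetical, and treating an unclear reply as a reason to call is an assumption made here for safety.

```python
from typing import Optional

# Hypothetical keyword sets; a real system would need a richer vocabulary.
POSITIVE_KEYWORDS = {"yes"}
NEGATIVE_KEYWORDS = {"no"}


def needs_call(transcript: Optional[str]) -> bool:
    """Decide whether to call the emergency contact from the user's transcribed reply."""
    if transcript is None or not transcript.strip():
        return True  # No reply: the user may be unable to respond.
    words = {word.strip(",.!?").lower() for word in transcript.split()}
    if words & POSITIVE_KEYWORDS:
        return True
    if words & NEGATIVE_KEYWORDS:
        return False
    return True  # Assumption: an unclear reply is treated as a request for help.
```

Because the positive keywords are checked first, a reply containing both "yes" and "no" results in a call under this sketch.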

Programmable calling and SMS texting

Programmable calling and SMS texting have also been implemented. Twilio was used as the application programming interface to initiate the call and to send the text message. A host phone number was created on Twilio, and the phone number of one of the group members was assigned as the recipient of the call and the SMS message.
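A minimal sketch of how such a notification might be sent with Twilio's Python helper library is shown below. It is not the project's code: the function names, the message wording, and all argument values are assumptions, and the account credentials and phone numbers must come from the Twilio account in use.

```python
from xml.sax.saxutils import escape


def build_alert_text(name: str, date_of_birth: str, location: str) -> str:
    """Compose the alert so that it carries the three fields required by Req 2.6."""
    return f"Fall detected. {name} (born {date_of_birth}) may need assistance at {location}."


def send_alert(account_sid: str, auth_token: str, from_number: str, to_number: str, text: str) -> None:
    """Send the alert as an SMS and read it out in a voice call (requires the twilio package)."""
    # Imported here so that build_alert_text can be used without Twilio installed.
    from twilio.rest import Client

    client = Client(account_sid, auth_token)
    # Text message to the emergency contact.
    client.messages.create(body=text, from_=from_number, to=to_number)
    # Voice call that speaks the same text; escape() keeps the TwiML well-formed.
    twiml = f"<Response><Say>{escape(text)}</Say></Response>"
    client.calls.create(twiml=twiml, from_=from_number, to=to_number)
```

Keeping the message builder separate from the sending function makes it possible to check the content of the notification (Req 2.6) without placing a real call.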

Test plan & results

We made a test plan that covers everything listed in the implementation. We then performed the tests and recorded the results.

The test procedure, showing an optimal order for testing all functionalities, is listed below. The order is that of the plan itself: starting with 2.1, then 3.1, then 2.8, and so on, until 4.1. As a reminder, there are no separate test plans for the requirements of Section 6, because the requirements tested below also implicitly test the Section 6 requirements. We have modified the Action and Expected Output columns of some tests for clarity.

| Index | Requirement | Precondition | Action | Expected Output |

|---|---|---|---|---|

| 2.1 | The robot shall be able to detect falls. | The robot is powered on. | Pretend to fall down. | The robot detects the fall and notifies you of it via voice. The robot asks you if you are okay and if you need help. |

| 3.1 | The robot's interface shall be controlled using voice commands classified as follows: "Need help", "Do not need help". | The robot is powered on. | Note: it may be more optimal to test 2 first, then test 1. Go through each action and check that it matches the expected output. | Check the output based on the corresponding action. |

| 2.8 | When the robot detects a fall, the robot shall have a mechanism to allow the user to cancel the notification within 30 seconds. | The robot has detected a fall. | Tell the robot to cancel the notification. | The robot cancels the notification process and goes back to idly following you around. |

| 2.2 | When the robot detects a fall, the robot shall notify the designated emergency contact within 30 seconds. | The robot is powered on. | Pretend to fall down. | The robot detects the fall and notifies the designated contact within 30 seconds. The notification is either a VOIP video call or a SIM card based call, if the internet is not available. The robot in the call says that the user has fallen and requires assistance. The robot says the name, date of birth, and the address of the user. |

| 2.10 | The robot shall monitor the user continuously using the camera mounted on its body. | The robot is powered on. | Move or pretend to fall down. If you pretend to fall down, when the robot asks you if you need assistance, say no. | If the user moves, the robot follows the user around. If the user pretends to fall down, the robot will notify the user of it via voice, asking them if they need assistance. After replying no, the robot goes back to following the user around. |

| 2.3 | The notification shall be in the form of a VOIP video call to the emergency contact, or a SIM-card based call if an Internet connection is unavailable. | The robot has detected a fall. | To test the video call, simply wait until the robot notifies the contact. To test the SIM call, turn off the internet, repeat the fall, and wait until the robot notifies the contact. | The video call and SIM call both successfully connect to the emergency contact with no issues. The robot in the call says that the user has fallen and requires assistance. The robot says the name, date of birth, and the address of the user. |

| 2.5 | When the robot detects a fall, and the emergency contact has not responded (as described in 2.4), the robot shall send a text message to the emergency contact. | The robot has detected a fall. | Wait until the robot notifies the contact. Make sure the emergency contact(s) has/have not responded. | The emergency contact(s) receive(s) a text message from the robot. The text message says that the user has fallen and requires assistance. The text message contains the name, date of birth, and the address of the user. |

| 2.6 | The notification (text or call) that the robot sends shall contain the name, date of birth, and location of the user. | The robot has sent a text message (2.5) | Inspect the text message. | The text message contains the name, date of birth, and location of the user. |

| 2.7 | When the robot detects a fall, and the emergency contact has not responded (as described in 2.4), the robot shall take a picture of the fall and send the picture to the designated emergency contact via SMS. | The robot has sent a text message (2.5) | Inspect the text message. | The text message contains a picture of the fall. |

| 4.1 | During training, the rate of false negative falls shall be at most 1%. | The robot is powered on. | Inspect the training statistics of the robot. | The rate of false negative falls is no more than 1%. |

Discussion & Future steps

After testing our program on a real robot, we have demonstrated that our system can be implemented on a home robot and operate as expected. To complete the design process, we reflect here on what we have achieved and what we did not manage to do.

In total, we managed to implement three main features on the robot: a fall detection algorithm, simple voice recognition, and emergency calling. These three features work in series and together form a complete cycle. The fall detection algorithm is activated first; it monitors the user's movement and detects falls. During our experiment, this algorithm performed as expected. Although the system sometimes reacted with a delay because of lag caused by the computer we used, the output of the detection was accurate. To ensure better performance of the program, a computer with more computing power is required. The fall detection method described in the Design section is generally accurate, except that it can sometimes mistake the user lying down or going to sleep for a fall.

That is when the voice recognition system comes into play. Currently the robot is only able to recognize simple commands such as "yes" or "no". When it sees a fall, it checks with the user whether an emergency call is needed. However, situations may arise where the user repeatedly bends down and stands up, for example while cleaning the floor, which may confuse the robot. To avoid repetitive detection and checking, further improvements can be made to the system, which are discussed in the Future steps below.

Once the output of the voice recognition indicates that a call is needed, the robot calls the designated emergency contact and at the same time sends a text message including the name, date of birth, and location of the user. For the demonstration, a Twilio business account was created with a free trial. The program uses a virtual number that will expire in one month. In reality, the emergency communication system has to be much more comprehensive, as can be seen from the Future steps discussed below.

Apart from the main functionalities, we could have paid more attention to the ethical concerns.

Future steps

1. One potential enhancement to the current robot would involve integrating a video call function. The scenario of a sudden fall can be intricate, and present technology doesn't guarantee 100% accuracy in fall detection. Therefore, the most pragmatic approach to accurately assessing the situation would be through a video call to the caregiver. This would enable the caregiver to gain a clearer understanding of the user's condition.

2. Utilizing a large language model could significantly enhance our ability to interpret user responses. Currently, our system converts user responses into strings and checks for keywords associated with positive or negative sentiments, such as "yes" and "no." However, in real-world scenarios, user responses can be unpredictable and may not neatly fit into predefined categories of positivity or negativity. Given the advanced capabilities of large language models today, it would be more realistic and accurate to leverage them for response analysis, rather than relying solely on predetermined keywords.

3. The robot currently employs the YOLOv7 API for detecting sudden falls, but its analysis is limited to a single frame, which prevents measuring the object's velocity and acceleration. In future iterations, tracking a sequence of the object's locations across frames would allow us to calculate its speed and acceleration, thereby improving the accuracy of fall detection.
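The third step could be approached roughly as in the Python sketch below, which estimates vertical speed and acceleration from the last few detected positions using finite differences. It is an illustration only: the class `VerticalMotionTracker`, the function `looks_like_fall` and the threshold of 300 pixels per second are hypothetical and would need tuning on real footage. The sketch assumes the detector supplies the vertical centre of the person's bounding box for each frame, with strictly increasing timestamps.

```python
from collections import deque
from typing import Deque, Optional, Tuple


class VerticalMotionTracker:
    """Keeps recent (timestamp, y) samples of a tracked person's centre."""

    def __init__(self, history: int = 3) -> None:
        # At least three samples are needed to estimate acceleration.
        self.samples: Deque[Tuple[float, float]] = deque(maxlen=max(history, 3))

    def update(self, timestamp: float, y_center: float) -> None:
        """Record the centre position (pixels) seen at `timestamp` (seconds)."""
        self.samples.append((timestamp, y_center))

    def velocity(self) -> Optional[float]:
        """Vertical speed in pixels/second from the last two samples."""
        if len(self.samples) < 2:
            return None
        (t0, y0), (t1, y1) = self.samples[-2], self.samples[-1]
        return (y1 - y0) / (t1 - t0)

    def acceleration(self) -> Optional[float]:
        """Vertical acceleration in pixels/second^2 from the last three samples."""
        if len(self.samples) < 3:
            return None
        (t0, y0), (t1, y1), (t2, y2) = list(self.samples)[-3:]
        v01 = (y1 - y0) / (t1 - t0)
        v12 = (y2 - y1) / (t2 - t1)
        # Each velocity applies at its interval midpoint; the midpoints
        # are (t2 - t0) / 2 apart.
        return (v12 - v01) / ((t2 - t0) / 2)


def looks_like_fall(tracker: VerticalMotionTracker,
                    speed_threshold: float = 300.0) -> bool:
    """Flag fast downward motion (image y grows downward, so v > 0 is down)."""
    v = tracker.velocity()
    return v is not None and v > speed_threshold
```

A speed check of this kind could complement the single-frame detection: slowly lying down produces a low downward speed, whereas a fall produces a high one.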

Conclusion

Family members' limited time for caregiving and the cost of hiring professional caregivers have combined to leave elderly people living alone without adequate care. Moreover, many of these elderly people suffer from health conditions or disabilities, and the number of individuals facing these challenges continues to rise. We therefore decided to develop a home robot that safeguards elderly people who live alone and have limited mobility against injuries caused by falling.

Our project started with research on the problem, particularly in elderly care. The literature reveals concern about the care of the elderly due to a shortage of caregivers. Moreover, the population of people with dementia continues to grow, leading to a growing demand for robotic caretakers. Hence, our focus shifted towards designing a home robot that safely monitors the individual and reaches out for help if the user falls. First, we compiled a list of existing products, which provided initial inspiration for our robot’s functionalities. Then, to address the problem more accurately, we performed a USE analysis and an ethical analysis and conducted interviews with three users.

These findings form the basis of the specifications and sequence diagrams that determine the functions of the robot. In general, the robot should be able to detect falls accurately, be mobile, communicate with the user, contact other people and emergency services if needed, be user-friendly, and store its data locally. Achieving these features is feasible through the implementation of trainable object detection, pathfinding algorithms, natural language processing, text-to-speech and voice processing algorithms.

To validate our concept, we implemented the core functionalities of our design on a programmable robot from the robotics lab. This prototype is able to detect human falls, ask if the user needs help, receive the user’s command and notify pre-set emergency contacts through calling and SMS. The robot passed the test plan and operated as expected, which shows the viability of our solution in addressing the research problem.

All in all, our design addresses the challenges of an aging population and a shortage of caregivers. Our project offers a solution that can enhance the quality of life for both the elderly and their families. Furthermore, the implementation of our prototype demonstrates the potential of deploying robots in healthcare to support vulnerable populations worldwide.

Nevertheless, due to time constraints, not all features of our design are implemented in the prototype. Further improvements to the algorithms and more tests are needed before the design can be deployed in the real world.

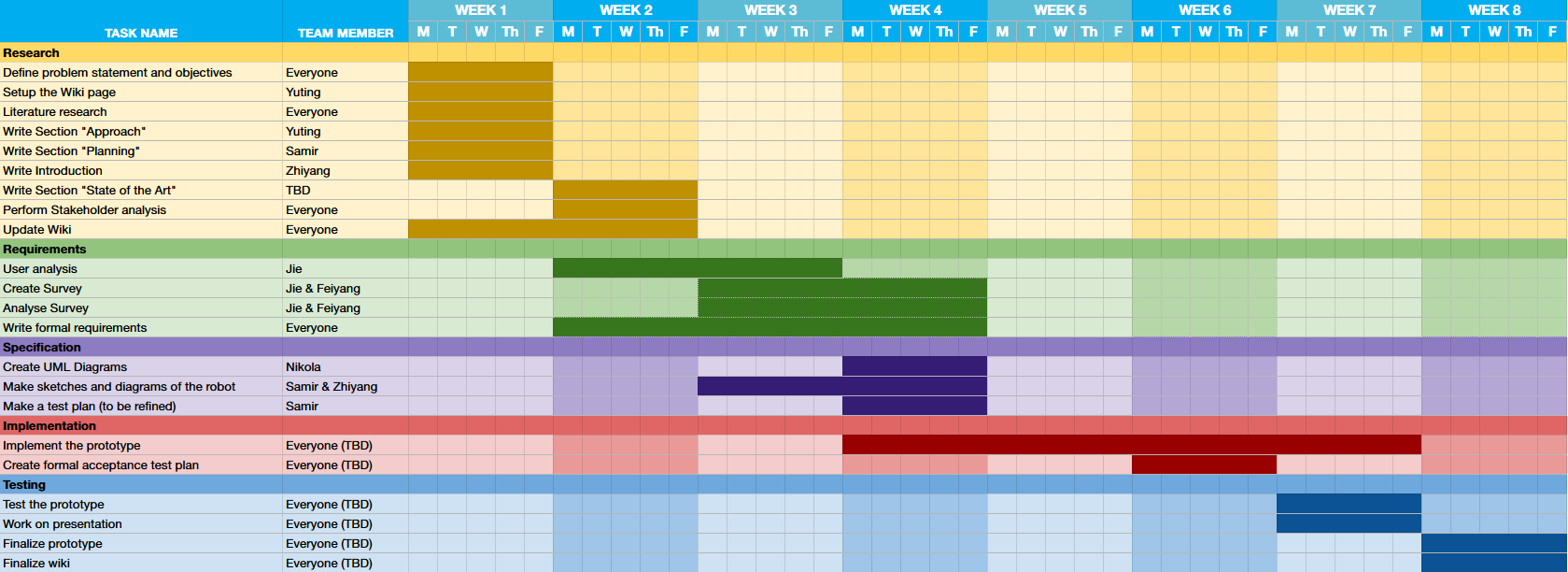

Approach

To reach the objectives, we split the project into five stages, distributed over 8 weeks with some overlap. Everyone in the team is responsible for some tasks in these stages.

- Research stage: In weeks 1 and 2, we mainly focus on formulating the problem statement and objectives and on research. We first need to make a plan for the project. Defining the problem statement and objectives fixes the direction of the project. Literature research helps us gather information on the state of the art and on the advantages and limitations of current solutions.

- Requirements stage: From week 2 to week 4, we will do user analysis to further determine the goal and requirements of our product. We will collect information about user needs through surveys and interviews, which can draw on information found in the research stage: for example, what the user thinks of current solutions and what improvements can be made.

- Specification stage: In weeks 3 and 4, we create the specification of our product using techniques such as UML diagrams and user interface drawings. The user analysis and the research allow us to make the specification more detailed. After this, a test plan will be made so that the product can be tested against the requirements and the specification.

- Implementation stage: The prototype of our product will be implemented in this stage, from week 5 to week 7. We plan to create only the digital part of the product due to time constraints. A more formal test plan will also be constructed for later use.

- Testing stage: In weeks 7 and 8, the prototype will be tested against the test plan so that we can examine whether the product reaches our goal and solves the problem. The prototype, presentation and wiki page will also be finalized in this stage.

Planning

We created a plan for the development process of our product based on the previously described approach. This plan is shown in the Gantt chart below:

Task Division

We subdivided the tasks amongst ourselves as follows:

| Research task | Group member | Requirements task | Group member | Specification task | Group member | Implementation task | Group member | Testing task | Group member |
|---|---|---|---|---|---|---|---|---|---|
| Define problem statement and objectives | Everyone | User Analysis | Jie | Create UML Diagrams | Nikola | Implement the prototype | Everyone | Test the prototype | Everyone |
| Setup the Wiki page | Yuting | Create Survey | Jie & Feiyang | Define user interface | Samir & Zhiyang | Create formal acceptance test plan | Everyone | Work on presentation | Everyone |
| Literature research | Everyone | Analyse Survey | Jie & Feiyang | Make a test plan | Samir | Finalize prototype | Everyone | | |
| Write Section "Approach" | Yuting | Write Formal Requirements | Everyone | | | | | | |
| Write Section "Planning" | Samir | | | | | | | | |
| Write Introduction | Zhiyang | | | | | | | | |
| Write Section "State of the Art" | Samir & Nikola | | | | | | | | |
| Perform Stakeholder Analysis | Everyone | | | | | | | | |
| Update Wiki | Everyone | | | | | | | | |

Milestones

By the end of Week 1 we should have a solid plan for what we want to make, a brief inventory of the current literature, and a broad overview of the development steps required to make our product.

By the end of Week 3 we should have analysed the needs of our users and stakeholders, formalized these needs as requirements according to the MoSCoW method, and have a clear state-of-the-art.

By the end of Week 4 we should have specified the requirements as UML diagrams, blueprints, etc., created a basic user interface, and created an informal test plan.

By the end of Week 6 we should have created a formal acceptance test plan.

By the end of Week 7 we should have finished the implementation of our product's prototype.

By the end of Week 8 we should have tested the product according to the acceptance test plan, finished the presentation, finalized the prototype, and finalized our report.

Deliverables

The final product will be a robot that is programmed to detect when a user falls and alerts emergency services if they do. Furthermore, we would like it to be capable of identifying fall risks and alerting the user of them, but we do not yet know if this can also be done within the course timeframe.

Logbook

Week 1

| Name | Total hours | Tasks |
|---|---|---|
| Yuting | 6 | Introduction lecture (1), meeting (1.5), Approach section (1.5), literature study (2) |
| Jie | 6 | Introduction lecture (1), meeting (1.5), Approach section (1.5), literature study (2) |
| Zhiyang | 6 | Introduction lecture (1), meeting (1.5), Introduction section (1.5), literature study (2) |
| Samir | 6 | Introduction lecture (1), meeting (1.5), Gantt chart (1), Planning section (1.5), Literature research (1) |
| Feiyang | 5 | Introduction lecture (1), meeting (1.5), literature study (2.5) |
| Nikola | | |

Week 2

| Name | Total hours | Tasks |
|---|---|---|
| Yuting | 5.5 | Feedback session + meeting (1.5), brainstorming on new ideas (1), Research (2), Analysis of target groups (1) |
| Jie | 7 | Feedback session + meeting (1.5), brainstorming on new ideas (1), Analysis of target groups (3), State of the art section (1) |
| Zhiyang | 6.5 | Feedback session + meeting (1.5), brainstorming on new ideas (1), rewriting Introduction (2), Ethical analysis section (2) |
| Samir | 10.5 | Feedback session + meeting (1.5), brainstorming on new ideas (1), State of the art section (8) |
| Feiyang | 4.5 | Feedback session + meeting (1.5), brainstorming on new ideas (1), Analysis of target groups (2) |
| Nikola | | |

Week 3

| Name | Total hours | Tasks |
|---|---|---|
| Yuting | 10 | Feedback session + meeting (2), research on solutions (2), requirements (2), group meeting on Thursday (2), user analysis (2) |
| Jie | 10.5 | Feedback session + meeting (2), research on solutions (2), group meeting on Thursday (2), interview questions (2), interview (1), translate result (0.5), analysis feedback (1) |
| Zhiyang | 10.5 | Feedback session + meeting (2), group meeting on Thursday (2), CAD model (5), update requirements and CAD drawings on Wiki (1.5) |
| Samir | 6.5 | Feedback session (0.5), group meeting on Thursday (2), sketching (4), updating wiki (1) |
| Feiyang | 7 | Feedback session + meeting (2), research on solutions (2), group meeting on Thursday (2), interview (1) |
| Nikola | | |

Week 4

| Name | Total hours | Tasks |
|---|---|---|
| Yuting | 13 | Feedback session + meeting (2), research on fall detection algorithms (6), meeting Thursday (1), study and implement the algorithm (4) |
| Jie | 5 | Feedback session + meeting (2), interview the third interviewee (1), interview analysis (1), algorithm search (1) |
| Zhiyang | 9 | Feedback session + meeting (2), contact the robotics person (1), agenda meeting Thursday (1), meeting Thursday (1), select requirements for implementation (4) |
| Samir | 12 | Feedback session + meeting (2), research and update requirements (7), meeting Thursday (1), test plans (2) |
| Feiyang | 6 | Feedback session + meeting (2), research on algorithms (2), interview analysis (1), meeting Thursday (1) |
| Nikola | | |

Week 5

| Name | Total hours | Tasks |
|---|---|---|
| Yuting | 6 | Feedback session + meeting (2), agenda Thursday meeting (1), update fall detection algorithm to wiki (3) |
| Jie | 8 | Feedback session + meeting (2), agenda Thursday meeting (1), researching and implementing different voice recognition API (4), updating Wiki (1) |
| Zhiyang | 9 | Feedback session + meeting (2), agenda Thursday meeting (1), researching and implementing programmable calling and messaging (6) |
| Samir | 10 | Feedback session + meeting (2), research and update requirements (3), researching methods for implementation (5) |
| Feiyang | 7 | Feedback session + meeting (2), Thursday meeting (1), research methods for implementing voice command (4) |
| Nikola | | |

Week 6

| Name | Total hours | Tasks |
|---|---|---|
| Yuting | 6.5 | Feedback session + meeting (2), agenda Thursday meeting (1.5), integrate fall detection algorithm with voice calls, SMS (2), improve the code quality (1) |
| Jie | 10 | Feedback session + meeting (2), Thursday meeting (1.5), learning and configuring Whisper API locally (2), learning Python syntax (0.5), learning speaker and recorder API (2), implementing prompt, record, and analyze user response module (2) |
| Zhiyang | 6.5 | Feedback session + meeting (2), Thursday meeting (1.5), implementing voice call and SMS (2), contact the robot person and schedule the meeting (1) |