PRE2019 3 Group2: Difference between revisions


Revision as of 09:53, 17 March 2020

Research on Idle Movements for Robots

Group Members

| Name | Study | Student ID |

|---|---|---|

| Stijn Eeltink | Mechanical Engineering | 1004290 |

| Sebastiaan Beers | Mechanical Engineering | 1257692 |

| Quinten Bisschop | Mechanical Engineering | 1257919 |

| Daan van der Velden | Mechanical Engineering | 1322818 |

| Max Cornielje | Mechanical Engineering | 1381989 |

Abstract

Introduction

Problem statement

The future will contain robots that interact with humans in a socially intelligent way. Such robots will demonstrate humanlike social intelligence, and non-experts will no longer be able to distinguish robots from human agents. To accomplish this, robots need to develop much further: not only their social intelligence but also their movement has to improve considerably. Nowadays, robots do not move the way humans do. For instance, when moving an arm to grab something, humans tend to overshoot a bit[Source?]. A robot specifies the target and moves towards the object along the shortest path possible.

Humans tend to take the path of least resistance. This means they also use their surroundings to reach their target, for instance by leaning on a table to counteract gravity. Furthermore, humans use their joints more than robots do.

This lack of movement is a big problem for idle movements in particular. An idle movement is a movement made during rest or waiting (e.g. touching your face). For humans and every other living creature, it is physically impossible to stand perfectly still. Robots, however, stand completely lifeless when not in action, which creates a static (and, therefore, unnatural) environment during the interaction between the robot and the person. It is unclear whether the robot is turned on and able to respond, and this can create an uncomfortable situation for the human[source?]. In addition, humans are always doing or holding something while having a conversation. To improve the interaction between humans and robots, the questions that have to be answered are: "Which idle movements are considered the most appropriate in human-human interaction?", "Which idle movements are considered the most appropriate in human-robot interaction?", and "Which idle movements are most realistic and manageable for a robot to perform without looking unnatural, resulting in the best outcome for human-robot interaction?". In this research, we address these questions by observing human idle movements, testing robot idle movements, and investigating which movements participants prefer.

Objectives

It is still very hard to make a robot appear natural and humanlike, as robots tend to be static (whether they move or not). As a more natural human-robot interaction is wanted, the behavior of social robots needs to be improved on different levels. In this project, the main focus is on the movements that make a robot appear more natural/lifelike during idling, which are called 'idle movements'. The objective of the research is to find out which idle movements make a robot appear more natural. The research builds on previous work on this subject (which is explained in the chapter 'State of the art'). More information will be gathered by observing people in videos; these videos are divided into categories, as explained later in the chapter 'Approach'. From these videos, a conclusion will be drawn on what the best idle movements are for certain conversations.

Next to this, experiments will be done with the NAO robot, which can be seen in figure x. This robot has many human characteristics, such as human proportions and facial properties. The only big difference is its height, as the NAO robot is just 57 centimeters tall. The NAO robot will perform different idle movements, and future users will give their responses to these movements. The data from these responses should lead to a conclusion on what the best idle movements are and will hopefully make the robot appear more lifelike. Because humanoid robots will also improve in the future, expectations about the most important idle movements for future robots will also be given. Altogether, it is hoped that this information will give greater insight into the use of idle movements on humanoid robots, to be used in future research and projects on this subject.

Users

When looking at the users of social robots, many groups can be taken into account, because it is uncertain to what extent robots will be used in the future. Focusing on all possible users in this project would be impossible, and 'designing for everyone is designing for no-one'. A selection has therefore been made of users who will likely be the first to benefit from, or have to deal with, social robots. This selection is based on research collected from other papers[1], in which these groups were highlighted and asked for their opinion on robot behavior. The main focus of research papers on social robots is on future applications in elderly care, and therefore elderly people are the main subject. Another group of users who will reap the benefits of social robots are physically or mentally disabled people.

The hospitals, care centers, and nurses who currently care for these people will also be users of such social robots. They are not necessarily the primary users but will still be in contact with the robots a lot (making them the secondary users). They can feel threatened by social robots [ref], as the robots might take over their jobs. However, the main focus of the project is on the primary users, as they will have the most contact with the robots. For people in need of care, it is essential that these social robots are as humanlike as possible. This will help them to better accept the presence of a social robot in their private environment. One key element in making a robot appear lifelike is the presence of idle motions, which are studied in this project.

Companies and manufacturers of social robots will benefit from this research by implementing it in their products and will, therefore, also be (secondary) users. They want to offer their customers the best social robot they are able to produce, which in turn has to be as humanlike as possible. Thus, it is key to include idle movements in their design, as this would increase their sales, resulting in more profit over time.

Approach, Milestones and Deliverables

Approach

To gain knowledge about idle movements, humans have to be observed. However, the idle movements will differ between categories (see experiment A), which requires research beforehand. This research will be done by studying the state of the art. Papers such as those by R. Cuijpers and/or E. Torta, and by Hyunsoo Song, Min Joong Kim, Sang-Hoon Jeong, Hyeon-Jeong Suk, and Dong-Soo Kwon, contain a lot of information about the idle movements that are considered important. Therefore, it is important to read the state-of-the-art papers carefully. The state of the art is explained briefly in the chapter 'State of the art'. After this research, observations can be done. The best observation method depends on the category. Examples of such methods are observing people walking (or standing) around public spaces, such as the university campus or the train. For this research, however, videos or live streams will be watched on (e.g.) YouTube. The observed idle movements are listed in a table and tallied. The most tallied idle movement is considered the best for that specific category. However, that does not mean it will work for a robot, as it might negatively influence the responses of users. Experiment B will clarify this.

The best idle movement per category will be used in experiment B. This experiment will be done with the NAO robot and a large number of participants (who would ideally be primary users, see chapter 'Users'). The NAO robot will hold a conversation with the participant for a given amount of time. This is done twice (depending on the number of idle movements used): once with the NAO robot not using any idle movements and once with different idle movements for different types of conversations. The idle movements used are based on the research described above and are in the same order for every participant. The participant fills in a Godspeed questionnaire[2] before the conversation, after the first conversation, and after the last conversation. This makes it possible to see how people's opinions change in relation to the robot's behavior. The Godspeed questionnaire includes questions on several topics, such as animacy and anthropomorphism. These questions are answered on a scale from 1 to 5. Using the data of this experiment, a diagram can be made of the participants' responses to the various idle movements. From this, the best idle movement can be determined for each category. The best result may also turn out to be a combination of several idle movements.

(Note: does this need to be changed now that experiment B has changed?)

Milestones and Deliverables

See Planning V1.

Experiment A

The first experiment is a human-only experiment. To better understand the meaning of robot idle movements, humans have to be observed. People can be observed in multiple places, as mentioned in the Approach, and their movements differ between settings. Below, it is stated which type of video has been used (e.g. YouTube videos or live streams). The videos differ because the idle movements most likely differ between categories. For instance, when people are having a conversation with a friend, a possible idle movement is shaking a leg and/or foot or biting their nails. During a job interview, however, people tend to be a lot more serious and try to focus on acting 'normal'. The different categories of idle movement, with multiple examples to implement on the robot, are listed below. Furthermore, these examples are based on eleven motions suggested by [ref]: eye stretching, mouth movement, coughing, touching the face, touching hair, looking around, neck movement, arm movement, hand movement, leaning on something and body stretching.

| Category | Examples of idle movements |

|---|---|

| Casual conversation | nodding, briefly look away, scratch head, putting arms in the side, scratch shoulder, change head position. |

| Waiting / in rest | lightly shake leg/feet up and down or to the side, put fingers against head and scratch, blink eyes (LED on/off), breathe. |

| Serious conversation | nodding, folding arms, hand gestures, lift eyebrows slightly, touch the face, touch/hold arm. |

The eleven motions listed above are used for tallying the idle movements, so that a full comparison can be made between the categories. The type of video used thus depends on the category. For each category, it is explained which type of video has been used and why. Furthermore, the analysis of each video has been done twice, because this was one of the main points raised during the presentation.
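The tallying described above can be sketched in a few lines of Python. This is only an illustration of the bookkeeping, not the procedure the group actually used: the function names `tally` and `most_frequent` and the example observations are hypothetical, and only the eleven motion names come from the text.

```python
from collections import Counter

# The eleven motions named in the text above.
MOTIONS = [
    "eye stretching", "mouth movement", "cough", "touching face",
    "touching hair", "looking around", "neck movement", "arm movement",
    "hand movement", "lean on something", "body stretching",
]

def tally(observations):
    """Count how often each of the eleven motions was observed.

    `observations` holds one motion name per observed movement.
    Motions that never occur are reported with a count of 0.
    """
    unknown = set(observations) - set(MOTIONS)
    if unknown:
        raise ValueError(f"not one of the eleven motions: {sorted(unknown)}")
    counts = Counter(observations)
    return {motion: counts.get(motion, 0) for motion in MOTIONS}

def most_frequent(counts, n=3):
    """Return the n most tallied motions, highest count first."""
    return sorted(counts, key=counts.get, reverse=True)[:n]

# Hypothetical observations for one video (not the project's real data).
example = ["neck movement", "neck movement", "mouth movement",
           "arm movement", "neck movement", "mouth movement"]
counts = tally(example)
top = most_frequent(counts, n=2)
```

Keeping all eleven motions in the result, including those with a count of zero, makes the tallies of different categories directly comparable in a bar chart.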

Casual conversation

A casual conversation is a conversation about non-serious topics, which can take place between two strangers but also between two relatives. Because the topics are usually not very serious, such conversations lead to laughter or just calm listening. As in a serious conversation, attention should be paid to the speaker. During conversations, listeners show this attention to the speaker, generally by making eye contact. Furthermore, lifelike behavior is also important, as it results in a feeling of trust towards the listener. Therefore, it is important for a robot to be able to establish such trust via idle movements.

Eye contact for the NAO robot has already been researched, and it has been established that it is important.[ref] The use of idle movements to gain trust is also considered important. Examples of such idle movements are nodding, briefly looking away, scratching the head, putting the arms in the side (and changing their position), scratching the shoulder and changing the head position. These idle movements correspond with what has been found in the idle movement research.[ref] In casual conversations, nodding can confirm the presence of the listener and show that attention is being paid. Briefly looking away can mimic thinking, as people tend to look away and think while talking. Scratching the head might convey the same idea, as it is associated with thinking. Moreover, scratching the head might also give the speaker a feeling of confidence, because it mimics liveliness. Putting the arms in the side is generally a gesture of confidence and also of relaxation. Varying the number of arms in the side and the position of the legs gives the speaker the impression that the robot is relaxed, which gives the speaker a feeling of confidence. Scratching the shoulder is a general gesture that makes the robot look alive and confident. Changing the head position has the same purpose: it is a movement that generally occurs when thinking and when questioning what has been said. Both give the speaker the impression that the robot is more lifelike. All of these examples of idle movements go back to the research mentioned earlier, which resulted in the eleven motions (see the first paragraph of 'Experiment A').

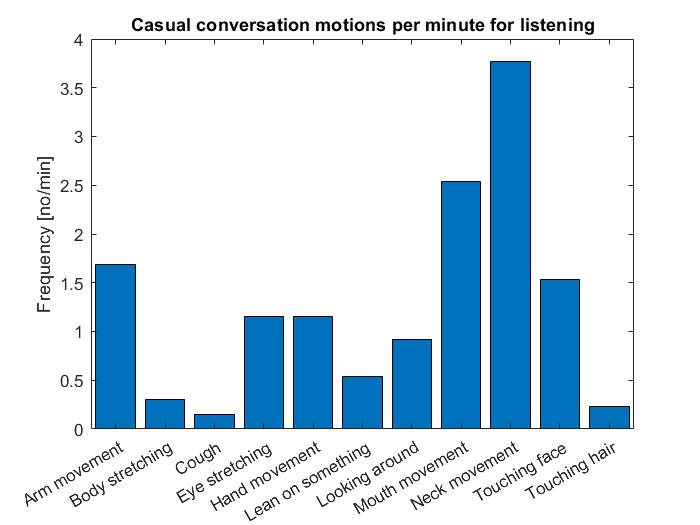

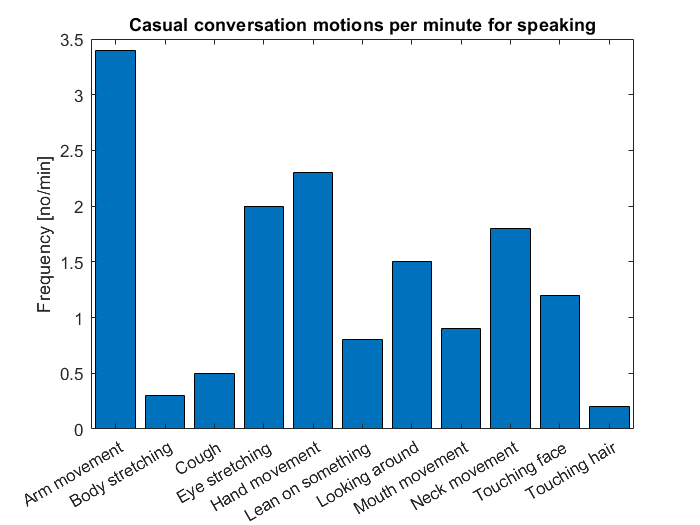

Once it was known which idle movements to look for, it was important to investigate ways to tally the motions. Since there are many different ways to talk casually to someone, three different videos were used (all from YouTube). One of them is a podcast with four women [3], another is a conversation between a Dutch politician and a Dutch presenter [4], and the last is an American talk show[5]. The three videos were all watched in full and the number of occurrences of each motion was noted. The tallying was done for the listener and for the speaker, so that the differences can also be spotted. The data of both can be seen in the bar graphs in Figures 1 and 2.

Figure 1 shows that neck movements are the most frequent motion, followed by mouth movements, arm movements and touching the face (in that order). This can be explained by the fact that people tend to nod a lot as a way to show acceptance of and agreement with what has been said. Head shaking is also counted as a neck movement. The mouth movement consists of people wetting their lips or just saying "yes", "okay" or "mhm", again with acceptance and agreement as the underlying meaning. Both motions are also a way of showing that attention is being paid. The arm movement can be explained by the fact that people scratch themselves or just feel bored. Nonetheless, this movement shows lifelike characteristics. Touching the face follows the same principle as the arm movement, as it can happen due to boredom, an itch or something else. Apart from these four, no motions stood out.

In figure 2, the arm movement, eye stretching, hand movement, neck movement, and looking around stand out. The arm and hand movements can be explained by the fact that people tend to explain things via gestures, which are made with the arms and hands. Eye stretching is used to express emotion (e.g. being shocked or surprised). The neck movement is a result of approval or disapproval of what is being told. Looking around is related to thinking: when people look away, they are thinking about what they remember of a scenario or about the best way to tell something. Apart from these, nothing really stood out.

In this research, nothing will be done with the speaking research. However, it is good to see that the motions differ per action (listening or speaking) and that the eleven motions can be applied to these.

Waiting / in rest

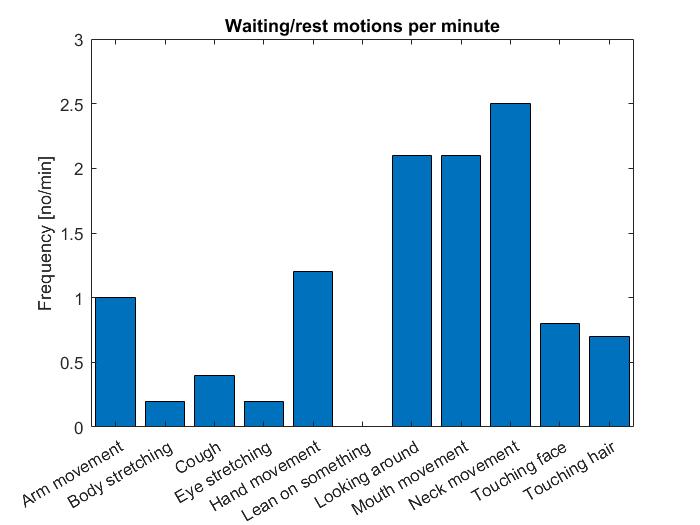

People often find themselves waiting or at rest. They normally think that they are really doing nothing in those situations. However, everybody makes some small movements when doing nothing. This is one of the things that makes a person or animal look alive. Many different things can be described as idle movements, but this research only looks into a couple of them. When you take a close look at people who are doing nothing, you will realize that they make these movements more often than you think. In this part of the experiment, different videos were studied in which people are doing nothing or are at rest. All the movements listed above were tallied whenever they occurred in the videos. (Note: Quinten, please add a reference here.) All the results were then put together and added to a bar chart. In this way, we can find out which idle movements are performed most when waiting or at rest. Figure ... shows the bar chart of the results.

From the bar chart above, it can be concluded that neck movements are made the most. This can be explained by the fact that people move their heads while looking at their mobile phones or while nodding to people walking by. The second most frequent idle movements are looking around and mouth movement. Looking around is normal during waiting, as people want to know what is happening around them, and this movement makes them seem more alive and involved in the environment. The mouth movement is mainly people licking their lips or smiling at people walking by. The lip-licking is a preparation for a conversation that might happen in the near future, and smiling shows other people that you are present. The other movement that stood out is the hand movement, which can be explained by the fact that people are nervous or anxious when alone. The movements that are performed most often should probably be implemented in a robot when it is at rest/waiting, to give the robot a more humanlike appearance.

Serious conversation

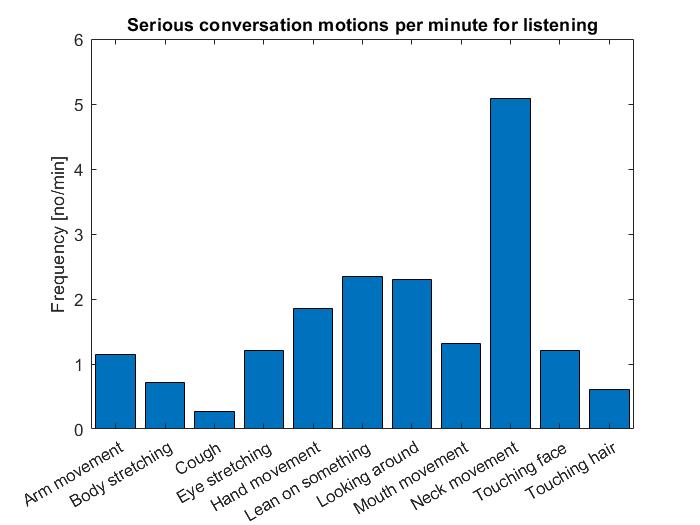

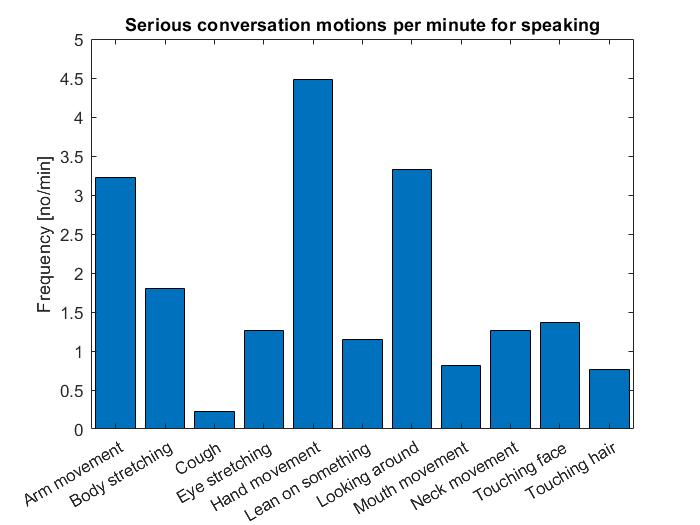

The human idle movements in a serious or formal environment were studied by observing conversations such as job interviews [6], TED talks [7] and other relatively formal interactions such as a debate [8] or a parent-teacher conference. Compared with other categories, such as the friendly conversation, a big difference can be seen. In a serious conversation, people try to come across as smart or serious, whereas in a friendly conversation people do not think about their idle movements as much. The best example of this is the tendency of humans to touch or scratch their face, nose, ear or any other part of the head. These idle movements are more common in friendly conversation, because in a formal interaction people try to avoid coming across as childish or impatient. The idle movements that are characteristic of this category are moves like hand gestures that follow the rhythm of speech. When talking, another common idle movement is raising the eyebrows when trying to come across as convincing. When listening, however, a common idle movement is folding the hands, which comes across as smart or expresses an understanding of the subject. The last type of very common idle movements, such as touching the arm or blinking, is also used in friendly conversations. In previous research ( rev Japanese article), idle movements were studied for any type of conversation; here the same is done per category by counting all the idle motions in the videos mentioned, including the idle motions mentioned earlier, which stood out even without counting. Two bar charts have been made from these counts. However, in two of the three videos, only one person is relevant for counting the idle motions. In the video of the job interview, only the employer is considered, because this corresponds better with the other videos and because it makes more sense for the robot to be the employer rather than the applicant. However, the employer is listening most of the time, while in the other two videos the main characters are speaking. So in a serious conversation, a distinction has to be made between speaking and listening. The two bar charts are shown in the figures above.

What can be seen in figure ... is that neck movements really stand out. This is caused by the fact that people tend to nod a lot during a serious conversation; nodding makes the person come across as understanding or smart. Furthermore, looking around and leaning on something stand out. Looking around can be a sign of confidence and calmness during a serious conversation, showing that the person is comfortable being there. Leaning on something mostly means leaning on the table in the job interview and makes the listener seem present and fully concentrated on the conversation (which is really helpful in, for example, a job interview). Furthermore, hand movement is an important factor. As already said, the hands are folded on the table to show presence as well.

Figure ... shows a high frequency of hand movement. This is caused by the fact that people want to come across as smart or interesting, which is achieved by using gestures while talking. That also explains the peak for arm movements, as they are also important in gestures. Looking around is caused by people thinking. While thinking during a serious conversation, people look away to appear concentrated. Furthermore, looking at someone while thinking might make them uncomfortable. Lastly, body stretching has a relatively high frequency. Body stretching generally gives the impression that someone is comfortable in a conversation; it also shows that the person is present (if not done excessively).

In this research, nothing will be done with the speaking research. However, it is good to see that the motions differ per action (listening or speaking) and that the eleven motions can be applied to these.

Experiment B1

Experiment B1 is divided into different parts. These are:

- The NAO robot

- Determining (NAO compatible) idle movements from literature and Experiment A

- The participants

- Setting up experiment B1

- The script

- The questionnaire

- The results

- Limitations

The NAO robot

The NAO robot is an autonomous, humanoid robot developed by Aldebaran Robotics. It is widely used in education and research. The NAO robot is also used in the paper by Torta[], from which the idea for this project originated. The robot is packed with sensors, for example cameras that can detect a face, position sensors, velocity sensors, acceleration sensors, motor torque sensors and range sensors[ref]. It can speak multiple languages, including Dutch. All of our participants were Dutch. Therefore, the experiment is done in Dutch to get the best results, without a language barrier affecting the quality of the conversation.

Determining (NAO compatible) Idle movements from literature and Experiment A

Unfortunately, the NAO robot is not able to perform every motion a human can, due to its limited number of links and joints. The list of human idle movements from experiment A and the literature is:

- Eye stretching

- Mouth movement

- Cough

- Touching face

- Touching hair

- Looking around

- Neck movement

- Arm movement

- Hand movement

- Lean on something

- Body stretching

The human idle movements 1 until X were either found in the database[ref] or made in Choregraphe 2.1.4[ref].

- Idle movements of the NAO robot 1

- Idle movements of the NAO robot 2

- Idle movements of the NAO robot 3

- ...

The other human idle movements cannot be tested due to the limitations of the NAO robot or were not relevant enough for the conversation with the NAO robot.

The participants

As explained in [ref users], there are a lot of users that can be taken into account. Due to limitations, it was decided to use random participants in experiment B. 18 participants take part in this experiment. The participants all filled in a document giving their consent for their data to be used for this experiment and for this experiment only.

Setting up experiment B1 (open question or ask to read something? -> implementation of categories in A?)

A room will be booked close to the social robotics lab. The experiment will be done in week 6. The experiment will be performed as follows:

- The NAO robot will start a conversation

- The NAO robot will ask an open question, to which the participant needs to give an elaborate answer.

- During the answer, an idle movement is performed by the NAO robot.

- After the answer is given, the NAO asks another question

- During the answer, the same idle movement is performed by the NAO robot

At first, our plan was to pick the three most performed idle movements per category and test them all separately in the different conversations. However, repeating the same movement over and over during a conversation looks unnatural; humans do not do this either, and with a robot it looks even stranger. To make the conversations more natural, we instead picked the three most used idle movements per category (serious and casual), for speaking and for listening. Some idle movements were not an option because of the limited degrees of freedom of the robot. A Choregraphe file was then made for the serious movements and one for the casual movements. In each file, the six movements of that category (3 speaking + 3 listening) were placed in random order to simulate human behaviour. A third file was made with no movements at all, so in total there are three movement files (serious movements, casual movements and no movements).

The goal of this research is to see whether idle movements make a difference in a conversation and whether different idle movements in different types of conversation make a difference. Therefore, two different conversations were set up. Each conversation lasts about 2 minutes, with around 8 questions asked by the robot, while one of the three idle movement programs runs. Before and after each conversation, the participant fills in a questionnaire. Each participant has two conversations with the NAO robot: first the casual conversation, in our case a chat about the weather and plans for the summer, and then the serious conversation, in our case a job interview. The three movement files from Choregraphe are combined with the two conversations, giving six different conditions in total: the serious conversation is held with the serious movements, with the casual movements or with no movements at all, and the casual conversation has the same three options.

To gather as much data as possible for every condition, a total of 18 participants took part. This means that every combination of conversation and movement file was done 6 times and that 54 questionnaires were filled in.
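The counts in this design (18 participants, two conversations each, three movement files, six runs per combination) can be checked with a small sketch. The text does not say how movement files were assigned to participants, so the balanced random assignment below is an assumption, and the function name `assign_conditions` is hypothetical.

```python
import random
from collections import Counter

CONVERSATIONS = ["casual", "serious"]  # order of the two conversations, from the text
MOVEMENT_FILES = ["serious movements", "casual movements", "no movements"]

def assign_conditions(n_participants=18, seed=0):
    """Give every participant one movement file per conversation.

    Assumption: within each conversation type every movement file is
    used equally often, so 18 participants yield 6 runs for each of
    the 6 conversation/file combinations.
    """
    if n_participants % len(MOVEMENT_FILES) != 0:
        raise ValueError("participants must divide evenly over the files")
    rng = random.Random(seed)
    repeats = n_participants // len(MOVEMENT_FILES)
    plan = [dict() for _ in range(n_participants)]
    for conversation in CONVERSATIONS:
        files = MOVEMENT_FILES * repeats
        rng.shuffle(files)  # random but balanced assignment
        for participant, movement_file in enumerate(files):
            plan[participant][conversation] = movement_file
    return plan

plan = assign_conditions()
# Number of runs per (conversation, movement file) combination.
runs = Counter((conv, f) for p in plan for conv, f in p.items())
```

With the default of 18 participants, `runs` contains six combinations with six runs each, or 36 conversations in total.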

The script

The questionnaire

(add questions to hide obvious parts?) To determine the user's perception of the NAO robot, the Godspeed questionnaire[2] is used. The Godspeed questionnaire uses a 5-point scale and covers 5 categories: Anthropomorphism, Animacy, Likeability, Perceived Intelligence and Perceived Safety. As also noted in [2], there is an overlap between Anthropomorphism and Animacy. The actual questionnaire can be found here (https://www.overleaf.com/7569336777bwmwdmhvmmbc).

This questionnaire does have limitations; these are discussed in the section 'Limitations'.
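One common way to process such 5-point ratings is to average the items within each of the five categories and compare the averages before and after a conversation. The sketch below assumes this approach; it is not taken from the project's own analysis. The function names and the example ratings are hypothetical, and the number of items per category in the example is illustrative rather than the real questionnaire layout.

```python
from statistics import mean

# The five Godspeed categories named in the text.
SCALES = ["Anthropomorphism", "Animacy", "Likeability",
          "Perceived Intelligence", "Perceived Safety"]

def scale_scores(responses):
    """Average the 1-5 item ratings within each category.

    `responses` maps a category name to the list of item ratings
    that one participant gave in one questionnaire.
    """
    scores = {}
    for scale in SCALES:
        items = responses[scale]
        if not items or any(not 1 <= r <= 5 for r in items):
            raise ValueError(f"{scale}: ratings must be on the 1-5 scale")
        scores[scale] = mean(items)
    return scores

def score_change(before, after):
    """Difference per category between two questionnaires (after - before)."""
    b, a = scale_scores(before), scale_scores(after)
    return {scale: a[scale] - b[scale] for scale in SCALES}

# Hypothetical ratings for one participant (not real data).
before = {scale: [2, 3] for scale in SCALES}
after = {scale: [3, 4] for scale in SCALES}
change = score_change(before, after)
```

Because the scores are only meaningful relative to each other, the difference between two questionnaires is more informative than either score on its own.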

The results

Deliverables.

Using the data of this experiment, a diagram can be made of the participants' responses to the various idle movements. From this, the best idle movement can be determined for each category. The best result may also turn out to be a combination of several idle movements.


Limitations

The participants

In the ideal case, more participants would be used, so that the user groups would be better represented. The data could then show differences between the user groups. Unfortunately, given the resources, limitations and duration of the project, this is not possible.

The Godspeed questionnaire

The interpretation of the results of the Godspeed questionnaire has limitations, as explained in [2]. These are:

- Extremely difficult to determine the ground truth

- Many factors influence the measurements (e.g. cultural background, prior experience with robots, etc.)

Because of these limitations, the results of the measurements cannot be used as absolute values, but only to see which idling option is better.

Experiment B2

Experiment B2 is divided into different parts. These are:

- The participants

- Setting up experiment B2

- The questionnaire

- Results

- Limitations

The participants

Setting up experiment B2

The questionnaire

Results

Limitations

State of the Art

The papers are assigned to different categories; these categories indicate the contribution the papers can make to our project.

Foundation

These papers lay the foundation of our project; our project will build on the work that has been done in these papers.

Research work presented in this paper[9] addressed the introduction of a small–humanoid robot in elderly people’s homes providing novel insights in the areas of robotic navigation, non–verbal cues in human-robot interaction, and design and evaluation of socially–assistive robots in smart environments. The results reported throughout the thesis could lie in one or multiple of these areas giving an indication of the multidisciplinary nature of research in robotics and human-robot interaction. Topics like robotic navigation in the presence of a person, adding a particle filter to already existing navigational algorithms, attracting a person's attention by a robot, and the design of a smart home environment are discussed in the paper. Our research will add additional research to this paper.

This paper[10] presents a method for analyzing human-robot interaction by body movements. Future intelligent robots will communicate with humans and perform physical and communicative tasks to participate in daily life. A human-like body will provide an abundance of non-verbal information and enable us to smoothly communicate with the robot. To achieve this, we have developed a humanoid robot that autonomously interacts with humans by speaking and making gestures. It is used as a testbed for studying embodied communication. Our strategy is to analyze human-robot interaction in terms of body movements using a motion capturing system, which allows us to measure the body movements in detail. We have performed experiments to compare the body movements with subjective impressions of the robot. The results reveal the importance of well-coordinated behaviors and suggest a new analytical approach to human-robot interaction. This paper lays the foundation for our project, so it is key to read it carefully!

The behavior of autonomous characters in virtual environments is usually described via a complex deterministic state machine or a behavior tree driven by the current state of the system. This is very useful when a high level of control over a character is required, but it arguably does have a negative effect on the illusion of realism in the decision-making process of the character. This is particularly prominent in cases where the character only exhibits idle behavior, e.g. a student sitting in a classroom. In this article[11], we propose the use of discrete-time Markov chains as the model for defining realistic non-interactive behavior and describe how to compute decision probabilities to normalize by the length of individual actions. Lastly, we argue that those allow for more precise calibration and adjustment of the idle behavior model than the models currently employed in practice.
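
To make the idea of a discrete-time Markov chain for idle behavior more concrete, the following is a minimal sketch and not the implementation from the article. The state names are loosely based on the idle movements listed earlier on this page, and the transition probabilities are invented for illustration only. The normalization by action length described in the article is not included.

```python
import random

# Hypothetical transition probabilities between idle states.
# Each row sums to 1; the values are illustrative, not taken from the article.
TRANSITIONS = {
    "neutral":        {"neutral": 0.4, "looking_around": 0.3, "neck_movement": 0.2, "arm_movement": 0.1},
    "looking_around": {"neutral": 0.6, "looking_around": 0.1, "neck_movement": 0.2, "arm_movement": 0.1},
    "neck_movement":  {"neutral": 0.7, "looking_around": 0.2, "neck_movement": 0.0, "arm_movement": 0.1},
    "arm_movement":   {"neutral": 0.8, "looking_around": 0.1, "neck_movement": 0.1, "arm_movement": 0.0},
}


def next_state(current, rng):
    """Draw the next idle state; it depends only on the current state (Markov property)."""
    states = list(TRANSITIONS[current])
    weights = [TRANSITIONS[current][s] for s in states]
    return rng.choices(states, weights=weights, k=1)[0]


def simulate(start, steps, seed=0):
    """Return a sequence of idle states of length steps + 1, starting at `start`."""
    rng = random.Random(seed)
    sequence = [start]
    for _ in range(steps):
        sequence.append(next_state(sequence[-1], rng))
    return sequence


print(simulate("neutral", 10))
```

Because the next movement is drawn at random, two runs with different seeds give different sequences, which is what makes the idle behavior look less scripted than a fixed state machine.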

Foundation / Experiment

This paper[12] describes the design of idle motions that service robots perform in their standby state. Robots developed so far react properly only when there is a certain input from humans or the environment. To find the behavioral patterns of the standby state, the authors observed clerks at an information center via video ethnography and extracted idle motions from the video data. They analyzed the idle motions and applied the extracted characteristics to their robot. Moreover, using a robot that can show this series of expressions and actions, the research aims to find out whether people feel that the robot is alive.

Experiment

These papers will be useful when preparing or setting up our experiments.

The design of complex dynamic motions for humanoid robots is achievable only through the use of robot kinematics. In this paper[13], we study the problems of forward and inverse kinematics for the Aldebaran NAO humanoid robot and present a complete, exact, analytical solution to both problems, including a software library implementation for real-time onboard execution. The forward kinematics allow NAO developers to map any configuration of the robot from its own joint space to the three-dimensional physical space, whereas the inverse kinematics provide closed-form solutions to finding joint configurations that drive the end effectors of the robot to desired target positions in the three-dimensional physical space. The proposed solution was made feasible through decomposition into five independent problems (head, two arms, two legs), the use of the Denavit-Hartenberg method, the analytical solution of a non-linear system of equations, and the exploitation of body and joint symmetries. The main advantage of the proposed inverse kinematics solution compared to existing approaches is its accuracy, its efficiency, and the elimination of singularities. In addition, we suggest a generic guideline for solving the inverse kinematics problem for other humanoid robots. The implemented, freely-available, NAO kinematics library, which additionally offers center-of-mass calculations and Jacobian inverse kinematics, is demonstrated in three motion design tasks: basic center-of-mass balancing, pointing to a moving ball, and human-guided balancing on two legs.

This paper[14] is the same NAO kinematics study as [13] above: it presents a complete, exact, analytical solution to the forward and inverse kinematics of the Aldebaran NAO humanoid robot, including a software library implementation for real-time onboard execution.
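
As an illustration of the forward kinematics and the Denavit-Hartenberg method mentioned in the NAO kinematics summary, the sketch below chains standard Denavit-Hartenberg transforms for a planar two-link arm. It is not the library from the paper, and the link lengths `UPPER_ARM` and `FOREARM` are hypothetical values, not the real NAO parameters.

```python
import math


def dh_transform(a, alpha, d, theta):
    """4x4 homogeneous transform of one joint in the standard Denavit-Hartenberg convention."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [
        [ct, -st * ca, st * sa, a * ct],
        [st, ct * ca, -ct * sa, a * st],
        [0.0, sa, ca, d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(A, B):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def forward_kinematics(dh_rows):
    """Chain the joint transforms and return the end-effector position (x, y, z) in the base frame."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for a, alpha, d, theta in dh_rows:
        T = matmul(T, dh_transform(a, alpha, d, theta))
    return T[0][3], T[1][3], T[2][3]


# Hypothetical link lengths in metres (assumptions, not actual NAO dimensions).
UPPER_ARM, FOREARM = 0.10, 0.06


def arm_position(shoulder, elbow):
    """Hand position of a planar two-link arm for the given joint angles in radians."""
    return forward_kinematics([
        (UPPER_ARM, 0.0, 0.0, shoulder),
        (FOREARM, 0.0, 0.0, elbow),
    ])


print(arm_position(math.pi / 2, 0.0))
```

Mapping joint angles to a hand position in this way is the forward problem; the inverse problem discussed in the paper goes the other way and is considerably harder.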

The ability to realize human‐motion imitation using robots is closely related to developments in the field of artificial intelligence. However, it is not easy to imitate human motions entirely owing to the physical differences between the human body and robots. In this paper[15], we propose a work chain‐based inverse kinematics to enable a robot to imitate the human motion of upper limbs in real-time. Two work chains are built on each arm to ensure that there is motion similarity, such as the end effector trajectory and the joint‐angle configuration. In addition, a two-phase filter is used to remove the interference and noise, together with a self‐collision avoidance scheme to maintain the stability of the robot during the imitation. Experimental results verify the effectiveness of our solution on the humanoid robot Nao‐H25 in terms of accuracy and real‐time performance.

Content‐based human motion retrieval is important for animators with the development of motion editing and synthesis, which need to search similar motions in large databases. Obtaining text‐based representation from the quantization of mocap data turned out to be efficient. It becomes a fundamental step of many researches in human motion analysis. Geometric features are one of these techniques, which involve much prior knowledge and reduce data redundancy of numerical data. This paper[16] describes geometric features as a basic unit to define human motions (also called mo‐words) and view a human motion as a generative process. Therefore, we obtain topic motions, which possess more semantic information using latent Dirichlet allocation by learning from massive training examples in order to understand motions better. We combine the probabilistic model with human motion retrieval and come up with a new representation of human motions and a new retrieval framework. Our experiments demonstrate its advantages, both for understanding motions and retrieval.

Human motion analysis is receiving increasing attention from computer vision researchers. This interest is motivated by a wide spectrum of applications, such as athletic performance analysis, surveillance, man-machine interfaces, content-based image storage and retrieval, and video conferencing. This paper[17] gives an overview of the various tasks involved in motion analysis of the human body. We focus on three major areas related to interpreting human motion: motion analysis involving human body parts, tracking a moving human from a single view or multiple camera perspectives, and recognizing human activities from image sequences. Motion analysis of human body parts involves the low-level segmentation of the human body into segments connected by joints and recovers the 3D structure of the human body using its 2D projections over a sequence of images. Tracking human motion from a single view or multiple perspectives focuses on higher-level processing, in which moving humans are tracked without identifying their body parts. After successfully matching the moving human image from one frame to another in an image sequence, understanding the human movements or activities comes naturally, which leads to our discussion of recognizing human activities.

Experiment / Extend

The motion of a humanoid robot is one of the most intuitive communication channels for human-robot interaction. Previous studies have presented related knowledge to generate speech-based motions of virtual agents on screens. However, physical humanoid robots share time and space with people, and thus, numerous speechless situations occur where the robot cannot be hidden from users. Therefore, we must understand the appropriate roles of motion design for a humanoid robot in many different situations. We achieved the target knowledge as motion-design guidelines based on the iterative findings of design case studies and a literature review. The guidelines are largely separated into two main roles for speech-based and speechless situations, and the latter can be further subdivided into idling, observing, listening, expecting, and mood-setting, all of which are well-distributed by different levels of intension. A series of experiments proved that our guidelines help create preferable motion designs of a humanoid robot. This study[18] provides researchers with a balanced perspective between speech-based and speechless situations, and thus they can design the motions of a humanoid robot to satisfy users in more acceptable and pleasurable ways.

Extend

These papers include work that is related to our project, but does not overlap.

The main objective of the research project[19] was to study human perceptual aspects of hazardous robotics workstations. Two laboratory experiments were designed to investigate workers' perceptions of two industrial robots with different physical configurations and performance capabilities. The second experiment can be useful for our research: it investigated the minimum value of robot idle time (inactivity) perceived by industrial workers as a system malfunction and as an indication of the ‘safe-to-approach’ condition. It was found that idle times of 41 s and 28 s or less for the small and large robots, respectively, were perceived by workers to be a result of system malfunction. About 20% of the workers waited for only 10 s or less before deciding that the robot had stopped because of system malfunction. The idle times were affected by the subjects' prior exposure to a simulated robot accident. Further interpretations of the results and suggestions for the operational limitations of robot systems are discussed. This research can help convince people that idle motions not only make people feel comfortable near robots, but also serve as a safety measure.

A framework and methodology to realize robot-to-human behavioral expression is proposed in the paper[20]. Human-robot symbiosis requires enhanced nonverbal communication between humans and robots. The proposed methodology is based on movement analysis theories of dance psychology researchers. Two experiments on robot-to-human behavioral expression are also presented to support the methodology. One is an experiment to produce familiarity with a robot-to-human tactile reaction. The other is an experiment to express a robot's emotions by its dances. This methodology will be key to realizing robots that work cooperatively close to humans, and it can also be used to couple idle movements to emotions, for example when a robot wants to express that it is nervous.

Research has been done on the capability of a humanoid robot to provide enjoyment to people who pick up the robot and play with it by hugging, shaking and moving it in various ways.[21] Inertial sensors inside a robot can capture how its body is moved when people perform such “full-body gestures”. A conclusion of this research was that the accuracy of the recognition of full-body gestures was rather high (77%) and that a progressive reward strategy for responses was much more successful. The progressive strategy for responses increases perceived variety; persisting suggestions increase understanding, perceived variety, and enjoyment; and users find enjoyment in playing with a robot in various ways. This resulted in the conclusion that motions should be meaningful, responses rewarding, suggestions inspiring and instructions fulfilling. This conclusion suggests that a robot should make motions that are meaningful to humans, which is what we are trying to achieve with idle movements.

Another paper presents a study that investigates human-robot interpersonal distances and the influence of posture, either sitting or standing, on these distances.[22] The study is based on a human approaching a robot and a robot approaching a human, in which the human/robot maintains either a sitting or standing posture while being approached. The results revealed that humans allow shorter interpersonal distances when a robot is sitting or is in a more passive position, and leave more space when being approached while standing. The paper suggests that future work should investigate more non-verbal behaviors between robots and humans and their effect, combined with the robot’s posture, on interpersonal distances. Idle movements might positively influence feelings of safety, which could result in shorter interpersonal distances; this might be discussed later. However, this research suggests that idle movements might have different effects on people depending on their posture. This has to be taken into account when testing the hypothesis suggested in this research.

This paper[23] presents the combined results of two studies that investigated how a robot should best approach and place itself relative to a seated human subject. Two live Human Robot Interaction (HRI) trials were performed involving a robot fetching an object that the human had requested, using different approach directions. Results of the trials indicated that most subjects disliked a frontal approach, except for a small minority of females, and most subjects preferred to be approached from either the left or right side, with a small overall preference for a right approach by the robot. Handedness and occupation were not related to these preferences. We discuss the results of the user studies in the context of developing a path planning system for a mobile robot.

Comparable

Work in these papers is comparable to our project.

In this paper[24], we explored the effect of a robot’s subconscious gestures made during moments when idle on anthropomorphic perceptions of five-year-old children. We developed and sorted a set of adaptor motions based on their intensity. We designed an experiment involving 20 children, in which they played a memory game with two robots. During moments of idleness, the first robot showed adaptor movements, while the second robot moved its head following basic face tracking. Results showed that the children perceived the robot displaying adaptor movements to be more human and friendly. Moreover, these traits were found to be proportional to the intensity of the adaptor movements. For the range of intensities tested, it was also found that adaptor movements were not disruptive towards the task. These findings corroborate the fact that adaptor movements improve the affective aspect of child-robot interactions and do not interfere with the child’s performances in the task, making them suitable for CRI in educational contexts. This research focusses only on children, but the concept of the research is the same as ours.

Research has already looked into the subtle movements of the head while waiting for a reaction from the environment.[25] The main task of this research was to describe the subtle head movements when a virtual person is waiting for a reaction from the environment. This technique can increase the level of realism in human-computer interactions. Even though it was tested for a virtual avatar, the head movements will still be the same for a humanoid robot, such as the NAO robot. These head movements might be important for the idle movements of the NAO robot, so it is important to use this paper for both its complications and its accomplishments.

Furthermore, research has been done on the emotions of robots.[26] In this research, poses were used to express emotions. Five poses were created, for the emotions sadness, anger, neutral, pride and happiness. The recognition rate was not the same for all poses, and the velocity of a movement also influences the interpretation of the pose. This has a lot in common with idle movements. Idle movements have to positively influence the emotions of the user (by giving the robot a more life-like appearance and, thus, giving the user a feeling of safety). Keeping this research in mind, the velocity of the movement has to be set correctly, as it might influence the interpretation of the movement (aggressive or calm) and, if set wrong, can make the user feel insecure.

Research has also been done in the field of starting a conversation.[27] This research looks into a model of approaching people while they are walking. It concluded that the proposed model (which made use of efficient and polite approach behavior with three phases: finding an interaction target, interaction at public distance and initiating conversation at social distance) was much more successful than a simplistic approach (proposed: 33 successes out of 59; simplistic: 20 successes out of 57). Moreover, during the field trial, it was observed that people enjoyed receiving information from the robot, suggesting the usefulness of a proactive approach in initiating services from a robot. This positive feedback is also wanted for the use of idle movements, even though the idle movements might not be as obvious as the conversation approach in this research. It is important to note that in this research the efficient and polite approach is also the more successful one.
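
The success counts quoted above (33 out of 59 versus 20 out of 57) can be turned into success rates with a few lines of code. The sketch below also adds a pooled two-proportion z statistic as one possible way to compare the two rates; this test is our own illustration and is not claimed to be the analysis used in the paper.

```python
import math


def success_rate(successes, trials):
    """Fraction of successful approaches."""
    return successes / trials


def two_proportion_z(s1, n1, s2, n2):
    """Pooled two-proportion z statistic for the difference between two success rates."""
    p1, p2 = s1 / n1, s2 / n2
    pooled = (s1 + s2) / (n1 + n2)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return (p1 - p2) / standard_error


proposed = success_rate(33, 59)    # proposed approach model
simplistic = success_rate(20, 57)  # simplistic approach
z = two_proportion_z(33, 59, 20, 57)
print(f"proposed: {proposed:.1%}, simplistic: {simplistic:.1%}, z = {z:.2f}")
```

A similar comparison of rates could be used when evaluating whether participants respond differently to a robot with and without idle movements.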

This article[28] outlines a reference architecture for social head gaze generation in social robots. The architecture discussed here is grounded in human communication, based on behavioral robotics theory, and captures the commonalities, essence, and experience of 32 previous social robotics implementations of social head gaze. No such architecture currently exists, but such an architecture is needed to serve as a template for creating or re-engineering systems, provide analyses and understanding of different systems, and provide a common lexicon and taxonomy that facilitates communication across various communities. The resulting reference architecture guides the implementation of social head gaze in a rescue robot for the purpose of victim management in urban search and rescue. Using the proposed reference architecture will benefit social robotics because it will simplify the principled implementations of head gaze generation and allow for comparisons between such implementations. Gazing can be seen as a part of idle movements.

This paper[29] will focus on the establishment of a social event that refers in our case study to a social android robot simulating interaction with human users. Within an open-field study setting, we observed a shifting of interactive behavior, presence setting and agency ascription by the human users toward the robot, operating in parallel with the robots’ different modulations of activity. The activity modes of the robot range from a simple idling state, to a reactive facetrack condition and finally an interactive teleoperated condition. By relating the three types of activity modes with three types of (increasing social) presence settings—ranging from co-location to co-presence and finally, to social presence—we observed modulations in the human users’ interactive behavior as well as modulations of agency ascription toward the robot. The observations of the behavioral shift in the human users toward the robot lead us to the assumption that a fortification of agency ascription toward the robot goes hand in hand with the increase in its social activity modes as well as its display of social presence features.

This research[30] details the application of non-verbal communication display behaviors to an autonomous humanoid robot, including the use of proxemics, which to date has been seldom explored in the field of human-robot interaction. In order to allow the robot to communicate information non-verbally while simultaneously fulfilling its existing instrumental behavior, a “behavioral overlay” model that encodes this data onto the robot’s pre-existing motor expression is developed and presented. The state of the robot’s system of internal emotions and motivational drives is used as the principal data source for non-verbal expression, but in order for the robot to display this information in a natural and nuanced fashion, an additional para-emotional framework has been developed to support the individuality of the robot’s interpersonal relationships with humans and of the robot itself. An implementation of the Sony QRIO is described which overlays QRIO’s existing EGO architecture and situated schema-based behaviors with a mechanism for communicating this framework through modalities that encompass posture, gesture and the management of interpersonal distance.

Support

These papers can verify or support our work.

In this study[31], a simple joint task was used to expose our participants to different levels of social verification. Low social verification was portrayed using idle motions and high social verification was portrayed using meaningful motions. Our results indicate that social responses increase with the level of social verification in line with the threshold model of social influence. This paper verifies why our research on idle motions is needed in the first place and states that in order to have a high social human-robot interaction, idle motions are a necessity.

Some robots lack the possibility to produce humanlike nonverbal behavior (HNB). Using robot-specific nonverbal behavior (RNB) such as different eye colors to convey emotional meaning might be a fruitful mechanism to enhance HRI experiences, but it is unclear whether RNB is as effective as HNB. This paper[32] presents a review on affective nonverbal behaviors in robots and an experimental study. We experimentally tested the influence of HNB and RNB (colored LEDs) on users’ perception of the robot (e.g. likeability, animacy), their emotional experience, and self-disclosure. In a between-subjects design, users interacted with either a robot displaying no nonverbal behavior, a robot displaying affective RNB, a robot displaying affective HNB or a robot displaying affective HNB and RNB. Results show that HNB, but not RNB, has a significant effect on the perceived animacy of the robot, participants’ emotional state, and self-disclosure. However, RNB still slightly influenced participants’ perception, emotion, and behavior: Planned contrasts revealed having any type of nonverbal behavior significantly increased perceived animacy, positive affect, and self-disclosure. Moreover, observed linear trends indicate that the effects increased with the addition of nonverbal behaviors (control< RNB< HNB). In combination, our results suggest that HNB is more effective in transporting the robot’s communicative message than RNB.

Planning V1

Division of work

The research is performed in a total of eight weeks. In those weeks two experiments are done:

1. Experiment A: .....;

2. Experiment B:.......

| Week | Start date | To do & milestones | Responsible team members |
|---|---|---|---|
| 1 | 3 Feb. | Determining the subject | Everyone |
| 2 | 10 Feb. | Setting up experiments | Sebastiaan |
| 3 | 17 Feb. | Doing experiment A | Stijn |
| | 24 Feb. | Break: buffer for unfinished work in weeks 1-3 | |
| 4 | 2 March | Process experiment A and start preparing for the NAO robot | Quinten |
| 5 | 9 March | Finalize preparing experiment B and testing the NAO robot | Max |
| 6 | 16 March | Performing experiment B and process results | Daan |
| 7 | 23 March | Evaluate experiments, draw conclusion and work on wiki | Sebastiaan |
| 8 | 30 March | Finalize wiki and final presentation | Sebastiaan, Quinten, Daan and Max |

Time spent

The following Overleaf file shows the time everyone spent per week: https://www.overleaf.com/2323989585tvychvjqthwr

- Broadbent, E., Stafford, R. and MacDonald, B. (2009). Acceptance of healthcare robots for the older population: review and future directions[33]

References

- ↑ Torta, E. (2014). Approaching independent living with robots. https://pure.tue.nl/ws/portalfiles/portal/3924729/766648.pdf

- ↑ Bartneck, C., Kulić, D., Croft, E. and Zoghbi, S. (2009). Measurement instruments for the anthropomorphism, animacy, likeability, perceived intelligence, and perceived safety of robots. International Journal of Social Robotics 1(1), 71–81.

- ↑ Magical and Millennial Episode on Friends Like Us Podcast, Marina Franklin, https://www.youtube.com/watch?v=Vw9M4bVZCSk

- ↑ Een gesprek tussen Johnny en Jesse, GroenLinks, https://www.youtube.com/watch?v=v6dk6eI1qFQ

- ↑ Joaquin Phoenix and Jimmy Fallon Trade Places, The Tonight Show Starring Jimmy Fallon, https://www.youtube.com/watch?v=_plgHxLyCt4

- ↑ Job Interview Good Example copy (2016) https://www.youtube.com/watch?v=OVAMb6Kui6A

- ↑ How motivation can fix public systems | Abhishek Gopalka (2020) https://www.youtube.com/watch?v=IGJt7QmtUOk

- ↑ Climate Change Debate | Kriti Joshi | Opposition https://www.youtube.com/watch?v=Lq0iua0r0KQ

- ↑ Torta, E. (2014). Approaching independent living with robots. Eindhoven: Technische Universiteit Eindhoven https://pure.tue.nl/ws/portalfiles/portal/3924729/766648.pdf

- ↑ Takayuki Kanda, Hiroshi Ishiguro, Michita Imai, and Tetsuo Ono (2003). Body Movement Analysis of Human-Robot Interaction http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.98.3393&rep=rep1&type=pdf

- ↑ Streck, A., Wolbers, T. (2018). Using Discrete Time Markov Chains for Control of Idle Character Animation 17(8). https://ieeexplore.ieee.org/document/8490450

- ↑ Song, H., Min Joong, K., Jeong, S.-H., Hyen-Jeong, S., Dong-Soo K. (2009). Design of Idle motions for service robot via video ethnography. In: Proceedings of the 18th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN 2009), pp. 195–99 https://ieeexplore.ieee.org/abstract/document/5326062

- ↑ https://link.springer.com/article/10.1007%2Fs10846-013-0015-4

- ↑ Kofinas, N., Orfanoudakis, E., Lagoudakis, M. (2014). Complete Analytical Forward and Inverse Kinematics for the NAO Humanoid Robot, 31(1), pp. 251-264 https://link.springer.com/article/10.1007/s10846-013-0015-4

- ↑ Rosenthal-von der Pütten, A. M., Krämer, N. C., & Herrmann, J. (2018). The effects of humanlike and robot-specific affective nonverbal behavior on perception, emotion, and behavior. International Journal of Social Robotics, 10(5), 569-582. https://onlinelibrary.wiley.com/doi/epdf/10.4218/etrij.2018-0057

- ↑ Zhu, M., Sun, H., Lan, R., Li, B. (2011). Human motion retrieval using topic model, 4(10), pp. 469-476. https://onlinelibrary.wiley.com/doi/abs/10.1002/cav.432

- ↑ Aggarwal, J., Cai, Q. (1999). Human Motion Analysis: A Review, 1(3), pp. 428-440. https://www.sciencedirect.com/science/article/pii/S1077314298907445

- ↑ Jung, J., Kanda, T., & Kim, M. S. (2013). Guidelines for contextual motion design of a humanoid robot. International Journal of Social Robotics, 5(2), 153-169. https://link.springer.com/article/10.1007/s12369-012-0175-6

- ↑ Waldemar Karwowski (2007). Worker selection of safe speed and idle condition in simulated monitoring of two industrial robots https://www.tandfonline.com/doi/abs/10.1080/00140139108967335

- ↑ Toru Nakata, Tomomasa Sato and Taketoshi Mori (1998). Expression of Emotion and Intention by Robot Body Movement https://pdfs.semanticscholar.org/9921/b7f11e200ecac35e4f59540b8cf678059fcc.pdf

- ↑ Cooney, M., Kanda, T., Alissandrakis, A., & Ishiguro, H. (2014). Designing enjoyable motion-based play interactions with a small humanoid robot. International Journal of Social Robotics, 6(2), 173-193. https://link.springer.com/article/10.1007/s12369-013-0212-0

- ↑ Obaid, M., Sandoval, E. B., Złotowski, J., Moltchanova, E., Basedow, C. A., & Bartneck, C. (2016, August). Stop! That is close enough. How body postures influence human-robot proximity. In 2016 25th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN) (pp. 354-361). IEEE. https://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=7745155

- ↑ Dautenhahn, K., Walters, M., Woods, S., Koay, K. L., Nehaniv, C. L., Sisbot, A., Alami, R. and Siméon, T. (2006). How may I serve you?: a robot companion approaching a seated person in a helping context. https://hal.laas.fr/hal-01979221/document

- ↑ Thibault Asselborn, Wafa Johal and Pierre Dillenbourg (2017). Keep on moving! Exploring anthropomorphic effects of motion during idle moments https://www.researchgate.net/publication/321813854_Keep_on_moving_Exploring_anthropomorphic_effects_of_motion_during_idle_moments

- ↑ Kocoń, M., & Emirsajłow, Z. (2012). Modeling the idle movements of the human head in three-dimensional virtual environments. Pomiary Automatyka Kontrola, 58(12), 1121-1123. https://www.infona.pl/resource/bwmeta1.element.baztech-article-BSW4-0125-0022

- ↑ Beck, A., Hiolle, A., & Canamero, L. (2013). Using Perlin noise to generate emotional expressions in a robot. In Proceedings of the Annual Meeting of the Cognitive Science Society (Vol. 35, No. 35). https://escholarship.org/content/qt4qv84958/qt4qv84958.pdf

- ↑ Satake, S., Kanda, T., Glas, D. F., Imai, M., Ishiguro, H., & Hagita, N. (2009, March). How to approach humans? Strategies for social robots to initiate interaction. In Proceedings of the 4th ACM/IEEE international conference on Human-robot interaction (pp. 109-116). https://dl.acm.org/doi/pdf/10.1145/1514095.1514117

- ↑ Srinivasan, V., Murphy, R. R., & Bethel, C. L. (2015). A reference architecture for social head gaze generation in social robotics. International Journal of Social Robotics, 7(5), 601-616. https://link.springer.com/article/10.1007/s12369-015-0315-x

- ↑ Straub, I. (2016). ‘It looks like a human!’ The interrelation of social presence, interaction and agency ascription: a case study about the effects of an android robot on social agency ascription. AI & society, 31(4), 553-571. https://link.springer.com/article/10.1007/s00146-015-0632-5

- ↑ Brooks, A. G. and Arkin, R. C. (2006). Behavioral overlays for non-verbal communication expression on a humanoid robot. https://smartech.gatech.edu/bitstream/handle/1853/20540/BrooksArkinAURO2006.pdf?sequence=1&isAllowed=y

- ↑ Raymond H. Cuijpers, Marco A. M. H. Knops (2015). Motions of Robots Matter! The Social Effects of Idle and Meaningful Motions https://www.researchgate.net/publication/281841000_Motions_of_Robots_Matter_The_Social_Effects_of_Idle_and_Meaningful_Motions

- ↑ Rosenthal-von der Pütten, A. M., Krämer, N. C., & Herrmann, J. (2018). The effects of humanlike and robot-specific affective nonverbal behavior on perception, emotion, and behavior. International Journal of Social Robotics, 10(5), 569-582. https://link.springer.com/article/10.1007/s12369-018-0466-7

- ↑ https://link.springer.com/article/10.1007/s12369-009-0030-6