Embedded Motion Control 2013 Group 5

Revision as of 11:00, 4 October 2013

Group members

| Name: | Student ID: |

| Arjen Hamers | 0792836 |

| Erwin Hoogers | 0714950 |

| Ties Janssen | 0607344 |

| Tim Verdonschot | 0715838 |

| Rob Zwitserlood | 0654389 |

Tutor:

Sjoerd van den Dries

Planning

| DATE | TIME | PLACE | WHAT |

| September 16th | 15:30 | GEM-N 1.15 | Meeting |

| September 23rd | 10:00 | [unknown] | Test on Pico |

| September 25th | 10:45 | GEM-Z 3A08 | Corridor competition |

| October 23rd | 10:45 | GEM-Z 3A08 | Final competition |

To do list

| DATE | WHO | WHAT |

| asap | Tim, Erwin | Exit detection |

| asap | Rob, Ties, Arjen | Move through the corridor |

Logbook

Week 1

- Installed the following software:

- Ubuntu

- ROS

- SVN

- Eclipse

- Gazebo

Week 2

- Did tutorials for ROS and the Jazz simulator.

- Got familiar with 'safe_drive.cpp' and used it as the base for our program.

Week 3

- Experimented with PICO in the Jazz simulator by adding code to safe_drive.cpp.

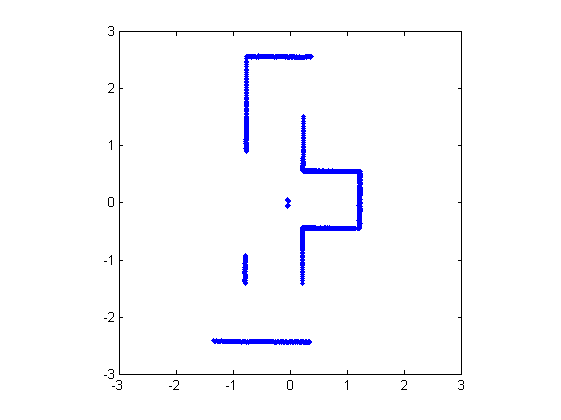

- Translated the laser data to a 2D plot (see Figure 1).

- Used the Hough transform to detect lines in the laser data. For the best result, the following steps are used:

- Made an image of the laser data points.

- Converted the RGB-image to grayscale.

- Used a binary morphological operator to connect data points which are close to each other. This makes it easier to detect lines.

- Detected lines using the Hough transform.

- OpenCV will be used to implement this algorithm in C++.

- The algorithm is implemented in safe_drive.cpp.

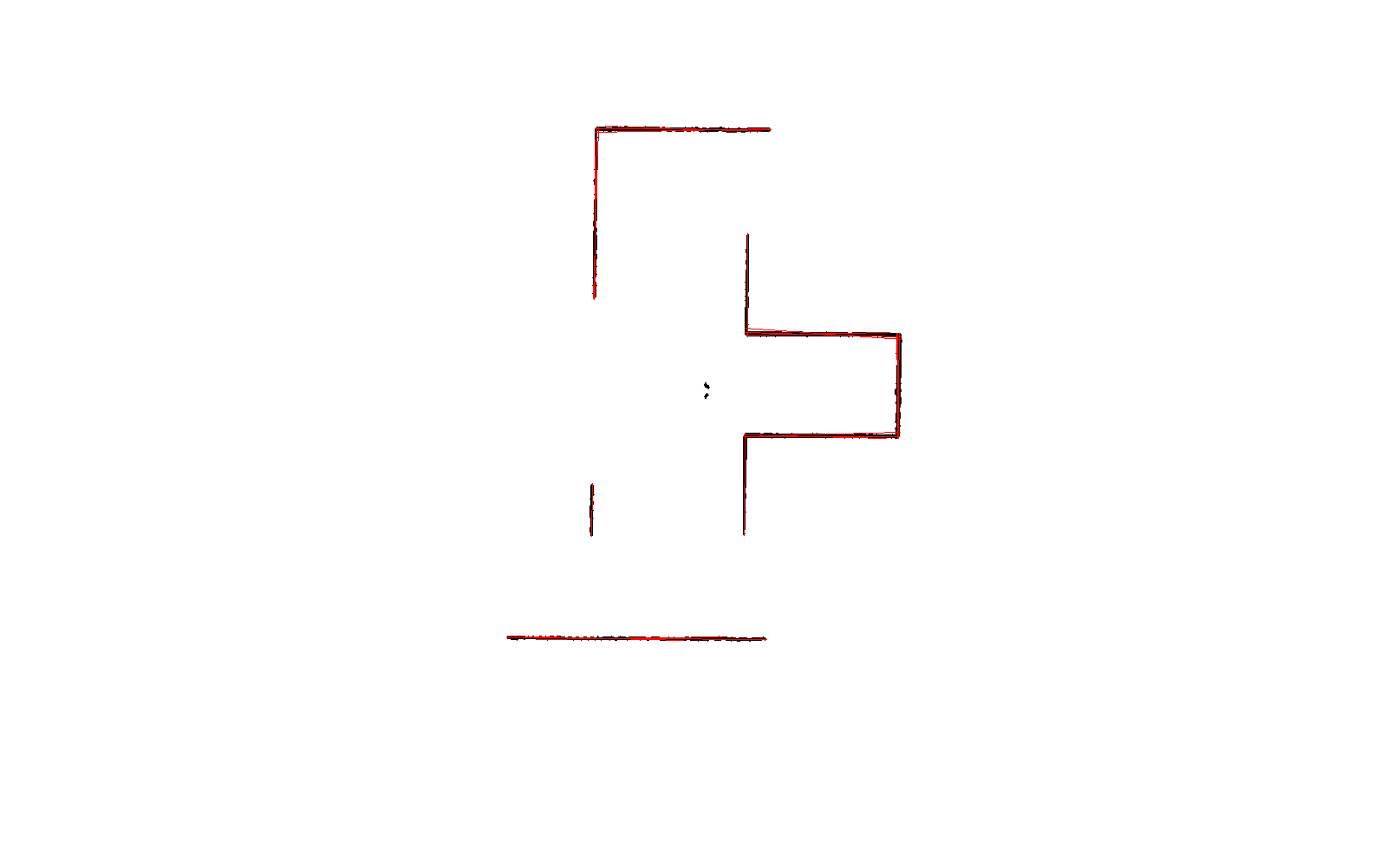

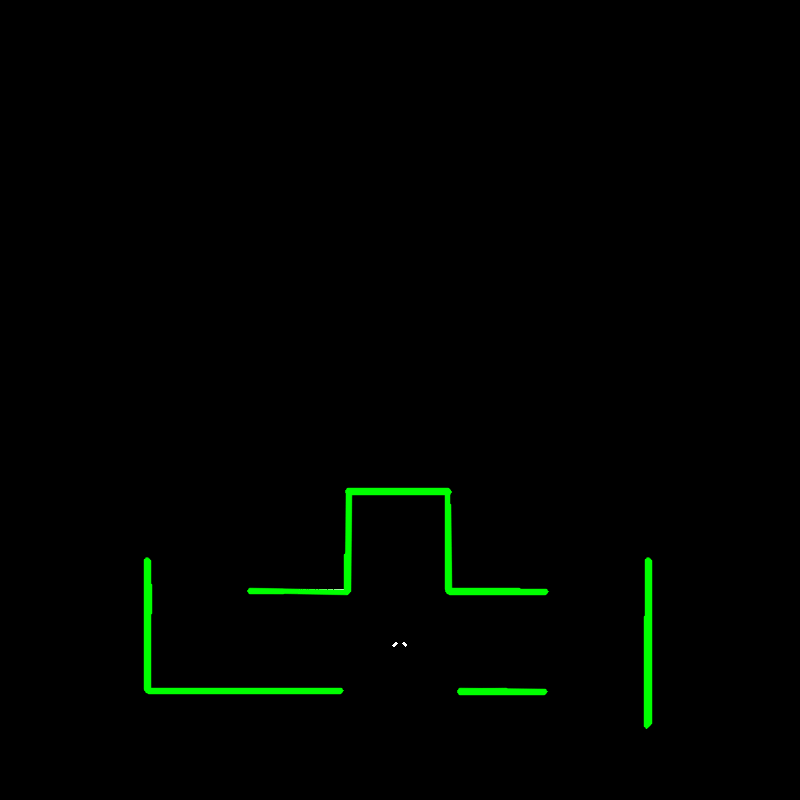

- The line detection method described above has been tested in the simulation and works well (see Figure 3; the green lines are the detected lines).
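The line detection steps above can be sketched in plain C++. This is a minimal illustration, not the code in safe_drive.cpp: it skips the image, grayscale and morphology steps and votes directly on the laser points, and it does not use OpenCV. The names `Point`, `Line`, `scanToPoints` and `strongestLine`, as well as the parameters `angleMin`, `angleIncrement`, `rhoMax`, `rhoStep` and `thetaBins`, are hypothetical and chosen only for this example.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Point { double x; double y; };
struct Line { double rho; double theta; int votes; };

// Convert laser ranges at evenly spaced angles to Cartesian points.
// angleMin and angleIncrement are assumed scan parameters (radians).
std::vector<Point> scanToPoints(const std::vector<double>& ranges,
                                double angleMin, double angleIncrement) {
    std::vector<Point> pts;
    pts.reserve(ranges.size());
    for (std::size_t i = 0; i < ranges.size(); ++i) {
        const double a = angleMin + i * angleIncrement;
        pts.push_back({ranges[i] * std::cos(a), ranges[i] * std::sin(a)});
    }
    return pts;
}

// Basic Hough voting: every point votes for all (rho, theta) cells it could
// lie on, with rho = x*cos(theta) + y*sin(theta). Returns the fullest cell.
Line strongestLine(const std::vector<Point>& pts, double rhoMax,
                   double rhoStep, int thetaBins) {
    const double pi = std::acos(-1.0);
    const int rhoBins = static_cast<int>(2.0 * rhoMax / rhoStep) + 1;
    std::vector<int> acc(static_cast<std::size_t>(rhoBins) * thetaBins, 0);
    for (const Point& p : pts) {
        for (int t = 0; t < thetaBins; ++t) {
            const double theta = t * pi / thetaBins;  // theta in [0, pi)
            const double rho = p.x * std::cos(theta) + p.y * std::sin(theta);
            const int r = static_cast<int>(std::lround((rho + rhoMax) / rhoStep));
            if (r >= 0 && r < rhoBins) ++acc[r * thetaBins + t];
        }
    }
    Line best{0.0, 0.0, 0};
    for (int r = 0; r < rhoBins; ++r) {
        for (int t = 0; t < thetaBins; ++t) {
            if (acc[r * thetaBins + t] > best.votes) {
                best = {r * rhoStep - rhoMax, t * pi / thetaBins,
                        acc[r * thetaBins + t]};
            }
        }
    }
    return best;
}
```

A real implementation would return every cell above a vote threshold rather than only the strongest one, since a corridor contains at least two walls.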

Week 4

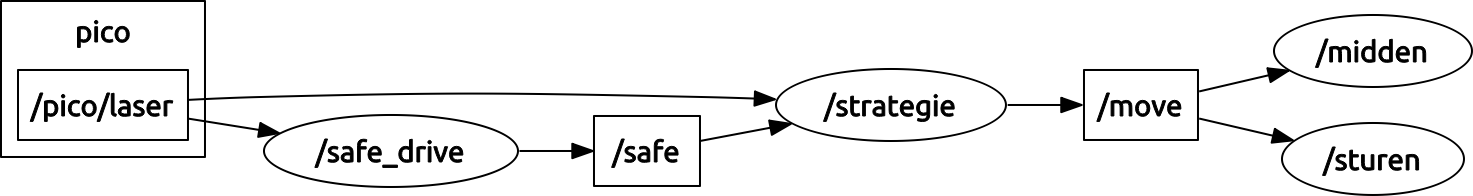

- During the corridor competition, PICO failed to leave the maze because a safety check (close range / collision) in the code took priority over everything else. With no time left to edit the code, we were forced to give up. Afterwards we decided to reorganize our software architecture into separate, communicating nodes. The new scheme is depicted in Figure 4.

Software architecture

Our current software architecture can be seen in Figure 4. We have centralized our motion planning in the /strategy node. This node receives messages from the /safe_drive node, which tell the system either to stop or to continue with the operation (driving / solving the maze). If the safety stop is not active, the /strategy node starts computing the next step. If it is active, PICO is halted until it is reset.
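The stop-until-reset behaviour can be sketched as a small latch. This is only an illustration of the logic described above, without any ROS plumbing; `SafetyStatus` and `StrategyGate` are hypothetical names, not types from our nodes.

```cpp
// Hypothetical stand-in for the message sent by /safe_drive.
enum class SafetyStatus { Clear, Stop };

class StrategyGate {
public:
    // A stop is latched: a later Clear message does not release it.
    void onSafetyMessage(SafetyStatus status) {
        if (status == SafetyStatus::Stop) halted_ = true;
    }

    // Only an explicit reset releases the latch.
    void reset() { halted_ = false; }

    // True when the strategy may compute the next step.
    bool mayPlan() const { return !halted_; }

private:
    bool halted_ = false;
};
```

Latching the stop means a single noisy "clear" reading cannot restart the robot by accident; whether the real node behaves exactly this way is an assumption.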

Next, the data gathered from the laser range finder (LRF) is converted into a set of lines using the Hough transform. Here, each line is represented by a radius (the perpendicular distance from the origin to the line) and an angle with respect to a reference line. The top view of the robot with these parameters is depicted in figure XXX_show.
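For reference, in the usual Hough normal form a point $(x, y)$ lies on the line with radius $\rho$ and angle $\theta$ when the equation below holds. That our implementation follows exactly this convention is an assumption; it is the form returned by OpenCV's `HoughLines`.

```latex
\rho = x \cos\theta + y \sin\theta
```

For example, a wall parallel to the reference axis at a distance of 0.5 m has $\theta = \pi/2$ and $\rho = 0.5$, since every point on it satisfies $y = 0.5$.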

Figure XXX_show: top view with rho and theta

Using these angles, we can identify the walls to the left and to the right of PICO by sorting the lines returned by the Hough transform by angle. This gives the location and orientation of the left and right wall with respect to PICO.
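A minimal sketch of this left/right classification follows. It rests on several assumptions that are not stated in the text: the lines are expressed in the robot frame (x forward, y to the left), the angle is measured counterclockwise from the x-axis, any line with negative radius has its angle in [0, pi), and the nearest candidate on each side is the wall of interest. The names `WallLine`, `SideWalls`, `findSideWalls` and `tolerance` are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

struct WallLine { double rho; double theta; };

struct SideWalls {
    bool hasLeft = false;
    bool hasRight = false;
    WallLine left{0.0, 0.0};
    WallLine right{0.0, 0.0};
};

// Returns the nearest line whose normal points roughly to the left (+pi/2)
// and the nearest one whose normal points roughly to the right (-pi/2).
SideWalls findSideWalls(std::vector<WallLine> lines, double tolerance) {
    const double pi = std::acos(-1.0);
    // Flip lines with negative rho so that rho >= 0 and theta points from
    // the robot towards the wall (assumes theta in [0, pi) for those lines).
    for (WallLine& l : lines) {
        if (l.rho < 0.0) { l.rho = -l.rho; l.theta -= pi; }
    }
    // Sorting by angle mirrors the text; a single pass would also work.
    std::sort(lines.begin(), lines.end(),
              [](const WallLine& a, const WallLine& b) { return a.theta < b.theta; });
    SideWalls walls;
    for (const WallLine& l : lines) {
        if (std::abs(l.theta + pi / 2.0) <= tolerance) {
            if (!walls.hasRight || l.rho < walls.right.rho) {
                walls.right = l;
                walls.hasRight = true;
            }
        } else if (std::abs(l.theta - pi / 2.0) <= tolerance) {
            if (!walls.hasLeft || l.rho < walls.left.rho) {
                walls.left = l;
                walls.hasLeft = true;
            }
        }
    }
    return walls;
}
```

Lines that fall outside both angle bands, such as a wall straight ahead, are ignored here.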

USING SETPOINT... (explanation by Rob)

Using this information, the /strategy node determines the next step. If a junction or corner is detected, a corresponding flag is raised.

- Motion planning figure (Rob)

Some interesting reading

- A. Alempijevic. High-speed feature extraction in sensor coordinates for laser rangefinders. In Proceedings of the 2004 Australasian Conference on Robotics and Automation, 2004.

- J. Diaz, A. Stoytchev, and R. Arkin. Exploring unknown structured environments. In Proc. of the Fourteenth International Florida Artificial Intelligence Research Society Conference (FLAIRS-2001), Florida, 2001.

- B. Giesler, R. Graf, R. Dillmann, and C. F. R. Weiman. Fast mapping using the log-Hough transformation. In Intelligent Robots and Systems, 1998.

- J. Larsson, M. Broxvall, and A. Saffiotti. Laser based corridor detection for reactive navigation. http://aass.oru.se/~mbl/publications/ir08.pdf