PRE2019 4 Group3: Difference between revisions


| (927 intermediate revisions by 5 users not shown) | |||

| Line 1: | Line 1: | ||

[[File:logo.png|500px|center]] | |||

= Group Members = | |||

{| class="wikitable" style="border-style: solid; border-width: 1px;" cellpadding="3" | {| class="wikitable" style="border-style: solid; border-width: 1px;" cellpadding="3" | ||

| Line 37: | Line 36: | ||

|} | |} | ||

= Problem Statement = | |||

Over 5 trillion pieces of plastic are currently floating around in the oceans <ref name=TOC>Oceans. (2020, March 18). Retrieved April 23, 2020, from https://theoceancleanup.com/oceans/</ref>. Part of this so-called plastic soup consists of large plastics, such as bags, straws, and cups. It also contains a vast concentration of microplastics: pieces of plastic smaller than 5[mm] in size <ref name=microdef> Wikipedia contributors. (2020, April 13). Microplastics. Retrieved April 23, 2020, from https://en.wikipedia.org/wiki/Microplastics </ref>.

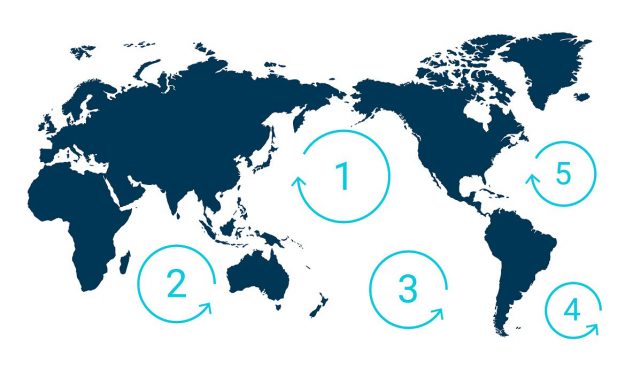

There are five garbage patches across the globe <ref name=TOC></ref>. In the garbage patch in the Mediterranean Sea, the most prevalent microplastics were found to be polyethylene and polypropylene <ref name=microplastics> Suaria, G., Avio, C. G., Mineo, A., Lattin, G. L., Magaldi, M. G., Belmonte, G., … Aliani, S. (2016). The Mediterranean Plastic Soup: synthetic polymers in Mediterranean surface waters. Scientific Reports, 6(1). https://doi.org/10.1038/srep37551</ref>.

A study in the North Sea showed that 5.4[%] of the fish had ingested plastic <ref name=ingestion>Foekema, E. M., De Gruijter, C., Mergia, M. T., van Franeker, J. A., Murk, A. J., & Koelmans, A. A. (2013). Plastic in North Sea Fish. Environmental Science & Technology, 47(15), 8818–8824. https://doi.org/10.1021/es400931b</ref>.

The plastic consumed by the fish accumulates: new plastic enters the fish but does not leave it. The buildup of plastic particles causes stress in their livers <ref name=plasticeffects>Rochman, C. M., Hoh, E., Kurobe, T., & Teh, S. J. (2013). Ingested plastic transfers hazardous chemicals to fish and induces hepatic stress. Scientific Reports, 3(1). https://doi.org/10.1038/srep03263</ref>. Besides that, fish can become stuck in larger plastics. Thus, the plastic soup is becoming a threat to sea life.

[[File:garbage.jpg|400px|Image: 800 pixels|center|thumb|The locations of the five garbage patches around the globe<ref name=TOC>Oceans. (2020, March 18). Retrieved April 23, 2020, from https://theoceancleanup.com/oceans/</ref>.]] | |||

A lot of this plastic comes from rivers. A study published in 2017 found that about 80[%] of plastic trash is flowing into the sea from 10 rivers that run through heavily populated regions. The other 20[%] of plastic waste enters the ocean directly <ref>Stevens, A. (2019, December 3). Tiny plastic, big problem. Retrieved May 10, 2020, from https://www.sciencenewsforstudents.org/article/tiny-plastic-big-problem</ref>, for example, trash blown from a beach or discarded from ships. | |||

In 2019, over 200 volunteers walked along parts of the Maas and Waal <ref name=plasticsoepMaasWaal>Peels, J. (2019). Plasticsoep in de Maas en de Waal veel erger dan gedacht, vrijwilligers vinden 77.000 stukken afval. Retrieved May 6, from https://www.omroepbrabant.nl/nieuws/2967097/plasticsoep-in-de-maas-en-de-waal-veel-erger-dan-gedacht-vrijwilligers-vinden-77000-stukken-afval</ref> and found 77,000 pieces of litter, of which 84[%] was plastic. This number was higher than expected. The best way to help clean up the oceans is to first make sure the influx stops. In order to do so, it is important to know how much waste flows from certain rivers to the ocean. At this moment there is no good monitoring of the waste flow in rivers; usually everything is counted by hand.

This project contributes to the gathering of information on the litter flowing through the river Maas, specifically the part in Limburg. The project is carried out in collaboration with the company Noria, which built a machine that removes waste from the water. More information on their project and their interests is provided in the 'Users' section. The device that will be designed will be placed on the Noria as an information-gathering device. It will use image recognition to identify the waste. A design will be made and the image recognition will be tested. Lastly, it will be worked out how the device can save the information and communicate it.

=== Objectives ===

* Do research into the state of the art of current recognition software, river cleanup devices and neural networks.

* Create a software tool that recognizes and counts different types of waste.

* Test this software tool and form a conclusion on the effectiveness of the tool.

* Create a design for the image recognition device. | |||

* Think of a way to save and communicate the information gathered. | |||

=== Users ===

This part discusses the different users or stakeholders.

==== | |||

===== Schone Rivieren (Schone Maas) ===== | |||

Schone Rivieren is a foundation established by IVN Natuureducatie, Plastic Soup Foundation and Stichting De Noordzee <ref name ='sr'>Schone Rivieren. (2020, May 19). Schone Rivieren. Retrieved June 17, 2020, from https://www.schonerivieren.org/</ref>. The foundation's goal is to have all Dutch rivers plastic-free by 2030. It relies on volunteers to collectively clean up the rivers and gather information. The foundation would benefit a lot from the information gathered by the Waste Identifier, because it provides data that can be used to optimize the river cleanup.

A few of the partners will be listed below. These give an indication of the organizations this foundation is involved with. | |||

* ''Rijkswaterstaat (executive agency of the Ministry of Infrastructure and Water Management)'' - Rijkswaterstaat is interested in information about the amount of waste in rivers and the clean up of this. | |||

* ''Nationale Postcode Loterij (national lottery)'' - They donated 1,950,000 euros to the foundation. This indicates that the problem is seen as significant. The donation helps the foundation to grow and gives it resources to work with.

* ''Tauw'' - Tauw is a consultancy and engineering agency that offers consultancy, measurement and monitoring services in the environmental field. It also works on the sustainable development of the living environment for industry and governments. | |||

Lastly, the foundation also works together with the provinces Noord-Brabant, Gelderland, Limburg, and Utrecht. | |||

===== Rijkswaterstaat ===== | |||

Rijkswaterstaat is the executive agency of the Ministry of Infrastructure and Water Management, as mentioned before <ref name='rws'>Rijkswaterstaat. (2020, June 12). Rijkswaterstaat. Retrieved June 17, 2020, from https://www.rijkswaterstaat.nl/</ref>. This means that it is the part of the government that is responsible for the rivers of the Netherlands. It is also the biggest source of data regarding rivers and all water-related topics in the Netherlands. Independent researchers can request data from its database. This makes Rijkswaterstaat a relevant user, since this project could add important data to that database. Rijkswaterstaat also funds projects, which can prove helpful if the concept that is worked out in the project is ever realized.

===== RanMarine Technology (WasteShark) ===== | |||

RanMarine Technology is a company that specializes in the design and development of industrial autonomous surface vessels (ASVs) for ports, harbors and other marine and water environments. The company is known for the WasteShark. This device floats on the water surface of rivers, ports and marinas to collect plastics, bio-waste and other debris <ref name='ranmarine'>WasteShark ASV | RanMarine Technology. (2020, February 27). Retrieved May 2, 2020, from https://www.ranmarine.io/</ref>. It currently operates at coasts, in rivers and in harbors around the world, including in the Netherlands. The idea is to collect the plastic waste before a tide takes it out into the deep ocean, where the waste is much harder to collect.

[[File:wasteshark.jpg|400px|Image: 400 pixels|center|thumb|The WasteShark in action<ref name="ranmarine"></ref>.]]

WasteSharks can collect 200 liters of trash at a time before having to return to an on-land unloading station, where they also recharge. The WasteShark has no carbon emissions, operating on solar power and batteries. The batteries can last 8-16 hours. Both an autonomous model and a remote-controlled model are available <ref name="ranmarine"></ref>. The autonomous model is even able to collaborate with other WasteSharks in the same area, so they can make decisions based on shared knowledge <ref name="cordis"></ref>. For example, when one WasteShark senses that it is filling up very quickly, other WasteSharks can join it, since there is probably a lot of plastic waste in that area.

This concept seems to tick all the boxes (autonomous, energy neutral, and scalable) set by The Dutch Cleanup. A fully autonomous model can be bought for under $23,000 <ref name="functions">Swan, E. C. (2018, October 31). Trash-eating “shark” drone takes to Dubai marina. Retrieved May 2, 2020, from https://edition.cnn.com/2018/10/30/middleeast/wasteshark-drone-dubai-marina/index.html</ref>, making it relatively affordable for governments to invest in.

The autonomous WasteShark detects floating plastic that lies in its path using laser imaging detection and ranging (LIDAR) technology. This means the WasteShark sends out a signal and measures the time it takes until a reflection is detected <ref name="lidar">Wikipedia contributors. (2020, May 2). Lidar. Retrieved May 2, 2020, from https://en.wikipedia.org/wiki/Lidar</ref>. From this, the software can calculate the distance to the object that caused the reflection. The WasteShark can then decide to approach the object, or to stop or back up a little in case the object is coming closer <ref name="functions"></ref>; this is probably for self-protection. The design of the WasteShark allows plastic waste to enter easily but makes it hard for the waste to get out again. The only moving parts of the design are two thrusters, which propel the WasteShark forward or backward <ref name="cordis">CORDIS. (2019, March 11). Marine Litter Prevention with Autonomous Water Drones. Retrieved May 2, 2020, from https://cordis.europa.eu/article/id/254172-aquadrones-remove-deliver-and-safely-empty-marine-litter</ref>. This makes the design very robust, which is important in the environment it is designed to work in.
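The time-of-flight principle described above can be illustrated with a minimal sketch. It is not taken from the WasteShark software; the function name and the example reading of 20 nanoseconds are assumptions chosen for illustration. The pulse travels to the object and back, so the one-way distance is half the round-trip path.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def distance_from_round_trip(round_trip_time_s: float) -> float:
    """Return the one-way distance in metres for a measured round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the object and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # Hypothetical reading: reflection detected 20 nanoseconds after emission.
    print(f"{distance_from_round_trip(20e-9):.2f} m")
```

Comparing two consecutive distance readings would indicate whether an object is approaching, which is the kind of information the stop or back-up decision needs.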

The fully autonomous version of the WasteShark can also simultaneously collect water quality data, scan the seabed to chart its shape, and filter chemicals out of the water <ref name="functions"></ref>. These extra measurement devices and gadgets are offered as add-ons. To perform autonomously, this design also has a mission planning ability. In the future, the device should even be able to construct a predictive model of where trash collects in the water <ref name="cordis"></ref>. The information provided by the Waste Identifier could be used by RanMarine Technology in the future to guide the WasteShark to areas with a large amount of litter.

===== Noria ===== | |||

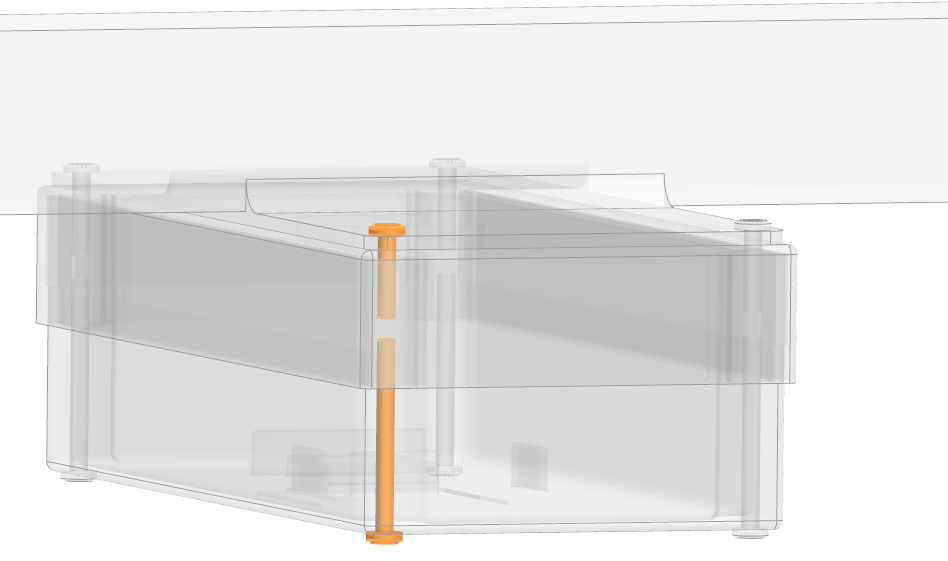

Noria focuses on the development of innovative methods and techniques to tackle the plastic waste problem in the water. They focus on tackling this problem from the time the plastic ends up in the water until it reaches the sea <ref name=noria>Noria - Schonere wateren door het probleem bij de bron aan te pakken. (2020, January 27). Retrieved May 21, 2020, from https://nlinbusiness.com/steden/munchen/interview/noria-schonere-wateren-door-het-probleem-bij-de-bron-aan-te-pakken-ZG9jdW1lbnQ6LUx6YXdoalp2cGpvcEVXbVZYaFI=</ref>. In the figure below, the system of Noria can be seen. It works with a large rotating mill whose blades consist of sieves. As the blades rotate, driven by an electric motor, macroplastics and other debris are removed from the top layer of the water. Eventually the waste ends up in the middle of the machine, where it falls into a storage bin. Via Rijkswaterstaat, contact has been made with the founder and owner of Noria, Rinze de Vries, who is interested in working together on this project. Therefore, it was decided to apply an image recognition system to the Noria system to detect the amount and type of waste that it collects.

[[File:noria.jpg|500px|Image: 400 pixels|center|thumb|System of Noria in action <ref name=noria>Noria - Schonere wateren door het probleem bij de bron aan te pakken. (2020, January 27). Retrieved May 21, 2020, from https://nlinbusiness.com/steden/munchen/interview/noria-schonere-wateren-door-het-probleem-bij-de-bron-aan-te-pakken-ZG9jdW1lbnQ6LUx6YXdoalp2cGpvcEVXbVZYaFI=</ref>.]] | |||

A pilot has been executed with the Noria. This pilot is aimed at testing a plastic catch system in the lock of Borgharen. The following conclusions can be drawn from this pilot: | |||

* More than 95[%] of the waste released into the lock was taken out of the water with the Noria system. This applies to waste as well as organic waste with a size of 10 to 700 mm. | |||

* At this moment, it is quite a challenge to drain the waste from the system. | |||

=== Requirements === | |||

For the Waste Identifier, a number of requirements have been set, which are listed below. In order to make the requirements concrete and relevant, potential users were contacted. One of the users, Rijkswaterstaat, responded to the request and agreed to an interview with one of their employees, Ir. Brinkhof, who is a project manager. He specializes in the region of the Maas and has insight into all projects and maintenance. Another interview was conducted with Ir. Rinze de Vries, the owner of Noria. Both interviews can be found at the end of this page in the section 'Conducted interviews'. Based on these interviews, the following requirements have been set.

==== Requirements for the Software ====

* The program should be able to identify and classify different types of waste;

* The program should be able to count the amount of each waste type that flows into the Noria;

* The program should be able to identify and count waste in the water correctly for at least 90[%] of the time;

* Data should be converted to information; | |||

* The same piece of waste should not be counted multiple times. The same threshold of counting 90[%] correctly applies here. | |||

==== Requirements for the Design ==== | |||

* The design should be weatherproof; | |||

* The design should operate at all moments when Noria is also operating;

* The design should be robust, so it should not be damaged easily;

* The design should not interfere with the rotating parts of the Noria;

* The design should have its own power source.

Finally, literature research about the current state of the art must be provided. At least 25 sources must be used for the literature research of the software and design. | |||

= Planning =

=== Approach ===

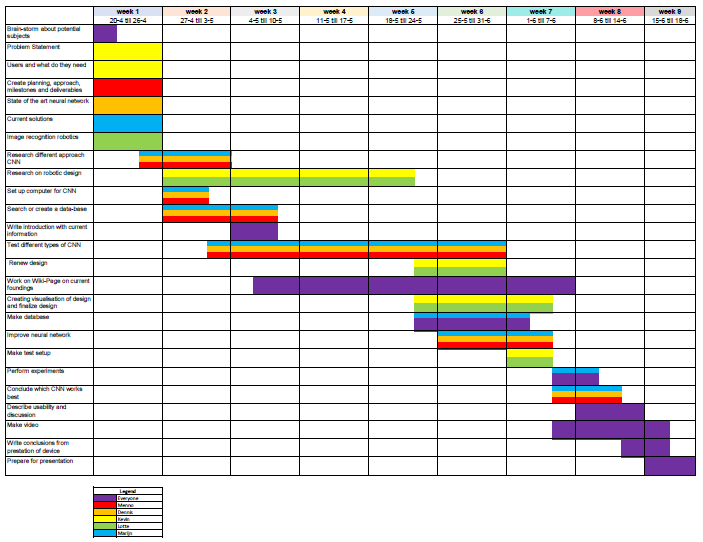

For the planning, a Gantt chart was created with the most important goals and subgoals that need to be tackled. The group is split into people who create the design and applications of the Waste Identifier, and people who work on the creation of the neural network. In the first two weeks, a lot of research has to be done, among other things on the problem statement, the users and the current technology. In the second week, different types of neural networks and the working of their layers should be investigated in more depth. This may require installing multiple packages or programs on laptops, and time is needed to test whether they work. During this second week, a data set should also be found or created that can be used to train the model. If no suitable data set can be found online and one has to be created, this will take much more than one week; the aim is nevertheless to finish it by the end of the third week. After week 5, the design idea should be elaborated with drawings or digital visualizations. All candidate neural networks should also be elaborated and tested, so that in week 8 conclusions can be drawn about the best-working neural network. This means that in week 8 the wiki page can be finished with a conclusion and discussion about the neural network that should be used and about the working of the device. Finally, week 9 is used to prepare for the presentation.

The activities are subdivided into those related to the neural network/image recognition and those related to the design of the device. Kevin and Lotte will work on the design of the device, and Menno, Marijn and Dennis will work on the neural networks.

[[File:gannt.png|800px|Image: 800 pixels|center|thumb|Project planning.]]

=== Milestones ===

{| border=1 style="border-collapse: collapse;" cellpadding = 2
! Week
! Milestones
|-
| 1 (April 20th till April 26th)
| Gather information and knowledge about chosen topic.
|-
| 2 (April 27th till May 3rd)
| Further research on different types of neural networks and having a working example of a neural network.
|-
| 3 (May 4th till May 10th)
| Elaborate the first ideas of the design of the device and find or create a usable database.
|-
| 4 (May 11th till May 17th)
| First findings of correctness of different neural networks and tests of different types of neural networks.
|-
| 5 (May 18th till May 24th)
| Conclusion of the best working neural network and making final visualisation of the design.
|-
| 6 (May 25th till May 31st)
| First set-up of wiki page with the found conclusions of neural networks and design with correct visualisation of the findings.
|-
| 7 (June 1st till June 7th)
| Creation of the final wiki-page.
|-
| 8 (June 8th till June 14th)
| Presentation and visualisation of final presentation.
|}

=== Deliverables ===

* Design of the Waste Identifier

* Software for image recognition

* Complete wiki-page

| Line 124: | Line 185: | ||

= State-of-the-Art =

=== Quantifying Waste === | |||

Plastic debris in rivers has been quantified before in three ways <ref name="counting">Emmerik, T., & Schwarz, A. (2019). Plastic debris in rivers. WIREs Water, 7(1). https://doi.org/10.1002/wat2.1398</ref>: by quantifying the sources of plastic waste, by quantifying plastic transport through modelling, and by quantifying plastic transport through observations. The last approach is most in line with what will be done in this project. No uniform method for counting plastic debris in rivers exists, so several plastic monitoring studies each devised their own. The methods can be divided into 5 subcategories <ref name="counting"></ref>:

1. Plastic tracking: Using GPS (Global Positioning System) to track the travel path of plastic pieces in rivers. The pieces are altered beforehand so that the GPS can pick up on it. This method can show where cluttering happens, where preferred flowlines are, etc. | |||

2. Active sampling: Collecting samples from riverbanks, beaches, or from a net hanging from a bridge or a boat. This method does not only quantify the plastic transport, it also qualifies it - since it is possible to inspect what kinds of plastics are in the samples, how degraded they are, how large, etc. This method works mainly in the top layer of the river. The area of the riverbed can be inspected by taking sediment samples, for example using a fish fyke <ref>Morritt, D., Stefanoudis, P. V., Pearce, D., Crimmen, O. A., & Clark, P. F. (2014). Plastic in the Thames: A river runs through it. Marine Pollution Bulletin, 78(1–2), 196–200. https://doi.org/10.1016/j.marpolbul.2013.10.035</ref>. | |||

3. Passive sampling: Collecting samples from debris accumulations around existing infrastructure. In the few cases where infrastructure to collect plastic debris is already in place, it is just as easy to use them to quantify and qualify the plastic that gets caught. This method does not require any extra investment. It is, like active sampling, more focused on the top layer of the plastic debris, since the infrastructure is too. | |||

4. Visual observations: Watching plastic float by from on top of a bridge and counting it. This method is very easy to execute, but it is less certain than other methods, due to observer bias, and due to small plastics in a river possibly not being visible from a bridge. This method is adequate for showing seasonal changes in plastic quantities. | |||

5. Citizen science: Using the public as a means to quantify plastic debris. Several apps have been made to allow lots of people to participate in ongoing research for classifying plastic waste. This method gives insight into the transport of plastic on a global scale. | |||

===== Automatic Visual Observations ===== | |||

Cameras can be used to improve visual observations. One study did such a visual observation on a beach, using drones that flew about 10 meters above it. Based on input from cameras on the UAVs, plastic debris could be identified, located and classified (by a machine learning algorithm) <ref>Martin, C., Parkes, S., Zhang, Q., Zhang, X., McCabe, M. F., & Duarte, C. M. (2018). Use of unmanned aerial vehicles for efficient beach litter monitoring. Marine Pollution Bulletin, 131, 662–673. https://doi.org/10.1016/j.marpolbul.2018.04.045</ref>. Similar systems have also been used to identify macroplastics on rivers. | |||

Another study developed a deep learning algorithm that was able to classify different types of plastic from images. The algorithm is a CNN, to be exact a "Visual Geometry Group-16 (VGG16) model, pre-trained on the large-scale ImageNet dataset" <ref name="classify">Kylili, K., Kyriakides, I., Artusi, A., & Hadjistassou, C. (2019). Identifying floating plastic marine debris using a deep learning approach. Environmental Science and Pollution Research, 26(17), 17091–17099. https://doi.org/10.1007/s11356-019-05148-4</ref>. The images were taken from above the water, so this study also focused on the top layer of plastic debris.

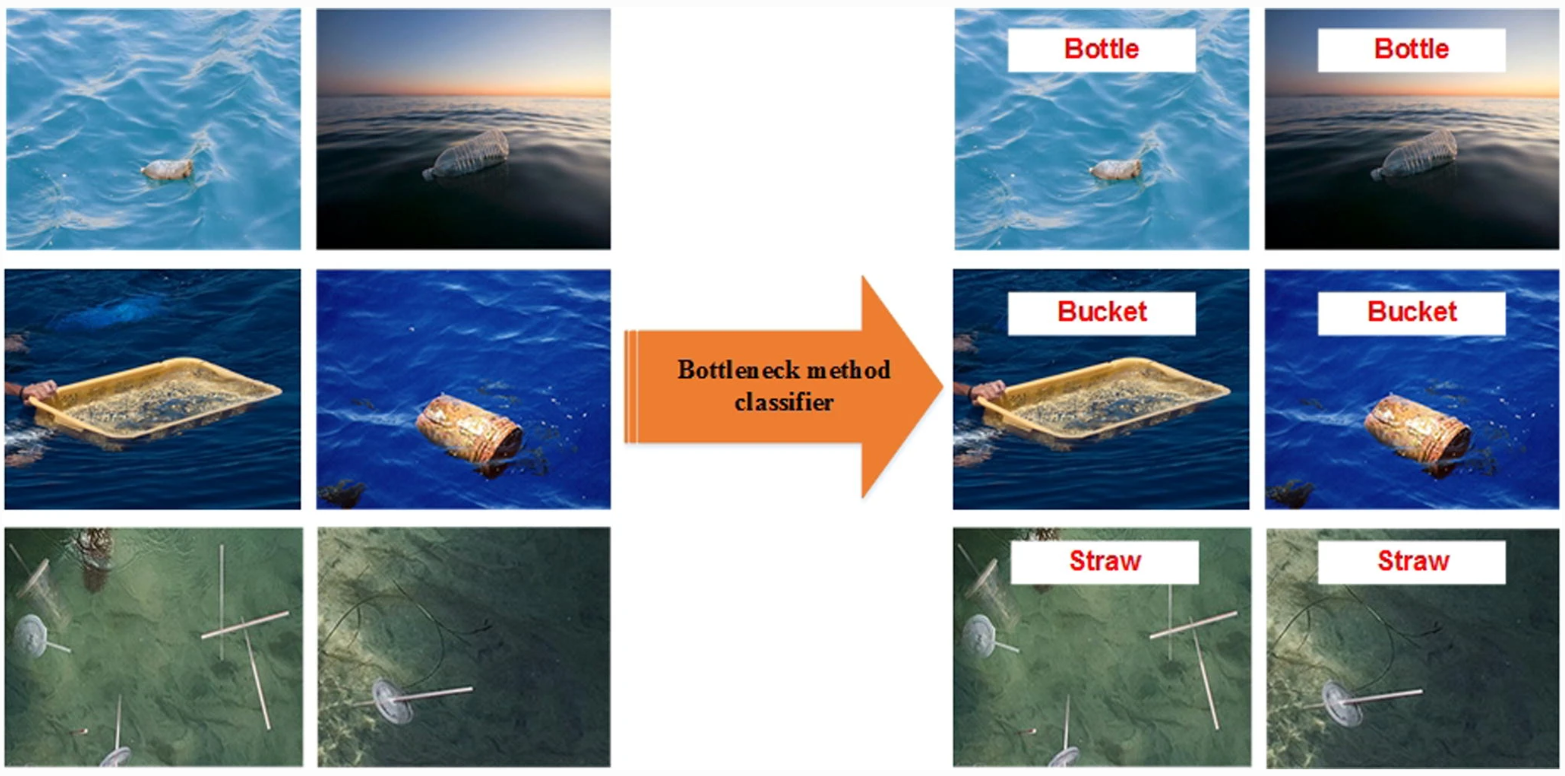

[[File:classification.png|600px|Image: 600 pixels|center|thumb|The plastic debris in these images was automatically classified by a deep learning algorithm.]] | |||

The algorithm had a training set accuracy of 99[%]. That does not say much about its performance, because it only indicates how well the algorithm categorizes the training images, which it has seen many times before. To find out how well an algorithm performs, it has to be evaluated on images it has never seen before, that is, images that are not in the training set. The algorithm recognized plastic debris on 141 out of 165 new images that were fed into the system <ref name="classify"></ref>, which is reported as a validation accuracy of 86[%]. It was concluded that the algorithm performs its task well.

The points for improvement named in the study are that the accuracy could be higher and that more kinds of plastic could be distinguished, without making the computational time too long.
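As a small worked example of the validation accuracy mentioned above, the ratio of correctly recognized images to all validation images can be computed directly. The function name is an assumption for illustration; the counts 141 and 165 are the ones quoted above. Dividing them gives roughly 85.5[%], which is close to the reported 86[%].

```python
def accuracy(num_correct: int, num_total: int) -> float:
    """Fraction of samples that were classified correctly."""
    if num_total <= 0:
        raise ValueError("num_total must be positive")
    return num_correct / num_total


if __name__ == "__main__":
    # Counts quoted in the text: 141 correct out of 165 unseen images.
    validation_accuracy = accuracy(141, 165)
    print(f"{validation_accuracy:.1%}")
```

The same function applied to the training images would give the training set accuracy, which is why the two numbers have to be reported separately.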

=== Image Recognition === | |||

Over the past decade or so, great steps have been made in developing deep learning methods for image recognition and classification <ref name="ImRecNow">Seif, G. (2018, January 21). Deep Learning for Image Recognition: why it’s challenging, where we’ve been, and what’s next. Retrieved April 22, 2020, from https://towardsdatascience.com/deep-learning-for-image-classification-why-its-challenging-where-we-ve-been-and-what-s-next-93b56948fcef</ref>. In recent years, convolutional neural networks (CNNs) have shown significant improvements in image classification <ref name="DLImage">Lee, G., & Fujita, H. (2020). Deep Learning in Medical Image Analysis. New York, United States: Springer Publishing.</ref>. It has been demonstrated that representation depth is beneficial for classification accuracy <ref name ="deepCnn">Simonyan, K., & Zisserman, A. (2015, January 1). Very deep convolutional networks for large-scale image recognition. Retrieved April 22, 2020, from https://arxiv.org/pdf/1409.1556.pdf</ref>. A well-known example is the family of VGG networks, which are known for their state-of-the-art performance in image feature extraction. Their setup consists of repeated patterns of 1, 2 or 3 convolution layers and a max-pooling layer, finishing with one or more dense layers. The convolutional layer transforms the input data to detect patterns, edges and other characteristics in order to be able to correctly classify the data. The main parameters of a convolutional layer that can be varied are the activation function and the kernel size <ref name ="deepCnn">Simonyan, K., & Zisserman, A. (2015, January 1). Very deep convolutional networks for large-scale image recognition. Retrieved April 22, 2020, from https://arxiv.org/pdf/1409.1556.pdf</ref>.
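To make the role of the kernel and the activation function concrete, the following is a minimal sketch of a single convolutional filter in plain Python. It is an illustration only, not the implementation used in this project: the function name, the toy image and the hand-written vertical-edge kernel are assumptions, and in a trained CNN the kernel values are learned. As in most deep learning libraries, the kernel is slid over the image without being flipped.

```python
def conv2d_relu(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and apply ReLU."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0.0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(max(0.0, total))  # ReLU activation
        output.append(row)
    return output


# Toy 4x5 image: dark on the left, bright on the right (a vertical edge).
image = [[0, 0, 0, 1, 1] for _ in range(4)]
# Hand-written 3x3 kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]

if __name__ == "__main__":
    for row in conv2d_relu(image, kernel):
        print(row)
```

The output is zero where the image is uniform and positive where the window overlaps the edge, which is the sense in which a convolutional layer "detects" edges.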

There are still limitations to the current image recognition technologies. First of all, most methods are supervised, which means they need large amounts of labelled training data that have to be put together by someone <ref name="ImRecNow">Seif, G. (2018, January 21). Deep Learning for Image Recognition: why it’s challenging, where we’ve been, and what’s next. Retrieved April 22, 2020, from https://towardsdatascience.com/deep-learning-for-image-classification-why-its-challenging-where-we-ve-been-and-what-s-next-93b56948fcef</ref>. This could be solved by using unsupervised instead of supervised deep learning. With unsupervised learning, only a few labels would be needed instead of large labelled databases. Currently, there are no unsupervised methods that outperform supervised ones, because supervised learning can better encode the characteristics of a set of data. The hope is that in the future unsupervised learning will provide more general features so that any task can be performed <ref name = "Unsupervised">Culurciello, E. (2018, December 24). Navigating the Unsupervised Learning Landscape - Intuition Machine. Retrieved April 22, 2020, from https://medium.com/intuitionmachine/navigating-the-unsupervised-learning-landscape-951bd5842df9</ref>. Another problem is that small distortions can sometimes cause a wrong classification of an image <ref name="ImRecNow">Seif, G. (2018, January 21). Deep Learning for Image Recognition: why it’s challenging, where we’ve been, and what’s next. Retrieved April 22, 2020, from https://towardsdatascience.com/deep-learning-for-image-classification-why-its-challenging-where-we-ve-been-and-what-s-next-93b56948fcef</ref> <ref name ="distSens">Bosse, S., Becker, S., Müller, K.-R., Samek, W., & Wiegand, T. (2019). Estimation of distortion sensitivity for visual quality prediction using a convolutional neural network. Digital Signal Processing, 91, 54–65. https://doi.org/10.1016/j.dsp.2018.12.005</ref>. Even shadows on an object can have this effect, since they cause color and shape differences <ref name="RBrooks">Brooks, R. (2018, July 15). [FoR&AI] Steps Toward Super Intelligence III, Hard Things Today – Rodney Brooks. Retrieved April 22, 2020, from http://rodneybrooks.com/forai-steps-toward-super-intelligence-iii-hard-things-today/</ref>. A different pitfall is that the output feature maps are sensitive to the specific location of the features in the input. One approach to address this sensitivity is to use a max pooling layer. Max pooling layers reduce the number of pixels in the output of the previously applied convolutional layer(s). The pool size determines the number of pixels from the input data that is turned into 1 pixel of the output data. This makes the resulting downsampled feature maps more robust to changes in the position of the feature in the image <ref name ="deepCnn">Simonyan, K., & Zisserman, A. (2015, January 1). Very deep convolutional networks for large-scale image recognition. Retrieved April 22, 2020, from https://arxiv.org/pdf/1409.1556.pdf</ref>.
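The effect of max pooling on position sensitivity can be shown with a minimal sketch in plain Python. The function name and the two toy feature maps are assumptions made for illustration. With a pool size of 2, every 2x2 block of the input becomes 1 output value, so a feature that moves by one pixel within the same block produces the same pooled result. A shift that crosses a block boundary would still change the output, so the robustness is only partial.

```python
def max_pool2d(feature_map, pool_size=2):
    """Non-overlapping max pooling: each pool_size x pool_size block becomes one value."""
    rows, cols = len(feature_map), len(feature_map[0])
    pooled = []
    for r in range(0, rows - pool_size + 1, pool_size):
        pooled_row = []
        for c in range(0, cols - pool_size + 1, pool_size):
            window = [
                feature_map[r + i][c + j]
                for i in range(pool_size)
                for j in range(pool_size)
            ]
            pooled_row.append(max(window))
        pooled.append(pooled_row)
    return pooled


# The same strong activation (9) at two nearby positions in a 4x4 feature map.
original = [[0, 0, 0, 0], [0, 9, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
shifted = [[9, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]

if __name__ == "__main__":
    print(max_pool2d(original))
    print(max_pool2d(shifted))
```

Both inputs pool to the same 2x2 map, and the 16 input values are reduced to 4, which illustrates the downsampling described above.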

=== Neural Networks ===

Neural networks are a set of algorithms that are designed to recognize patterns. They interpret sensory data through machine perception, labeling or clustering raw input. The patterns they recognize are numerical, contained in vectors. Real-world data, such as images, sound, text or time series, must be translated into such numerical form before it can be processed <ref name=neuralbeginner>Nicholson, C. (n.d.). A Beginner’s Guide to Neural Networks and Deep Learning. Retrieved April 22, 2020, from https://pathmind.com/wiki/neural-network</ref>.

There are different types of neural networks <ref name=typesneural>Cheung, K. C. (2020, April 17). 10 Use Cases of Neural Networks in Business. Retrieved April 22, 2020, from https://algorithmxlab.com/blog/10-use-cases-neural-networks/#What_are_Artificial_Neural_Networks_Used_for</ref>:

| Line 134: | Line 225: | ||

* Hopfield networks: Hopfield networks are used to collect and retrieve memory like the human brain. The network can store various patterns or memories. It is able to recognize any of the learned patterns by uncovering data about that pattern <ref name=hopfield> Hopfield Network - Javatpoint. (n.d.). Retrieved April 22, 2020, from https://www.javatpoint.com/artificial-neural-network-hopfield-network</ref>.

* Boltzmann machine networks: Boltzmann machines are used for search and learning problems <ref name=boltzmann>Hinton, G. E. (2007). Boltzmann Machines. Retrieved from https://www.cs.toronto.edu/~hinton/csc321/readings/boltz321.pdf</ref>.

==== Convolutional Neural Networks ====

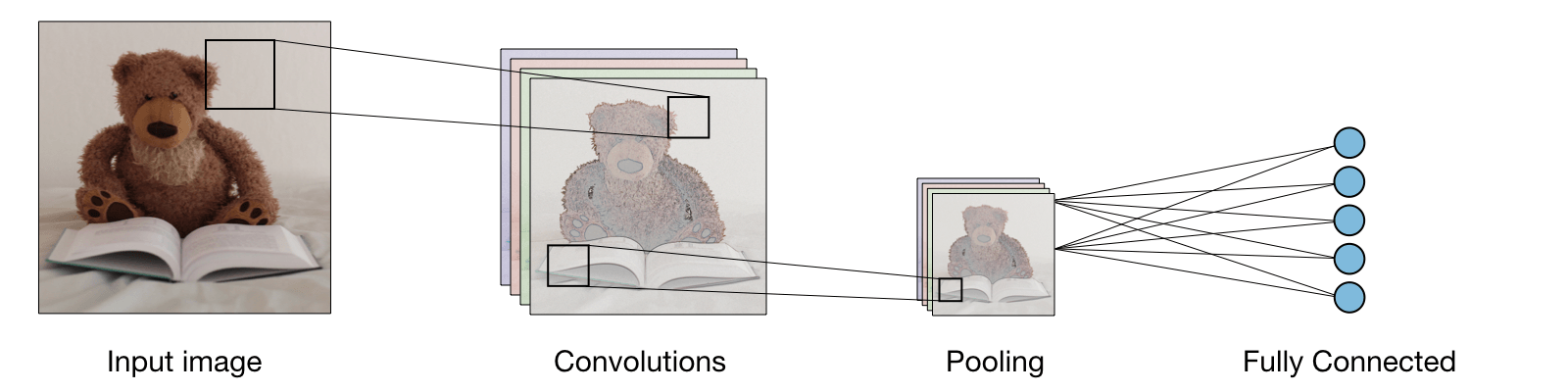

In this project, the neural network should retrieve data from images. Therefore a convolutional neural network could be used. Convolutional neural networks are generally composed of the following layers <ref name=convolution>Amidi, A., & Amidi, S. (n.d.). CS 230 - Convolutional Neural Networks Cheatsheet. Retrieved April 22, 2020, from https://stanford.edu/%7Eshervine/teaching/cs-230/cheatsheet-convolutional-neural-networks</ref>:

[[File:CNN.png|800px|Image: 800 pixels|center|thumb|Layers in a convolutional neural network.]]

The convolutional layer transforms the input data to detect patterns, edges and other characteristics so that the data can be classified correctly. The main parameters that can be changed for a convolutional layer are the activation function and the kernel size. Max pooling layers reduce the number of pixels in the output of the previously applied convolutional layer(s). Max pooling is applied to reduce overfitting. It also addresses a problem with the output feature maps, namely that they are sensitive to the location of the features in the input: downsampling with a max pooling layer makes the resulting feature maps more robust to changes in the position of a feature in the image. The pool size determines the number of pixels from the input data that is turned into 1 pixel of the output data. Fully connected layers connect all input values via separate connections to an output channel. Since this project has to deal with a binary problem, the final fully connected layer will consist of 1 output.

Stochastic gradient descent (SGD) is the most common and basic optimizer used for training a CNN <ref name=CNNrad>Yamashita, Rikiya & Nishio, Mizuho & Do, Richard & Togashi, Kaori. (2018). Convolutional neural networks: an overview and application in radiology. Insights into Imaging. 9. 10.1007/s13244-018-0639-9 </ref>. It optimizes the model parameters using the gradient information of the loss function. However, many other optimizers have been developed that could give a better result. Momentum keeps the history of the previous update steps and combines this information with the next gradient step to reduce the effect of outliers <ref name=gdl>Qian, N. (1999, January 12). On the momentum term in gradient descent learning algorithms. - PubMed - NCBI. Retrieved April 22, 2020, from https://www.ncbi.nlm.nih.gov/pubmed/12662723</ref>. RMSProp also tries to keep the updates stable, but in a different way than momentum. RMSProp also takes away the need to adjust the learning rate <ref name=generalization>Hinton, G., Srivastava, N., Swersky, K., Tieleman, T., & Mohamed , A. (2016, December 15). Neural Networks for Machine Learning: Overview of ways to improve generalization [Slides]. Retrieved from http://www.cs.toronto.edu/~hinton/coursera/lecture9/lec9.pdf</ref>. Adam takes the ideas behind both momentum and RMSProp and combines them into one optimizer <ref name=stoch_optim>Kingma, D. P., & Ba, J. (2015). Adam: A Method for Stochastic Optimization. Presented at the 3rd International Conference for Learning Representations, San Diego.</ref>. Nesterov momentum is a variant of the momentum optimizer that looks ahead: it evaluates the gradient at the position the momentum step would lead to and adjusts the update accordingly <ref name=convergence>Nesterov, Y. (1983). A method for unconstrained convex minimization problem with the rate of convergence o(1/k^2).</ref>. Nadam is an optimizer that combines RMSProp and Nesterov momentum <ref name=Nesterovmomentum>Dozat, T. (2016). Incorporating Nesterov Momentum into Adam. Retrieved from https://openreview.net/pdf?id=OM0jvwB8jIp57ZJjtNEZ</ref>.
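The effect of max pooling on position sensitivity can be illustrated with a small sketch. The function name <code>max_pool</code> and the 4 x 4 example maps are made up for illustration and are not part of the project code; the sketch assumes non-overlapping pooling windows.

```python
def max_pool(feature_map, pool_size=2):
    """Downsample a 2D feature map by taking the maximum of each
    non-overlapping pool_size x pool_size block."""
    rows = len(feature_map) // pool_size
    cols = len(feature_map[0]) // pool_size
    pooled = []
    for r in range(rows):
        row = []
        for c in range(cols):
            # Collect all values inside the current pooling window.
            block = [
                feature_map[r * pool_size + i][c * pool_size + j]
                for i in range(pool_size)
                for j in range(pool_size)
            ]
            row.append(max(block))
        pooled.append(row)
    return pooled


# A strong activation (9) at one position ...
original = [
    [0, 0, 0, 0],
    [0, 9, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
# ... and the same activation shifted by one pixel diagonally.
shifted = [
    [9, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]

print(max_pool(original))  # [[9, 0], [0, 0]]
print(max_pool(shifted))   # [[9, 0], [0, 0]]
```

With a pool size of 2, every block of 4 input pixels becomes 1 output pixel, and a small shift of the feature within a block leaves the pooled output unchanged.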

==== YOLO ====

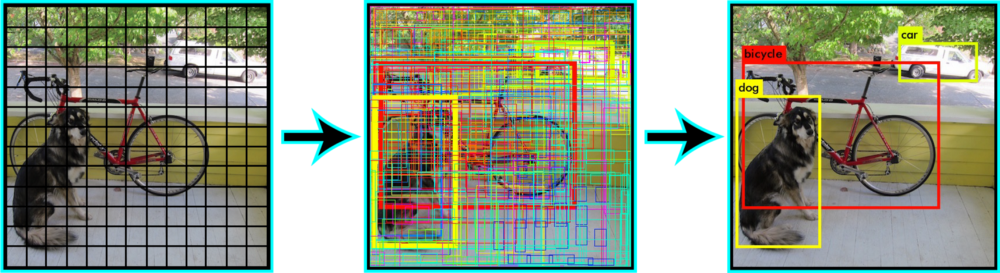

YOLO is a deep learning algorithm that came out in May 2016. It is popular because it is very fast compared with other deep learning algorithms <ref name = yolo> Canu, S. (2019, June 27). YOLO object detection using Opencv with Python. Retrieved May 26, 2020, from https://pysource.com/2019/06/27/yolo-object-detection-using-opencv-with-python/ </ref>. YOLO takes a completely different approach than prior detection systems. Prior detection systems apply a model to an image at multiple locations and scales, and high-scoring regions of the image are considered detections. YOLO applies a single deep convolutional neural network to the full image. This network divides the image into a grid of cells and each cell directly predicts a bounding box and object classification <ref name = yolo3>Brownlee, J. (2019, October 7). How to Perform Object Detection With YOLOv3 in Keras. Retrieved May 29, 2020, from https://machinelearningmastery.com/how-to-perform-object-detection-with-yolov3-in-keras/</ref>. These bounding boxes are weighted by the predicted probabilities <ref name = yolo2>Redmon, J. (2019, November 15). pjreddie/darknet. Retrieved May 29, 2020, from https://github.com/pjreddie/darknet/wiki/YOLO:-Real-Time-Object-Detection</ref>.
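As a rough illustration of the grid idea, the sketch below computes which grid cell an object's center falls into. The function name <code>responsible_cell</code>, the 500 x 500 image size and the 13 x 13 grid are assumptions chosen for the example; they are not taken from the project code.

```python
def responsible_cell(x_center, y_center, image_width, image_height, grid_size):
    """Return the (row, col) of the grid cell that contains the object center.

    Coordinates are in pixels with the origin in the top-left corner.
    """
    # Scale the pixel position to grid units and round down to a cell index.
    col = min(int(x_center / image_width * grid_size), grid_size - 1)
    row = min(int(y_center / image_height * grid_size), grid_size - 1)
    return row, col


# Hypothetical object centered at (250, 100) in a 500 x 500 image, 13 x 13 grid.
print(responsible_cell(250, 100, 500, 500, 13))  # (2, 6)
```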

The newest version of YOLO is YOLO v3. It uses a variant of Darknet for training and testing. Darknet originally has 53 layers trained on ImageNet. For the task of detection, 53 more layers are stacked onto it. In total, this means that a 106-layer fully convolutional underlying architecture is used for YOLO v3. The figure below shows what the architecture of YOLO v3 looks like <ref name = yolo4> Kathuria, A. (2018, April 23). What’s new in YOLO v3? Retrieved May 29, 2020, from https://towardsdatascience.com/yolo-v3-object-detection-53fb7d3bfe6b</ref>.

[[File:YOLO_network.png|800px|Image: 800 pixels|center|thumb|YOLO network structure <ref name = yolo4> Kathuria, A. (2018, April 23). What’s new in YOLO v3? Retrieved May 29, 2020, from https://towardsdatascience.com/yolo-v3-object-detection-53fb7d3bfe6b</ref>.]]

==== LabelImg ====

The network needs to be trained on images of the objects that it has to identify. These training images need to be labeled to assign the objects in them to a certain class. This can be done with LabelImg, a graphical image annotation tool which can be seen below. The objects need to be identified manually by drawing a rectangular box around each of them and assigning it a label.

[[File:Labelimg.png|650px|Image: 800 pixels|center|thumb|LabelImg.]]
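A drawn rectangle ends up as one line in a label file. The sketch below assumes that the annotations are saved in the YOLO text format (class index followed by the box center, width and height, all relative to the image size); the function name <code>to_yolo_label</code> and the example coordinates are made up for illustration.

```python
def to_yolo_label(class_id, x_min, y_min, x_max, y_max, image_width, image_height):
    """Convert a pixel rectangle into one line of a YOLO-format label file."""
    # YOLO labels store the box center and size as fractions of the image size.
    x_center = (x_min + x_max) / 2 / image_width
    y_center = (y_min + y_max) / 2 / image_height
    width = (x_max - x_min) / image_width
    height = (y_max - y_min) / image_height
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Hypothetical box from (100, 150) to (300, 450) in a 500 x 500 image, class 0.
print(to_yolo_label(0, 100, 150, 300, 450, 500, 500))
# 0 0.400000 0.600000 0.400000 0.600000
```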

In the end, the network should be able to detect the objects it was trained on. It can do this on different formats: photos, videos or a webcam feed. The figure below shows an example of the network at work. First, the network divides the image into regions and predicts the bounding boxes and probabilities for each region. Then, these bounding boxes are weighted by the predicted probabilities.

[[File:YOLO_example2.png|650px|Image: 800 pixels|center|thumb|Example object detection <ref name = example2>Bhattarai, S. (2019, December 25). What is YOLO v2 (aka YOLO 9000)? Retrieved June 1, 2020, from https://saugatbhattarai.com.np/what-is-yolo-v2-aka-yolo-9000/</ref>.]]
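One common way to read "weighting by the predicted probabilities" is to multiply the objectness score of a box by the probability of its most likely class and to keep only boxes above a threshold. The sketch below follows that reading; the dictionary layout, the threshold of 0.5 and the example numbers are assumptions for illustration and do not come from the project code.

```python
def keep_confident(detections, threshold=0.5):
    """Keep detections whose objectness times best class probability
    reaches the threshold."""
    kept = []
    for det in detections:
        # Pick the class with the highest predicted probability.
        best_class = max(det["class_probs"], key=det["class_probs"].get)
        score = det["objectness"] * det["class_probs"][best_class]
        if score >= threshold:
            kept.append((best_class, round(score, 3), det["box"]))
    return kept


# Hypothetical raw predictions; boxes are (x, y, width, height) in pixels.
detections = [
    {"objectness": 0.9, "class_probs": {"plastic": 0.8, "can": 0.2}, "box": (40, 60, 120, 200)},
    {"objectness": 0.4, "class_probs": {"plastic": 0.3, "can": 0.7}, "box": (300, 80, 90, 90)},
]

print(keep_confident(detections))
# [('plastic', 0.72, (40, 60, 120, 200))]
```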

= Further Exploration = | |||

=== Location === | |||

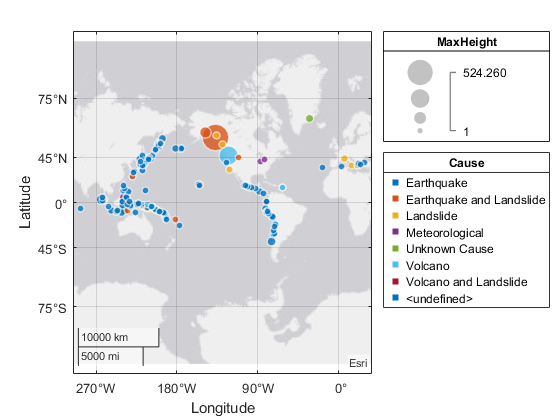

Rivers are seen as a major source of debris in the oceans <ref name=“plastic”>Lebreton. (2018, January 1). OSPAR Background document on pre-production Plastic Pellets. Retrieved May 3, 2020, from https://www.ospar.org/documents?d=39764</ref> . The tide has a big influence on the direction of the floating waste. During low tide the waste flows towards the sea, and during high tide it can flow over the river towards the river banks <ref name='plasticresearch'> Schone Rivieren. (2019). Wat spoelt er aan op rivieroevers? Resultaten van twee jaar afvalmonitoring aan de oevers van de Maas en de Waal. Retrieved from https://www.schonerivieren.org/wp-content/uploads/2020/05/Schone_Rivieren_rapportage_2019.pdf</ref>. | |||

A major consequence of plastic waste in rivers, seas and oceans and on river banks is that many animals mistake plastic for food, often resulting in death. There are also economic consequences. More waste in the water makes water purification more difficult, especially because of microplastics, and purifying the water therefore costs extra money. Also, cleaning up waste in river areas costs millions a year <ref name = "cleaningwaste"> Staatsbosbeheer. (2019, September 12). Dossier afval in de natuur. Retrieved May 3, 2020, from https://www.staatsbosbeheer.nl/over-staatsbosbeheer/dossiers/afval-in-de-natuur</ref>.

A large-scale investigation has taken place into the wash-up of waste on the banks of rivers. At the river banks of the Maas, an average of 630 pieces of waste per 100 meters of river bank was counted, of which 81[%] is plastic. Some measurement locations showed a count of more than 1200 pieces of waste per 100 meters of river bank, and can be marked as hotspots. A big concentration of these hotspots can be found at the river banks of the Maas in the south of Limburg. A lot of waste originating from France and Belgium flows into the Dutch part of the Maas here. Evidence for this is the large amount of plastic packaging with French texts. Also, in these hotspots the proportion of plastic is even higher, namely 89[%] instead of 81[%] <ref name='plasticresearch'> Schone Rivieren. (2019). Wat spoelt er aan op rivieroevers? Resultaten van twee jaar afvalmonitoring aan de oevers van de Maas en de Waal. Retrieved from https://www.schonerivieren.org/wp-content/uploads/2020/05/Schone_Rivieren_rapportage_2019.pdf</ref>.

The Waste Identifier should help to tackle the problem of the plastic soup at its roots, the rivers. Because of the high plastic concentration in the Maas in the south of Limburg, the image recognition module will be designed specifically for this part of the Maas. The Noria is often placed in locks to make sure it does not interfere with other water traffic. Therefore, the focus will also be on those specific parts of the river Maas in the south of Limburg.

=== Waste ===

Extensive research into the amount of waste on the river banks of the Maas has been carried out <ref name='plasticresearch'> Schone Rivieren. (2019). Wat spoelt er aan op rivieroevers? Resultaten van twee jaar afvalmonitoring aan de oevers van de Maas en de Waal. Retrieved from https://www.schonerivieren.org/wp-content/uploads/2020/05/Schone_Rivieren_rapportage_2019.pdf</ref>. As explained before, waste in rivers can float into the oceans or can end up on river banks. Therefore, the counted amount of waste on the river banks of the Maas is only a part of the total amount of litter in the rivers, since another part flows into the ocean. The exact numbers of how much flows into the oceans are not clear. However, it is certain that in the south of Limburg an average of more than 1200 pieces of waste per 100 meters of river bank of the Maas was counted, of which 89[%] is plastic.

A top 15 was made of the types of waste that were encountered the most. The type of plastic most commonly found consists of indefinable pieces of soft/hard plastic and plastic film that are smaller than 50 [cm], including styrofoam. These indefinable pieces also include nurdles. These are small plastic granules that are used as a raw material for plastic products. Again, the south of Limburg has the highest concentration of this type of waste. This is because there are relatively more industrial areas there. Another big part of the counted plastics are disposable plastics, often used as food and drink packaging. In total, 25[%] of all encountered plastic is disposable plastic from food and drink packaging.

Only litter that has washed up on the river banks has been counted. The Waste Identifier can help with monitoring the waste flow in the water of the rivers to get a more complete view of hotspots and often encountered waste types.

=== Image Database ===

The CNN or YOLO can be pretrained on the large-scale ImageNet dataset. Due to this pre-training, the model has already learned certain image features from this large dataset. Secondly, the neural network should be trained on a database specific to this subject. This database should be randomly divided into 3 groups. The biggest group is the training data, which the neural network uses to learn patterns. The second group is the validation data, for which the network predicts the outcome after each round of training. Once this validation data has been analyzed, a new epoch is started; the validation data itself is not used to update the weights. Once a final model has been created, a test dataset can be used to analyze its performance.
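The random division into three groups could be sketched as follows. The fractions (70[%] training, 15[%] validation, remainder for testing), the fixed seed and the file names are assumptions for the example; the page does not state which proportions were actually used.

```python
import random


def split_dataset(items, train_frac=0.7, val_frac=0.15, seed=0):
    """Randomly divide items into training, validation and test groups."""
    items = list(items)
    # A fixed seed makes the split reproducible between runs.
    random.Random(seed).shuffle(items)
    n_train = int(len(items) * train_frac)
    n_val = int(len(items) * val_frac)
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]  # everything that is left over
    return train, val, test


# Hypothetical file names standing in for the labelled images.
images = [f"image_{i:03d}.jpg" for i in range(100)]
train, val, test = split_dataset(images)
print(len(train), len(val), len(test))
```

Every image ends up in exactly one of the three groups, so the test data stays unseen until the final evaluation.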

It is difficult to find a database that corresponds perfectly to our subject. First of all, a big dataset of plastic waste in the ocean is available <ref name ='plasticseadata'>Buffon X. (2019, May 20) Robotic Detection of Marine Litter Using Deep Visual Detection Models. Retrieved May 9, 2020, from https://ieeexplore.ieee.org/abstract/document/8793975</ref>. A big dataset of plastic shapes can also be used; although these images do not show waste in the water, they can still be useful <ref name = 'plasticdata'> Thung G. (2017, Apr 10) Dataset of images of trash Torch-based CNN for garbage image classification. Retrieved May 9, 2020, from https://github.com/garythung/trashnet</ref>. Using image preprocessing, it could still be possible to find corresponding plastic shapes in the pictures that the camera takes of the water. Lastly, a dataset can be created by ourselves.

= Neural Network Design =

Because of the higher frame rate that can be achieved using YOLO in comparison to a CNN, YOLO has been chosen as the object detection method. A dataset, which is explained further below, is labelled and used for training and validation. The training is done using Google Colab, so that an external GPU, made available by Google, can be used to improve the training speed. Here, weights from Darknet, which is the framework for YOLO, are downloaded and iteratively changed to fit the database and reach the lowest validation loss. However, a connection to Google Colab can only be kept for 12 hours. Because of this, the training has been done in multiple sessions, with each new session starting from the final weights of the previous one. By doing this, the validation loss has been reduced to 0.045. Finally, these weights have been tested on new images and videos to verify the low loss. Counting software has also been created. For this, it is assumed that there is a current in the water and that new waste objects only appear from the top of the frame. If an object has been detected and no object was higher than this object, it is a new object and it will be counted.
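The counting rule can be sketched as below. This is one possible reading of the rule and not the code from "real_time_yoloV2.py": it assumes that each frame is reduced to the y-coordinates of the detected objects (0 is the top of the frame, objects drift downwards) and that an object is new when it lies higher than the highest object of the previous frame. The function name and the example frames are hypothetical.

```python
def count_new_objects(frames):
    """Count objects entering from the top of the frame.

    frames: list of lists with the y-coordinate of every detection
    in that frame (pixels, 0 = top of the frame).
    """
    count = 0
    prev_top = None  # smallest y (highest object) in the previous frame
    for ys in frames:
        if not ys:
            prev_top = None
            continue
        top = min(ys)
        # Existing objects only move down, so a detection above the
        # previous highest object must have entered the frame just now.
        if prev_top is None or top < prev_top:
            count += 1
        prev_top = top
    return count


# One object drifts down, then a second one enters at the top.
frames = [[10], [30], [5, 50], [25, 70], [45, 90]]
print(count_new_objects(frames))  # 2

# Same scene, but the second object is missed in one frame.
frames_with_miss = [[10], [30], [5, 50], [70], [45, 90]]
print(count_new_objects(frames_with_miss))  # 3
```

The second example shows a weakness of such a frame-by-frame rule: when a detection is missed in a single frame, the object looks new again in the next frame and is counted twice. The sketch also counts at most one new object per frame.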

Although part of the code for testing and training has been obtained from the PySource blog <ref name = 'Pysource'> Sergio Canu (2020, April 1) Train YOLO to detect a custom object (online with free GPU)</ref>, this code needed to be adapted to our problem statement and number of classes. Besides, code for counting and for changing the label number to our specific problem has been created. The files can be downloaded from the following GitHub link: https://github.com/mennocromwijkk/Robots_Everywhere_3. Here, the file "bottle_and_can_Train_YoloV3 .ipynb" can be used to train the model in Google Colab. The zip file "obj.zip" needs to be placed in a folder named "yolov3" in Google Drive. The file "yolo_object_detection.py" can be used for detection in images and "real_time_yoloV2.py" for detection in videos, where the counting software has been added.

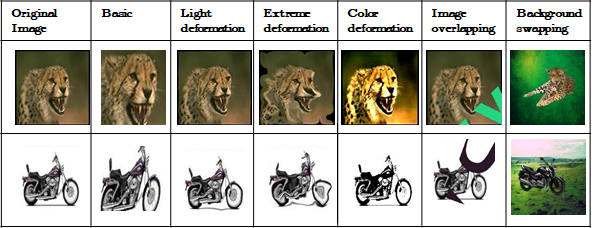

==== Data Augmentation ====

The dataset does not contain as many images as desired. If there is not enough data, neural networks tend to over-fit to the small amount of data there is, which is undesirable. That is why some way has to be found to increase the size of the dataset. One way to do this is data augmentation <ref name='dak1'>https://nanonets.com/blog/data-augmentation-how-to-use-deep-learning-when-you-have-limited-data-part-2/</ref><ref name='dak2'>Goyal, S. (2019, December 17). MachineX: Image Data Augmentation Using Keras. Retrieved June 19, 2020, from https://towardsdatascience.com/machinex-image-data-augmentation-using-keras-b459ef87cd22</ref>. With data augmentation, not only the original images are fed into the neural network, but also slightly altered images. Alterations include:

* Translation

* Rotation

* Scaling

* Flipping

* Illumination

* Overlapping images

* Gaussian noise, etc.

[[File:data_aug.png|600px|Image: 800 pixels|center|thumb|Different uses of data augmentation. Every image is a completely new one to the neural network.]]

Every altered image counts as completely new data for the neural network, which is why it is able to train using this duplicated data without over-fitting to it.

Neural networks benefit from having more data to train on, simply because the classifications become stronger with more data. On top of that, neural networks that are trained on translated, resized or rotated images are much better at classifying objects that are slightly altered in any way (this is called invariance). In the case of waste in water, training a neural network to be invariant makes a lot of sense: there is no telling whether a piece of waste will be upside-down, slightly damaged, not fully visible, etc. A data augmentation script has been written and can be applied once the dataset is final. It can be found via the following GitHub link: https://github.com/mennocromwijkk/Robots_Everywhere_3 at "data_aug.py".
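As an illustration of one of these alterations, the sketch below flips an image horizontally and mirrors the bounding box labels with it. It is not the content of "data_aug.py", which may work differently; the image is represented as a plain list of pixel rows and the labels are assumed to be in the relative YOLO format (class, x center, y center, width, height).

```python
def flip_horizontal(image, labels):
    """Mirror an image left-to-right and adjust its bounding box labels.

    image: list of pixel rows.
    labels: list of (class_id, x_center, y_center, width, height) tuples
    with coordinates relative to the image size (0.0 to 1.0).
    """
    flipped_image = [row[::-1] for row in image]
    # Only the horizontal center changes; the box size and height stay the same.
    flipped_labels = [
        (class_id, 1.0 - x_center, y_center, width, height)
        for class_id, x_center, y_center, width, height in labels
    ]
    return flipped_image, flipped_labels


# Tiny hypothetical 2 x 3 grayscale image with one labelled object.
image = [
    [1, 2, 3],
    [4, 5, 6],
]
labels = [(0, 0.25, 0.5, 0.2, 0.4)]

flipped_image, flipped_labels = flip_horizontal(image, labels)
print(flipped_image)   # [[3, 2, 1], [6, 5, 4]]
print(flipped_labels)  # [(0, 0.75, 0.5, 0.2, 0.4)]
```

Adjusting the labels together with the image avoids having to annotate the augmented images again by hand.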

==== Dataset ====

The idea is that a test setup will be created and placed in a black reservoir. Since the final proof-of-concept will be tested in this setup, it makes sense that the dataset has similar conditions. This is why the dataset will consist of self-taken pictures using a similar setup (position, camera angle, lighting) as the test setup, and of an online dataset which contains images of waste outside the water. This way, a dataset that is large enough to train the neural network is obtained. The final dataset can be found via the following GitHub link: https://github.com/mennocromwijkk/Robots_Everywhere_3.

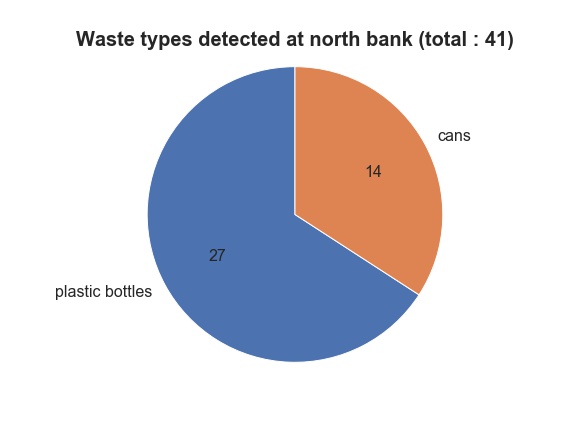

The self-taken pictures will be made from a very slight angle (so not directly above the plastic), in reasonable shade, with as few reflections as possible, to avoid confusing the neural network. The number of useful images can later be increased using data augmentation. Different types of river waste will be gathered and submerged in the water of the reservoir. There will be images of:

*Plastic bottles

*Drinking cans

The ground truth of the images will be categorized by hand using the labelling program 'labelImg'. With this program, the position of the object can also be indicated. More than one type of waste can be in one image. There will also be some noise in the water, to make it a bit harder for the neural network to recognize the waste. This noise will come in the form of leaves, similar to the noise that will be faced at the Noria's actual installation.

This means that our final product will be mostly a proof-of-concept. If the idea is actually realized on the Noria, it is advised to recreate the dataset in its river environment, so that the neural network does not get confused by any sudden changes. Given the effectiveness in the black vat setup, the possible effectiveness of the neural network in the Noria's environment can be discussed at the end of the project.

===== Photos =====

In the end, we took 78 photos containing bottles and cans ourselves to train the neural network with, together with the online dataset of plastic bottles and cans on a white background <ref name = 'plasticdata'> Thung G. (2017, Apr 10) Dataset of images of trash Torch-based CNN for garbage image classification. Retrieved May 9, 2020, from https://github.com/garythung/trashnet</ref>. This is the TrashNet database, which is open to everybody and can be downloaded from GitHub. The photos are compressed to 500 x 500 pixels using a Photoshop script. See the examples below.

[[File:dataset2.png|600px|thumb|center|4 of the photos that were taken, and 4 augmented photos.]]

Data augmentation was applied to this set of photos, expanding the dataset to 529 photos. The data augmentation made use of:

*Scaling

*Translation

*Rotation

*Flipping

Each of these augmentations was applied at random. The random range was kept very small, so that few problems occurred with trash being stretched out unrealistically. The resulting dataset is only 18 MB and can be found via the following GitHub link: https://github.com/mennocromwijkk/Robots_Everywhere_3. The augmented images were categorized by hand as 'plastic' and 'can', using labelImg. They were then zipped into a folder and uploaded to Google Colab to start training.

====Test Plan====

''Goal:''

Test the number of correctly identified and counted waste pieces in the water.

''Hypothesis:''

At least 90[%] of the waste will be identified and counted correctly out of at least 50 images and a video of waste in water.

''Materials:''

* Camera

* Different types of waste

* Image recognition software

* Reservoir with water

''Method:''

* Throw different types of waste in the water

* Take at least 50 different images of this from above, with the camera (there can be more pieces of waste within one image)

* Make a video of the floating waste

* Add the images to a folder

* Run the image recognition software

* Analyze how many pieces of waste are correctly identified and counted

''Note:'' due to limited resources it was not possible to make a long video of floating waste, so separate videos were made and placed one after the other. To get more reliable results in the future, more images and videos can be used.
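Checking the hypothesis comes down to dividing the number of correctly identified pieces by the total number of pieces in the test images. The sketch below shows this calculation; the function name and the counts are hypothetical and are not test results from this project.

```python
def identification_rate(results):
    """Fraction of waste pieces that was identified correctly.

    results: list of (pieces_present, pieces_correct) tuples, one per image.
    """
    total = sum(present for present, _ in results)
    correct = sum(found for _, found in results)
    return correct / total


# Hypothetical counts for three test images.
results = [(3, 3), (5, 4), (2, 2)]
rate = identification_rate(results)
print(rate)          # 0.9
print(rate >= 0.90)  # True
```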

==== Testing Results (photos) ====

New test photos were taken of individual waste items in the reservoir, and of a very crowded reservoir filled with lots of trash. This test dataset can also be found on GitHub (https://github.com/mennocromwijkk/Robots_Everywhere_3). The idea was to see if the current neural network could also handle crowded photos of trash, given that recognizing individual items might be fairly easy for it.

Most of the test photos gave correct results. The test photos of the individual waste items all worked perfectly. However, in some of the crowded photos, one or two items were missed. Sometimes this was caused by the object being a little out of frame. In other cases, the object was behind the surrounding objects, making it too hidden to be recognized.

[[File:dataset3.png|600px|thumb|center|4 examples of image recognition on the crowded test photos.]]

Waste being out of frame should not be an issue in the final application of the image recognition, since the camera will film the entire width where the trash can be. Waste being too close together might cause a problem on the Noria though, as clogging of waste is a realistic problem. This problem could be (mostly) solved by training on very crowded images, which will force the neural network to look for smaller parts of waste hidden behind other parts.

==== Testing Results (videos) ====

The most important part of this project is to visualize the amount and type of waste that is being removed from the water. This is done using the object detection software. A script has been written that classifies the waste and also counts the number of waste objects that are removed from the water. The same neural network that is used for the photos can be used for this purpose. In this case, the neural network interprets each frame individually.

To test whether the counting works, new videos were recorded in which the camera moves over the trash, so that the trash appears to move from top to bottom. The video below shows a demonstration of the counting software. At this moment the software is relatively simple, because that is enough to show that the concept works and there was not enough time to write a more complicated, better-working script.

[[File:Counting.gif|1500px|thumb|center|Demonstration of counting software.]]

The second video shows that some objects are counted double. This occurs because the object is not detected in a certain frame. To solve this, tracking software can be used so that the object can be followed. The object could then be counted when it passes a certain line (see https://www.youtube.com/watch?v=WcKx9u6XmDI) or when it is in a certain box (see https://www.youtube.com/watch?v=3Tw7q0YdcHA).

[[File:Double_counting.gif|1500px|thumb|center|Two objects are counted double.]]
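The line-crossing idea could look roughly like the sketch below. It assumes that a tracker, which is not part of the current software, already assigns a stable ID to every object and reports the y-position of its center per frame, with <code>None</code> for frames in which the object was not detected. The function name, the data layout and the line position are hypothetical.

```python
def count_line_crossings(tracks, line_y):
    """Count tracked objects whose center moves downwards across line_y.

    tracks: dict mapping a track ID to the list of center y-positions
    over time (None for frames where the object was missed).
    """
    count = 0
    for positions in tracks.values():
        # Skip missed frames; the track ID keeps the object identity.
        seen = [y for y in positions if y is not None]
        crossed = any(a < line_y <= b for a, b in zip(seen, seen[1:]))
        if crossed:
            count += 1
    return count


# Track 1 crosses the line at y = 100 despite one missed frame;
# track 2 has not reached the line yet.
tracks = {
    1: [10, 40, None, 120, 160],
    2: [5, 30, 60],
}
print(count_line_crossings(tracks, line_y=100))  # 1
```

Because every track is counted at most once, a missed detection in a single frame no longer leads to a double count, provided the tracker keeps the same ID for the object.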

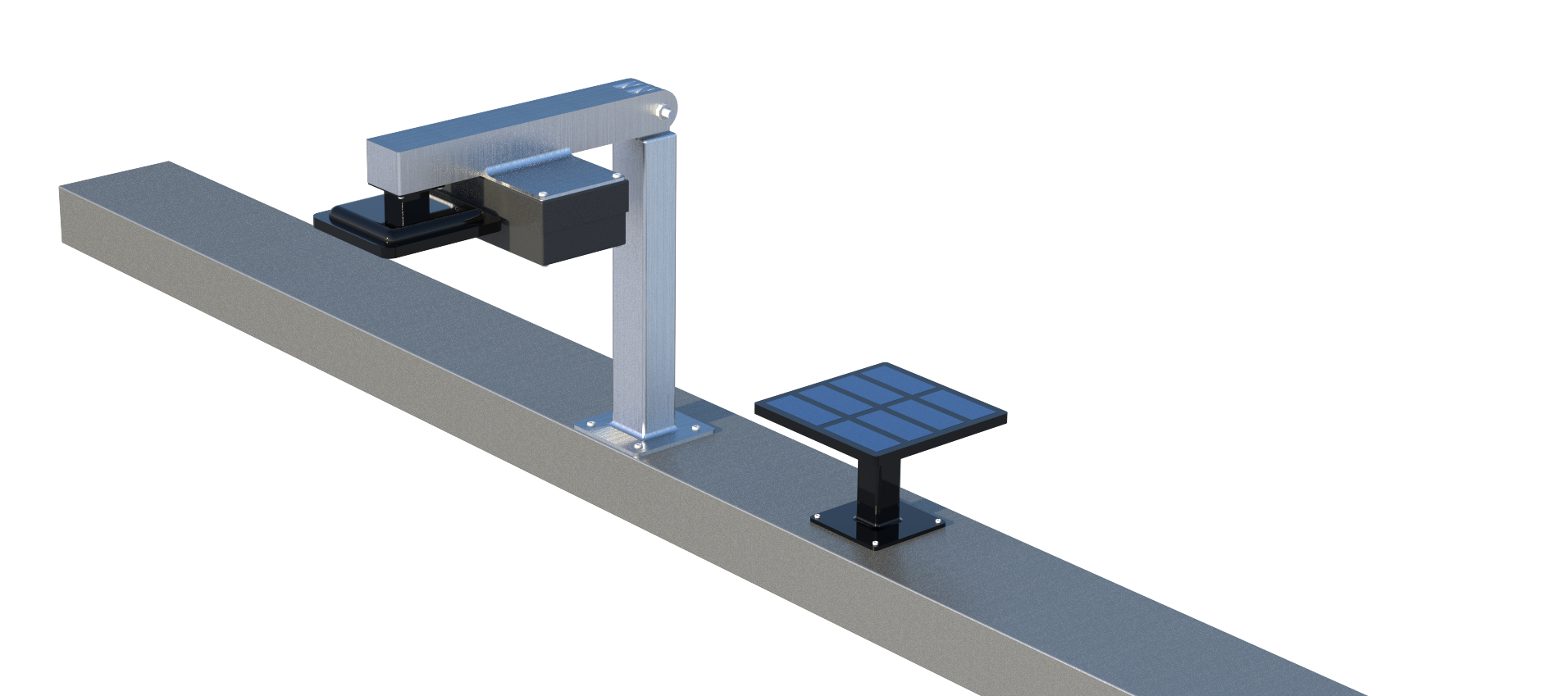

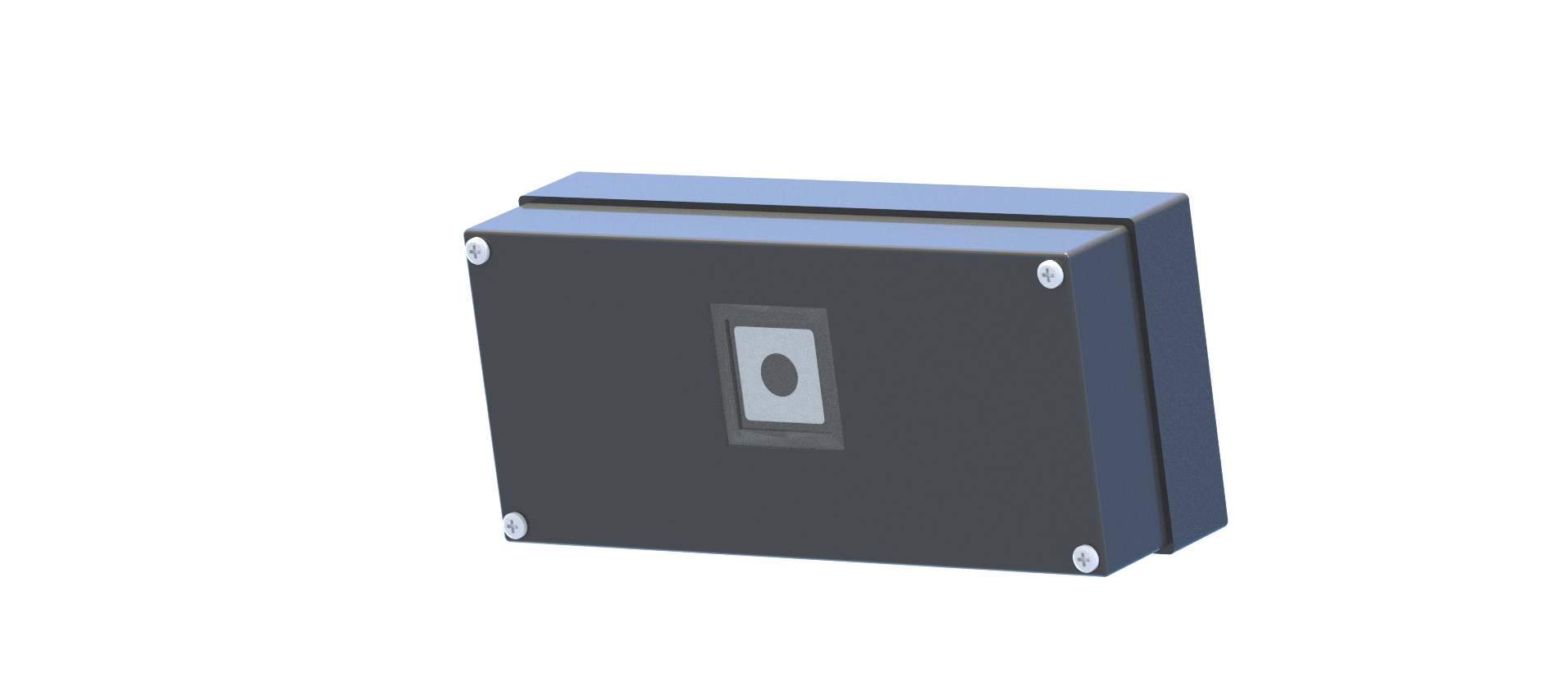

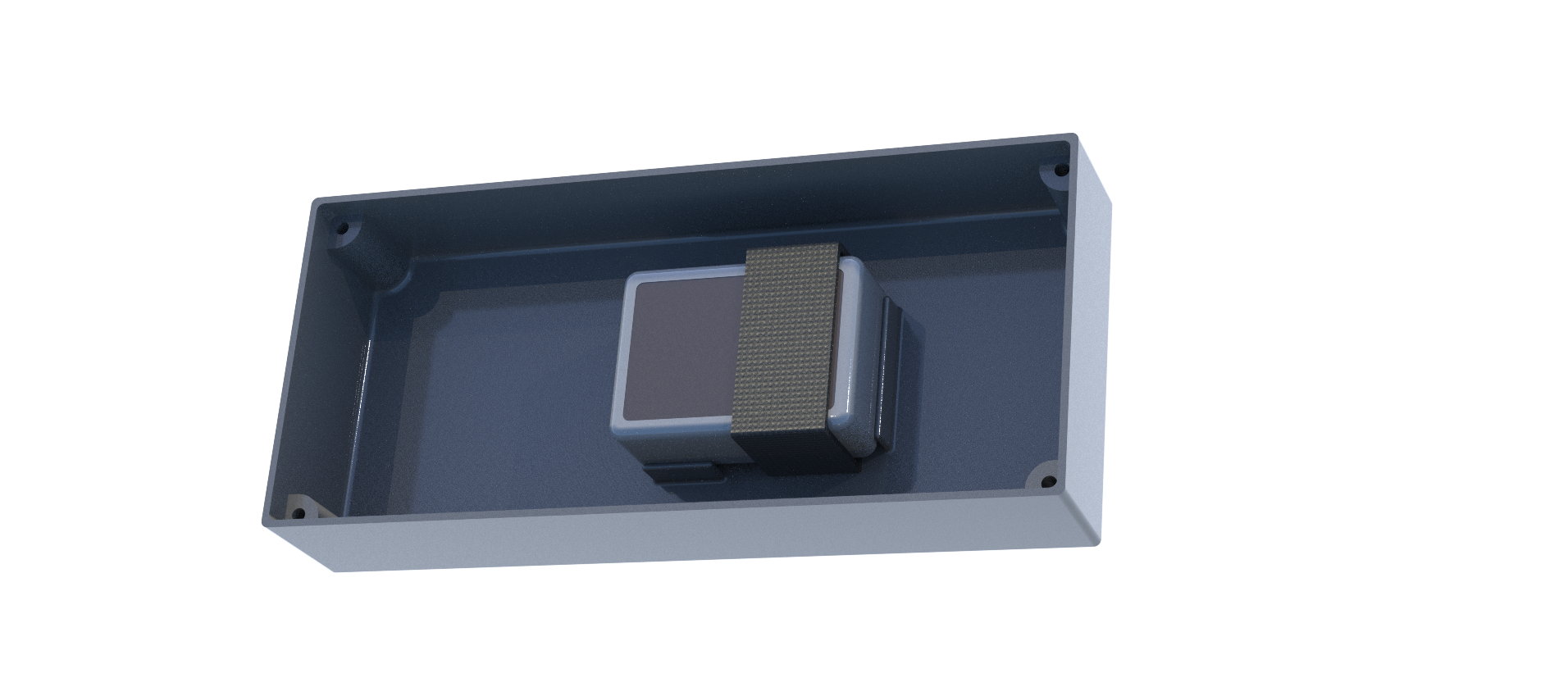

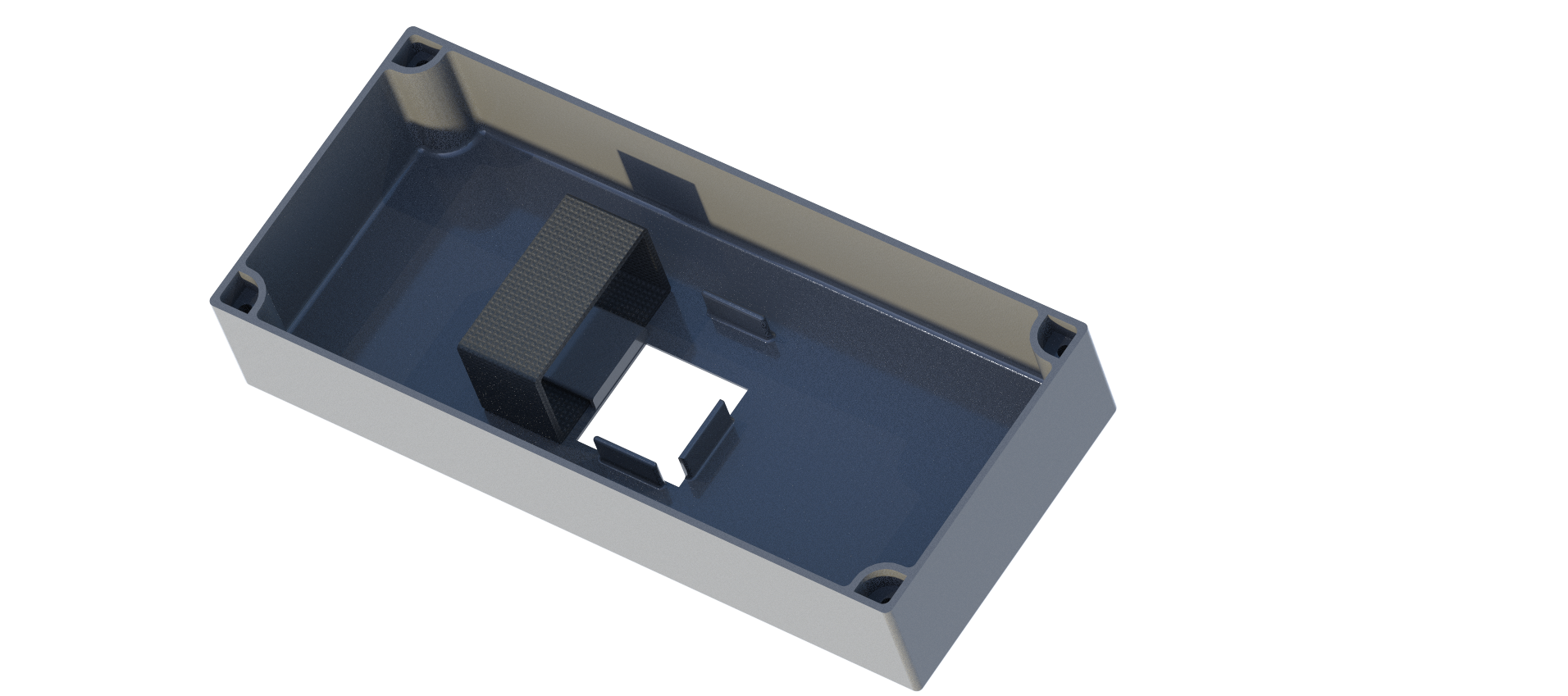

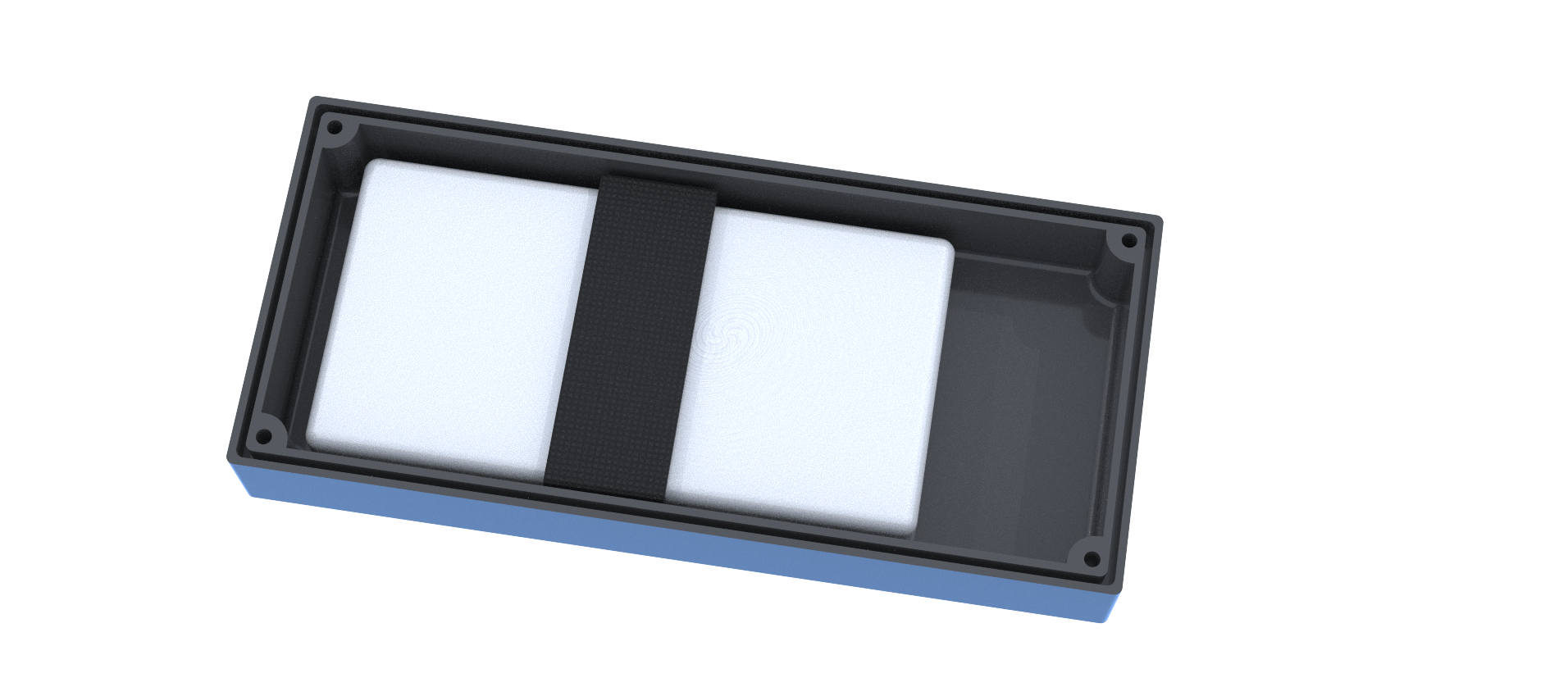

= Design =

Besides the image recognition program, the module itself will need to meet the requirements mentioned at the beginning of this page. There, it is mentioned that the robot should operate at all moments when the Noria is also operating. This means that the battery life should be long or that some kind of power generation must be present at the Noria itself. Also, the design should be weatherproof and robust. The robot will need to have certain functionalities to be able to meet these requirements. The focus will be on specific parts of the robot that are essential to its operation. These include:

*Image recognition hardware

*Data transfer

*Power source

*General assembly

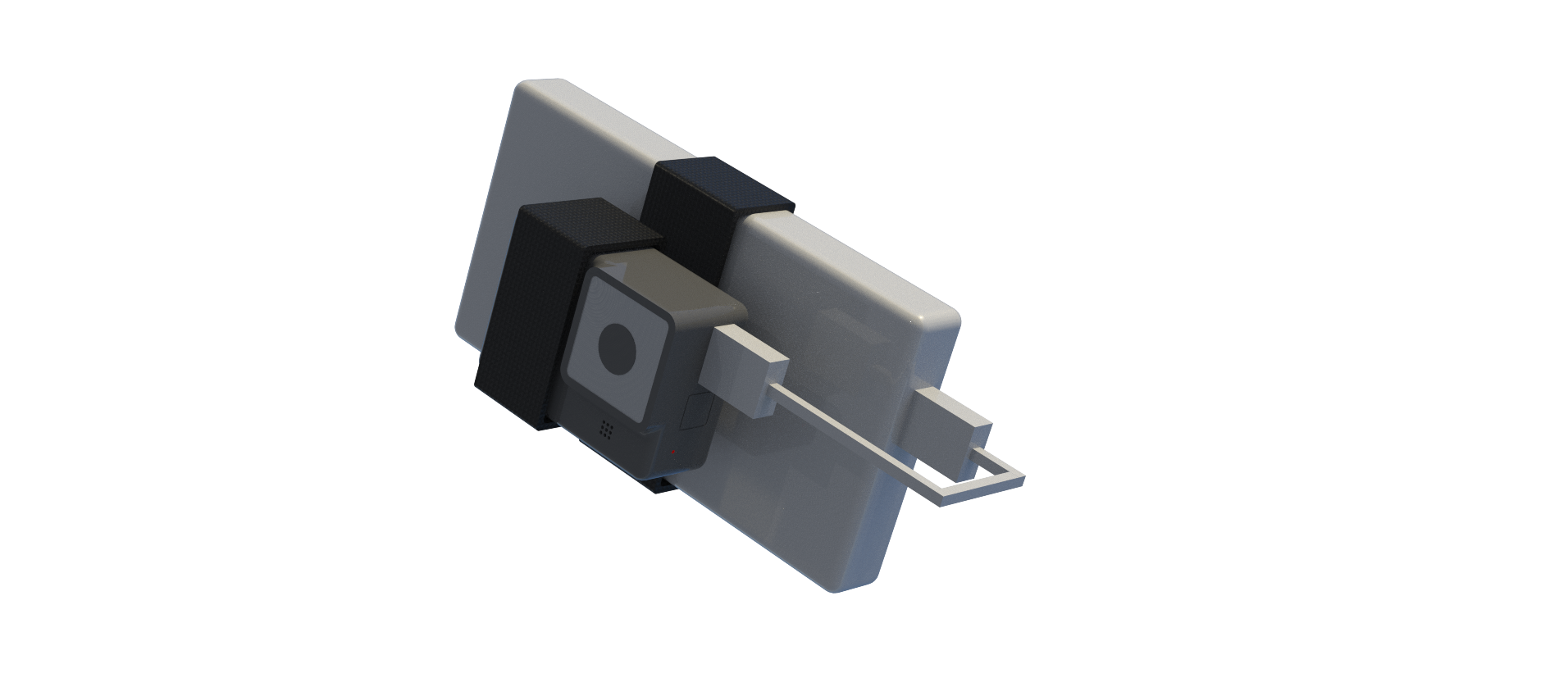

===Image Recognition Hardware===

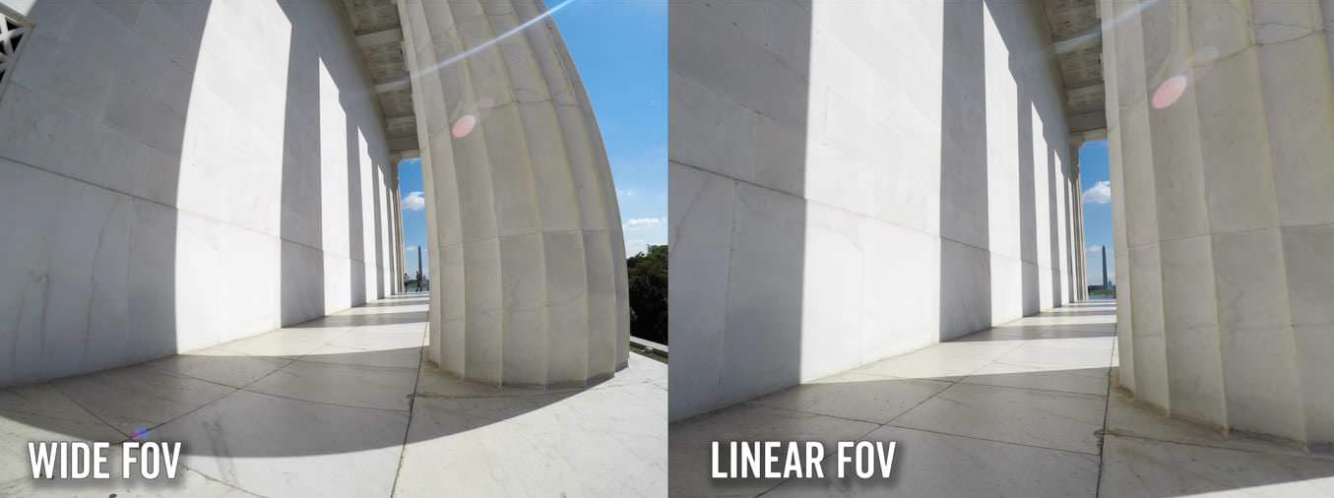

The camera that is used should be weatherproof. Also, the device should not run out of energy. Besides, it must be possible to retrieve the images from the camera so that they can be used for image recognition. Finally, the image quality should of course be high enough for the image recognition to work well. A commonly used camera is the GoPro. The GoPro Hero6, Hero7 and Hero8 can be powered externally, also with a weatherproof connection <ref name ='externalpower'>Coleman, D. (2020, April 8). Can You Run a GoPro HERO8, HERO7, or HERO6 with External Power but Without an Internal Battery? Retrieved May 22, 2020, from https://havecamerawilltravel.com/gopro/external-power-internal-battery/</ref> <ref name ='weatherproofpower'>Air Photography. (2018, April 29). Weatherproof External Power for GoPro Hero 5/6/7 | X~PWR-H5. Retrieved May 22, 2020, from https://www.youtube.com/watch?v=S6Y7a3ZtoeE</ref>. The internal battery can be left in place as a safety net in case external power cannot be provided. Without an internal battery, the camera will turn off when the external power flow stops and it will not turn back on automatically when the power source is restored. With an internal battery it will switch seamlessly when necessary. The disadvantage is of course that the internal battery can also run out of power. GoPro cameras do not offer very long battery life when shooting for a long time; however, there are ways to improve this, which will be elaborated on in the next part.

For now, the focus is on the resolution that the GoPro cameras have to offer. The newest GoPro, the GoPro Hero8 Black, takes photos in 12MP and makes video footage (including timelapses) in 4K up to 60fps. Additionally, it has improved video stabilization, called HyperSmooth 2.0, which can come in handy when there are more waves, e.g. in rougher weather <ref name='gopro8'>GoPro. (n.d.). HERO8 Black Tech Specs. Retrieved May 22, 2020, from https://gopro.com/en/nl/shop/hero8-black/tech-specs?pid=CHDHX-801-master</ref>. However, many external extensions (like additional power sources from external companies) are not compatible with the newest GoPros yet. The GoPro Hero7 Black has about the same specs when it comes to image and video quality. It also has video stabilization, but an older version, namely HyperSmooth <ref name='gopro7'>GoPro. (n.d.-a). HERO7 Black Action Camera | GoPro. Retrieved May 22, 2020, from https://gopro.com/en/nl/shop/cameras/hero7-black/CHDHX-701-master.html</ref>. More extensions are available for the GoPro Hero7 Black, so it is better to use that version.

GoPros are a compact and relatively cheap option compared to DSLR (Digital Single Lens Reflex) cameras. However, as mentioned before, battery life can be an issue. Therefore, another option could be the Cyclapse Pro, which can also come with extensions such as solar panels. It has a built-in Nikon or Canon camera, which can provide a higher image quality <ref name='cyclapsepro'>Harbortronics. (n.d.-b). Cyclapse Pro - Starter | Cyclapse. Retrieved May 22, 2020, from https://cyclapse.com/products/cyclapse-pro-starter/</ref>. The standard camera is the Canon T7, which takes 24.1MP pictures and records full HD video at 30 fps <ref name ='canon'>Canon USA. (n.d.). Canon U.S.A., Inc. | EOS Rebel T7 EF-S 18-55mm IS II Kit. Retrieved May 22, 2020, from https://www.usa.canon.com/internet/portal/us/home/products/details/cameras/eos-dslr-and-mirrorless-cameras/dslr/eos-rebel-t7-ef-s-18-55mm-is-ii-kit</ref>. The camera itself costs $700 USD (2 times more expensive than GoPros), and the costs increase quickly when additional components are bought. The complete Cyclapse Pro includes a DigiSnap Pro controller with Bluetooth to enable time-lapsing, a Cyclapse weatherproof housing and a lithium-ion battery <ref name='cyclapsepro'>Harbortronics. (n.d.). Cyclapse Pro - Standard | Cyclapse. Retrieved May 22, 2020, from https://cyclapse.com/products/cyclapse-pro-standard/</ref>. Because of this, the Cyclapse Pro module costs over $3000 USD. The module is also not as compact as a GoPro, since DSLR cameras themselves are already much larger than GoPros. Before a choice can be made between the two options, the data transfer options and additional power sources must be examined.

===Data Transfer=== | |||

A GoPro creates its own Wi-Fi signal, to which a phone can be connected using the GoPro app. The data could then be sent from the phone to a computer. Another option could be Auto Upload, which is part of GoPro Plus. For a monthly or yearly fee, the GoPro automatically uploads its footage to the cloud <ref name='autoupload'>GoPro. (2020, May 22). Auto Uploading Your Footage to the Cloud With GoPro Plus. Retrieved May 23, 2020, from https://community.gopro.com/t5/en/Auto-Uploading-Your-Footage-to-the-Cloud-With-GoPro-Plus/ta-p/388304#</ref> <ref name='autoupload2'>GoPro. (2020a, May 14). How to Add Media to GoPro PLUS. Retrieved May 23, 2020, from https://community.gopro.com/t5/en/How-to-Add-Media-to-GoPro-PLUS/ta-p/401627</ref>. However, this works together with the GoPro app, which requires a mobile device, whereas the image recognition itself will run on a computer. Also, when auto uploading to the cloud, the images and videos are not deleted from the storage within the GoPro. Deleting them would be necessary for operation of the device, since otherwise the GoPro storage fills up quickly. Besides, it is not completely clear whether Auto Upload requires the GoPro and the mobile device to be connected to the same Wi-Fi network. Finally, to auto upload, the GoPro must be connected to a power source and be charged to at least 70[%], and it may not always be possible to keep the battery above this 70[%].

A solution could be to equip the GoPro with the FlashAir™ W-04 wireless SD card. This SD card can store up to 64 GB of data. It can be accessed with a phone or laptop, after which the pictures have to be saved manually. The pictures can then be used for the image recognition. A normal SD card could also be used, but this requires that the SD card is swapped manually at certain times.

The Cyclapse Pro also offers Wi-Fi options for transferring data <ref name='cyclapsefaq'>Harbortronics. (n.d.-d). Support / DigiSnap Pro / Frequently Asked Questions | Cyclapse. Retrieved May 23, 2020, from https://cyclapse.com/support/digisnap-pro/frequently-asked-questions-faq/</ref>. The DigiSnap Pro within the Cyclapse Pro can transfer images from the camera to an FTP (File Transfer Protocol) server on the local network or the internet. The most popular transfer methods are FTP image transfer via a USB cellular modem and local USB download. The DigiSnap Pro also comes with an Android app, in which every image taken by the camera can be configured to transfer automatically to a specified FTP folder on the internet using a USB cellular modem.
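If the images end up on an FTP server as described above, the computer that runs the image recognition still has to fetch them. The following is a minimal sketch of how that could look with Python's standard `ftplib`. The function name `download_new_images`, the remote folder, the host name and the login details are all hypothetical and are not taken from the DigiSnap Pro documentation.

```python
from ftplib import FTP
from pathlib import Path


def download_new_images(ftp, remote_dir, local_dir, suffixes=(".jpg", ".jpeg")):
    """Download image files from remote_dir that are not yet present locally.

    `ftp` is an already logged-in ftplib.FTP connection (or any object that
    offers the same cwd/nlst/retrbinary methods).
    Returns the list of file names that were fetched.
    """
    local = Path(local_dir)
    local.mkdir(parents=True, exist_ok=True)
    ftp.cwd(remote_dir)

    fetched = []
    for name in sorted(ftp.nlst()):
        target = local / name
        # Skip non-image files and files that were downloaded earlier.
        if not name.lower().endswith(suffixes) or target.exists():
            continue
        with open(target, "wb") as fh:
            ftp.retrbinary(f"RETR {name}", fh.write)
        fetched.append(name)
    return fetched


# Example usage (hypothetical host, credentials and folder names):
# with FTP("ftp.example.org") as ftp:
#     ftp.login("user", "password")
#     new_files = download_new_images(ftp, "/camera", "incoming_images")
```

Skipping files that already exist locally means the function can be called repeatedly, for example once per hour, without downloading the same image twice.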

===Power Source=== | |||

[[File:SolarX.png|200px|Image: 200 pixels|right|thumb|GoPro with SolarX extension<ref name ='CamdoSolar'>CamDo. (n.d.-b). SolarX Solar Upgrade Kit. Retrieved May 22, 2020, from https://cam-do.com/products/solarx-gopro-solar-system</ref>.]] | |||

[[File:Cyclapse.jpg|200px|Image: 200 pixels|right|thumb|Cyclapse with solar panel extension<ref name='cyclapsesolar'> Harbortronics. (n.d.-a). Cyclapse Pro - Standard | Cyclapse. Retrieved May 22, 2020, from https://cyclapse.com/products/cyclapse-pro-standard/</ref>.]] | |||

CamDo offers an add-on for the GoPro Hero3 to Hero7 called SolarX, which is a weatherproof solar panel module <ref name ='CamdoSolar'>CamDo. (n.d.-b). SolarX Solar Upgrade Kit. Retrieved May 22, 2020, from https://cam-do.com/products/solarx-gopro-solar-system</ref>. This enables long-term operation of GoPro cameras for time lapse photography. It includes a 9[W] solar panel, which can be upgraded to 18[W] for use in cloudy or rainy areas. The solar panel charges the included V50 lithium polymer battery, which outputs 5[V] to power the camera and can also power other accessories within the weatherproof enclosure. The solar panel can be attached directly to the casing or be placed separately for optimal usage. The complete module adds significant size to the GoPro, but within the casing there is extra space for additional accessories. Whether the camera can run indefinitely on the solar panel alone depends on the weather and the camera settings. CamDo made a calculator to determine battery life and the best setup <ref name ='calculator'>https://cam-do.com/pages/photography-time-lapse-calculator?_ga=2.4808368.207575993.1590147015-1651516203.1590147015</ref>. This calculator will be used in the next section to determine whether the solar panel provides enough power to the camera if it has to record 24/7.

The Cyclapse Pro also offers a solar panel extension <ref name='cyclapsesolar'> Harbortronics. (n.d.-a). Cyclapse Pro - Standard | Cyclapse. Retrieved May 22, 2020, from https://cyclapse.com/products/cyclapse-pro-standard/</ref>. Without a solar panel, a full battery lasts for around 3000 images <ref name='cyclapsefaq'>Harbortronics. (n.d.-d). Support / DigiSnap Pro / Frequently Asked Questions | Cyclapse. Retrieved May 23, 2020, from https://cyclapse.com/support/digisnap-pro/frequently-asked-questions-faq/</ref>. The 20[W] solar panel keeps the battery charged. A second battery pack can be included to increase the time the system can operate without charging (e.g. under cloudy skies) <ref name='cyclapsebat'>Harbortronics. (n.d.-a). Cyclapse Pro - Glacier | Cyclapse. Retrieved May 22, 2020, from https://cyclapse.com/products/cyclapse-pro-glacier/</ref>. It uses a controller, the DigiSnap Pro, which reduces battery usage and offers programming options <ref name='cyclapsepro'>Harbortronics. (n.d.-b). Cyclapse Pro - Starter | Cyclapse. Retrieved May 22, 2020, from https://cyclapse.com/products/cyclapse-pro-starter/</ref>. Total costs (dependent on the specific add-ons) are around $4000 USD, which is significantly higher than for the GoPro.

Since the Cyclapse Pro module is much more expensive and similar performance is expected, the GoPro Hero7 Black is chosen. Power calculations will now be done with the previously mentioned CamDo calculator to determine whether the solar panel suffices as a power source <ref name ='calculator'></ref>.

===Data Storage and Energy Consumption=== | |||

To be able to draw a conclusion on the power source, the data storage and energy consumption of the camera should be known. To calculate these, the time lapse and solar power calculator from CamDo <ref name ='calculator'></ref> is used. This gives a rough estimate of the memory and energy needed. The estimates of the calculator will be taken with a margin, to be sure the setup will work in real life.

The image recognition needs a video as input, and the images have to be taken one second apart. The frame rate of the video does not matter, since the image recognition observes every single frame, but the GoPro automatically converts time lapse videos to 30 fps <ref name=TimeLapseSettings>Time lapse settings. Retrieved June 05, 2020, from https://www.youtube.com/watch?v=-9htjymU5d8 </ref>. When the camera shoots 24 hours a day with a time interval of 1 second between photos, the result is a 48 minute video with 86400 frames.
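The 48 minute figure follows directly from the shooting interval and the playback frame rate. As a small sketch of that arithmetic (the function name `timelapse_video_minutes` is only illustrative):

```python
def timelapse_video_minutes(interval_s, playback_fps, hours=24.0):
    """Return (number of frames, video length in minutes) for a time lapse.

    interval_s   -- seconds between two consecutive photos
    playback_fps -- frame rate of the resulting video
    hours        -- how long the camera keeps shooting
    """
    frames = int(hours * 3600 / interval_s)
    minutes = frames / playback_fps / 60
    return frames, minutes


frames, minutes = timelapse_video_minutes(interval_s=1.0, playback_fps=30.0)
print(frames, minutes)  # 86400 48.0
```

A longer interval shortens the video proportionally: with 2 seconds between photos, the same day yields 43200 frames and a 24 minute video.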

First, the data storage. The data will be stored on a normal SD card. A Wi-Fi SD card has been considered, but there is too much data to transfer wirelessly, so it is easier to use two SD cards and swap them manually. To choose an SD card, it should be known how many minutes of video can be saved on the card. The following table is obtained with numbers from the CamDo calculator <ref name ='calculator'></ref>.

{| border=1 style="border-collapse: collapse;" cellpadding = 2 | |||

! style="background: #BFBFBF;" colspan="1"| '''SD card size [GB]''' | |||

! style="background: #BFBFBF;" colspan="1"| '''Number of minutes that can be saved''' | |||

|- | |||

| 4 | |||

| 9 minutes | |||

|- | |||

| 8 | |||

| 18 minutes | |||

|- | |||

| 16 | |||

| 37 minutes | |||

|- | |||

| 32 | |||

| 74 minutes | |||

|- | |||

| 64 | |||

| 148 minutes | |||

|- | |||

| 128 | |||

| 297 minutes | |||

|- | |||

| 200 | |||

| 464 minutes | |||

|- | |||

| 256 | |||

| 594 minutes | |||

|} | |||
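To relate the table to the 48 minutes of video produced per day, the following sketch converts the card capacities into days of footage and picks the smallest card for a given swap interval. The table values are copied from above. The helper names are hypothetical, and it is assumed that the card is used for the time lapse video only.

```python
# Minutes of video per SD card size in GB, copied from the table above.
CARD_MINUTES = {4: 9, 8: 18, 16: 37, 32: 74, 64: 148, 128: 297, 200: 464, 256: 594}

# One day of shooting at a 1 second interval gives a 48 minute video at 30 fps.
DAILY_VIDEO_MINUTES = 48


def days_of_footage(card_gb):
    """Number of days of time lapse video that fit on a card of this size."""
    return CARD_MINUTES[card_gb] / DAILY_VIDEO_MINUTES


def smallest_card_for(days):
    """Smallest card size in GB that holds `days` of footage, or None."""
    needed = days * DAILY_VIDEO_MINUTES
    for gb, minutes in sorted(CARD_MINUTES.items()):
        if minutes >= needed:
            return gb
    return None


for gb in sorted(CARD_MINUTES):
    print(f"{gb:>3} GB: {days_of_footage(gb):.2f} days")
```

Under these assumptions a 32 GB card is the smallest that holds a full day, and a 64 GB card holds about three days.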

The eventual choice of SD card also depends on the energy source, because the energy source might also have to be switched after a certain period of time. It is most efficient if the SD card and the power source are switched at the same time.

The approximation of energy needed is 40.80[Wh]. The internal battery delivers 4.7[Wh], meaning the GoPro needs external energy. The two options considered are a solar panel that powers an external battery or using only an external battery that will have to be switched manually. | |||
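The gap between the two numbers can be made explicit with a short calculation. This sketch assumes that the 40.80[Wh] estimate covers one full day (24 hours) of shooting and that the power draw is constant over the day. Neither assumption is stated by the calculator output quoted here.

```python
DAILY_ENERGY_WH = 40.80      # estimated energy need (assumed to be per 24 hours)
INTERNAL_BATTERY_WH = 4.7    # capacity of the internal GoPro battery


def runtime_hours(capacity_wh, daily_wh=DAILY_ENERGY_WH):
    """Hours a battery of the given capacity lasts at a constant power draw."""
    return capacity_wh / daily_wh * 24


internal_hours = runtime_hours(INTERNAL_BATTERY_WH)
external_wh_per_day = DAILY_ENERGY_WH - INTERNAL_BATTERY_WH

print(f"Internal battery lasts about {internal_hours:.1f} hours")
print(f"External energy needed per day: {external_wh_per_day:.1f} Wh")
```

With these numbers the internal battery covers less than three hours, so roughly 36[Wh] per day has to come from an external source.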

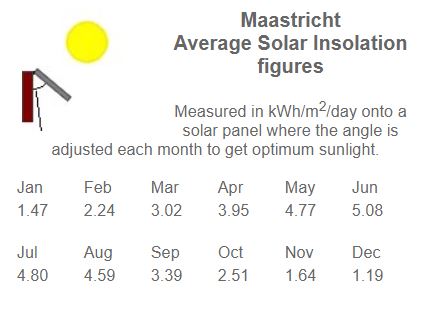

In the CamDo calculator, the solar irradiance can be filled in. This is combined with the solar panel of choice to calculate the delivered energy. The solar irradiance can be found in the Solar Electricity Handbook <ref name=Irradiance>Solar Electricity Handbook. Retrieved June 05, 2020, from http://www.solarelectricityhandbook.com/solar-irradiance.html </ref>. The city used to obtain the solar irradiance was Maastricht, since that is a city close to the Maas. The following values are obtained in the ideal situation, meaning that the solar panel always faces the sun.

[[File:SolarIrradianceMaastricht.JPG|400px|Image: 800 pixels|center|thumb|Solar irradiance in Maastricht in ideal situation.]] | |||

The 9[W] solar panel delivers 36[Wh] of energy on an average day in June, the month with the highest solar irradiance. Since this is below the estimated 40.80[Wh] that is needed, more than one solar panel would be required to charge the external battery even in June. In December, the solar irradiance has an average of 1.19[kWh/m^2/day] in the ideal situation. Two solar panels then deliver a combined energy of 17[Wh], which is not enough to power the GoPro continuously when it has to take a picture every second. In addition, since the SD card has to be switched manually anyway, using only external batteries is the better option, as this is much cheaper; see the table below.
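As a rough illustration of the numbers above, the sketch below estimates how many 9[W] panels would be needed in each month. It assumes that the delivered energy scales linearly with the number of panels and ignores charging and conversion losses, so the real number would be at least this high. The function name `panels_needed` is hypothetical.

```python
import math

DAILY_ENERGY_WH = 40.80          # estimated energy need of the GoPro
JUNE_WH_PER_PANEL = 36.0         # one 9 W panel, average day in June
DECEMBER_WH_TWO_PANELS = 17.0    # two 9 W panels, average day in December


def panels_needed(daily_need_wh, wh_per_panel):
    """Smallest whole number of panels that covers the daily energy need."""
    return math.ceil(daily_need_wh / wh_per_panel)


june_panels = panels_needed(DAILY_ENERGY_WH, JUNE_WH_PER_PANEL)
december_panels = panels_needed(DAILY_ENERGY_WH, DECEMBER_WH_TWO_PANELS / 2)

print(f"June: {june_panels} panels, December: {december_panels} panels")
# June: 2 panels, December: 5 panels
```

Even under these optimistic assumptions, five panels would be needed in December, which supports the choice for external batteries.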

{| border=1 style="border-collapse: collapse;" cellpadding = 2 | |||

! style="background: #BFBFBF;" colspan="1"| '''Item''' | |||

! style="background: #BFBFBF;" colspan="1"| '''Price''' | |||

|- | |||

| 2x 9[W] solar panel + 44[Wh] external battery<ref name ='CamdoSolar'>CamDo. (n.d.-b). SolarX Solar Upgrade Kit. Retrieved May 22, 2020, from https://cam-do.com/products/solarx-gopro-solar-system</ref> | |||

| €1761,08 | |||

|- | |||

| 2x Anker Astro E7 external battery<ref name=AnkerAstroE7>Anker Astro E7 26800 mAh external battery. Retrieved June 05, 2020, from https://chargewithpower.com/product/buy-anker-astro-e7-26800mah-ultra-high-capacity-3-port-4a-compact-portable-charger-external-battery-power-bank-with-poweriq-technology-for-iphone-ipad-nintendo-switch-and-more-online/ </ref> (96.48Wh) | |||

| €95,77 | |||

|} | |||

The Anker Astro E7 external battery is chosen because it is compatible with the GoPro Hero7 Black and has a high capacity (26800[mAh]). Since a person already has to switch the SD card manually, swapping the battery at the same time adds little effort, and this option is much cheaper than the solar panel setup.
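The figures from the two tables can be combined into a rough swap schedule and cost comparison. This sketch assumes that 96.48[Wh] is the capacity of a single battery, that the 40.80[Wh] estimate is per day, that there are no conversion losses, and that a 64 GB SD card is used. The card size is only an example, since no card size is fixed in the text.

```python
import math

SOLAR_SETUP_EUR = 1761.08      # 2x 9 W solar panel + 44 Wh external battery
BATTERY_SETUP_EUR = 95.77      # 2x Anker Astro E7
BATTERY_WH = 96.48             # assumed capacity of ONE Anker Astro E7
DAILY_ENERGY_WH = 40.80        # estimated energy need (assumed per day)
CARD_MINUTES = 148             # 64 GB card, from the SD card table (example)
DAILY_VIDEO_MINUTES = 48       # video produced per day

battery_days = BATTERY_WH / DAILY_ENERGY_WH
card_days = CARD_MINUTES / DAILY_VIDEO_MINUTES

# Swap battery and SD card together, before either one runs out.
swap_interval_days = math.floor(min(battery_days, card_days))
cost_ratio = SOLAR_SETUP_EUR / BATTERY_SETUP_EUR

print(f"Battery lasts {battery_days:.2f} days, card lasts {card_days:.2f} days")
print(f"Swap both every {swap_interval_days} days")
print(f"Solar setup costs about {cost_ratio:.1f} times as much")
```

Under these assumptions the battery is the limiting factor, and swapping both the battery and the SD card every two days leaves some margin on each.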