Embedded Motion Control 2018 Group 8: Difference between revisions


| Line 54: | Line 54: | ||

* Find and exit the door of a room | * Find and exit the door of a room | ||

* Fulfill the task within 5 minutes (EC) | * Fulfill the task within 5 minutes (EC) | ||

* Fulfill the task within 10 minutes (HC) | |||

* Do not stand still for more than 30 seconds | * Do not stand still for more than 30 seconds | ||

* Fulfill the task autonomously | * Fulfill the task autonomously | ||

| Line 113: | Line 114: | ||

=== Specifications === | === Specifications === | ||

The following numbers are the software and hardware capabilities of PICO. | |||

*Maximum translational speed of 0.5 m/s | |||

*Maximum rotational speed of 1.2 rad/s | |||

*Translational distance range of 0.01 to 10 meters | |||

*Orientation angle range of -2 to 2 radians (approximately 229 degrees in total) | |||

*Angular resolution of 0.004004 radians | |||

*Scan time of 33 milliseconds | |||

=== Interfaces === | === Interfaces === | ||

[[File:EMC_Interfaces.png|thumbnail|right|500px| EMC Interfaces]] | |||

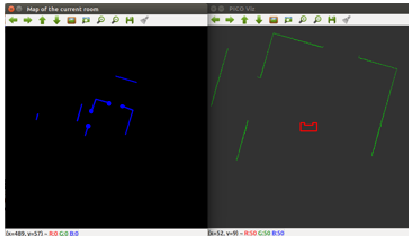

Different parts of the software have to communicate with each other in order to exchange information. To keep this communication structured, all information is stored in the worldmodel, which all functions can access. In this way the functions are not linked to each other and the information is stored in one central place. This structure is shown in the EMC Interfaces figure. Some information in the worldmodel is stored within classes and has to be accessed via so-called get and set functions. This is done to make sure that information is not changed accidentally. The perception and driving parts are the only parts that actually communicate with the robot. Perception reads the sensor data and processes it to transform the raw data into objects like nodes, corners and endpoints of walls. These are then stored in the worldmodel. Driving makes the robot move to the required setpoint that the planning algorithm stored in the worldmodel. | |||
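The get and set access described above could look roughly like the following. This is a minimal sketch and not our actual class: the names WorldModel, Node, setNodes, getNodes, setSetpoint and getSetpoint are assumptions made for illustration.

```cpp
#include <vector>

// Hypothetical node type; the field names are assumptions.
struct Node {
    double x;
    double y;
};

// Central store that every module reads from and writes to.
class WorldModel {
public:
    // Perception writes the detected nodes.
    void setNodes(const std::vector<Node>& nodes) { nodes_ = nodes; }
    // Readers get a const reference, so they cannot change the data by accident.
    const std::vector<Node>& getNodes() const { return nodes_; }

    // Planning writes the setpoint; driving reads it.
    void setSetpoint(const Node& setpoint) { setpoint_ = setpoint; }
    Node getSetpoint() const { return setpoint_; }

private:
    std::vector<Node> nodes_;
    Node setpoint_{0.0, 0.0};
};
```

Because the members are private, perception, planning and driving only share data through these functions and never call each other directly.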

=== Task Skill Motion === | |||

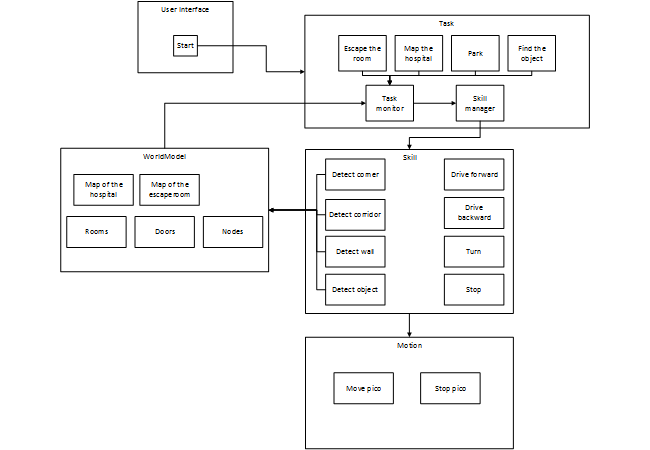

In this section a brief overview is given of the task-skill-motion framework that will be used to structure the software. The task-skill-motion diagram is shown in the figure. The diagram consists of 5 blocks and the interconnections between them. There are also sub-blocks which represent functions of the system. The skill block, for example, contains the functions that detect the features of the room, but also the skill necessary to drive PICO. The diagram should be read as follows: | |||

The system is started via the user interface that is connected to the task block. This block contains the tasks that PICO has to fulfill; one of the 4 tasks is selected at the start of the program. Together with the selected task, the worldmodel information is fed to the task monitor, which monitors whether the task is still being executed. The task monitor can decide whether certain skills should be activated to achieve the task. The skill manager then sets the skills to active or inactive. The motion block contains the low-level actuation that moves PICO around. The connections between the main blocks and the sub-blocks are the interfaces between the software components. | |||

[[File:TaskSkillMotion.png]] | |||

== Escape Room Competition == | == Escape Room Competition == | ||

| Line 129: | Line 136: | ||

=== World Model === | === World Model === | ||

The data stored in the World Model is small and consists of only three | The data stored in the World Model is small and consists of only three Booleans. These are left, right and front, and each gets the value TRUE or FALSE depending on whether an obstacle is near that side of the robot. The value of each Boolean is refreshed with a frequency of FREQ, deleting all the old data so that no history is stored. | ||

=== Perception === | === Perception === | ||

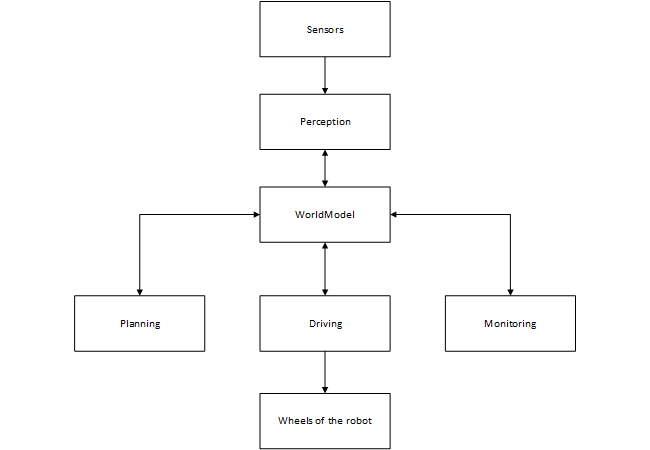

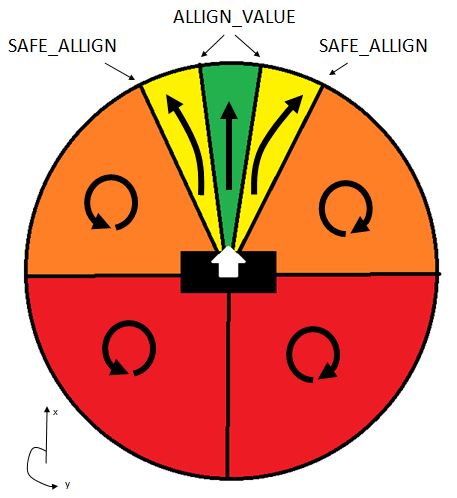

For the perception of the world for PICO, a simple space recognition code was used. In this code, the LRF data is split into three beam ranges. A left beam range, a front beam range and a right beam range. Around PICO, two circles are plotted. One is the ROBOT_SIZE circle which has a radius so that PICO is just encircled by the circle. The other circle is the MIN_DISTANCE_WALL circle which has a bigger radius than the ROBOT_SIZE circle. For each of the three laser beam ranges, the distance to an obstacle is checked for each beam, and the lowest distance which is larger than the radius of the ROBOT_SIZE circle is saved and checked if it is smaller than the radius of the MIN_DISTANCE_WALL circle. If that is true, the value TRUE is given to the corresponding laser beam range, else the value FALSE is given to that laser beam range. This scan is done with a frequency of FREQ, and by each scan, the previous values are deleted. | For the perception of the world for PICO, a simple space recognition code was used. In this code, the LRF data is split into three beam ranges. A left beam range, a front beam range and a right beam range. Around PICO, two circles are plotted. One is the ROBOT_SIZE circle which has a radius so that PICO is just encircled by the circle. The other circle is the MIN_DISTANCE_WALL circle which has a bigger radius than the ROBOT_SIZE circle. For each of the three laser beam ranges, the distance to an obstacle is checked for each beam, and the lowest distance which is larger than the radius of the ROBOT_SIZE circle is saved and checked if it is smaller than the radius of the MIN_DISTANCE_WALL circle. If that is true, the value TRUE is given to the corresponding laser beam range, else the value FALSE is given to that laser beam range. This scan is done with a frequency of FREQ, and by each scan, the previous values are deleted. | ||

[[Image:detectiongroup8.png| | [[Image:detectiongroup8.png|frame|right| Perception of PICO during the Escape Room Competition]] | ||
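The check per beam range can be sketched as follows. This is an illustration only, under two assumptions that are not stated above: the scan is split into three equal parts, and the beams are ordered from right to left. The names obstacleInRange, perceive and Surroundings are hypothetical; the two radii are the values listed under "The values".

```cpp
#include <algorithm>
#include <cstddef>
#include <limits>
#include <vector>

const double ROBOT_SIZE = 0.2;          // m, radius of the circle around PICO
const double MIN_DISTANCE_WALL = 0.25;  // m, radius of the detection circle

// True when the closest beam in [first, last) that lies outside the
// ROBOT_SIZE circle is inside the MIN_DISTANCE_WALL circle.
bool obstacleInRange(const std::vector<double>& ranges,
                     std::size_t first, std::size_t last) {
    double closest = std::numeric_limits<double>::infinity();
    for (std::size_t i = first; i < last && i < ranges.size(); ++i) {
        // Readings inside the ROBOT_SIZE circle are PICO itself; skip them.
        if (ranges[i] > ROBOT_SIZE) {
            closest = std::min(closest, ranges[i]);
        }
    }
    return closest < MIN_DISTANCE_WALL;
}

struct Surroundings {
    bool right;
    bool front;
    bool left;
};

// Assumption: equal thirds, beams ordered from right to left.
Surroundings perceive(const std::vector<double>& ranges) {
    const std::size_t n = ranges.size();
    return Surroundings{obstacleInRange(ranges, 0, n / 3),
                        obstacleInRange(ranges, n / 3, 2 * n / 3),
                        obstacleInRange(ranges, 2 * n / 3, n)};
}
```

Calling perceive on every new scan and overwriting the previous result reproduces the "no history" behaviour of the world model.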

=== Monitoring === | === Monitoring === | ||

| Line 144: | Line 151: | ||

* Corner: The Right and Front sensor has value TRUE | * Corner: The Right and Front sensor has value TRUE | ||

The monitored data is only stored for one moment and the old data is deleted and refreshed with each new | The monitored data is only stored for one moment and the old data is deleted and refreshed with each new data set. | ||

=== Planning === | === Planning === | ||

For the planning, a simple wall following algorithm is used. | For the planning, a simple wall following algorithm is used. Our design choice was to follow the right wall. As initial state it is assumed that PICO sees nothing. PICO will then check whether there is actually nothing around it by searching for an obstacle. If no obstacle is detected near its starting position, PICO will hypothesize that there is an obstacle in front of it and will drive forward until it has validated this hypothesis by seeing the obstacle. This hypothesis will always come true at some moment in time due to the restrictions on the world PICO is put into, except if PICO is already aligned with the exit. In that case PICO will fulfill its task immediately by driving straight forward. | ||

As soon as an obstacle is detected, PICO will assume that this obstacle is a wall | As soon as an obstacle is detected, PICO will assume that this obstacle is a wall. It will then rotate counter-clockwise until its monitoring function confirms that this obstacle is a wall, in other words until only the right sensor returns the value TRUE. Because the world only consists of walls and corners, and corners are determined by walls, this hypothesis will again always come true when rotating. As soon as only a wall is detected, PICO will drive forward to keep the discovered wall on its right. | ||

While following the wall, it | While following the wall, it is possible that PICO detects a corner if the front sensor goes off. This can happen if PICO is actually in a corner, or if PICO is not well aligned with the wall (the front sensor sees the wall that PICO is following). In both cases, PICO will rotate counter-clockwise until it only sees one wall. If PICO was badly aligned, it will then be better aligned with the wall it was following. If PICO is actually in a corner, it will align with and follow the other wall that makes up the corner instead of the wall it was initially following. | ||

Another possibility that can happen | Another possibility is that PICO loses the wall it is following. This can again happen if PICO is not well aligned with the wall or if there is an opening in the wall (i.e., a door entry). In both cases, PICO rotates clockwise until it sees a wall or a corner and then starts following it again as usual. | ||
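The decision rules of the wall follower described above can be summarized in one function. This is a hedged sketch, not our exact code: the function name plan, the Surroundings struct and the wallFound flag (used to tell "nothing seen yet" apart from "wall lost") are assumptions. The three drive states are the ones named in the Driving section.

```cpp
enum class DriveState { drive_forward, rotate_clockwise, rotate_counterclockwise };

struct Surroundings {
    bool right;
    bool front;
    bool left;
};

// wallFound remembers whether PICO has already been aligned with a wall once.
DriveState plan(const Surroundings& s, bool& wallFound) {
    const bool nothing = !s.right && !s.front && !s.left;
    const bool wallOnly = s.right && !s.front && !s.left;

    if (wallOnly) {
        // Only the right sensor is TRUE: follow the wall.
        wallFound = true;
        return DriveState::drive_forward;
    }
    if (nothing) {
        // Before the first wall: drive forward to find an obstacle.
        // Afterwards: the wall was lost, so rotate clockwise to find it again.
        return wallFound ? DriveState::rotate_clockwise
                         : DriveState::drive_forward;
    }
    // Corner or any other obstacle: rotate until only the right sensor is TRUE.
    return DriveState::rotate_counterclockwise;
}
```

Since the sensor values are refreshed on every scan, the only state carried between calls in this sketch is the wallFound flag.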

=== Driving === | === Driving === | ||

The Driving for the escaperoom challenge is | The Driving for the escaperoom challenge is relatively simple. PICO has three drive states, which are drive_forward, rotate_clockwise and rotate_counterclockwise. Depending on the monitoring, the planning selects which drive state PICO needs to execute, as already discussed in the planning part. | ||

=== The values === | === The values === | ||

The main values that are given in the script are listed below with an explanation on how they were determined: | |||

*ROBOT_SIZE | |||

**The size of PICO is measured and ROBOT_SIZE is set to be just big enough to make a circle with radius ROBOT_SIZE around PICO without including PICO. The ROBOT_SIZE that is measured is 0.2 m. | |||

*MIN_DISTANCE_WALL | |||

**As PICO must be able to pass between two walls that are 0.5 m apart, the MIN_DISTANCE_WALL value has to be smaller than that distance. More specifically, it has to be smaller than 0.3 m, as the distance is measured from the center of PICO and PICO has a radius of 0.2 m. Furthermore, it has to be bigger than 0.2 m as this is the size of PICO. As PICO must react directly to its surroundings, this value is set to 0.25 m to give PICO room to act on its detection. | |||

*FREQ | |||

**The frequency at which the scan takes place must be high enough to detect changes in the surroundings while PICO is moving. Furthermore, it determines the precision of the alignment. As scanning takes a lot of computation power, this value is set to 20 Hz, which is high enough to detect everything but does not ask too much computation power while PICO is following the wall and no big changes in the surroundings are measured. | |||

*FORWARD_SPEED | |||

**As PICO must act on its surroundings, and the MIN_DISTANCE_WALL value only has a margin of 0.05 m, PICO has to stop quickly. To ensure that PICO will stop in time, experiments must be done to determine the speed of PICO in which PICO is able to stop on time before the wall. | |||

*ROTATE_SPEED | |||

**As PICO tries to align with the wall, it will move with this velocity while rotating. This value is determined experimentally for the given frequency to ensure that PICO does not over-rotate too much between two consecutive scans. | |||

=== The results === | === The results === | ||

[[File:simulator.gif|frame|left| A simulation of the Escaperoom Challenge with our code]] | |||

[[File:challenge.gif|frame|right| A video of our results of the Escaperoom Challenge]] | |||

Our wall follower worked smoothly in the simulator, as can be seen in the simulation gif, and during the testing sessions. However, due to our restrictions on the FORWARD_SPEED and the ROTATE_SPEED, PICO moved slowly towards the finish, but eventually got there, as can be seen in the video of the Escaperoom Challenge. However, as we were excited that PICO moved through the hallway as one of only three groups that made it to that point, we stopped filming. Unfortunately, just before the finish, PICO detected the other wall of the corner while aligning with the wall, monitored it as a corner, and so started to follow the other wall back into the room. As we tested our code with a slightly wider hallway and in the simulator as shown in the gif, alignment errors due to the delayed response of PICO did not occur while testing. | |||

A fault due to bad alignment also happened while PICO was following the room's wall. As PICO was badly aligned with the wall, it eventually lost the wall, making a nice pirouette before following the wall again. | |||

Although PICO was not fast and did not get past the finish, we were proud of our results as we were one of the few groups without coding experience beforehand that made it to the hallway. | |||

=== Lessons learned === | === Lessons learned === | ||

As we learned from the Escaperoom Competition, it is important to give PICO a task that it can fulfill for a longer time so that the speed can be increased. Furthermore, if the setpoint of PICO had been set at the end of the hallway and the alignment with the wall had been turned off once PICO had entered the hallway, PICO would have made it to the end. However, a check on unexpected events should still be present to make only small adjustments. This would also have prevented PICO from making its pirouette while following the wall, as it would have corrected its motion to fulfill its task instead of acting directly on its sensor data. | |||

We have also learned that good monitoring is important. During the challenge, PICO thought it was at a corner while it was not, because a corner was poorly defined in the monitoring. As the planning acted correctly on a corner, PICO did what it had to do at the wrong moment due to the wrong conclusion that there was a corner. | |||

== Hospital Competition Design == | == Hospital Competition Design == | ||

| Line 189: | Line 217: | ||

====Doors==== | ====Doors==== | ||

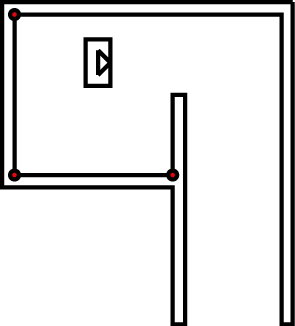

[[File:EMC_DoorDetection.png|frame|right| A door object being identified]] | |||

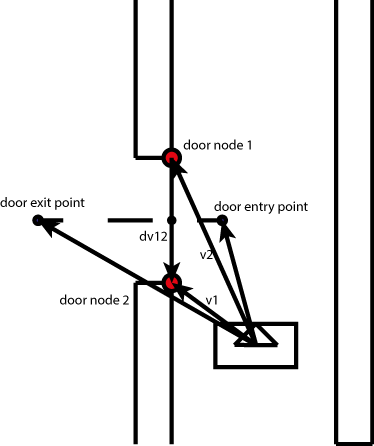

The doors contain the points | The doors contain the points to which PICO should drive to enter and exit a room. A door is created by the [[#Door_detection | monitoring module]]. These points are stored as nodes and can be called by the planning to determine the waypoints for the driving skill. They are determined geometrically from the corner nodes of which the door consists. They are placed at a certain distance from the corners to avoid collisions, as visible in the figure to the right. | ||

====Room==== | ====Room==== | ||

A room consists of a number of nodes and a list of the existing connections. The room thus contains a vector of nodes. Room nodes are updated until the robot leaves the room. The coordinates are then stored relative to the door. This is further described under monitoring. There are two types of hypothesized doors. The strongest hypothesized door is identified by at least one outward corner. The other is identified as adjacent to an open node. | A room consists of a number of nodes and a list of the existing connections. The room thus contains a vector of nodes. Room nodes are updated until the robot leaves the room. The coordinates are then stored relative to the door. The nodes are then inverted with respect to the door. This is necessary for the situation where PICO needs to pass through the same door from the other side. If, for example, it returns from another room and wants to return to the hallway, the door leading to the hallway will be flipped. In this way it is not necessary to update the landmarks in every room where PICO is not located, while a usable map is still available once PICO enters the room. This is further described under [[#Monitoring | monitoring]] and [[#Planning | planning]]. There are two types of hypothesized doors. The strongest hypothesized door is identified by at least one outward corner. The other is identified as adjacent to an open node. | ||

=== Perception === | === Perception === | ||

Firstly the data from the sensors is converted to Cartesian coordinates and stored in the LaserCoord class within Perception. From the testing it became clear that | Firstly the data from the sensors is converted to Cartesian coordinates and stored in the LaserCoord class within Perception. From the testing it became clear that PICO can also detect itself for some data points and that the data at the far ends of the range are unreliable. Therefore the Boolean attribute Valid in LaserCoord is set to false in those cases. When using the data it can be decided whether the invalid points should be excluded or included. | ||
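A minimal sketch of this conversion is given below. The LaserCoord name and its valid flag follow the description above; the function name toCartesian and the parameters selfRadius (range below which a reading is treated as PICO itself) and edgeBeams (number of beams discarded at each end of the scan) are assumptions for illustration, as the actual limits are not given here.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct LaserCoord {
    double x;
    double y;
    bool valid;
};

// Converts polar laser readings to Cartesian points in the robot frame and
// flags points that hit PICO itself or lie at the unreliable scan edges.
std::vector<LaserCoord> toCartesian(const std::vector<double>& ranges,
                                    double angleMin, double angleIncrement,
                                    double selfRadius, std::size_t edgeBeams) {
    std::vector<LaserCoord> points;
    points.reserve(ranges.size());
    for (std::size_t i = 0; i < ranges.size(); ++i) {
        const double angle = angleMin + i * angleIncrement;
        const bool atEdge = i < edgeBeams || i + edgeBeams >= ranges.size();
        const bool hitsSelf = ranges[i] < selfRadius;
        points.push_back({ranges[i] * std::cos(angle),
                          ranges[i] * std::sin(angle),
                          !atEdge && !hitsSelf});
    }
    return points;
}
```

Keeping the invalid points in the vector, instead of dropping them, preserves the beam index so that later steps can still decide per use whether to include them.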

For the mapping, the corner nodes and nodal connectivity should be determined as defined in the worldmodel. To do this, the laser range data is examined. The first action is to run a "split and merge" algorithm in which the index of a data point is stored where the distance between two points is larger than a certain threshold. The laser scans radially, therefore the further away an object is, the further the measurements lie apart in the Cartesian system. The threshold value is therefore made dependent on the polar distance. | For the mapping, the corner nodes and nodal connectivity should be determined as defined in the worldmodel. To do this, the laser range data is examined. The first action is to run a "split and merge" algorithm in which the index of a data point is stored where the distance between two points is larger than a certain threshold. The laser scans radially, therefore the further away an object is, the further the measurements lie apart in the Cartesian system. The threshold value is therefore made dependent on the polar distance. | ||
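The split step with a range-dependent threshold can be sketched as follows. This only illustrates the idea: the linear form of the threshold (baseThreshold + gain * range) and the names findSplits, baseThreshold and gain are assumptions, not the values or the exact rule used in our code.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Point {
    double x;
    double y;
};

// Returns every index i where the gap between point i-1 and point i exceeds
// a threshold that grows with the polar distance of point i.
std::vector<std::size_t> findSplits(const std::vector<Point>& pts,
                                    double baseThreshold, double gain) {
    std::vector<std::size_t> splits;
    for (std::size_t i = 1; i < pts.size(); ++i) {
        const double gap = std::hypot(pts[i].x - pts[i - 1].x,
                                      pts[i].y - pts[i - 1].y);
        const double range = std::hypot(pts[i].x, pts[i].y);
        if (gap > baseThreshold + gain * range) {
            splits.push_back(i);
        }
    }
    return splits;
}
```

Each returned index marks the start of a new segment, so consecutive indices delimit the point sets on which the later line fitting can work.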

| Line 223: | Line 251: | ||

=== Monitoring === | === Monitoring === | ||

The monitoring stage of the software architecture is used to check if it is possible to fit objects to the data of the perception block. For instance by combining four corners into a room. The room is then marked as an object and stored in the memory of the robot. The same is done for doors. This way it is easier for the robot to return to a certain room instead of exploring the whole hospital again. Monitoring is thus responsible for the creation of new room instances, setting of the room attribute explored to 'True' and maintaining the hypotheses of reality. Also the monitoring skill will send out triggers for the planning block if a room is fully explored. It also keeps track of which doors are already passed and which door leads to a new room. | The monitoring stage of the software architecture is used to check if it is possible to fit objects to the data of the perception block. For instance by combining four corners into a room. The room is then marked as an object and stored in the memory of the robot. The same is done for doors. This way it is easier for the robot to return to a certain room instead of exploring the whole hospital again. Monitoring is thus responsible for the creation of new room instances, setting of the room attribute explored to 'True' and maintaining the hypotheses of reality. Also the monitoring skill will send out triggers for the planning block if a room is fully explored. It also keeps track of which doors are already passed and which door leads to a new room. | ||

| Line 231: | Line 260: | ||

* Hypotheses | * Hypotheses | ||

====Door detection==== | |||

The door detection function uses the information that is stored in the world model to make a door object of two nodes. The function checks for several properties that two nodes have to have to be qualified to be a door. | |||


Node properties: | Node properties: | ||

| Line 239: | Line 267: | ||

* Distance between them is 0.5 to 1.5m. | * Distance between them is 0.5 to 1.5m. | ||

* The two nodes are not connected by a wall. | * The two nodes are not connected by a wall. | ||

The door is defined by two outward facing corners to the robot, but this is actually not necessary. One of the two corners can be an open node, i.e. an unexplored node. In this way the system can recognize doors in walls that the robot did not fully explore and therefore requires less information. | The door is defined by two outward facing corners to the robot, but this is actually not necessary. One of the two corners can be an open node, i.e. an unexplored node. In this way the system can recognize doors in walls that the robot did not fully explore and therefore requires less information. | ||

When the nodes | When the nodes fulfill all the properties a new door is stored in the current room. The door detection function also determines the direction of the door relative to PICO. It checks the slope of the line between the two points. It then calculates a perpendicular line to the door and compares the two slopes. It then marks the entrypoint/exitpoint according to the calculation. This door is then also marked unexplored so the planning skill knows where to go to. An image of PICO identifying a new door can be seen right of [[#Doors| the door class description]]. | ||
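The properties listed above can be combined into a single check. The sketch below covers only the properties visible in this section (the 0.5 to 1.5 m width, no connecting wall, and outward corners where one of the two may be an open node); the names NodeType, Node and isDoorCandidate are hypothetical.

```cpp
#include <cmath>

enum class NodeType { outward_corner, inward_corner, open_node };

struct Node {
    double x;
    double y;
    NodeType type;
};

// True when two nodes satisfy the door properties listed in this section.
bool isDoorCandidate(const Node& a, const Node& b, bool connectedByWall) {
    const double width = std::hypot(a.x - b.x, a.y - b.y);
    if (width < 0.5 || width > 1.5) {
        return false;  // too narrow or too wide for a door
    }
    if (connectedByWall) {
        return false;  // a wall between the nodes rules out an opening
    }
    const bool aOut = a.type == NodeType::outward_corner;
    const bool bOut = b.type == NodeType::outward_corner;
    const bool aOpen = a.type == NodeType::open_node;
    const bool bOpen = b.type == NodeType::open_node;
    // Two outward corners, or one outward corner plus one open node.
    return (aOut && bOut) || (aOut && bOpen) || (aOpen && bOut);
}
```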

| Line 256: | Line 283: | ||

'''Hospital fully explored''' | '''Hospital fully explored''' | ||

The hospital is marked as fully explored when the hallway endpoint is reached, has no more unexplored doors and all rooms are marked explored. Also all the doors in the rooms must be marked explored. | |||

====Hypotheses:==== | |||

When mapping the room the code maintains two hypotheses of its reality. The perceived map as described in perception is assumed to be spatially accurate. However, PICO is not able to detect and perceive nodes that are behind it. Furthermore, perception happens near-continuously at 20 Hz. At that rate PICO is able to detect which newly found nodes correspond to nodes already stored in memory by checking the distance between the old and the new node. This comparison is less robust when PICO is moving, due to larger discrepancies in distance between the old and new position of a node. For this reason PICO also maintains an odometry-based hypothesis. New positions of the nodes are predicted based on the odometry signal and then compared with the perceived nodes to determine which perceived node positions correspond to those in memory. Based on this, the actual translation and rotation can be determined with three corresponding nodes using the following formula. These nodes need to be in front of PICO to ensure that they are not false positives. If fewer than 3 nodes are perceived, the perception hypothesis is assumed to be the actual room layout. | |||


<math> | <math> | ||

| Line 284: | Line 309: | ||

</math> | </math> | ||

Here T is the transformation matrix. The first matrix consists of the x and y coordinates at a previous time step, the second matrix contains the corresponding positions at the current time. The transformation matrix is then used to update all the actual room objects contained in our world model. | Here T is the transformation matrix. The first matrix consists of the x and y coordinates at a previous time step, the second matrix contains the corresponding positions at the current time. The transformation matrix is then used to update all the actual room objects contained in our world model. For the matrix inversion we used the [http://eigen.tuxfamily.org/index.php?title=Main_Page Eigen library]. | ||
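As an illustration of how such a transformation can be obtained from three corresponding nodes, the sketch below assumes homogeneous coordinates (x, y, 1) stacked as matrix columns and the convention P_current = T * P_previous, so that T = P_current * inverse(P_previous). This convention is an assumption and may differ from the formula above. To stay self-contained, the 3x3 inverse is written out by hand here instead of using Eigen; all function names are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;

struct Point {
    double x;
    double y;
};

Mat3 multiply(const Mat3& a, const Mat3& b) {
    Mat3 c{};  // zero-initialized
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                c[i][j] += a[i][k] * b[k][j];
    return c;
}

// Inverse via the adjugate; fails if the three nodes are collinear.
Mat3 inverse(const Mat3& m) {
    const double det =
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1]) -
        m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0]) +
        m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]);
    assert(std::fabs(det) > 1e-12);
    Mat3 inv{};
    inv[0][0] = (m[1][1] * m[2][2] - m[1][2] * m[2][1]) / det;
    inv[0][1] = (m[0][2] * m[2][1] - m[0][1] * m[2][2]) / det;
    inv[0][2] = (m[0][1] * m[1][2] - m[0][2] * m[1][1]) / det;
    inv[1][0] = (m[1][2] * m[2][0] - m[1][0] * m[2][2]) / det;
    inv[1][1] = (m[0][0] * m[2][2] - m[0][2] * m[2][0]) / det;
    inv[1][2] = (m[0][2] * m[1][0] - m[0][0] * m[1][2]) / det;
    inv[2][0] = (m[1][0] * m[2][1] - m[1][1] * m[2][0]) / det;
    inv[2][1] = (m[0][1] * m[2][0] - m[0][0] * m[2][1]) / det;
    inv[2][2] = (m[0][0] * m[1][1] - m[0][1] * m[1][0]) / det;
    return inv;
}

// Column j holds the homogeneous coordinates (x, y, 1) of node j.
Mat3 asColumns(const std::array<Point, 3>& p) {
    Mat3 m{};
    for (int j = 0; j < 3; ++j) {
        m[0][j] = p[j].x;
        m[1][j] = p[j].y;
        m[2][j] = 1.0;
    }
    return m;
}

// Assumed convention: P_current = T * P_previous.
Mat3 estimateTransform(const std::array<Point, 3>& previous,
                       const std::array<Point, 3>& current) {
    return multiply(asColumns(current), inverse(asColumns(previous)));
}
```

With exact correspondences the result is a rigid transformation, with the rotation in the upper-left 2x2 block and the translation in the last column. With noisy measurements the three-point solution is in general only an affine approximation.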

=== Planning === | === Planning === | ||

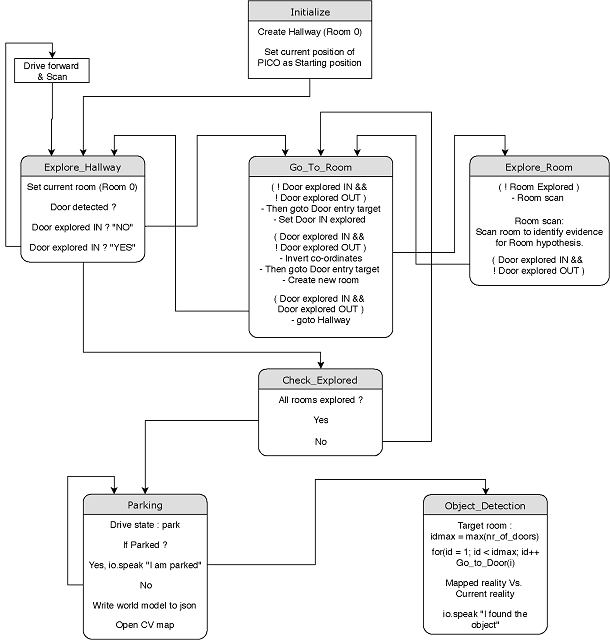

[[File: | [[File:HRC_planning_final.png|640px|right|State machine for Hospital challenge|frame]] | ||

Planning is a discrete set of "'''behavioral'''" states. Depending on the current conditions and results in world model, planning switches between appropriate states to complete the Hospital room challenge. Initially, planning is done in geometric level to | Planning is a discrete set of "'''behavioral'''" states. Depending on the current conditions and results in the world model, planning switches between the appropriate states to complete the Hospital room challenge. Initially, planning is done at the geometric level to structure the task in the way that best suits the operation, which is to complete the Hospital room challenge. <br> | ||

<br>'''Planning at geometric level:''' <br> | <br>'''Planning at geometric level:''' <br> | ||

# Initialize the process. | # Initialize the process. | ||

| Line 299: | Line 324: | ||

# Use the high-level hint to locate target room and stop at the object. | # Use the high-level hint to locate target room and stop at the object. | ||

<br> | <br> | ||

Once planning at the geometric level is done, tasks are broken into states where each state has its own conditions to check from world model, perform the activity defined and update the world model. The state machine is formed ([[File: | Once planning at the geometric level is done, tasks are broken into states where each state has its own conditions to check from world model, perform the activity defined and update the world model. The state machine is formed ([[File:HRC_planning_final.pdf]]) to facilitate unique tasks to the corresponding states which can be reused as per the state logic. Each state is created in a unique way which does not repeat the task performed in other states and interlinked strategically to have robust and smooth flow from one another. <br> | ||

<br>'''Planning at implementation (logic) level:''' <br> | <br>'''Planning at implementation (logic) level:''' <br> | ||

* '''Initialize:''' Resets IOs, planning state, drive state to initial value. It creates the current room as Room 0 which is the hallway based on the hypothesis that the robot starts at the hallway. Before moving, it saves the current position of PICO as its starting position in the world model. Once the initial position is saved, the state is switched to Explore_Hallway <br> | * '''Initialize:''' Resets IOs, planning state, drive state to initial value. It creates the current room as Room 0 which is the hallway based on the hypothesis that the robot starts at the hallway. Before moving, it saves the current position of PICO as its starting position in the world model. Once the initial position is saved, the state is switched to Explore_Hallway <br> | ||

* '''Explore_Hallway:''' Whenever PICO is in the hallway, the current_room variable in the world model is set to 0. This ensures that the nodes and doors detected in the hallway are stored with the hallway ID. In the hallway, PICO looks for detected doors and checks whether door IN is explored. If both conditions are satisfied, the state is set to Go_To_Room. If no doors are detected, PICO is driven forward while scanning for doors at a 20 Hz scan rate. This process is repeated until PICO has found all the doors. <br> | * '''Explore_Hallway:''' Whenever PICO is in the hallway, the current_room variable in the world model is set to 0. This ensures that the nodes and doors detected in the hallway are stored with the hallway ID. In the hallway, PICO looks for detected doors and checks whether door IN is explored. If both conditions are satisfied, the state is set to Go_To_Room. If no doors are detected, PICO is driven forward towards the hallway point (which is the end point of the hallway stored in the world model by monitoring) while scanning for doors at a 20 Hz scan rate. This process is repeated until PICO has found all the doors. <br> | ||

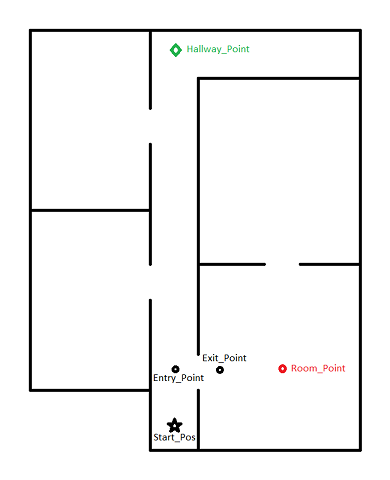

* '''Go_To_Room:''' (Note: Two variables assigned for door exploration status, namely Door_IN and Door_OUT. This logic is used in planning to make decisions while traversing through doors). Go_To_Room is the stage where target points are created to travel through doors between hallway and room. Two target points are created with respect to the door, namely entry_point and exit_point. If both door IN and door OUT of the selected door ID is not explored, the entry point of the door is set as target coordinates, drive state is set to driving. The current position of PICO is checked with the target point and when close tolerance is reached, door IN is set to explored and target points are set at the exit point. PICO is driven to the exit point and the | * '''Go_To_Room:''' (Note: Two variables assigned for door exploration status, namely Door_IN and Door_OUT. This logic is used in planning to make decisions while traversing through doors). Go_To_Room is the stage where target points are created to travel through doors between hallway and room. Two target points are created with respect to the door, namely entry_point and exit_point. If both door IN and door OUT of the selected door ID is not explored, the entry point of the door is set as target coordinates, drive state is set to driving. The current position of PICO is checked with the target point and when close tolerance is reached, door IN is set to explored and target points are set at the exit point. PICO is driven to the exit point and the new object of the Room class is created in the world model. The state is switched to Explore_Room. One more crucial function handled by this state is Invert_coordinates. This function inverts the coordinates (by multiplying -1 to the X and Y coordinates) of the room which PICO is IN while it comes out of the room. This is done by checking for door_IN explored and door_OUT unexplored. <br> | ||

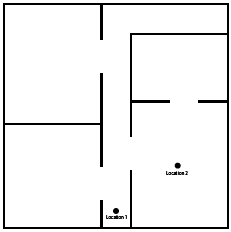

* '''Explore_Room:''' <br> | [[File:HRC_sim_final.png|right|480px|Hospital room for simulation|frame]] | ||

* '''Check_Explored:''' <br> | * '''Explore_Room:''' The Explore_Room state scans the room to find evidence that validates the hypothesis of the existence of a room (i.e., at least 3 valid (convex) corners). This is done by checking the Room_Explored variable in the world model, which is set by monitoring. If the room is not explored, the room scan phase is initiated by setting the drive state to Room_scan, after which the Room_Explored variable is checked again. Once the room is explored, if a new door is detected (i.e., Door_IN and Door_OUT of the detected door are unexplored) PICO will go through the door via the Go_To_Room sequence. If no new door is detected, PICO will go from the room to the hallway via the Go_To_Room sequence. Thus the Explore_Room state takes care of room exploration, saving the door(s) and corners with respect to the Room ID, and the number of doors to exit to the hallway (for the high-level hint). <br> | ||

* '''Parking:''' <br> | * '''Check_Explored:''' Check explored state is sequentially checked when PICO is in the hallway. The sole purpose of this state is to monitor whether all the rooms are explored (i.e, All the doors in the hallway are explored (door_IN and door_OUT for all doors are explored)). Once all rooms are explored, Parking state is set. <br> | ||

* '''Object_Detection:''' <br> | * '''Parking:''' Once all rooms are explored, parking routine is executed. Parking of PICO backward is done via driving sequence by setting drive state to park. Once PICO is parked, Parked variable in world model is set. PICO speaks out "I am parked". The final map of hospital room is saved in HospitalRoom.json file. This JSON file contains Rooms, doors, and nodes along with its coordinates. A visual map is created using Open CV.<br> | ||

* '''Object_Detection:''' Object detection is a special case that uses the high-level hint to locate the target room, which contains the object to be found. To identify the target room ID, the room that requires the maximum number of doors to be reached from the hallway is found. The Go_To_Door sequence is executed with the target room index. Once PICO reaches the target room, the mapped reality is compared with the current perception data. Once the object is detected, PICO stops in front of it and speaks out "I found the object".<br>
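The door traversal described under Go_To_Room could look roughly like the sketch below. It is only an illustration: the names Door, goToRoomStep and reached are hypothetical and not taken from the group's code, and it assumes that Door_OUT is marked explored as soon as the exit point is reached, which the text does not state explicitly.

```cpp
#include <cmath>

struct Point { double x; double y; };

// Hypothetical door record: two target points plus the two exploration flags.
struct Door {
    Point entry_point{0.0, 0.0};
    Point exit_point{0.0, 0.0};
    bool door_in_explored = false;
    bool door_out_explored = false;
};

enum class PlanState { Go_To_Room, Explore_Room };

// Targets are stored relative to PICO, so the distance to a target is its norm.
bool reached(const Point& target, double tolerance) {
    return std::hypot(target.x, target.y) < tolerance;
}

// One planning step of the Go_To_Room stage; writes the drive target into `target`.
PlanState goToRoomStep(Door& door, Point& target, double tolerance) {
    if (!door.door_in_explored) {
        target = door.entry_point;
        if (reached(target, tolerance)) {
            door.door_in_explored = true;   // entry point reached
            target = door.exit_point;       // continue towards the exit point
        }
        return PlanState::Go_To_Room;
    }
    target = door.exit_point;
    if (reached(target, tolerance)) {
        door.door_out_explored = true;      // assumption: set on reaching the exit point
        return PlanState::Explore_Room;     // a new Room object would be created here
    }
    return PlanState::Go_To_Room;
}
```

Calling goToRoomStep once per loop iteration, with the door points refreshed by perception in between, reproduces the entry-then-exit sequence described above.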

'''JSON Parsing:''' <br>

JSON (JavaScript Object Notation) is a lightweight data-interchange format ([https://www.json.org/]) that is easy to read for humans and easy to interpret for machines. In the Hospital Room Challenge, JSON is used to store world model details such as the coordinates of the nodes and the doors of the hallway (Room 0) and of the rooms. The JSON write function is executed after parking is completed, and the result is saved in the HospitalRoom.json file. For the hospital room simulation height map shown on the right, the HospitalRoom.json file can be found in this archive ([[File:HospitalRoom.zip]]). Since the JSON file format cannot be uploaded to the wiki, the resulting data is also provided in pdf format here ([[File:HRC_json_pdf_to_view.pdf]]).
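As an illustration of the write step, the sketch below serializes rooms, nodes and doors to a JSON string using only the C++ standard library. The record types and the key names ("rooms", "id", "nodes", "doors", "x", "y") are assumptions made for this example; the actual layout of HospitalRoom.json may differ.

```cpp
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

struct NodeXY { double x; double y; };

// Hypothetical record of one room; id 0 is the hallway.
struct RoomRecord {
    int id;
    std::vector<NodeXY> nodes;
    std::vector<NodeXY> doors;
};

// Writes a list of points as a JSON array of {"x":..,"y":..} objects.
static void writePoints(std::ostringstream& out, const std::vector<NodeXY>& pts) {
    out << "[";
    for (std::size_t i = 0; i < pts.size(); ++i) {
        if (i > 0) out << ",";
        out << "{\"x\":" << pts[i].x << ",\"y\":" << pts[i].y << "}";
    }
    out << "]";
}

// Serializes all rooms into one JSON document (assumed schema, see above).
std::string toJson(const std::vector<RoomRecord>& rooms) {
    std::ostringstream out;
    out << "{\"rooms\":[";
    for (std::size_t r = 0; r < rooms.size(); ++r) {
        if (r > 0) out << ",";
        out << "{\"id\":" << rooms[r].id << ",\"nodes\":";
        writePoints(out, rooms[r].nodes);
        out << ",\"doors\":";
        writePoints(out, rooms[r].doors);
        out << "}";
    }
    out << "]}";
    return out.str();
}
```

The returned string can be written to a file with a std::ofstream once parking has completed.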

=== Driving ===

For the driving, three categories of functions are made. The first category consists of the basic driving functions, which are:

*Drive

*Rotate

*Drive and Rotate

*Stop

As input for those functions, the DRIVE_SPEED and ROTATE_SPEED can be given positive and negative values. In the case of a positive value, PICO will drive forward and rotate counterclockwise. In the case of a negative value, PICO will drive backwards and rotate clockwise.

Those basic functions are used in the second function category, which consists of the command functions. The following two command functions are considered:

*Drive to Point

*Park

Parking can be considered as moving backwards to a point, so the Park command function has a lot in common with the Drive to Point function. The difference is that the movements are mirrored. The Drive to Point function uses a target point, defined relative to PICO, as input. The function starts by checking where the target point is: behind PICO, at the far side of PICO, close to the side of PICO, or in front of PICO. PICO will then align to ensure that the target point is in front of it. If the target is close to PICO, PICO will stop. All the possible movements of the Drive to Point function, except stopping when PICO is close to its target, are illustrated in the figure below to the right, with x as the radial coordinate and y as the polar coordinate.

[[File:driver.jpg|framed|right|The Drive to Point function with the possible driving methods of PICO for aligning to the target point]]
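The region check of the Drive to Point function can be sketched as follows. This is a hedged illustration, not the group's implementation: the target is taken in Cartesian coordinates relative to PICO (x forward, y to the left), the threshold parameters are placeholders, and the mapping of each region to a movement (rotate in place, drive and rotate, drive straight) is an assumption.

```cpp
#include <cmath>

enum class DriveAction {
    Stop, DriveForward,
    DriveAndRotateLeft, DriveAndRotateRight,
    RotateLeft, RotateRight
};

// Chooses a movement for a target given relative to PICO.
// stop_radius, front_angle and side_angle are hypothetical tuning parameters.
DriveAction driveToPoint(double x, double y,
                         double stop_radius, double front_angle, double side_angle) {
    if (std::hypot(x, y) < stop_radius) {
        return DriveAction::Stop;                    // target is close: stop
    }
    const double angle = std::atan2(y, x);           // positive = counterclockwise
    const double abs_angle = std::fabs(angle);
    if (abs_angle < front_angle) {
        return DriveAction::DriveForward;            // target in front
    }
    if (abs_angle < side_angle) {                    // target close to the side
        return angle > 0.0 ? DriveAction::DriveAndRotateLeft
                           : DriveAction::DriveAndRotateRight;
    }
    // Target at the far side or behind PICO: align first by rotating in place.
    return angle > 0.0 ? DriveAction::RotateLeft : DriveAction::RotateRight;
}
```

A Park variant could reuse the same logic with the target mirrored, in line with the remark that parking is driving to a point backwards.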

The third category is the actual driving function, called driving, which is called after the planning function in the auto_ext file. This function gets the target and the driving_state from the WorldModel as input and decides which of the underlying driving functions has to be called. If no driving state is given, PICO will stop.

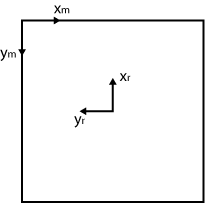

=== Mapping ===

One part of the hospital challenge is to make a map of the entire hospital. In order to complete this task a mapping skill was added to the software. Based on the room-by-room concept of the worldmodel, the map is also built room by room. These separate maps are combined into a full map of the hospital. The maps are stored in the worldmodel in a separate class, which contains all the information of the maps of each of the rooms. The map building functions are stored in a separate executable that can be called by the main function.

The image is made using OpenCV in C++. The image is represented as a matrix whose entries represent the pixels of the image. The image will contain all the walls of the rooms of the hospital. The walls of the rooms are made by checking which nodes are connected to each other. These nodes are then used as endpoints for the OpenCV line element, which is stored in the matrix. Since the coordinates of the nodes are given in PICO's coordinate frame (xr,yr), they have to be transformed into the matrix coordinate frame (xm,ym) (see figure). This transformation is done via a rotation over <math> -\frac{\pi}{2} </math>, which results in the following rotation matrix: <math> R\left(-\frac{\pi}{2}\right) = \begin{bmatrix} \cos\left(-\frac{\pi}{2}\right) & -\sin\left(-\frac{\pi}{2}\right) \\ \sin\left(-\frac{\pi}{2}\right) & \cos\left(-\frac{\pi}{2}\right) \end{bmatrix} = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix} </math>
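A rotation over -π/2 maps a point (xr, yr) in PICO's frame to (xm, ym) = (yr, -xr). The sketch below applies that rotation and then converts to pixel indices. The scale factor and the pixel origin are assumptions for illustration only; they are not specified in the text above.

```cpp
#include <cmath>

struct Pixel { int col; int row; };

// Transforms a node from PICO's frame (xr, yr) [m] to matrix (pixel) coordinates.
// pixels_per_meter, origin_col and origin_row are hypothetical parameters.
Pixel robotToMatrix(double xr, double yr,
                    double pixels_per_meter, int origin_col, int origin_row) {
    // Rotation over -pi/2: [xm; ym] = [0 1; -1 0] * [xr; yr]
    const double xm = yr;
    const double ym = -xr;
    Pixel p;
    p.col = origin_col + static_cast<int>(std::lround(xm * pixels_per_meter));
    p.row = origin_row + static_cast<int>(std::lround(ym * pixels_per_meter));
    return p;
}
```

With this choice a point straight ahead of PICO ends up above the origin pixel, since matrix rows count downwards.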

'''Resulting Map'''

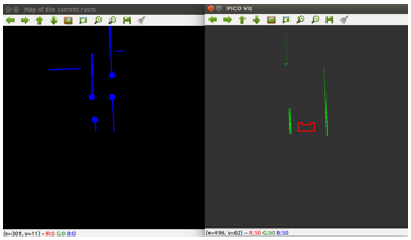

The results of the mapping are shown in the figure. The figure shows the map made in OpenCV next to the EMC visualiser. It is clear that the laser range finder has more range than the visualizer actually shows. In the first figure, PICO is at the starting position in the hallway. The visualizer doesn't show the walls inside the rooms, but as can be seen from the map, they are detected. The same holds for the second picture, where PICO is standing inside a room. The visualizer only shows the corners of the current room, but in fact the back wall of the next room and a section of the hallway are also detected. The visualizer also shows, as blue dots, the door nodes that the monitoring section has found.

[[File:Hospital_4rooms.png]]


== Group Review ==

=== Results and Discussion ===

From the start of the project we all agreed upon the general direction of our design. However, partly due to inexperience with programming in C++, we were hesitant to start coding until we had consensus on the exact details of our software architecture. Because of this, integration of the software started relatively late, resulting in code that was not fully tested on the day of the challenge. For this reason we were not able to demonstrate the full capability of our design. An example of functionality missed due to the late integration effort is the updating of the drive target. As the perception of door nodes and the setting of drive targets were developed separately, the updating of the drive target based on updated positions of door nodes was not effectively implemented.

We are proud of our extensive design. Our mapping was oriented around determining landmarks (nodes), which were robustly maintained using multiple hypotheses. All nodes and destinations were stored relative to PICO, using no more information than necessary. Our state machine had a concise logical structure and could determine the next action in a simple yet robust manner. All relevant information was communicated through the World Model. This contained extensive information about the surroundings, such as doors, connectivity between the rooms and the next destination. Basically, all information pertinent to running PICO is stored within the World Model object. The incoming measurement data was made usable by perception and then stored in the World Model. All other modules either modified this world model data or issued commands based on it. The monitoring and planning modules only communicated with the world model, while the driving module read directives (such as the target) from the world model. The data structure of this world model object was designed to take a minimal amount of space. For example, nodes were stored as two coordinates relative to PICO and only actively updated while PICO was in the room these nodes belonged to. This is somewhat comparable to how humans remember the layouts of buildings: we do not actively consider the layout of each room until we enter it.
PICO would drive to targets that were dependent on landmarks such as corners or doors; as these nodes were updated, the target would be automatically updated.

For the escape room challenge we had a simple implementation of a wall follower algorithm. We were one of only three groups to successfully get the robot moving towards the exit. This was also due to our group's early mastery of the git protocol. We had no trouble pulling the code to PICO.

All in all, we believe this course was a great experience. We can all say that we have learned a lot about coding in C++ as well as organizing software development. Most importantly, we learned a lot about robust world building practices in robotics. Our understanding of high-level robotics concepts has greatly improved.

=== Recommendations ===

We have a few major recommendations for any group without much coding experience. First, start practicing writing code within the first week of the project. Second, have an extensive conversation within the first two weeks of the project. Don't get stuck too long on details of the design, but quickly divide the work and start implementation. Making an extensive design of the data structure is difficult without previous experience. This leads to a "chicken and egg" question where uncertainty in design decisions arises from a lack of experience. For this reason it is impractical to take the linear [https://en.wikipedia.org/wiki/Waterfall_model 'waterfall model'], in which implementation comes after design.
Instead take the [https://en.wikipedia.org/wiki/Agile_software_development 'agile'] or [https://en.wikipedia.org/wiki/Concurrent_design_and_manufacturing 'concurrent design'] approach, where implementation and design are interleaved. Design decisions will become more evident after having coded. Use a modular code structure that can be easily changed. Put each team member in charge of a single module and continuously communicate on the inputs and outputs needed for each module. Flexibility and communication are key. Trying to code is more important than having a complete picture of what you are going to do beforehand.

== Code snippets ==

The first snippet we would like to share is our [https://gitlab.tue.nl/EMC2018/group10/snippets/29 main function]. We used the guidelines for a clean robot program as presented by Herman Bruyninckx. We don't need all the functions that were presented. Communication is not needed as a separate function, because it is included in the EMC library. Configuration is not a separate function because all the parameters are set to be constant and can be adjusted in the config.h file. We do have computations, which can be subdivided into perception, monitoring and planning. Using these guidelines, a structured main file is created which is easy to understand. To further improve performance it would be possible to run certain functions at a higher or a lower rate. This can easily be done by, for example, only executing the monitoring and the planning every xth loop.
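The idea of running some functions only every xth loop can be sketched as below. The stub functions and the counters in WorldModel are placeholders for illustration; they do not reproduce the linked main function, and the loop is bounded here instead of running until the robot connection is lost.

```cpp
// Placeholder world model that only counts how often each part has run.
struct WorldModel {
    int perception_runs = 0;
    int monitoring_runs = 0;
    int planning_runs = 0;
    int driving_runs = 0;
};

void perception(WorldModel& wm) { ++wm.perception_runs; }
void monitoring(WorldModel& wm) { ++wm.monitoring_runs; }
void planning(WorldModel& wm)   { ++wm.planning_runs; }
void driving(WorldModel& wm)    { ++wm.driving_runs; }

// Runs the main loop for a fixed number of iterations.
// Monitoring and planning run only every `slow_every`-th iteration (must be > 0).
void runLoop(WorldModel& wm, int iterations, int slow_every) {
    for (int i = 0; i < iterations; ++i) {
        perception(wm);                 // every loop: read and process sensor data
        if (i % slow_every == 0) {      // lower rate: interpret and decide
            monitoring(wm);
            planning(wm);
        }
        driving(wm);                    // every loop: act on the current target
    }
}
```

With iterations = 10 and slow_every = 5, perception and driving run ten times while monitoring and planning run twice.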


* 2. there is no corner in between the nodes

Therefore a line drawn between the two nodes can be considered a wall.

Finally the [https://gitlab.tue.nl/snippets/53 door detection] is added. In this code all nodes in the current room are evaluated and compared to all other nodes. As noted, the nodes do not both need to be closed. It suffices to have one open and one closed node, but in that case the node should be detected at least n times, with n defined as a magic number in the configuration. The actual calculations are done in the compareNodes function: it checks whether the nodes are in line with the wall and at the correct door opening distance. The values of the door entry and exit points can then be determined and are stored in the xin, xout, yin and yout variables. These are then stored in the worldModel via the storeDoor function.
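A simplified version of such a node comparison is sketched below. It is not the linked snippet: the types, the parameter names and the placement of the entry and exit points along the normal of the opening are assumptions, and the check that the nodes are in line with the wall is left out for brevity.

```cpp
#include <cmath>

struct DoorNode { double x; double y; bool closed; int weight; };
struct DoorPoints { bool valid; double xin; double yin; double xout; double yout; };

// Checks whether two nodes can form a door and, if so, computes entry/exit points.
// min_width, max_width, min_weight (the "n" above) and clearance are hypothetical.
DoorPoints compareNodes(const DoorNode& a, const DoorNode& b,
                        double min_width, double max_width,
                        int min_weight, double clearance) {
    DoorPoints result{false, 0.0, 0.0, 0.0, 0.0};
    // At least one node must be closed; an open node must have been seen often enough.
    const bool a_ok = a.closed || a.weight >= min_weight;
    const bool b_ok = b.closed || b.weight >= min_weight;
    if (!(a.closed || b.closed) || !a_ok || !b_ok) return result;

    const double dx = b.x - a.x;
    const double dy = b.y - a.y;
    const double width = std::hypot(dx, dy);
    if (width < min_width || width > max_width) return result;  // not a door opening

    // Midpoint of the opening and unit normal, oriented away from PICO (the origin).
    const double mx = 0.5 * (a.x + b.x);
    const double my = 0.5 * (a.y + b.y);
    double nx = -dy / width;
    double ny = dx / width;
    if (nx * mx + ny * my < 0.0) { nx = -nx; ny = -ny; }

    result.valid = true;
    result.xin = mx - clearance * nx;   // entry point on PICO's side
    result.yin = my - clearance * ny;
    result.xout = mx + clearance * nx;  // exit point on the far side
    result.yout = my + clearance * ny;
    return result;
}
```

The caller would pass the resulting xin, yin, xout and yout on to a storage function such as storeDoor.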

Latest revision as of 15:07, 14 June 2019

Group members

| Name | E-mail | Student id |

| Srinivasan Arcot Mohanarangan (S.A.M) | s.arcot.mohana.rangan@student.tue.nl | 1279785 |

| Sim Bouwmans (S.) | s.bouwmans@student.tue.nl | 0892672 |

| Yonis le Grand | y.s.l.grand@student.tue.nl | 1221543 |

| Johan Baeten | j.baeten@student.tue.nl | 0767539 |

| Michaël Heijnemans | m.c.j.heijnemans@student.tue.nl | 0714775 |

| René van de Molengraft & Herman Bruyninckx | René + Herman | Tutor |

Initial Design

The initial design file can be downloaded by clicking on the following link:File:Initial Design Group8.pdf.

A brief description of our initial design is also given below in this section. PICO has to be designed to fulfill two different tasks, namely the Escape Room Competition (EC) and the Hospital Competition (HC). When either EC or HC is mentioned in the text below, the corresponding design criterion is only needed for that competition. If neither EC nor HC is mentioned, the design criterion holds for both challenges.

Requirements

An overview of the most important requirements from Main page:

- No "hard" collisions with the walls

- Find and exit the door of a room

- Fulfill the task within 5 minutes (EC)

- Fulfill the task within 10 minutes (HC)

- Do not stand still for more than 30 seconds

- Fulfill the task autonomously

- Map the complete environment (HC)

- Reverse into wall position behind the starting point after an exploration of the environment (HC)

- Find a newly placed object in an already explored environment (HC)

Functions

These were the initial functions we identified on a high level:

- Driving

- Translating

- Rotating

- Comparing Data

- For trajectory planning

- For newly placed object recognition

- Line fitting (least squares in C++)

- Trajectory and action planning

- Configuration

- Coordination

- Communication

- Mapping

- Parking

Components

| Component | Specifications |

| Computer |

|

| Sensors |

|

| Actuator | Holonomic base (omni-wheels) |

| Software Modules |

|

Specifications

The following numbers are the software and hardware capabilities of PICO.

- Maximum translational speed of 0.5 m/s

- Maximum rotational speed of 1.2 rad/s

- Translational distance range of 0.01 to 10 meters

- Orientation angle range of -2 to 2 radians or 229 degrees approximately

- Angular resolution of 0.004004 radians

- Scan time of 33 milliseconds

Interfaces

Different parts of the software have to communicate with each other in order to exchange information. To keep this communication structured, all information is stored in the worldmodel, which all functions can access. In this way the functions are not linked to each other and the information is stored in a central place. This structure is shown in the EMC Interfaces figure. Some information in the worldmodel is stored within classes and has to be accessed via so-called get and set functions. This is done to make sure that information is not accidentally changed. The perception and driving parts are the only parts that actually communicate with the robot. Perception reads the sensor data and applies several functions to it in order to transform the raw data into objects like nodes, corners and endpoints of walls. These are then stored in the worldmodel. Driving makes the robot move to the required setpoint that the planning algorithm stored in the worldmodel.
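The get and set access pattern could look like the minimal sketch below. The Setpoint type and the member names are hypothetical; only the idea of keeping the data private behind accessor functions is taken from the text.

```cpp
struct Setpoint { double x; double y; };

// Central store: planning writes the setpoint, driving reads it.
class WorldModel {
public:
    Setpoint getTarget() const { return target_; }
    void setTarget(const Setpoint& target) { target_ = target; }

private:
    Setpoint target_{0.0, 0.0};   // cannot be changed except through setTarget
};

// Planning stores a setpoint without knowing anything about driving.
void planning(WorldModel& wm) {
    wm.setTarget({1.0, 0.5});
}

// Driving only needs read access, which the const reference enforces.
double targetX(const WorldModel& wm) {
    return wm.getTarget().x;
}
```

Because driving receives a const reference, the compiler rejects any accidental write to the world model from that side.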

Task Skill Motion

In this section a brief overview is given of the task-skill-motion framework that will be used to structure the software. The task-skill-motion diagram is given in the figure. The diagram consists of 5 blocks and the interconnections between them. There are also sub-blocks, which represent functions of the system. The skill block, for example, contains the functions that detect the features of the room, but also the skill necessary to drive PICO. The diagram should be read as follows. The system is started via the user interface, which is connected to the task block. This block contains the tasks that PICO has to fulfill; one of the 4 tasks is selected at the start of the program. Together with the selected task, the worldmodel information is fed to the task monitor, which monitors whether the task is still being executed. The task monitor can decide whether certain skills should be activated to achieve the task. The skill manager then sets the skills to active or inactive. The motion block contains the low-level actuation that moves PICO around. The connections between the main blocks and the sub-blocks are the interfaces between the several software components.

Escape Room Competition

For the Escape Room Competition, a simple algorithm was used. This let the group members without much C++ programming experience get used to the language while the more experienced programmers already worked on the perception code for the Hospital Competition. As a result, the code used for the Escape Room Competition differs somewhat from the initial design: the intended perception code was not fully debugged before the competition, so it could not be integrated into the main code written for it.

World Model

The data stored in the World Model is small and consists of only three Booleans: left, right and front. Each gets the value TRUE or FALSE depending on whether an obstacle is near that side of the robot. The values are refreshed with a frequency of FREQ, overwriting the old data so that no history is stored.

Perception

For PICO's perception of the world, a simple space recognition code was used. In this code, the LRF data is split into three beam ranges: a left, a front and a right beam range. Around PICO, two circles are defined. One is the ROBOT_SIZE circle, whose radius is chosen so that it just encloses PICO. The other is the MIN_DISTANCE_WALL circle, which has a bigger radius than the ROBOT_SIZE circle. For each of the three beam ranges, the distance to an obstacle is checked for each beam, and the lowest distance that is larger than the radius of the ROBOT_SIZE circle is saved. If this distance is smaller than the radius of the MIN_DISTANCE_WALL circle, the value TRUE is given to the corresponding beam range, otherwise the value FALSE. This scan is done with a frequency of FREQ, and with each scan the previous values are deleted.
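The beam-range check described above can be sketched as follows. The equal split of the scan into thirds and the assumption that index 0 is the rightmost beam are choices made for this example; the function and type names are hypothetical.

```cpp
#include <cstddef>
#include <vector>

struct Sides { bool right; bool front; bool left; };

// True if the lowest range above robot_size in [begin, end) is below min_distance_wall.
bool sectorBlocked(const std::vector<double>& ranges, std::size_t begin, std::size_t end,
                   double robot_size, double min_distance_wall) {
    bool found = false;
    double lowest = 0.0;
    for (std::size_t i = begin; i < end; ++i) {
        const double r = ranges[i];
        if (r > robot_size && (!found || r < lowest)) {  // ignore hits on PICO itself
            lowest = r;
            found = true;
        }
    }
    return found && lowest < min_distance_wall;
}

// Splits one scan into right, front and left beam ranges (assumed equal thirds).
Sides perceive(const std::vector<double>& ranges,
               double robot_size, double min_distance_wall) {
    const std::size_t n = ranges.size();
    Sides s;
    s.right = sectorBlocked(ranges, 0, n / 3, robot_size, min_distance_wall);
    s.front = sectorBlocked(ranges, n / 3, 2 * n / 3, robot_size, min_distance_wall);
    s.left = sectorBlocked(ranges, 2 * n / 3, n, robot_size, min_distance_wall);
    return s;
}
```

Since a fresh Sides value is returned for every scan, no history is kept, which matches the World Model description above.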

Monitoring

For the Escape Room Competition, PICO would only monitor its surroundings between the ROBOT_SIZE circle and the MIN_DISTANCE_WALL circle by giving a meaning to its sensor data (Left, Right, Front). The four meanings are:

- Nothing: All sensor data has the value FALSE

- Obstacle: At least one of the sensors has the value TRUE

- Wall: The Right sensor has value TRUE, while the Front sensor has value FALSE

- Corner: The Right and Front sensors have value TRUE

The monitored data is only stored for one moment and the old data is deleted and refreshed with each new data set.
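The four meanings overlap (a wall is also an obstacle), so an implementation has to pick an order of evaluation. The sketch below checks the most specific meaning first; that precedence is an assumption of this example, as are the names.

```cpp
enum class Surrounding { Nothing, Obstacle, Wall, Corner };

// Gives a meaning to the three Booleans stored in the World Model.
Surrounding monitor(bool left, bool right, bool front) {
    if (right && front) return Surrounding::Corner;    // Right and Front are TRUE
    if (right) return Surrounding::Wall;               // Right TRUE, Front FALSE
    if (left || front) return Surrounding::Obstacle;   // something else is near
    return Surrounding::Nothing;                       // all sensors are FALSE
}
```

For example, monitor(false, true, false) yields Wall, while monitor(true, false, false) yields Obstacle.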

Planning

For the planning, a simple wall-following algorithm is used. Our design choice was to follow the right wall. As the initial state it is assumed that PICO sees nothing. PICO will then check whether there is actually nothing around it by searching for an obstacle. If no obstacle is detected near its starting position, PICO will hypothesize that there is an obstacle in front of it and will drive forward until it has validated this hypothesis by detecting one. This hypothesis will always come true at a certain moment in time due to the restrictions on the world PICO is put into, except if PICO is already aligned with the exit. In that case PICO will fulfill its task immediately by driving straight forward.

As soon as an obstacle is detected, PICO will assume that this obstacle is a wall. It will then rotate counter-clockwise until its monitoring function confirms that this obstacle is a wall, in other words until only the right sensor returns the value TRUE. Because the world only consists of walls and corners, and corners are formed by walls, this hypothesis will again always come true while rotating. As soon as only a wall is detected, PICO will drive forward to keep the discovered wall on its right.

While following the wall, PICO may detect a corner if the front sensor goes off. This can happen if PICO is actually in a corner, or if PICO is not well aligned with the wall (the front sensor sees the wall that PICO is following). In both cases, PICO will rotate counter-clockwise until it only sees one wall. In the misaligned case, it ends up better aligned with the wall it was following. In the case that PICO is actually in a corner, it will align with and follow the other wall that makes up the corner instead of the wall it was initially following.

Another possibility is that PICO loses the wall it is following. This can again happen if PICO is not well aligned with the wall, or if there is an opening in the wall (i.e., a door entry). In both cases, PICO rotates clockwise until it sees a wall or a corner and then starts following it again as usual.
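The planning rules above fit in a small decision function. The sketch below is an interpretation rather than the original code: it adds one flag, following_wall, to distinguish the initial "sees nothing" state from having lost the wall, and all names other than the three drive states are hypothetical.

```cpp
enum class Surrounding { Nothing, Obstacle, Wall, Corner };
enum class DriveState { drive_forward, rotate_clockwise, rotate_counterclockwise };

// Remembers whether a wall has been found, to tell "start" from "lost the wall".
struct Planner {
    bool following_wall = false;
};

// Right-hand wall follower: maps the monitored meaning to a drive state.
DriveState plan(Planner& planner, Surrounding seen) {
    switch (seen) {
    case Surrounding::Wall:
        planner.following_wall = true;
        return DriveState::drive_forward;             // keep the wall on the right
    case Surrounding::Corner:
    case Surrounding::Obstacle:
        return DriveState::rotate_counterclockwise;   // turn until only a wall is seen
    case Surrounding::Nothing:
        // Before any wall is found: drive forward to find an obstacle.
        // After losing the wall: rotate clockwise to find it again.
        return planner.following_wall ? DriveState::rotate_clockwise
                                      : DriveState::drive_forward;
    }
    return DriveState::drive_forward;
}
```

Calling plan once per scan with the output of the monitoring step gives the behaviour described in the four paragraphs above.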

Driving

The driving for the Escape Room Challenge is relatively simple. PICO has three drive states: drive_forward, rotate_clockwise and rotate_counterclockwise. Depending on the monitoring, the planning selects which drive state PICO has to execute, as already discussed in the planning part.

The values

The main values that are given in the script are listed below, with an explanation of how they were determined:

- ROBOT_SIZE

- The size of PICO is measured and ROBOT_SIZE is set so that a circle with radius ROBOT_SIZE just encloses PICO. The ROBOT_SIZE that is measured is 0.2 m.

- MIN_DISTANCE_WALL

- As PICO must be able to pass between two walls that are 0.5 m apart, the MIN_DISTANCE_WALL value has to be smaller than that distance. More specifically, it has to be smaller than 0.3 m, as the distance is measured from the center of PICO and PICO has a radius of 0.2 m. Furthermore, it has to be bigger than 0.2 m, as this is the size of PICO. As PICO must react directly to its surroundings, this value is set to 0.25 m to give PICO room to act on its detection.

- FREQ

- The frequency at which the scan takes place must be high enough to detect changes in the surroundings while PICO is moving. Furthermore, it determines the precision of the alignment. Since scanning costs computation power, the value is set to 20 Hz: high enough to detect everything, but not demanding too much computation power while PICO is following the wall and no big changes in the surroundings are measured.

- FORWARD_SPEED

- As PICO must act on its surroundings, and the MIN_DISTANCE_WALL value only has a margin of 0.05 m, PICO has to stop quickly. To ensure that PICO will stop in time, experiments must be done to determine the speed of PICO in which PICO is able to stop on time before the wall.

- ROTATE_SPEED

- As PICO tries to align with the wall, it rotates with this velocity. The value is determined experimentally, together with the given frequency, to ensure that PICO does not over-rotate too much between two consecutive scans.
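The constraints between these values can be written down as compile-time checks. In the sketch below, ROBOT_SIZE, MIN_DISTANCE_WALL and FREQ carry the values given above; MIN_CORRIDOR_WIDTH and the helper functions are hypothetical names introduced for the example, and the speeds are left out because they were determined experimentally.

```cpp
// Values taken from the list above.
constexpr double ROBOT_SIZE = 0.2;          // [m] radius of the circle around PICO
constexpr double MIN_DISTANCE_WALL = 0.25;  // [m] radius of the detection circle
constexpr double FREQ = 20.0;               // [Hz] scan frequency

// Hypothetical name for the narrowest passage PICO must fit through.
constexpr double MIN_CORRIDOR_WIDTH = 0.5;  // [m]

// MIN_DISTANCE_WALL must lie between 0.2 m and 0.5 m - 0.2 m = 0.3 m.
static_assert(MIN_DISTANCE_WALL > ROBOT_SIZE,
              "detection circle must be larger than PICO");
static_assert(MIN_DISTANCE_WALL < MIN_CORRIDOR_WIDTH - ROBOT_SIZE,
              "detection circle must leave room to pass between two walls");

// Margin PICO has to react in after a detection: 0.25 m - 0.2 m = 0.05 m.
constexpr double reactionMargin() { return MIN_DISTANCE_WALL - ROBOT_SIZE; }

// Distance travelled between two scans at a given forward speed [m/s].
constexpr double distancePerScan(double speed) { return speed / FREQ; }
```

At the maximum translational speed of 0.5 m/s, distancePerScan gives 0.025 m, which is half of the 0.05 m margin and illustrates why FORWARD_SPEED has to be limited.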

The results

Our wall follower worked smoothly in the simulator, as can be seen in the video below, and during the testing sessions. However, due to our restrictions on FORWARD_SPEED and ROTATE_SPEED, PICO moved slowly towards the finish, but it eventually got there, as can be seen in the video showing the Escaperoom Challenge. We were so excited that PICO moved through the hallway, as one of only three groups that made it to that point, that we stopped filming. Unfortunately, just before the finish, PICO detected the other wall of the corner while aligning to the wall, monitored it as a corner, and started to follow the other wall back into the room. As we had tested our code with a slightly wider hallway and in the simulator, as shown in the gif, alignment errors due to the delayed response of PICO did not occur while testing.

A fault due to bad alignment also happened while PICO was following the room's wall. As PICO was badly aligned with the wall, it eventually lost the wall and made a nice pirouette before following the wall again.

Although PICO was not fast and did not get past the finish, we were proud of our results, as we were one of the few groups without prior coding experience that made it to the hallway.

Lessons learned

As we learned from the Escaperoom Competition, it is important to give PICO a task that it can pursue for a longer time, so that the speed can be increased. Furthermore, if the setpoint of PICO had been set at the end of the hallway and the alignment with the wall had been turned off once PICO had entered the hallway, PICO would have made it to the end. However, a check on unexpected events should still be present, making only small adjustments. This would also have prevented PICO from making its pirouette while following the wall, as it would have corrected its motion to fulfill its task instead of acting directly on its sensor data.

We have also learned that good monitoring is important. PICO concluded that it was at a corner while it was not, because a corner was poorly defined in the monitoring. The planning acted correctly on a corner, so PICO did what it had to do, but at the wrong moment because of the wrong conclusion.

Hospital Competition Design

World Model

Our strategy is to store as little information as possible, since with less data the system is simpler and most likely more robust. The worldmodel stores all the information necessary for the four main functions of the program (perception, monitoring, planning and driving). This way, there is no communication between the individual functions: they take the worldmodel as input, do operations and store data to the worldmodel. The main object in the worldmodel is the rooms vector, which contains all the rooms. In this subclass all the perception and monitoring data regarding that room is stored, so it contains a list of all doors and of all nodes in the room. The worldmodel also contains flags for the planning and the driving, such as its current task and its current driving skill.

Nodes

A node is a class containing the data on the (possible) corners. It contains the position of the point (in x and y) relative to PICO. The 'weight' of the node is also stored: this is how many times the node has been perceived. This value comes into play when hypothesizing whether or not the node is an actual corner. To categorize the different types of nodes, the following Boolean attributes of the node objects are stored:

| Node type | Subtype | Definition |

| Open Node | | The unconfirmed end of a line. |

| Closed Node | Inward corner | Confirmed intersection of two lines. The angle between the node vector (which is always relative to PICO) and each of the intersecting lines is less than 90 degrees at the moment of detection. |

| Closed Node | Outward corner | Confirmed intersection of two lines. The angle between the node vector (which is always relative to PICO) and at least one of the intersecting lines is more than 90 degrees at the moment of detection. |
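The inward/outward distinction in the table can be checked with two dot products. The sketch below is one possible reading of the definition: it assumes the angle is measured at the node, between the direction from the node back to PICO and the direction in which each wall leaves the node. The names are hypothetical.

```cpp
struct Vec2 { double x; double y; };

enum class CornerType { Inward, Outward };

double dot(const Vec2& a, const Vec2& b) {
    return a.x * b.x + a.y * b.y;
}

// node: corner position relative to PICO.
// wall_dir_1, wall_dir_2: directions in which the two walls leave the corner.
CornerType classifyCorner(const Vec2& node, const Vec2& wall_dir_1, const Vec2& wall_dir_2) {
    const Vec2 to_pico{-node.x, -node.y};   // assumption: angle measured towards PICO
    // A positive dot product means the angle is smaller than 90 degrees.
    const bool acute_1 = dot(to_pico, wall_dir_1) > 0.0;
    const bool acute_2 = dot(to_pico, wall_dir_2) > 0.0;
    return (acute_1 && acute_2) ? CornerType::Inward : CornerType::Outward;
}
```

For a room corner at (1, 1) whose walls run back along -x and -y, both angles are below 90 degrees and the corner is classified as inward. If one wall runs away from PICO along +x, as at the edge of a door opening, the result is outward.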

Doors

A door contains the points that PICO should drive to in order to enter and exit a room. A door is created by the monitoring module. These points are stored as nodes and can be requested by the planning to determine the waypoints for the driving skill. They are determined geometrically from the corner nodes that make up the door, and are placed at a certain distance from the corners to avoid collisions, as visible in the figure to the right.

Room

A room consists of a number of nodes and a list of the existing connections. The room thus contains a vector of nodes. Room nodes are updated until the robot leaves the room. The coordinates are then stored relative to the door and inverted with respect to the door. This is necessary for the situation where PICO needs to pass through the same door from the other side. For example, if it returns from another room and wants to return to the hallway, the door leading to the hallway will be flipped. In this way it is not necessary to update the landmarks of every room that PICO is not in, while a usable map is still available once PICO enters the room. This is further described under monitoring and planning. There are two types of hypothesized doors. The strongest hypothesized door is identified by at least one outward corner. The other is identified as adjacent to an open node.
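A minimal sketch of the flipping step, assuming the door is represented by a single reference point and that inverting means multiplying the door-relative x and y coordinates by -1, as described under planning. The names `Point` and `flipAboutDoor` are hypothetical, and any rotation of the door frame is ignored here.

```cpp
#include <vector>

struct Point { double x; double y; };

// Express each node relative to the door reference point and mirror it
// through that point, so the stored map can be reused when PICO passes
// the same door from the other side.
std::vector<Point> flipAboutDoor(const std::vector<Point>& nodes, const Point& door) {
    std::vector<Point> flipped;
    flipped.reserve(nodes.size());
    for (const Point& n : nodes) {
        flipped.push_back(Point{-(n.x - door.x), -(n.y - door.y)});
    }
    return flipped;
}
```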

Perception

First, the data from the sensors is converted to Cartesian coordinates and stored in the LaserCoord class within Perception. Testing showed that PICO detects itself in some data points and that the data at the far ends of the range is unreliable. Therefore the Boolean attribute Valid in LaserCoord is set to false in those cases. Code that uses the data can then decide whether the invalid points should be excluded or included.
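The conversion can be sketched as follows. Only the names LaserCoord and Valid come from the description above. The function signature is an assumption, as are the validity rules: a minimum range to reject self-detection, a maximum range, and a number of beams skipped at both ends of the scan.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct LaserCoord {
    double x;
    double y;
    bool valid;
};

// ranges: one distance per beam; beam i has angle angleMin + i * angleIncrement.
// minRange, maxRange, edgeSkip: assumed tuning parameters for the Valid flag.
std::vector<LaserCoord> toCartesian(const std::vector<double>& ranges,
                                    double angleMin, double angleIncrement,
                                    double minRange, double maxRange,
                                    std::size_t edgeSkip) {
    std::vector<LaserCoord> points;
    points.reserve(ranges.size());
    for (std::size_t i = 0; i < ranges.size(); ++i) {
        const double r = ranges[i];
        const double angle = angleMin + static_cast<double>(i) * angleIncrement;
        const bool nearEdge = i < edgeSkip || i + edgeSkip >= ranges.size();
        const bool inRange = r > minRange && r < maxRange;
        // Invalid points are kept, so later stages can include or exclude them.
        points.push_back(LaserCoord{r * std::cos(angle), r * std::sin(angle),
                                    inRange && !nearEdge});
    }
    return points;
}
```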

For the mapping, the corner nodes and the nodal connectivity have to be determined as defined in the worldmodel. To do this, the laser range data is examined. The first step is a "split and merge" algorithm, which stores the index of each data point where the distance between two consecutive points is larger than a certain threshold. The laser scans radially, so the further away an object is, the further apart neighbouring measurements are in the Cartesian system. The threshold value is therefore made dependent on the polar distance.
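A sketch of the split step with a distance-dependent threshold. The linear form `baseThreshold + gain * range` is an assumption, since the text only states that the threshold depends on the polar distance. The names `ScanPoint` and `findSplits` are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct ScanPoint { double x; double y; };

// Returns every index i at which a new section starts, i.e. where the gap
// between point i-1 and point i exceeds the range-dependent threshold.
std::vector<std::size_t> findSplits(const std::vector<ScanPoint>& pts,
                                    double baseThreshold, double gain) {
    std::vector<std::size_t> splits;
    for (std::size_t i = 1; i < pts.size(); ++i) {
        const double gap = std::hypot(pts[i].x - pts[i - 1].x,
                                      pts[i].y - pts[i - 1].y);
        // Polar distance of the nearer of the two points.
        const double range = std::min(std::hypot(pts[i].x, pts[i].y),
                                      std::hypot(pts[i - 1].x, pts[i - 1].y));
        if (gap > baseThreshold + gain * range) {
            splits.push_back(i);
        }
    }
    return splits;
}
```

The points directly before and after each returned index are the candidates for outward corners.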

The points before and after each split are hypothesized to be outward corners. Such a point might be a corner, but it can also be part of an (unseen) wall. The corner is stored as an invalid node until it is certain that it is an actual outward corner.

Old method

Within a section between two splits the wall is continuous; however, there can still be corners inside that section. These corners need to be found.

The original idea was to compare the lines at the beginning and the end of the section against three hypotheses:

- They belong to the same line. No further action is needed.

- They are parallel lines. Two corners must lie in this section. Recurse by splitting the section in two.

- They are two different lines. The intersection is most likely a corner.

To determine the lines, a linear least squares method is used. This is done for a small selection of points at the beginning and at the end of the section. If the error of the fit is too large, the selection of points is shifted until the error is acceptable.

This method proved not to be robust. For horizontal or vertical walls, fitting a line of the form y=ax+b does not produce the correct coefficients. Even with a swap to x=ay+b, the method fails in some cases. It would probably have been possible to continue with this method, but we decided to abandon this search method within the section and adopt an alternative. This is a good example of our learning curve.
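The failure mode can be illustrated with a plain least squares fit of y=ax+b. This is a generic textbook formulation, not the project's code, and the names `Pt`, `LineFit` and `fitLine` are hypothetical. For a vertical wall all x values are (nearly) equal, so the denominator vanishes and the slope is undefined. The swapped form x=ay+b has the same problem for horizontal walls.

```cpp
#include <cmath>
#include <vector>

struct Pt { double x; double y; };

struct LineFit {
    bool ok;      // false when y = a*x + b is ill-conditioned (near-vertical data)
    double a;     // slope
    double b;     // intercept
    double rms;   // root-mean-square residual in y
};

LineFit fitLine(const std::vector<Pt>& pts) {
    LineFit fit{false, 0.0, 0.0, 0.0};
    if (pts.size() < 2) return fit;
    const double n = static_cast<double>(pts.size());
    double sx = 0.0, sy = 0.0, sxx = 0.0, sxy = 0.0;
    for (const Pt& p : pts) {
        sx += p.x;
        sy += p.y;
        sxx += p.x * p.x;
        sxy += p.x * p.y;
    }
    const double denom = n * sxx - sx * sx;  // proportional to the variance of x
    if (std::abs(denom) < 1e-9) return fit;  // all x nearly equal: vertical wall
    fit.a = (n * sxy - sx * sy) / denom;
    fit.b = (sy - fit.a * sx) / n;
    double sq = 0.0;
    for (const Pt& p : pts) {
        const double r = p.y - (fit.a * p.x + fit.b);
        sq += r * r;
    }
    fit.rms = std::sqrt(sq / n);
    fit.ok = true;
    return fit;
}
```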

Current implementation

The method that is currently implemented is inspired by one of last year's groups: link to Group 10 2017. The split and merge step stays in place; the change is in the "evaluate sections" function.

Monitoring

The monitoring stage of the software architecture checks whether objects can be fitted to the data from the perception block, for instance by combining four corners into a room. The room is then marked as an object and stored in the memory of the robot. The same is done for doors. This makes it easier for the robot to return to a certain room instead of exploring the whole hospital again. Monitoring is thus responsible for creating new room instances, setting the room attribute explored to 'True' and maintaining the hypotheses of reality. The monitoring skill also sends a trigger to the planning block when a room is fully explored, and it keeps track of which doors have already been passed and which doors lead to a new room.

The functions of monitoring are:

- Door detection

- Room fully explored

- Hospital fully explored

- Hypotheses

Door detection

The door detection function uses the information stored in the world model to make a door object out of two nodes. The function checks several properties that two nodes must have in order to qualify as a door.

Node properties:

- One node is an outward corner; the other one can be an end node or an open node.

- The distance between them is 0.5 to 1.5 m.

- The two nodes are not connected by a wall.

A door is normally defined by two corners that face outward with respect to the robot, but this is not strictly necessary. One of the two corners can be an open node, i.e. an unexplored node. In this way the system can recognize doors in walls that the robot did not fully explore, and it therefore requires less information.
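The three node properties can be combined into a single check, sketched below. The names `NodeType`, `MapNode` and `isDoorCandidate` are hypothetical, and the wall connectivity is passed in as a flag because the text does not describe how it is stored.

```cpp
#include <cmath>

enum class NodeType { Open, InwardCorner, OutwardCorner };

struct MapNode {
    double x;
    double y;
    NodeType type;
};

// Returns true when two nodes satisfy the door properties listed above.
bool isDoorCandidate(const MapNode& a, const MapNode& b, bool connectedByWall) {
    const bool aOutward = a.type == NodeType::OutwardCorner;
    const bool bOutward = b.type == NodeType::OutwardCorner;
    const bool aAllowed = aOutward || a.type == NodeType::Open;
    const bool bAllowed = bOutward || b.type == NodeType::Open;
    // At least one outward corner; the other may be outward or open.
    const bool typesOk = (aOutward || bOutward) && aAllowed && bAllowed;
    const double width = std::hypot(a.x - b.x, a.y - b.y);
    return typesOk && width >= 0.5 && width <= 1.5 && !connectedByWall;
}
```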

When the nodes fulfill all the properties, a new door is stored in the current room. The door detection function also determines the direction of the door relative to PICO. It checks the slope of the line between the two points, calculates a line perpendicular to the door and compares the two slopes. It then marks the entry point and the exit point according to this calculation. The door is also marked unexplored, so that the planning skill knows where to go. An image of PICO identifying a new door can be seen to the right of the door class description.
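As an illustration of placing the entry and exit points, the sketch below uses the normal vector of the door line instead of comparing slopes. This is a swapped-in formulation, chosen because it also works for doors that are vertical in PICO's frame; it is not the method described above. The offset distance and the names `P2`, `DoorPoints` and `doorWaypoints` are assumptions.

```cpp
#include <cmath>

struct P2 { double x; double y; };

struct DoorPoints {
    P2 entry;  // on PICO's side of the door
    P2 exit;   // on the far side of the door
};

// a, b: the two door nodes relative to PICO (PICO is the origin), a != b.
// offset: assumed distance of both waypoints from the door line [m].
DoorPoints doorWaypoints(const P2& a, const P2& b, double offset) {
    const P2 mid{(a.x + b.x) / 2.0, (a.y + b.y) / 2.0};
    const double dx = b.x - a.x;
    const double dy = b.y - a.y;
    const double len = std::hypot(dx, dy);
    P2 n{-dy / len, dx / len};  // unit normal of the door line
    // Make the normal point from the door toward PICO.
    if (n.x * mid.x + n.y * mid.y > 0.0) {
        n.x = -n.x;
        n.y = -n.y;
    }
    return DoorPoints{P2{mid.x + offset * n.x, mid.y + offset * n.y},
                      P2{mid.x - offset * n.x, mid.y - offset * n.y}};
}
```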

Room fully explored

The room that the robot is currently in is marked as explored when three inward corners have been found in it. Three corners are required instead of four because the entrance to the room may not be in the middle of a wall but at one of its ends, in which case the room has only three inward corners.

Hospital fully explored

The hospital is marked as fully explored when the hallway endpoint is reached, has no more unexplored doors and all rooms are marked explored. Also all the doors in the rooms must be marked explored.

Hypotheses:

When mapping the room, the code maintains two hypotheses of its reality. The perceived map as described in perception is assumed to be spatially accurate. However, PICO is not able to detect and perceive nodes that are behind it. Furthermore, perception happens near continuously at 20 Hz. At that rate PICO is able to detect which newly found nodes correspond to nodes already stored in memory by checking the distance between the old and the new node. This comparison is less robust when PICO is moving, due to larger discrepancies between the old and the new position of a node. For this reason PICO also maintains an odometry-based hypothesis. New positions of the nodes are predicted based on the odometry signal and then compared with the perceived nodes to determine which perceived node positions correspond to those in memory. Based on this, the actual translation and rotation can be determined from three corresponding nodes using the following formula. These nodes need to be in front of PICO to ensure that they are not false positives. If fewer than three nodes are perceived, the perception hypothesis is assumed to be the actual room layout.

[math]\displaystyle{ \begin{bmatrix} T \end{bmatrix} = \begin{bmatrix} x_{1,t-\Delta t} & y_{1,t-\Delta t} & 1 \\ x_{2,t-\Delta t} & y_{2,t-\Delta t} & 1 \\ x_{3,t-\Delta t} & y_{3,t-\Delta t} & 1 \\ \end{bmatrix}^{-1} \cdot \begin{bmatrix} x_{1,t} & y_{1,t} & 1 \\ x_{2,t} & y_{2,t} & 1 \\ x_{3,t} & y_{3,t} & 1 \\ \end{bmatrix} }[/math]

Here T is the transformation matrix. The first matrix consists of the x and y coordinates at the previous time step; the second matrix contains the corresponding positions at the current time. The transformation matrix is then used to update all the room objects contained in our world model. For the matrix inversion we used the Eigen library.
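The formula above can be sketched in self-contained C++ as follows. The project used Eigen for the inversion; this sketch uses a hand-written 3x3 inverse only to stay free of external dependencies. All names (`Mat3`, `Pos`, `nodeMatrix`, `estimateTransform`) are hypothetical.

```cpp
#include <array>
#include <cmath>

using Mat3 = std::array<std::array<double, 3>, 3>;

struct Pos { double x; double y; };

// Stack three node positions as rows [x y 1], as in the formula above.
Mat3 nodeMatrix(const Pos& p1, const Pos& p2, const Pos& p3) {
    const Pos pts[3] = {p1, p2, p3};
    Mat3 m{};
    for (int i = 0; i < 3; ++i) {
        m[i][0] = pts[i].x;
        m[i][1] = pts[i].y;
        m[i][2] = 1.0;
    }
    return m;
}

// Inverse via the adjugate. Returns false when the matrix is (nearly)
// singular, which happens when the three nodes are collinear.
bool invert3(const Mat3& m, Mat3& inv) {
    const double d = m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                   - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                   + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]);
    if (std::abs(d) < 1e-9) return false;
    inv[0][0] = (m[1][1] * m[2][2] - m[1][2] * m[2][1]) / d;
    inv[0][1] = (m[0][2] * m[2][1] - m[0][1] * m[2][2]) / d;
    inv[0][2] = (m[0][1] * m[1][2] - m[0][2] * m[1][1]) / d;
    inv[1][0] = (m[1][2] * m[2][0] - m[1][0] * m[2][2]) / d;
    inv[1][1] = (m[0][0] * m[2][2] - m[0][2] * m[2][0]) / d;
    inv[1][2] = (m[0][2] * m[1][0] - m[0][0] * m[1][2]) / d;
    inv[2][0] = (m[1][0] * m[2][1] - m[1][1] * m[2][0]) / d;
    inv[2][1] = (m[0][1] * m[2][0] - m[0][0] * m[2][1]) / d;
    inv[2][2] = (m[0][0] * m[1][1] - m[0][1] * m[1][0]) / d;
    return true;
}

Mat3 multiply(const Mat3& a, const Mat3& b) {
    Mat3 c{};
    for (int i = 0; i < 3; ++i)
        for (int j = 0; j < 3; ++j)
            for (int k = 0; k < 3; ++k)
                c[i][j] += a[i][k] * b[k][j];
    return c;
}

// T = previous^-1 * current, with positions stored as row vectors.
bool estimateTransform(const Mat3& previous, const Mat3& current, Mat3& T) {
    Mat3 inv{};
    if (!invert3(previous, inv)) return false;
    T = multiply(inv, current);
    return true;
}
```

With this row-vector convention a pure translation (tx, ty) appears in the bottom row of T. The singularity check is the reason the three nodes must not lie on one line.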

Planning

Planning is a discrete set of "behavioral" states. Depending on the current conditions and results in the world model, planning switches between the appropriate states to complete the Hospital room challenge. Planning is first done at the geometric level, to structure the task in the way that best suits the challenge.

Planning at geometric level:

- Initialize the process.

- Explore the hallway to detect doors.

- When a new door is detected, enter the door and create a room.

- Scan the room for convex corners (room corner) and concave corners (door).

- Scan all the rooms.

- Go to the parking position.

- Create the final map.

- Use the high-level hint to locate target room and stop at the object.

Once planning at the geometric level is done, the tasks are broken down into states. Each state has its own conditions to check in the world model, performs its defined activity and updates the world model. A state machine (File:HRC planning final.pdf) assigns each task to one state, so that the states can be reused according to the state logic. No state repeats a task performed in another state, and the states are linked such that the flow from one state to the next is robust and smooth.

Planning at implementation (logic) level:

- Initialize: Resets the IOs, the planning state and the drive state to their initial values. It creates the current room as Room 0, which is the hallway, based on the hypothesis that the robot starts in the hallway. Before moving, it saves the current position of PICO as its starting position in the world model. Once the initial position is saved, the state is switched to Explore_Hallway.

- Explore_Hallway: Whenever PICO is in the hallway, the current_room variable in the world model is set to 0, so that the nodes and doors detected in the hallway are stored with the hallway ID. In the hallway, PICO checks whether a door has been detected and whether its door IN is still unexplored. If both conditions are satisfied, the state is set to Go_To_Room. If no door is detected, PICO drives forward towards the hallway point (the end point of the hallway, stored in the world model by monitoring) while scanning for doors at a 20 Hz scan rate. This process is repeated until PICO has found all the doors.

- Go_To_Room: (Note: two variables hold the exploration status of a door, namely Door_IN and Door_OUT. Planning uses them to make decisions while traversing doors.) Go_To_Room is the state in which the target points are created for travelling through doors between the hallway and a room. Two target points are created with respect to the door, namely entry_point and exit_point. If both door IN and door OUT of the selected door ID are unexplored, the entry point of the door is set as the target and the drive state is set to driving. The current position of PICO is compared with the target point, and when it is within a close tolerance, door IN is set to explored and the target is moved to the exit point. PICO is driven to the exit point and a new object of the Room class is created in the world model. The state is then switched to Explore_Room. One more crucial function handled by this state is Invert_coordinates. This function inverts the coordinates (by multiplying the X and Y coordinates by -1) of the room that PICO is in when it leaves that room. This case is recognized by door_IN being explored and door_OUT being unexplored.

- Explore_Room: The Explore_Room state scans the room to find evidence that validates the hypothesis that the room exists (i.e. at least 3 valid convex corners). This is done by checking the Room_Explored variable in the world model, which is set by monitoring. If the room is not explored, the room scan phase is initiated by setting the drive state to Room_scan, after which the Room_Explored variable is checked again. Once the room is explored, if a new door is detected (i.e. Door_IN and Door_OUT of the detected door are unexplored), PICO goes through that door via the Go_To_Room sequence. If no new door is detected, PICO returns from the room to the hallway via the Go_To_Room sequence. The Explore_Room state thus takes care of the room exploration and of saving the doors and corners with respect to the room ID, as well as the number of doors to pass to reach the hallway (for the high-level hint).

- Check_Explored: The Check_Explored state is evaluated each time PICO is in the hallway. Its sole purpose is to monitor whether all the rooms are explored (i.e. door_IN and door_OUT are explored for all the doors in the hallway). Once all rooms are explored, the Parking state is set.

- Parking: Once all rooms are explored, the parking routine is executed. PICO is parked backwards via a driving sequence, by setting the drive state to park. Once PICO is parked, the Parked variable in the world model is set and PICO speaks out "I am parked". The final map of the hospital is saved in the HospitalRoom.json file. This JSON file contains the rooms, doors and nodes along with their coordinates. A visual map is created using OpenCV.

- Object_Detection: Object detection is a special case that uses the high-level hint to locate the target room, which contains the object to be found. To identify the target room ID, the maximum number of doors needed to reach a room from the hallway is determined. The Go_To_Door sequence is executed with the target room index. Once PICO reaches the target room, the mapped reality is compared with the current perception data. Once the object is detected, PICO stops in front of it and speaks out "I found the object".
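The state logic above can be sketched as a single transition function. This is a simplified illustration, not the project's state machine (see File:HRC planning final.pdf for that): the `PlanInputs` flags are hypothetical stand-ins for values read from the world model, and the transition from Parking to Object_Detection is assumed from the order of the geometric-level plan.

```cpp
enum class PlanState {
    Initialize, ExploreHallway, GoToRoom, ExploreRoom,
    CheckExplored, Parking, ObjectDetection
};

// Hypothetical flags; in the real program these would come from the world model.
struct PlanInputs {
    bool newDoorDetected;   // a door with Door_IN and Door_OUT unexplored is known
    bool doorPassed;        // the exit point of the selected door has been reached
    bool inHallway;         // current_room == 0
    bool roomExplored;      // Room_Explored set by monitoring
    bool allRoomsExplored;
    bool parked;
};

PlanState nextState(PlanState state, const PlanInputs& in) {
    switch (state) {
    case PlanState::Initialize:
        return PlanState::ExploreHallway;
    case PlanState::ExploreHallway:
        return in.newDoorDetected ? PlanState::GoToRoom : PlanState::CheckExplored;
    case PlanState::CheckExplored:
        return in.allRoomsExplored ? PlanState::Parking : PlanState::ExploreHallway;
    case PlanState::GoToRoom:
        if (!in.doorPassed) return PlanState::GoToRoom;
        return in.inHallway ? PlanState::ExploreHallway : PlanState::ExploreRoom;
    case PlanState::ExploreRoom:
        // Leave through a new door or back to the hallway; both use Go_To_Room.
        return in.roomExplored ? PlanState::GoToRoom : PlanState::ExploreRoom;
    case PlanState::Parking:
        return in.parked ? PlanState::ObjectDetection : PlanState::Parking;
    case PlanState::ObjectDetection:
        return PlanState::ObjectDetection;
    }
    return state;
}
```

Keeping the transitions in one pure function makes them easy to test without the robot, since the function only depends on its two arguments.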

JSON Parsing: