Embedded Motion Control 2017 Group 2: Difference between revisions

| (404 intermediate revisions by 6 users not shown) | |||

| Line 1: | Line 1: | ||

This Wiki describes the design and implementation of software which lets a robot autonomously solve a maze. The robot used during the project is called PICO. PICO is a driving robot which has a Laser Range Finder (LRF) sensor which can make a 2D scan of the world. It has a holonomic base, which grants complete driving freedom. This base is equipped with encoders that provide odometry measurements, handily transformed to displacement measurements of the x, y and alpha coordinates with respect to its starting position. Unfortunately these measurements are prone to errors, which show especially when accumulated over time. These sensors give PICO very basic human-like capabilities; the LRF can be compared to the eyes of a human and the odometry to the human sense of [https://en.wikipedia.org/wiki/Proprioception proprioception]. The only thing that PICO is missing is the equivalent to a brain; which exactly what needs to be developed in this course. PICO also has a computer on board which runs Linux 14.04. The software is programmed using the program language is C++ and managed using GitLab. | |||

We have chosen the rather ambitious goal of actually creating an intelligent being - something remotely resembling a human - instead of just following a wall, since we found it both very interesting and challenging. | |||

Within the project there are two main challenges: the corridor challenge and the maze challenge. The corridor challenge is a first test, in which the robot needs to drive through and turn out of an exit on either side of a corridor, for which to have the basic capabilities it needs later have to already be developed. The maze challenge is the final test, where PICO has to find its way out of an unknown maze, fully autonomously. | |||

= Group Members = | = Group Members = | ||

| Line 52: | Line 56: | ||

</table> | </table> | ||

<!-- | |||

= Minutes = | = Minutes = | ||

Minutes of team meetings are available here: [http://cstwiki.wtb.tue.nl/index.php?title=Embedded_Motion_Control_2017_Group_2_Minutes] | Minutes of team meetings are available here: [http://cstwiki.wtb.tue.nl/index.php?title=Embedded_Motion_Control_2017_Group_2_Minutes] | ||

--> | |||

= Initial design = | = Initial design = | ||

Our [[:File:initial-design-document.pdf|initial design]] shows the basic strategy for solving the maze challenge. It contains roughly the following. | |||

''' Introduction: ''' The goal of this project is to autonomously | ''' Introduction: ''' The goal of this project is to autonomously get out of an unknown maze with the PICO robot, as fast as possible. The robot has sensors, | ||

actuators and a computer on board to navigate through the maze. The computer runs on Linux 14.04 and the | actuators and a computer on board to navigate through the maze. The computer runs on Linux 14.04 and the programming | ||

language used for the software is C++. In this section the initial design is discussed, which consist of the requirements, | language used for the software is C++. In this section the initial design is discussed, which consist of the requirements, | ||

functions, components, specifications and interfaces. This design will be used to build structured software and define required interfaces. | functions, components, specifications and interfaces. This design will be used to build structured software and define required interfaces. | ||

'''Requirements: ''' To solve the maze problem the following are required | '''Requirements: ''' To solve the maze problem the following points are required | ||

*Finding the exit | *Finding the exit | ||

*Finding and opening the door | *Finding and opening the door | ||

| Line 73: | Line 78: | ||

*All tasks must be executed autonomously | *All tasks must be executed autonomously | ||

*The system should work on any maze | *The system should work on any maze | ||

*The robot | *The robot must not get trapped in loops | ||

'''Functions: ''' The functions which have to be implemented are subdivided into the different contexts. Motion functions describe how | '''Functions: ''' The functions which have to be implemented are subdivided into the different contexts. Motion functions describe how | ||

| Line 96: | Line 101: | ||

*Finding the exit ''Once a door has been found and opened the task is to go through the door and finish the challenge.'' | *Finding the exit ''Once a door has been found and opened the task is to go through the door and finish the challenge.'' | ||

= | === Initial software architecture === | ||

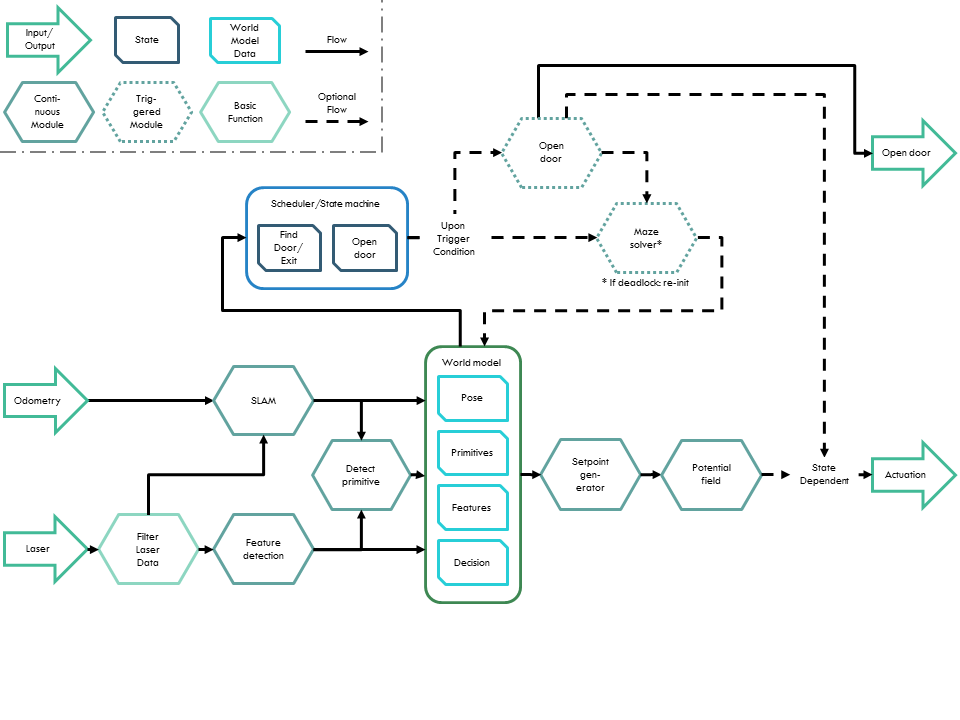

The initial design can be put into a diagram, which is shown in | The initial design can be put into a diagram, which is shown in the Figure 1. Below it, the initial design behind modules that were changed later is justified. Updated versions and unchanged modules are explained in the final software sections and justification for changing the modules especially is given in [[Embedded_Motion_Control_2017_Group_2#Main_loop|the architecture section]]. | ||

[[File:software-architecture-pre-corridor.png|thumb|upright=4|center|Figure 1 Software architecture before corridor challenge]] | |||

'''SLAM''' | |||

The original Simultaneous Localization And Mapping (SLAM) algorithm was designed to use the raw odometry and laser data and process this into world frame robot pose and, crucially, world frame features. The world frame is defined as the current position and rotation (called a ''pose'') of PICO with respect to the initial pose of PICO. Features are defined as re-observable landmarks, in our case corners of walls, and are saved as locations with respect to the initial pose of PICO. A possible method of SLAM is the well known | |||

[https://en.wikipedia.org/wiki/EKF_SLAM Extended Kalman Filter (EKF) SLAM], but many different types of SLAM exist. | |||

''After evaluating multiple SLAM algorithms, it had been decided that the aforementioned EKF SLAM fits bests with the posed problem. The reason for this choice is that it works well in circumstances where there are clear landmarks; a maze with with many right-angled corners definitely belongs to this class. Another benefit to this method is that these landmarks could be readily used in the primitive detection. Lastly, EKF SLAM is known to be effective for problems of moderate size, the data size will not become huge and slow to process for a few hundred landmarks.'' | |||

'''World model''' | |||

In the initial design, the world model was meant to be a separate entity - a container of data structures, which main task was to ensure that all other modules could retrieve the latest data from a single place whenever it was available, making sure that no two modules operated on different data. | |||

'''Driving''' | |||

In the driving skill a driving strategy is to be implemented which is able to avoid obstacles while driving in a set direction, taken from the maze solver. Avoiding obstacles means that laser data is required to measure distances to objects in the world. | |||

''For the driving skill the best method was chosen to be a [[Embedded_Motion_Control_2017_Group_2#Potential field| potential field]] method. Correct implementation ensures PICO will never hit a wall while driving towards a desired location. A downside of this method is that PICO might get stuck if there a wrong decision is made.'' | |||

= The corridor challenge = | |||

The strategy for the corridor challenge was to have basic feature detection and potential field working, as well as some form of set-point generation. Because the SLAM algorithm was not finished yet, feature detection was build as a separate module that used jumps in the laser data in order to detect exits consisting of two corners in the corridor wall. This made set-point generation relatively easy; the set-point was set just after the first detected corner on the left or right of PICO. | |||

Using this strategy, PICO was able to complete the corridor challenge and ended up in fourth place. This could have been better, however, we were given time penalties since PICO scratched the wall twice. The reason that this happened was quickly found, and ended up being divided over the lower and upper part of the software. | |||

PICO was able to detect the exit from far back — while it was still far from it. Unfortunately, it immediately decided to drive towards this exit. This does not mean that the set-point was placed incorrectly, as it was still placed in the middle of the corridor. But the set-point attracted PICO already quite heavily to the right. This is where the second cause comes into play, the potential-field. The potential-field was not tuned perfectly, but more importantly, the actual width of PICO was taken not into account. This meant that the repulsive forces did not tend to infinity as PICO neared the wall, but rather to a high, but apparently not high enough, number. The width of PICO was added afterwards and reduced touching the walls to nearly zero during the following tests. Another final way to prevent the rapid change of direction we experienced, came in the form of our set-point generator: we needed to smoothly switch between set-points and not just move directly towards the first opening that was observed. | |||

= Final software architecture = | |||

''This section explains the difference in architecture pre- and post-corridor challenge and the reasons why these differences were made. Subsections will provide more detail on the technique and implementation of each of the modules.'' | |||

After the corridor challenge, it became apparent that a re-evaluation of the software architecture was needed — it needed to be split up into more modules that focus on one thing in order to improve clarity and a few extra functionalities needed to be added for completeness. One main split is between the initialization and the main loop execution. | |||

=== Main loop === | |||

The final main loop data flow scheme is depicted in Figure 2. | |||

[[File:SchematicMainLoop.png|thumb|upright=4|center|Figure 2 Software architecture after corridor challenge]] | |||

[[ | Firstly, the [[Embedded_Motion_Control_2017_Group_2#SLAM|SLAM algorithm]] had to be changed since building our own EKF SLAM proved to be too time-consuming. A switch was made to a ROS functionality called Gmapping, which was used solely to obtain an accurate position. A new landmark detection was build in a module called [[Embedded_Motion_Control_2017_Group_2#Feature_detection|feature detection]], that uses a split-and-merge method to obtain wall-segments and wall-corners using the laser data. | ||

This laser data also had to be filtered, since tests on the actual robot showed that the LRF sensor produced ''shadow points'' that interfered with the current algorithms. More information on this filter can be found in the [[Embedded_Motion_Control_2017_Group_2#Shadow_point_filtering|shadow point filtering]] section. | |||

[ | Furthermore, from the evaluation of the corridor challenge it became apparent that the Driving skill had to be split into two parts aswell: one ensuring smooth [[Embedded_Motion_Control_2017_Group_2#Set-point_generation|setpoint generation]] that would increase speed and reduce potential wall-bumps and another that focused solely on the [[Embedded_Motion_Control_2017_Group_2#Potential_field|potential field]] functionality. | ||

Another big change in the architecture was the split into continuous and triggered modules and the scheduler that came with it. During the development of our software it became apparent that it was benificial to have some modules that acted continuously on the ''last known'' robot status (for instance generating setpoints based upon the last decision), but also to have some modules which would only be called when they were needed (like the maze solver which needs to only make a decision when the robot is close to a ''decision point''). More detail on the implementation of the Scheduler can be found | |||

[[Embedded_Motion_Control_2017_Group_2#Scheduler|here]]. | |||

A seperate [[Embedded_Motion_Control_2017_Group_2#Open_door|open door]] module was added in the architecture aswell, which was another big change. This is because it ''hijacked'' the loop execution; that is, all modules except SLAM stop their operation when the open door module is called, since it has its own actuation capability. This is admittedly bad software design, but the module was too difficult to consume into the main loop execution otherwise. | |||

Lastly, in order to implement the as before mentioned scheduled execution, the continuously executing setpoint generator needed to have access to the maze solver's last made decision. Therefore, this was included in the World Model. | |||

Please note that the ''World Model'' is only an architectural object; in the actual implementation of the architecture it was omitted and the needed information was simply asked ''only once'' from the relevant module and then stored as a variable in the main loop. As mentioned earlier, a world model was decided to be unnecessary and overly complicating. | |||

=== Initialization === | |||

Before the main loop can be executed, the robot and all the modules on it have to be initialized. A few special actions happen during this, of which an overview is depicted below. | |||

[[File:SchematicInitialization.png|thumb|upright=4|center|Figure 3 Initialization software architecture]] | |||

The ''position initially'' task is the most notable extra initialization step. The [[Embedded_Motion_Control_2017_Group_2#Maze_solver|maze solver]] relies on the directions from the [[Embedded_Motion_Control_2017_Group_2#Primitives|primitive detection]] being correct in the world frame; the provided NORTH, EAST, SOUTH and WEST with respect to the initial rotation of the robot have to actually represent these cardinal directions. This is especially important since the architecture requires that a new decision point can always be found by driving in one of the directions. This results in the requirement that the robot has to be initialized perpendicular to a wall. Since all corners are approximately right angled this results in the available directions at every decision point corresponding to the actual NORTH, EAST, SOUTH and WEST. | |||

Solving this problem is fairly straight forward using the [[Embedded_Motion_Control_2017_Group_2#Feature detection|feature detection]] module. Firstly, the angle between the first two features, which are always in the same section, is calculated. Note that this angle is the angle observed from the PICO robot, not an angle in some world frame — no world frame is defined yet at this point. This angle is then used to turn PICO such that is in alignment with it. This is repeated until the angle is within a threshold of approximately 3 degrees. This process is shown as in simulation in the animation below. | |||

After aligning with a wall the first decision point is made using the (0, 0) position and the possible actions set according to a laser measurement which measures open directions in the starting position. Note the initial decision point that is made at the end of the simulation above. Afterwards, the starting pose of the mapping is set to the aligned pose by calling ''SLAM initialize'' and lastly one execution of the last part of the main loop is done: the maze solver makes an initial decision, a set-point is generated from this decision and a velocity command is generated by the potential field. | |||

= | = Final software implementation = | ||

For the final software implementation, the following elements were made: an interface for debugging the code, a general toolset for supporting functionality and the implementation of all main skills. | |||

== | == Debugging interface == | ||

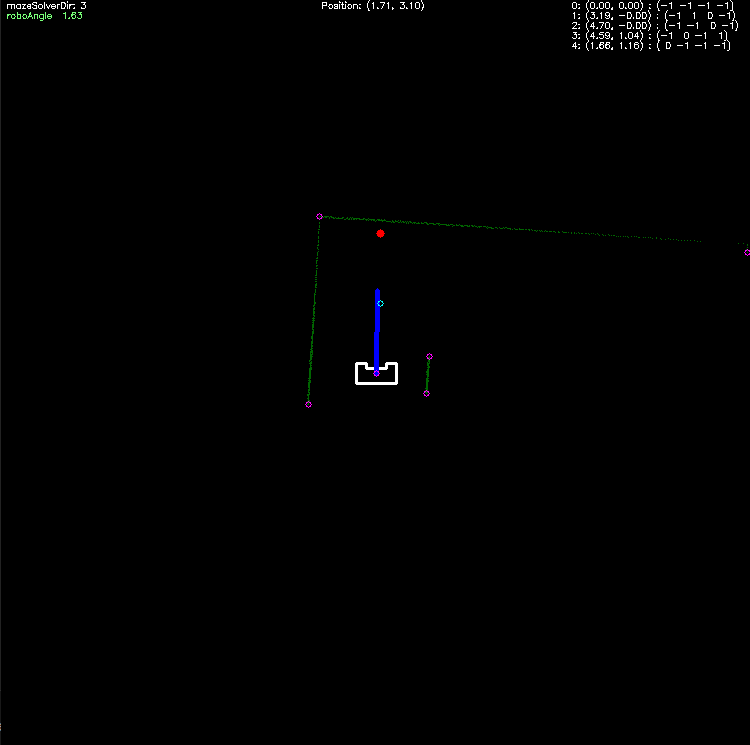

In order to efficiently get an idea of what the robot ''sees'' and what the status of its various modules is - information essential to debugging - a visualizer was made. It is based on the provided <code>emc-viz</code> visualizer, which uses [http://opencv.org/ OpenCV]. | |||

It provides visualization for the following data: | |||

* robot dimensions | |||

* (filtered) laser data | |||

* generated setpoint | |||

* potential field total force vector (blue line) | |||

* highlighted points (useful for debugging in general, but used for the landmarks primarily) | |||

* currently detected primitive (also called decision point) position (blue dot) | |||

* maze solver internal decision point memory | |||

* current direction the maze solver wants to travel towards | |||

* the current angle of the robot and whether it is within the bounds wherein new primitives are being detected (red/green text) | |||

* the current position of the robot in world frame | |||

A snapshot of the visualizer showing all this data is provided below. | |||

= | [[File:visualizer-snapshot.png|thumb|upright=4|center|Figure 5 Snapshot of the visualizer in action]] | ||

= | == General toolset == | ||

A distinction is made between skills, which are divided in classes, and a toolset function, which implements supporting functionality. These functions help cope with the non-ideality of the real-world, for example, in the ideal situation the laser data from PICO is noise free and its odometry data starts at 0 every time the program is executed. Violations of such situations have to be handled, and preferably only at one point in the code; this provides clarity and, most importantly, negates the situation where two modules would operate on different sets of data, while they should not. | |||

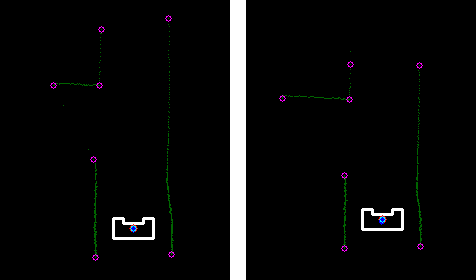

The most notable function in the toolset is the ''' Shadow point filtering'''. | |||

Using the Laser Range Finder in practise raises a problem when scanning an ending wall: shadow points appear. They are ghost measurements, placed at an arbitrary distance behind the wall, but always at exactly the angle to the ending, and are caused by the way the sensor works (detecting reflected laser points). An example of such shadow points is depicted in the leftmost figure below. Since they negatively effect the [[Embedded_Motion_Control_2017_Group_2#Feature detection|Feature Detection]] and [[Embedded_Motion_Control_2017_Group_2#Potential_field|Potential Field]], they need to be filtered out. | |||

= | [[File:shadowfilt.png|thumb|upright=4|center|Figure 6 Shadow points and them filtered out]] | ||

The Shadow Point Filter uses one attribute of these points to discern and detect them: they commonly don't have many other measured points around them. It thus looks before and after the point in the laser data array for N steps, and checks whether less than M of these points are within a radius r of it. Is this the case, then the point is either removed or set to distance 100. Thus, we keep track of two different arrays, which each have their own usefulness for different applications. | |||

The filter with parameters {N, M, r} = {10, 5, 0.1} applied on the aforementioned figure, is shown in rightmost figure above. | |||

As can be seen, the filter does remove some ''good'' points, but since they're far away this has no influence on the performance of the system — it even makes it more robust. | |||

== Main skill set == | == Main skill set == | ||

The main skill set implements, as the name suggests, skill functions defined in the architecture. | The main skill set implements, as the name suggests, skill functions defined in the architecture. | ||

=== SLAM === | === SLAM === | ||

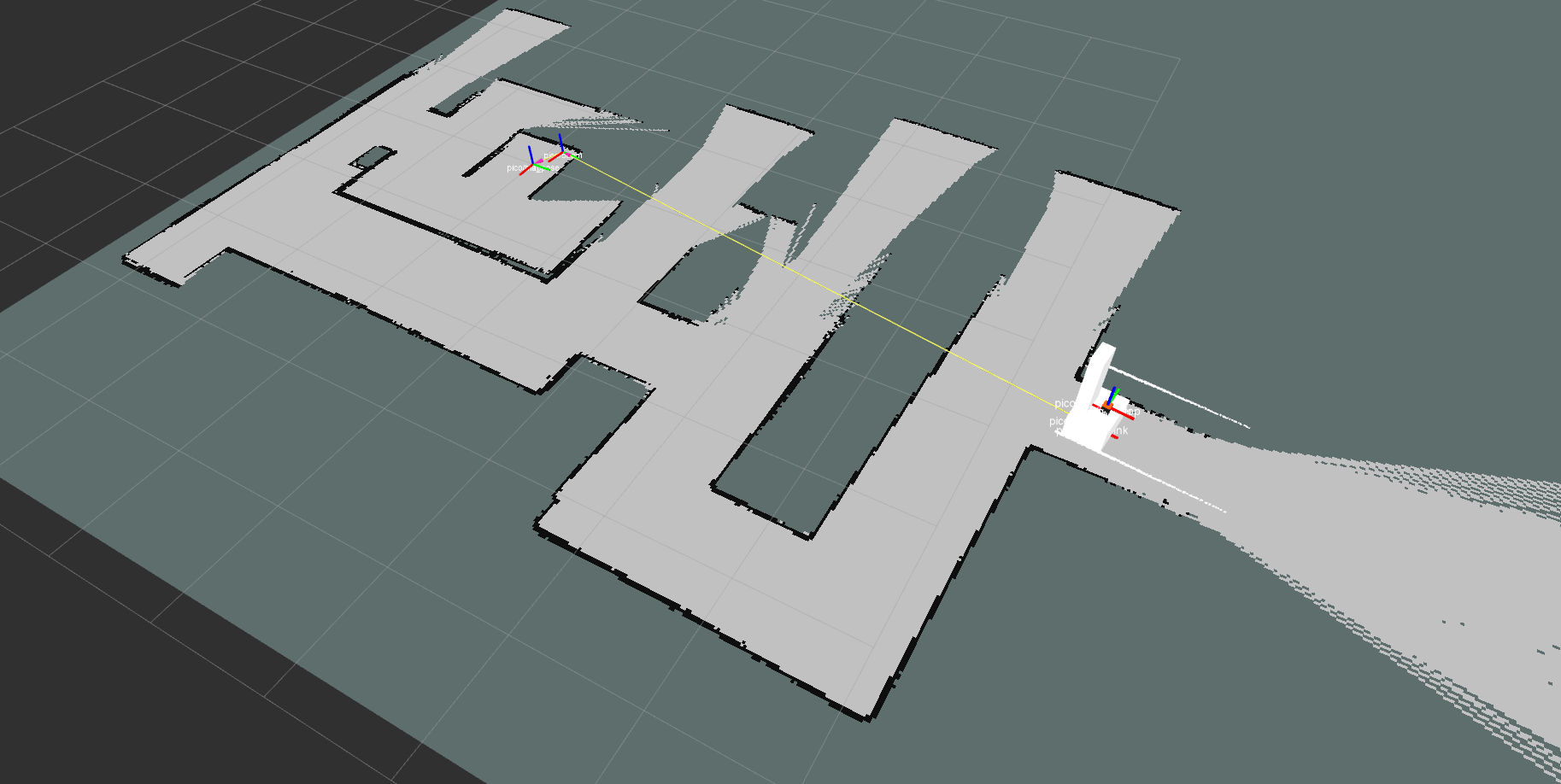

Because of the chosen maze-solving algorithm, a global position is required. The odometry of PICO is inaccurate and therefore useless for determining the position PICO in the long term. The laser range finder, however, is much more accurate and thus using this data simultaneously improves the accuracy of the estimated position. Because the environment is unknown, an algorithm called Simultaneous Localisation And Mapping (SLAM) is needed. There are many variations of SLAM algorithms available, however, choosing the correct algorithm, implementing it and tuning it can take a lot of effort. Therefore a ROS node called Gmapping[http://openslam.org/gmapping][http://wiki.ros.org/gmapping] is used. Gmapping is an implementation of a highly efficient Rao-Blackwellized particle filter. | |||

Gmapping is used solely to provide PICO with a more accurate global position than provided by the odometry. The created map is not used for detection of primitives, therefore the accuracy of the map is irrelevant — only the accuracy of the position is relevant. To improve the accuracy of the position, two parameters are tuned. The update interval of the mapping is shortened. This way the occupancy grid is updated at a higher frequency. The localisation update is based on rotation and translation of the robot. These update thresholds are lowered together with map update interval. To compensate for the relative bad odometry, the localisation thresholds are lowered even more. Time updates of the location are disable. This prevents jumps in the position, when PICO is not driving. Tuning is done on the straightness of the map and reducing the odometry compensation in the simulator, which should be nihil. | |||

Gmapping cooperates with TF[http://wiki.ros.org/tf]. TF handles all the different coordinate frames and their transformations. TF also allows us to transform points in a specific frame to an other frame. | |||

[[File:gmapping.png|thumb|upright=4|center|Figure 7 Map produced by Gmapping including TF frames]] | |||

=== Feature detection === | === Feature detection === | ||

For determining the | [[File:cornerDetection2.png|thumb|upright=2|right|Figure 8 Faded green: wall, red: corner feature, blue: end/beginning node]] | ||

For determining the type and location of a primitive, features called segments and corners are required. Corner are defined as locations where two connected walls meet, while segments are the features which are not corners; they describe beginning and end points of wall segments. In Figure 8 such features are shown, corners in red and segments in blue. A small adaptation of the laser data is needed in order to make the algorithm more robust; out-of-range data is set equal to 100 indicating an open section. In these out-of-range sections no features are set. | |||

The strategy to find segment features is based on the difference between two consecutive laser points, if the difference is greater than a threshold value (set at . | The strategy to find segment features is based on the difference between two consecutive laser points, if the difference is greater than a threshold value (set at 0.3 meter) the end of a wall segment is detected and both points are set as segment features. For the corner features a split and merge algorithm is applied to every wall segment, defined as the set of features spanning from an even segment feature to the next segment feature, i.e. from 0 to 1 and 2 to 3). | ||

The split and merge algorithm works in the following sequence: | The split and merge algorithm works in the following sequence: | ||

| Line 171: | Line 232: | ||

#::goto 2</pre> | #::goto 2</pre> | ||

# done | # done | ||

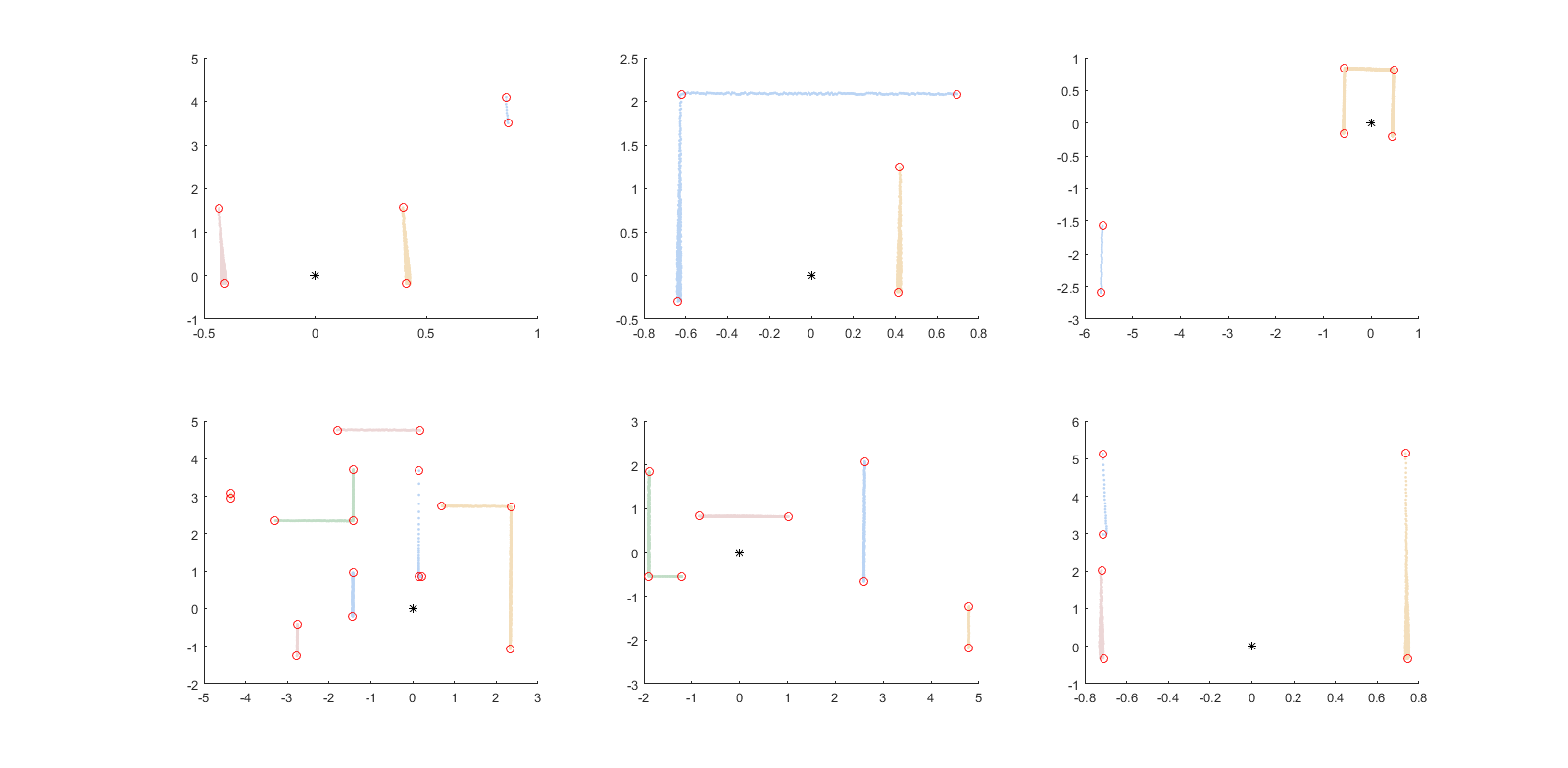

[[File:cornerDetection.png|thumb|upright=4|center|Figure 9 Some examples of feature detection using split and merge strategy. Note that segments are indicated by colour.]] | |||

The split and merge algorithm is also shown in | The split and merge algorithm is also shown in Figure 10, with some resulting examples in Figure 9. This split and merge algorithm has been tested in MATLAB first of which executable code is provided in [[File:split-and-merge-matlab.zip]]. As a comparison to the MATLAB code also a [https://gitlab.com/snippets/1665713 snippet] of the class implementing the algorithm is posted. For completeness a simple animation of the algorithm is shown in Figure 10. | ||

[[File: | [[File:Split-and-merge.gif|thumb|upright=4|center|Figure 10 Animation of the split and merge procedure]] | ||

'''Dependencies: ''' | '''Dependencies: ''' | ||

Feature detection uses laser data as its input, therefore the quality of the output depends on the accuracy of the data. If accurate data is supplied the parameters defining the strictness of the detection (i.e. the maximum allowed distance between calculated and measured lines) can be increased. This however comes with | Feature detection uses laser data as its input, therefore the quality of the output depends on the accuracy of the data. If accurate data is supplied the parameters defining the strictness of the detection (i.e. the maximum allowed distance between calculated and measured lines) can be increased. This however comes with the major drawback that shadow points result in walls being split into multiple sections, as the distance of the laser makes a jump upon encountering shadow points. To prevent this behaviour, the aforementioned shadow-point filter is used. | ||

Another possible cause of error is how out of range data is handled, if this data is discarded there | Another possible cause of error is how out of range data is handled, if this data is discarded there are circumstances where two sections are joint together. This happens when the range before an out of range section is equal to the range after an out of range section. A result is that two sections are joint and therefore primitive detection will give a wrong output. | ||

'''Trade offs: ''' | '''Trade offs: ''' | ||

As mentioned in the Dependencies, the shadow point filter plays a large role in the quality of the features. In this a trade-off has to be made between filtering bad data and removing relevant information. A strict shadow point filter takes out shadow points but also leads to far-away, correct laser data being removed as well (as one laser increment leads to large range difference. | As mentioned in the Dependencies, the shadow point filter plays a large role in the quality of the features. In this a trade-off has to be made between filtering bad data and removing relevant information. A strict shadow point filter takes out shadow points but also leads to far-away, correct laser data being removed as well (as one laser increment leads to large range difference). | ||

=== | === Primitives=== | ||

A maze can be split up into primitives; parts of the maze where the robot can make a significant change in driving direction. The four considered directions are north, east, south and west. As mentioned earlier, these directions are with respect to the global map. In addition, each direction can contain a possible door. To determine what kind of primitive a certain part of the maze is, features and sections are used, as described in the previous section. The locations of features are relative to the robot, so within the body frame. To check if the robot can drive in one of the four directions, the options of these directions are firstly determined in this body frame. These body directions are: straight, back, right and left. | |||

'''Primitive detection''' | |||

Detection of primitives can be done using different methods. The two methods that have been examined are, firstly, to make a correlation check between the observed laser data and the laser data corresponding to the preprogrammed possible primitives and, secondly, to use the features and sections. As noted, to make the correlation work it is important that for every possible primitive a laser pattern is defined. On the contrary, the feature and section detection had been already made and is working robustly. Because of these reasons it was chosen to explore the second option. | |||

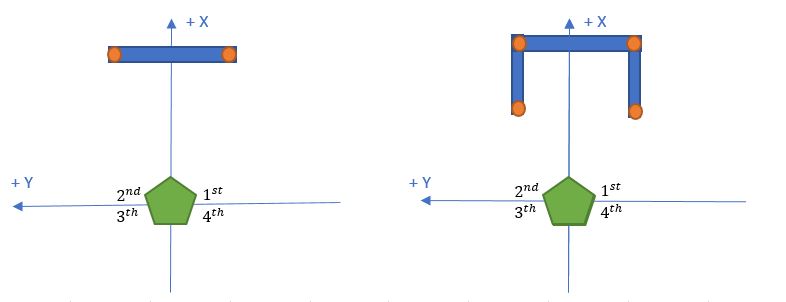

The local direction '''straight''' is only a driving direction if no section exists in front of the robot or if the section in front of the robot is more than 1.2 meters away. A section is in front of PICO when it has a feature in the first and second quadrant. For this section, there is also a check in place on whether the spotted sections represent a door, which is done by looking if the horizontal wall is less then 1.6 meter and if it contains two vertical walls next to it. In the figure below examples of a straight section and a straight section with a door are depicted. | |||

[[File:PrimStraight2.JPG|thumb|upright=5|center|Figure 11 Go straight]] | |||

'''left''' | Since this module can neglect the initial primitive (decision point), the local direction '''back''' is always a driving direction since the robot came from there; as mentioned before, the robot does not drive sideways or back. | ||

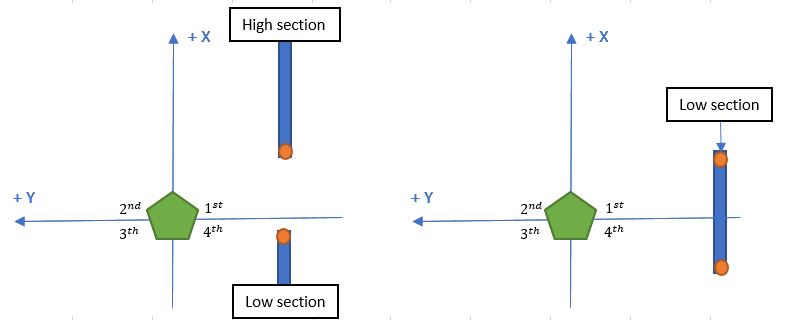

The local direction '''right''' is a driving direction if enough space is in between the two sections before and after or a right section is 1.2 meters away. To determine the space in between two sections, a low and high section need to found. The terms low and high refer to the number of the section which is found by feature detection. These sections are therefore chronologically ordered in counter-clockwise fashion. The low section is the closest section to PICO, having the first feature in the fourth quadrant (see image for definition). The high section is the next section starting in either the fourth or first quadrant, which is closest to PICO. In Figure 12 an example is shown of a high and low section with enough space in between them to be considered a decision point, also a right section which is far away is shown. | |||

[[File:PrimRight1.JPG|thumb|upright=5|center|Figure 12 Take a right turn]] | |||

The local direction '''left''' uses the same method as right, only now the features in the second and third quarter are used. | |||

Since all directions are determined locally but the maze solver requires global directions, the local directions are converted using the current rotation of PICO obtained from the SLAM module. | |||

'''Door detection right and left''' | |||

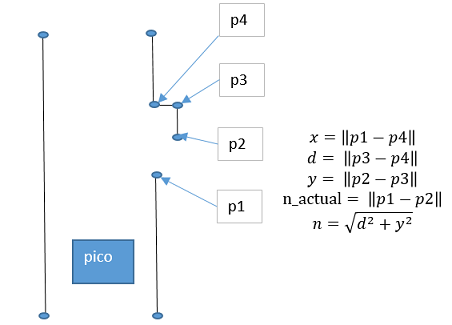

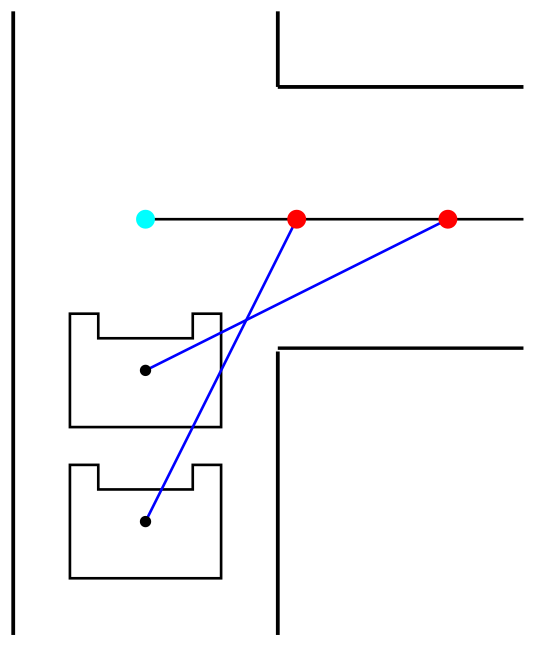

Door detection of doors in front of the robot has been explained in the straight direction section, however the maze can also have doors on the left and right of a corridor. Since the profile of a door is subject to strict constraints a distance based detection is implemented. Four consecutive features are sufficient to detect these doors. Using the definitions as stated in Figure 13. The algorithm used to detect the doors is as such: | |||

# x, y, d, n_actual, n are calculated which are lengths between nodes | |||

# It is checked if width of door matches definition | |||

#:if ( x>0.5 && x<1.5 ) goto 3 | |||

#:else goto 5 | |||

# It is checked if depth of door matches definition, within a treshold of 0.12 | |||

#:if ( d>(0.3-threshold) && d<(0.3 + thresh) ) goto 4 | |||

#:else goto 5 | |||

# Check if the expected distance between p1 and p2 is equal with the actual distance between p1 and p2. Again within a margin. | |||

#:if abs(n-n_actual) < 0.1*n_actual | |||

#:: door is detected and a decisionpoint is placed in the middle of p4 and p1 | |||

# Increment feature | |||

[[File:doordetection.png|thumb|upright=5|center|Figure 13 Door detection left and right]] | |||

'''Decision point''' | |||

In addition to the global directions also the location of a primitive needs to be determined because this is used by the maze solver and the set-point generator. The world frame location of a primitive together with its global directions is called a Decision Point (DP). Please recall that in the PICO frame, x is defined to be positive pointing forward and y is defined to be positive pointing left. The world frame x location from the robot to the DP must then exactly be in the middle of a right/left turn or 0.5 meters in front of a dead end or a door. The world frame y location from the robot to the DP is zero. To get the world frame x and y in the map the x and y location of the robot need to be added to it. To improve robustness, decision points can only be determined when the robot has a certain angle and an averaging filter is used to filter wrong decision points. If the robot differs ±0.3 rad from the driving direction then the primitives cannot be determined robustly any more and are then also filtered away. | |||

'''Simulation''' | |||

In the animation below, the robot drives through a simple maze. In the left corner is shown if the robot angle allows to search for new primitives. In the right corner is shown the output of the maze solver. When the robot drives through the maze it finds two decision points. The first decision point contains a possibility to go to right and left. Both possibilities are detected with a low and a high section. The second decision point contains a possibility to go right and is also detected with a low and high section. | |||

[[File:Left_right.gif|thumb|upright=5|center|Figure 14 Left and right primitive detection]] | |||

'''Trade off''' | |||

Using different filters on the primitive detection made the primitive detection in general a lot robuster but also had some negative effects on the detection. The negative effects were missing decision point because not all parameters of the filters were fully tuned and not all threshold on ranges which determine when searching for primitives is allowed were fully tuned. | |||

'''Conclusion''' | |||

The code for determining the primitives became bigger and bigger along the project. This due to fixing bugs and adding new cases. To make the code work without any bugs or missing cases, it needs a lot of time testing in simulation. | |||

=== Maze solver === | === Maze solver === | ||

The mazesolver is designed to make decisions at each primitive, it has to make decisions in such a way that it will find its way out the maze in preferably the shortest path. It also has to make sure that the robot does not get stuck in a loop. | |||

The | |||

There are multiple possible algorithms for designing a mazesolver. | |||

Since it is not know where the exit of the maze is strategies using a heuristic, like A* will not be considerd. A few options that we did consider are: | |||

'''Wall follower''' | |||

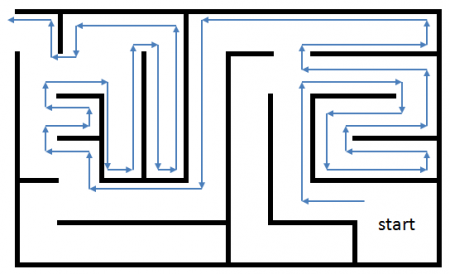

This algorithm is very simple it always follows the wall on one side | This algorithm is very simple it always follows the wall on one side of PICO, which has the disadvantage that there is a possibility for endless loops. An example of a maze explored by a wall-follower algorithm is shown in Figure 15. | ||

[[File:wallfollower.png|thumb|upright=10|center| | [[File:wallfollower.png|thumb|upright=10|center|Figure 15 Maze explored using a wall-follower]] | ||

'''Pledge algorithm''' | |||

This is an extension of the wall follower algorithm, | This is an extension of the wall follower algorithm. Next to following a wall, this algorithm also keeps a count of the sum of the turns to avoid obstacles. Using this count it can avoid getting stuck in loops and is therefore a viable option to solve the problem. | ||

'''Trémaux's algorithm/ Depth-first search''' | |||

The Trémaux's algorithm is an | The Trémaux's algorithm is an algorithm that remembers the decisions made on a crossing, it uses this information to prevent it form entering the same path twice and thus it should avoid endless loops. This algorithm will also be quicker compared to pledge as it has memory. A visualisation of Trémaux's algorithm is shown in Figure 16. | ||

The algorithm has the following steps: | The algorithm has the following steps: | ||

| Line 225: | Line 331: | ||

**If not, choose arbitrarily one of the remaining paths with the fewest number of marks (zero if possible, else one), follow that path, and mark it. | **If not, choose arbitrarily one of the remaining paths with the fewest number of marks (zero if possible, else one), follow that path, and mark it. | ||

Depth-first is a special class of the Trémaux algorithm, where the solver has a preferred order of choosing paths. Its name comes from the fact that this algorithm will always explore an entire branch of a tree before going to the next one. | |||

[[File:Tremaux.gif|thumb|upright=10|center|Figure 16 Trémaux's algorithm solving a maze]] | |||

[ | '''Breadth-first search''' | ||

With breadth first we will first check how many nodes there are connected to the current node and explore these in a fixed order by number, during each exploration the new connected nodes will be numbered incrementally. The biggest difference with Depth-first search is that at each node first all the options are explored before going one node deeper. | |||

An in depth explanation can be found in this [https://www.youtube.com/watch?v=ec0IJsiIuWk video], for the interested reader. | |||

The wall follower algorithm is not suitable for our application since the maze to be solved might have loops. The Pledge algorithm however might work, but is still a relatively dumb search method and will be slow for large mazes. Trémaux's algorithm/depth-first is already a bit smarter since it keeps track of the intersections it has been on. This requires some memory but not an entire map of the maze, since it is simply possible to follow wall back to the last intersection. Only the coordinates of each intersection with the explored options have to be remembered. This algorithm is better suited for our application than the breadth first algorithm since the breadth first would require the robot to drive back after each iteration while the depth-first only has to drive back on a dead end. Therefore it is decided to use the depth-first algorithm as the maze solver to be implemented. | |||

The wall follower algorithm is not suitable for our application since | |||

<u>'''Implementation'''</u> | |||

To implement the Depth-first algorithm a class is created to keep track of the nodes at which decisions where made and calculate the next direction to be explored. Such a node would contain its position in the world frame, and the options for each of the for directions. The for directions are NORTH, EAST, SOUTH and WEST with respect to the initial robot pose and are always thus absolute directions in the world frame. | |||

To implement the | |||

Such a node would contain its position in the world frame, and the options for each of the for directions. | |||

The for directions are | |||

The options for each direction are: | The options for each direction are: | ||

| Line 255: | Line 354: | ||

3 : there is an exit trough here. | 3 : there is an exit trough here. | ||

These were chosen in this way such that the | These were chosen in this way such that the maze solver just can pick the direction with the highest value. After choosing a direction it is set to -1 in the node, this ensures that the robot will not take the same direction the next time it comes at this node. If the robot finds a node with no options to explore it will return to the previous node and explore the next option of this node. If the robot encounters a node that is already in its list but is not a parent to the previous node it will turn around and it will mark the path in that node as explored, -1, this will prevent the robot form exploring the same path twice from different directions. | ||

'''Dependencies''' | |||

If for some reason the maze solver encounters a node which has only -1 options it will be in a deadlock since no direction is valid according to the algorithm. This situation could occur if a decision point was missed and PICO drives to an already explored node. Also having a decision point explored two times results in similar behaviour, this should however not occur as the function to call the maze solver should filter out only good decision points. If a deadlock occurs however, the best option is to reinitialise the software and start solving the maze as if it had not been explored before. | |||

=== Scheduler === | |||

To ensure the maze solver only makes decisons at actual decision points, a separate function to call the maze solver was created. This function checks if a new primitive really is a new primitive or that it rather is the previous primitive just shifted a bit, further it checks if we are close enough to the primitive to make a decision. Finally if there is a possible door detected this function will first call a function to check for a door and then call the maze solver with the outcome of this function. | |||

=== Open door === | |||

The door opening function simply checks the average laser distance in 3 zones left right and in front. After checking the distance it will ring the bell wait a few seconds and check again. If one of the distances has increased over a certain threshold the door is considered opened and the maze solver will decide to go through this door. During this routine other the main loop doesn't execute any other tasks. | |||

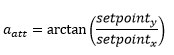

=== Set-point generation === | === Set-point generation === | ||

The decision taken by the mazesolver need to be transformed to driving. The first step is a setpoint. This setpoint is used by potential-field to sent velocity commands to the I/O. | |||

The setpoint generator places the setpoint on a straight line in the direction chosen by the maze solver. These direction are rotated relative to the initial pose. This is possible, because the maze is designed to be right-angled. Errors in the initial rotation of PICO relative to the corridors and deviations in placement of the decision points could cause problems. A correction of the setpoint based on the current laser would be an option. However the errors on the initial rotation and the locations of the decision points are small enough to be taken care of by the potential-field. | |||

To prevent PICO from touching the walls, when taking a turn, the setpoints are always placed on a predefined distance from the center of PICO. Therefore the input of the potential-field is bounded at a controllable level. The closer PICO is to the decision point the further sideways the setpoint is placed. This is implemented by constraining one coordinate to the coordinate of the decision point and the other coordinate is free to be calculated, so that the setpoint is at the desired distance. The constrained coordinate is depended of the chosen direction. This is shown in Figure 17. | |||

The setpoint generator provides the system with a global setpoint. This setpoint is afterwards transformed to a local setpoint. This is required, because the potential-field only uses local information. The setpoint is only a position. The rotation of the robot is handled by the potential-field. | |||

[[File:setpoint.png|thumb|upright=2|center|Figure 17 Smooth set-point transitioning when changing direction]] | |||

=== Potential field === | === Potential field === | ||

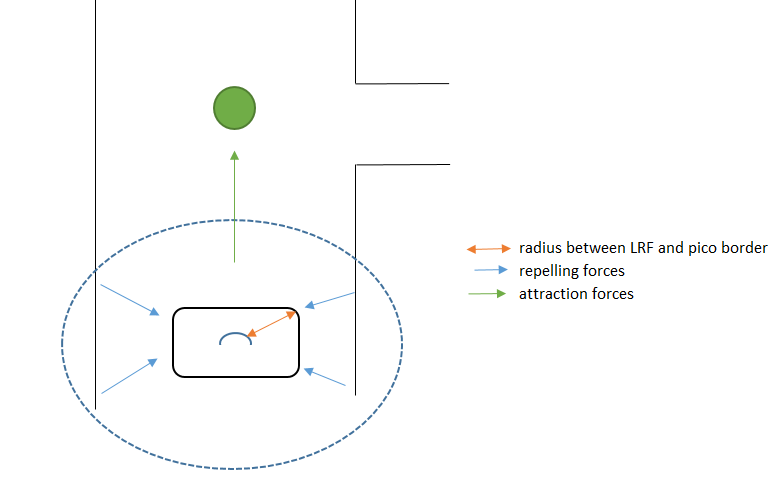

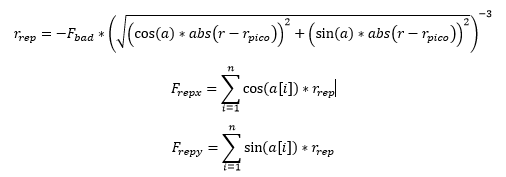

A potential-field algorithm is designed to make sure PICO never collides with walls and to drive trough the maze. A schematic of the repelling and attraction forces is made shown in Figure 18. The green dot is the desired position of the robot, which is the output of the setpoint-generator, the blue dotted circle is the range of the LRF. It is not require to use the full range of the LRF because avoiding collision is only relevant in close range to any walls. For this reason the range of the potential field is limited to 4 meters. A [https://gitlab.com/snippets/1665710 snippet] of the potential-field class is given to show how this is implemented in c++ as this simple class shows the structure of other classes as well. | |||

[[File:Potential-Field.png|thumb|upright=3|center|Figure 18 Potential field schematic]] | |||

''' Repelling forces ''' | |||

The repelling forces are calculated by the following formulas: | |||

[[File:Frep.png|center]] | |||

r_rep is the length of the repelling force vector. a Is the angle of the laser beam in rad, r is corresponding the measured range of the laser. The measured distance is then r subtracted by r_PICO the range between the borders of PICO and the LRF sensor. This is done to make sure the force goes to infinity when the robot is at collision with the wall. The laser data (a,r) is converted to(x,y), after this is done the length is calculated and this is taken to the power -3, the closer to the wall the bigger the force. This length is scaled with a factor F_bad, this factor had to be determined during tests. F_bad is tuned by placing the robot in a corridor while driving forward, if F_bad is too high PICO would vibrate true the corridor. If F_bad is too low than PICO would hit walls. | |||

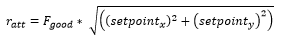

''' Attraction force ''' | |||

The attraction forces are calculated by a quadratic equation: | |||

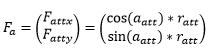

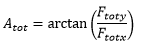

[[File:ratt1.png|center]] | |||

r_att is the length of the attraction force vector, setpoint_x,setpoint_y are coordinates in the body frame generator by the setpoint-generator, F_good is the tunable parameter during tests. A setpoint is set with the tuned F_bad parameter and F_good is increased until PICO would actually drive to the setpoint. | |||

[[File:aatt.png|center]] | |||

a_att is the angle of the vector in rad. | |||

[[File:Fa.png|center]] | |||

F_a is the attraction force vector. A quadratic equation is used to increase the attraction force for set points far away. The closer to the set point the lower the attraction force. | |||

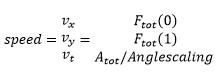

''' Speed calculation ''' | |||

Speed is calculated based on the repelling and attraction forces. First both vectors are added to obtain a total force: | |||

[[File:Ftot.png|center]] | |||

F_tot is the sum of the attraction and repelling forces. | |||

[[File:Atot.png|center]] | |||

A_tot is the angle between the x and y component of F_tot. These parameters are converted to speed parameters: | |||

[[File:speed.png|center]] | |||

The speed parameters vx,vy are limited on +-0.3m/s and vt on +-1.2rad/s because driving slower guaranteed avoiding walls at all times and made it easier for the primitive detection to detect decisionpoints. | |||

A animationis made to show what happends to Ftot and Atot, represented by the blue line. Whenever PICO is in the center of a corridor the repelling forces on both sides cancel each other out, so Ftot ~ Fatt. Whenever PICO moves close to a wall the repelling forces increase with a power of 3 because it is devided with a number smaller than 1. Close to walls Ftot~Frep. | |||

[[File:Potential-Field.gif|thumb|upright=3|center|Figure 19 Avoiding walls with potential field]] | |||

= Evaluation maze challenge = | |||

Numerous parts of the code were changed after the last test session and therefore only tested in simulation. The placement of decision points in open space was added after the last test session and another problem was the speed limit of PICO. The speed limit was reduced from 0.3 to 0.15, because this improved the primitive detection in simulation. This speed limit turned out to be too low, although it improved primitive detection. Due to the friction, PICO was barely turning and had especially much effort with driving forward and overcoming static friction, it needed a little love tap sometimes. Besides the driving problem, the other parts of the code were working correctly. After opening the door one decision point was missed, however, and this caused PICO to get in an undetected deadlock state. | |||

[[File:Maze_solving.gif|thumb|upright=5|center|Figure 20 Solving mazes!]] | |||

'''Solving the maze in simulation''' | |||

In simulation, however, the mazes of this and last year were solved by the code version that was used during the maze challenge. A short animation is shown in Figure 20 where all parts of our code are working together. Note the visualizer features, highlighting each element: the red dot is the generated set-point, the blue line is the total force of the potential field, the purple dots are the detected features and the blue dots are the decision points placed by the primitive detection. In the upper right corner the decision points with the directions are shown. The Upper left corner shows the chosen direction of the maze-solver and the current rotation of the robot. Moreover, when the angle of PICO with respect to the starting position shows in red, the robot is in an incorrect rotation and the primitive detection is temporarily disabled. This is implemented for angles outside a threshold of 0.3 rad around the 90 degree corners, since the primitive detection often failed in these orientations. | |||

Full simulation using the maze from [https://www.youtube.com/watch?v=6uX8d2biI_A this] and [https://www.youtube.com/watch?v=buuI6-kGCbk last] year again shows that the implementation works in simulation. | |||

= Recommendation = | |||

In addition to the global directions also the location of a primitive needs to be determined because this is used by the maze solver and the set-point generator. The world frame location of a primitive together with its global directions is called a Decision Point (DP). Please recall that in the PICO frame, x is defined to be positive pointing forward and y is defined to be positive pointing left. The world frame x location from the robot to the DP must then exactly be in the middle of a right/left turn or 0.5 meters in front of a dead end or a door. The world frame y location from the robot to the DP is zero. To get the world frame x and y in the map the x and y location of the robot need to be added to it. To improve robustness, decision points can only be determined when the robot has a certain angle and an averaging filter is used to filter wrong decision points. If the robot differs ±0.3 rad from the driving direction then the primitives cannot be determined robustly any more and are then also filtered away. | |||

= Files = | = Files = | ||

[[:File:initial-design-document.pdf|initial-design-document.pdf]] | |||

[[:File:initial-design-presentation.pdf|initial-design-presentation.pdf]] | |||

[[:File:Final-design-presentation.pdf|final-design-presentation.pdf]] | |||

= Code snippets = | |||

[https://gitlab.com/snippets/1665713 Landmarkdetection.cpp] | |||

[https://gitlab.com/snippets/1665712 Landmarkdetection.h] | |||

[https://gitlab.com/snippets/1665710 Potential-field.cpp] | |||

[https://gitlab.com/snippets/1665711 Potential-field.h] | |||

[https://gitlab.com/snippets/1665714 main loop] | |||

Latest revision as of 17:34, 17 June 2018

This Wiki describes the design and implementation of software which lets a robot autonomously solve a maze. The robot used during the project is called PICO. PICO is a driving robot which has a Laser Range Finder (LRF) sensor which can make a 2D scan of the world. It has a holonomic base, which grants complete driving freedom. This base is equipped with encoders that provide odometry measurements, handily transformed to displacement measurements of the x, y and alpha coordinates with respect to its starting position. Unfortunately these measurements are prone to errors, which show especially when accumulated over time. These sensors give PICO very basic human-like capabilities; the LRF can be compared to the eyes of a human and the odometry to the human sense of proprioception. The only thing that PICO is missing is the equivalent to a brain; which exactly what needs to be developed in this course. PICO also has a computer on board which runs Linux 14.04. The software is programmed using the program language is C++ and managed using GitLab.

We have chosen the rather ambitious goal of actually creating an intelligent being - something remotely resembling a human - instead of just following a wall, since we found it both very interesting and challenging.

Within the project there are two main challenges: the corridor challenge and the maze challenge. The corridor challenge is a first test, in which the robot needs to drive through and turn out of an exit on either side of a corridor, for which to have the basic capabilities it needs later have to already be developed. The maze challenge is the final test, where PICO has to find its way out of an unknown maze, fully autonomously.

Group Members

| Name: | Report name: | Student id: |

| Matthijs van der Burgh | M.F.B. van der Burgh | 0771381 |

| Joep Linssen | J.M.H.G.H. Linssen | 0815502 |

| Sil Schouten | S. Schouten | 0821521 |

| Daniël Pijnenborg | D.H.G. Pijnenborg | 0821583 |

| Rens Slenders | R. Slenders | 1028611 |

| Joeri Roelofs | J. Roelofs | 1029491 |

| Yanick Douven | Y.G.M. Douven | Tutor |

Initial design

Our initial design shows the basic strategy for solving the maze challenge. It contains roughly the following.

Introduction: The goal of this project is to autonomously get out of an unknown maze with the PICO robot, as fast as possible. The robot has sensors, actuators and a computer on board to navigate through the maze. The computer runs on Linux 14.04 and the programming language used for the software is C++. In this section the initial design is discussed, which consist of the requirements, functions, components, specifications and interfaces. This design will be used to build structured software and define required interfaces.

Requirements: To solve the maze problem the following points are required

- Finding the exit

- Finding and opening the door

- Avoiding obstacles at all times

- The maze should be solved within 5 min

- All tasks must be executed autonomously

- The system should work on any maze

- The robot must not get trapped in loops

Functions: The functions which have to be implemented are subdivided into the different contexts. Motion functions describe how the software gets input from and supplies output to PICO. Skill functions describe how different skills are executed in the software. Lastly Task functions implement task scheduling.

The motion functions have been determined as:

- Basic actuation Give PICO the ability to move around and rotate

- Getting information Receive information from LRF and odometry sensors.

- Opening door Make a sound to open door.

The more advanced skill functions are:

- Mapping Use information from ”Get information” to determine and save a map of the environment. This creates the world model for PICO.

- Localisation Determine where PICO is located in the map created in ”Mapping”. This will most likely be implemented in the same function.

- Object avoidance Use current LRF data to make sure PICO does never hit a wall.

- Maze solving Use the map in combination with current and previous locations within the map to determine a route out of the maze.

- Door and exit detection Using the map to detect if possible doors have been found and if PICO has finished the maze.

The task control implements switching between the following tasks:

- Map making Done throughout the challenge to improve current map.

- Finding doors Done in first stage of the challenge when no door has been found yet

- Finding the exit Once a door has been found and opened the task is to go through the door and finish the challenge.

Initial software architecture

The initial design can be put into a diagram, which is shown in the Figure 1. Below it, the initial design behind modules that were changed later is justified. Updated versions and unchanged modules are explained in the final software sections and justification for changing the modules especially is given in the architecture section.

SLAM

The original Simultaneous Localization And Mapping (SLAM) algorithm was designed to use the raw odometry and laser data and process this into world frame robot pose and, crucially, world frame features. The world frame is defined as the current position and rotation (called a pose) of PICO with respect to the initial pose of PICO. Features are defined as re-observable landmarks, in our case corners of walls, and are saved as locations with respect to the initial pose of PICO. A possible method of SLAM is the well known Extended Kalman Filter (EKF) SLAM, but many different types of SLAM exist.

After evaluating multiple SLAM algorithms, it had been decided that the aforementioned EKF SLAM fits bests with the posed problem. The reason for this choice is that it works well in circumstances where there are clear landmarks; a maze with with many right-angled corners definitely belongs to this class. Another benefit to this method is that these landmarks could be readily used in the primitive detection. Lastly, EKF SLAM is known to be effective for problems of moderate size, the data size will not become huge and slow to process for a few hundred landmarks.

World model

In the initial design, the world model was meant to be a separate entity - a container of data structures, which main task was to ensure that all other modules could retrieve the latest data from a single place whenever it was available, making sure that no two modules operated on different data.

Driving

In the driving skill a driving strategy is to be implemented which is able to avoid obstacles while driving in a set direction, taken from the maze solver. Avoiding obstacles means that laser data is required to measure distances to objects in the world.

For the driving skill the best method was chosen to be a potential field method. Correct implementation ensures PICO will never hit a wall while driving towards a desired location. A downside of this method is that PICO might get stuck if there a wrong decision is made.

The corridor challenge

The strategy for the corridor challenge was to have basic feature detection and potential field working, as well as some form of set-point generation. Because the SLAM algorithm was not finished yet, feature detection was build as a separate module that used jumps in the laser data in order to detect exits consisting of two corners in the corridor wall. This made set-point generation relatively easy; the set-point was set just after the first detected corner on the left or right of PICO.

Using this strategy, PICO was able to complete the corridor challenge and ended up in fourth place. This could have been better, however, we were given time penalties since PICO scratched the wall twice. The reason that this happened was quickly found, and ended up being divided over the lower and upper part of the software.

PICO was able to detect the exit from far back — while it was still far from it. Unfortunately, it immediately decided to drive towards this exit. This does not mean that the set-point was placed incorrectly, as it was still placed in the middle of the corridor. But the set-point attracted PICO already quite heavily to the right. This is where the second cause comes into play, the potential-field. The potential-field was not tuned perfectly, but more importantly, the actual width of PICO was taken not into account. This meant that the repulsive forces did not tend to infinity as PICO neared the wall, but rather to a high, but apparently not high enough, number. The width of PICO was added afterwards and reduced touching the walls to nearly zero during the following tests. Another final way to prevent the rapid change of direction we experienced, came in the form of our set-point generator: we needed to smoothly switch between set-points and not just move directly towards the first opening that was observed.

Final software architecture

This section explains the difference in architecture pre- and post-corridor challenge and the reasons why these differences were made. Subsections will provide more detail on the technique and implementation of each of the modules.

After the corridor challenge, it became apparent that a re-evaluation of the software architecture was needed — it needed to be split up into more modules that focus on one thing in order to improve clarity and a few extra functionalities needed to be added for completeness. One main split is between the initialization and the main loop execution.

Main loop

The final main loop data flow scheme is depicted in Figure 2.

Firstly, the SLAM algorithm had to be changed since building our own EKF SLAM proved to be too time-consuming. A switch was made to a ROS functionality called Gmapping, which was used solely to obtain an accurate position. A new landmark detection was build in a module called feature detection, that uses a split-and-merge method to obtain wall-segments and wall-corners using the laser data.

This laser data also had to be filtered, since tests on the actual robot showed that the LRF sensor produced shadow points that interfered with the current algorithms. More information on this filter can be found in the shadow point filtering section.

Furthermore, from the evaluation of the corridor challenge it became apparent that the Driving skill had to be split into two parts aswell: one ensuring smooth setpoint generation that would increase speed and reduce potential wall-bumps and another that focused solely on the potential field functionality.

Another big change in the architecture was the split into continuous and triggered modules and the scheduler that came with it. During the development of our software it became apparent that it was benificial to have some modules that acted continuously on the last known robot status (for instance generating setpoints based upon the last decision), but also to have some modules which would only be called when they were needed (like the maze solver which needs to only make a decision when the robot is close to a decision point). More detail on the implementation of the Scheduler can be found here.

A seperate open door module was added in the architecture aswell, which was another big change. This is because it hijacked the loop execution; that is, all modules except SLAM stop their operation when the open door module is called, since it has its own actuation capability. This is admittedly bad software design, but the module was too difficult to consume into the main loop execution otherwise.

Lastly, in order to implement the as before mentioned scheduled execution, the continuously executing setpoint generator needed to have access to the maze solver's last made decision. Therefore, this was included in the World Model.

Please note that the World Model is only an architectural object; in the actual implementation of the architecture it was omitted and the needed information was simply asked only once from the relevant module and then stored as a variable in the main loop. As mentioned earlier, a world model was decided to be unnecessary and overly complicating.

Initialization

Before the main loop can be executed, the robot and all the modules on it have to be initialized. A few special actions happen during this, of which an overview is depicted below.

The position initially task is the most notable extra initialization step. The maze solver relies on the directions from the primitive detection being correct in the world frame; the provided NORTH, EAST, SOUTH and WEST with respect to the initial rotation of the robot have to actually represent these cardinal directions. This is especially important since the architecture requires that a new decision point can always be found by driving in one of the directions. This results in the requirement that the robot has to be initialized perpendicular to a wall. Since all corners are approximately right angled this results in the available directions at every decision point corresponding to the actual NORTH, EAST, SOUTH and WEST.

Solving this problem is fairly straight forward using the feature detection module. Firstly, the angle between the first two features, which are always in the same section, is calculated. Note that this angle is the angle observed from the PICO robot, not an angle in some world frame — no world frame is defined yet at this point. This angle is then used to turn PICO such that is in alignment with it. This is repeated until the angle is within a threshold of approximately 3 degrees. This process is shown as in simulation in the animation below.

After aligning with a wall the first decision point is made using the (0, 0) position and the possible actions set according to a laser measurement which measures open directions in the starting position. Note the initial decision point that is made at the end of the simulation above. Afterwards, the starting pose of the mapping is set to the aligned pose by calling SLAM initialize and lastly one execution of the last part of the main loop is done: the maze solver makes an initial decision, a set-point is generated from this decision and a velocity command is generated by the potential field.

Final software implementation

For the final software implementation, the following elements were made: an interface for debugging the code, a general toolset for supporting functionality and the implementation of all main skills.

Debugging interface

In order to efficiently get an idea of what the robot sees and what the status of its various modules is - information essential to debugging - a visualizer was made. It is based on the provided emc-viz visualizer, which uses OpenCV.

It provides visualization for the following data:

- robot dimensions

- (filtered) laser data

- generated setpoint

- potential field total force vector (blue line)

- highlighted points (useful for debugging in general, but used for the landmarks primarily)

- currently detected primitive (also called decision point) position (blue dot)

- maze solver internal decision point memory

- current direction the maze solver wants to travel towards

- the current angle of the robot and whether it is within the bounds wherein new primitives are being detected (red/green text)

- the current position of the robot in world frame

A snapshot of the visualizer showing all this data is provided below.

General toolset

A distinction is made between skills, which are divided in classes, and a toolset function, which implements supporting functionality. These functions help cope with the non-ideality of the real-world, for example, in the ideal situation the laser data from PICO is noise free and its odometry data starts at 0 every time the program is executed. Violations of such situations have to be handled, and preferably only at one point in the code; this provides clarity and, most importantly, negates the situation where two modules would operate on different sets of data, while they should not.

The most notable function in the toolset is the Shadow point filtering.

Using the Laser Range Finder in practise raises a problem when scanning an ending wall: shadow points appear. They are ghost measurements, placed at an arbitrary distance behind the wall, but always at exactly the angle to the ending, and are caused by the way the sensor works (detecting reflected laser points). An example of such shadow points is depicted in the leftmost figure below. Since they negatively effect the Feature Detection and Potential Field, they need to be filtered out.

The Shadow Point Filter uses one attribute of these points to discern and detect them: they commonly don't have many other measured points around them. It thus looks before and after the point in the laser data array for N steps, and checks whether less than M of these points are within a radius r of it. Is this the case, then the point is either removed or set to distance 100. Thus, we keep track of two different arrays, which each have their own usefulness for different applications.

The filter with parameters {N, M, r} = {10, 5, 0.1} applied on the aforementioned figure, is shown in rightmost figure above.

As can be seen, the filter does remove some good points, but since they're far away this has no influence on the performance of the system — it even makes it more robust.

Main skill set

The main skill set implements, as the name suggests, skill functions defined in the architecture.

SLAM

Because of the chosen maze-solving algorithm, a global position is required. The odometry of PICO is inaccurate and therefore useless for determining the position PICO in the long term. The laser range finder, however, is much more accurate and thus using this data simultaneously improves the accuracy of the estimated position. Because the environment is unknown, an algorithm called Simultaneous Localisation And Mapping (SLAM) is needed. There are many variations of SLAM algorithms available, however, choosing the correct algorithm, implementing it and tuning it can take a lot of effort. Therefore a ROS node called Gmapping[1][2] is used. Gmapping is an implementation of a highly efficient Rao-Blackwellized particle filter.

Gmapping is used solely to provide PICO with a more accurate global position than provided by the odometry. The created map is not used for detection of primitives, therefore the accuracy of the map is irrelevant — only the accuracy of the position is relevant. To improve the accuracy of the position, two parameters are tuned. The update interval of the mapping is shortened. This way the occupancy grid is updated at a higher frequency. The localisation update is based on rotation and translation of the robot. These update thresholds are lowered together with map update interval. To compensate for the relative bad odometry, the localisation thresholds are lowered even more. Time updates of the location are disable. This prevents jumps in the position, when PICO is not driving. Tuning is done on the straightness of the map and reducing the odometry compensation in the simulator, which should be nihil.

Gmapping cooperates with TF[3]. TF handles all the different coordinate frames and their transformations. TF also allows us to transform points in a specific frame to an other frame.

Feature detection

For determining the type and location of a primitive, features called segments and corners are required. Corner are defined as locations where two connected walls meet, while segments are the features which are not corners; they describe beginning and end points of wall segments. In Figure 8 such features are shown, corners in red and segments in blue. A small adaptation of the laser data is needed in order to make the algorithm more robust; out-of-range data is set equal to 100 indicating an open section. In these out-of-range sections no features are set.

The strategy to find segment features is based on the difference between two consecutive laser points, if the difference is greater than a threshold value (set at 0.3 meter) the end of a wall segment is detected and both points are set as segment features. For the corner features a split and merge algorithm is applied to every wall segment, defined as the set of features spanning from an even segment feature to the next segment feature, i.e. from 0 to 1 and 2 to 3).

The split and merge algorithm works in the following sequence:

- starting node = segment beginning, ending node = segment end

- dx and dy are calculated between starting and ending node

- distance between measured data and straight line is calculated as:

dist = abs( dy*x - dx*y + x(start)*y(start) - y(end)*x(start) )/ sqrt( dy^2 + dx^2 )

- check if all points are within threshold

if(maximum distance > threshold)

- ending node = index of maximum value

- index of maximum value is a corner node

- goto 2

- done

The split and merge algorithm is also shown in Figure 10, with some resulting examples in Figure 9. This split and merge algorithm has been tested in MATLAB first of which executable code is provided in File:Split-and-merge-matlab.zip. As a comparison to the MATLAB code also a snippet of the class implementing the algorithm is posted. For completeness a simple animation of the algorithm is shown in Figure 10.

Dependencies: Feature detection uses laser data as its input, therefore the quality of the output depends on the accuracy of the data. If accurate data is supplied the parameters defining the strictness of the detection (i.e. the maximum allowed distance between calculated and measured lines) can be increased. This however comes with the major drawback that shadow points result in walls being split into multiple sections, as the distance of the laser makes a jump upon encountering shadow points. To prevent this behaviour, the aforementioned shadow-point filter is used.

Another possible cause of error is how out of range data is handled, if this data is discarded there are circumstances where two sections are joint together. This happens when the range before an out of range section is equal to the range after an out of range section. A result is that two sections are joint and therefore primitive detection will give a wrong output.

Trade offs: As mentioned in the Dependencies, the shadow point filter plays a large role in the quality of the features. In this a trade-off has to be made between filtering bad data and removing relevant information. A strict shadow point filter takes out shadow points but also leads to far-away, correct laser data being removed as well (as one laser increment leads to large range difference).

Primitives

A maze can be split up into primitives; parts of the maze where the robot can make a significant change in driving direction. The four considered directions are north, east, south and west. As mentioned earlier, these directions are with respect to the global map. In addition, each direction can contain a possible door. To determine what kind of primitive a certain part of the maze is, features and sections are used, as described in the previous section. The locations of features are relative to the robot, so within the body frame. To check if the robot can drive in one of the four directions, the options of these directions are firstly determined in this body frame. These body directions are: straight, back, right and left.

Primitive detection

Detection of primitives can be done using different methods. The two methods that have been examined are, firstly, to make a correlation check between the observed laser data and the laser data corresponding to the preprogrammed possible primitives and, secondly, to use the features and sections. As noted, to make the correlation work it is important that for every possible primitive a laser pattern is defined. On the contrary, the feature and section detection had been already made and is working robustly. Because of these reasons it was chosen to explore the second option.

The local direction straight is only a driving direction if no section exists in front of the robot or if the section in front of the robot is more than 1.2 meters away. A section is in front of PICO when it has a feature in the first and second quadrant. For this section, there is also a check in place on whether the spotted sections represent a door, which is done by looking if the horizontal wall is less then 1.6 meter and if it contains two vertical walls next to it. In the figure below examples of a straight section and a straight section with a door are depicted.

Since this module can neglect the initial primitive (decision point), the local direction back is always a driving direction since the robot came from there; as mentioned before, the robot does not drive sideways or back.

The local direction right is a driving direction if enough space is in between the two sections before and after or a right section is 1.2 meters away. To determine the space in between two sections, a low and high section need to found. The terms low and high refer to the number of the section which is found by feature detection. These sections are therefore chronologically ordered in counter-clockwise fashion. The low section is the closest section to PICO, having the first feature in the fourth quadrant (see image for definition). The high section is the next section starting in either the fourth or first quadrant, which is closest to PICO. In Figure 12 an example is shown of a high and low section with enough space in between them to be considered a decision point, also a right section which is far away is shown.

The local direction left uses the same method as right, only now the features in the second and third quarter are used.

Since all directions are determined locally but the maze solver requires global directions, the local directions are converted using the current rotation of PICO obtained from the SLAM module.

Door detection right and left

Door detection of doors in front of the robot has been explained in the straight direction section, however the maze can also have doors on the left and right of a corridor. Since the profile of a door is subject to strict constraints a distance based detection is implemented. Four consecutive features are sufficient to detect these doors. Using the definitions as stated in Figure 13. The algorithm used to detect the doors is as such:

- x, y, d, n_actual, n are calculated which are lengths between nodes

- It is checked if width of door matches definition

- if ( x>0.5 && x<1.5 ) goto 3

- else goto 5

- It is checked if depth of door matches definition, within a treshold of 0.12

- if ( d>(0.3-threshold) && d<(0.3 + thresh) ) goto 4

- else goto 5

- Check if the expected distance between p1 and p2 is equal with the actual distance between p1 and p2. Again within a margin.

- if abs(n-n_actual) < 0.1*n_actual

- door is detected and a decisionpoint is placed in the middle of p4 and p1

- if abs(n-n_actual) < 0.1*n_actual

- Increment feature

Decision point