PRE2019 4 Group1: Difference between revisions


| Line 40: | Line 40: | ||

====Customers====

The stakeholders we gave the highest priority are the customers, who we predict will mostly be the elderly, our target group. It is our task to keep these customers satisfied, but there is little need to keep them informed about what is going on during production. Design choices should take the limitations and desires of the elderly into account and could use their feedback. This feedback could be gathered by conducting a user test.

===Society===

| Line 92: | Line 92: | ||

* ''Vision'': skeleton tracking and facial detection. This data is used to count jumping jacks and to look at the person CALL-E is talking to.

Using different feature sets allows our team members to (partly) work individually, speeding up the development process in the difficult time that we are in right now. The parts should be tested together, not only at the end but also during building. This can be done by scheduling meetings with the person responsible for building the physical robot. The team members can share code via a platform like GitHub, from which it can be uploaded onto the robot, allowing members to test their part.

When all parts work together, a video will be created, highlighting all of the robot's functionalities. A live presentation will also be given via YouTube, to provide an interactive demonstration.

| Line 116: | Line 116: | ||

== Milestones ==

[[File:Milestones.png| 600px |center]]

= State of the Art =

| Line 205: | Line 174: | ||

==Hardware==

===Raspberry Pi===

The Raspberry Pi was chosen for its networking and computational capabilities and will be used for the computations that CALL-E needs for environmental monitoring, image processing, and text-to-speech and speech-to-text conversions.

===Raspberry Pi Fan===

The Raspberry Pi 4 has a fairly high thermal design power (TDP), causing it to quickly overheat <ref>https://www.martinrowan.co.uk/2019/06/raspberry-pi-4-hot-new-release-too-hot-to-use-enclosed/</ref> if no action is taken. We therefore chose a very capable active cooler to make the system as stable as possible. Other components will also be cooled somewhat by the additional airflow the fans on our cooler provide. The shell of CALL-E has been designed with two vent grilles, to allow some passive airflow.

===Arduino===

The reason an Arduino was added even though we already have a Raspberry Pi is that we needed a real-time system. Servos are controlled by encoding the requested servo angle in an accurately timed logic pulse. Because the Pi runs Linux and already runs a plethora of other processes, this would not have been achievable with the Pi. The timing jitter caused by these other processes, together with the overhead of not running a real-time system, would cause jitter in the actual angle the servos rotate to. This would also be quite noisy sound-wise. The Arduino receives angle requests from the Pi over a serial connection through USB.
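To illustrate why timing matters, the sketch below shows how a servo angle maps to a pulse width, and how the Pi could frame an angle request for the Arduino. It is a minimal sketch under assumptions: the 1000 to 2000 microsecond pulse range is a common hobby-servo convention rather than a value measured on our servos, and the `servo_id:angle` message format and both function names are hypothetical, not the protocol actually used on CALL-E.

```python
def angle_to_pulse_us(angle_deg, min_us=1000, max_us=2000, max_angle=180):
    """Map a servo angle to a pulse width in microseconds.

    The default pulse range is an assumed, typical hobby-servo range.
    """
    if not 0 <= angle_deg <= max_angle:
        raise ValueError("angle out of range")
    return round(min_us + (max_us - min_us) * angle_deg / max_angle)


def encode_request(servo_id, angle_deg):
    """Frame an angle request as one ASCII line (hypothetical wire format)."""
    return f"{servo_id}:{angle_deg}\n".encode("ascii")


if __name__ == "__main__":
    # One degree of rotation corresponds to only a few microseconds of pulse
    # width, which is why scheduling jitter on the Pi shows up as servo jitter.
    print(angle_to_pulse_us(90), angle_to_pulse_us(91))
    print(encode_request(0, 90))
```

With these assumed defaults, a timing error of roughly 5.5 microseconds already shifts the servo by a full degree, which a non-real-time operating system cannot reliably avoid.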

===Servos===

Two servo motors will be used. The first rotates the Kinect about the 'pan' axis and the second moves the right arm of CALL-E. Their movement will be controlled by the Arduino, as mentioned earlier.

===Power Supply===

We are using a Meanwell 5V 10A power supply to convert power from a wall socket into a stable 5V DC on which the Pi, the Arduino and both servos will run. The servos would ideally run on a slightly higher voltage, but we have chosen not to complicate our architecture further for the sake of quicker servo movements.

===Kinect===

The Kinect was designed as a means to achieve a controller-less game console. To achieve this goal, it features an RGB camera, an IR camera, an IR dot pattern projector, a microphone array, a tilt motor and a multi-color status LED. We are happy to be able to use all of those features!

* RGB camera
** Used for facial tracking, to have CALL-E look at the user while interacting.
* IR camera and IR dot projector
** Used in tandem to obtain a depth map of the environment. This is used to track the user's pose, which in turn is used to detect physical exercise.
* Microphone array
** Used to enable speech-to-text conversion. Because it is an array of microphones rather than a single one, noise other than the user's voice can be filtered out more easily.
* Tilt motor
** Used to tilt the Kinect up and down. Together with the aforementioned pan axis servo, CALL-E can point its head directly at the user's face while interacting.
* Status LED
** Used to indicate whether CALL-E is awake, and also gratefully used while debugging facial tracking.
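Pointing the head at a detected face with the pan servo and the tilt motor can be sketched as a conversion from the face position in the RGB image to two angular offsets. This is only an illustration under assumptions: it uses a simple pinhole camera model, a 640x480 image, and field-of-view values of 57 by 43 degrees that are commonly quoted for the Kinect v1 but were not calibrated by us. The function name is hypothetical.

```python
import math


def pixel_to_angles(x, y, width=640, height=480, hfov_deg=57.0, vfov_deg=43.0):
    """Return (pan, tilt) offsets in degrees for a face centred at pixel (x, y).

    Positive pan means the face is to the right of the image centre,
    positive tilt means it is above the centre (pixel y grows downwards).
    """
    # Focal lengths in pixels, derived from the assumed fields of view.
    fx = (width / 2) / math.tan(math.radians(hfov_deg) / 2)
    fy = (height / 2) / math.tan(math.radians(vfov_deg) / 2)
    pan = math.degrees(math.atan((x - width / 2) / fx))
    tilt = math.degrees(math.atan((height / 2 - y) / fy))
    return pan, tilt


if __name__ == "__main__":
    # A face right of and slightly above the image centre.
    print(pixel_to_angles(480, 200))
```

The resulting offsets would be added to the current pan and tilt angles, so a face in the image centre requires no movement at all.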

===Tablet===

CALL-E is designed in a way that allows users to use any tablet. In the designs and the prototype, we used an iPad. The advantage of using a tablet is that it already has built-in functionality that can be used for developing the assistant. Given the time constraints of the project, deploying existing solutions seems both attractive and reasonable. With a tablet as a design component, CALL-E is equipped with a touch screen and speakers. The tablet is mainly used as an input device, and to convey information when the user prefers visual display over voice communication. Additionally, the screen is used during other activities, such as video calls, web browsing and video playback.

| Line 240: | Line 219: | ||

====Serviceability====

CALL-E will be easily serviceable, which is very convenient when working on a prototype. After removing the six screws holding on the front of CALL-E, the front can be taken off.

[[File:callEFrontRemoved.png|thumb|400|right|The front of CALL-E unscrewed.]]

The top, which was previously held in place by a 3D printed ledge interlocking the two halves of the main body, can now be lifted out to provide unrestricted access to the internal electronics. This step also opens up the rear slot through which the power cables are routed. This slot could even be used to route additional cables to the Raspberry Pi, say for attaching a monitor or other peripherals for debugging purposes.

[[File:callETopRemoved.png|thumb|400|right|Unrestricted access to electronics.]]

====3D printed bearing surfaces====

The Kinect has an internal motor which we will use to tilt the 'head' of CALL-E up and down. Since we want CALL-E to be able to really look at the user, we needed another axis of rotation: pan. We will be using one of the so-called 'horns' included with the servo, as it features the exact spline needed to properly connect to the servo. This spline has such fine features and tight tolerances that we cannot reliably 3D print it, so we prefer to attach our 3D prints to this horn rather than to the servo directly. The horn will be screwed into a 3D printed base in which the Kinect's base sits. This base will be printed with a concentric bottom layer pattern, and so will the surface this base rests on. This combination makes for two surfaces that experience very little friction when rotating, even when a significant normal force is applied. In our case, this normal force will be applied by the (cantilevered) weight of the Kinect.

| Line 248: | Line 228: | ||

[[File:Call-E assembled.jpg | 600px | thumb | center | Assembled version of Call-E]]

==Software==

===Voice control===

We will be using Mycroft <ref name = "Mycroft">https://mycroft.ai/: Mycroft: Open Source Voice Assistant, Retrieved April 30, 2020</ref> as our platform for voice control.

In order to set our own 'wakeword', and have CALL-E start listening to the user as soon as he or she calls its name, we used PocketSphinx <ref name = "PocketSphinx">https://pypi.org/project/pocketsphinx/: Retrieved April 30, 2020</ref>. Ideally, we would train the integrated wakeword detection neural network to recognise the wakeword and ignore everything else, but after some research online we realised this would require far more data than we could reasonably collect. We therefore went with an inferior, but certainly quicker strategy, namely phoneme characterisation using PocketSphinx. We translated the phrase "Hey CALL-E" into phonemes using the phoneme set used in the CMU Pronouncing Dictionary <ref name ="CMU Pronouncing Dictionary">http://www.speech.cs.cmu.edu/cgi-bin/cmudict: Retrieved April 30, 2020</ref>. "Hey CALL-E" becomes: 'HH EY . K AH L . IY .' The periods indicate pauses in speech, or generally whitespace.
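The phoneme translation described above can be sketched as a small lookup. The three dictionary entries below are copied from the phoneme string in this section rather than looked up in the full CMU dictionary, and the configuration layout is only modelled on the general shape of a Mycroft hotword entry; the threshold value and the helper name are assumptions for illustration, not our exact settings.

```python
import json

# Phonemes per word, taken from the phrase given in the text above.
PHONEMES = {
    "hey": ["HH", "EY"],
    "call": ["K", "AH", "L"],
    "e": ["IY"],
}


def phrase_to_phonemes(phrase):
    """Translate a phrase into a phoneme string, ending each word with ' .'."""
    words = phrase.lower().replace("-", " ").split()
    return " ".join(" ".join(PHONEMES[word]) + " ." for word in words)


# Assumed configuration shape; the threshold is a placeholder value.
wake_word = "hey call-e"
config = {
    "listener": {"wake_word": wake_word},
    "hotwords": {
        wake_word: {
            "module": "pocketsphinx",
            "phonemes": phrase_to_phonemes("Hey CALL-E"),
            "threshold": 1e-90,
        }
    },
}

if __name__ == "__main__":
    print(json.dumps(config, indent=2))
```

A lower threshold makes the detector more permissive, so this value would need tuning against false activations in a real living room.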

====Decisions====

| Line 274: | Line 253: | ||

The robot will use a Microsoft Kinect (v1) for vision-based input. It will provide a way for the robot to, for example, look at the interacting user, to make it feel like the robot is actually listening, and also to help the user do exercises.

As the core of our software, we will use the open-source Robot Operating System (ROS). ROS provides a very advanced, reliable foundation for robotic applications. It will work in conjunction with OpenNI to provide our system with the skeleton tracking data needed for helping the user do exercises, like jumping jacks. Our initial plan was to run all of this on the Raspberry Pi 4B, using Ubuntu MATE 18.04. However, we ran into some issues getting the robotics software running on Ubuntu 18.04. The software needed to interact with the Kinect v1 was built for older versions of Ubuntu and ROS, and the ARM architecture of the Raspberry Pi was an additional issue for this software. After some thorough attempts at getting the software running, we decided to take the vision part off the Raspberry Pi and run it on an external computer. After deciding on this, we were able to quickly implement and run the computer vision software. It is unfortunate that we cannot run this on the Raspberry Pi at this time, but it means that we can move the project forward. Moreover, looking at the software running on our external computer, we found that the computer vision software was more resource-intensive (on both CPU and GPU) than we anticipated, which also increased our doubts that the Raspberry Pi would be able to run it at all.

Nevertheless, we would like to take another look at getting the software to run on the Pi, as then no external computer would be necessary and everything would be contained in the robot casing. In the ideal situation, as we expand the features the vision is used for, we might opt to use a different single-board computer, like the NVIDIA Jetson. These boards are much more powerful when it comes to interpreting camera data and running artificial intelligence in real time.

====Physical Exercises==== | ====Physical Exercises==== | ||

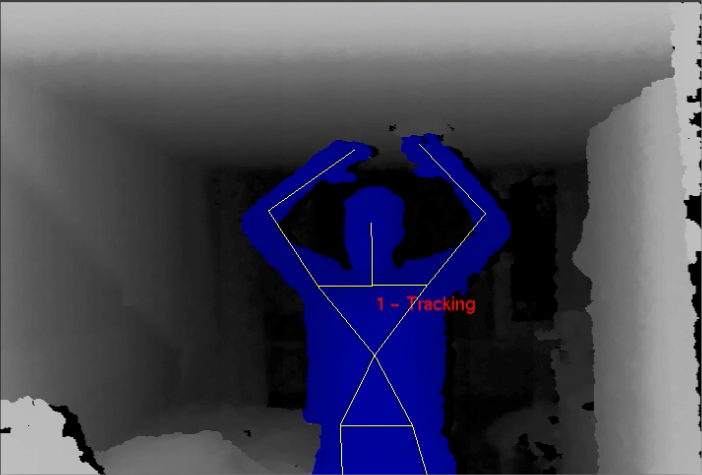

We use skeleton tracking to help the user do their exercises and stay active and healthy. We do this by utilizing the depth information provided by the Kinect 360, in combination with OpenNI and NiTE. The NiTE software is a | We use skeleton tracking to help the user do their exercises and stay active and healthy. We do this by utilizing the depth information provided by the Kinect 360, in combination with OpenNI and NiTE. The NiTE software is a proprietary piece of software that provides software to access skeleton tracking data from the sensor. This skeleton tracking data is then published to ROS and from this central system inserted into a neural network using PyBrain. | ||

[[File:SkeletonTracking.png|300|thumb|right|Skeleton Tracking demo]] | [[File:SkeletonTracking.png|300|thumb|right|Skeleton Tracking demo]] | ||
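To give an idea of how skeleton data can be turned into a repetition count, the sketch below counts jumping jacks with a simple threshold rule. Note that this is deliberately not the PyBrain neural network mentioned above: it is a simpler stand-in technique, and the frame fields (`left_hand_y`, `head_y`, `feet_distance`, `shoulder_width`) and the 1.5 factor are hypothetical names and values chosen for illustration.

```python
def is_open_pose(frame):
    """True when both hands are above the head and the feet are spread apart."""
    hands_up = (frame["left_hand_y"] > frame["head_y"]
                and frame["right_hand_y"] > frame["head_y"])
    feet_apart = frame["feet_distance"] > 1.5 * frame["shoulder_width"]
    return hands_up and feet_apart


def count_jumping_jacks(frames):
    """Count one repetition each time the pose goes from open back to closed."""
    count = 0
    was_open = False
    for frame in frames:
        open_now = is_open_pose(frame)
        if was_open and not open_now:
            count += 1
        was_open = open_now
    return count


def make_frame(is_open):
    """Build a synthetic skeleton frame (heights and distances in metres)."""
    hand_y = 1.9 if is_open else 0.9
    return {
        "left_hand_y": hand_y,
        "right_hand_y": hand_y,
        "head_y": 1.7,
        "feet_distance": 0.8 if is_open else 0.2,
        "shoulder_width": 0.4,
    }


if __name__ == "__main__":
    sequence = [False, True, False, True, True, False]
    print(count_jumping_jacks([make_frame(s) for s in sequence]))
```

A learned classifier such as the neural network approach can be more tolerant of noisy joints and partial movements than fixed thresholds, which is one reason to prefer it in practice.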

| Line 421: | Line 400: | ||

=Presentation=

To finish this project, we had to prepare a presentation. We did not do this the conventional way, by creating a video. Instead, we opted to do a livestream via YouTube Live. This way, we were able to give a live demo of the device, which we felt did our work more justice. We used some Apple devices as cameras, with Open Broadcaster Software to livestream our presentation to YouTube Live. We chose YouTube as the platform simply because it would preserve the quality better than Microsoft Teams (via either webcam input or screen sharing). However, YouTube Live introduced a small delay of 5 seconds, so we held occasional question rounds after presenting a part.

Due to COVID-19, we all worked separately on parts of the project. Therefore, getting it all together was quite a challenge. We decided that Matthijs would go to Sietze, and that they would combine all pieces into the final product for the demo. We spent about one and a half days putting together all the separate modules (the iPad interface, the physical device and the vision part) and setting up all of the cameras, to offer different perspectives to the viewers. In the remaining half day we gave the actual presentation. We recorded the whole livestream, with the exception of the questions, so that we could create a slightly shorter video of the presentation for the teachers to review later.

[[Image:CameraWithCalle.jpg|thumb|450px|center|Our main camera with Call-E in the background]]

[[Image:Studio.jpg|thumb|450px|center|Overview of our livestream studio]]

=Conclusions=

| Line 429: | Line 417: | ||

= Workload =

As a group, we did not log the number of hours that we spent on the project. During this project, we felt that everybody put about the same amount of effort into making the project a success.

== Peer review ==

| Line 440: | Line 428: | ||

|-
| Bart
| +0.1
|-
| Bryan
| -0.6
|-
| Edyta
| -0.1
|-
| Matthijs
| +0.3
|-
| Sietze
| +0.3
|}

| Line 464: | Line 452: | ||

Image microphone: https://d2dfnis7z3ac76.cloudfront.net/shure_product_db/product_images/files/c4f/0d9/07-/thumb_transparent/c165d06247b520e9c87156f8322804a2.png

Image iPad: https://www.powerchip.nl/6403-thickbox_default/ipad-wi-fi-128gb-2019-zilver.jpg

Latest revision as of 19:31, 25 June 2020

CALL-E

We present an in-house assistant to help those in self-isolation through tough times. The assistant is called CALL-E (Crisis Assistant Leveraging Learning to Exercise).

A recap of our (live-streamed) presentation can be viewed here: https://youtu.be/MYfvuc0-ZtU

Group members

| Name | Student ID | Department |
|---|---|---|
| Bryan Buster | 1261606 | Psychology and Technology |
| Edyta W. Koper | 1281917 | Psychology and Technology |
| Sietze Gelderloos | 1242663 | Computer Science and Engineering |
| Matthijs Logemann | 1247832 | Computer Science and Engineering |
| Bart Wesselink | 1251554 | Computer Science and Engineering |

Problem statement and objectives

With about one-third of the world’s population living under some form of quarantine due to the COVID-19 outbreak (as of April 3, 2020) [1], scientists sound the alarm on the negative psychological effects of the current situation [2]. Studies on the impact of massive self-isolation in the past, such as in Canada and China in 2003 during the SARS outbreak or in west African countries caused by Ebola in 2014, have shown that the psychological side effects of quarantine can be observed several months or even years after an epidemic is contained [3]. Among others, prolonged self-isolation may lead to a higher risk of depression, anxiety, poor concentration and lowered motivation [2]. The negative effects on well-being can be mitigated by introducing measures that help people adapt to the new situation during the quarantine. Such measures should aim at reducing boredom (1), improving communication within a social network (2) and keeping people informed (3) [2]. With this project, we propose an in-house assistant that addresses these three objectives. Our target group is the elderly who need to stay home in order to practice social distancing.

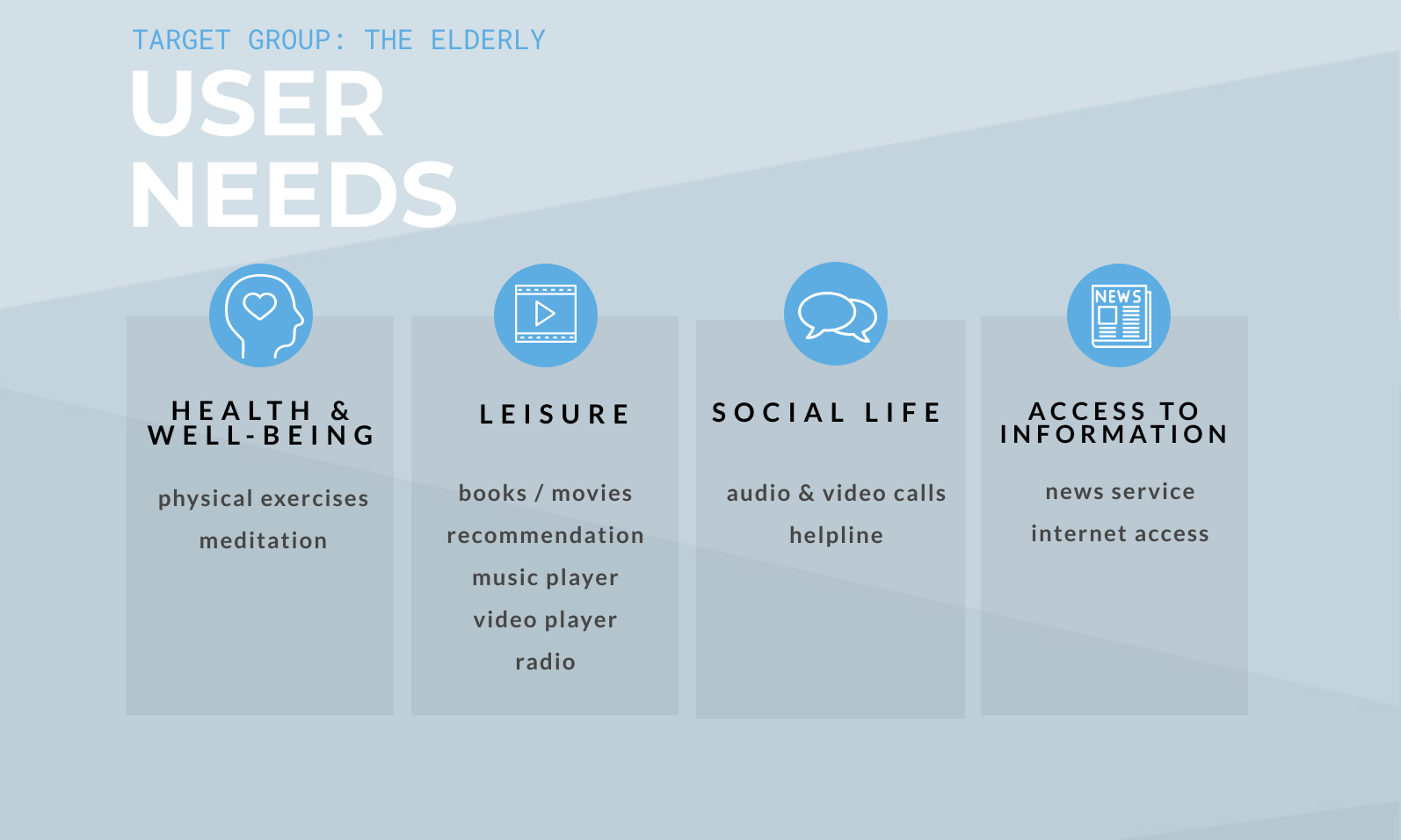

User needs

Having defined our main objectives, we can further specify the assistant's functionalities, which are tailored to our target group (the elderly). In order to facilitate the accommodation of the assistant, we have focused on users' daily life activities. Research has shown that the elderly most often engage in activities such as reading books, watching TV/DVDs/videos, socialising and physical exercises[4]. Moreover, as we do not want to expose our prospective users to unnecessary stress attributed to the introduction of new technology, we have examined the use of computers and the Internet. It has been found that the most common computer uses among the elderly are: (1) Communication and social support (email, instant messaging, online forums); (2) Leisure and entertainment (activities related to offline hobbies); (3) Information seeking (health and education); (4) Productivity (mental stimulation: games, quizzes)[5]. Additionally, we have added two extra activities: meditation and listening to music, as they have been shown to bring significant social and emotional benefits for the elderly in social isolation[6][7].

Considering our objectives and findings from the literature, we have identified four users' needs and proposed features for each category. The overview is presented below.

User-centered design

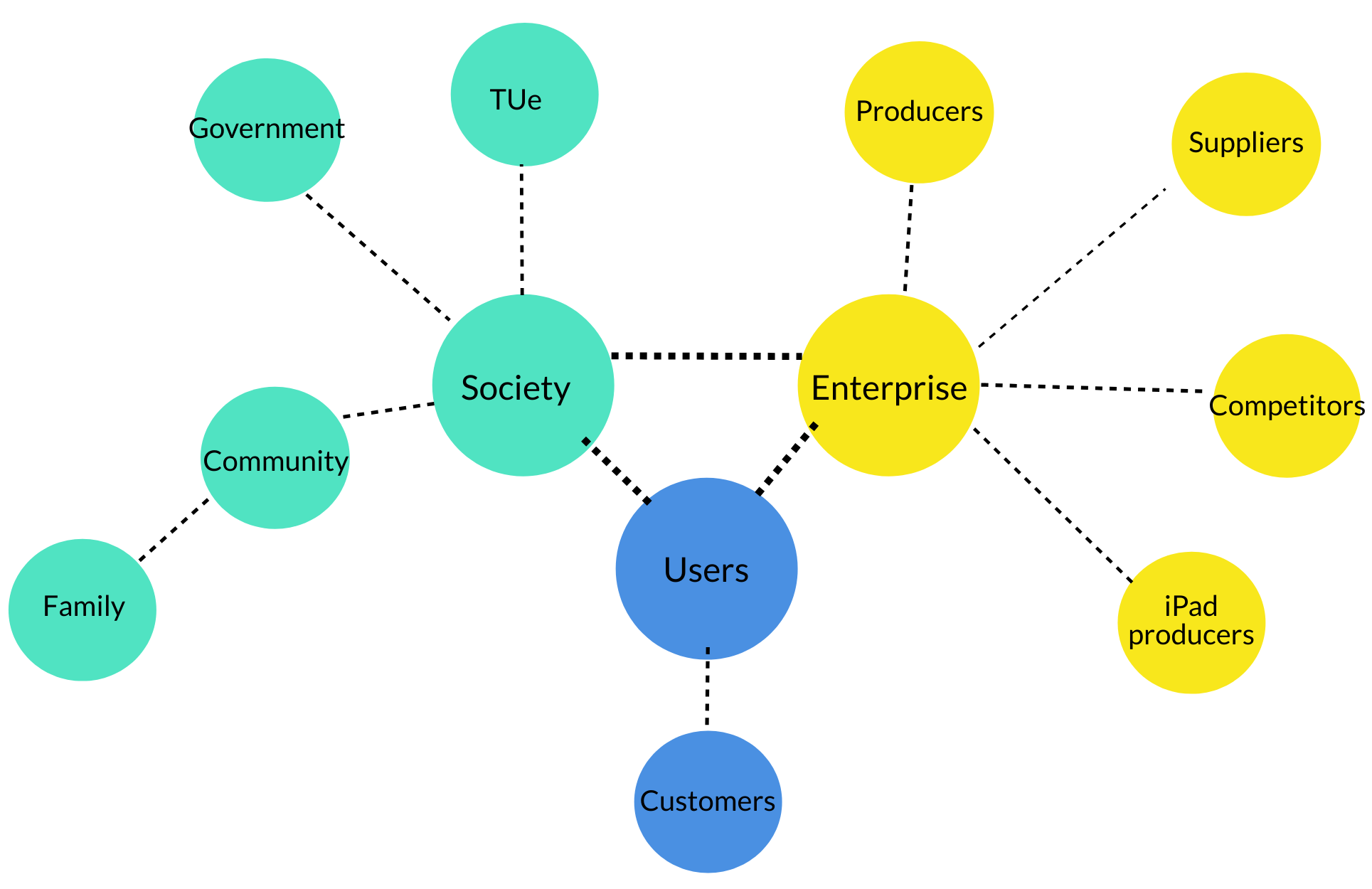

Stakeholder analysis

User

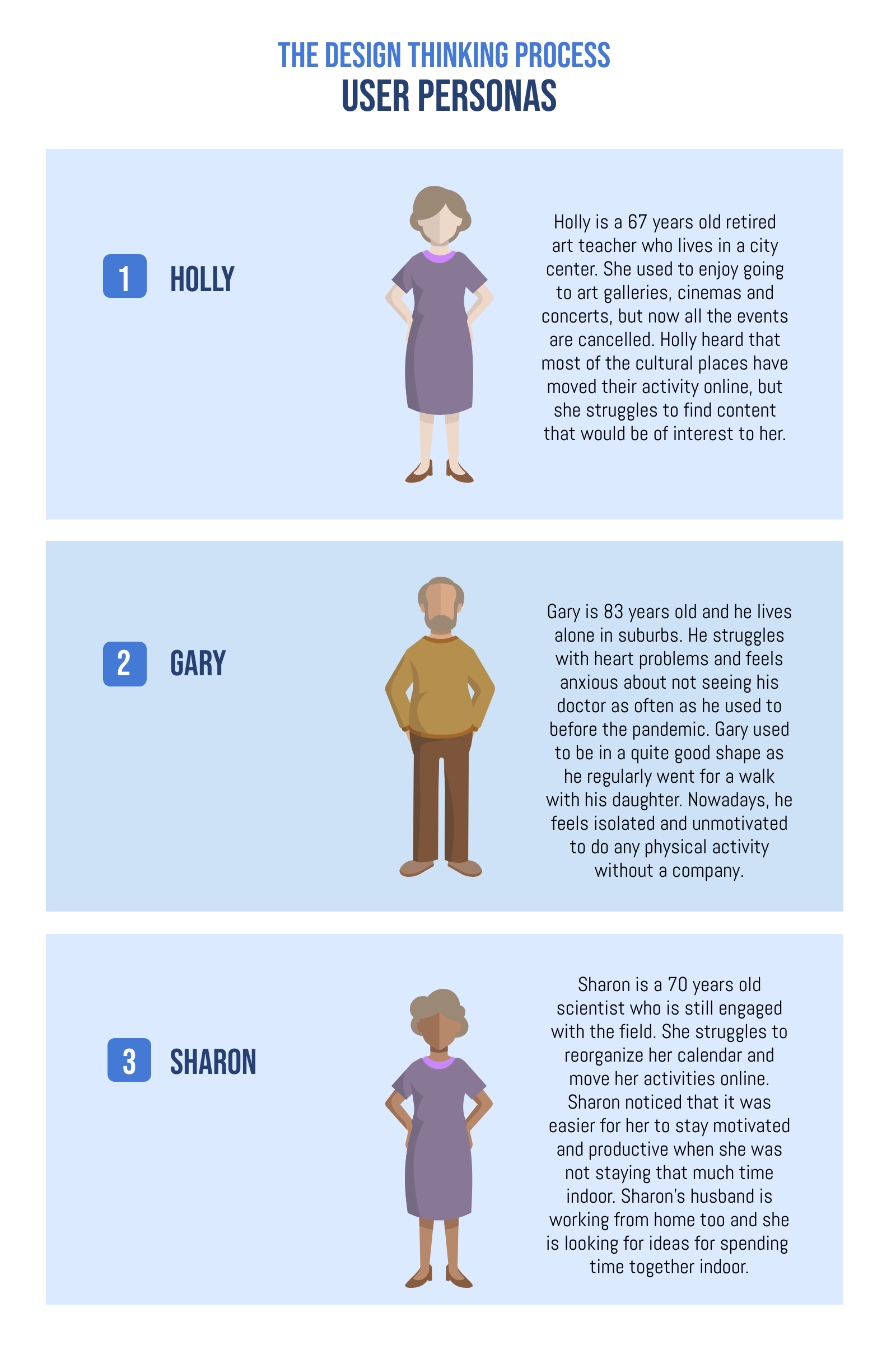

Customers

The stakeholders we gave the highest priority are the customers, who we predict will mostly be the elderly, our target group. It is our task to keep these customers satisfied, but there is little need to keep them informed about what is going on during production. Design choices should take the limitations and desires of the elderly into account and could use their feedback. This feedback could be gathered by conducting a user test.

Society

Community

One of the goals of CALL-E is to increase the social activeness of the customer. The community around the user is most likely to be affected by this and should thus at the very least be satisfied with how CALL-E affects them and the customer. They can be kept informed if they desire such. The community can consist of fellow elderly, but also caretakers and family.

Family

Besides customers, their family probably has the most interest in how CALL-E influences the user and should thus be kept satisfied. Informing them about the design process is unneeded.

Government

A stakeholder that can have a big influence on CALL-E is the government. It is in the government’s interest to make sure CALL-E is a safe product, that privacy remains unviolated and that CALL-E does as advertised to the customer.

TU/e

If the product were to be produced, the name of TU/e would be connected to it, and the university would thus not appreciate big errors being made. TU/e has to be informed and included in the design process.

Enterprise

Producers

The following stakeholders, the producers, have the responsibility of producing the CALL-E. It is important to keep these stakeholders informed and included in the design process. The design of CALL-E should take into consideration possible limitations that producers have to deal with (e.g. cost of production tools) and try to optimise the design for optimal production.

Suppliers

The next stakeholders are the suppliers of CALL-E components. They do not need to be informed about the design process (apart from whether the part they supply is in the final design), nor do they need to be included in it, but they do benefit from (mass) production of CALL-E. If contracts were made, suppliers could be included in the design process; for example, if their products lack a desired functionality, the suppliers could add it to keep the contract.

Tablet producers

The producer of tablets is another stakeholder. CALL-E is currently designed for usage with a tablet, and thus encourages customers to have a tablet. This effect is probably going to be small, but it is there. Future designs could make it such that multiple types of tablets will be compatible. There is no need to keep them informed, nor included.

Competitors

The last of the enterprise stakeholders are the competitors. It is not recommended to keep them informed or included. They are mentioned, however, because they have an interest in (the failure of) CALL-E.

User personas

Ethical considerations

By its nature, CALL-E may pose some ethical challenges that should be taken into account from the beginning of its design process. We need to consider both the intended and unintended effects of the assistant in order to be able to deliver a high-quality service. The possible ethical issues are considered in the light of the following core principles: non-maleficence, beneficence, and autonomy [8].

The non-maleficence principle states that an assistant should not harm a user. Assistive technology is very often burdened with a risk of physical harm. Fortunately, as CALL-E provides assistance through social interaction, the risk of potential physical harm is close to zero. The size and weight of the assistant allow for safe handling and stable placement. The non-maleficence principle is closely related to the beneficence principle (an assistant should act in the best interest of the user). When it comes to non-physical harm, it has been noted that the role of assistive technology should be properly described to a user in the early stages of deployment in order to minimize unintended deception. During the introduction of CALL-E, we have to make sure that users are aware of the assistant’s possibilities and limitations. Moreover, research shows that users may quickly become attached to an assistant and feel distressed when such an assistant is taken away [9]. This is not a major concern in our case, as CALL-E is intended for private users who gain full ownership when buying the assistant.

The third principle, autonomy, holds that a user has a right to make an informed, uncoerced decision. The introduction of an assistant can both positively and negatively influence the user’s autonomy. On the one hand, an assistant may facilitate independent activities that normally have to be performed with external help. However, research shows that users may feel drawn to obey an assistant and assume its predominance, which in turn results in restricted user’s autonomy [8]. In our case, CALL-E’s capabilities and limitations are clearly communicated in order to establish the role of the assistant. Moreover, communication with the user is based on suggestions and advice. The user is able to disable some functions or to shut off the entire system.

Approach

In order for us to tackle the problem as described in the problem statement, we will start with careful research on topics that require our attention, like how and where we can support the mental and physical health of people. From this research, we can create a solution consisting of different disciplines and techniques.

From there, we will start building an assistant that has the following features:

- Speech:

  - Speech synthesis: being able to interact with an assistant via speech is a key part of the assistant, to tackle the loneliness problem. Using hardware microphones and pre-existing software, we can create a ‘living’ assistant.

  - Speech recognition: being able to talk back to the assistant sparks up the conversation, and makes the assistant more human-like.

- Tracking: the robot has functionalities that enable it to look towards a human person when it is being activated.

- Exercising: to counter the lack of physical activities, the robot has functionalities that can prompt or motivate the user to be physically active, and to track the user’s activity.

- General information: the robot prompts the user to hear the latest news every hour between 8 am and 8 pm. Users can always request a new update through a voice command.

- Graphical User Interface: when the user is unable to talk to the assistant, there will be an option to interact with a graphical user interface, displayed on a touch screen.

- Vision: skeleton tracking and facial detection. This data is used to count jumping jacks and to look at the person the robot is talking to.
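The hourly news prompt from the feature list above can be sketched as a function that computes the next prompt time. This is a minimal sketch under assumptions: prompts fall exactly on the hour, both 8 am and 8 pm are treated as inclusive, and the function name is hypothetical rather than taken from our code.

```python
from datetime import datetime, timedelta


def next_news_prompt(now, first_hour=8, last_hour=20):
    """Return the next whole hour within [first_hour, last_hour] at or after now."""
    candidate = now.replace(minute=0, second=0, microsecond=0)
    if candidate < now:
        # We are past the start of this hour, so move to the next one.
        candidate += timedelta(hours=1)
    if candidate.hour < first_hour:
        # Too early in the day: wait until the first prompt of today.
        candidate = candidate.replace(hour=first_hour)
    elif candidate.hour > last_hour:
        # Too late in the day: wait until the first prompt of tomorrow.
        candidate = (candidate + timedelta(days=1)).replace(hour=first_hour)
    return candidate


if __name__ == "__main__":
    print(next_news_prompt(datetime(2020, 4, 30, 10, 15)))
    print(next_news_prompt(datetime(2020, 4, 30, 20, 30)))
```

Updates that the user requests by voice command are independent of this schedule and can be served immediately.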

Using different feature sets allows our team members to (partly) work individually, speeding up the development process in the difficult time that we are in right now. The parts should be tested together. Not only in the end but also when building. This can be done by scheduling meetings with the person responsible for building the physical robot. The team members can share code via a source like GitHub, which then can be uploaded onto the robot, allowing members to test their part.

When all parts work together, a video will be created, highlighting all bot functionalities. A live presentation will also be given via YouTube, to provide an interactive demonstration.

The features

Physical exercise: People move less if they stay at home for a while. Therefore, the robot offers a way to stay fit: the user performs jumping jacks, which the robot counts.

Audio & video calls: The robot can suggest the user call a person in case the user has been in a silent environment for a while and has not spoken to anyone for a while.

Helpline: To prevent users from getting lonely, they have the ability to open the helpline and chat or call with employees from the helpline.

News service: In times like these, it is important to stay informed. The robot has an option to play the latest NPR news via Mycroft.

Internet access: To make sure that the user has the time to relax, Call-E has a browser that can be used to access the internet.

Deliverables

By the end of the project, we will deliver the following items:

- Project Description (report via wiki)

- Project Video demonstrating functionalities

- Physical assistant

Milestones

State of the Art

Indoor localisation has become a highly researched topic over the past years. A robust and compute-efficient solution is important for several fields of robotics. Whether the application is an automated guided vehicle (AGV) in a distribution warehouse or a vacuum robot, the problem is essentially the same. Let us take these two examples and find out how state-of-the-art examples solve this problem. The option to make Call-E move around was first explored, therefore research on this topic was performed.

Logistics AGVs

AGVs for use in warehouses are typically designed solely for use inside a warehouse. Time and money can be invested to provide the AGV with an accurate map of its surroundings before deployment. The warehouse is also custom-built, so fiducial markers [10] are less intrusive than they would be when placed in a home environment. These fiducial markers allow Amazon’s warehouse AGVs to locate themselves in space very accurately. Knowing their location, Amazon’s AGVs find their way around warehouses as follows. They send a route request to a centralised system, which takes into account other AGVs’ paths in order to generate an efficient path, and commands the AGV exactly which path to take. The AGV then traverses the grid marked out with floor-mounted fiducial markers. [11] The AGVs don’t blindly follow this path, however, as they keep on the lookout for any unexpected objects on their path. The system described in Amazon’s relevant patents[10][11][12][13] also includes elegant solutions to detect and resolve possible collisions, and even the notion of dedicated larger cells in the ground grid, used by AGVs to be able to make a turn at elevated speeds.

Robotic vacuum cleaners

A closer-to-home example can be found in iRobot’s implementation of VSLAM (Visual Simultaneous Localisation And Mapping) [14] in their robotic vacuum cleaners. SLAM is a family of algorithms intended for vehicles, robots and (3D) scanning equipment to both localise themselves in space and to augment the existing map of their surroundings with new sensor-derived information. The patent [14] involving an implementation of VSLAM by iRobot describes a SLAM variant which uses two cameras to collect information about its surroundings. It uses this information to build an accurate map of its environment, in order to cleverly plan a path that efficiently cleans all floor surfaces it can reach. The patent also includes mechanisms to detect smudges or scratches on the lenses of the cameras and notify the owner of this fact. Other solutions for gathering environmental information for robotic vacuum cleaners use a planar LiDAR sensor to gather information about the boundaries of the floor surface. [15]

SLAM algorithms

This is the point in research about SLAM that had us rethink our priorities. When reading daunting papers about approaches to solving SLAM [16], we were both intrigued and overwhelmed at the same time. While it seems a very interesting field to step into, it promised to consume too large of a portion of our resources during this quartile for us to justify an attempt to do so. Furthermore, we discussed that having our assistant be mobile at all does not directly serve a user need. Thus we decided to leave this out of the scope of the project.

Human - robot interaction

Socially assistive robotics

Socially assistive robots (SARs) are defined as an intersection of assistive robots and socially interactive robots [17]. The main goal of SARs is to provide assistance through social interaction with a user. The human-robot interaction is not created for the sake of interaction (as is the case for socially interactive robots), but rather to effectively engage users in all sorts of activities (e.g. exercising, planning, studying). External encouragement, which can be provided by SARs, has been shown to boost performance and help to form behavioural patterns [17]. Moreover, SARs have been found to positively influence users’ experience and motivation [18]. Additionally, due to limited human-robot physical contact, SARs have lower safety risks and can be tested extensively. Ideally, SARs should not require additional training and should be flexible when it comes to the user’s changing routines and demands. SARs do not necessarily have to be embodied; however, embodiment may help in creating social interaction [17] and increases motivation [18].

Social behaviour of artificial agents

Robots that are intended to interact with people have to be able to observe and interpret what a person is doing and then behave accordingly. Humans convey a lot of information through nonverbal behavior (e.g. facial expression or gaze patterns), which in many cases is difficult to encode by artificial agents. This fact can be compensated by relying on other cues such as gestures or position and orientation of interaction [19]. Moreover, human interaction seems to have a given structure that can be used by an agent to break down human behavior and organize its own actions.

Voice recognition

Robots that interact with humans need some form of communication. For the robot we are designing, one of the means of communication will be audio. State-of-the-art voice recognition systems use neural networks [20] together with natural language processing to process the input and to learn the voice of the user over time. An example of such a system is the Microsoft 2017 conversational speech recognition system [21]. Such systems are currently limited to understanding literal sentences, so their use in our robot will be limited to recognizing voice commands.

Speech Synthesis

To allow the robot to respond to the user, a text-to-speech system will be used. Examples of such systems based on deep neural networks include Tacotron [22], Tacotron 2 [23] and Deep Voice 3 [24]. The current state of the art makes use of end-to-end variants, in which these systems are trained on huge data sets of (English) voices to create a human-like voice. Such a system can then generate speech that is not limited to a previously fixed set of words. These systems are not all equally robust, and thus newer systems, such as FastSpeech [25], focus on making the already high-performing approach more robust.

Attracting attention of users

Attracting the attention of the user is a prerequisite for an interaction. There are multiple ways to grab this attention, for example touching, moving around, making eye contact, flashing lights and speaking. Research shows that when the user is watching TV, speaking and waving are the best options to attract attention while making sure that people consider the robot grabbing attention to be a positive experience [26]. Trying to establish eye contact did not have positive results in this experiment; eye contact should therefore not be used to acquire attention, but rather to maintain it.

Ethical Responsibility

The robot that we are designing will be interacting with people, specifically the elderly in this case. This raises the question of which tasks we can let a robot perform without causing the user to lose human contact. Several studies show how to establish an ethical framework for this [27] [28]. This research states that it is important to keep the attitude of the user, the elderly, in mind when determining such a framework.

Next to that, research has also been performed on what to expect in a relationship with a robot, which is what the elderly will engage in[29][30]. If we want to make the robot persuade the human into physical activities, it is important that we look at how this can be persuasive [31]. Attracting the user's attention with an arm should be done in such a way that it does not harm the user's autonomy too much.

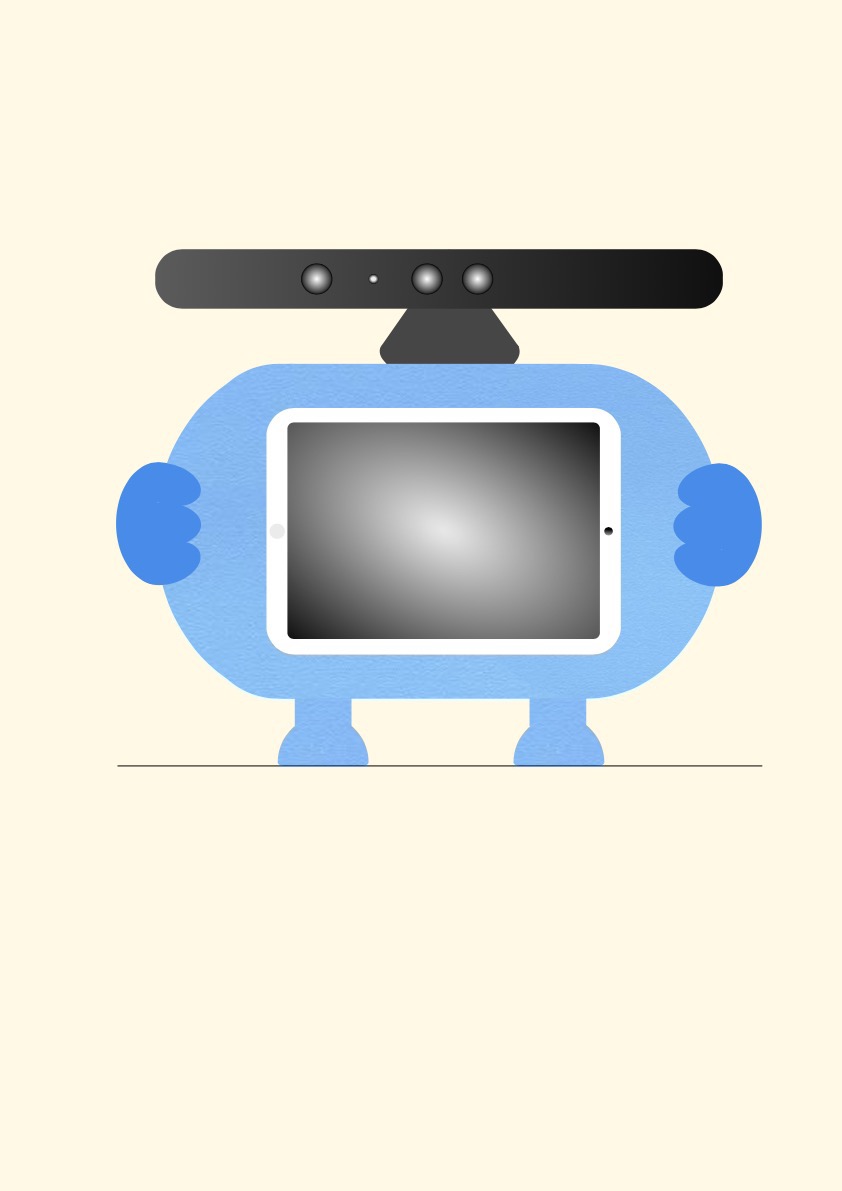

The design

Physical appearance and motivation

The main building component of CALL-E is a torso with a tablet and a Kinect placed on top. The legs and hands are added to increase the friendliness of the assistant. The only moving parts are the hand and the Kinect, which in the given configuration serves as a head with eyes, and can both pan (using a servo) and tilt (using the Kinect's internal tilt motor). Having only these two components movable aims at maintaining a balance between the complexity of the design and its physical attractiveness. We decided that making other parts able to move would add too much complexity to the mechanical design, and we figured that any more movement is unnecessary. The main body of CALL-E is 3D printed and the whole assistant is relatively portable (weight: 1.7 kg, size: 40 cm x 30 cm x 30 cm), which makes it easy and comfortable to use in many places in a house. The assistant combines functionalities that are normally offered by separate devices. It allows the elderly to enjoy the range of activities without the burden of learning to navigate through several systems. On top of that, CALL-E is designed in the vein of socially assistive robotics, which is hoped to improve users’ experience and motivation. We believe that all these details make CALL-E a valuable addition to the daily life of the elderly.

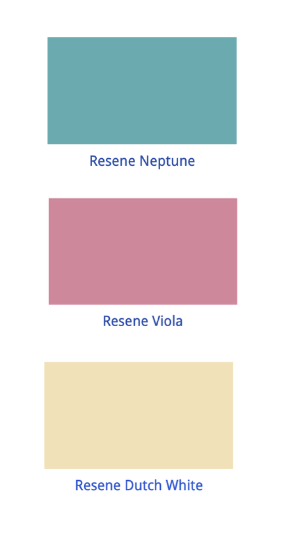

Interface

The interface is designed to facilitate user experience and accessibility. To better address user needs, the design process was based on research and already existing solutions. The minimalistic design aims at preventing cognitive overload in older adults [32]. The dominant colors are soft pastels. Research has shown that the elderly find some combinations of colors more pleasant than others. In particular, soft pinky-beiges contrasted with soft blue/greens were indicated as soothing and peaceful [33]. To avoid unnecessary distraction, the screen background has a single color, and the icons have simple, unambiguous shapes. The font across the tabs is consistent, with increased spacing between letters [34]. The design includes tips for functionality, such as “Swipe up to begin”. The user can adjust text and button size (not implemented in the prototype, but incorporated in the designs) [35].

Hardware

Raspberry Pi

The Raspberry Pi was chosen for its networking and computational capabilities and will be used for the computations that CALL-E will need for environmental monitoring, image processing, and text-to-speech and speech-to-text conversions.

Raspberry Pi Fan

The Raspberry Pi 4 has a fairly high thermal design power (TDP), causing it to quickly overheat [36] if no action is taken. We, therefore, chose a very capable active cooler to make the system as stable as possible. Other components will also be cooled somewhat by the additional airflow the fans on our cooler provide. The shell of CALL-E has been designed with two vent grilles in mind, to cause some passive airflow.

Arduino

The reason an Arduino was added, even though we already have a Raspberry Pi, is that we needed a real-time system. Servos are controlled by encoding the requested servo angle in an accurately timed logic pulse. Because the Pi runs Linux and a plethora of other processes, this would not have been achievable with the Pi: the timing jitter caused by these other processes and the overhead of not running a real-time system would translate into jitter in the actual angle the servos rotate to. This would also be quite noisy sound-wise. The Arduino receives angle requests from the Pi over a serial connection through USB.
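To illustrate the two steps described above, the following minimal sketch maps a requested angle to a pulse width and formats an angle request as one line of text for the serial link. The 1000 to 2000 µs range over 0 to 180 degrees is a common hobby-servo convention and only an assumption here; the function names, the servo identifiers and the `P:90` message format are hypothetical, since the actual protocol between the Pi and the Arduino is not described in this report.

```python
def angle_to_pulse_us(angle_deg, min_us=1000, max_us=2000, max_angle=180):
    """Linearly map a servo angle to a pulse width in microseconds.

    The 1000-2000 us range is an assumed, typical hobby-servo range.
    """
    if not 0 <= angle_deg <= max_angle:
        raise ValueError("angle out of range")
    return min_us + (max_us - min_us) * angle_deg / max_angle


def encode_angle_request(servo, angle_deg):
    """Format a hypothetical one-line angle request for the serial link."""
    if servo not in ("P", "A"):  # hypothetical ids: P = pan servo, A = arm servo
        raise ValueError("unknown servo")
    return "{}:{}\n".format(servo, int(round(angle_deg))).encode("ascii")
```

On the Pi side, the bytes returned by `encode_angle_request` would be written to the serial port; generating the accurately timed pulse itself stays on the Arduino.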

Servos

Two servo motors will be used. The first is used to rotate the Kinect in the 'pan' axis and the second is used to move the right arm of CALL-E. Their movement will be controlled by the Arduino, as mentioned earlier.

Power Supply

We are using a Meanwell 5V 10A power supply to convert power from a wall socket into a stable 5V DC on which the Pi, the Arduino and both servos will run. The servos are ideally run on a slightly higher voltage, but we have chosen not to complicate our architecture more for the sake of quicker servo movements.

Kinect

The Kinect was designed as a means to achieve a controller-less game console. To achieve this goal, it features an RGB camera, an IR camera, an IR dot pattern projector, a microphone array, a tilt motor and a multi-color status LED. We are happy to be able to use all of those features!

- RGB camera

- Used for facial tracking, to have CALL-E look at the user while interacting.

- IR camera and IR dot projector

- Used in tandem to obtain a depth map of the environment. This is used to track the user's pose, which in turn is used to detect physical exercise.

- Microphone array

- Used to enable speech-to-text conversion. Because it is an array of microphones rather than a single one, noise other than the user's voice can be filtered out more easily.

- Tilt motor

- Used to tilt the Kinect up and down. Together with the aforementioned pan axis servo, CALL-E can point its head directly at the user's face while interacting.

- Status LED

- Used to indicate whether CALL-E is awake, and also gratefully used while debugging facial tracking.

Tablet

Call-E is designed in a way that allows users to use any tablet. In the designs and the prototype, we used an iPad. The advantage of using a tablet is that there are already some built-in functionalities that can be used for developing the assistant. Given the time constraints of the project, deployment of the already existing solutions seems both attractive and reasonable. With a tablet as a design component, CALL-E is equipped with a touch screen and speakers. A tablet is mainly used as an input device, and to convey information when the user prefers visual display over voice communication. Additionally, the screen is used during other activities, such as video calls, web browsing and video playback.

Mechanical considerations

Though not user-centered, the physical construction of CALL-E needed to be ergonomic for whoever might assemble it, even though we are simply making a prototype.

Designing for 3D printing

The parts we are not buying will need to be 3D printed. While 3D printing might be considered magic by machinists, it certainly has its limits. It still brings along a plethora of new requirements on the actual shape of our parts. Firstly, we need to take into account the build volume of the printer. Since CALL-E is taller, wider and deeper than the printer we are using can produce, we need to figure out some clever split. This split also needs to take into account tolerances of parts fitting together, and further print-ability. I will not go into details on reasons why I arrived at the actual split displayed here, but it is what I have ended up at. Every printed part has some preferred orientation to print it in, with what I believe is the best possible combination of bed adhesion and least negative draft.

CALL-E was built by a single group member in order to be able to keep our distance. A video presentation detailing this process and some more highlights can be viewed here[2].

Mechanical highlights

Serviceability

CALL-E will be easily serviceable, which is very convenient when working on a prototype. After removing the six screws holding on the front of CALL-E, this front can be taken out.

The top, which was previously contained by a 3D printed ledge interlocking the two halves of the main body, can now be lifted out to provide unrestricted access to the internal electronics. This step also opens up the rear slot used to route power cables through. This slot could even be used to route any additional cables to the Raspberry Pi, say for attaching a monitor or other peripherals for debugging purposes.

3D printed bearing surfaces

The Kinect has an internal motor which we will use to tilt the 'head' of CALL-E up and down. Since we want CALL-E to be able to really look at the user, we needed another axis of rotation; pan. We will be using one of the servo's included so-called 'horns', as it features the exact spline needed to properly connect to the servo. This spline has such tight features and tolerances that we prefer to attach our 3D prints to this horn, rather than to the servo directly. We cannot reliably 3D print this horn's spline. This horn will be screwed into a 3D printed base in which the Kinect's base sits. This base will be printed with a concentric bottom layer pattern, and so will the surface this base rests on. This combination makes for two surfaces that experience very little friction when rotating, even when a significant normal force is applied. In our case, this normal force will be applied by the (cantilevered) weight of the Kinect. The movable arm features a slightly different approach since the bearing surface, in that case, is cylindrical rather than planar. This allowed for the arm to bear directly into the shell of the main body, without the need to add much complexity to the already difficult to manufacture body shell.

Software

Voice control

We will be using Mycroft [37] as our platform for voice control. In order to set our own 'wakeword', and have CALL-E start listening to the user as soon as he or she calls its name, we used PocketSphinx [38]. Ideally, we could train the integrated wakeword detection neural network to recognise the wakeword and ignore everything else, but after some research online we realised this would require far more data than we could reasonably collect. We, therefore, went with an inferior, but certainly quicker strategy, namely phoneme characterisation using PocketSphinx. We translated the phrase "Hey CALL-E" into phonemes using the phoneme set used in the CMU Pronouncing Dictionary [39]. The phrase becomes: 'HH EY . K AH L . IY .' The periods indicate pauses in speech, or generally whitespaces.
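As a rough sketch of how such a wakeword could be registered, the snippet below builds the relevant part of a Mycroft user configuration and prints it as JSON. The `listener` and `hotwords` keys follow the layout of Mycroft's configuration file as far as we know it; the threshold value is an assumption that would need tuning, and the exact settings used for CALL-E are not recorded here.

```python
import json

WAKE_WORD = "hey call-e"

# Fragment of a Mycroft user configuration (mycroft.conf).
# The threshold is an assumed starting value, not the one used for CALL-E.
config = {
    "listener": {"wake_word": WAKE_WORD},
    "hotwords": {
        WAKE_WORD: {
            "module": "pocketsphinx",
            "phonemes": "HH EY . K AH L . IY .",
            "threshold": 1e-30,
        }
    },
}

print(json.dumps(config, indent=2))
```

Lowering the threshold generally makes PocketSphinx trigger more easily, which trades false negatives for false positives.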

Decisions

The communication with our robot is two-way: either the user starts the interaction, or the robot starts the interaction with the user (because of the need for exercising, for example). The latter takes place via a decision tree [40]. A decision tree is a tree of conditions that is followed from the root: at each node a condition on the input is checked, and the leaf that is reached determines the outcome. So, the decision tree has several input features and one output.

A decision tree is trained by determining which choices give the most information. The features that we collected were:

- Average noise level over the past 60 minutes

- Current noise level

- Time of day

- Time since the last exercise

- Time since the last call

- Action (call, exercise, play news or do nothing) (label)
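The idea of choosing the split that gives the most information can be sketched with entropy-based information gain. This is one common criterion and an assumption on our side; the report does not state which criterion the training program used. The function names and the dictionary-based row format are hypothetical.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * log2(n / total) for n in Counter(labels).values())


def information_gain(rows, labels, feature, threshold):
    """Entropy reduction obtained by splitting on rows[i][feature] <= threshold."""
    left = [lab for row, lab in zip(rows, labels) if row[feature] <= threshold]
    right = [lab for row, lab in zip(rows, labels) if row[feature] > threshold]
    if not left or not right:
        return 0.0  # a split that separates nothing gives no information
    weighted = (len(left) * entropy(left) + len(right) * entropy(right)) / len(labels)
    return entropy(labels) - weighted
```

A training procedure would evaluate this gain for every feature and candidate threshold and split on the best one, repeating the process for each resulting subset.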

Based on these inputs, we generated a random dataset (without labels). Because of the current (corona) circumstances, it was not possible for us to gather the data ourselves using the sensors. To circumvent this problem, we scaled all features to a normalised range (e.g. from 0 to 1), which allowed us to train the decision tree on random data. After the random data had been generated, we labeled it with what we think the robot should do. A training program then generated a decision tree that could run in the background while the robot was active: it now has brains! Although the decision tree was trained, we chose not to use it during the demonstration because it was too unreliable. Instead, a manual decision tree was programmed.
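A manual decision tree over the normalised features could look roughly like the sketch below. All thresholds and the order of the checks are hypothetical and only illustrate the structure; the rules that were actually programmed for the demonstration are not reproduced here. The time of day is assumed to be scaled so that 0 is midnight and 1 is the following midnight.

```python
def choose_action(avg_noise, current_noise, time_of_day,
                  since_exercise, since_call):
    """Hand-written decision tree; every input is normalised to [0, 1].

    All thresholds are hypothetical examples.
    """
    if time_of_day < 8 / 24 or time_of_day > 20 / 24:
        return "nothing"  # outside 8 am to 8 pm: do not disturb the user
    if current_noise > 0.6:
        return "nothing"  # the user seems to be busy or to have company
    if avg_noise < 0.2 and since_call > 0.5:
        return "call"  # long silence and no recent call
    if since_exercise > 0.7:
        return "exercise"
    return "news"
```

For example, `choose_action(0.1, 0.1, 0.5, 0.2, 0.9)` describes a quiet afternoon long after the last call and yields `"call"`.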

Vision

The robot will use a Microsoft Kinect (v1) for vision-based input. It will provide a way for the robot to, for example, look at the interacting user to make it feel like it is actually listening to the user and also help the user do exercises.

As the core of our software, we will use the open-source Robot Operating System (ROS). ROS provides a very advanced, reliable foundation for robotic applications. It will work in conjunction with OpenNI to provide our system with the skeleton tracking data needed for helping the user do exercises, like jumping jacks. Our initial plan was to run all of this on the Raspberry Pi 4B, using Ubuntu MATE 18.04. However, we ran into some issues getting the robotics software running on Ubuntu 18.04. The software needed to interact with the Kinect v1 was built for older versions of Ubuntu and ROS, and the ARM architecture of the Raspberry Pi was an additional issue for this software. After some thorough attempts at getting the software running, we decided to take the vision part out of the Raspberry Pi and run it on an external computer. After deciding on this, we were able to quickly implement and run the computer vision software. It is unfortunate that we cannot run this on the Raspberry Pi at this time, but it means that we can move the project forward. Moreover, looking at the software running on our external computer, we found that the computer vision software was more resource-intensive (on both CPU and GPU) than we anticipated, which also increased our doubts that the Raspberry Pi would be able to run this at all.

Nevertheless, we would like to take another look at trying to get the software to run on the Pi, as then no external computers would be necessary and it would all be contained in the robot casing. In the ideal situation, as we would expand the features the vision is used for, we might opt to use a different single-board computer, like the NVIDIA Jetson. These boards are much more powerful with regards to interpreting the camera data and doing Artificial Intelligence in real-time.

Physical Exercises

We use skeleton tracking to help the user do their exercises and stay active and healthy. We do this by utilizing the depth information provided by the Kinect, in combination with OpenNI and NiTE. NiTE is a proprietary piece of software that provides access to skeleton tracking data from the sensor. This skeleton tracking data is then published to ROS and, from this central system, fed into a neural network built with PyBrain.

For this demo version of the Call-E robot, we will be focussing on one exercise, namely jumping jacks. We use feedforward neural networks trained by backpropagation on our own training dataset. We created three Python scripts: a data recorder that records the output of the skeleton tracker, a trainer that trains a network and an executor that runs a trained network. We record the different stages of a jumping jack: 'wide', 'small' and 'neutral'.

The 'wide' gesture is used for detecting when a user is moving from the left state to the right state and the 'small' gesture from the right to the left state. As the user is not always performing a gesture, we added a 'neutral' gesture without any motion. This catches all states where the user is in neither the 'wide' nor the 'small' state, i.e. is idle. We record data for 1 second before creating a data point. For each state, we have 30 data points, so in total, we train the network on 90 data points, each with 1 second of gesture data. We used 3000 epochs to train a reasonable model for recognizing the states of a jumping jack.

To integrate this with the rest of our robot, we publish events back to ROS, which our other modules can subscribe to and handle, for example by sending the state to our interface running on the iPad. When we recognize a 'small' state followed by a 'wide' state, we know the user has done a jumping jack.
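The counting rule described above can be sketched as a small state machine over the sequence of recognised states. This is a minimal illustration, not the executor script itself; the function name is hypothetical, and letting 'neutral' states in between leave a pending 'small' state untouched is an assumption.

```python
def count_jumping_jacks(states):
    """Count one jumping jack for every 'small' state later followed by 'wide'."""
    count = 0
    seen_small = False
    for state in states:
        if state == "small":
            seen_small = True
        elif state == "wide" and seen_small:
            count += 1
            seen_small = False
        # 'neutral' (or a repeated 'wide') does not change the pending state
    return count
```

Each time the count increases, an event could be published to ROS so that the interface can update the number shown to the user.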

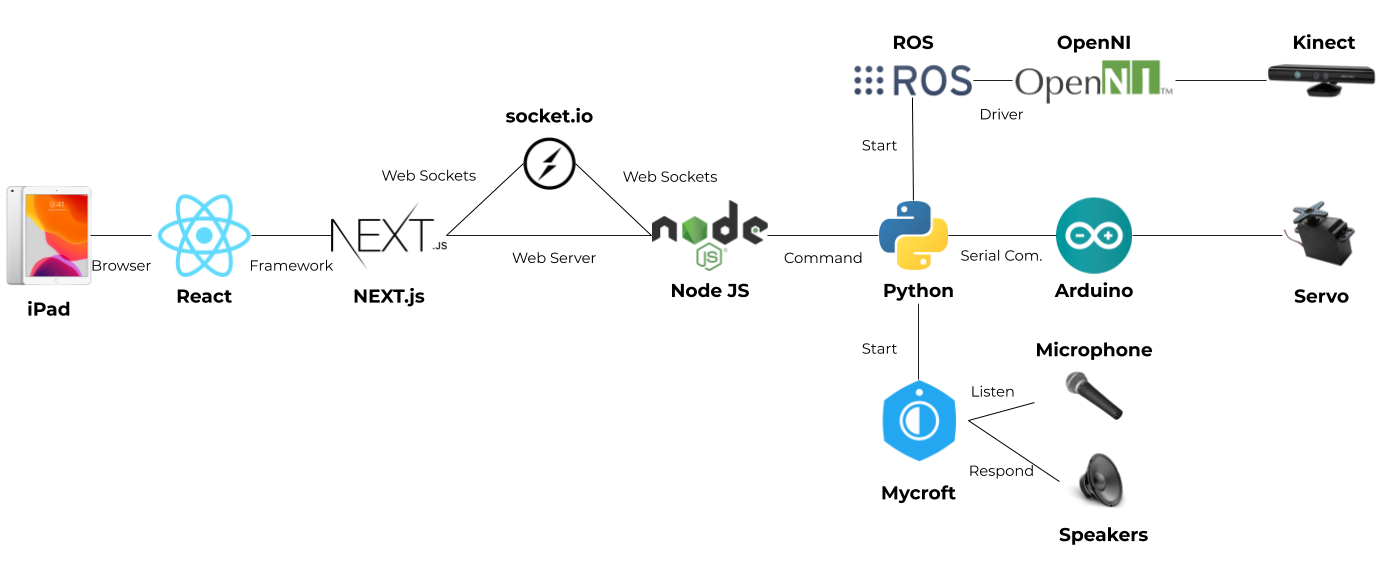

System Overview Diagram

Technical Description

As can be seen in the system overview diagram, the app that runs on the iPad screen is a web app. This web app is created using the front-end framework React, which makes it easy to create highly dynamic web pages like the frontend of Call-E. To ensure fast performance, Next.js is used. Next.js can pre-generate dynamic pages, which minimises the time it takes until the user can interact with the page.

Because the robot consists of many different systems working together, a stable and reliable communication platform between these systems was required. We chose web sockets to ensure low-latency packet delivery. One of the systems that needs to be connected is ROS. To do so, a small TCP connection is established between the web server and ROS, exchanging simple messages like 'weather' and 'news' to indicate the user's actions. If necessary, the web server decides to forward a packet to one of the other systems. For example, when the user opens the exercises page, ROS is notified, such that it can enable the Kinect. Then, the jumping jack count is updated with a message from ROS to the web server.
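The forwarding decision made by the web server can be pictured as a simple lookup from message name to receiving systems. The sketch below is only an illustration: 'weather' and 'news' are message names mentioned above, while the other message names, the target names and the routing table itself are hypothetical.

```python
# Hypothetical routing table: message name -> systems that should receive it.
ROUTES = {
    "exercise": ["ros"],           # user opened the exercises page: enable the Kinect
    "jumping_jack": ["frontend"],  # ROS reports a new count: update the page
    "news": ["mycroft"],
    "weather": ["mycroft"],
}


def route(message):
    """Return the systems a message should be forwarded to (empty if none)."""
    return ROUTES.get(message, [])
```

Keeping the table in one place means that a new feature only needs a new entry, without changes to the systems that send the messages.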

Another system that should be connected to the frontend is Mycroft. Mycroft has support for a message bus, allowing us to quickly intercept and transmit actions that Mycroft should perform. This makes it easy to let Mycroft speak custom phrases.

Every 30 minutes, a dedicated system checks whether the robot should take the initiative, as described above. Based on the inputs that it collected (such as the current microphone volume), it can choose to make the user do exercises or play the news.
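As an illustration of letting Mycroft speak a custom phrase, the sketch below builds a `speak` message in the JSON format used on the Mycroft message bus (a `type`, a `data` object and a `context` object). Sending it requires a websocket connection to the bus, which on a default installation is assumed to listen on `ws://localhost:8181/core`; that part is left out so the example stays self-contained. The function name is hypothetical.

```python
import json


def speak_message(utterance):
    """Serialise a 'speak' message for the Mycroft message bus."""
    return json.dumps({
        "type": "speak",
        "data": {"utterance": utterance},
        "context": {},
    })


print(speak_message("Time for some jumping jacks!"))
```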

Testing

User tests

To make sure that the user needs are being met, some user tests will have to be conducted. These tests will have to measure each individual user need. Due to current circumstances, our options were greatly limited and thus what we would have liked to do will be described. Actual user testing has not taken place during the project.

Voice recognition, accepted error rates

The first iteration of CALL-E has arrived. As expected, it did not work perfectly: CALL-E’s voice recognition system has both a higher false-positive and a higher false-negative rate than desired. This causes CALL-E to move its hand up even when it is not called, which could be seen as annoying. As the arm movement is part of the interaction with the user, simply disabling the hand movement is undesirable, and thus another approach is taken, namely trying to improve the error rates. While improving the model on both error rates would be ideal, a trade-off between the two is more realistic.

The goal of this test is to find out what rates of false positives and false negatives are desired by the user. This could then be used to help determine what trade-offs would be desired by the user if the current system is non-optimal.

There are two things to figure out before optimization can occur. Firstly, it needs to be checked whether there is a maximum amount of errors the user accepts and how much this would be. Secondly, it needs to be checked whether the reduction of one type of error can compensate for the error of the other type and how strong this effect is.

To measure these things an experiment would be conducted. In this experiment, the user will be asked to ‘interact with CALL-E’ through voice commands. In reality, the experimenter will control whether CALL-E reacts. The controlled variables will be the false positive rate and the false-negative rate. The measured variable will be the satisfaction rate of the user. This will be measured through a questionnaire afterward. Another preferred option would be using physiologically based methods, but the current situation limits this. Instead, the participant will be asked at what point they think CALL-E has made too many errors and this will be noted down.

The experiment will be a between-subjects design, meaning that not every participant will go through the same conditions as the other participants. As CALL-E should be applicable for a general audience, a within-subjects design would not add extra value but would add extra costs. There will be a control group for whom CALL-E never makes an error. Since our main target group is the elderly, the participants would ideally also be elderly. A short overview of the design can be seen in the table underneath, with X, Y and Z being the dependent variables.

| Group | False-positive rate | False-negative rate | Total false-positive | Total false-negative | Satisfaction grade |

|---|---|---|---|---|---|

| Control group | 0.00 | 0.00 | Xc | Yc | Zc |

| Group 1 | 0.50 | 0.50 | X1 | Y1 | Z1 |

| Group 2 | 0.25 | 0.75 | X2 | Y2 | Z2 |

| Group 3 | 0.75 | 0.25 | X3 | Y3 | Z3 |
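To keep the experimenter's behaviour consistent across participants, the decision whether CALL-E reacts could be drawn from the condition's error rates. The sketch below is one possible interpretation: the false-negative rate is taken as the probability of ignoring a genuine call, and the false-positive rate as the probability of reacting at a moment when CALL-E was not called. The group keys and the function name are hypothetical.

```python
import random

# (false-positive rate, false-negative rate) per condition, as in the table above.
CONDITIONS = {
    "control": (0.00, 0.00),
    "group1": (0.50, 0.50),
    "group2": (0.25, 0.75),
    "group3": (0.75, 0.25),
}


def should_react(group, user_called, rng=random):
    """Decide whether CALL-E reacts at this moment for the given condition."""
    fp_rate, fn_rate = CONDITIONS[group]
    if user_called:
        return rng.random() >= fn_rate  # ignore the call with probability fn_rate
    return rng.random() < fp_rate       # react spuriously with probability fp_rate
```

Passing a seeded `random.Random` instance as `rng` makes a session reproducible, which helps when the totals X and Y are compared afterwards.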

Interface testing

CALL-E’s interface is designed to facilitate user experience and accessibility. The current design of CALL-E is based on research on already existing solutions. So while the current design is supported by research it is still to be tested by the user group.

The first goal of the test is to find out where improvements can be made. The second goal of the user test is to find out whether the user group likes the design of the interface, as releasing CALL-E while the design is lacking would be unwise.

To test the interface, a think-aloud (TA) protocol will be used. In TA, the participant is asked to vocalise their thoughts. It is the job of the experimenter to make sure the participant keeps thinking out loud and to record the user.

During the test, the user will be given tasks that require them to find the location of each of the features as if they were going to use them. This way the test does not depend on the functionality of CALL-E but only on the design.

The list of tasks is as follows (the order will mostly be randomised to make sure no order effect takes place).

| Tasks |

|---|

| Find out where the help button is (will always be first) |

| Find where (video)calls can be made |

| Find where the helpline is |

| Find where the internet browser can be found |

| Find out what kind of weather it will be in about an hour |

| Find out where the meditation tool is |

| Find out where you can put on some music |

| Find out where you can watch some videos |

| Find out how to change the brightness |

| Find out how to alter the text size |

| Find out how to bold the text of the interface |
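Generating the task order per participant could be done as in the sketch below, which keeps the help-button task first and shuffles the rest. The function name is hypothetical and the task list is shortened for brevity.

```python
import random

TASKS = [
    "Find out where the help button is",  # always first
    "Find where (video)calls can be made",
    "Find where the helpline is",
    "Find where the internet browser can be found",
    "Find out how to alter the text size",
]


def task_order(tasks, rng=random):
    """Return the tasks with the first one fixed and the others shuffled."""
    first, rest = tasks[0], list(tasks[1:])
    rng.shuffle(rest)
    return [first] + rest
```

Using a separate seeded `random.Random` per participant would allow the order each participant received to be reconstructed later.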

Afterward, a short survey will be taken to check how satisfied the participants are with the product and to leave some room open for comments. Based on the recordings and comments of the participants, issues can be located and potentially solved.

Feature testing

The current features of CALL-E include access to the news, access to the weather, a jumping jack counter, voice commands, (video)calls, access to the helpline of TUe and access to Wikipedia.

The first goal of the test is to find out how much the user enjoys the features. The second goal is to find the necessity of the feature. Features that are enjoyed are not always necessary for CALL-E to fulfill its goal. The last goal is to find out where potential issues lie.

The first subsection of features is ‘calls’. This consists of the video calls, audio calls, the helpline, the exerciser, news, weather, and the browser. To test whether the user likes the feature they will be given the task to use each of the individual features and give feedback on how much they like the feature. This will be done through a grade from 1-7 (1 being very unpleasant and 7 being very pleasant). These results will be recorded. They will also be asked whether they enjoy having the feature on CALL-E specifically or whether they would likely use another product for the same goal and why. After the testing of each feature, the experimenter will ask for any comments from the participant.

The second subsection of features is ‘pastime’. This consists of exercise and the news. As in the previous test, participants will be asked to try each feature and rate it in the same way. Additionally, they will be asked how bored or excited they were, as a measure of boredom reduction. For the exercise part, they need to actually exercise and should therefore be warned beforehand that the test requires this. Again, they will be asked whether they would rather use another product for the same goal.

The remaining features are the weather and the browser. The participant will be given tasks that require answering certain questions with the help of these features. The time it takes the participant to answer will be taken as a measure of how well CALL-E gives access to information. No special warnings are required for these features, and they will otherwise be tested in the same way as in the previous test.

The test will end with a request for comments on CALL-E in general, and participants will be asked whether any desired features are missing.

The participants would ideally be elderly people who are able to exercise. For those who cannot exercise, the exercise part can be skipped. Apart from this, every participant will do the same tasks, though not in the same order, to prevent order effects.

The user needs, extensive research

After CALL-E has been updated according to the results of the previously mentioned tests, it will be tested whether CALL-E is ready for the market. This is done by testing whether CALL-E fulfills the four user needs that were found from research. Note that the process would very likely be iterative, so going back to the previous stage is not unlikely.

Leisure

The first user need is ‘Leisure (reduction of boredom)’. Boredom is considered to be a state of low arousal and slightly below-average valence. Boredom would be measured by first measuring the arousal level through physiological measurements and then measuring the valence with a Self-Assessment Manikin. The goal is to reach higher arousal levels and more positive emotions, of which more positive emotions is the more important. Currently, the only implemented feature with a partial focus on leisure is the exercise part. Given the current limitations, the physiological measurements would be replaced by a survey asking users how bored they were.

Social life

The second user need is ‘Social life (social activeness)’. Over the course of a month, CALL-E will suggest making calls. CALL-E will keep track of how often a suggestion is followed and of how often the user calls others without a suggestion. These measures will be recorded as a function of time, to see whether they increase with longer exposure to CALL-E. How often the user follows CALL-E's suggestions will be taken as CALL-E's ability to convince the user to be more socially active, and the amount of time the user spends calling and interacting with others will be the measure of social activeness. The main goal is to increase social activeness.
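To illustrate how these measures could be derived from a call log, the sketch below groups events per week and computes the share of suggestions that were followed, the number of unprompted calls, and the total call time. The `CallEvent` record and its fields are hypothetical; CALL-E does not currently store data in this form.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CallEvent:
    """One logged event (hypothetical log format, not CALL-E's actual one)."""
    day: int              # days since CALL-E was placed in the home, starting at 0
    suggested: bool       # True if CALL-E proposed making a call
    call_made: bool       # True if the user actually made a call
    minutes: float = 0.0  # duration of the call, 0 if no call was made


def weekly_summary(events):
    """Summarise social activeness per week of exposure to CALL-E."""
    weeks = defaultdict(
        lambda: {"suggestions": 0, "accepted": 0, "unprompted": 0, "minutes": 0.0}
    )
    for event in events:
        week = weeks[event.day // 7]
        if event.suggested:
            week["suggestions"] += 1
            if event.call_made:
                week["accepted"] += 1
        elif event.call_made:
            week["unprompted"] += 1
        if event.call_made:
            week["minutes"] += event.minutes

    summary = {}
    for index, week in sorted(weeks.items()):
        # The acceptance rate is undefined in a week without suggestions.
        rate = week["accepted"] / week["suggestions"] if week["suggestions"] else None
        summary[index] = {
            "acceptance_rate": rate,
            "unprompted_calls": week["unprompted"],
            "call_minutes": week["minutes"],
        }
    return summary
```

Comparing the per-week values over the month then shows whether the acceptance rate and call time rise with longer exposure.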

Access to information

Access to information will already be tested in the simpler tests and does not require an extensive variant.

User's health

The last user need is 'user's health'. User health would be measured by first measuring how much the user exercises before the introduction of CALL-E and using this as a baseline. The amount of exercise the user does is the measured variable, and the goal is to increase it. CALL-E would be placed in the participant's house for about a month and would record the amount of exercise the user does (while CALL-E is awake).

Component tests

Future vision

The development of CALL-E was constrained by time and the fact that our team had to work individually. However, we believe that the proposed system holds great potential and could be further improved.

An important step in the improvement process would be a user test. Currently, most of the assistant’s functionalities are based on a literature review and need to be validated. There may be features that we did not consider, or features that the target group does not find useful. The same goes for the choices made for the interface appearance: although they are supported by the literature, they should be tested with users from the target group. Moreover, future versions of CALL-E should be regularly updated based on user feedback. It is likely that, with increased use of the assistant, users will become more familiar with the system and will expect it to expand its functionalities.

One possibility for enhancing CALL-E’s functionality is to integrate existing products with the system. There are many applications aimed at improving the quality of life of the elderly. For example, the Dutch company MedApp provides a reminder-based system to help people manage their medicine use [41]. Currently, the company sends notifications to phones and tablets and is exploring the possibilities of extending its system to everyday objects. Assuming that CALL-E is used on a daily basis, it could be integrated with MedApp and deployed for medication alerts.

Another path for improvement concerns CALL-E’s way of interacting and communicating. An important step for enhancing the user experience would be the introduction of non-verbal communication and the improvement of verbal human-robot communication. Moreover, a future version of CALL-E could become more personalised and learn users' individual preferences.

Presentation

To conclude the project, we had to prepare a presentation. Rather than taking the conventional route of creating a video, we opted for a livestream via YouTube Live. This allowed us to give a live demo of the device, which we felt did our work more justice. We used several Apple devices as cameras, together with Open Broadcaster Software, to stream the presentation to YouTube Live. We chose YouTube as the platform because it preserves video quality better than Microsoft Teams (via either webcam input or screen sharing). However, YouTube Live introduced a small delay of 5 seconds, so we held occasional question rounds after presenting a part.

Due to COVID-19, we all worked separately on parts of the project, so bringing everything together was quite a challenge. We decided that Matthijs would go to Sietze and that they would combine all the pieces into the final product for the demo. It took about one and a half days to put together the separate modules (the iPad interface, the physical device and the vision part) and to set up all of the cameras, which gave viewers different perspectives. In the remaining half day we gave the actual presentation. We recorded the whole livestream, with the exception of the questions, so that we could create a slightly shorter video of the presentation for the teachers to review later.

Conclusions

The proposed system was designed for the elderly who need to stay home in order to practice social distancing. However, the universality of CALL-E’s functions makes it a useful assistant in everyday life, regardless of why a person spends much time at home. At the beginning of the project, we set three main objectives that the assistant should meet. The first objective, reduction of boredom, is addressed by adding features for physical exercise. In addition, the user can make audio and video calls or contact a helpline, which serves the second objective: improving communication within a social network. The third objective, keeping people informed, is realised through access to news services with highlighted pandemic-related information. CALL-E uses voice commands to encourage the user to engage in one of the described activities. CALL-E is designed to assist and motivate, but not to impose a new routine on users.

Due to time constraints, not all functions are fully realised, but we believe that CALL-E holds great potential and could be successfully further developed.

Workload

As a group, we did not log the number of hours that we spent on the project. During this project, we felt that everybody put about the same amount of effort into making the project a success.

Peer review

We all anonymously gave everyone in the group a grade on a scale of one to ten. The five grades each person received were averaged, and these per-person averages were averaged again to obtain an overall average. Each person's delta is the difference between their own average and this overall average. The deltas were computed to be the following:

| Student | Delta |
|---|---|
| Bart | +0.1 |
| Bryan | -0.6 |
| Edyta | -0.1 |
| Matthijs | +0.3 |
| Sietze | +0.3 |
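The calculation behind these deltas can be sketched as follows. The function name `peer_deltas` and the example grades are purely illustrative (the individual grades were anonymous and are not reproduced here). By construction the deltas sum to zero up to rounding, which matches the values in the table.

```python
from statistics import mean


def peer_deltas(grades_received):
    """Map each student to (own average grade) minus (overall average).

    grades_received: dict of student name -> list of grades received.
    """
    per_student = {name: mean(grades) for name, grades in grades_received.items()}
    overall = mean(list(per_student.values()))
    return {name: round(avg - overall, 1) for name, avg in per_student.items()}


if __name__ == "__main__":
    # Hypothetical grades for three fictional students, five grades each.
    example = {
        "Student A": [8, 8, 8, 8, 8],
        "Student B": [7, 7, 7, 7, 7],
        "Student C": [9, 9, 9, 9, 9],
    }
    for name, delta in peer_deltas(example).items():
        print(f"{name}: {delta:+.1f}")
```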

References

- ↑ Infographic: What Share of the World Population Is Already on COVID-19 Lockdown? Buchholz, K. & Richter, F. (April 3, 2020)

- ↑ 2.0 2.1 2.2 Psychological Impact of Quarantine and How to Reduce It: Rapid Review of the Evidence Brooks, S. K., Webster, R. K., Smith, L. E., Woodland, L., Wessely, S., Greenberg, N., & Rubin, G. J. (2020)

- ↑ Depression after exposure to stressful events: lessons learned from the severe acute respiratory syndrome epidemic. Comprehensive Psychiatry, 53(1), 15–23 Liu, X., Kakade, M., Fuller, C. J., Fan, B., Fang, Y., Kong, J., Wu, P. (2012)

- ↑ Leisure Participation and Enjoyment Among the Elderly: Individual Characteristics and Sociability. Chen & Fu (2008). https://www.researchgate.net/profile/Su_Yen_Chen/publication/233269125_Leisure_Participation_and_Enjoyment_Among_the_Elderly_Individual_Characteristics_and_Sociability/links/5689f04908ae1975839ac426.pdf

- ↑ Computer use by older adults: A multi-disciplinary review. Wagner, Hassanein & Head (2010). https://www.sciencedirect.com/science/article/pii/S0747563210000695

- ↑ Integrative Review of Research Related to Meditation, Spirituality, and the Elderly. Lindberg (2005). https://www.sciencedirect.com/science/article/pii/S0197457205003794

- ↑ Uses of Music and Psychological Well-Being Among the Elderly. Laukka (2006). https://link.springer.com/article/10.1007/s10902-006-9024-3

- ↑ 8.0 8.1 Feil-Seifer, D., & Mataric, M. (n.d.). Socially Assistive Robotics. 9th International Conference on Rehabilitation Robotics, 2005. ICORR 2005. doi:10.1109/icorr.2005.1501143

- ↑ Tapus, A., Tapus, C., & Mataric, M. J. (2009). The use of socially assistive robots in the design of intelligent cognitive therapies for people with dementia. 2009 IEEE International Conference on Rehabilitation Robotics. doi:10.1109/icorr.2009.5209501

- ↑ 10.0 10.1 US20160334799A1: Method and System for Transporting Inventory Items, Amazon Technologies Inc, Amazon Robotics LLC. (Nov 17, 2016) Retrieved April 26, 2020

- ↑ 11.0 11.1 CA2654260: System and Method for Generating a Path for a Mobile Drive Unit, Amazon Technologies Inc. (November 27, 2012) Retrieved April 26, 2020.

- ↑ US8220710B2: System and Method for Positioning a Mobile Drive Unit, Amazon Technologies Inc. (July 17, 2012) Retrieved April 26, 2020.

- ↑ US20130302132A1: System and Method for Maneuvering a Mobile Drive Unit, Amazon Technologies Inc. (Nov 14, 2013) Retrieved April 26, 2020.

- ↑ 14.0 14.1 US10222805B2: Systems and Methods for Performing Simultaneous Localization and Mapping using Machine Vision Systems, Irobot Corp. (March 5, 2019) Retrieved April 26, 2020.

- ↑ US10162359B2: Autonomous Coverage Robot, Irobot Corp. (Dec 25, 2018) Retrieved April 26, 2020.

- ↑ FastSLAM: A Factored Solution to the Simultaneous Localization and Mapping Problem, M. Montemerlo et al.

- ↑ 17.0 17.1 17.2 Defining socially assistive robotics. Feil-Seifer D., (2005)

- ↑ 18.0 18.1 Attitudes Towards Socially Assistive Robots in Intelligent Homes: Results From Laboratory Studies and Field Trials. Torta, E., Oberzaucher, J., Werner, F., Cuijpers, R. H., & Juola, J. F. (2013)

- ↑ Etiquette: Structured Interaction in Humans and Robots. Ogden B. & Dautenhahn K. (2000)

- ↑ Amodei, D., Ananthanarayanan, S., Anubhai, R., Bai, J., Battenberg, E., Case, C., ... & Chen, J. (2016, June). Deep speech 2: End-to-end speech recognition in English and mandarin. In International conference on machine learning (pp. 173-182)

- ↑ Xiong, W., Wu, L., Alleva, F., Droppo, J., Huang, X., & Stolcke, A. (2018, April). The Microsoft 2017 conversational speech recognition system. In 2018 IEEE international conference on acoustics, speech and signal processing (ICASSP) (pp. 5934-5938). IEEE

- ↑ Yuxuan Wang, RJ Skerry-Ryan, Daisy Stanton, Yonghui Wu, Ron J Weiss, Navdeep Jaitly, Zongheng Yang, Ying Xiao, Zhifeng Chen, Samy Bengio, et al. Tacotron: Towards end-to-end speech synthesis. arXiv preprint arXiv:1703.10135, 2017

- ↑ Jonathan Shen, Ruoming Pang, Ron J Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, et al. Natural tts synthesis by conditioning wavenet on mel spectrogram predictions. In 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 4779–4783. IEEE, 2018.

- ↑ Wei Ping, Kainan Peng, Andrew Gibiansky, Sercan O. Arik, Ajay Kannan, Sharan Narang, Jonathan Raiman, and John Miller. Deep voice 3: 2000-speaker neural text-to-speech. In International Conference on Learning Representations, 2018.

- ↑ Ren, Y., Ruan, Y., Tan, X., Qin, T., Zhao, S., Zhao, Z., & Liu, T. Y. (2019). Fastspeech: Fast, robust and controllable text to speech. In Advances in Neural Information Processing Systems (pp. 3165-3174)

- ↑ Torta, E. (2014). Approaching independent living with robots. (pp. 89-100)

- ↑ Robot carers, ethics, and older people. Sorell T., Draper H., (2014)

- ↑ Socially Assistive Robotics. Feil-Seifer D., Mataric M., (2011). https://ieeexplore.ieee.org/document/5751968

- ↑ An Ethical Evaluation of Human-Robot Relationships. De Graaf M., (2016)

- ↑ Review: Seven Matters of Concern of Social Robots and Older People. Frennert S., (2014)

- ↑ Robotic Nudges: The Ethics of Engineering a More Socially Just Human Being. Borenstein J., (2016)