Embedded Motion Control 2018 Group 6: Difference between revisions


Revision as of 14:47, 18 June 2018

Group members

| Name: | Report name: | Student id: |
| --- | --- | --- |
| Thomas Bosman | T.O.S.J. Bosman | 1280554 |
| Raaf Bartelds | R. Bartelds | add number |
| Josja Geijsberts | J. Geijsberts | 0896965 |
| Rokesh Gajapathy | R. Gajapathy | 1036818 |
| Tim Albu | T. Albu | 19992109 |
| Marzieh Farahani | Marzieh Farahani | Tutor |

Initial Design

Link to Initial design report

The report for the initial design can be found here.

Requirements and Specifications

Use cases for Escape Room

1. Wall and Door Detection

2. Move with a certain profile

3. Navigate

Use cases for Hospital Room

(unfinished)

1. Mapping

2. Move with a certain profile

3. Orient itself

4. Navigate

Requirements and specification list

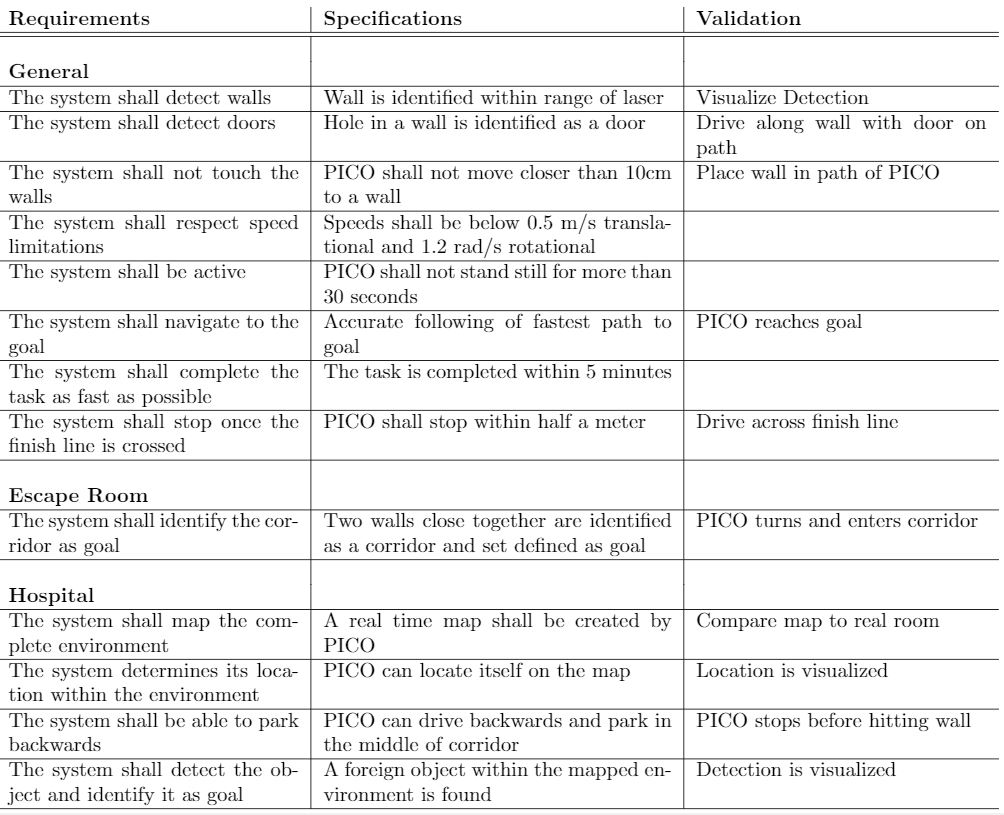

The table below enumerates the system requirements, their specifications, and how each is validated.

Functions, Components and Interfaces

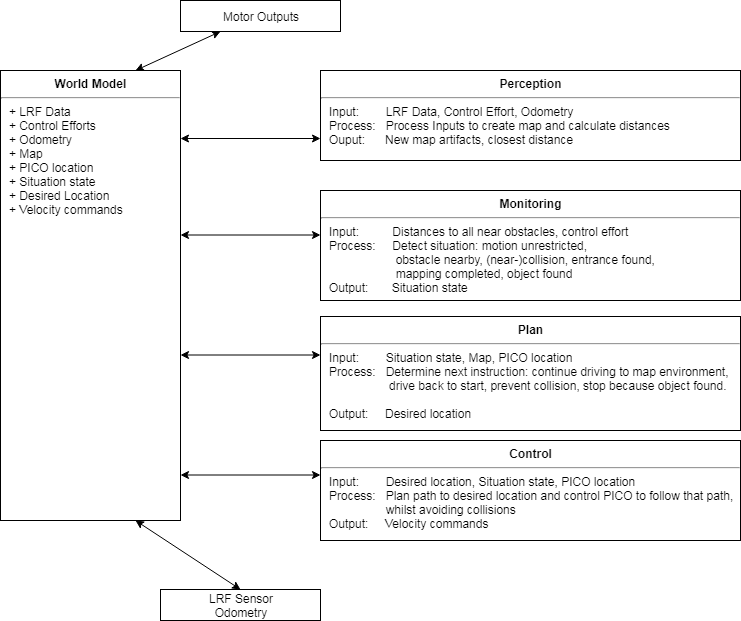

The software that will be deployed on PICO can be categorized in four different components: perception, monitoring, plan and control. They exchange information through the world model, which stores all the data. The software will have just one thread and will give control in turns to each component, in a loop: first perception, then monitoring, plan, and control. Adding multitasking in order to improve performance might be applied in a later stage of the project. Below, the functions of the four components are described. What these components will do is described for both the Escape Room Challenge (ERC) and the Hospital Challenge (HC).

The PICO robot has two sensors: a laser range finder (LRF) and an odometer. The LRF provides detailed information about the environment through its laser beams. The LRF specifications are shown in the table below.

| Specification | Values | Units |
| --- | --- | --- |
| Detectable distance | 0.01 to 10 | meters [m] |
| Scanning angle | -2 to 2 | radians [rad] |
| Angular resolution | 0.004004 | radians [rad] |
| Scanning time | 33 | milliseconds [ms] |

At each scan angle, a distance is measured relative to PICO. Each scan therefore yields an array of distances, one per scan angle, at each time instance.

The three encoders provide the odometry data, i.e. the position of PICO in the x, y and θ directions at each time instance. The LRF and odometry data play a crucial role in mapping the environment. The mapped environment is preprocessed by two major blocks, Perception and Monitoring, and passed to the World Model. The control approach for the challenge is feedforward, since the observers provide the necessary information about the environment for PICO to react accordingly.

Interfaces and I/O relations

The figure below summarizes the different components and their input/output relations.

Overview of the interface of the software structure:

The diagram below provides a graphical overview of the state machine. Not shown in the diagram are the events "Wall was hit" and "Stop". Each state checks whether these events have occurred, and if so, the state machine transitions to the state STOP. The final state machine is likely to be more complex, since some states will comprise a sub-state machine.

Code structure and components

Code Architecture

Gmapping

For the hospital challenge, the initial state of the environment (i.e. the hospital corridor and the rooms) has to be stored in some way. This can be done in various ways, one of which is mapping. By initially mapping the environment, all changes can be detected by comparing the current state of the environment to the initially mapped environment. Mapping itself can also be done in many ways. One commonly used method is SLAM (Simultaneous Localization and Mapping), which maps the environment and simultaneously improves the accuracy of the current position estimate based on previously mapped features. Many SLAM algorithms are available, one of which is provided by a ROS package called Gmapping. This package uses a ROS node named slam_gmapping that provides laser-based SLAM [1].

The library used by this ROS package is the library from OpenSlam [2]. The approach developed there uses a particle filter in which each particle carries an individual map of the environment. As the robot moves around, the particles are updated with new information about the environment, which gives an accurate estimate of the robot's location and therefore of the resulting map.

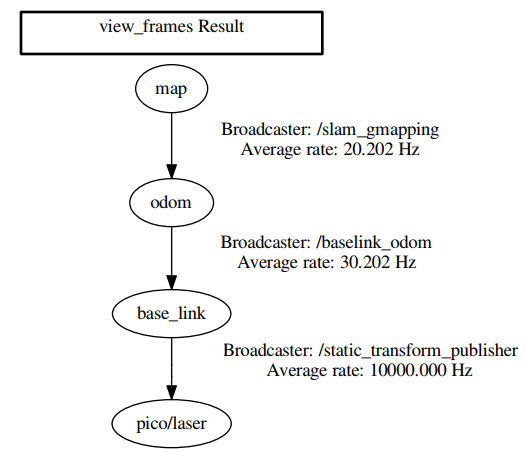

Gmapping requires three transforms between coordinate frames to be present: one between the map and the odometry frame, one between the odometry frame and base_link, and one between base_link and the laser. Gmapping provides the first transform, but the remaining two have to be developed. The second has to be made by a transform broadcaster that broadcasts the relevant information published by the odometry. The final link is merely a static transform between base_link and the laser and can therefore be published via a static transform publisher. These transforms are made using TF2 [3]. An overview of the required transforms can be requested using the view_frames command of the TF2 package, which is shown in Figure X.

Gmapping has several limitations, such as:
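As a rough illustration of how the three links chain together, the sketch below composes planar poses (x, y, θ) in plain Python rather than through TF2. The `compose` helper and all numeric values are hypothetical and only show the arithmetic; on the robot, TF2 performs this composition.

```python
import math


def compose(a, b):
    """Compose two planar transforms given as (x, y, theta).

    `a` maps frame B into frame A, `b` maps frame C into frame B;
    the result maps frame C into frame A.
    """
    ax, ay, at = a
    bx, by, bt = b
    x = ax + math.cos(at) * bx - math.sin(at) * by
    y = ay + math.sin(at) * bx + math.cos(at) * by
    # Wrap the summed angle back into (-pi, pi].
    theta = math.atan2(math.sin(at + bt), math.cos(at + bt))
    return (x, y, theta)


# Hypothetical example values, not measured on PICO.
map_to_odom = (0.10, -0.05, 0.02)       # correction estimated by SLAM
odom_to_base = (1.00, 2.00, math.pi / 2)  # integrated odometry
base_to_laser = (0.15, 0.00, 0.00)      # fixed mounting offset (static)

map_to_base = compose(map_to_odom, odom_to_base)
map_to_laser = compose(map_to_base, base_to_laser)
```

Only the middle link changes quickly while driving; the first is corrected occasionally by SLAM and the last never changes, which is why a static publisher suffices for it.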

- Limited map size

- Computation time

- Environment has to stay constant

Result:

Getting gmapping to work within the emc framework took quite some effort, but as seen below: it works!

Available functions

Wall follower

Localization and orientation

gMapping solves the simultaneous localization and mapping (SLAM) problem. It publishes information about the current position relative to the initial position. We want this position to be stored in the world model, since many decisions depend on the position of the robot. The position can be obtained by listening to the transform from the frame "map", the fixed world frame created by gMapping, to the frame "base_link", which is situated at the base of the robot. From this transform, we want to know three things: the (x, y) position relative to the initial position of the robot, and the orientation of the robot relative to its initial orientation. This is achieved by creating a listener that listens to the broadcast transform from "map" to "base_link" and saves the position and the orientation as doubles in the world model.
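A ROS transform carries its rotation as a quaternion, so the heading has to be extracted before it can be stored as a single double. The sketch below shows that conversion under the assumption that the robot moves in a plane, so only the yaw angle matters. The function names are hypothetical and the TF listener itself is not shown.

```python
import math


def yaw_from_quaternion(qx, qy, qz, qw):
    """Return the rotation about the z-axis (yaw, in radians) of a unit quaternion."""
    siny_cosp = 2.0 * (qw * qz + qx * qy)
    cosy_cosp = 1.0 - 2.0 * (qy * qy + qz * qz)
    return math.atan2(siny_cosp, cosy_cosp)


def pose_from_transform(tx, ty, qx, qy, qz, qw):
    """Reduce a map -> base_link transform to the (x, y, yaw) triple kept in the world model."""
    return (tx, ty, yaw_from_quaternion(qx, qy, qz, qw))


# Hypothetical transform: 1.2 m forward, 0.4 m left, rotated a quarter turn to the left.
half = math.pi / 4
x, y, yaw = pose_from_transform(1.2, 0.4, 0.0, 0.0, math.sin(half), math.cos(half))
```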

Parking

Finding the Door, Driving Through It

The room escape challenge and the hospital challenge have a set of basic skills in common. Among them are detecting a door and driving through it. In the following we describe a solution we considered.

Requirements:

R1. The robot shall find the position of the door.

R2. The robot shall drive through the door.

R3. At all times, the robot shall not touch the walls.

Assumptions:

A1. For the sake of simplicity, this solution makes the assumption that the robot can see all the walls around it -- that is, at any position of the robot inside the room, all the walls are within scanning distance.

A2. The robot is inside the room, at a distance from the walls that allows it to rotate without touching them -- considering the small radial movements that occur during a rotation in practice.

Design Decisions:

DD1. Based on A2, the robot shall find new information by scanning 360 degrees.

DD2. Based on A2, it can however happen that from the initial position the robot won't be able to find the door, let alone drive through it -- for instance if the initial position is close to a corner and the door is located at one of the adjacent walls. Therefore, we split our algorithm into several parts: find a position from where the robot can surely see the door, navigate there, and from there scan again to find the door. After that, drive through it.

DD3. Based on DD2, we want the robot to first find the "middle" of the room. We calculate it as the center of mass of the scanned points.

DD4. Our algorithm will perform the following:

1. Scan 360 degrees.

2. Calculate the center of mass for the scanned points, and navigate to it.

3. Scan again 360 degrees.

4. Calculate the position of the door.

5. Calculate the position of the middle of the door.

6. Drive through the middle of the door.
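Step 2 of the list above can be sketched as follows, assuming the scan has already been converted to Cartesian points in the robot frame. The function name is hypothetical, and every point is given equal weight, which is only one possible reading of "center of mass".

```python
def center_of_mass(points):
    """Return the unweighted mean (x, y) of a list of (x, y) points."""
    if not points:
        raise ValueError("at least one scanned point is required")
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    return (mean_x, mean_y)


# Hypothetical scan hitting the four corners of a 2 m x 2 m room.
target = center_of_mass([(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)])
```

Because the laser samples at a constant angular step, nearby walls contribute more points than distant ones, so an unweighted mean is pulled towards the closest wall. This may be acceptable when the goal is only to move away from a corner.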

Remark: The best solution for step 4 is to identify the door by finding the two discontinuities at the two edges of the door. In our solution, for the time being, we have instead assumed that scanning the door gives very small values (since the distance is very large, the robot returns values that should be considered invalid). This solution works for the room escape challenge.
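The simplified approach from this remark, combined with step 5, could look roughly like the sketch below. It is not the code we ran: the function name, the 0.1 m threshold and the choice of the longest gap are assumptions. It also assumes that readings where the laser hits the robot itself do not form a longer run than the door does.

```python
import math


def find_door_middle(ranges, angle_min, angle_increment, min_valid=0.1):
    """Return the (x, y) middle of the door in the robot frame, or None.

    The door is taken to be the longest run of readings below `min_valid`;
    the valid readings on either side of that run are treated as the door posts.
    """
    best_start, best_len = None, 0
    start = None
    for i, r in enumerate(ranges):
        if r < min_valid:
            if start is None:
                start = i
            length = i - start + 1
            if length > best_len:
                best_start, best_len = start, length
        else:
            start = None
    if best_start is None:
        return None  # no gap found in this scan
    left, right = best_start - 1, best_start + best_len
    if left < 0 or right >= len(ranges):
        return None  # gap touches the scan edge, so one post is not visible

    def to_xy(i):
        angle = angle_min + i * angle_increment
        return (ranges[i] * math.cos(angle), ranges[i] * math.sin(angle))

    (x1, y1), (x2, y2) = to_xy(left), to_xy(right)
    return ((x1 + x2) / 2.0, (y1 + y2) / 2.0)
```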

Remark: Step 6 can go wrong if the direction of driving through the middle of the door is not orthogonal to the door -- then the robot might drive through the door at a small angle and might touch the doorposts, or a wall very close to them. Therefore, after step 5, we should have:

6'. Find the point 40 cm in front of the middle of the door, and navigate to it.

7'. Drive from there through the middle of the door.
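Step 6' was not implemented, but the geometry is simple enough to sketch. Assuming both door posts are known in the robot frame (robot at the origin), the approach point lies on the door's normal through its middle, on the robot's side. The function name and argument layout are hypothetical.

```python
import math


def approach_point(post_a, post_b, offset=0.4):
    """Return the point `offset` metres in front of the door middle, on the robot's side.

    `post_a` and `post_b` are the (x, y) door posts in the robot frame.
    """
    mx = (post_a[0] + post_b[0]) / 2.0
    my = (post_a[1] + post_b[1]) / 2.0
    dx = post_b[0] - post_a[0]
    dy = post_b[1] - post_a[1]
    width = math.hypot(dx, dy)
    if width == 0.0:
        raise ValueError("door posts must be distinct")
    # Unit normal to the door line.
    nx, ny = -dy / width, dx / width
    # Flip the normal if it points away from the robot at the origin.
    if (-mx) * nx + (-my) * ny < 0.0:
        nx, ny = -nx, -ny
    return (mx + offset * nx, my + offset * ny)


# Hypothetical door 2 m straight ahead, 1 m wide.
waypoint = approach_point((2.0, -0.5), (2.0, 0.5))
```

Driving from this waypoint to the door middle gives a heading orthogonal to the door, which is the property the remark asks for.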

Due to lack of time, we implemented the solution with steps 1 to 6.

Result: In the following video we can see a test-case scenario.

video file link: https://drive.google.com/open?id=1Lwc4l8tbk_NFR4zeFK-r6-r6EjG1Ca2d

State machine and execution of the code

Challenges approach and evaluation

Escape Room Challenge

Simple wall-following code was used for the escape room challenge. The idea is as follows: once started, PICO first drives in a straight line, stopping 30 cm from the first wall it encounters. Once a wall is detected, PICO aligns its left side with the wall and starts following it. While following the wall, PICO tests in each loop whether it can turn left. If an obstacle is detected on the left, it checks whether it can drive forward. If it cannot drive forward, it turns right until it is aligned with the wall in front of it. Then the loop starts again. This approach is very simple but robust. As long as PICO is aligned with the wall to its left and nothing is in front of it, it drives forward. When it reaches a corner, it turns and follows the next wall. If it drives past a door, it detects empty space on its left and turns. Due to the simple geometry of the room, the code should be sufficient for the simple task of getting out.

In the following section, more details are given about the different components of the code (perception, monitoring, plan, control, world model, main).

The world model is a class with all the information stored inside. The other components (perception, monitoring, plan and control) are functions that are called at a frequency of 20 Hz by the main loop.
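The decision rule described above can be condensed into a few lines. The sketch below is an illustration rather than the code that ran on PICO: the function name, the action labels and the boolean inputs are hypothetical, and only the 30 cm stopping distance comes from the description.

```python
def next_action(following, front_distance, left_free, stop_distance=0.3):
    """Pick the next action of the wall follower.

    following      -- True once PICO has aligned with a wall on its left
    front_distance -- distance to the nearest obstacle straight ahead, in metres
    left_free      -- True if there is room to turn left
    """
    front_free = front_distance > stop_distance
    if not following:
        # Start-up phase: drive straight until the first wall, then align.
        return "drive_forward" if front_free else "align_left"
    if left_free:
        return "turn_left"      # door or end of wall on the left
    if front_free:
        return "drive_forward"  # keep following the wall
    return "turn_right"         # corner: wall on the left and in front


# One pass through a typical run, with hypothetical sensor values.
actions = [
    next_action(False, 2.0, True),
    next_action(False, 0.25, True),
    next_action(True, 1.5, False),
    next_action(True, 0.2, False),
    next_action(True, 1.5, True),
]
```

Checking the left side before the front gives the left turn priority, which is what makes the robot leave through a door as soon as it passes one.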

- World model: For this simple task, the world model is relatively simple. It contains several

- Perception: In this function, the data from the laser rangefinder is processed. First, a simple filter removes the faulty data points where the laser beams hit PICO itself, by discarding distances smaller than 10 cm. Then the index of each data point and the angle increment are used to calculate the angle at which the measurement was taken. Finally, the angle and the distance are used to convert the measurement into Cartesian coordinates.

- Monitoring: In monitoring, selected parts of the data are used to determine if there are obstacles to the left or front of the robot.

- Plan: For this challenge, the plan function does not add a lot of functionality. It relays the information of the monitoring. It was implemented because it is going to be used for the more complicated challenge and would simplify implementation.

- Control: In the control function, speed requirements are sent to the actuators of PICO depending on the positions of the obstacles.
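The perception step in the list above can be sketched as follows. The function and parameter names are hypothetical; the 10 cm threshold comes from the description, and the scan start angle and increment in the example are taken from the LRF specification table (-2 rad and 0.004004 rad).

```python
import math


def scan_to_cartesian(ranges, angle_min, angle_increment, min_range=0.10):
    """Convert a laser scan to (x, y) points in the robot frame.

    Readings closer than `min_range` are dropped, since they are assumed
    to be beams hitting the robot itself.
    """
    points = []
    for index, distance in enumerate(ranges):
        if distance < min_range:
            continue
        angle = angle_min + index * angle_increment
        points.append((distance * math.cos(angle), distance * math.sin(angle)))
    return points


# Hypothetical three-beam excerpt of a scan, using the LRF angle settings.
excerpt = scan_to_cartesian([0.05, 1.0, 2.5], angle_min=-2.0, angle_increment=0.004004)
```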

The script was simple and included some elements that were not necessary but would be required for the next challenge. They were included to ease implementation at a later stage.

Result:

Only two teams made it out of the room, one of which was us! We also had the fastest time, so we won the Escape Room Challenge!

Hospital challenge

Approach

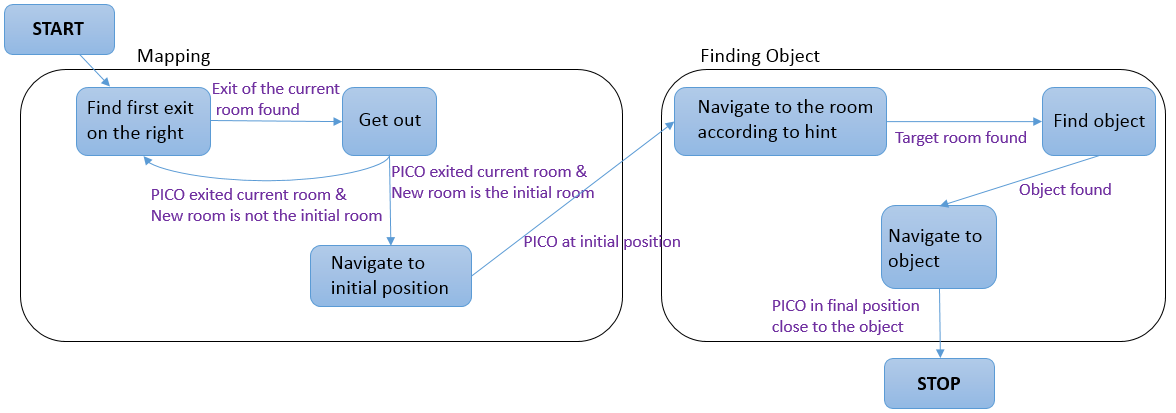

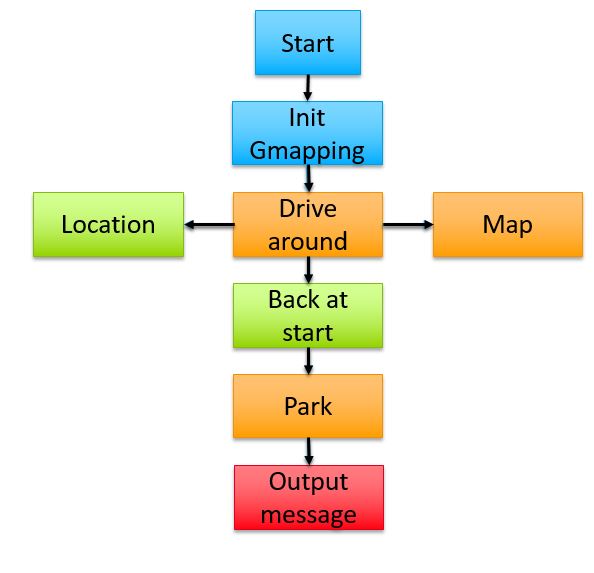

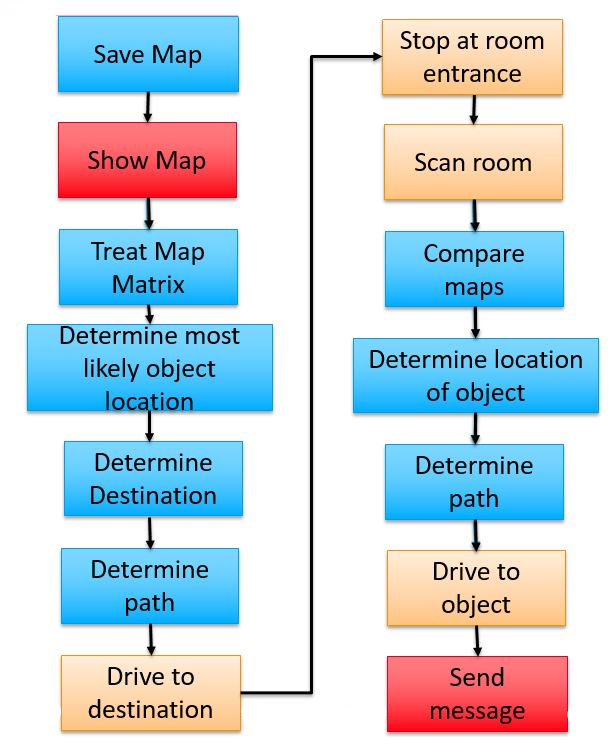

The hospital challenge consisted of three distinct tasks that had to be performed to complete the challenge. First, the entire environment had to be mapped. Then, once back at the initial position, PICO had to park backwards towards the wall. Finally, it had to find the object and drive to it.

Due to time constraints, the approach used in the challenge was a simpler version of the planned one. The planned approach is explained in figure

Conclusion

Code

Activity Log

An overview of the meetings, activities and planning can be found at: https://www.overleaf.com/read/vjgrrdnfzhtp

Old text

World model: The world model contains the prior knowledge that is available as well as information about the surroundings of PICO. The perception function stores the processed data from the laser rangefinder in the world model. Furthermore, it contains a large matrix representing the map of PICO's environment. This matrix is updated at each time step. Initially, all the values are unknown (represented by -1); they are then replaced by 100 for known occupied space and 0 for known free space. This representation allows for easy path planning by means of Dijkstra or A*. The matrix data can also be used to create semantics for the map: doors, rooms, and the corridor can be classified by clever treatment of the data. A drawback of this matrix created by gmapping is that prior knowledge is not incorporated. For example, the walls measured by mapping are sometimes not entirely straight, or the corners are not exactly orthogonal. This makes processing and recognition from the data harder.

Perception: In perception, the sensor data is processed. First, measurements with distances smaller than 10 cm are filtered out; these are instances where the laser range finder sees PICO itself. Then the distances and the angles are used to convert each data point into Cartesian coordinates. Lastly, 3 points on the left of the robot are used to check for alignment with the wall.
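To illustrate why this matrix makes path planning easy, the sketch below runs Dijkstra's algorithm directly on such a grid. It is a minimal example, not part of our code: the function name is hypothetical, only cells with value 0 are treated as traversable, and moves are limited to the four direct neighbours with unit cost.

```python
import heapq

FREE, OCCUPIED, UNKNOWN = 0, 100, -1


def shortest_path(grid, start, goal):
    """Return the list of (row, col) cells from start to goal, or None if unreachable."""
    rows, cols = len(grid), len(grid[0])
    dist = {start: 0}
    prev = {}
    queue = [(0, start)]
    while queue:
        d, cell = heapq.heappop(queue)
        if cell == goal:
            # Walk back through the predecessors to rebuild the path.
            path = [cell]
            while cell in prev:
                cell = prev[cell]
                path.append(cell)
            return path[::-1]
        if d > dist[cell]:
            continue  # stale queue entry
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == FREE:
                nd = d + 1
                if nd < dist.get((nr, nc), float("inf")):
                    dist[(nr, nc)] = nd
                    prev[(nr, nc)] = cell
                    heapq.heappush(queue, (nd, (nr, nc)))
    return None


# Hypothetical 3 x 3 map: a wall with an opening on the right.
grid = [
    [FREE, FREE, FREE],
    [OCCUPIED, OCCUPIED, FREE],
    [FREE, FREE, FREE],
]
path = shortest_path(grid, (0, 0), (2, 0))
```

Treating unknown cells as blocked keeps the robot inside mapped space; a real planner would also need to keep a safety margin around occupied cells to account for the size of the robot.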

Monitoring Map complete Location driving: In this part of the code a

Planning

Map until known space is enclosed

drive to start

bump into wall at start

map again+ compare to the previous map

drive to object

Control

follow wall better follow trajectory drive backwards