Drone Referee - MSD 2017/18

| Line 176: | Line 176: | ||

===Interfaces=== | ===Interfaces=== | ||

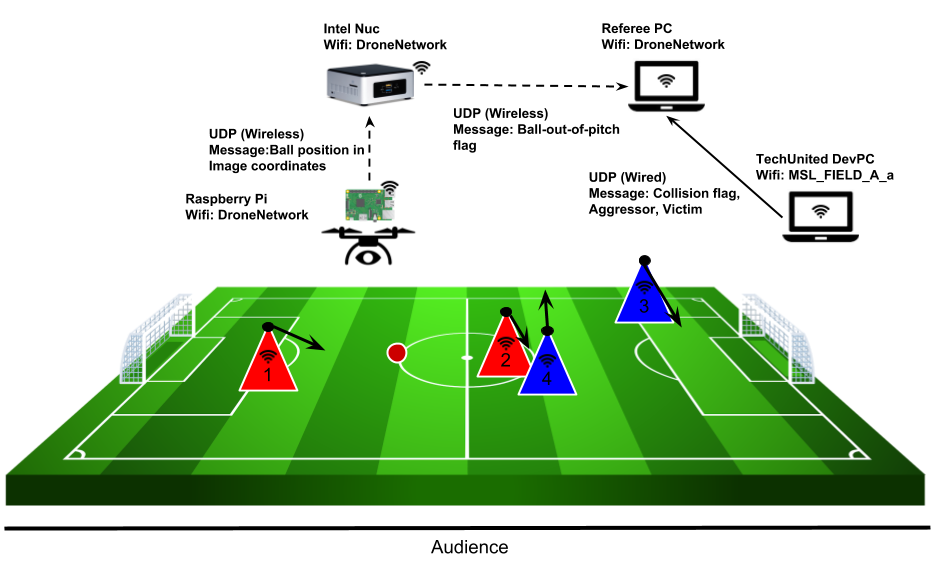

Developing additional interfaces for event detection and rule enforcement was necessary due to the following constraints. | |||

* Intel Nuc was unreliable on-board the drone. While the telemetry module and the Pixhawk helped fly the drone, a Raspberry Pi was used for the computer vision. This Raspberry Pi communicates over UDP to the Nuc. Both the Nuc and the Raspberry Pi operate over the ''DroneNetwork'' wireless network. | |||

* The collision detection utilizes the World Model of the TechUnited robots. To access this, the computer must be connected to the TechUnited wireless network. It was realized that the collision detection algorithm has nothing to do with the flight of the drone, and hence it is not necessary to broadcast the collision detection information to the Intel Nuc. Hence, this information was broadcasted directly to the remote referee over the TechUnited network. | |||

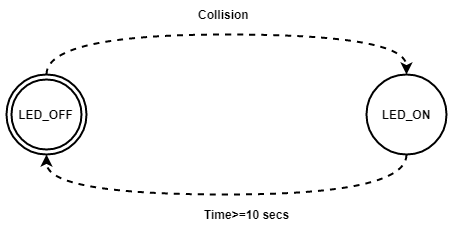

The diagram below describes all these interfaces and the data that is being transmitted/received. | |||

[[File:RuleEnforcementinterfaces.png|center|Interfaces for the event detection and rule enforcement subsystem]] | [[File:RuleEnforcementinterfaces.png|center|Interfaces for the event detection and rule enforcement subsystem]] | ||

Revision as of 09:55, 5 April 2018

Introduction

Abstract

Football is a billion-euro industry that constantly evolves through advancing technologies, which improve not only the game but also the fan experience. Most football stadiums are outfitted with state-of-the-art camera technologies that provide previously unseen vantage points to audiences worldwide. However, football matches are still refereed by humans, who make decisions based on their own visual information alone. This can lead the referee to make incorrect decisions, which might strongly affect the outcome of a game. There is a need for supporting technologies that can improve the accuracy of referee decisions. Through this project, TU Eindhoven hopes to develop a system with intelligent technology that can monitor the game in real time and make fair decisions based on observed events. This project is a first step towards that goal.

In this project, a drone is used to evaluate a football match, detect events and provide recommendations to a remote referee. The remote referee is then able to make decisions based on these recommendations from the drone. This football match is played by the university’s RoboCup robots, and, as a proof-of-concept, the drone referee is developed for this environment.

This project focuses on the design and development of a high-level system architecture and corresponding software modules on an existing quadrotor (drone). It builds upon data and recommendations from the first two generations of Mechatronics System Design trainees, with the purpose of providing a proof-of-concept Drone Referee for a 2-against-2 robot-soccer match.

Background and Context

The Drone Referee project was introduced to the PDEng Mechatronics Systems Design team of 2015. That team successfully demonstrated a proof-of-concept architecture, and the PDEng team of 2016 developed this further on an off-the-shelf drone. The challenge presented to the team of 2017 was to use the lessons of the previous teams to develop a drone referee using a new custom-made quadrotor. This drone was built and configured by a master's student, whose thesis was used as the baseline for this project.

The MSD 2017 team is made up of seven people with different technical and academic backgrounds. One project manager and two team leaders were appointed and the remaining four team-members were divided under the two team leaders. The team is organized as below:

| Name | Role | Contact |
|---|---|---|
| Siddharth Khalate | Project Manager | s.r.khalate@tue.nl |
| Mohamed Abdel-Alim | Team 1 Leader | m.a.a.h.alosta@tue.nl |
| Aditya Kamath | Team 1 | a.kamath@tue.nl |
| Bahareh Aboutalebian | Team 1 | b.aboutalebian@tue.nl |
| Sabyasachi Neogi | Team 2 Leader | s.neogi@tue.nl |
| Sahar Etedali | Team 2 | s.etedalidehkordi@tue.nl |
| Mohammad Reza Homayoun | Team 2 | m.r.homayoun@tue.nl |

Problem Description

As mentioned above, the drone referee project was also carried out by the previous two generations of the PDEng MSD. The ideas the previous generations implemented were:

- To detect ball out of pitch

- To detect collision

However, these were not demonstrated in a live game; they were a proof of concept performed in a controlled simulation environment. This year, the stakeholders expect the drone to monitor a 5-minute 2-against-2 robot soccer match. A human referee at a remote location has the drone's view of the game and a user interface that receives a set of recommendations for rule enforcement. The referee looks at the recommendations and replays and then enters a final decision via the user interface. This decision is displayed on a screen for the audience near the robot soccer field. The LEDs on the drone also change color to indicate whether a rule is being enforced and, if so, which one. Finally, the game restarts from the center after the rule is enforced. The rules to be detected and enforced were:

- Rule A: Free throw. When the ball goes out of the pitch, i.e. crosses one of the 4 lines delimiting the field, a free throw is awarded to the team that did not last touch the ball.

- Rule B: Collision detection. When two robots on the pitch touch each other, it is considered a foul.

System Objectives and Requirements

Project Scope

The scope of this project was refined during the design phase of the project. This was done due to considerable hardware issues and time constraints. The scope was narrowed down to the following deliverables.

- System Architecture
  - Task-Skill-World model
  - DSM
- Flight and Control
  - Manual and Semi-Autonomous Flight
  - Autonomous Flight
  - Hardware and Software Interfaces
- Event Detection
  - Ball-out-of-pitch Detection
  - Collision detection
  - Hardware and Software Interfaces
- Rule Enforcement and HMI
  - Supervisory Control
  - Referee/Audience GUI
  - Hardware and Software Interfaces
- Demonstration Video and Final Presentation
- Wiki page

System Architecture

Architecture Description and Methodology

Implemented System Architecture

Implementation

Flight and Control

Manual Flight

Drone Localization

Autonomous Flight

Trajectory Planning and Control

Event Detection and Enforcement

Ball Out Of Pitch

Collision Detection

Collisions between robots can be detected from the distance between them and the speeds at which they are traveling. The collision detection algorithm assumes prior knowledge of the position of each player robot. In this project, two methods of tracking the robot positions were studied and trialed: ArUco markers and TechUnited's world model.

ArUco Markers

ArUco is an OpenCV-based library for augmented reality (AR) applications. It provides dictionaries of uniquely numbered AR tags that can be printed and stuck to any surface. Given a sufficient number of tags at known positions, the library can determine the 3D position of the camera viewing them. In this application, the ArUco tags were stuck on top of each player robot and the camera was attached to the drone. The ArUco algorithm was reversed using perspective projections to determine the positions of the AR tags from the known position of the drone (localization). However, this solution has two drawbacks.

- The strategy for trajectory planning is to have the ball visible at all times. Since the priority is given to the ball, it is not necessary that all robot players are visible to the camera at all times. In certain circumstances, it is also possible that a collision occurs away from the ball and hence away from the camera’s field of view.

- In this application, the drone and the robot players will be moving at all times. At high relative speeds, the camera is unable to detect the AR tags or detects them with high latency. During trials, it was also observed that the field of view of the fish-eye camera to track these AR tags is heavily limited.

Due to these drawbacks, and considering that the drone referee will be showcased using the TechUnited robots, it was decided to use TechUnited’s infrastructure to track the player robots.
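The final step of the reversed ArUco approach, turning a tag offset seen from the drone into a field position, can be illustrated with a minimal sketch. It is not the project's implementation: it skips the perspective projection and camera calibration entirely, and assumes the tag offset is already expressed in the drone's body frame on the field plane. The names `Vec2` and `tag_world_position`, the axis conventions and the units are all hypothetical.

```cpp
#include <cassert>
#include <cmath>

// 2D point or offset on the field plane, in metres (assumed unit).
struct Vec2 {
    double x;
    double y;
};

// Hypothetical helper: converts a tag offset measured in the drone's body
// frame (x forward, y left) into world coordinates, given the drone's
// localized position and yaw (radians, counter-clockwise from world x).
Vec2 tag_world_position(Vec2 drone_xy, double drone_yaw, Vec2 tag_offset_body) {
    assert(std::isfinite(drone_yaw));
    const double c = std::cos(drone_yaw);
    const double s = std::sin(drone_yaw);
    // Rotate the body-frame offset into the world frame, then translate.
    return Vec2{drone_xy.x + c * tag_offset_body.x - s * tag_offset_body.y,
                drone_xy.y + s * tag_offset_body.x + c * tag_offset_body.y};
}
```

Because the result depends directly on the drone's estimated position and yaw, any localization error carries over to the estimated robot position, in addition to the visibility and latency drawbacks listed above.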

TechUnited World Model

The TechUnited robots, also known as Turtles, continuously broadcast their states to the TechUnited network. At the robotics field of TU Eindhoven, this is a wireless network named MSL_FIELD_A_a. The broadcast states are stored in the TechUnited World Model, which can be accessed through their multicast server. In this application, the following states are used for the collision detection method:

- Pose (x, y, heading)

- Velocity (in x direction, in y direction)

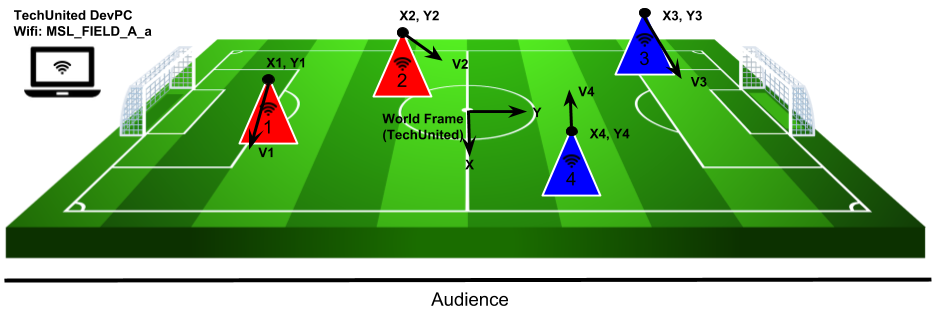

The accompanying image shows 4 player robots divided into two teams. Using the multicast server, the position and velocity (with direction) of each robot are measured and stored. The axes in the center are the global world frame convention followed by TechUnited.

Collision Detection

Once the robot positions are known, collisions between them can be detected using a function of two variables:

- Distance between the robots

- Relative velocity between the robots

These variables can be measured using the positions of the robots, their headings and their velocities. The algorithm to detect collisions uses the following pseudo-code.

    while (1) {
        // Refresh the stored state of every playing robot.
        for (int i = 0; i < total_players; i++) {
            store_state_values(i);
        }
        // Check each unordered pair of robots once.
        for (int reference = 0; reference < total_players; reference++) {
            for (int target = reference + 1; target < total_players; target++) {
                distance_check(reference, target);      // sets dist_flag
                if (dist_flag == 1) {
                    velocity_check(reference, target);  // sets vel_flag
                    determine_guilty_player(target, reference, vel_flag);
                }
            }
        }
    }

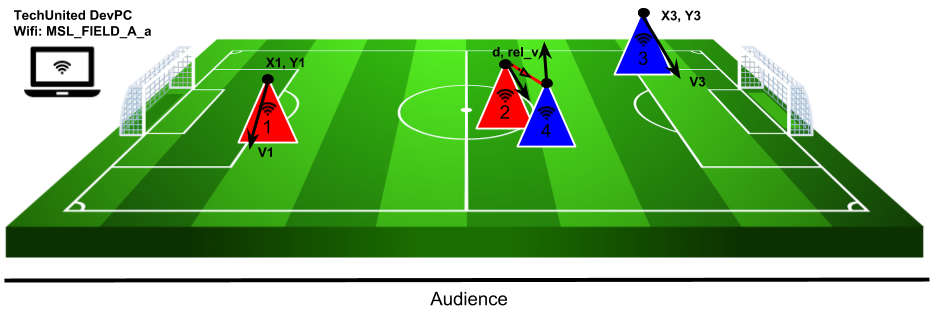

In the above pseudo-code, a collision is considered only if two robots are within a certain distance of each other. In that case, the relative velocity between these robots is checked. If this velocity is above a certain threshold, a collision is detected. The accompanying diagram describes the scenario for a collision. In this diagram, the red line indicates that the two robots are close to each other, within the distance threshold. The robots can be considered to be touching at this point. However, a collision is only declared if these robots are actually colliding, so the relative velocity, described as rel_v in the diagram, is checked.

The above pseudo-code is broken down and explained below:

    for (int i = 0; i < total_players; i++) {  // check over all playing robots
        store_state_values(i);
    }

Store State Values: The for loop runs over all playing robots. In this application, the number of playing robots is checked before entering the while loop. The store_state_values function stores the state values of each robot in a player_state matrix. The state values stored are the pose (x, y, heading) and the velocities (vel_x, vel_y).
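As a minimal sketch of this step, the player_state matrix can be modelled as one record per robot. The `PlayerState` struct and the `WorldModelReader` callback are assumptions made for illustration; the callback only stands in for whatever call actually reads a robot's state from the TechUnited World Model, which is not shown in this document.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One row of the player_state matrix: pose and velocity of a single robot.
struct PlayerState {
    double x;        // position along world x
    double y;        // position along world y
    double heading;  // orientation in the world frame
    double vel_x;    // velocity in x direction
    double vel_y;    // velocity in y direction
};

// Hypothetical stand-in for the world-model query of one robot's state.
using WorldModelReader = PlayerState (*)(std::size_t player_index);

// Reads the state of every playing robot and returns the filled matrix.
std::vector<PlayerState> store_state_values(std::size_t total_players,
                                            WorldModelReader read_player) {
    assert(read_player != nullptr);
    std::vector<PlayerState> player_state;
    player_state.reserve(total_players);
    for (std::size_t i = 0; i < total_players; ++i) {
        player_state.push_back(read_player(i));
    }
    return player_state;
}
```

Unlike the pseudo-code, this variant returns the whole matrix in one call instead of storing one robot per call, so it needs no global state.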

    for (int reference = 0; reference < total_players; reference++) {
        for (int target = reference + 1; target < total_players; target++) {
            distance_check(reference, target);
            ...
        }
    }

Distance Check: For this application, an n x n matrix is made, where n is the total number of players. The rows of this matrix describe the reference robot and the columns describe the target. In the code snippet above, two for loops can be seen. The second for loop starts just after the reference player number, so that a robot is never compared with itself and each pair is checked only once. This reduces redundancy and restricts the number of computations, as the matrix description below shows. Every element with an x is redundant or irrelevant.

|   | 1 | 2 | 3 | 4 |
|---|---|---|---|---|
| 1 | x | 0 | 0 | 0 |
| 2 | x | x | 0 | 0 |
| 3 | x | x | x | 0 |
| 4 | x | x | x | x |

So, for 4 playing robots (16 matrix elements), only n(n-1)/2 = 6 interactions are checked: 1-2, 1-3, 1-4, 2-3, 2-4, 3-4. The remaining elements in the matrix are redundant. The distance_check function checks whether any two robots are within colliding distance of each other (only the above 6 interactions are checked). In this application, the colliding distance was chosen to be 50 cm. If the distance between two robots is less than 50 cm, the corresponding element in the above matrix is set to 1.
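The upper-triangular check described above can be sketched as follows. This is an illustrative version rather than the project's code: it processes all pairs in one call and returns the flag matrix. The `Position` struct, the `pair_count` helper and the use of metres as the unit are assumptions; only the 50 cm threshold comes from the text.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Robot position on the field, in metres (assumed unit).
struct Position {
    double x;
    double y;
};

// Colliding distance of 50 cm from the text, expressed in metres.
constexpr double kCollidingDistance = 0.5;

// Number of unordered robot pairs, n(n-1)/2.
constexpr std::size_t pair_count(std::size_t n) { return n * (n - 1) / 2; }

// Returns an n x n matrix; element [reference][target] is 1 when the two
// robots are closer than the colliding distance. Only the upper triangle
// (target > reference) is evaluated, the rest stays 0.
std::vector<std::vector<int>> distance_check(
        const std::vector<Position>& players,
        double colliding_distance = kCollidingDistance) {
    assert(colliding_distance > 0.0);
    const std::size_t n = players.size();
    std::vector<std::vector<int>> dist_flag(n, std::vector<int>(n, 0));
    for (std::size_t reference = 0; reference < n; ++reference) {
        for (std::size_t target = reference + 1; target < n; ++target) {
            const double d = std::hypot(players[target].x - players[reference].x,
                                        players[target].y - players[reference].y);
            if (d < colliding_distance) {
                dist_flag[reference][target] = 1;
            }
        }
    }
    return dist_flag;
}
```

Starting the inner loop at `reference + 1` skips the diagonal, so a robot is never flagged as being within colliding distance of itself.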

    if (dist_flag == 1) {
        velocity_check(reference, target);
        determine_guilty_player(target, reference, vel_flag);
    }

Velocity Check: This function is called only if the distance flag is set to 1, i.e. when two robots are touching each other (distance between the robots < 50 cm). Using the individual velocities and headings of each touching robot, a relative velocity is calculated with the reference robot as origin. If the absolute value of this relative velocity is greater than 5 m/s (the velocity threshold in this application), the velocity flag is set. This flag is a signed value: it is either +1 or -1, depending on the direction of the relative velocity.

Determine Guilty Player: The sign of the velocity flag tells us which robot is guilty of causing this collision. If the flag is positive, the reference robot is the aggressor and the target robot is the victim of the collision. For a negative flag value, it is the other way around. This determination is important to assign blame and award free-kicks/penalties by the referee during the match.
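The text does not spell out how the relative velocity and its sign are computed, so the following is only one possible interpretation. It assumes the velocities are given in the world frame, projects them onto the line joining the two robots, and blames the robot that contributes more to the closing speed. The `RobotState` struct, the `Guilty` enum and this blame rule are hypothetical; the 5 m/s threshold and the sign convention (+1 means the reference robot is the aggressor) come from the text.

```cpp
#include <cassert>
#include <cmath>

// Position and world-frame velocity of one robot (assumed units: m, m/s).
struct RobotState {
    double x, y;
    double vel_x, vel_y;
};

// Velocity threshold of 5 m/s from the text.
constexpr double kVelocityThreshold = 5.0;

// Returns +1 if the reference robot is the aggressor, -1 if the target is,
// and 0 if the robots are not closing faster than the threshold.
int velocity_check(const RobotState& reference, const RobotState& target,
                   double threshold = kVelocityThreshold) {
    assert(threshold >= 0.0);
    const double dx = target.x - reference.x;
    const double dy = target.y - reference.y;
    const double dist = std::hypot(dx, dy);
    if (dist == 0.0) return 0;  // direction between the robots is undefined
    const double ux = dx / dist;
    const double uy = dy / dist;
    // Speed of each robot towards the other along the line joining them.
    const double ref_toward = reference.vel_x * ux + reference.vel_y * uy;
    const double tgt_toward = -(target.vel_x * ux + target.vel_y * uy);
    const double closing_speed = ref_toward + tgt_toward;
    if (closing_speed <= threshold) return 0;
    return (ref_toward >= tgt_toward) ? +1 : -1;
}

enum class Guilty { Nobody, Reference, Target };

// Maps the signed velocity flag to the robot blamed for the collision.
Guilty determine_guilty_player(int vel_flag) {
    if (vel_flag > 0) return Guilty::Reference;
    if (vel_flag < 0) return Guilty::Target;
    return Guilty::Nobody;
}
```

In this sketch, robots that are moving apart never raise the flag, because only a positive closing speed is compared against the threshold.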

Interfaces

Developing additional interfaces for event detection and rule enforcement was necessary due to the following constraints.

- The Intel NUC proved unreliable on board the drone. While the telemetry module and the Pixhawk were used to fly the drone, a Raspberry Pi handled the computer vision. This Raspberry Pi communicates with the NUC over UDP. Both the NUC and the Raspberry Pi operate on the DroneNetwork wireless network.

- The collision detection uses the World Model of the TechUnited robots. To access it, the computer must be connected to the TechUnited wireless network. Because the collision detection algorithm is independent of the flight of the drone, the collision information does not need to be sent to the Intel NUC. Instead, it is broadcast directly to the remote referee over the TechUnited network.

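The diagram below describes all these interfaces and the data that is transmitted and received.

[[File:RuleEnforcementinterfaces.png|center|Interfaces for the event detection and rule enforcement subsystem]]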

Human-Machine Interface

The HMI implementation consists of 2 parts.

- A supervisor, which reads the collision detection and ball-out-of-pitch flags and determines the signals to be sent to the referee.

- A GUI, where the referee is able to make decisions based on the detected events and also display these decisions/enforcements to the audience.

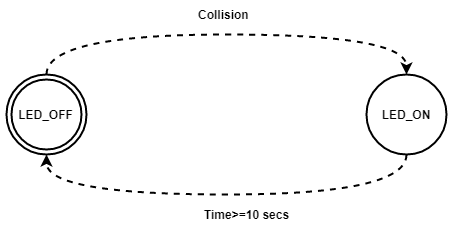

Supervisory Control

The tasks performed by the supervisor were confined to rule enforcement. These tasks are explained below.

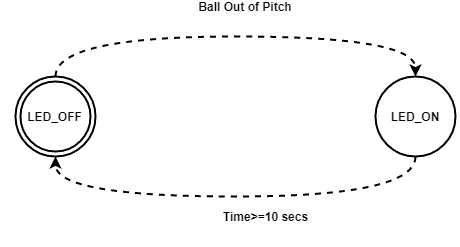

- The supervisor receives input from the vision algorithm as soon as the Ball Out of Pitch event occurs. It then provides a recommendation to the remote referee via a GUI platform running on the referee's laptop. The recommendation is terminated automatically after 10 seconds if the referee does not acknowledge it.

- The supervisor receives input when the Collision Detected event occurs. As soon as this event is detected, a recommendation is sent to the remote referee via the GUI platform. The termination strategy is the same as for the previous event.

- The referee has a button to show the audience the decision taken. As soon as the referee pushes the button in the GUI, the decision is sent to the other screen as an LED indicator. The supervisor switches this LED off after a certain time by releasing the push button automatically.
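The recommendation behaviour in the first two tasks can be sketched as a small state machine. This is a hedged illustration, not the automata used in the project: the class name `RecommendationSupervisor`, its methods, and the choice to pass the current time in explicitly (in seconds) are assumptions. Only the two events and the 10-second timeout come from the text.

```cpp
#include <cassert>

// Events that can trigger a recommendation to the remote referee.
enum class Event { None, BallOutOfPitch, CollisionDetected };

// Holds at most one active recommendation and drops it when the referee
// acknowledges it or when the timeout expires.
class RecommendationSupervisor {
public:
    explicit RecommendationSupervisor(double timeout_s = 10.0)
        : timeout_s_(timeout_s) {
        assert(timeout_s > 0.0);
    }

    // Called when the vision or collision module reports an event.
    void on_event(Event event, double now_s) {
        if (event == Event::None) return;
        active_ = event;
        raised_at_s_ = now_s;
    }

    // Called when the referee acknowledges the recommendation in the GUI.
    void acknowledge() { active_ = Event::None; }

    // Returns the recommendation to display, expiring it after the timeout.
    Event active_recommendation(double now_s) {
        if (active_ != Event::None && now_s - raised_at_s_ >= timeout_s_) {
            active_ = Event::None;
        }
        return active_;
    }

private:
    double timeout_s_;
    Event active_ = Event::None;
    double raised_at_s_ = 0.0;
};
```

Passing the time in as an argument keeps the sketch independent of any particular clock, which also makes the timeout easy to exercise in a test.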

The following automatons were used for these tasks.