PRE2017 1 Groep3: Difference between revisions

| (108 intermediate revisions by 5 users not shown) | |||

| Line 18: | Line 18: | ||

When you travel by train on a regular basis you might have noticed that when people in a wheelchair need to exit or enter the train it goes rather slow. Before they can get on or off the train, the train personnel is needed first to get some sort of ramp to let the disabled people board or exit the train. When someone in a wheelchair wants to exit or wants to board the train, the train might even be delayed because of this. As we know, trains in the Netherlands tend to be late sometimes and therefore every obstacle that is getting in the way of the schedule, should be taken care of. The boarding wheelchair is definitely one of the obstacles, because they tend to cause delays. But the perspective of the handicapped person is also important. For them the feeling of constantly being dependent on others is the worst part of living with a handicap. This dependence raises the threshold for these people to travel by train. The disabled in general lose a part of their long distance mobility when they stop using the train. This might have an impact on their social well-being (Oishi, 2010); it might be a cause for loneliness or depression, as disabled are not able to sustain distant relations (Steptoe et al., 2013). In a survey conducted by the SP 154 handicapped persons shared their complaints. Laurens Ivens and Agnes Kant translated these complaints into thirteen recommendations. Among these recommendations they state that the height difference between the train and platform should be reduced or bridged easier. They state that there should be a travel tracking system for handicapped and at last the accessibility has to be increased (Ivens and Kant, 2004). This project will research improvements for disabled people in wheelchairs travelling by train in the Netherlands. | When you travel by train on a regular basis you might have noticed that when people in a wheelchair need to exit or enter the train it goes rather slow. 
Before they can get on or off the train, the train personnel is needed first to get some sort of ramp to let the disabled people board or exit the train. When someone in a wheelchair wants to exit or wants to board the train, the train might even be delayed because of this. As we know, trains in the Netherlands tend to be late sometimes and therefore every obstacle that is getting in the way of the schedule, should be taken care of. The boarding wheelchair is definitely one of the obstacles, because they tend to cause delays. But the perspective of the handicapped person is also important. For them the feeling of constantly being dependent on others is the worst part of living with a handicap. This dependence raises the threshold for these people to travel by train. The disabled in general lose a part of their long distance mobility when they stop using the train. This might have an impact on their social well-being (Oishi, 2010); it might be a cause for loneliness or depression, as disabled are not able to sustain distant relations (Steptoe et al., 2013). In a survey conducted by the SP 154 handicapped persons shared their complaints. Laurens Ivens and Agnes Kant translated these complaints into thirteen recommendations. Among these recommendations they state that the height difference between the train and platform should be reduced or bridged easier. They state that there should be a travel tracking system for handicapped and at last the accessibility has to be increased (Ivens and Kant, 2004). This project will research improvements for disabled people in wheelchairs travelling by train in the Netherlands. | ||

This project will first determine the problems wheelchair-bound people face when travelling by train. Then, we look at different stakeholders and possible solutions. After that, questionnaires will be held with stakeholders to determine the needs. After this, a final design for our helping robot will be made and a prototype will show some of the working | This project will first determine the problems wheelchair-bound people face when travelling by train. Then, we look at different stakeholders and possible solutions. After that, questionnaires will be held with stakeholders to determine the needs. After this, a final design for our helping robot will be made and a prototype will show some of the working principles that need to be proven in order to give credibility to the final design. | ||

Team motto: | |||

'''Veni, vidi, wheelie!''' | |||

=USE-analysis= | =USE-analysis= | ||

| Line 42: | Line 46: | ||

To find participants for this study, several steps were taken: a call on Facebook was posted for the target groups, personal networks were contacted and NS staff on Eindhoven station were approached in person. The questionnaires could be filled in online or on paper. | To find participants for this study, several steps were taken: a call on Facebook was posted for the target groups, personal networks were contacted and NS staff on Eindhoven station were approached in person. The questionnaires could be filled in online or on paper. | ||

We aimed to get 5 participants for the disabled target group, and 2 to 3 for the NS staff. These amounts are based on what is reasonable for the scope of this project; due to time constraints it is not an option to find large groups of participants. The fact that it is hard to find | We aimed to get 5 participants for the disabled target group, and 2 to 3 for the NS staff. These amounts are based on what is reasonable for the scope of this project; due to time constraints it is not an option to find large groups of participants. The fact that it is hard to find wheelchair bound people that actually use the train was further confirmed by the low response rate on our call for questionnaires. The questionnaires were written in Dutch and answered in Dutch. | ||

Link to paper questionnaire for the disabled: [[Media: Enquete mindervaliden.pdf]] | Link to paper questionnaire for the disabled: [[Media: Enquete mindervaliden.pdf]] | ||

| Line 204: | Line 208: | ||

From the questionnaires we identified a specific user need: he/she mentioned he wanted more influence on the process. Since we are designing in an iterative manner, our concept got updated after the questionnaire result was known. It was decided to involve the concept of shared control in our autonomous robot. To close the loop, in an ideal situation we would want to test this interpretation of the user need with the user. However, due to time constraints and a lack of interest from disabled people to answer questions regarding the topic, we were unable to reach out to check this interpretation. However, a literature research can be performed to find out more on the topic of disabled people, shared control and a lack of influence. This can be found below in this Wiki. | From the questionnaires we identified a specific user need: he/she mentioned he wanted more influence on the process. Since we are designing in an iterative manner, our concept got updated after the questionnaire result was known. It was decided to involve the concept of shared control in our autonomous robot. To close the loop, in an ideal situation we would want to test this interpretation of the user need with the user. However, due to time constraints and a lack of interest from disabled people to answer questions regarding the topic, we were unable to reach out to check this interpretation. However, a literature research can be performed to find out more on the topic of disabled people, shared control and a lack of influence. This can be found below in this Wiki. | ||

==Jobs of the train staff== | |||

As was already mentioned in the results of the questionnaire for the NS staff, the NS service staff is afraid of losing their jobs when helping disabled people is automated. Their concerns are justified, because when the robot functions as intended no staff is needed to help the disabled enter and exit the train. It is important to think about the consequences of introducing a robotic technology and the impact it might have on people's jobs. NS assistance is only available at 100 of the 400 train stations in the Netherlands; more information about this can be found in the current situation chapter. When the robot is ready to be implemented in the train stations, it could be beneficial to start at stations where NS assistance is not available. This way disabled people are able to travel to more locations and the current NS service staff can still operate at their current train stations. When the implementation at these stations is successful, it can be extended to the other, bigger, stations as well. The staff currently working at those stations can be deployed in a different way, for example to check that there are no problems with the robots and that everyone understands how to use them properly. They can also fulfill other jobs at the NS, for example other service jobs like informing people on how to get to their destination. Of course this will result in fewer jobs, but since the robotic concept is designed to make it easier for disabled people to travel by train this is not the primary concern. | |||

= Current Situation = | = Current Situation = | ||

This section will take a look into the current situation of train traveling for disabled. | This section will take a look into the current situation of train traveling for the disabled. | ||

== The current model== | == The current model== | ||

In the following section you will be guided through the current process of boarding a train when you are disabled. | In the following section you will be guided through the current process of boarding a train when you are disabled. | ||

| Line 229: | Line 236: | ||

== Other Countries == | == Other Countries == | ||

[[File:wheelchairlift.jpg|thumb|300px| A modern wheelchair lift]] | [[File:wheelchairlift.jpg|thumb|300px| A modern wheelchair lift]] | ||

Most railway companies in other European countries are bound by law to | Most railway companies in other European countries are bound by law to accommodate disabled people onto their train. Trains like the Eurostar have dedicated spaces inside trains in the 1st class cars, and allow for an additional passenger to come with the wheelchair bound customer. Most railways companies work like the NS system: you have to plan your trip ahead of time (online or through customer service) so the railway employees can help you along your trip. However, not all trips are allowed because railway companies like Deutsche Bahn have a specific time that they need to make sure you can transfer between trains, thus some passengers have to wait for the next train because a 10 minute transfer between trains is not possible. | ||

Either ramps or mobile wheelchair lifts are used. These are stored on the platform and chained to a pole or wall and the railway employee will put this ramp in place for you. Then, when it’s connected to the train door the railway employee will push you on board or place you on the mobile wheelchair lift. When you are on the lift both sides are closed and the employee presses a button to align the height with the train door. Once it’s done lifting, the front ramp will go down and you can ride on the train on your own. It’s also possible for trains to have a ramp inside the train floor that goes out when a button is pressed. | Either ramps or mobile wheelchair lifts are used. These are stored on the platform and chained to a pole or wall and the railway employee will put this ramp in place for you. Then, when it’s connected to the train door the railway employee will push you on board or place you on the mobile wheelchair lift. When you are on the lift both sides are closed and the employee presses a button to align the height with the train door. Once it’s done lifting, the front ramp will go down and you can ride on the train on your own. It’s also possible for trains to have a ramp inside the train floor that goes out when a button is pressed. | ||

| Line 237: | Line 244: | ||

= Robotic Solution Specifications = | = Robotic Solution Specifications = | ||

In this section the requirements, preferences and constraints of the complete solution are stated. Consequently the new solution is described. | In this section the requirements, preferences and constraints of the complete solution are stated. Consequently the new solution is described and the concepts we used to get there. | ||

== RPC's == | == RPC's == | ||

The requirements, preferences and constraints. | The requirements, preferences and constraints. | ||

| Line 281: | Line 288: | ||

'''Solutions''' | '''Solutions''' | ||

* The motion is to be planned within the kinematic constraints of the robot. A Quintic polynomial could be used to control start values of position, velocity and acceleration. The problem is that the robot is constrained in | * The motion is to be planned within the kinematic constraints of the robot. A Quintic polynomial could be used to control start values of position, velocity and acceleration. The problem is that the robot is constrained in its movement. So the orientation matters. We could describe the path as a series of robotic links, making constraints between the links. In a way the robot can always go from one to another. | ||

* The motion should be tracked by suppressing disturbances. This could be done using the kinematic equations of motion represented in a state space. | * The motion should be tracked by suppressing disturbances. This could be done using the kinematic equations of motion represented in a state space. | ||

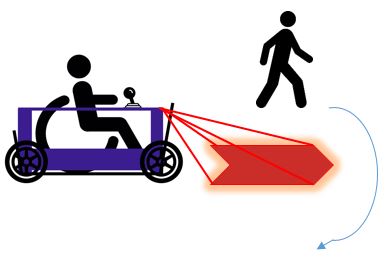

* It has to move around obstacles, both human and non-human. This could be done by planning a path around them. Proximity sensors make a map of the nearest obstacles. One possible solution is a controller that tracks the path but starts deviating from it as the sensors pick up obstacles. So instead of striving for zero error, the error could increase with sensor input. The human obstacles are mobile, which means that they could move aside if urged to. | * It has to move around obstacles, both human and non-human. This could be done by planning a path around them. Proximity sensors make a map of the nearest obstacles. One possible solution is a controller that tracks the path but starts deviating from it as the sensors pick up obstacles. So instead of striving for zero error, the error could increase with sensor input. The human obstacles are mobile, which means that they could move aside if urged to. | ||
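The quintic polynomial mentioned in the first bullet can be made concrete for a single axis. The following is a minimal sketch, assuming a one-dimensional move along the platform with prescribed position, velocity and acceleration at both the start and the end of the motion. The function names and the example numbers (10 m in 5 s, starting and ending at rest) are illustrative assumptions and not part of the design; the orientation constraints of the robot are not handled here.

```python
def quintic_coefficients(p0, v0, a0, p1, v1, a1, T):
    """Coefficients c0..c5 of p(t) = c0 + c1*t + ... + c5*t**5.

    The polynomial matches position, velocity and acceleration
    (p0, v0, a0) at t = 0 and (p1, v1, a1) at t = T.
    """
    c0 = p0
    c1 = v0
    c2 = a0 / 2.0
    c3 = (20 * (p1 - p0) - (8 * v1 + 12 * v0) * T - (3 * a0 - a1) * T**2) / (2 * T**3)
    c4 = (30 * (p0 - p1) + (14 * v1 + 16 * v0) * T + (3 * a0 - 2 * a1) * T**2) / (2 * T**4)
    c5 = (12 * (p1 - p0) - 6 * (v1 + v0) * T - (a0 - a1) * T**2) / (2 * T**5)
    return [c0, c1, c2, c3, c4, c5]


def position(coeffs, t):
    """Evaluate the polynomial position at time t."""
    return sum(c * t**k for k, c in enumerate(coeffs))


def velocity(coeffs, t):
    """Evaluate the first derivative of the polynomial at time t."""
    return sum(k * c * t**(k - 1) for k, c in enumerate(coeffs) if k >= 1)


# Hypothetical example: move 10 m along the platform in 5 s, rest to rest.
coeffs = quintic_coefficients(0.0, 0.0, 0.0, 10.0, 0.0, 0.0, 5.0)
```

Because the velocity and acceleration are zero at both ends, the robot starts and stops smoothly, which is the reason a quintic is a common choice for this kind of point-to-point motion.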

| Line 292: | Line 299: | ||

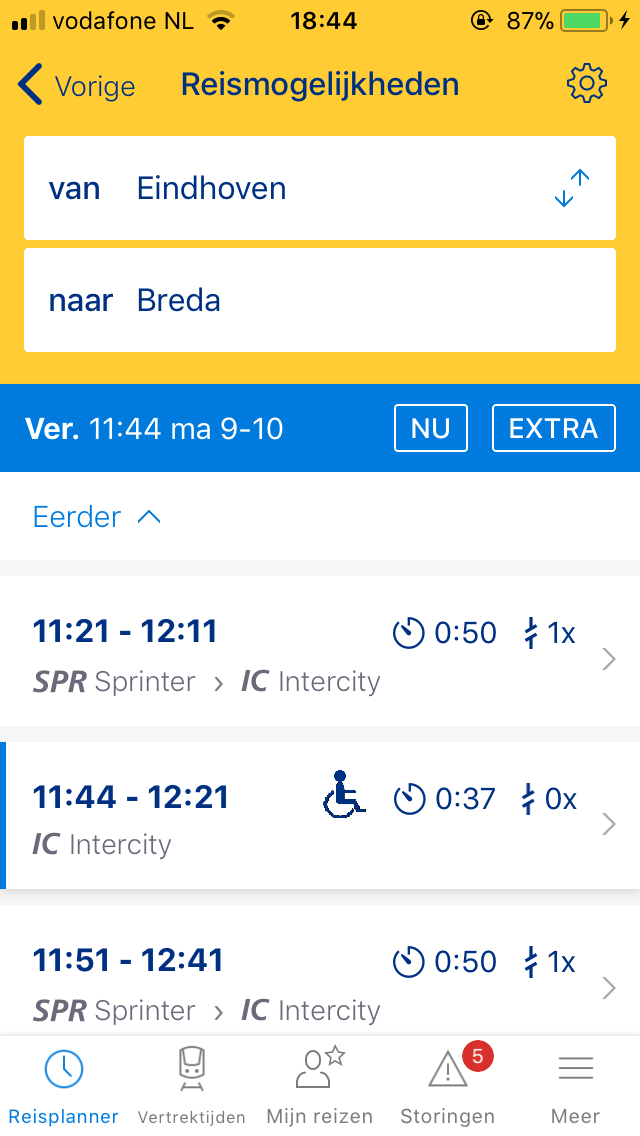

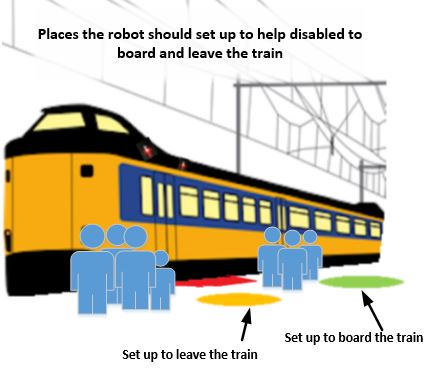

In order to be ready to dock to the train when it arrives, the disabled person has to activate the robot to move to the correct position 5-10 minutes before arrival of the train. It will have to avoid passengers and bags on the ground on its own, but ideally this problem is limited either by introducing a "wheelchair robot path" on the ground, so people know where to avoid placing bags, or by front sensors that enable it to manoeuvre around these objects. | In order to be ready to dock to the train when it arrives, the disabled person has to activate the robot to move to the correct position 5-10 minutes before arrival of the train. It will have to avoid passengers and bags on the ground on its own, but ideally this problem is limited either by introducing a "wheelchair robot path" on the ground, so people know where to avoid placing bags, or by front sensors that enable it to manoeuvre around these objects. | ||

Because it has accurate trip information through the NS app the robot will know train arrivals in which a wheelchair is, so it needs to be ready to help this person get out of the train completely on its | Because it has accurate trip information through the NS app the robot will know train arrivals in which a wheelchair is, so it needs to be ready to help this person get out of the train completely on its own, without any physical "log in" with the NS app. | ||

== Preliminary design == | == Preliminary design == | ||

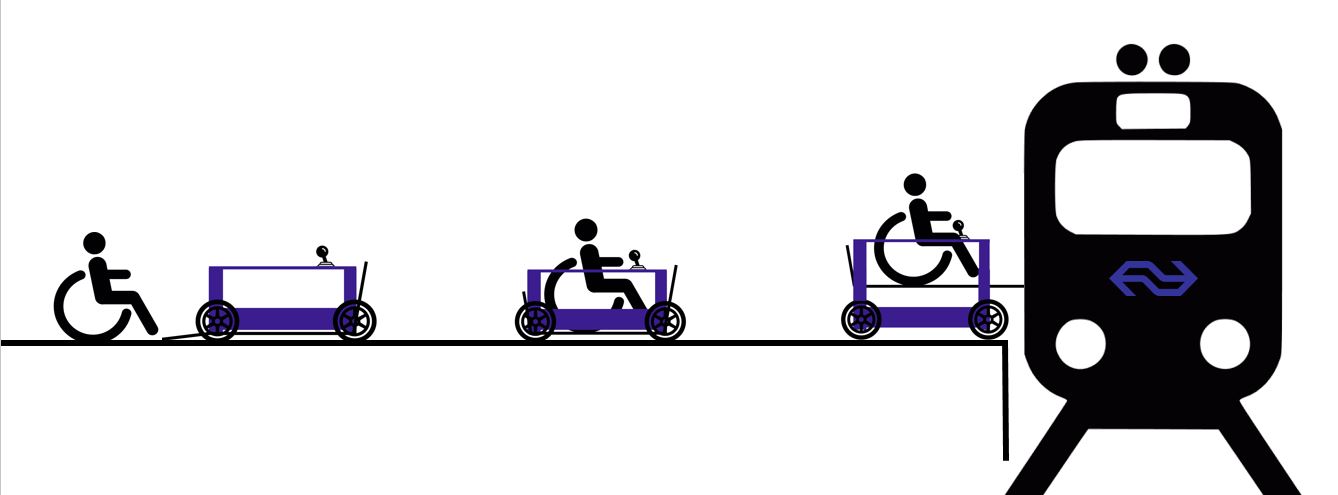

The Preliminary design is basically a combination of design 1 and 5. The design will be an autonomously driving vehicle that can be placed at each platform. The vehicle has 4 wheels and uses a horizontal plate that can be lifted up and down to be able to reach the right height to enter the train. It will be placed at one end of the platform which will be called its homing position. | The Preliminary design is basically a combination of design 1 and 5. The design will be an autonomously driving vehicle that can be placed at each platform. The vehicle has 4 wheels and uses a horizontal plate that can be lifted up and down to be able to reach the right height to enter the train. It will be placed at one end of the platform which will be called its homing position. | ||

[[File:Chairliftdown.JPG| | [[File:Chairliftdown.JPG|340px]][[File: Wheelchairfinal.png|200px]] [[File: Chairliftup.JPG|240px]] | ||

At its homing position a power station will be placed. The robot will always come back to the homing position and attach itself to the power station. The robot has to be equipped with different kinds of sensors. For example the robot should be able to sense obstacles in its driving path. When the robot senses something is in its way it should stop and give some kind of signal to let | At its homing position a power station will be placed. The robot will always come back to the homing position and attach itself to the power station. The robot has to be equipped with different kinds of sensors. For example the robot should be able to sense obstacles in its driving path. When the robot senses something is in its way it should stop and give some kind of signal to let its surroundings know that something is blocking the robot. Another design challenge is to find out how the robot can locate a door where the person can enter or exit the train. The first idea for a solution to this is to equip every train with a sensor at the very first and last door of the train, these doors will then be used as an entrance for disabled people. An advantage of this solution is that the robot can always choose the door which is nearest to its homing position and therefore less people will walk in its driveway and the time to arrive at the door will be short. | ||
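The two behaviours described above (stopping and signalling when something blocks the path, and picking the equipped door nearest to the homing position) can be sketched as follows. This is only an illustration under stated assumptions: positions are measured in meters along the platform, and the function names and the 1.0 m stop distance are hypothetical values, not specifications from the design.

```python
def choose_door(home_position_m, first_door_m, last_door_m):
    """Return (label, position) of the equipped door closest to the homing position.

    Only the first and last door of the train are assumed to carry a sensor.
    On a tie the first door is chosen.
    """
    doors = {"first": first_door_m, "last": last_door_m}
    label = min(doors, key=lambda name: abs(doors[name] - home_position_m))
    return label, doors[label]


def next_action(obstacle_distance_m, stop_distance_m=1.0):
    """Decide what the robot does given the distance to the nearest obstacle.

    stop_distance_m is a hypothetical threshold; pass None as the
    obstacle distance when nothing is detected.
    """
    if obstacle_distance_m is not None and obstacle_distance_m < stop_distance_m:
        return "stop_and_signal"
    return "drive"
```

For a robot homed at one end of the platform, `choose_door` returns the door at that end, which keeps the driving distance short, as argued in the paragraph above.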

== Idealized solution == | == Idealized solution == | ||

The idealized solution has to fulfill every requirement, preference and constraint. The biggest goal is that disabled people are able to travel all by themselves. This means that they can reach the platform and use the automated assistance system to exit and enter the train without any staff being involved. | The idealized solution has to fulfill every requirement, preference and constraint. The biggest goal is that disabled people are able to travel all by themselves. This means that they can reach the platform and use the automated assistance system to exit and enter the train without any staff being involved. | ||

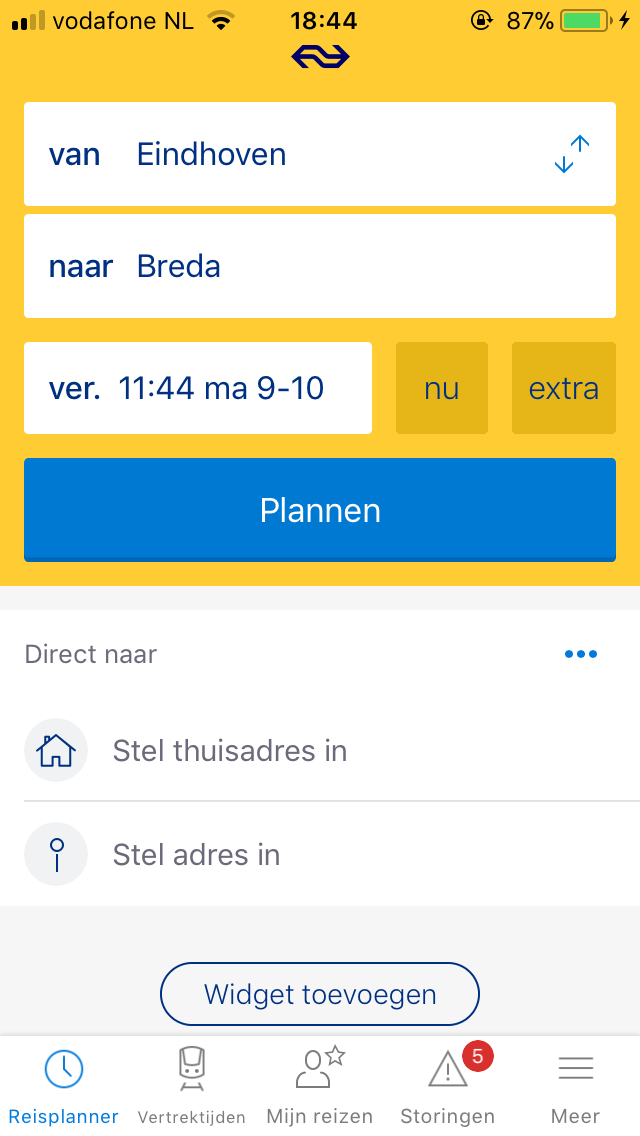

*Before using the robot, the disabled person has to use the app (see information below) to enter his trip and to reserve the robot at the platform of departure and arrival. | *Before using the robot, the disabled person has to use the app (see information below) to enter his trip and to reserve the robot at the platform of departure and arrival. | ||

| Line 309: | Line 315: | ||

*The disabled person needs to check in like every other person that uses the train. The people that need assistance when entering or exiting the train have a special OV-card which can be used at the robot touchscreen to activate the automated assistance. Since the user has entered his trip in the app, the robot knows which side of the platform he may have to drive to, in case he should drive autonomously. | *The disabled person needs to check in like every other person that uses the train. The people that need assistance when entering or exiting the train have a special OV-card which can be used at the robot touchscreen to activate the automated assistance. Since the user has entered his trip in the app, the robot knows which side of the platform he may have to drive to, in case he should drive autonomously. | ||

*The disabled person enters the robot with his/her wheelchair. He/she can select on a touchscreen whether or not to drive themselves, or let the robot drive. | *The disabled person enters the robot with his/her wheelchair. He/she can select on a touchscreen whether or not to drive themselves, or let the robot drive. | ||

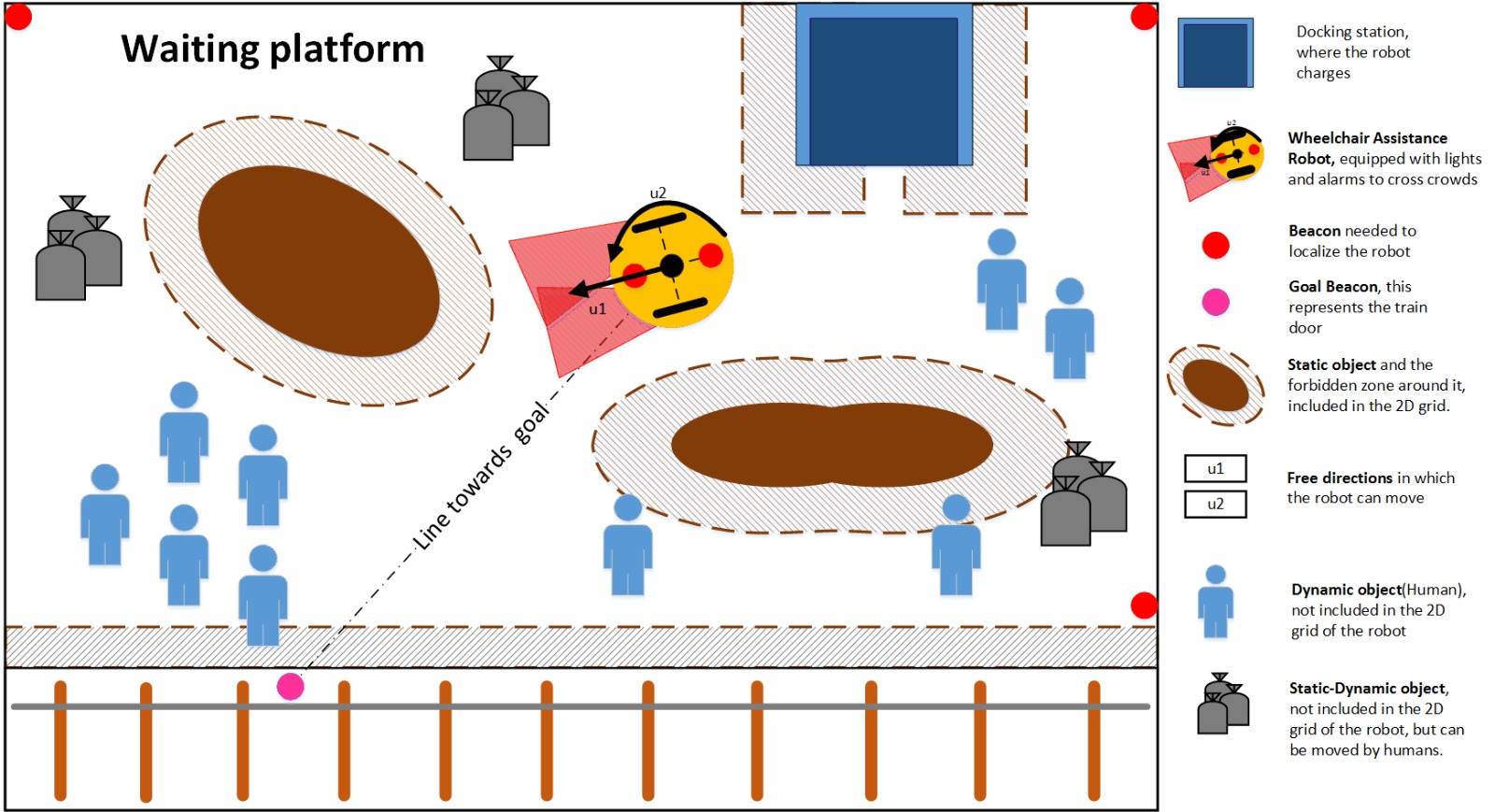

*If you want to drive yourself, you can use the joystick to navigate towards the train doors. Where to position the robot before docking is depicted in the figure below. The picture is explained in detail in the next chapter. | |||

*With the use of shared control, the user can already drive towards the train; if the user navigates too close to an obstacle, the system will take over and redirect the robot past the obstacle. The user can choose whether to pass the object on the left or the right side. When the train ultimately arrives, the robot will autonomously dock. | |||

*If the disabled person does not want to drive, the robot will autonomously drive towards its docking position. While moving, the robot should always pick the shortest path to the door that is also safe, where safe means that the robot never hits any obstacles or passengers. To realize this the robot needs to be aware of its own position and the position of obstacles and people. The robot should therefore be able to constantly adapt its driving path to avoid moving obstacles as efficiently as possible. This is a very important aspect, which will be elaborated on later in this wiki. | |||


*After docking, the person can enter the train. | *After docking, the person can enter the train. | ||

*In the mean time, at the desired destination, the robot is stationed already at the door where the person wants to exit the train (this information is transmitted through the OV pole) | *In the mean time, at the desired destination, the robot is stationed already at the door where the person wants to exit the train (this information is transmitted through the OV pole) | ||
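The shared-control step in the list above can be illustrated with a small sketch. It assumes a normalized steering command between -1 (full left) and 1 (full right), a single measured distance to the nearest obstacle, and a side preference selected by the user on the touchscreen. The function name, the 1.5 m safety distance and the 0.5 avoidance command are hypothetical values chosen for illustration only.

```python
def shared_control_turn(joystick_turn, obstacle_distance_m, pass_side,
                        safety_distance_m=1.5, avoid_turn=0.5):
    """Return the steering command actually sent to the robot.

    Negative values steer left, positive values steer right.
    While the nearest obstacle is at least safety_distance_m away,
    the user's joystick input is passed through (clamped to [-1, 1]).
    Closer than that, the system takes over and steers to the side
    the user selected.
    """
    if pass_side not in ("left", "right"):
        raise ValueError("pass_side must be 'left' or 'right'")
    joystick_turn = max(-1.0, min(1.0, joystick_turn))
    if obstacle_distance_m >= safety_distance_m:
        return joystick_turn  # user keeps full control
    return -avoid_turn if pass_side == "left" else avoid_turn
```

A real controller would more likely blend the two inputs gradually instead of switching abruptly, but the hard switch shows the division of authority between user and system most clearly.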

| Line 322: | Line 325: | ||

== Safety regulations & Patent Check == | == Safety regulations & Patent Check == | ||

The concept needs to | The concept needs to comply with safety regulations for autonomous driving vehicles: | ||

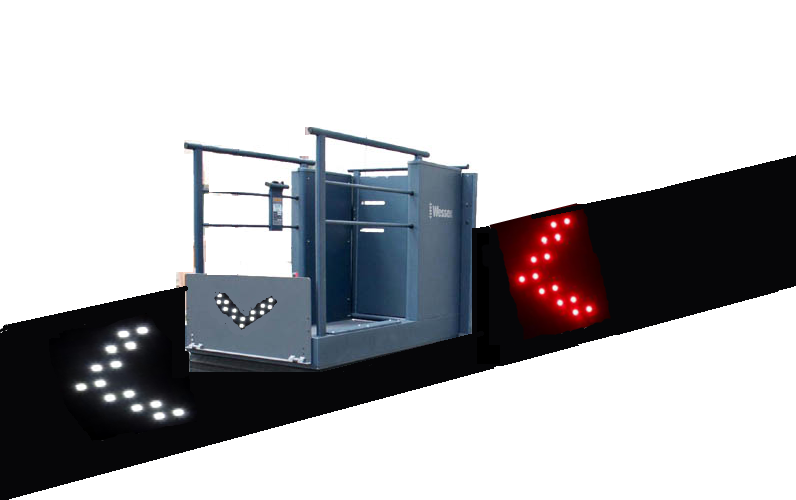

For autonomous driving vehicles there are up to today no universal laws or regulations. A congress | For autonomous driving vehicles there are to date no universal laws or regulations. A congress (Arc, n.d.) near the end of this year should shed some light on this issue. For now we think it suffices to make the vehicle as safe as possible, so that the risk of a hazard while driving is minimal. The people around the vehicle should be aware that it is driving and have to move out of the way; this can be achieved with an alarm and floodlights. | ||

Safety regulations for lifting people: | Safety regulations for lifting people: | ||

There are a lot of rules and regulations for lifting a person, but most of them are simple like: the lift should be designed to minimize the risk of getting in to a hazard. The full list of rules and regulations can be found here: | There are a lot of rules and regulations for lifting a person, but most of them are simple, for example: the lift should be designed to minimize the risk of a hazard. The full list of rules and regulations can be found in (HSE, 2008). The important thing to keep in mind regarding these safety regulations is that the vehicle must never flip over while lifting a person, and that the person must not be able to drive off the lift while it is going up or down. | ||

There are no existing patents regarding the basic idea of autonomous train assistance. This patent check was done by inserting the terms wheelchair, train and wheelchair, lifting into the US Patent & Trademark Office search tool which includes international trademarks. Thus, the robotic solution does not need to take patent law into account regarding the robotic wheelchair lift concept. | There are no existing patents regarding the basic idea of autonomous train assistance. This patent check was done by inserting the terms wheelchair, train and wheelchair, lifting into the US Patent & Trademark Office search tool which includes international trademarks. Thus, the robotic solution does not need to take patent law into account regarding the robotic wheelchair lift concept. | ||

| Line 344: | Line 339: | ||

* Completely safe to use for the disabled person but also completely safe to other passengers on the train. | * Completely safe to use for the disabled person but also completely safe to other passengers on the train. | ||

This requirement is guaranteed for the current solution since train staff is involved it is completely safe to use. | This requirement is met by the current solution: since train staff is involved, it is completely safe to use. | ||

* Able to use continuously, if not it will cause delay for the train or the person misses the train. | * Able to use continuously, if not it will cause delay for the train or the person misses the train. | ||

| Line 356: | Line 351: | ||

* The solution should not cause delay for other people who want to board the train. | * The solution should not cause delay for other people who want to board the train. | ||

The current solution is in most cases not causing delay for other people since they wait till everyone else has entered the train before | The current solution is in most cases not causing delay for other people since they wait till everyone else has entered the train before they help the disabled person with entering the train. | ||

= Robotic Solution Concept Explained = | = Robotic Solution Concept Explained = | ||

In this chapter the new solution is discussed in detail. First the update on the current application is explained. Subsequently the interface of the robot. Then the interaction with the surroundings are | In this chapter the new solution is discussed in detail. First the update on the current application is explained, followed by the interface of the robot. Then the interaction with the surroundings is discussed, and finally the robot docking. These first parts are mainly about ethics, surroundings and experience. The robot can also move on its own, so the autonomous driving chapter will discuss the capabilities of the robot without humans. In the end the concept of shared control is discussed, as a result of the user questionnaire. | ||

== NS App integration == | == NS App integration == | ||

| Line 375: | Line 370: | ||

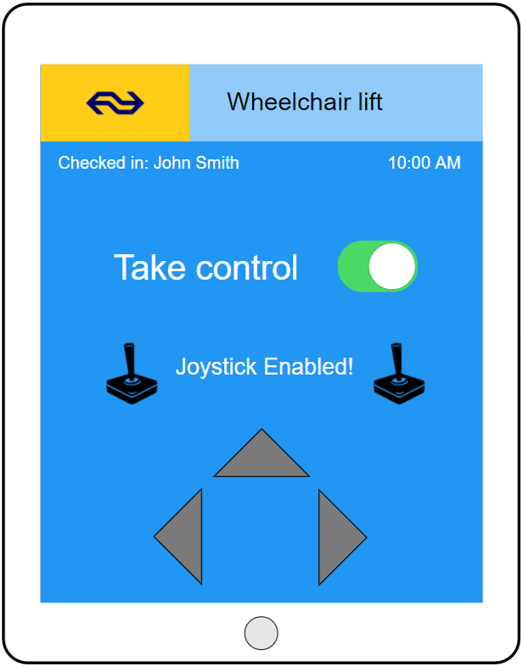

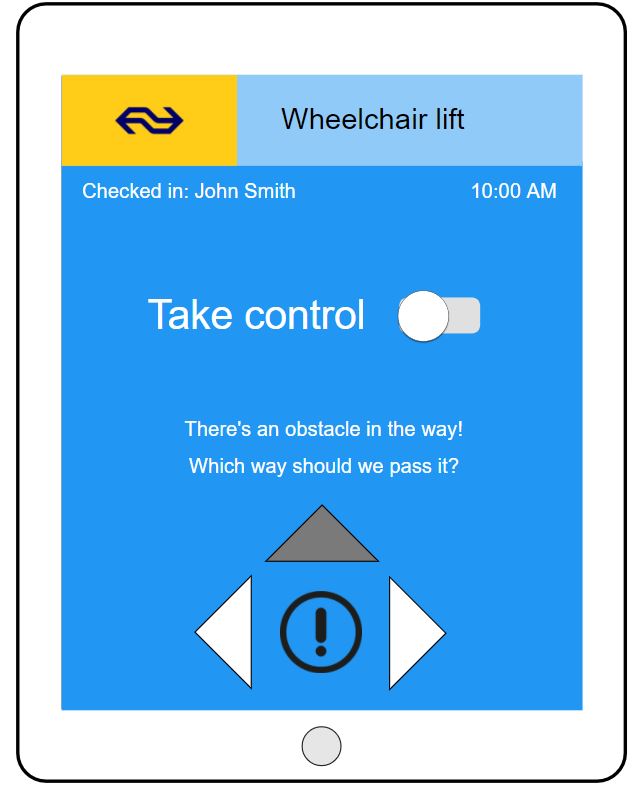

[[File:joystick.jpg|270px|Armrest]][[File:Ovcheckin.png|250px|OV-checkin]] [[File:Screen1.png|250px|touchscreen]] [[File:Screen2.JPG|260px|touchscreen]] | [[File:joystick.jpg|270px|Armrest]][[File:Ovcheckin.png|250px|OV-checkin]] [[File:Screen1.png|250px|touchscreen]] [[File:Screen2.JPG|260px|touchscreen]] | ||

On the left side of the wheelchair, an integrated touchscreen is | On the left side of the wheelchair, an integrated touchscreen is visible to the user. This touchscreen acts as a panel to activate the robot with the OV-chipcard (picture 1) and as a navigation tool. Users can toggle between joystick-mode and autonomous mode with this control. Also, when in autonomous mode navigation options are shown when objects are encountered. | ||

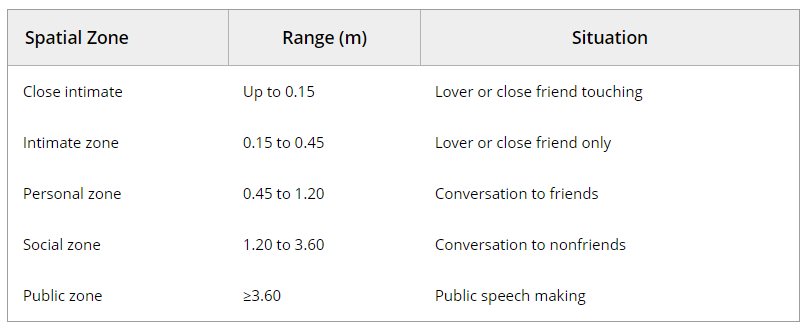

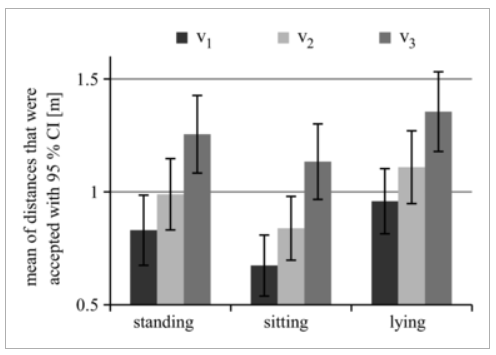

== Robot-surrounding interaction == | == Robot-surrounding interaction == | ||

| Line 425: | Line 420: | ||

*The colors on the ground will prevent people from walking in this illuminated space | *The colors on the ground will prevent people from walking in this illuminated space | ||

The | The right picture below shows how lights are used in the design. | ||

[[File:robot navigation.png|300px| Picture 1]] [[File:Lightschair.JPG|300px| Picture 2]] | [[File:robot navigation.png|300px| Picture 1]] [[File:Lightschair.JPG|300px| Picture 2]] | ||

==== Light ==== | ==== Light ==== | ||

The color of the lights is chosen to be red | The color of the lights is chosen to be red in the direction in which the robot is heading (in front of the robot) and green on the back of the robot. These colors indicate that people are allowed to walk behind the robot but should avoid walking too close in front of it. With the lights people will avoid the front section of the robot, which makes it easier to drive through a crowded area. | ||

=== Alarm === | ==== Alarm ==== | ||

The alarm must not cause panic on the waiting platform. Therefore it should not make a sound similar to that of police, ambulance, fire brigade or other emergency services. In fact it might even be a good idea to play some familiar music. Maybe even piano music. This is already used in Taiwan with garbage collection. The garbage trucks play "Für Elise" by Beethoven. The people know this and go out to bring their garbage to the truck. It draws attention and it is not especially agitating. Thus it might help to make the waiting platform slightly more friendly. The robot should indicate its approach by a sound. For the scope of this project it is not a priority to find the actual sound; however, we can identify the requirements for this sound: | - | ||

*It should be loud enough to be heard by | When people are not paying attention to the lights and get too close to the robot, the robot sounds an alarm. This way people are alerted when they are standing in its way. The alarm must not cause panic on the waiting platform. Therefore it should not make a sound similar to that of police, ambulance, fire brigade or other emergency services. In fact it might even be a good idea to play some familiar music. Maybe even piano music. This is already used in Taiwan with garbage collection. The garbage trucks play "Für Elise" by Beethoven. The people know this and go out to bring their garbage to the truck. It draws attention and it is not especially agitating. Thus it might help to make the waiting platform slightly more friendly. The robot should indicate its approach by a sound. For the scope of this project it is not a priority to find the actual sound; however, we can identify the requirements for this sound: | ||

*The sound should not be very ‘alarm-like’, as this may cause a scare, and more importantly, may cause people to panic or think of an emergency. The robot passing is obviously not an emergency, and the sound should therefore indicate the | *It should be loud enough to be heard by everyone within a radius of 10 meters around the robot, including people who are hard of hearing and people using headphones. It should however not be too loud; it should not cause hearing damage, cause annoyance or possibly scare people. | ||

*To enhance pleasure in use, we could choose for a song to indicate the robot’s passing. As the sound is meant to beware people of the robot | *The sound should not be very ‘alarm-like’, as this may cause a scare, and more importantly, may cause people to panic or think of an emergency. The robot passing is obviously not an emergency, and the sound should therefore indicate the robot's passing in a serene but audible manner. Another option would be for a robot voice to signal its passing, e.g. 'Please move!'. | ||

*To make the robot more pleasant to use, we could choose a song to indicate the robot’s passing. As the sound is meant to warn people that the robot is passing through, we could for example use ‘Go Your Own Way’ by Fleetwood Mac. | |||

== Autonomous Driving == | == Autonomous Driving == | ||

In this chapter the autonomous function of the robot will be presented. The background and techniques that we like to use in a hardware implementation should be used as a starting point for the actual implementation. First the localization and orientation of the robot are discussed, because it is a starting point of planning and acting in the real world (Russel, S. and Norvig, P. | In this chapter the autonomous function of the robot is presented. The background and techniques described here are meant as a starting point for an actual hardware implementation. First the localization and orientation of the robot are discussed, because they are the starting point of planning and acting in the real world (Russell, S. and Norvig, P., 2014). Then planning and acting on the platform are discussed on a fundamental level. This chapter is mainly based on the book "Artificial Intelligence: A Modern Approach" by Stuart Russell and Peter Norvig, published in 2014. For the eventual implementation it is advised to read their book in addition to this chapter. | ||

=== Robot Hardware === | === Robot Hardware === | ||

==== Perception ==== | ==== Perception ==== | ||

Although perception appears to be effortless for humans, it requires a significant amount of sophisticated computation. The goal of vision is to extract information needed for tasks such as manipulation, navigation, and object recognition. The robot traversing the platform will have different methods to face different obstacles, but in order to choose the right branch of action the robot first has to know what is happening. Perception in our robot will be done with active sensing. The robot should combine ultrasound with laser or camera vision. Visual observations are extraordinarily rich, both the detail they can reveal and in the sheer amount of data they produce. The extra problem to face with the wheelchair robot is then to determine which aspects of the rich visual stimulus should be considered to help make good choices, and which aspects to ignore. The ultrasound sensors will mainly determine the world map and notice when objects are moving. The visual observations should help the robot distinguish between different dynamic objects (e.g. human and cat). Together with the previous Norvig and Russel also mention that visual object recognition in its full generality is a very hard problem, but with simple feature-based approaches our robot should be able to know enough to take action. Perception can also help our robot measure | Although perception appears to be effortless for humans, it requires a significant amount of sophisticated computation. The goal of vision is to extract information needed for tasks such as manipulation, navigation, and object recognition. The robot traversing the platform will have different methods to face different obstacles, but in order to choose the right branch of action the robot first has to know what is happening. Perception in our robot will be done with active sensing. The robot should combine ultrasound with laser or camera vision. 
Visual observations are extraordinarily rich, both in the detail they can reveal and in the sheer amount of data they produce. The extra problem for the wheelchair robot is then to determine which aspects of the rich visual stimulus should be considered to help make good choices, and which aspects to ignore. The ultrasound sensors will mainly determine the world map and notice when objects are moving. The visual observations should help the robot distinguish between different dynamic objects (e.g. a human and a cat). Russell and Norvig also mention that visual object recognition in its full generality is a very hard problem, but with simple feature-based approaches our robot should be able to know enough to take action. Perception can also help our robot measure its own motion using accelerometers and gyroscopes. | ||

==== Effectors ==== | ==== Effectors ==== | ||

Effectors are the means by which robots move and change the shape of their bodies (Russell and Norvig, 2015). Our robot will have a differential drive for locomotion. This gives our robot three degrees of freedom (x,y position and orientation in the xy-plane), which can be measured with the Marvelmind beacons discussed before. Only two degrees of freedom are controllable and hence the robot is nonholonomic. This makes the robot harder to control as it cannot move sideways. Yet the choice for just two wheels on the sides of a disk makes the kinematic model of our robot a lot simpler. | Effectors are the means by which robots move and change the shape of their bodies (Russell and Norvig, 2015). Our robot will have a differential drive for locomotion. This gives our robot three degrees of freedom (x,y position and orientation in the xy-plane), which can be measured with the Marvelmind beacons discussed before. Only two degrees of freedom are controllable and hence the robot is nonholonomic. This makes the robot harder to control as it cannot move sideways. Yet the choice for just two wheels on the sides of a disk makes the kinematic model of our robot a lot simpler. | ||
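The simple kinematic model of a differential drive can be illustrated with a short sketch. This is not the project's code: the function name, the parameter names and the simple Euler integration step are assumptions chosen for illustration only.

```python
import math


def differential_drive_step(x, y, theta, v_left, v_right, wheel_base, dt):
    """Advance the pose (x, y, theta) of a differential-drive robot by one time step.

    v_left / v_right are the ground speeds of the two wheels (m/s),
    wheel_base is the distance between the wheels (m), dt is the step (s).
    All names are hypothetical; the update is a plain Euler integration.
    """
    v = (v_right + v_left) / 2.0             # forward speed of the robot centre
    omega = (v_right - v_left) / wheel_base  # turn rate in rad/s, positive = counter-clockwise
    x += v * math.cos(theta) * dt
    y += v * math.sin(theta) * dt
    theta += omega * dt
    return x, y, theta
```

Only the two wheel speeds are inputs, while the pose has three components. This is the nonholonomic constraint in practice: no combination of wheel speeds moves the robot sideways.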

== Robotic perception and path planning== | === Robotic perception and path planning=== | ||

Earlier on the perception criteria for the robot where discussed, here the hardware/software implementation is discussed. Russel and Norvig give the following | Earlier the perception criteria for the robot were discussed; here the hardware/software implementation is discussed. Russell and Norvig give the following definition of perception: "Perception is the process by which robots map sensor measurements into internal representations of the environment." Perception is hard because the environment is partially observable, unpredictable, and dynamic for our robot. In addition the sensors are noisy. In all cases the robot should filter out the good information and make a state estimate that contains enough information to make good decisions. | ||

=== Localization === | ==== Localization ==== | ||

The algorithm that enables the robot to find the goal requests positions and orientations. This section elaborates on the triangulation of positions and orientations. To triangulate the position, three beacons <math> (A, B, C) </math> are required. These beacons have positions <math>[A, B, C] = [(0,0), (0,B), (C,0)]</math>, in which <math>B</math> and <math>C</math> are constant values since the beacons do not move. Next we calculate the distance to all the beacons from the sender/receiver on the robot. We use the timestamp <math>t_{X,i}</math> and the speed of the signal <math>C</math>, for now assumed to be the speed of light. The <math>i</math> refers to the sender/receiver node to which the distance applies. In other words, the <math>i</math> refers to the corresponding coordinate system. | The algorithm that enables the robot to find the goal requests positions and orientations. This section elaborates on the triangulation of positions and orientations. To triangulate the position, three beacons <math> (A, B, C) </math> are required. These beacons have positions <math>[A, B, C] = [(0,0), (0,B), (C,0)]</math>, in which <math>B</math> and <math>C</math> are constant values since the beacons do not move. Next we calculate the distance to all the beacons from the sender/receiver on the robot. We use the timestamp <math>t_{X,i}</math> and the speed of the signal <math>C</math>, for now assumed to be the speed of light. The <math>i</math> refers to the sender/receiver node to which the distance applies. In other words, the <math>i</math> refers to the corresponding coordinate system. | ||

| Line 482: | Line 464: | ||

<math>\theta = Atan(\frac{Y_2 - Y_1}{X_2 - X_1})</math> | <math>\theta = Atan(\frac{Y_2 - Y_1}{X_2 - X_1})</math> | ||
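As a rough illustration of the localization described in this section, the sketch below computes a position from three measured beacon distances and an orientation from two consecutive positions. It assumes the beacon layout given above, with beacons at (0,0), (0,b) and (c,0), and noise-free distances. The function and parameter names are hypothetical, and <code>atan2</code> is used instead of a plain arctangent so that all four quadrants and a zero denominator are handled.

```python
import math


def trilaterate(d_a, d_b, d_c, b, c):
    """Position (x, y) from distances to beacons at (0, 0), (0, b) and (c, 0).

    Subtracting the circle equation around beacon A from those around
    beacons B and C leaves one linear equation each for y and x.
    Assumes exact (noise-free) distances; names are illustrative only.
    """
    x = (d_a ** 2 + c ** 2 - d_c ** 2) / (2.0 * c)
    y = (d_a ** 2 + b ** 2 - d_b ** 2) / (2.0 * b)
    return x, y


def heading(x1, y1, x2, y2):
    """Orientation in radians of the line from (x1, y1) to (x2, y2)."""
    return math.atan2(y2 - y1, x2 - x1)
```

With noisy measurements the three circles will generally not intersect in one point, so a real implementation would need some form of filtering or least-squares fit on top of this.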

==== Beacons of Marvelous Minds ==== | ===== Beacons of Marvelous Minds ===== | ||

After some research we found out that the drones team uses a [https://marvelmind.com/ Marvelmind] Robotics beacon system. The system can be used with an arduino. The company provides - among other - the following information: | After some research we found out that the drones team uses a [https://marvelmind.com/ Marvelmind] Robotics beacon system. The system can be used with an arduino. The company provides - among other - the following information: | ||

| Line 498: | Line 480: | ||

beacons simultaneously – for 2D (X,Y) tracking. The distance between beacons cannot exceed 30 m." | beacons simultaneously – for 2D (X,Y) tracking. The distance between beacons cannot exceed 30 m." | ||

This system should be used in our robot in order to keep a clear reference to the real world and know where the robot is itself. | This system should be used in our robot in order to keep a clear reference to the real world and know where the robot is itself. | ||

==== Mapping ==== | |||

To be able to plan a path, a map is needed first, and knowing where you are is only part of creating that map. The robot knows where it is via the beacons and local measurement hardware. The next step is to determine where the obstacles are relative to the robot and to put them in a map. To determine where the obstacles are, the robot uses ultrasound range sensors. These give a certain range, and with that the object gets a landmark and a position in the map. Russell and Norvig describe the Kalman filter and the extended Kalman filter. The difference lies in how the sensors and robot are modelled: the standard Kalman filter uses only linear models for motion and sensors, whereas the extended Kalman filter linearizes nonlinear models around the current estimate. They describe these filters alongside Monte Carlo localization, which represents the position estimate with a set of particles instead. In our robot solution we give the robot a map of the waiting platform before it has to traverse it. In addition the beacons are used to acquire the location. This means that the robot is not required to simultaneously localize and map. In other words, we do not see SLAM as needed nor desired for localization. | |||

A greater problem is to find out what kind of object is in front of us. In other words we would like to identify the landmarks. This is problematic, because we would like to use camera vision to address this problem. However, perception is complicated and this report merely describes some solutions and gives a step-up to some background. | |||

In addition the planning should be able to address uncertainties. To solve this the robot needs to re-plan the path continuously and keep asking for information when it faces high uncertainty. | |||

==== Determining location of the door ==== | |||

For the robot to be able to help a person exit the train, the location of the person in the train is needed. The trains in the Netherlands do not stop at exactly the same place on a platform every time. This will cause a problem when a robot is being used. In Den Bosch they are experimenting with LED lights that are updated in real time to show people where a door will be located when the train stops. This information can be useful for determining the location of the person on board the train. When the location of the door for handicapped persons is known, the system can send this information to the robot. Then the robot knows where someone is located and can drive to that location. | |||

[[File:ledlight.PNG|400px|thumb|right]] | |||

==== Planning to move ==== | |||

=== Planning to move === | |||

The robot will use point-to-point motion to deliver the robot to the end location. The original 3D space is turned into the configuration space. This space is continuous and can be solved by either cell decomposition or skeletonization. They both reduce the continuous path-planning problem to a discrete graph-search problem. In addition the robot should be able to do compliant motion. This means that the robot is in physical contact with an obstacle and it is necessary to add this to the robot as people on the waiting platform can start to push the robot or have other forms of contact. | The robot will use point-to-point motion to deliver the robot to the end location. The original 3D space is turned into the configuration space. This space is continuous and can be solved by either cell decomposition or skeletonization. They both reduce the continuous path-planning problem to a discrete graph-search problem. In addition the robot should be able to do compliant motion. This means that the robot is in physical contact with an obstacle and it is necessary to add this to the robot as people on the waiting platform can start to push the robot or have other forms of contact. | ||
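To make the reduction from continuous path planning to graph search more concrete, here is a minimal sketch of cell decomposition followed by a breadth-first search. The grid encoding (0 = free cell, 1 = obstacle), the four-neighbour connectivity and the function name are assumptions for illustration; a real planner would more likely use A* with costs.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a cell decomposition of the platform.

    grid is a list of rows with 0 for a free cell and 1 for an obstacle;
    start and goal are (row, column) tuples. Returns the list of cells
    from start to goal, or None when the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}  # also serves as the set of visited cells
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and (nr, nc) not in parent):
                parent[(nr, nc)] = cell
                queue.append((nr, nc))
    return None
```

Because every step between neighbouring cells has the same cost here, breadth-first search already returns a path with the fewest cells.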

=== Moving === | ==== Moving ==== | ||

A path found by a search algorithm can be executed by using the path as the reference trajectory for a PID | A path found by a search algorithm can be executed by using the path as the reference trajectory for a PID controller. The controller is necessary for our robot as path planning alone is usually insufficient. In our demonstration we simply specified a robot controller directly. So rather than deriving a path from a specific model of the world, our controller just switches state in a finite state machine, as it encounters problems. This implementation is a lot easier and gives us a nice demonstration for the robot we build. The finite state machine also allows for easy feedback towards the user. The different states give a good indication of the actions that will be taken. However the higher level path planning is necessary for autonomously traversing the platform. | ||
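A PID controller that follows a reference trajectory can be sketched as below. The class, its gains and the way the error is defined (for example the distance between the robot and the planned path) are assumptions for illustration and were not part of our demonstration code.

```python
class PID:
    """Minimal discrete PID controller (illustrative sketch only)."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.previous_error = None  # no derivative term on the first call

    def update(self, error, dt):
        """Return the control output for the current error and time step dt."""
        self.integral += error * dt
        if self.previous_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.previous_error) / dt
        self.previous_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative
```

The output could, for example, be used as a steering correction that is added to one wheel speed and subtracted from the other. The gains would have to be tuned on the actual robot.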

== | ==== Obstacle Avoidance ==== | ||

In order to make it more suitable for the train platform case, we would like to make use of the dynamics that govern the people moving on the platform. To achieve this one should first make a distinction between static and dynamic objects; otherwise it is not possible to determine which actions to take. To distinguish static from dynamic objects, we want to use a coarse 2D grid (cell decomposition). All benches and other static objects are hard-coded in the grid. Then we use the sensors to detect objects, which gives an indication of the tile in which an object is detected. The robot then evaluates the map and decides whether it knows the object or not. If it knows the object it can just follow the path that was already planned around the object; otherwise it should find a way to get past the object. In that case it could be a static-dynamic object like a case, which can be moved, or a dynamic object that can move by itself. | |||

==== Docking and entering the train ==== | |||

The robot will position itself using local light sensitive sensors and reflectors beneath the train. This only happens in the last moment. The robot is already close enough to the train so that no people can interfere with the light sensors. Also for these sensors, probability filters will have to be used to filter noise out and get reliable results. The docking of the robot will be left to the robot itself and cannot be done manually to prevent any accidents. | |||

[[File:Laser_docking.JPG|400px| Picture 1]] | |||

When docking to the train the other passengers might block the robot. As mentioned, the train has information about the position of the disabled person in the train. If the disabled person scans his OV-chipcard inside the train a beacon starts, and it also activates a red light on the door that indicates that a disabled person will leave the train. The same can be applied when the disabled person scans his OV-chipcard at the dock. The robot connects to the beacon at the door of the train, and the established connection will also trigger a red light on the outside of the door. This will make the end phase of the docking less troublesome, because it will hopefully deter other passengers from the train doors where the robot will board. | |||

[[File:Docking_user.JPG|400px| Picture 1]] [[File:Traindock.JPG|850px| Picture 1]] | |||

The handicapped person will board the train using a lift system. When the robot is docked to the train the plate goes up; when the plate is at the right height the lift stops and the person can easily get on or off the train. | |||

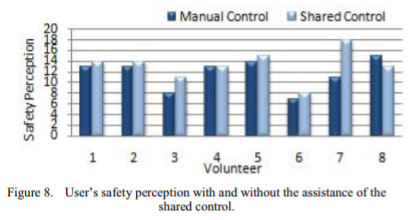

== Shared Control == | == Shared Control == | ||

| Line 546: | Line 523: | ||

Another research that serves as an inspiration for this project was performed by Connell and Viola(1990). They made a striking comparison to riding a horse and driving a car. A horse will not crash at high speed, and “if you fall asleep in the saddle, a horse will continue to follow the path it is on.” This illustrates the added value of shared control very well. In this article, the robot works as follows: the operator(the disabled person) is free to drive the robot in any direction, but the robot will refuse to continue its path if it detects an obstacle. This is similar to the way we are designing our robot. Shared control is beneficial in two cases: if the robot is too cautious (for example in a very busy environment), the disabled person can gain complete control to increase efficiency. On the other hand, when the person is either unable to drive, or tired, he can fully hand over all power to the robot. | Another research that serves as an inspiration for this project was performed by Connell and Viola(1990). They made a striking comparison to riding a horse and driving a car. A horse will not crash at high speed, and “if you fall asleep in the saddle, a horse will continue to follow the path it is on.” This illustrates the added value of shared control very well. In this article, the robot works as follows: the operator(the disabled person) is free to drive the robot in any direction, but the robot will refuse to continue its path if it detects an obstacle. This is similar to the way we are designing our robot. Shared control is beneficial in two cases: if the robot is too cautious (for example in a very busy environment), the disabled person can gain complete control to increase efficiency. On the other hand, when the person is either unable to drive, or tired, he can fully hand over all power to the robot. | ||

Because there is no information available with respect to shared control in wheelchair lifting devices, | Because there is no information available with respect to shared control in wheelchair lifting devices, when the system encounters an object or the end of the platform, it needs the user to take over the driving mechanism. But what is the best way to indicate to the user that he needs to act? Obviously, the system needs to tell the user what is happening, and when it wants the user to take over control and move the machine himself. “Shared control between human and machine: using haptic steering wheel to aid in land vehicle guidance” (Steele et al., 2001) concludes that incorporating haptic feedback into the control device (in our case the joystick) improves the alertness of users. An example of haptic feedback is a vibration signal to the user when the machine encounters a problem and needs to give control to the user. This alerts the user to take control immediately. “Haptic shared control: smoothly shifting control authority?” (Abbink et al., 2011) concludes that haptic shared control can lead to short-term performance benefits (faster and more accurate vehicle control, lower levels of control effort). Thus, it would be wise to incorporate force feedback (haptic control) into the feedback system to the user. Much like the autopilot of Tesla (see reference list), which requires the driver to keep his hands on or near the steering wheel and gives haptic feedback when it needs the user to act, our machine could require the user to keep his hand on the joystick. | ||

When the wheelchair lifting device encounters an obstacle, the end of the platform, or an error, it needs to signal to the user what is needed of him. Because a screen is already incorporated in the device due to the checking in system of the OV-chipcard, it makes sense to give this also the purpose of indicating signals when the user needs to take control. The user also needs to have the option to take control himself, without the obstacle encountering a problem. This shared control can be displayed on the screen, and it can be made touchscreen so the user can press a button and take control of the machine. This however brings a problem, because according to “Visual-haptic feedback interaction in automotive touchscreens” (Pitts et al, 2012) touchscreens in the automotive industry take away user awareness of the surroundings (because it adds a visual workload to the user). However, it also concludes that incorporating haptic feedback counters this and improves the overall situational awareness. This research suggests that is it a good idea to provide information on the screen alerting the user that he needs to take control (by pressing the button on the touchscreen), while also alerting the user with force feedback (vibration) to inform him he needs to take an action. | When the wheelchair lifting device encounters an obstacle, the end of the platform, or an error, it needs to signal to the user what is needed of him. Because a screen is already incorporated in the device for the OV-chipcard check-in system, it makes sense to also use this screen to signal when the user needs to take control. The user also needs to have the option to take control himself, without the robot having encountered a problem. The shared control state can be displayed on the screen, and the screen can be a touchscreen so the user can press a button and take control of the machine. 
This however brings a problem, because according to “Visual-haptic feedback interaction in automotive touchscreens” (Pitts et al., 2012) touchscreens in the automotive industry take away user awareness of the surroundings (because they add a visual workload for the user). However, the study also concludes that incorporating haptic feedback counters this and improves the overall situational awareness. This research suggests that it is a good idea to provide information on the screen alerting the user that he needs to take control (by pressing the button on the touchscreen), while also alerting the user with force feedback (vibration) to inform him that he needs to take action. | ||

| Line 559: | Line 536: | ||

*Besides implementing the principle of shared control, the concept itself already gives the disabled person more influence on the process, as he or she now does not have to contact the NS long beforehand, is independent of NS travel assistants and can use the robot without any help. | *Besides implementing the principle of shared control, the concept itself already gives the disabled person more influence on the process, as he or she now does not have to contact the NS long beforehand, is independent of NS travel assistants and can use the robot without any help. | ||

= Prototype = | |||

[[File:prototype_schematic.jpeg|600px|right]] | |||

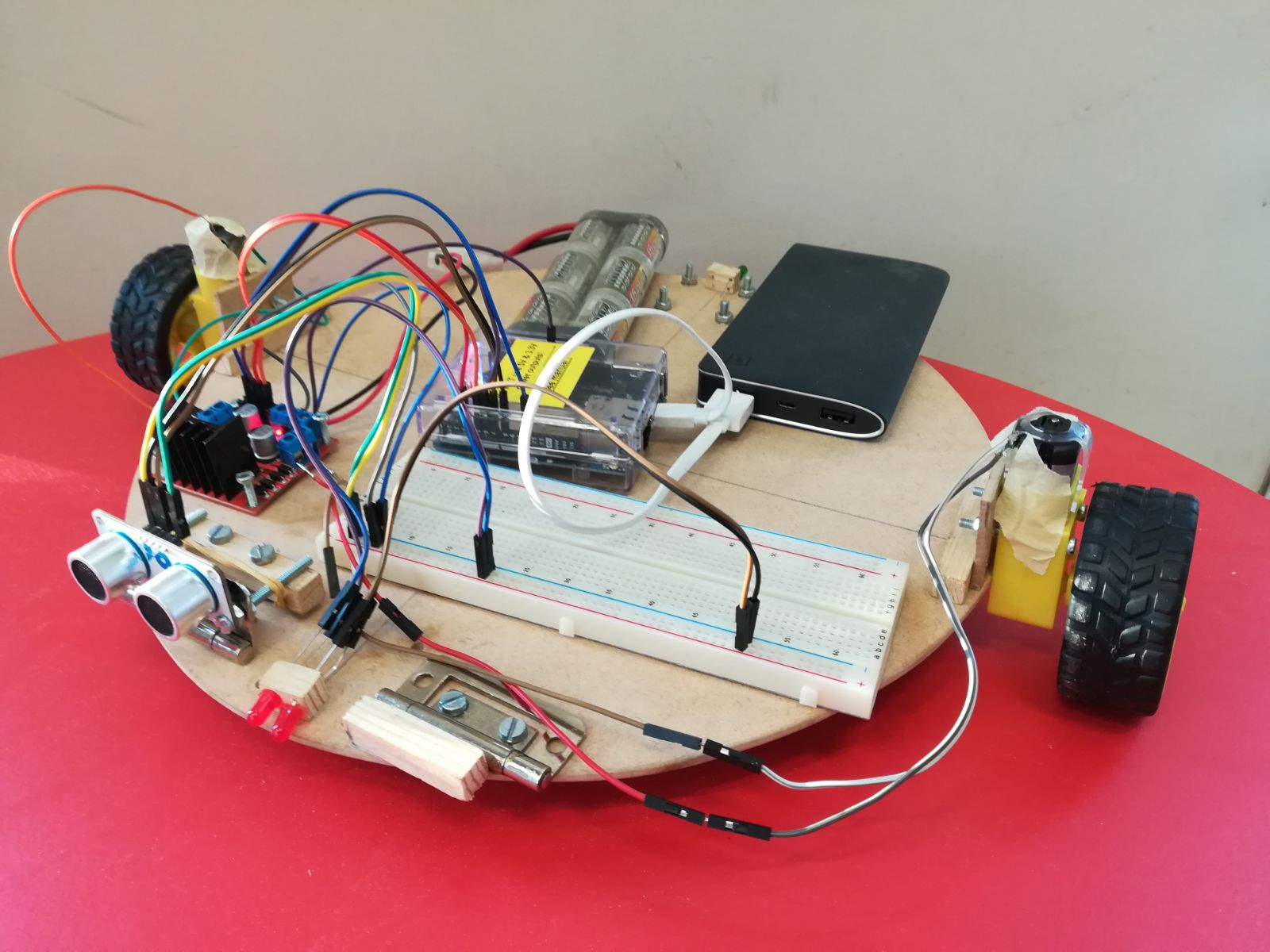

== What problem does the prototype solve? == | == What problem does the prototype solve? == | ||

The prototype is a demonstration of the shared control concept discussed earlier. This prototype will show the possibility to aid a disabled person to get from A to B. So when a person wants to drive forward into an obstacle the robot will drive around it and let the driver know it is interfering. The project itself would yield a lot of literature and little practice if it weren't for the demonstrator so it also enables us to experience the difficulties that hardware implies on the theoretical heaven we sometimes tend to experience. | |||

This | |||

== RPC's prototype == | == RPC's prototype == | ||

'''Requirements''' | '''Requirements''' | ||

* Drive in | * Drive in straight lines and make turns. | ||

* | * Be controllable by the user, through a laptop and Arduino. | ||

* Avoid hitting obstacles, therefore the robot needs to be able to sense objects in its surrounding that are at least within 1 meter of its own position. When an obstacle is too close the robot has to stop and let | * Avoid hitting obstacles, therefore the robot needs to be able to sense objects in its surrounding that are at least within 1 meter of its own position. When an obstacle is too close the robot has to stop and let the user and surroundings know that something is blocking its path. | ||

* The prototype should take over control if the user wants to move into an obstacle (shared control). | |||

'''Preferences''' | '''Preferences''' | ||

* | * Give feedback to the user to let him know what the robot is up to. | ||

* Be able to sense an object in its surroundings and, if the object is in its path, be able to alter its path to navigate around it. | * Be able to sense an object in its surroundings and, if the object is in its path, be able to alter its path to navigate around it. | ||

* The prototype should be as cheap as possible. | * The prototype should be as cheap as possible. | ||

'''Constraints''' | '''Constraints''' | ||

* The prototype cannot cost more than €100,- | |||

* The prototype cannot cost more than | |||

* The prototype should have dimensions around 30X30 cm | * The prototype should have dimensions around 30X30 cm | ||

= Prototype | ==Prototype specification == | ||

[[File: | First the hardware of the solution is presented and thereafter the software implementation of the shared control is explained. | ||

== | === Hardware === | ||

=== | |||

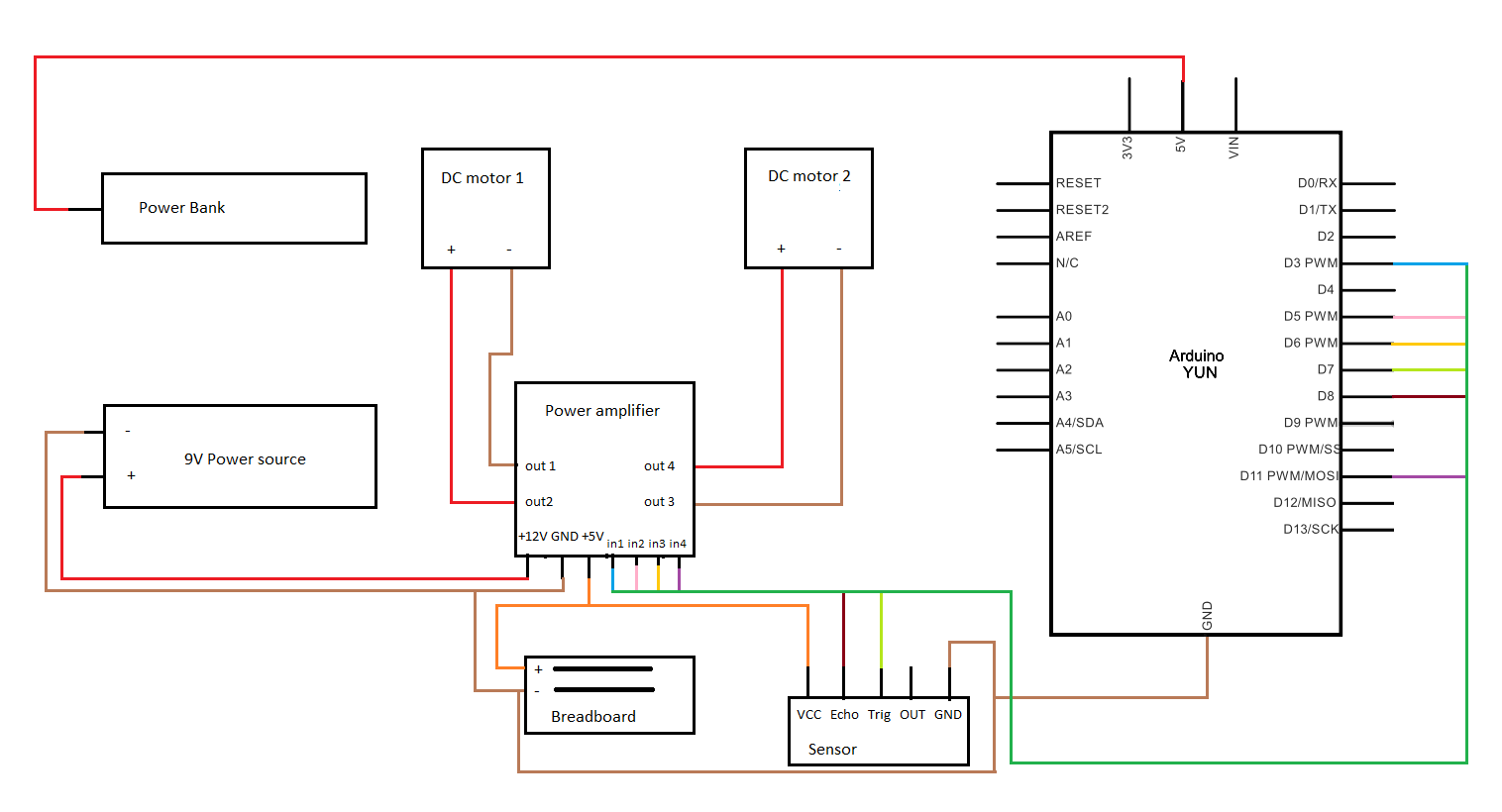

[[File:Prototype.jpeg|450px|right]] | |||

===== List of parts ===== | |||

* 2 wheels | |||

* 2 DC motors | |||

* Swivel wheel | |||

* Arduino | |||

* Plate as chassis | |||

* Hinge | |||

* Ultrasonic distance sensor | |||

* Wires | |||

* Powerbank | |||

* 9V battery | |||

* Power amplifier | |||

* Breadboard | |||

* LED-lights (green & red) | |||

A wooden plate is used as the chassis of the prototype. The motors are attached to the plate and drive the wheels. The sensor is attached to a hinge at the front of the prototype; the hinge is locked in one position so that the sensor stays fixed. The rest of the equipment is attached to the plate as well. The sensor is an ultrasonic distance sensor that can measure distances between 0.1 and 4.5 m. Other sensors were considered as well, but this type of sensor seemed to fit our project best: infrared sensors and vision systems had the disadvantage of being too expensive and not practical for our prototype. The image on the right gives a schematic view of the electronic hardware. | |||

[[File:Schematic prototype.png|600px|right]] | |||

=== Software === | |||

We used our basic knowledge of programming to construct a state-based algorithm. The algorithm's main objective is to avoid hitting obstacles. When the robot's sensors sense an obstacle, it switches state to avoid a collision and maneuver around the obstacle, while sharing information with the driver. The software first defines the actions the robot can take. These are: | |||

* '''leftturn''' makes the robot turn left | |||

* '''rightturn''' makes the robot turn right | |||

* '''forward''' makes the robot move forward | |||

* '''slow down''' makes the robot slow down | |||

* '''pause''' makes the robot stop | |||

* '''measure''' returns the distance measured by the ultrasound sensor. | |||

* '''input''' Checks for input from the user(laptop) | |||

The Arduino code is shown in the pdf below: | |||

[[File:code.pdf]] | |||

==== Software explained ==== | |||

The main task of our code is to keep constant track of objects in front of the vehicle, while the operator must be able to take control of the vehicle to stop or turn at all times. After some setup, the code runs in a loop, continuously measuring the distance to objects and waiting for an input. The input is given by numbers: “5”: stop; “6”: turn right; “4”: turn left; “8”: straight forward. The first three of those inputs are direct tasks, which means that when someone presses “5”, “6” or “4” the vehicle will immediately stop, turn right or turn left, respectively. The last one, “8”, is where the shared control comes in. When someone presses “8”, the sensor first measures whether an object is close in front of the vehicle. If this is not the case the vehicle will drive forward. If an object is closer than 70cm to the vehicle, the vehicle will continue forward at a slower pace until it comes within 40cm of the object. Then the vehicle will turn about 90 degrees left and move forward, then it will turn right again and move forward for 2.4 seconds. Then it will turn right, move forward to get back to its old trajectory and turn left again. While evading the obstacle the vehicle keeps measuring for new objects in front of it; when a second object comes within 40cm, it will evade that object in the same manner as the first. | |||
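The decision logic described above can be summarised in a small sketch. This is not the Arduino code from the pdf but a simplified Python rendering of the description. The function name, the "evade" label for the whole evasion manoeuvre, the treatment of exactly 40 cm as inside the evade range and the handling of unknown keys are assumptions.

```python
SLOW_DISTANCE_CM = 70   # closer than this: continue at a slower pace
EVADE_DISTANCE_CM = 40  # within this range: start the evasion manoeuvre


def choose_action(key, distance_cm):
    """Map a key press and a measured distance to one of the robot actions.

    "5", "6" and "4" are direct commands; "8" is the shared-control case
    in which the distance measurement can overrule the user.
    Returns None for unknown keys (an assumption, meaning "no change").
    """
    if key == "5":
        return "pause"
    if key == "6":
        return "rightturn"
    if key == "4":
        return "leftturn"
    if key == "8":
        if distance_cm <= EVADE_DISTANCE_CM:
            return "evade"      # hypothetical label for the turn/forward sequence
        if distance_cm < SLOW_DISTANCE_CM:
            return "slow down"
        return "forward"
    return None
```

In the main loop this function would be called after every '''measure''' and '''input''' step, so that a new obstacle during an evasion is handled in the same way as the first one.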

As can be seen in the code, we wanted to add a second sensor that measures the distance to the ground, so that the vehicle knows when it has reached the end of the platform and does not drive blindly off the platform. Because the second sensor was defective, we had to cancel this. This part of the code is still in the file, but the distance to the ground is fixed to 7cm, so it will not cause problems. | |||

== Results & conclusion of the prototype == | |||

In the end the robot did avoid obstacles, but it could not detect the edge of the platform. The sensor used to detect the edge of the platform failed and left us with just one sensor. In the future the single sensor could be mounted on top of a servo, which would make the viewing angle much bigger. Our robot sometimes missed obstacles and drove into them, because the robot itself is wider than the area covered by the sensor at the given angle and distance of the object. Below is a video in which the driver just wants to move forward. It can be seen that the robot moves neatly around the obstacle. | |||

[[File:Prototype_demo.zip]] | |||

[[File:Ezgif-5-41857eb794.gif]] | |||

The results of the prototype look very promising. The vehicle was able to operate on its own when there was an object in front of it. With more sensors this can be fine-tuned to make it safer. The software should be adjusted for multiple sensors, so that the vehicle always chooses the shortest way to its destination. Another thing we could not achieve with our prototype is giving the robot knowledge of its orientation and position. This is essential to bring the vehicle closer to a real robot that can operate autonomously on a platform, but it did not fit in the scope and time of this project. The idea behind the prototype showed a lot of potential, but more resources and time are needed to draw a definite conclusion about the feasibility of the robot. | |||

= Conclusion of the project = | |||

The project successfully addresses its main goal, which was to design a robot that enables wheelchair-bound people to use public transit. The robot is a complete solution from start to end, addressing most if not all problems that are part of the current solution. A questionnaire was used to identify the user needs of disabled people and NS personnel. | |||

The current reservation system (calling the helpdesk) is replaced by integrating the reservation into the NS-app, enabling disabled people to plan their trip much closer to the departure time. The OV-chipcard is used to activate the robot, which will then trigger the navigation. | |||

We have proven the concept of shared control with our prototype, but since it is expected of the robot to be able to drive autonomously from the get-go, this is potentially not neccesary in the final design. Without the help of NS-staff, the self-sufficiency of the disabled is improved. Because this robot is able to be implemented on all NS-stations, there can be an improvement in routes that are wheelchair accessible. The lights and alarm on the robot, even if fully autonomous, are a literature-proven concept that will improve its presence on the platform. Furthermore, the actual lifting process was already proven by the existence of mobile wheelchair lifting devices. | |||

The project proposes base guidelines for personal space, lighting, sound, check-in, reservation app integration, shared control navigation, autonomous driving and docking. | |||

There are a couple of complications that need to be taken into account. Firstly, there needs to be a solution for the NS staff that are impacted by the robotic system taking over this aspect of their job. Secondly, we have only proven the shared control concept of the robot, not the autonomous driving concept. Also, we came to the conclusion that if it is expected of the robot to be able to drive autonomously to the train door, there is no need for the disabled person to board the robot at the start, but can board it when it is docked to the train door. We used the shared control concept as a solution to accomodate the needs of the disabled people asking to get more control and to get a working prototype. | |||

= Recommendations =

This chapter gives some recommendations on the continuation of the project.

In order to improve the solution, the robot first has to be able to dock to the train autonomously. In our project we only described a concept in which the robot should do this. For the solution to be useful it should be very robust and function 99 percent of the time. Furthermore, in case of breakdown or failure the robot should have a manual override accessible by the conductor of the train. This way the robot can at least be decoupled from the train so that the train can go on.

In the report we have seen that the railway staff is not exactly supportive of the new solution. We believe that in further advancements the NS should be involved more in the solution. That way we may find a solution that gives the disabled more mobility and control while the service staff of the NS is satisfied as well.

In our solution we used the shared control concept to give disabled people more control over their travelling, and also because we had difficulties with the prototype and were unable to let it drive autonomously. However, the disabled person also needs to get off the train, and therefore the robot has to find its own way to the train anyway. If the robot does not have to drive each disabled person to the train and then return to the charging station, the capacity will be higher. Say, for instance, three disabled people want to board the train: it would not be logical to let the robot dock to the train three times. In conclusion, there should be other ways to give the disabled more control. We already gave indications for an app, but they could also have some control during the boarding process. For instance, the lift could be controlled by the disabled person. This is something to continue with.

At the moment we use a visual interface (the app) to communicate with the user. In the end the solution could help people with other handicaps, rather than just wheelchair users, to travel by train. Further development of the interface for other senses, for example voice control (sound), could therefore make the solution more versatile.

As a final recommendation the economic feasibility should be investigated, as this report lacks any economic analysis.

= Collaboration process =


**Beacons: by using beacons we can track in real time where the robot is located. Gijs and Tjacco have visited mr. Duarte and from this it became clear we can use the beacons for this project. This means however we have to test the prototype specifically at Duarte.

**World model: we intend to pre-program static objects in the train environment (e.g. benches). In this way, the robot knows what to avoid.

**Object detection: the prototype should be able to detect an obstacle. In the prototype it would be too advanced to give objects a label, like person or suitcase. So the prototype goal is simply to avoid a given obstacle whenever it faces one.

**Encounter humans: in the hypothetical solution we should be able to distinguish humans from a suitcase, so that the robot can make different choices when it encounters different objects. We imagine a human will move aside when we give sound and light indications, hopefully deterring them from our path. If they keep standing still we assume they won't move and the robot will move around them.

*We also received the following feedback: we should know what the requirements are for an autonomous system in order for it to fully replace humans. This week we have further specified our list of RPCs, and the results of the questionnaires have yielded additional user requirements.

*From the meeting with the teachers we received the info that at the train station in Den Bosch sensors indicate where the train is going to be when arriving, and where the doors are. This implies that there is an information system at the NS which knows where a train will stop exactly. This may have a slight margin. This is extremely useful for the project, as this means we can assume the robot can access that information system and use that info for where it should go. For the final centimeters, it can use the beacons located in the doors to dock.
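The "Object detection" and "Encounter humans" items in the list above can be read as a small decision rule. The sketch below is only one possible interpretation: the labels, the `choose_action` function and the waiting time `patience_s` are hypothetical and were not part of the prototype, which could not label objects at all.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    CONTINUE = "continue"
    SIGNAL_AND_WAIT = "signal and wait"
    DRIVE_AROUND = "drive around"


def choose_action(obstacle: Optional[str], waited_s: float,
                  patience_s: float = 5.0) -> Action:
    """Pick the robot's next action for whatever is in front of it.

    obstacle   -- None if the path is free, otherwise a label such as
                  "human" or "suitcase" (labels are an assumption).
    waited_s   -- how long the robot has already been signalling.
    patience_s -- hypothetical time after which a human is assumed
                  not to move aside.
    """
    if obstacle is None:
        return Action.CONTINUE
    if obstacle == "human" and waited_s < patience_s:
        # Give sound and light indications and wait for the person to move.
        return Action.SIGNAL_AND_WAIT
    # Static objects, or humans who keep standing still, are driven around.
    return Action.DRIVE_AROUND


if __name__ == "__main__":
    for label, waited in [(None, 0.0), ("human", 1.0), ("human", 6.0), ("suitcase", 0.0)]:
        print(label, waited, choose_action(label, waited).value)
```

Keeping the rule in one function makes it easy to change the waiting time or add further object labels later without touching the navigation itself.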


*In week 8, we will be peer-reviewing one another.

= Peer Review =

After a meeting on Monday, we had an open conversation about the teamwork of the past 8 weeks. Everyone agreed that the collaboration went smoothly in multiple ways: deadlines were met, the group structure was clear after week 2 and the quality of work was high. This resulted in the same individual grade for the entire group: an 8.

= References =

Autonomous regulations congress. (n.d.). Retrieved September 21, 2017, from: http://www.autonomousregulationscongress.com/

Autopilot. (n.d.). Retrieved October 27, 2017, from https://www.tesla.com/nl_NL/autopilot

Beantwoord: Bevindingen proef station 's Hertogenbosch. (n.d.). Retrieved October 27, 2017, from https://forum.ns.nl/archief-43/bevindingen-proef-station-s-hertogenbosch-873

Brandl, C., Mertens, A. and Schlick, C. M. (2016), Human-Robot Interaction in Assisted Personal Services: Factors Influencing Distances That Humans Will Accept between Themselves and an Approaching Service Robot. Hum. Factors Man., 26: 713–727. doi:10.1002/hfm.20675

Butler, J. T., & Agah, A. (2001). Psychological effects of behavior patterns of a mobile personal robot. Autonomous Robots, 10, 185–202.

Connell, J., & Viola, P. (1990). Cooperative control of a semi-autonomous mobile robot. In Proceedings IEEE International Conference on Robotics and Automation (Vol. 2, pp. 1118–1121). https://doi.org/10.1109/ROBOT.1990.126145

Dallaway, J. L., & Tollyfield, A. J. (1990). Task-specific people control of a robotic aid for disabled. Journal of Microcomputer Applications, 321–335.

E.V., D. Z. (2017, May 23). Retrieved October 27, 2017, from http://www.germany.travel/en/ms/barrier-free-germany/how-to-book/deutsche-bahn.html

Hall, E. T. (1966). The hidden dimension: Man's use of space in public and private. London: The Bodley Head.

Health and safety executive. (2008). Retrieved September 21, 2017, from: http://www.legislation.gov.uk/uksi/2008/1597/schedule/2/part/1/made

Ivens, L. and Kant, A. (2004). Ontspoord, Gehandicapten bij de NS. Tweede-Kamerfractie SP.

Koay, K.L., Syrdal, D.S., Ashgari-Oskoei, M. et al. Int J of Soc Robotics (2014) 6: 469. https://doi.org/10.1007/s12369-014-0232-4

Koay, K. L., Syrdal, D. S., Walters, M. L., & Dautenhahn, K. (2007). Living with robots: Investigating the habituation effect in participants' preferences during a longitudinal human-robot interaction study (pp. 564–569). Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication, August 26–29, 2007, Jeju.

Oishi, S. (2010). The psychology of residential mobility: Implications for the self, social relationships, and well-being. Perspectives on Psychological Science, 5(1), 5-21.

Patent check. Retrieved October 27, 2017, from http://appft.uspto.gov/netacgi/nph-Parser?Sect1=PTO2&Sect2=HITOFF&u=%2Fnetahtml%2FPTO%2Fsearch-adv.html&r=0&f=S&l=50&d=PG01&OS=train%2BAND%2Bwheelchair&RS=train%2BAND%2Bwheelchair&PrevList1=Prev.%2B50%2BHits&TD=1328&Srch1=train&Srch2=wheelchair&Conj1=AND&StartNum=&Query=lifting%2BAND%2Bwheelchair

Petry, M. R., Moreira, A. P., Braga, R. A. M., & Reis, L. P. (2010). Shared control for obstacle avoidance in intelligent wheelchairs. In 2010 IEEE Conference on Robotics, Automation and Mechatronics, RAM 2010 (pp. 182–187). https://doi.org/10.1109/RAMECH.2010.5513193

Russell, Stuart J., and Peter Norvig. Artificial Intelligence: a Modern Approach. Pearson, 2014.

SPECIAL TRAVEL NEEDS. (n.d.). Retrieved October 27, 2017, from https://www.eurostar.com/rw-en/travel-info/travel-planning/accessibility

Steptoe, A., Shankar, A., Demakakos, P., & Wardle, J. (2013). Social isolation, loneliness, and all-cause mortality in older men and women. Proceedings of the National Academy of Sciences, 110(15), 5797-5801.

Walters, M. L., Syrdal, D. S., Koay, K. L., Dautenhahn, K., & te Boekhorst, R. (2008). Human approach distances to a mechanical-looking robot with different robot voice styles (pp. 707–712). Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication, August 1–3, 2008, Munich.

Złotowski, J. A., Weiss, A., & Tscheligi, M. (2012). Navigating in public space: Participants' evaluation of a robot's approach behaviour (pp. 283–284). Proceedings of the Seventh Annual ACM/IEEE International Conference on Human-Robot Interaction, March 5–8, 2012, Boston, MA.

Latest revision as of 00:05, 31 October 2017

| Members of group 3 | |

| Karlijn van Rijen | 0956798 |

| Gijs Derks | 0940505 |

| Tjacco Koskamp | 0905569 |

| Luka Smeets | 0934530 |

| Jeroen Hagman | 0917201 |

Introduction

Robotics is an unavoidable, rapidly evolving technology which could bring a lot of improvements to the modern world as we know it today. The challenge, however, is to invest in the kind of robotics that will make the investment worthwhile, instead of investing in research that will never be able to pay back its investment. This report investigates a robotics technology with the goal of solving the initial problem statement. This chapter describes the problem that was chosen, the objective of our project and the approach by which the solution will take form.

Problem Definition & Approach