Embedded Motion Control 2015 Group 5: Difference between revisions

| Line 51: | Line 51: | ||

==Considerations / Requirements / Specifications== | ==Considerations / Requirements / Specifications== | ||

[[File:Embedded_motion_control_interfaces.jpg|thumb|upright=1.0|alt=System interfaces.|System interfaces.]] After a first | To use the position and orientation of the robot and it surroundings, there is chosen to use the Laser Range Finder data. | ||

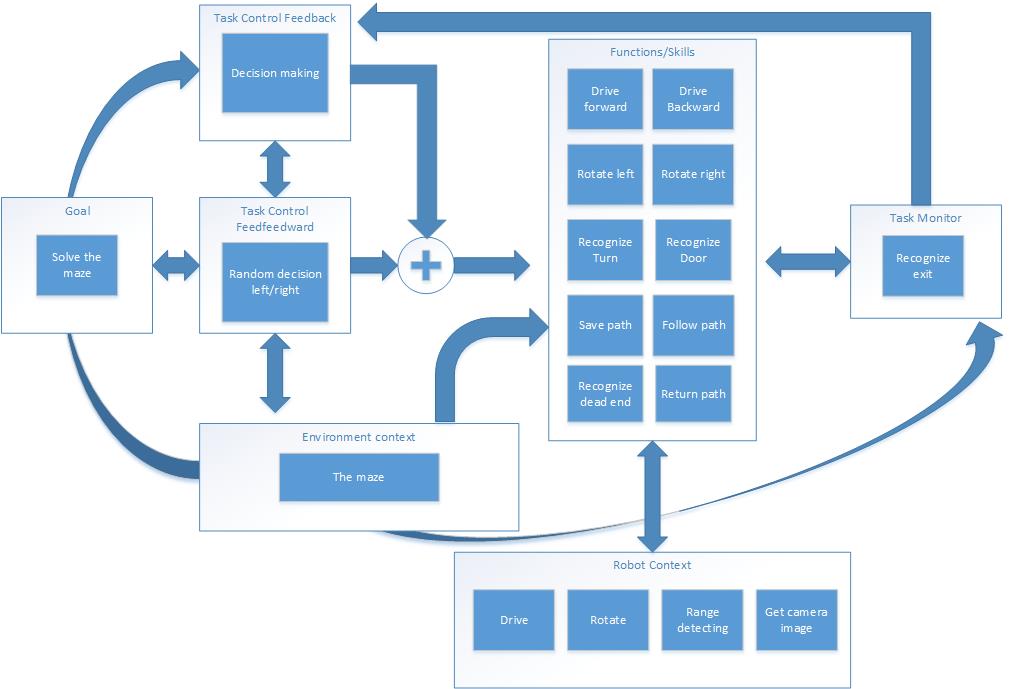

[[File:Embedded_motion_control_interfaces.jpg|thumb|upright=1.0|alt=System interfaces.|System interfaces.]] After a first successful brainstorm, the first draft of the system interface is determined. In this schematic the different contexts are presented. The goal to solve the maze is monitored by the Task monitor. Based on the observed environment and the maze solving algorithm, a set of skills is selected. Task control feedforward is used when no deterministic choice can be made. These skills are based on the robot's basic functions. | |||

==Code overview== | ==Code overview== | ||

Revision as of 09:14, 19 June 2015

This Wikipedia page is written as a log of the project of the course Embedded Motion Control. The aim of this course is to design and apply software in the context of an autonomous robot. Therefore two assessment moments are held. This Wikipedia page is structured according to these two assessment moments, namely the corridor challenge and the maze challenge.

Group Members

| Name: | Student id: |

| Bart van Willigen | 0770142 |

| Joost Peters | 0747630 |

| Robin Loose | 0771575 |

| Koen Bos | 0763939 |

| Joost Franssen | 0824821 |

| Lotte de Koning | 0655209 |

| Marjon van 't Klooster | 0819200 |

Corridor challenge

In the first assessment moment, the corridor challange, the robot has to drive autonomous through a corridor where it has to take the first exit. This moment will teach us whether our structure and our thoughts about how a software needs to be designed and applied is correct.

Considerations / Requirements / Specifications

To use the position and orientation of the robot and it surroundings, there is chosen to use the Laser Range Finder data.

After a first successful brainstorm, the first draft of the system interface is determined. In this schematic the different contexts are presented. The goal to solve the maze is monitored by the Task monitor. Based on the observed environment and the maze solving algorithm, a set of skills is selected. Task control feedforward is used when no deterministic choice can be made. These skills are based on the robot's basic functions.

Code overview

Code structure

Functions

Simulation (if we still have that)

Corridor Challenge

Evaluation

Maze challenge

Considerations / Requirements / Specifications

Code overview

=Code structure

Functions

Potential field

Simulation (if we still have that)

Corridor Challenge

Evaluation

Course Evaluation

Software design assignment

The software design assignment can be found here.

Corridor challenge

Goal: Lead PICO as fast as is robotically possible through a corridor with an unknown junction. The first side exit at the junction should be taken.

Approach

Development

Main

Tasks

- Aligning and centering the robot:

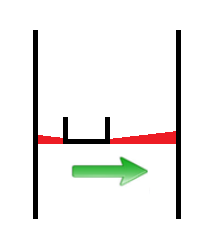

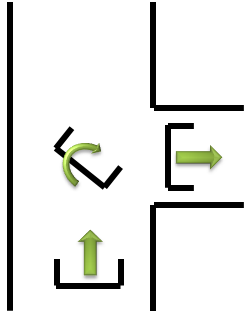

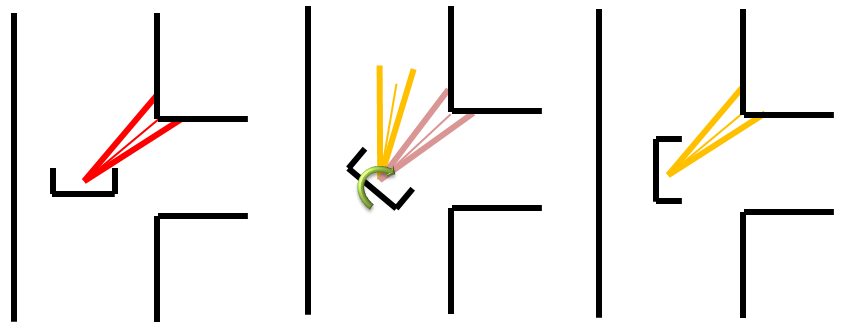

In order to drive straight and centered in a corridor, a function is created which aligns and centers the robot w.r.t. the corridor walls. The function consists of two parts; a part that uses two beam bundles on each side of the robot and compares their length in order to center the robot (Figure left), and a part that uses three small beam bundles on one side of the robot to align it with the walls. A safety check is implemented to make sure the robot ignores cracks in the corridor walls. A proportional controller is implemented for correcting the robot’s misalignment, this means that the more the robot is off-center or misaligned, the larger the control action will be to correct this.

Implementation in corridor challenge: When implementing the function for the corridor challenge, two issues arose: the proportional control could lead to problems when the system stability was compromised. Extreme high gain would result easily into a crash. A second issue was compatibility with the rotate function; during rotation at the corner, the alignment function interfered and this resulted into unwanted effects. A reason for this could be our main algorithm that consisted of a switch-case construction that was far from optimal. During the corridor challenge the align-part was disabled in order to complete the challenge.

- Recognize junction

The robot is able to robustly distinguish cracks from junction. If the laser on the left or right of the robot detects a large distance, a bundle of lasers around the perpendicular laserbeam is analyzed. The angle between the first and last bundle with a large distance is calculated and this angle, combined with the corresponding distances at the outer beams of the bundle, is used to calculate the width of the crack (or junction).

- [math]\displaystyle{ width\_crack = sin(angle\_ crack/2)*distance[outer\_ bundle]; }[/math]

This width is now used to distinguish small cracks from actual junctions in the corridor.

- Take a turn:

When a decision is made to turn left or right some skills will be performed. First PICO is positioned in the middle of the junction, with the skill: junction_mid. Then PICO will rotate on his position around his axis with the function rotate and after this PICO will drive forward until it is in the corridor. When two walls are detected next to him the center function will take over and will drive further until the exit is detected. These different skills are described below.

Skills

- Center at junction

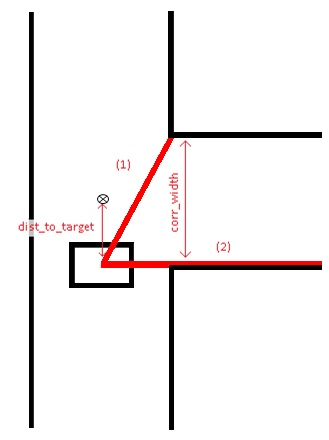

When a junction is detected and the decision has been made to enter a specific corridor, the function ‘junction_mid’ is started. The goal of this function is to stand still at the exact middle (target) of the corridor which is about to be entered. To find this exact middle, the beam to the opposing corner of the entrance is measured (1) as soon as beam (2) passes the corner. Beam (1) can then be used to compute the width of the corridor (corr_width) and therefore, the middle of the corridor is also known. Junction_mid ends when it has reached the half width of the corridor (target).

- Rotate

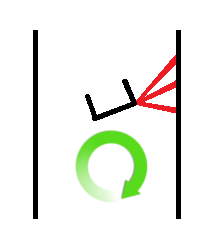

The rotation for the corridor challenge is based on the form of the corner of the wall. As example the right rotation will be used as explanation. First the corner is detected through analyzing the laser bundle at the upper right quarter of PICO, the shortest vector bundle is used as reference for the corner. For a 90 degrees rotation it can be calculated which vector bundle is needed at the left side of PICO. PICO will rotate until the vector bundle at his left side is equal to the reference vector bundle. See the rotation skill figure below.

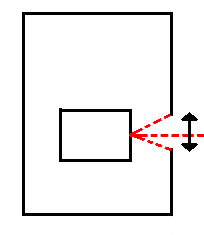

- Detect exit

This function is constantly checking if the exit of the maze is reached. The LRF data is divided into ten bundles and if all of these bundles each show an average distance larger than 1.5 m, the exit of the maze is reached. In this case, the robot stops and the program is ended.

Conclusion

Pico executed the Corridor Challenge as expected, however this was most certainly not the most efficient way:

- Centering Pico between two walls was not robust with gaps in the walls;

- Cornering was not efficient: Pico did not follow the apex of the corner;

- Pico was not programmed to drive at maximum (driving/angular) velocity;

- about 90% of the data was thrown away;

- The code's structure was insufficient.

If Pico had to do the Corridor Challenge again, certain changes would be made to the code:

- The entire code structure has to be revised;

- To make navigating the maze more robust, all data has to be used in stead of 10%;

- However this raw data has to be modified in order to make it applicable;

- The output of the modified raw data is very different from the LRF output, therefore most of the skills/tasks have to be rewritten;

- To be able to compete with other groups, Pico needs to follow the apex.

See the video of the corridor challenge!

Maze challenge

Approach

Development

Main

main(){

while ( io.ok ){

communicate(); //Receive data

detect_event(); //Recognize events

worldmodel(); //Update the worldmodel

decision_making(); //Decides which task needs to be executed

exec_decision(); //Execute decision

worldmodel(); //Memorize taken decision

}

}

Tasks

Environment Detection

- Hough Transform

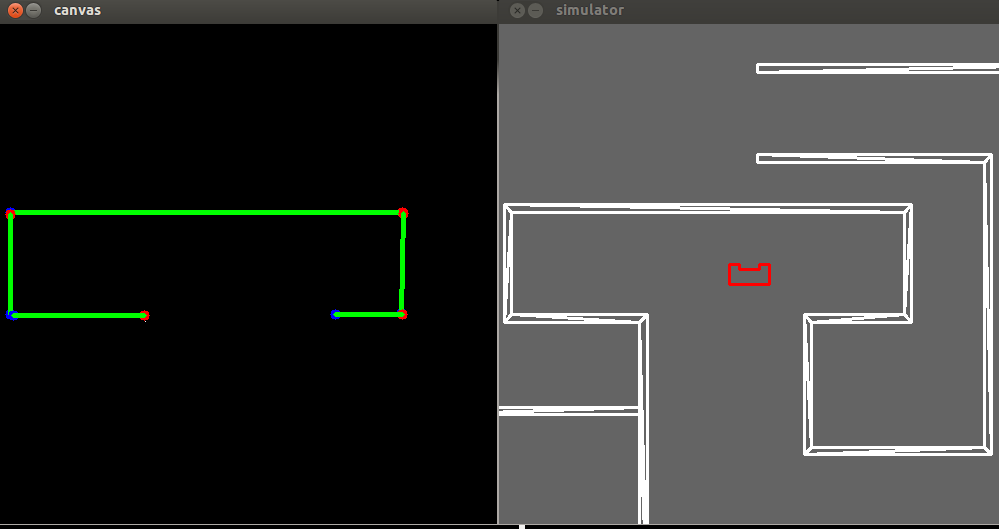

In order to interpret the data obtained from the LRF, the laser data is processed using a hough transform technique. This transforms the data points to lines, so the robot can interpret these lines as walls of the maze. The algorithm used to perform this transformation, is obtained from the OpenCV library. The Probibalistic Hough transform function is used to draw finite lines, which are described using cartesian coordinates of their extremes.

- [math]\displaystyle{ [x_0, \quad y_0, \quad x_1, \quad y_1] = HoughLinesP(LRF_{data}) }[/math]

Unfortunately, the output of this algorithm describes one wall in the maze with multiple lines. This is a redundant and (for some other functions) unwanted phenomenon. In order to make sure that all visible walls are always represented by a single line, a filtering algorithm is used.

- Hough Lines Filter

As mentioned, the output of OpenCV often consists of multiple redundant lines. To easily distinguish which lines are similar (and possibly represent the same wall), the cartesian coordinates of the lines are transformed to polar coordinates. If the radius to a line and the angle to a line are similar, it is assumed that the lines represent the same wall. All similar lines but the longest are thrown away. So the output has the same number of lines as the amount of visible walls.

- Detect Junction

The filtered Hough lines are used to detect the different types of junctions, e.g. T-junction, dead end or right turn. Vertexes of the lines are used to distinguish the different junctions:

- Corridor, No vertexes.

- Dead end, Two connected vertexes and pico is in-between the x-locations of the points.

- Left turn, One vertex to the right of pico, pico is in between the x-locations of the horizontal line.

- Right turn, One vertex to the left of pico, pico is in between the x-locations of the horizontal line.

- T-junction left, One vertex to the left of pico, both connected lines are to the left of pico.

- T-junction right, One vertex to the right of pico, both connected lines are to the right of pico.

- T-junction, No vertexes and Pico is able to detect a horizontal line in front.

- Crossing, Two vertexes, one to the right and one to the left of Pico that are not connected.

Skills

- Alignment and centering in the corridors

Since the method of detection has changed during the project, the align/center function needs to be addapted aswel. The function now uses the coordinates of the begin- and endpoints of the walls represented by lines from the Houghtransformation. The function structure is described as follows:

void center_position(all_data &Robot, vector lines, int side, bool center_on_off)

- Determine which lines are parralel to the Robot

- Determine whether they are located left or right of the Robot

Centering:

- Determine the distance perpendicular to the walls/side of the Robot (left/right)

eg.: distance left: dist_l = x0_l + y0_l/(y0_l+y1_l) * (x1_l-x0_l); , where x0,y0 and x1,y1 are the coordinates of the wall start/end point respectively

- Assign according velocity v_y to the Robot. A proportional gain is used to correct faster for larger errors.

Alignment:

- Determine the difference in x-coordinate of the begin- and endpoint of the hough line.

- Assign according velocity v_t to the Robot. A proportional gain (K) is used to correct faster for larger errors.

eg.: allign on left wall: if(x0 < x1) -> v_t = -K*abs(x1-x0) elseif(x0>x1) -> v_t = K*abs(x1-x0)

Conclusion

Appendix

Code

Scheduler

A code snippet of the main function that is used as a scheduler can be found here.

Hough lines filter

As mentioned, a filter is used to exclude redundant duplicate lines from the OpenCV hough transform library. The code snippet of the filter can be found here.

Setpoints Rotation

A code snippet of the piece of code that is used to create the parametric setpoints used for the rotation of the robot can be found here.[1].