PRE2022 3 Group5: Difference between revisions

(start of state-of-art and relevant techniques) Tag: 2017 source edit |

(→Simulation: Results: slight adjustments to the simulation conclusion) |

||

| (159 intermediate revisions by 6 users not shown) | |||

| Line 5: | Line 5: | ||

!Role | !Role | ||

|- | |- | ||

|Vincent van Haaren|| | |Vincent van Haaren||1626736 | ||

|Human Interaction Specialist | |Human Interaction Specialist | ||

|- | |- | ||

| Line 24: | Line 24: | ||

|} | |} | ||

<br /> | |||

==Introduction== | ==Introduction== | ||

In this project | In this project we have been allowed to pursue a self-defined project. Of course, the focus should be on USE; user, society, and Enterprise. Our chosen project is the design of a product. Taking inspiration from our personal experiences we’ve chosen to find a solution to solve the navigation problems we encounter in the campus buildings in the TU/e. After some research about the topic and contacting TU/e Real Estate department we found out that guidance robots for people with visual impairment had demand. This was thus chosen as our topic. More specifically defined, the problem statement is: ‘Visually impaired people have ineffective means of navigating through the, at times, confusing pathways of campus buildings.’. When researching state-of-the-art electronic travel aids, we found 3 distinct solutions: Robotic Navigation Aids, Smartphone solutions, wearable attachments. The pros and cons are described in the table below: | ||

{| class="wikitable" | {| class="wikitable" | ||

|+ | |+ | ||

| Line 46: | Line 47: | ||

|Robotic guide dog/mobile robot | |Robotic guide dog/mobile robot | ||

|The system gives room for larger hardware, as it does not require a user to carry it | |The system gives room for larger hardware, as it does not require a user to carry it | ||

|Complicated mechanicals while | |Complicated mechanicals while manoeuvering through stairs and terrain | ||

|- | |- | ||

|Robotic Navigation Aids | |Robotic Navigation Aids | ||

|Robotic Wheelchair | |Robotic Wheelchair | ||

|Suitable for the elderly and people who have a physical limitation provides navigation and mobility assistance for elderly visually impaired who cannot walk on their own, multi-handicapped, or people who have more than one disabling condition | |Suitable for the elderly and people who have a physical limitation provides navigation and mobility assistance for elderly visually impaired who cannot walk on their own, multi-handicapped, or people who have more than one disabling condition | ||

|Safety remains an issue as user mobility fully depends on the robotic wheelchair navigation, road-crossing and stair climbing are difficult circumstances where the reliability of the wheelchair is of extreme necessity | |Safety remains an issue as user mobility fully depends on the robotic wheelchair navigation, road-crossing and stair climbing are difficult circumstances where the reliability of the wheelchair is of extreme necessity | ||

|- | |- | ||

|Smartphone solutions | |Smartphone solutions | ||

| Line 76: | Line 77: | ||

These devices are intrusive as they cover ears and involve the use of hands users are burdened with the system’s weight. | These devices are intrusive as they cover ears and involve the use of hands users are burdened with the system’s weight. | ||

Requires | Requires an extended period of training | ||

|} | |} | ||

Sourced from https:// | Sourced from: <ref>Romlay, M. R. M., Toha, S. F., Ibrahim, A. M., & Venkat, I. (2021). Methodologies and evaluation of electronic travel aids for the visually impaired people: a review. ''Bulletin of Electrical Engineering and Informatics'', ''10''(3), 1747–1758. <nowiki>https://doi.org/10.11591/eei.v10i3.3055</nowiki></ref> | ||

Furthermore, another state-of-the-art solution for guiding devices was found; a device which would use electronic waypoints installed in the building, to localise the user and relay directions and information about the surroundings<ref>(1) (PDF) Guiding visually impaired people in the exhibition (researchgate.net)</ref>. | Furthermore, another state-of-the-art solution for guiding devices was found; a device which would use electronic waypoints installed in the building, to localise the user and relay directions and information about the surroundings<ref>(1) (PDF) Guiding visually impaired people in the exhibition (researchgate.net)</ref>. | ||

A previous attempt was made at the TU/e (our case study) to use this method. But because it required infrastructure to be created in all the buildings in which it would work, it was never implemented. Therefore we’ve decided to discard all solutions which would require such infrastructure. | A previous attempt was made at the TU/e (our case study) to use this method. But because it required infrastructure to be created in all the buildings in which it would work, it was never implemented. Therefore, we’ve decided to discard all solutions which would require such infrastructure. | ||

Wearable attachments have been discarded as it is inherently invasive meaning the user will have to equip it themselves. Furthermore larger attachments with many sensors are made impossible due to weight-limits, and lastly wearing such a device in extended meetings is impractical. Any such device will require some prior knowledge on how to operate it. Due to all these reasons we’ve chosen not to pursue wearable attachments. | Wearable attachments have been discarded as it is inherently invasive meaning the user will have to equip it themselves. Furthermore, larger attachments with many sensors are made impossible due to weight-limits, and lastly wearing such a device in extended meetings is impractical. Any such device will require some prior knowledge on how to operate it. Due to all these reasons, we’ve chosen not to pursue wearable attachments. | ||

We’ve decided against smartphone solutions because it would be difficult to make a one-size-fits-all solution due to differing phones and sensors. A slightly more | We’ve decided against smartphone solutions because it would be difficult to make a one-size-fits-all solution due to differing phones and sensors. A slightly more biased reason is that half of our group members are not at all adept at creating such applications and have no interest in the field. We also worried that we would struggle creating a practical app due to the limitations of the phone hardware. | ||

Robotic wheelchair was decided against due to its invasive nature and concerns for the user’s autonomy. Furthermore this solution would be very bulky which makes it unsuited for crowded spaces. The user base which will most likely consist of furthermore well-abled students which do not need such support and might feel uncomfortable using such a device. | Robotic wheelchair was decided against due to its invasive nature and concerns for the user’s autonomy. Furthermore, this solution would be very bulky which makes it unsuited for crowded spaces. The user base which will most likely consist of furthermore well-abled students which do not need such support and might feel uncomfortable using such a device. | ||

A Smart Cane is not well-suited to guide the user due to the small form factor and weight requirement which would make inside-out localisation difficult. | A Smart Cane is not well-suited to guide the user due to the small form factor and weight requirement which would make inside-out localisation difficult. | ||

The mobile platform guide robot has a few problems besides its price. The most important one is that it has trouble navigating stairs and rough terrain. Luckily the robot will (for now) only be operating indoors in TU/e buildings. The presented use case of the TU/e campus | The mobile platform guide robot has a few problems besides its price. The most important one is that it has trouble navigating stairs and rough terrain. Luckily, the robot will (for now) only be operating indoors in TU/e buildings. The presented use case of the TU/e campus has walk bridges connecting buildings and elevators in (almost) all buildings which mitigates most of the solution’s downsides. These factors make it the perfect place to implement such a guidance robot. | ||

In summary we chose a robotic guide due to its user accessibility and potential for future improvements. It is a good way for people (with visual impairment or not) to be navigated through buildings. | In summary we chose a robotic guide due to its user accessibility and potential for future improvements. It is a good way for people (with visual impairment or not) to be navigated through buildings. | ||

==State of the art== | |||

It is commonly known that the most common tools used by visually impaired people are the white cane and the guide dog. The white cane is used to navigate and identify. With its help these people get tactile information about their environment, allowing the visually impaired to explore their surroundings and detect obstacles. However, the use of this can be cumbersome, as it can get stuck in cracks, or tiny spaces. Its efficiency is also limited in the event of bad weather conditions or a crowd.<ref>''What are the problems that the visually impaired face with the white cane?'' (n.d.). Quora. <nowiki>https://www.quora.com/What-are-the-problems-that-the-visually-impaired-face-with-the-white-cane</nowiki></ref> The guide dog on the other hand can guide the user through familiar paths, while also avoiding obstacles. They can also assist with locating steps, curbs and even elevator buttons. They can also keep their user centred, when crossing sidewalks for example.<ref>Healthdirect Australia. (n.d.). ''Guide dogs''. healthdirect. <nowiki>https://www.healthdirect.gov.au/guide-dogs#:~:text=Guide%20dogs%20help%20people%20who,city%20centres%20to%20quiet%20parks</nowiki>.</ref><ref>''What A Guide Dog Does''. (n.d.). Guide Dogs Site. <nowiki>https://www.guidedogs.org.uk/getting-support/guide-dogs/what-a-guide-dog-does/</nowiki></ref> There are a couple of issues with guide dogs however. They can only work for 6 to 8 years and have a very high cost of training.<ref>''Guide Dogs Vs. White Canes: The Comprehensive Comparison – Clovernook''. (2020, 18 September). <nowiki>https://clovernook.org/2020/09/18/guide-dogs-vs-white-canes-the-comprehensive-comparison/</nowiki></ref> They also require constant work on maintaining that training. The dog can also get sick. Another potential issue is bystanders that pet or take interest in the dog while it is working, which is a detriment to the handler.<ref>''Guide Dog Etiquette: What you should and shouldn’t do – Clovernook''. (2020, 10 September). <nowiki>https://clovernook.org/2020/09/10/guide-dog-etiquette/</nowiki></ref> | |||

None of these tools can efficiently assist the person in navigating to a specific landmark in an unknown environment.<ref>Guide Dogs for the Blind. (2020, 1 July). ''Guide Dog Training''. <nowiki>https://www.guidedogs.com/meet-gdb/dog-programs/guide-dog-training#:~:text=Guide%20dogs%20take%20their%20cues,they%20are%20at%20all%20times</nowiki>.</ref> That is why currently a human assistant is preferred/needed to perform such a task, for example when walking in a museum.<ref>Guide Dogs for the Blind. (2020b, July 1). ''Guide Dog Training''. <nowiki>https://www.guidedogs.com/meet-gdb/dog-programs/guide-dog-training#:~:text=Guide%20dogs%20take%20their%20cues,they%20are%20at%20all%20times</nowiki>.</ref> In regards to the technological means there is currently no robot that is capable of efficiently performing such a task, especially is the environment is a crowded building. However, there are multiple robots that have implemented parts of this function. In the following paragraph we have divided them into their own sections for ease of reading. | |||

===Tour-guide robots=== | |||

We first begin with the tour-guide robots. These robots are used in places such as museums, university campuses, workplaces and more. The objective of these robots is to guide a user to a destination. Once at the destination these robots will most often relay information about the object, exhibition or room of the destination. In terms of implementation, these robots use a predefined map of the environment, where digital beacons are placed to mark the landmarks and points of interest. These robots also often make use of ways to detect and avoid obstacles such as using laser scanners (such as LiDAR), RGB cameras, kinetic cameras or sonars. This research paper <ref name="Review of Autonomous Campus and Tour Guiding Robots with Navigation Techniques"> Debajyoti Bosea, Karthi Mohanb, Meera CSc, Monika Yadavc and Devender K. Saini, Review of Autonomous Campus and Tour Guiding Robots with Navigation Techniques (2021), https://www.tandfonline.com/doi/epdf/10.1080/14484846.2021.2023266?needAccess=true&role=button </ref> goes in depth on the advances in this field in the recent 20 years, the most notable of which are "Cate", "Konard and Suse". As our goal is to guide visually impaired people throughout the TU/e campus, this field of robotics is of upmost interest for the navigation system of a guidance robot. | |||

====Aid technology for the visually impaired==== | |||

This section is split into two. First, we cover guidance robots for the visually impaired, after which we cover other technological aids that have been created for this user group. | |||

=====Guidance robots===== | |||

Guidance robots for the visually impaired are very similar to the tour guide robots. They often use much the same technology to navigate through the environment (predefined map with landmarks and obstacle detection and avoidance). What differentiates these robots from the tour-guide robots is the adaptation of the shape and functionality of the robots to better suit the needs of the visually impaired. The robots have handles, or leashes, which the visually impaired can hold, much the same as a guide dog or a white cane. As the user cannot see, the designs incorporate ways of communicating the intent of the robot to the user as well as ways of guiding the user around obstacles together with the robot. Examples of such designs are the Cabot<ref name="CaBot: Designing and Evaluating an Autonomous Navigation Robot for Blind People"> João Guerreiro, Daisuke Sato, Saki Asakawa, Huixu Dong, Kris M. Kitani, Chieko Asakawa, Designing and Evaluating an Autonomous Navigation Robot for Blind People (2019), https://dl.acm.org/doi/pdf/10.1145/3308561.3353771 </ref>- a suitcase shaped robot, that stands in front of the user. It uses a LiDAR to analyse its environment and incorporates haptic feedback to inform the user of its intended movement pattern. Another possible design is the quadruped robot guide dog<ref name="The Fuzzy Control Approach for a Quadruped Robot Guide Dog">https://link.springer.com/article/10.1007/s40815-020-01046-x utm_source=getftr&utm_medium=getftr&utm_campaign=getftr_pilot</ref>, which based on Spot could be used as a robotic guide dog, given some adjustments. Finally there is also this design of a portable indoor guide robot<ref name="Design of a Portable Indoor Guide Robot for Blind People">https://ieeexplore.ieee.org/document/9536077</ref>, which is a low-cost guidance robot, which also alerts the user of obstacles in the air. | |||

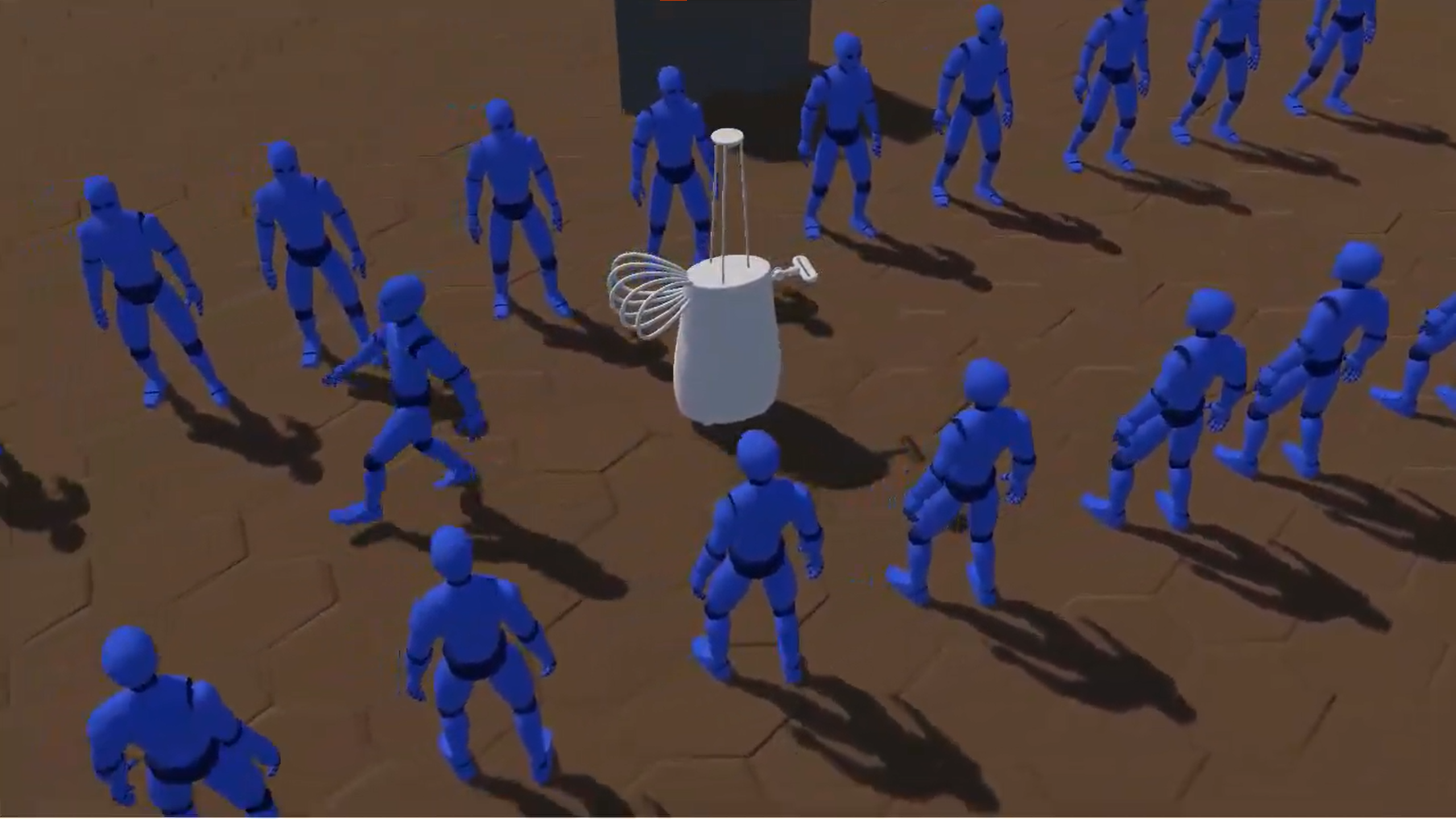

====Crowd-navigation robots==== | |||

As our design has the objective of guiding the user through a university campus it is reasonable to expect that there will be crowds of students at certain times of the day. For our design to be helpful, it needs to handle such situations in an efficient way. Thus, we took inspiration from the minor field of crowd-navigation of robotics. The goal of these robots is exactly that- enabling the robot to continue moving through a crowd, rather than freeze up, every time there is an obstacle in front of it. Some relevant research are these papers "Unfreezing the Robot: Navigation in Dense, Interacting Crowds"<ref name="Unfreezing the Robot: Navigation in Dense, Interacting Crowds">Unfreezing the Robot: Navigation in Dense, Interacting Crowds, Peter Trautman and Andreas Krause, 2010 https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=5654369&casa_token=3UPVOvK4kjwAAAAA:IjkyGh3f-uh_x-01jDPtspxLX--eSCBTrZEGTwtVEXc8hU9D2oLLEuOCTCz6OdGHWmy76bX3JA&tag=1</ref>, a robot that can navigate crowds with deep reinforcement learning<ref name="Crowd-Robot Interaction: Crowd-aware Robot Navigation with Attention-based Deep Reinforcement Learning">Crowd-Robot Interaction: Crowd-aware Robot Navigation with Attention-based Deep Reinforcement Learning, Changan Chen, Yuejiang Liu, Sven Kreiss and Alexandre Alahi, 2019, https://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=8794134&casa_token=neBCeEpBndIAAAAA:wZuGoZYF-YCscI-kJGi5ljIIGkUFpzejSTaxySxytUbIUKeV4sUZze6lZN32gw2DmKwbw-G6ZA</ref>. | |||

==User scenarios== | |||

To get a better feeling of the problem, and the possible solutions two user scenarios are made that show the impact of the guide robot on visually impaired people that want to move through unknown crowded spaces. The design mentioned in these stories are both not what we ended up making, but the intended goal is the same; these stories and the solution we ended up making both try to expand the navigational tools a guidance robot has in crowded spaces. It is important to note that some parts of the robot here described fall out of the scope of the exact problem solved. | |||

===Physical contact through crowded spaces=== | |||

Jack is partially sighted and can see only a small part of what is in front of him. He has recently been helping out fellow students with their field tests which tests a robot guide. Last month he worked with a robot called Visior which helps steer him through his surroundings. Visior is a robot which is inspired and shares its physical features with CaBot. | |||

When Jack used Visior to get to the library to pick up a print request he had to pass through a mediumly-crowded Atlas building since there was an event going on. This went mostly as expected; not too fast and having to stop semi-periodically because of people walking or stopping in front of Visior. The robot was strictly disallowed to purposely make physical contact with other humans. Jack knows this so he learned to step up in these situations and try to kindly ask for the people in front to make way. This used to happen less when he used his white cane since people would easily identify him and his needs. After Jack arrived at printing room in MetaForum he picked up his print request. He handily put his batch of paper on top of his guiding robot, so he didn’t have to carry it himself. | |||

On his way back he almost fell over his guiding robot when it suddenly stopped when a hurried student ran by. Luckily, he did not get hurt. When Jack came home after this errand he crashed on his couch after an exhausting trip of anticipating the robot’s quirky behaviour. | |||

The next day the researchers and developers of Visior came to ask about his experiences. Jack told them about his experience with Visior and their trip to the library. The developers thanked him for his feedback and started working on improving Visior. | |||

This week they came back with the now new and improved Visior-robot. This version has been installed with a softer exterior and now rides in front of Jack instead of by his side. The developers have made it capable of safely bumping into people without causing harm. They also made it capable of communicating with Jack if it thinks it might have to stop suddenly to make Jack a bit more at ease when traveling together. | |||

The next day Jack used it to again make a trip to the printing space in MetaForum to compare the experience. When passing through the crowded Atlas again (there somehow always seems to be an event there) he was pleasantly surprised. He found it easier to trust Visior now that it was able to communicate the points in the trip where Visior thought they might have to stop or bump into other pedestrians. For example, when they came across a slightly more crowded space Visior had guided Jack to walk alongside a flow of other pedestrians. Jack was made aware of the slightly unknown nature of their surroundings by Visior. Then when a student suddenly tried to cross their path without looking, Visior had unfortunately bumped into their side. Visior gradually slowed their pace down to a halt. Jack obviously felt the bump but was easily able to stay stable due to the prior warning and the less drastic decrease in speed. The student who was now naturally aware of the something moving in their blind spot immediately stepped out of the way and looked at Jack and Visior; seeing the sticker stating that Jack was visually impaired. Jack asked them if they were alright, to which they responded with saying they were fine after which they both went on their way. After picking up his print he went back home. On his way back he had to pass through the small bridge between MetaForum and Atlas in which a group of people were now talking, blocking a large part of the walking space. Visior guided Jack to a small traversable path open besides the group; taking the risk that the person there would slightly move and come onto their path. Visior and Jack could luckily squeeze by without any trouble and their way back home was further uneventful. | |||

When the Developers of Visior came back the next day to check up on him Jack told them the experience was leagues better then before. He told them he found walking with Visior less exhausting than it had been before and found the behaviour of it more human-like making it easier to work with. | |||

===Familiar guidance advantage=== | |||

Meet Mark from Croatia | |||

He is a Minor Student following Mathematics courses, and lives on (or near) campus | |||

Mark is severely near-sighted, being born with the condition he has never seen very well. Mark is optimistic but chaotic. | |||

Mark likes his study and likes playing piano. | |||

Notable details: | |||

Mark makes use of a white cane and audio-visual aids to assist with his near-sightedness. | |||

He just transferred to TU/e for a minor and doesn't know many people yet. Mark will only be here a short time for his minor. He has a service dog at home, but does not have the resources, time or connections to provide for it here, and so he left it at home. | |||

Indoors, mark finds it hard to use his cane because of crowded hallways and he dislikes apologizing when hitting someone with his stick or being an inconvenience to his fellow students. Mark can read and write English fine, but still feels the language barrier. | |||

In a world without our robot mark might have to navigate like this: | |||

Mark has just arrived for his 2nd day of lectures. And will be going to the wide lecture hall at Atlas -0.820. Mark again managed to walk to Atlas (as we will not be tackling exterior navigation), and uses his cane and experience to navigate the stairs and rotary door of Atlas, using it to determine the speed and size of the revolving element to get in, and using the cane to determine the position of the doors and opening<ref>https://youtu.be/mh5L3l_7FqE</ref>. | |||

Once inside, he is greeted by a fellow student who has noticed him navigating the door. Mark had already started concealing the use of his cane, as he doesn't like the attention and so the university staff didn't notice him. Luckily, his fellow student is more than willing to help him get to his lecture hall. Unfortunately, the student is not well versed in guiding visually impaired around, and it has gotten busy with students changing rooms. | |||

Mark is dragged along to the lecture hall by his fellow student, bumping into other students who don't notice he cannot see them, as his guider is hastily pulling him past the students. Mark almost loses his balance when his guide slips past some other students, narrowly avoiding the trashcan while dragging mark by his arm. Mark didn't see the trashcan, which is not at eye level, and collides with the metal frame while trying to copy the movement of his guider to dodge the other students. He is luckily unharmed, and manages to follow his guide again, until he is finally able to sit in the lecture hall, ready to listen for another day. | |||

The next day a student sees Mark struggling with the door and shows Mark a guide robot. The robot has the task of getting mark to the lecture hall Mark needs to be. It starts moving and communicates its intended speed and direction through the feedback in the handle. As a result, Mark can anticipate the route the robot will take, similar as to how a guide would apply force to Mark's hand to change direction. | |||

The robot has reached the crowd of students moving through the busy part of Atlas. Its primary objective is to get Mark through this, and even though many students notice the robot going through, it still uses clear audio indications to warn students it will be moving through and notifies Mark it goes into some alternate mode through the handle. Mark notices, and thus becomes alert as he also feels that the robot reduces the number of turns it makes, navigating through the crowd in the most straightforward route it can take. Mark likes this, it is making it easy for him to follow it, and also for others to avoid them. | |||

Still, a sleepy student bumps into the robot as it is crossing. Luckily the robot is designed to contact other students, and its rounded shape, enclosed wheels (or other moving parts) and softened bumpers prevent harm. The robot does however slightly reduce its pace and makes an audible noise to let the sleepy student know it touched the robot too hard. Mark also notices the collision, partially because the bump makes the robot shake a little and loose a bit of pace, but mainly because his handle clearly and alarmingly notifies him, Mark also knows the robot will still continue, as the feedback of the handle indicates to him that it is not stopping. | |||

After the robot gets through the crowd, it makes it to the lecture hall. It parks just in front of the door and tells mark to extend his free hand slightly above hip level, telling him they arrived at a closed door that opens towards them swinging to his right, similarly to how a guide would, so mark can grab the door handle, and with support of the robot open the door. The robot proceeds mark slowly into the space, it goes a bit too fast though, and mark applies force to the handle, pulling it slightly in his direction. The robot notices this and waits for Mark. | |||

After they enter the lecture hall, the robot asks the lecturer to guide mark to an empty seat (and may provide instructions on how to do so). When mark is seated, the robot returns to its spot near the entrance, waiting for the next person. | |||

==Problem statement== | |||

The previous problem statement was quickly found to be too broad. In this research about state-of-the-art it was found that the problem statement consists out of a plethora of sub problems which all have to work in tandem to create a functional solution. For this reason, it is important to scope the problem as much as possible to create a manageable project. Throughout research on the topic of guidance robots the following problems were identified: | |||

*Localization of the guide | |||

*Identification of obstacles or other persons | |||

*Navigation in sparse crowds | |||

*Navigation in dense crowds | |||

*Overarching strategic planning (e.g. navigating between multiple floors or buildings) | |||

*Interaction with infrastructure (e.g. Doors, elevators, stairs, etc.) | |||

*Effective communication with the user (e.g. user being able to set a goal for the guide) | |||

We decided to focus on ‘Navigation of guidance robots in dense crowds on TU/e campus’. This was chosen because for navigation on campus such a ‘skill’ (an ability the guide can perform) is necessary. Typical scenarios in which such a skill would be useful for a typical student would be during on campus events, navigation in and out of crowded lecture rooms, or simply a crowded bridge or hallway. Besides its necessity it is also an active field of study without a clear final solution yet<ref name=":3">Mavrogiannis, C., Baldini, F., Wang, A., Zhao, D., Trautman, P., Steinfeld, A., & Oh, J. (2021). Core challenges of social robot navigation: A survey. ''arXiv preprint arXiv:2103.05668''.</ref>. Mavrogiannis et al.<ref name=":3" /> defines the task of social navigation as ‘to efficiently reach a goal while abiding by social rules/norms.’. | |||

A reformulation of our problem statement thus results in the following research question: ‘How should robots socially navigate through crowded pedestrian spaces while guiding visual impaired users?’ | |||

To work on this problem, it is assumed the remaining functions of the previous list are assumed to be working. | |||

==='''Scoping the problem'''=== | |||

At this time the first meeting with Assistant professor César López was held. Mr. López is part of the control systems technology group of the TUE and focusses on designing navigation and control algorithms for robots operating in a semi-open world. In our meeting the most important recommendation was that the navigation should be split up even further and a more defined crowd should be used to define the guide’s behaviour. He laid out that different crowds have different qualities. These crowds can roughly be split up into chaotic crowds; where there is no exact order and behaviour is less predictable (e.g., an airport where everyone needs to go in different directions), and structured crowds; where behaviour ''is'' predictable, such as crowds found walking in a hallway. The simplest structured crowd is one where all people have a unidirectional walking direction. This kind of behaviour can also be found in a paper from Helbing et al.<ref>Helbing, D., Buzna, L., Johansson, A., & Werner, T. (2005). Self-Organized Pedestrian Crowd Dynamics: Experiments, Simulations, and Design Solutions. ''Transportation Science'', ''39''(1), 1–24. <nowiki>https://doi.org/10.1287/trsc.1040.0108</nowiki></ref> which amongst other things describes crowd dynamics. The same paper also describes how a crowd with only 2 opposing walking directions self-organizes to two side-by-side opposing ‘streams’ of people. | |||

López then expanded on this finding by saying that the robot, in this crowd, could roughly be in 3 distinct scenarios': The robot could walk along a unidirectional crowd, it could walk in the opposite direction of a unidirectional crowds, or it could walk perpendicular to the unidirectional crowd. All of these have an application when navigating the university. López recommended that our research should be focused on only 1 of these scenarios since they all need different behavioural models unless a general navigation method was found. | |||

To summarize, it can be seen that for the guide to efficiently navigate in tight spaces, like hallways or in a lesser extend doorways, requires it to be able to navigate dense crowds which behave in a unidirectional manner. In navigating such a crowd, different approaches can be taken depending on the walking direction of the crowd and the guide. | |||

On López’s recommendation, it was decided to narrow the behavioural research down to only walking alongside a unidirectional crowd since this was the most standard case. | |||

To conclude this section the final research question is defined as ‘How should robots socially navigate through unidirectional pedestrian crowds while guiding visual impaired users?’.<br /> | |||

==USE analysis of the crowd navigation technology== | |||

This section will discuss the relevance and the impact of a safe crowd-navigation guidance robot, on users, society at large, and enterprises. | |||

===Users=== | |||

The robot has a number of possible users, but for this design there are two types distinguished in this design: | |||

*The visually impaired handler of the robot | |||

*The other persons participating in the crowd | |||

In the Netherlands around 2.7% of the population has severe vision loss, including blindness<ref>Country - The International Agency for the Prevention of Blindness (iapb.org)</ref>. This is over 400 thousand people, who do not know which route to walk in a new environment, where only a room number is given. There are aids such as a guide dog or cane, but those make sure blind people do not collide with the environment instead of guiding to an unknown location in a new surrounding. So, a device that guides those visually impaired people to a new location they have never been on campus, such as, meeting room Metaforum 5.199 is needed. To guide them to this meeting room a navigation is needed through crowds. | |||

As mentioned, modern robots have a freezing problem when walking to crowds, which is not optimal when walking with the sometimes-dense crowds on the TU/e campus. That is why nudging and sometime bumping is needed sometimes. The challenge here is to guide the handler as smoothly as possible while sometimes nudging and bumping with third persons. | |||

As the plan was to design a physical robot with inspiration taken from the CaBot, a lot of inspiration is taken from their user research for visually impaired people. On top of that research has been done in guide dogs and their ways of guiding. | |||

For third persons to the robot and handlers research has been done mainly focused on the touching and nudging aspect of the robot. This to see what reactions a touching robot may elicit, the safety of this concept, and the ethics of robotic touch. | |||

Secondary users include institutions that provide the robot for visually impaired people to navigate through their buildings. These users include, universities, government buildings, shopping malls, offices or museums. | |||

===Society=== | |||

As mentioned above, 2.7% of the population severe from vision loss, however there are many more benefits from a robot that can safely and quickly navigate through a crowd. Any robot that has a mobile function in society, will at some point encounter a crowd. Whether that is a dense or sparse crowd, or simply people blocking an entry or hallway. Consider robots that work in social service such as a restaurant, delivery robots or even guide robots for others than visually impaired people, for example at museums or shopping malls. | |||

For these robots, it is important that they can safely traverse through crowds in the quickest way possible. The solution investigated and presented here, is a good step in the right direction. Of course, each of these robots would need a different design in order to properly execute its function, but the strength lies in the social algorithm where the robot moves through a crowd in a different way than robots do now. | |||

Specifically for navigating visually impaired people, it helps with their accessibility and inclusivity in society. Implementing a robot such as this, will allow them to be a more integral part of society without having to rely on other people. | |||

===Enterprise=== | |||

For enterprises that might employ these robots there are two advantages. The use of the robot will enable visually impaired customers to have better access to any services the companies might provide. In addition, they will have a competitive advantage over competitors that do not provide such a robot or such a service. For example, a shopping mall would improve their accessibility which would turn into more customers, whereas government buildings improve general satisfaction. | |||

Specifically for universities such as the TU/e, next to attracting more students, it improves their public image that shows the effort to make a higher education possible and easier for all people. An advantage over other solutions such as a human guide, is that no new employees need to be trained. No big infrastructure changes, such as extra cameras or sensors throughout the building are needed for another type of robot or navigator. And lastly, there is no issue of a failing connection with for example a smartphone. | |||

==Project Requirements, Preferences, and Constraints== | |||

===Creating RPC criteria=== | |||

===='''Setting requirements'''==== | |||

The most important thing in building a robot operating in public spaces is to make it complete its tasks in a safe manner; not harming bystanders or the user themselves. Most hazards in robot-human interactions (or vice versa) in pedestrian spaces are derived from physical contact<ref name=":1">Salvini, P., Paez-Granados, D. & Billard, A. Safety Concerns Emerging from Robots Navigating in Crowded Pedestrian Areas. ''Int J of Soc Robotics'' 14, 441–462 (2022). <nowiki>https://doi.org/10.1007/s12369-021-00796-4</nowiki></ref>. This problem is even more present when working in crowded spaces where physical contact is impractical to avoid or cannot be avoided. Therefore, the robot has to be made physically safe; typical touch, swipes, and collisions are made non-hazardous. This term ‘physically safe’ will be abbreviated to ‘touch safe’ to make its meaning more apparent. | |||

If the robot somehow exhibits unsafe behaviour the user should be able to easily stop the robot with an emergency stop. Because the robot is able to make physical contact and apply substantial force, it becomes even more paramount that rogue behaviour is easily stopped if it occurs. | |||

When interacting with the user it should make them feel safe and thus allow trust in the robot. If the user does not feel safe, they cannot trust the robot and might become unnecessarily anxious or stressed. With as result that the user may avoid its services. Besides this the users might display unpredictable or counter-productive behaviour, e.g., walking excessively slow, not following the robot, etc. To this end the robot should be able to communicate its intent to the user so that they won’t have to be on-edge all the time. | |||

For the robot to be viable in practice there are some restrictions like making the robot relatively cheap since the budget is not unlimited and competing solutions like human guides exist for a set price; too large of a price would make robot guides obsolete. Our use-case also has restrictions on infra-structural modifications to the campus building of the TU/e as a previous solution was rejected due to this reason; installing waypoints all over the buildings was too much of an investment. | |||

===='''Setting preferences'''==== | |||

The robot should not slow down its user when avoidable, so an average speed of 1 m/s (average walking speed visually impaired users<ref name=":2" />) would be a good goal. | |||

For the robot to reach its goal efficiently it should avoid stopping for people. Even more reasons to avoid stopping is to make the user able to walk a constant speed, requiring less mental strain on its user, as well as avoiding hazards which occur due to stopping in pedestrian spaces like surprising and hitting the person behind the user<ref name=":1" />. | |||

===='''Setting constraints'''==== | |||

For the robot to operate in our specified use case it should be able to navigate campus. This involves being able to navigate narrow walk bridges and the wide-open spaces with different walk routes. Such things as interaction with elevators or stairs will not be focused on this research. | |||

===<u>RPC-list</u>=== | |||

====Requirements==== | |||

*Safety | *Safety | ||

** Touch proof | **Touch proof | ||

** Does not harm the user | **Does not harm bystanders or the user | ||

** | **Installed emergency stop | ||

*User feedback/interaction | |||

**Should give feedback about intentions to user | |||

**Robot must be able to receive feedback and information from user | |||

**Handler should feel safe based on interaction with robot | |||

* User feedback/interaction | *Implementable | ||

** Should give feedback about intentions to user | **Relatively cheap | ||

** Robot must be able to receive feedback from user | **No infrastructural changes in buildings | ||

** Handler should feel safe based on interaction with robot | |||

====Preferences==== | |||

* Implementable | |||

** Relatively cheap | *1 m/s (3.6 km/h) walking speed should be reached<ref name=":2">CaBot: Designing and Evaluating an Autonomous Navigation Robot for Blind People (acm.org)</ref> | ||

** No | *Does not stop for people unnecessarily | ||

====Constraints==== | |||

*Environment (TU/e campus) | |||

**Narrow walk bridges/hallways | |||

**Big open spaces | |||

==The solutions== | |||

In this section the worked-out solution to the problem statement is given. The solution consists of a physical and a behavioural description of the robot. These two factors influence each other: The design has an impact on how the robot should behave while socially navigating through a crowd, while the way it navigates through a crowd makes the specific requirements of the design. These together give a clear answer to the research question on how the robot with this specific design should socially navigate through a unidirectional crowd while guiding visually impaired users. | |||

This chapter consist of a detailed explanation of the physical design of the robot. The robot is designed to adhere as closely as possible to the rpc-list. After the design is defined the corresponding behaviour will be defined using scenarios. These scenarios are used to explain the behaviour we would want to see and expect. In a broader sense, this should demonstrate how the method of navigation can be utilised to effectively and safely navigate through dense crowds. | |||

===Design=== | |||

In this chapter the design of the robot model is documented. With the design, main focusses are safety and communication of nudging to the visually impaired handler, and third persons. | |||

For the design of the robot the main inspiration is the CaBot<ref name="CaBot: Designing and Evaluating an Autonomous Navigation Robot for Blind People" />. This is basically a type of suitcase design (rectangular box with 4 motorized wheels), with in the rectangular box all its hardware. Interestingly, it also has a sensor on its handle for vision (higher perspective). This design is rather simple, and the easy flat terrain on the TU/e campus should be no problem for the wheels. The CaBot excels in guiding people to a new location but does not work through crowds. When looking at safety the body design has been altered for nudging and bumping into people. Also, the handle design has been revamped for better communication to user. | |||

=== | ====Handle design==== | ||

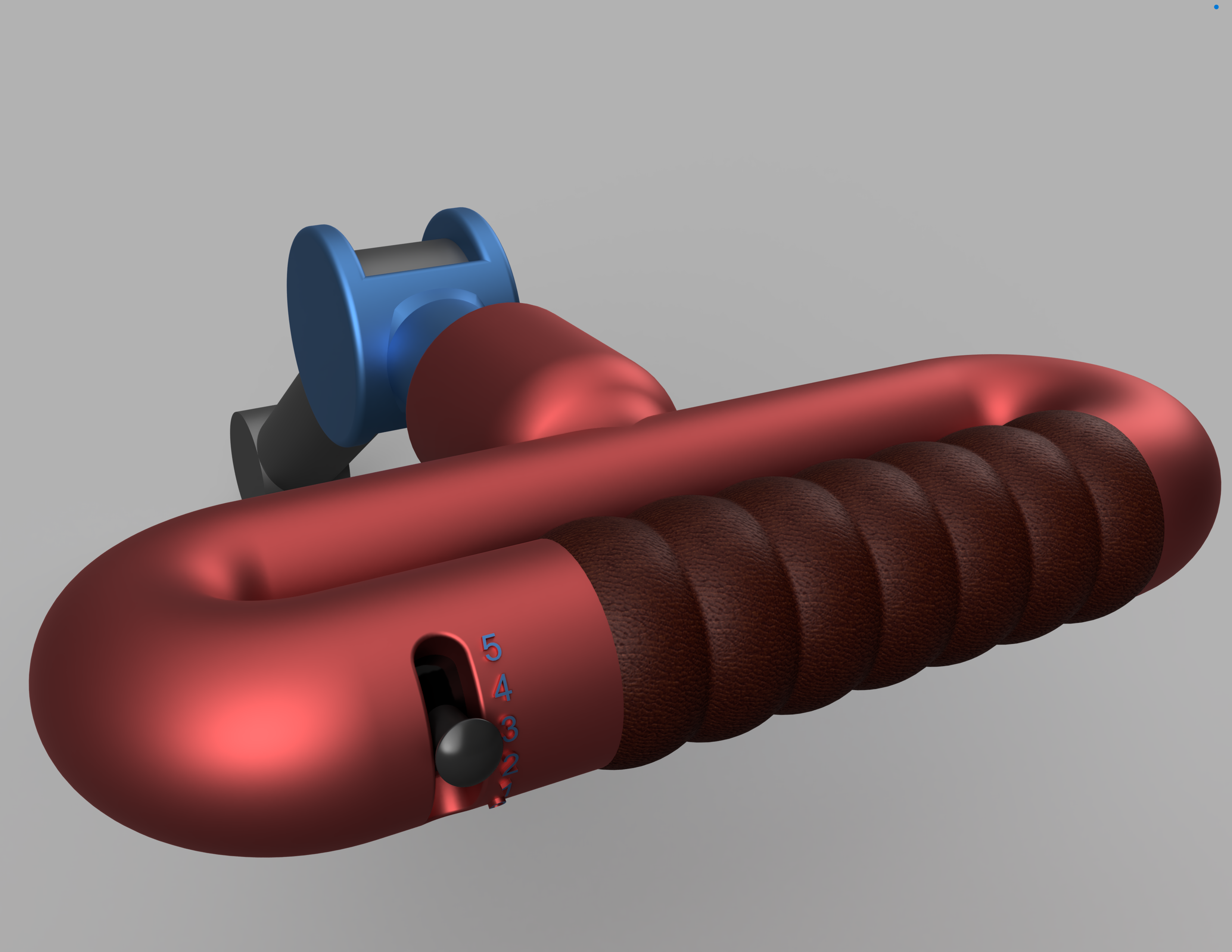

[[File:Guide arm front.png|thumb|Front view of the arm design of the guide robot to which the guided can grab on. The speed switch can be seen on the left. The settings are denoted using written numbers instead of braille because of limitations of the CAD software.]] | |||

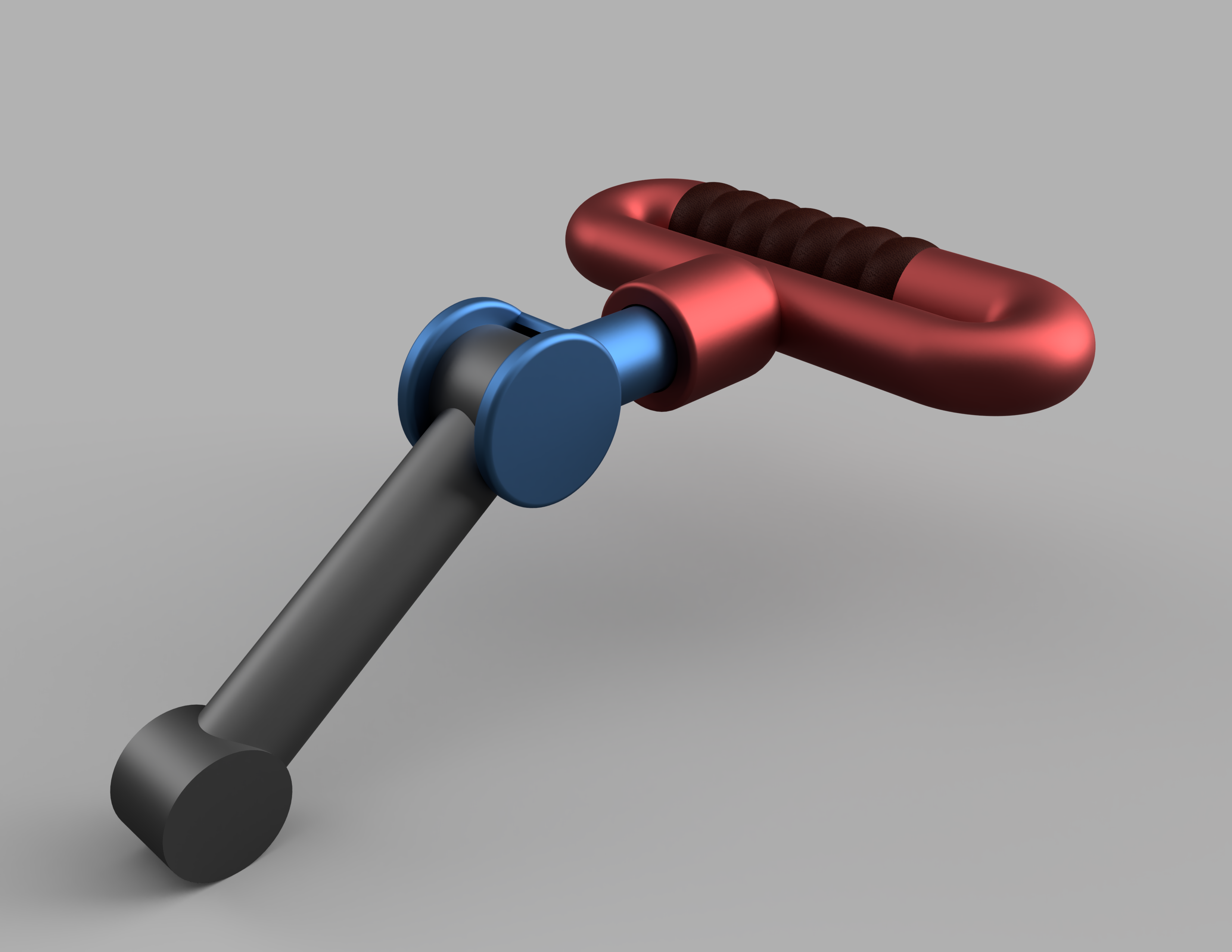

[[File:Guide arm side.png|alt=Back view of the arm design of the guide robot to which the guided can grab on. Interface utilities have not been added yet.|thumb|Back view of the arm design of the guide robot to which the guided can grab on. The upper arm, connecting to the hand-hold, has a suspension mechanism and a hinge.]] | |||

As the robots behaviour is focused on traversing through crowds of people, there is an important function also part of it. How to communicate this direction to its user? Any audible direction will quickly interfere with the sounds from the surroundings, which can result in missing the entire message or allow for confusion. Although a headset might allow for clearer communication, this is still not ideal. Therefore, the easiest way to provide feedback to the user is through the handle. The robot has a few functions that it needs to be able to communicate with the user or be able to be controlled by the user: | |||

*Speed | |||

* | **Setting a faster or slower speed | ||

** | **Communicating slowing down or accelerating | ||

** | **Emergency stop | ||

** | |||

== | *Direction | ||

**Turning left | |||

**Turning right | |||

All of these functions can be placed inside the handle, while designing for minimal strain on the user's active control. The average breadth of a male adult hand is 8.9 cm<ref>''ANTHROPOMETRY AND BIOMECHANICS''. (n.d.). <nowiki>https://msis.jsc.nasa.gov/sections/section03.htm</nowiki></ref>, which means that the handle needs to be big enough to allow people to hold on while also incorporating the different sensors and actuators. For white canes, the WHO<ref>WHO. (n.d.). ASSISTIVE PRODUCT SPECIFICATION FOR PROCUREMENT. At ''who.int''. <nowiki>https://www.who.int/docs/default-source/assistive-technology-2/aps/vision/aps24-white-canes-oc-use.pdf?sfvrsn=5993e0dc_2</nowiki></ref> has presented a draft product specification where the handle should have a diameter of 2.5cm. Which will be used for the handle of the robot as well. Since the robot can be seen as functioning similar to a guide dog, the handle will have a design similar to harnesses used for blind dogs, meaning a perpendicular, although not curved, handle that will stop in place if released.<ref>dog-harnesses-store.co.uk. (n.d.). ''Best Guide Dog Harnesses in UK for Mobility Assistance''. <nowiki>https://www.dog-harnesses-store.co.uk/guide-dog-harness-uk-c-101/#descSub</nowiki></ref> To be able to comfortable accommodate the controls and sensors described below, the total size of the handle will be 20 cm. | |||

The handle, which is connected to the robot, will provide automatic directional cues, without additional sensors or actuators. This will simplify the robot and act more similar to a guide dog. As for the matter of speed, there are three systems that would be implemented. The emergency stop, feedback about the acceleration and deceleration of the robot and the speed control of the user. The emergency stop can be a simple sensor in the handle that detects if the handle is currently being hold, if not, the robot will automatically stop moving and stay in place. The speed can be regulated via a switch-like control as visible on the CAD render on the right. When walking with a guide dog, the selected walking speed is about 1 m/s <ref name=":2" /> for visually impaired people, which means that with five settings, ranging from 0 m/s, 0.5 m/s, 0.75 m/s, 1.0 m/s and 1.25 m/s, the user can set their own speed preference. In order to give feedback about its current setting, the different numbers will be detailed in braille. Furthermore, changing settings will encounter some resistance and a feelable ‘click’ instead of being a smooth transition. The user can, at any times, use their thumb, or any other finger, to quickly check the position of the device and determine the speed setting. The ‘click’ provides extra security that the speed will not be accidentally adjusted without the user being aware of it. To this end, the settings will only affect the actual walking speed after a short delay to allow the user to have time to revert any changes. | |||

Lastly, the robot might, for whatever reason have to slow down while walking through the crowd. Either for obstacles, other people, or in order to go properly with the flow of the crowd. Since this falls outside the speed setting, the user must be made aware of the robots' actions. A simple piezo haptic actuator can do the trick. By placing it in the middle of the handle, it will be easily detected. A code for slowing down, for example a pulsating rhythm, and a code for speeding up, a continuous vibration, will convey the actions of the robot. Of course, this is in addition to the physical feel that the user has via the pull on the handle via the arm. However, because trust is so important in human-robot interactions, this is just additional feedback from the part of the robot to increase the confidence of the user when using the robot. | |||

<br /> | |||

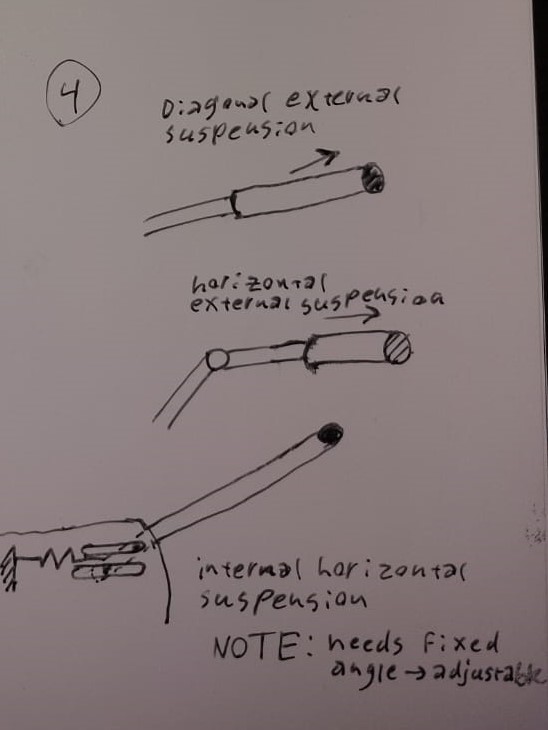

[[File:Arm design sketches.jpg|alt=3 sketches of different designs of an arm for a guidance robot|thumb|3 sketches of different designs of an arm for a guidance robot]] | |||

====Arm design==== | |||

Multiple designs were considered. The arm connects the handle to the body, it is important here that the handle height can be changed. One thing that was added in the name of safety was suspension, so that the movements of the robot would not jerk the arm of the guided if it were to suddenly change speeds, when for example bumping or nudging. Most design iterations went over on how to integrate the suspension. | |||

The first design was a straight pole from the robot body to the guided arm (as can be seen in the top sketch in the figure to the right). A problem we could see was that if the robot were to stop suddenly, it would push the arm slightly up instead of compressing the suspension. To solve this problem a joint was introduced in the middle of the arm (as can be seen in the middle sketch in the figure to the right). An alternative solution was to have the suspension only act horizontally and internalize it (as can be seen in the bottom sketch). This would allow the pole to have the same design as the first sketch without compromising on the suspension behaviour. Another plus would be that the pole would be marginally lighter due to this suspension being moved inwards. | |||

We have chosen for the second design as it had the intended suspension behaviour while remaining as simple as possible. This allows the mechanism to be constructed from mostly off-the-self parts, reducing the cost. | |||

====Body Design==== | |||

For the body three main designs were considered: A square, a cylindrical form, and a cylinder which changes diameter over its height. The square was immediately ruled out due to its sharp corners making it decidedly not touch safe. The more cylindrical shapes could more easily slide through public and had less chance to hit people hard on the front (it allows for a sliding motion instead of head-on collision). This left the choice between a normal cylinder, a cylinder wide at the bottom, and a cylinder wide at the top. | |||

A bottom-heavy design would help with balance; If the robot would bump it would hit at the lowest point, meaning more stability. However, it may surprise people when it hits as they might not notice the wide bottom. This is where the wide top outperforms, as it hits people around their waist/lower back area where collision can be more easily spotted. Furthermore, this is a more effective place to nudge people for them to get out the way (a lower hit might instead make people lift their leg instead of stepping out of the way). A draw back is that the robot is touched higher and more easily tips over. That is why in the design the best of both worlds is chosen. The body has a big diameter lower with a big bumper to not tip over, and has 'whiskers' of a soft, compressible foam material on top at the front to softly touch, or nudge people if they are in the way. Research has shown that touch by a robot elicits the same response by humans as touch by humans<ref>''Using contact-based inducement for efficient navigation in a congested environment''. (2015, August 1). IEEE Conference Publication | IEEE Xplore. <nowiki>https://ieeexplore.ieee.org/document/7333673</nowiki></ref>. The material for the rest of the body is a plastic as to make it not too hard. | |||

[[File:Guide full.png|center|thumb|This cad design shows the oval body shape of the design. It has its biggest diameter at 30 cm high, and whiskers at 120 cm from the ground.]] | |||

The pole on top of the body has two functions. | |||

*Visibility | |||

*Sensors | |||

The pole is 100 cm long, making the whole guide robot stand at 220 cm tall. This helps for the sensors which get, from a higher point of view, a better overview of the crowd. This height also helps with noticeability in dense crowds where at eye-level it will still be visible even when the lower body is (partially) obscured. | |||

===Behavioural description=== | |||

The behavioural description will concern behaviour in a crowd with a singular, uniform walking direction. As mentioned before, the expected behaviour will be described using scenarios. These will first describe the standard scenario, after which two special cases are discussed. Furthermore, it will be briefly discussed how this behaviour might also benefit other crowd types or behaviour. The purpose of the behaviour is to make the robot guide someone efficiently to reach a goal while abiding by social rules/norms. | |||

It is important to note that joining, and leaving these crowds require different behaviour (like sparse crowd navigation). These are thus not considered to fall inside of the scope of the research question. | |||

First, the standard navigation method will be discussed and how it functions in most scenarios. | |||

====The standard scenario==== | |||

López suggested that to navigate, the guide should check where it ''can'' walk, not where it cannot. He also suggested following a lead of some kind could make navigation in unidirectional crowds easier. These traits have been used to define the standard scenario. | |||

In this scenario the robot uses its LIDAR technology to follow a moving point cloud (i.e., the lead) in front of it. This point cloud could be one person or even a whole group. Regardless of this, the point cloud will always indicate the end of the guide's free walking space (space where nothing else stands in its way). It can thus be said that between this lead and the guide, there will in most cases, always be free walking space. As the lead walks in front of the guide it will continuously be creating a space in the crowd behind it, and in front of the robot, where the guide can move. | |||

The robot cannot see the difference between one person or a group, this will make the robot more robust as small details in people's behaviour will not affect the guide's actions. | |||

===='''Scenario 1: Cut off'''==== | |||

While in the standard scenario, someone or something starts to insert itself in between the guide and the previously thought of leading cloud. This has multiple different sub-scenario’s will be discussed. In this scenario we will consider a crowded space with approximately 0.8 persons/m<sup>2</sup> (which nears shoulder-to-shoulder crowds as found in <ref>Trautman, P., Ma, J., Murray, R. M., & Krause, A. (2015). Robot navigation in dense human crowds: Statistical models and experimental studies of human–robot cooperation. ''The International Journal of Robotics Research'', ''34''(3), 335-356.</ref>), where the people move alongside each other. Since the third person is inserting from the side it may not be assumed that only the feelers of the robot make contact. This means more severe consequences may follow. | |||

====='''Decision making criteria'''===== | |||

The decision making of the guide should depend on the intentions of the third person, the effects of their actions on the guide(d), and the effects on themselves. | |||

By far the most difficult thing is to determine the intentions of the third person. Are they trying to insert themselves in front of the robot or are they simply drifting in front. Since their mind cannot be read it seems reasonable to base the decision purely on the latter 2 decisive factors, namely, the effects on the guide(d) and the effects on the person inserting themselves. | |||

====='''Guide’s options'''===== | |||

There are 3 options the robot can take in any given scenario: | |||

{| class="wikitable" | {| class="wikitable" | ||

| | |Effects Action -> | ||

|Bump | |||

|Make way | |||

|Move to the side | |||

|- | |- | ||

| | |Effects on the guide(d) | ||

| | |<nowiki>- Little to no travel delay</nowiki> | ||

- Depending on the severity of the impact it might result in the robot having a sudden change in speed, inconveniencing the guided. | |||

|<nowiki>- The robot might have to slow down temporarily which might inconvenience the guided.</nowiki> | |||

- The robot might have to slow down permanently due to a change in the leads’ walking speed leading to a higher travel time. | |||

- Other people might also try to slip in front leading to multiple delays. | |||

|<nowiki>- The guided might incur a travel delay due to the perpendicular movement.</nowiki> | |||

- Too much side-to-side movement might lead to sporadic guidance to the guided. | |||

- The guide will have to make accurate decisions when sliding in front of someone else which might lead to unexpected problems or delays. | |||

|- | |- | ||

| | |Effects on the person inserting themselves | ||

| | |<nowiki>- They make physical contact with the robot resulting in a risk of injury depending on the severity.</nowiki> | ||

- They might be surprised by the robot resulting in unpredictable scenarios. | |||

- They might not be able to return to their original spot in the crowd resulting in unpredictable consequences. | |||

|<nowiki>- None</nowiki> | |||

|<nowiki>- None</nowiki> | |||

|} | |||

====='''Scenario variables'''===== | |||

It can be seen that the effect of any action is very much context dependent and as such a well-made decision will only be possible if the guide is well-informed. Assuming this is the case for now we can set up 4 factors which will determine the way the robot should act: | |||

1. The relative normal speed of the third person | |||

2. Their relative perpendicular speed | |||

3. The third person’s space to act | |||

4. The robot’s space to act | |||

From this, 4 behavioural tables can be set up: | |||

====='''Scenario 1: expected behaviour'''===== | |||

The following scenarios might seem excessive since the robot will most likely not be a rule-based reflex-agent. This detailed model should however be of importance when informing our decision-making process in the design of the robot as well as the evaluation of the simulation. The following behavioural tables have the relative forward speed of the guide, while at the top the speed the third person has in the direction perpendicular to the guide's walking direction is given. | |||

'''The third person and the robot are capable of making way''' | |||

{| class="wikitable" | |||

| | |||

|Low perpendicular speed | |||

|Medium perpendicular speed | |||

|High perpendicular speed | |||

|- | |||

|Smaller forward speed | |||

|Robot should make way | |||

|Robot should make way, as people think it shows manners and awareness. | |||

|Robot should make way, as people think it shows manners and awareness. | |||

|- | |||

|Same forward speed | |||

|Robot does not make way | |||

|Robot should make way, but prevent heavy breaking, soft pushing | |||

is still an option if the integration is too narrow | |||

|Robot should make way, but prevent heavy breaking, soft pushing | |||

is still an option if the integration is too narrow. | |||

|- | |||

|Larger forward speed | |||

|Robot does not make way | |||

|Robot does not make way | |||

|Robot does not make way, but tries to soften the by moving along the perpendicular direction of the third person | |||

|} | |||

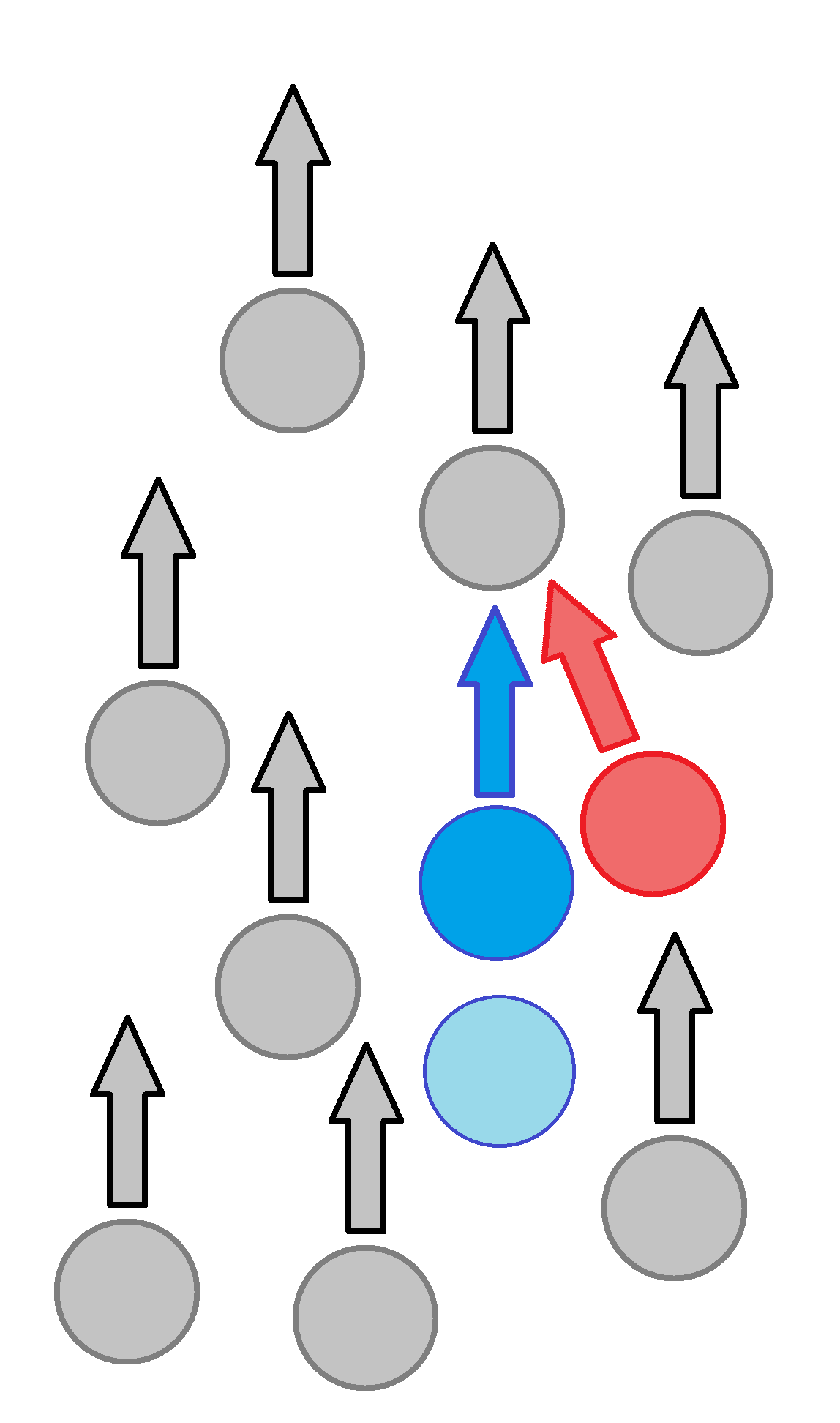

[[File:Scenario 2 inserting file.png|alt=Depiction of a third person inserting themselves between the guide and the lead.|thumb|Depiction of a third person inserting themselves between the guide and the lead. The circles represent people and the guide. The arrows indicate the direction they are moving. Grey is a normal crowd member, red is the third person cutting of the guide, dark blue is the guide, and light blue is the guided.]] | |||

'''Only the third person is capable of making way (see figure to the right)''' | |||

In all these scenarios the robot should use an audio cue to alarm the third alarm that the robot cannot evade them itself. | |||

{| class="wikitable" | |||

| | |||

|Low perpendicular speed | |||

|Medium perpendicular speed | |||

|High perpendicular speed | |||

|- | |||

|Smaller forward speed | |||

|The guide should not make way and risk impact to indicate it has no free space. | |||

|The guide should not make way and risk impact to indicate it has no free space. | |||

|The guide should not make way. If the impact is impending, it should try to soften it by moving in the same perpendicular direction as the third person to soften the impact. | |||

|- | |||

|Same forward speed | |||

|The guide should not make way and risk impact to indicate it has no free space. | |||

|The guide should not make way and risk impact to indicate it has no free space. | |||

|The guide should not make way. If the impact is impending, it should try to soften it by moving in the same perpendicular direction as the third person to soften the impact. | |||

|- | |||

|Larger forward speed | |||

|The guide should not make way and risk impact to indicate it has no free space. | |||

|If impact is impending, it should move the whiskers in the direction of the third person if possible. If this is not possible, the guide should slow down to soften the impact. | |||

|If impact is impending, it should move the whiskers in the direction of the third person if possible. If this is not possible, the guide should slow down and move in the same perpendicular direction as the third person to soften the impact. | |||

|} | |||

'''Only the robot is capable of making way''' | |||

{| class="wikitable" | |||

| | |||

|Low perpendicular speed | |||

|medium perpendicular speed | |||

|High perpendicular speed | |||

|- | |- | ||

| | |Smaller forward speed | ||

| | |Robot should make way | ||

|Robot should make way | |||

|Robot should make way, trying to prevent heavy breaking | |||

|- | |- | ||

| | |Same forward speed | ||

| | |Robot should make way | ||

|Robot should make way | |||

|Robot should make way, trying to prevent heavy breaking | |||

|- | |- | ||

| | |Larger forward speed | ||

| | |Robot should make way | ||

|Robot tries to make way, preventing heavy breaking | |||

|Robot tries to make way, preventing heavy breaking | |||

|} | |||

'''Neither are capable of making way''' | |||

In all these scenarios the robot should use an audio cue to alarm the third alarm that the robot cannot evade them itself. | |||

{| class="wikitable" | |||

| | |||

|Low perpendicular speed | |||

|Medium perpendicular speed | |||

|High perpendicular speed | |||

|- | |- | ||

| | |Smaller forward speed | ||

| | |Robot should try to make as much way as possible before making continuous contact with the person until the third person finds a way to decouple. | ||

|Robot should try to minimize the risk of harm by moving slightly to the side to soften the impact. Afterwards it should try to maintain continuous contact with the person until the third person finds a way to decouple. | |||

|Robot should try to position itself so that the harm from the impact can be minimized. For the same reason they should move slightly to the side to soften the impact. Furthermore it should maintain the continuous contact with the person until the third person finds a way to decouple. | |||

|- | |- | ||

| | |Same forward speed | ||

| | |Robot should try to make as much way as possible | ||

If there is not much room the robot should not bother to bump | |||

|Robot should try to minimize the risk of harm by moving slightly to the side to soften the impact. Afterwards it should maintain contact with the person until the third person finds a way to decouple. | |||

|Robot should try to position itself so that the harm from the impact can be minimized. For the same reason they should move slightly to the side to soften the impact. Furthermore it should maintain the continuous contact with the person until the third person finds a way to decouple. | |||

|- | |- | ||

| | |Larger forward speed | ||

| | |Robot should try to make as much way as possible before making continuous contact with the person until they naturally separate or the third person finds a way to decouple. | ||

|Robot should try to minimize the risk of harm by moving slightly to the side to soften the impact. Afterwards it should maintain contact with the person until they naturally separate or the third person finds a way to decouple. | |||

|Robot should try to position itself so that the harm from the impact can be minimized. For the same reason they should move slightly to the side to soften the impact. Furthermore it should maintain the continuous contact with the person until they naturally decouple or the third person finds a way to decouple. | |||

|} | |||

===='''Scenario 2: Stalled lead'''==== | |||

While in the standard scenario the lead has stopped moving. The guide may avoid the lead or nudge them. The guide is moving unidirectionally with the lead, and it is therefor assumed all impact will occur at the front of the guide. | |||

====='''Guide options'''===== | |||

The decision making of the robot should depend on the effects on the guide(d) and on the leads. The robot may in all situations attempt the following options: | |||

{| class="wikitable" | |||

|Effects Action -> | |||

|Try alternative route | |||

|Robot nudges using feelers | |||

|Stops | |||

|- | |- | ||

| | |Effects on the guide(d) | ||

| | |<nowiki>- The robot has to make side-to-side movement which results in a more sporadic pathing. This might inconvenience the guided.</nowiki> | ||

- Moving aside in a crowded space may result in the guide, or worse guided, to be pushed by other people. | |||

- Behaviour requires more complex observational methods and behaviour. | |||

|<nowiki>- Does not always resolve the problem which leads to more delay. </nowiki> | |||

|<nowiki>- Guide stops. significant time delay.</nowiki> | |||

- People behind guided may walk into or push them. | |||

|- | |||

|Effects on the stalled lead | |||

|<nowiki>- None</nowiki> | |||

|<nowiki>- Person may have to step aside or start moving.</nowiki><br />- Person might be uncomfortable with being nudged or pushed. | |||

|<nowiki>- None</nowiki> | |||

|} | |||

====='''Scenario variables'''===== | |||

The main variables are the following: | |||

1. Space to act for guide | |||

2. Space to act for lead | |||

====='''Scenario 2: expected behaviour'''===== | |||

<u>Due to the great low-risk results of nudging using the feelers this will in all cases be the first action</u>. | |||

If the attempt fails however, it must be decided if the guide should try to path around the now blocked path or to stop. If it can be seen that the lead ahead is stopping out of its own volition (There is free space in front of the lead), the robot should try to navigate around the lead in most cases. If the lead is expected to start moving in a reasonable timeframe, depending on the amount of time rerouting would take, the guide should stop. Something which hasn’t been taken into account yet is the actual freedom of the guide; a dense surrounding, or a fast-moving crowd could stop the guide being able to safely step aside. In these cases, the specifics and safety of the cross-flow-behaviour are of importance. | |||

Assuming the cross-flow-behaviour to be only safe in the limited case of a sparse, normal moving crowd, the following behavioural table can be made: | |||

{| class="wikitable" | |||

| | |||

|Normal moving crowd | |||

|Fast moving crowd | |||

|- | |- | ||

| | |Sparse crowd | ||

| | |Try alternative route | ||

|Stop | |||

|- | |- | ||

| | |Dense crowd | ||

| | |Stop | ||

|Stop | |||

|} | |} | ||

If the guide has stopped for a while and sees an opportunity for the lead to move, it should play a message asking for the lead to move. This also notifies the guided of the situation. | |||

<br /> | |||

====Generalisation==== | |||

The following scenarios pertain to a situation where the guide does not navigate alongside a unidirectional crowd flow. Although this is out of the scope of this research it is useful to look at what a robot with this design can add to other scenarios using touch. First, there will be a short look at the possibilities of physical touch in the other scenarios sketched by López. | |||

Opposing a unidirectional crowd is slightly harder for a robot, while moving through an opposing flow the robot is dependent on people moving out of the way. Otherwise, no space might open up where the robot can go. Here a robot that is programmed to not touch people might stall if there is a high enough crowd density. This is where light bumping comes might be useful, if people do not go fully out of the way they will get a light touch. | |||

=== | Crossing a unidirectional crowd is the hardest scenario. It might be hard for positions to open up where the robot can go. This due to people coming from the side, and the social implications that come with that. Does the robot give space, or will it walk on? Research has found that people find robots more social, and better if they let people pass first, but this has a problem of the robot stalling. That is why in dense crowds it might be preferred that the robot starts nudging to make a way for the guided person. | ||

Integrating into a crowd is an important behaviour of the robot. Inside the TUE, maximum crowd densities are assumed to occur only scarcely in the span of a year. In less dense crowds the guide should be able to integrate into the flow without hitting other people. However, in the scenario that crowds are to be very dense, the guide should be able to act in a more assertive manner due to the increase in safety measures preventing harmful human-robot collisions. | |||

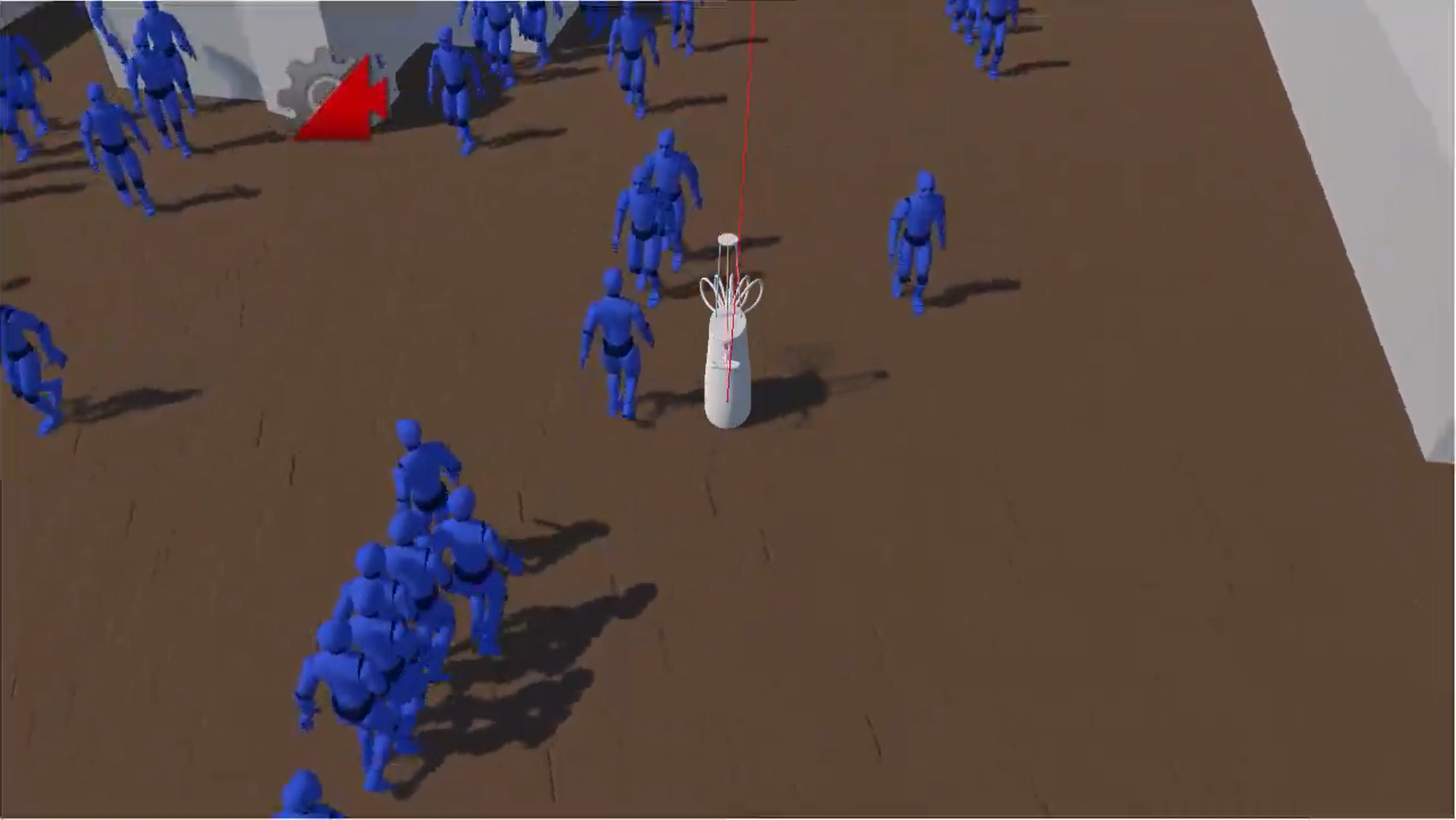

==Simulation== | |||

'''Goal''': | |||

In order for the behaviour description to be relevant, we show that the proposed behaviour is safe to employ in a representative environment. To measure this safety, we first of all measure the collisions and make the reasonable assumption that this is the primary source of harm our robot can afflict. The simulation will gather data about the frequency of collisions, and statistics on the forces applied to the person and robot during the collision. Secondly, we consider the adherence of the robot's behaviour to the ISO guidelines for safety<ref name=":0" /><ref name=":4" />, focussing on the minimum safety gap and the maximum relative velocity guidelines. | |||

'''Overview of applicable ISO Safety standards''' | |||

According to ISO 10218-2:2011<ref name=":4" />, for a (industrial) robot to operate safely: | |||

*Protective barriers should be included (Which is discussed in the body design) | |||

*Warning labels should indicate potential hazards. As the robot does not operate any manipulators or tools, and is designed not to be able to crush someone, or run someone over, the only danger here is tripping, which is also minimized in the design. | |||

*Light curtains, pressure mats and other safety devices: The robot includes whiskers at the front, that also help with avoiding a direct body collision. | |||

*Others, which do not apply to this robot, as it is not present in an industrial setting. | |||

In addition, ISO 15066:2016<ref name=":0" /> indicates requirements for robots in proximity to human operators: | |||

*A risk assessment should be made to identify hazards to surrounding personnel. | |||

*Monitoring systems to keep track of speed and separation, which are included in the form of the LIDAR sensor. | |||

*Force and power limits of the robot: The robot is not incredibly high powered, the amount of force it is capable of applying in normal operation depends on the physical implementation of this proposed concept, but is unlikely to be problematic, as there is no need for high-powered actuators for the drive train to function. | |||

*Emergency stop (already covered in the other ISO standard) | |||

*A safety distance gap should be kept to people around the robot, this is however ignored, as we are developing a solution that aims to be safe, without needing to keep clear of humans. | |||

*Force and Pressure limits are imposed on the robot, to prevent serious harm, both during normal operation and collision. These include: | |||

**A limit on the contact force during collision of 150N, '''this will be the focus of the simulation'''. | |||

**A pressure limit during collision of 1.5 kN/m^2, we make the reasonable assumption this pressure limit cannot be reached without violating the previous condition, as our robot is designed to be as smooth as possible, making it incredibly difficult for the robot to apply a lot of force in a very small and local area. | |||

**Force limiting or compliance techniques are to be implemented to reduce the force applied during collision. This comes in the form of whiskers for the compliance aspects, allowing them to deform to reduce the impact, and the behaviour is designed to limit the force by slowing, satisfying the limitation requirement. | |||

As a performance measure for the simulation, we consider the maximum force applied in a collision during the duration of the simulation. This is the element of the ISO standards that is not negated by the behaviour design, or design of the body itself, and thus the part that remains to show the robot is safe to operate. | |||

This data can also inform the design of the robot and its behaviour, as it can test various form factors, and navigation algorithms to optimize. In the end the simulation results act as assistance in design iteration, and ultimately inform us about the viability of the robot in crowds. | |||

'''Why a simulation:''' | |||

Testing which techniques have an impact require a setting with a lot of people to form a crowd, which can be controlled precise enough to eliminate outside or 'luck' factors. | |||

The performance needs to be a function of measurable starting conditions, and the behaviour of the robot. | |||

When using a real robot, we would need to work in an iterative approach, where we can alternate the appearance and workings of the robot after each simulation, to simulate different scenarios. This would require re-building the robot | |||

each time, which is something we simply don't have time for. Additionally, to obtain a large enough crowd (think of more than a 100 students) would become tricky in such a short notice. Using a real-world crowd (by going to the buildings in-between lectures) would present the most accurate situation but is not controllable and not reproducible. There is also the ethical dilemma of testing a potentially hazardous robot in a real crowd, and logistically, organizing a controlled experiment with a crowd of students is not an option. | |||

===Simulation: situation analysis=== | |||

The real world would have the robot guide a blind person through the atlas building, to a goal. This situation can broadly be dissected as: | |||

*Performance Measure: The maximum force applied during collision with a person, which cannot exceed 150 Newton. | |||

*Environment: Dynamic, partially unknown interior room, designed for human navigation. | |||

*Actuators: wheels. | |||

*Sensors: LIDAR & Camera, abstracted to General purpose vision and environment mapping sensors, but are assumed to be limited range and accuracy, systems capable of deducing depth, position and dynamic- or static obstacles. | |||

The environment is assumed to be: | |||

*Partially Observable | |||

*Stochastic | |||

*Competitive and Collaborative (humans aid each other in navigation, but are also their own obstacles) | |||

*Multi-agent | |||

*Dynamic | |||

*Sequential | |||

*Unknown | |||

===Considered Simulation Design variants.=== | |||

Simulating the robot may take various shapes, each with their own advantages. When considering the type of simulation we will make, we considered the following aspects: | |||

Environment Model: | |||

*Mathematical: Building a model of the environment, purely based on mathematical expressions of the real world. | |||

*Geometrical: Building a 3d version of the environment, using a 3d virtual representation of the environment. | |||

*2D: The environment does not consider depth | |||

*3D: The environment does consider depth | |||

Robot Agent: | |||

*Global awareness: The robot model has access to all information across the entire environment. | |||

*Sensory awareness: Observing the Simulated environment with virtual (imperfect) sensors. The robot only has access to the observed information. | |||

*Mechanics simulation: The detail at which the robot's body is modelled. Factors include whether the precise shape is considered, the accuracy of actuators and other systems, and delay between command and response. | |||

Crowd Behaviour Model: | |||

*Boid: Boids are a common method of simulating herd behaviour in animals (particularly fish) | |||

*Social Forces: The desire to approach a goal and avoid and follow the crowd is captured in vectors, which determine the velocity of each agent in the crowd. | |||

===Simulation: Crowd implementation=== | |||