PRE2020 3 Group11


<font size = '6'>The acceptance of self-driving cars</font>

<div style="font-family: 'Arial'; font-size: 16px; line-height: 1.5; max-width: 1100px; word-wrap: break-word; color: #333; font-weight: 400; box-shadow: 0px 25px 35px -5px rgba(0,0,0,0.75); margin-left: auto; margin-right: auto; padding: 70px; background-color: white; padding-top: 25px;">

----

= Group members =

{| border=1 style="border-collapse: collapse; width: 60%; height: 14em;"
! style="width: 10em;" | Name
! style="width: 10em;" | Student number
! style="width: 10em;" | Email
|-
| Laura Smulders
| 1342819
| L.a.smulders@student.tue.nl
|-
| Sam Blauwhof
| 1439065
| S.e.blauwhof@student.tue.nl
|-
| Joris van Aalst
| 1470418
| J.v.aalst@student.tue.nl
|-
| Roel van Gool
| 1236549
| R.p.v.gool@student.tue.nl
|-
| Roxane Wijnen
| 1248413
| R.a.r.wijnen@student.tue.nl
|}

= Introduction =

== Problem statement ==

Self-driving cars are generally believed to be safer than manually driven cars. However, they cannot be 100% safe. Because crashes and collisions are unavoidable, self-driving cars should be programmed to respond to situations in which accidents are highly likely or unavoidable (Nyholm & Smids, 2016). Among others, there are three moral problems involving self-driving cars. First, there is the problem of who decides how self-driving cars should be programmed to deal with accidents. Second, there is the question of who bears the moral and legal responsibility for harm caused by self-driving cars. Lastly, there is the morality of decision-making under risk and uncertainty.

A problem closely associated with the morality of self-driving cars is the trolley problem. For example, in an unavoidable accident where the car has to choose between crashing into a child, or crashing into a wall and harming its four passengers, which should the car choose? Once this choice is made, there is also the question of who is morally responsible for the harm caused by the self-driving car. Suppose, for example, that an accident occurs between an autonomous car and a conventional car. This will not only be followed by legal proceedings, it will also lead to a debate about who is morally responsible for what happened (Nyholm & Smids, 2016).

A lot of uncertainty is involved in the decision-making process of self-driving cars. First, the self-driving car cannot acquire certain knowledge about the trajectory of other road vehicles, their speed at the time of collision, or their actual weight. Second, focusing on the self-driving car itself, in order to calculate the optimal trajectory the car needs perfect knowledge of the state of the road, since any slipperiness of the road limits its maximal deceleration. Finally, if we turn to the case of an elderly pedestrian in the trolley problem, we can again easily identify a number of sources of uncertainty. Using facial recognition software, the self-driving car can perhaps estimate his age with some degree of precision and confidence, but it can merely guess his actual state of health (Nyholm & Smids, 2016).

The decision-making of self-driving cars is realistically represented as being made by multiple stakeholders: ordinary citizens, lawyers, ethicists, engineers, risk assessment experts, car manufacturers, governments, etc. These stakeholders need to negotiate a mutually agreed-upon solution (Nyholm & Smids, 2016). Whatever this solution will be, all parties will have to account for its general acceptance if they wish self-driving cars to be deployed successfully. This report will focus on the relevant factors that contribute to the acceptance of self-driving cars, with the main focus on the private end-user. Among other things, it takes into account several ethical theories that could serve as a guideline for the decisions the car has to make: utilitarianism, Kantianism, virtue ethics, deontology, ethical pluralism, ethical absolutism and ethical relativism. Aside from ethical theories, other influences on acceptance will also be treated in this report.

== State-of-the-art/Hypothesis ==

Developments and advances in autonomous vehicle technology have recently brought self-driving vehicles to the forefront of public interest and discussion. In response to the rapid technological progress of self-driving cars, governments have already begun to develop strategies to address the challenges that may result from their introduction (Schoettle & Sivak, 2014). The Dutch national government aims to take the lead in these developments and to prepare the Netherlands for their implementation. The Ministry of Infrastructure and the Environment has opened the public roads to large-scale tests with self-driving passenger cars and trucks. The Dutch cabinet has adopted a bill that will, in the near future, make it possible to conduct experiments with self-driving cars without a driver being physically present in the vehicle (mobility, public transport and road safety, etc.).

The end-consumers (the actual drivers) will eventually decide whether self-driving cars successfully materialize on the mass market. However, the perspective of the end-user is not often taken into account, and the general lack of research in this direction is the reason this research is conducted. Therefore, our research question is: "What are the relevant factors that contribute to the acceptance of self-driving cars for the private end-user?" User resistance to change has been found to be an important cause of many implementation problems (Jiang, Muhanna, & Klein, 2000), so it is very likely that the implementation of the self-driving car will not be trivial, as people may be reluctant to accept the new technology. It is likely that a significant percentage of drivers will not be comfortable with fully autonomous driving, as people might experience driving as adventurous, thrilling and pleasurable (Steg, 2005). There is also the question of whether self-driving cars can be seen as providing the ultimate level of autonomy when they make people dependent on the technology. The fact that self-driving cars could be tracked continuously could lead to privacy issues. Another potential barrier to self-driving cars is the risk of a ‘misbehaving computer system’. With autonomous vehicles, criminals or terrorists might be able to hack into the cars and use them for illegal purposes. Furthermore, the unavoidable rate of failures and crashes could lead to mistrust, especially as people tend to underestimate the safety of technology while putting excessive trust in human capabilities such as their own driving skills (König & Neumayr, 2017).

In several recent surveys on self-driving vehicles, the public has expressed some concern about owning or using vehicles with this technology. In a survey of public opinion on autonomous and self-driving vehicles in the U.S., the U.K., and Australia, the majority of respondents had previously heard of self-driving vehicles, had a positive initial opinion of the technology, and had high expectations about its benefits (Schoettle & Sivak, 2014). However, the majority of respondents also expressed high levels of concern about riding in self-driving cars, about security issues related to self-driving cars, and about self-driving cars not performing as well as human drivers. Respondents also expressed high levels of concern about vehicles without driver controls (Schoettle & Sivak, 2014). The survey "Users’ resistance towards radical innovations: The case of the self-driving car" found that people who used a car more often tended to be less open to the benefits of self-driving cars. The most pronounced desire of respondents was to be able to manually take over control of the car whenever they wanted. This indicates that drivers want to decide when to switch to self-driving mode and want the option to resume control in situations where they do not trust the technology. In this survey, the most severe concern involving the car and the technology itself was the fear of possible attacks by hackers (König & Neumayr, 2017).

This report will focus on the relevant factors that contribute to the acceptance of self-driving cars for the private end-user. A survey is conducted to gain more insight into the private end-user of self-driving cars. Based on the literature research and this survey, the relevant factors considered are the ethical theories, moral and legal responsibility, safety, privacy and the perspective of the private end-user.

= Relevant factors =

== Ethical theories ==

A key feature of self-driving cars is that the decision-making process is taken away from the person in the driver’s seat and instead bestowed upon the car itself. From this drastic change several ethical dilemmas emerge, one of which is essentially an adapted version of the trolley problem. When an unavoidable collision occurs, it is important to define the desired behaviour of the self-driving car. It might be the case that in such a scenario the car has to choose whether to prioritize the life and health of its passengers or of the people outside the vehicle. In real life such cases are relatively rare (Nyholm, 2018; Lin, 2016), but the ethical theory underlying that decision will possibly have an impact on the acceptance of the technology. Self-driving vehicles that decide who might live and who might die are essentially in a scenario where some moral reasoning is required in order to produce the best outcome for all parties involved. Given that cars do not seem capable of moral reasoning, programmers must choose for them the right ethical setting on which to base such decisions. However, ethical decisions are often not clear-cut. Imagine driving at high speed in a self-driving car when the car in front comes to a sudden halt. The self-driving car can either brake suddenly as well, possibly harming the passengers, or it can swerve into a motorcyclist, possibly harming them. This scenario can be regarded as an adapted version of the trolley problem. One could argue that since the motorcyclist is not at fault, the self-driving car should prioritize their safety. After all, the passenger made the decision to enter the car, which puts at least some responsibility on them. On the other hand, people who might buy the self-driving car will expect not to be put in avoidable danger.
No matter the choice of the car, and the underlying ethical theory that it is (possibly) based on, the behaviour and decision-making of the car are more likely to be socially accepted if they can be morally justified. Therefore, this section first highlights some possible ethical theories, and then discusses some relevant aspects surrounding the implementation of all ethical theories.

'''Ethical theories under consideration'''

Although there are not many actions a car could take in the scenario described above, there are many ethical theories that can help inform the car's decision. The most prominent ethical theories that might prima facie be useful are utilitarianism, deontology, virtue ethics, contractualism, and egoism. These are the ethical theories that will be treated in this section.

Utilitarianism considers the consequences of actions, as opposed to the actions themselves. This means that the correct moral decision or action in any scenario is the one that produces the most good. Although "good" is a subjective term, in most versions of utilitarianism it refers to the net increase in happiness or welfare for all associated parties (Driver, 2014). Unlike in deontology, the circumstances or the intrinsic nature of an action are not taken into account. Deontology does not judge the morality of an action based on its consequences, but on the action itself. Deontology posits that moral actions are those which have been taken on the grounds of a set of pre-determined rules that hold universally and absolutely. This means that for a deontologist, some actions are wrong or right no matter their outcome.

The third major normative ethical theory is virtue ethics. Virtue ethics emphasizes the virtues, or moral character, as opposed to rules or consequences. Virtues are seen as positive or "good" character traits. Examples of such traits are courage and modesty. A moral person should perform actions that realize these traits, and therefore moral actions are those which cause a person’s virtues to be realized.

Other than the three major classical normative ethical theories, there are two more prima facie relevant theories, the first of which is egoism. Normative egoism posits that the only actions that should morally be taken are those that maximize the individual's self-interest. An egoist only considers the benefits and detriments that other people experience in so far as those experiences influence the self-interest of the egoist. Although it may not seem like it, egoism is very similar to utilitarianism, except that utilitarianism focuses on the maximum happiness of all people involved, whereas egoism focuses only on the maximum happiness of the individual.

The last ethical theory that can be applied to the adapted trolley problem is (social) contractualism. Contractualism does not make any claims about the inherent morality of actions, but rather posits that a moral action is one that is mutually agreed upon by all parties affected by the action. What exactly this agreement should look like differs per version of contractualism: in some versions there must be unanimous consent, while in other versions a simple majority or a supermajority suffices. A right action is therefore one that can be justified to the other relevant parties, and a wrong action is one that cannot.


'''Ethical theories applied to the adapted trolley problem'''

First, let us apply utilitarianism to the adapted trolley problem. On a micro level, a self-driving car with a utilitarian ethical setting would first want to minimize the number of deaths, and then minimize the total number of severe injuries sustained by all people affected by a collision. This seems simple enough, but there are, among others, two issues with this implementation of a utilitarian setting. The first is that, if the technology is advanced enough to distinguish people based on, for instance, whether they are wearing a helmet, then it would be safer for the car to collide with a biker wearing a helmet than with a biker who is not wearing one, assuming all else is equal. The biker with a helmet is then targeted, even though they are the one putting in effort to be safe. This is unfair, and if it is implemented, some people may stop taking safety measures in order not to be targeted by a utilitarian self-driving car. This would ultimately reduce overall road safety, which is exactly the opposite of what a utilitarian wants. Note that it is unclear whether such recognition technology will even be deployed in self-driving cars, so the question arises whether this is a relevant problem at all. This report does not make claims about the likelihood that such technology will be implemented, but instead assumes it is possible in order to make (ethical) claims on the subject. In reality the technology might not be so precise, but it is better to be prepared for the case that it is.
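
The micro-level utilitarian rule described above (first minimize deaths, then minimize severe injuries) can be read as a lexicographic ordering over candidate maneuvers. The following is a minimal sketch of that reading only, not an actual vehicle algorithm. The <code>Maneuver</code> class, the function name and all numbers are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Maneuver:
    """A candidate action with hypothetical expected-harm estimates."""
    name: str
    expected_deaths: float
    expected_severe_injuries: float


def choose_utilitarian(options):
    """Return the option with the fewest expected deaths.

    Ties on deaths are broken by the fewest expected severe injuries,
    i.e. the two criteria are compared lexicographically.
    """
    return min(
        options,
        key=lambda m: (m.expected_deaths, m.expected_severe_injuries),
    )


# Illustrative numbers only; they are not taken from any study.
options = [
    Maneuver("brake hard", expected_deaths=0.0, expected_severe_injuries=1.5),
    Maneuver("swerve left", expected_deaths=0.2, expected_severe_injuries=0.5),
    Maneuver("swerve right", expected_deaths=0.0, expected_severe_injuries=0.5),
]

print(choose_utilitarian(options).name)
```

A rule of this kind is also what produces the helmet problem: if a helmet lowers the estimated harm of one option, that option is selected, regardless of whether this is fair to the person who took the safety measure.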

The second problem is that, although people want other road users in self-driving cars to adopt a utilitarian setting, they themselves would rather buy cars that give preferential treatment to passengers (Nyholm, 2018; Bonnefon et al., 2016). "In other words, even though participants still agreed that utilitarian AVs were the most moral, they preferred the self-protective model for themselves" (Bonnefon et al., 2016). Therefore, if self-driving cars are only sold with a utilitarian ethical setting, fewer people might be inclined to buy them, again reducing overall road safety.

There are multiple possible counters to these two issues that a "true" utilitarian might propose. To counter the first problem, the utilitarian would simply not program the car to make a distinction between people who wear a helmet and those who do not. A distinction would also not be made in similar scenarios, since this solution is not only relevant to cases where helmets are involved. Of course, there are also scenarios where the safer of the two options will be chosen by the self-driving car, assuming the same number of people are at risk in both options. The difference between a valid safe choice and an invalid safe choice is that some safety measures are taken explicitly (such as the decision to put on a helmet), while others are more a byproduct of another decision (such as riding a bus versus driving a car). Riding a bus might be safer than driving a car, but most bus passengers do not choose the bus for safety reasons. They might not have a car, or they might take the bus out of concern about climate change. Since people in this scenario did not choose to ride a bus for safety reasons, it is likely they will also not stop riding the bus because of a slightly increased chance of being hit by a self-driving car. Of course, this is only a thought experiment, but if it also holds true in practice, then a utilitarian would find it acceptable for the self-driving car to choose the safer option in the bus versus car scenario, whereas in the helmet versus no helmet scenario the utilitarian would not find it acceptable to choose the safer option.

To counter the second problem, the "true" utilitarian would ultimately want to reduce death and/or harm by reducing the number of traffic accidents. If in practice that means that a significant number of people will not buy a self-driving car with a utilitarian setting, then the utilitarian would rather have self-driving cars sold with an egoistic setting that gives passengers preferential treatment. This way, even though an accident involving a self-driving car will be more deadly than with a utilitarian setting, the overall number of accidents will decrease, since more self-driving cars will be on the road.

There are more problems with a utilitarian approach to self-driving cars, but they are unrelated to the two micro versus macro utilitarian problems we just treated. One of these problems has to do with discrimination. In an unavoidable collision scenario where the self-driving car has to hit either an adult man or a child, the adult has a higher chance of survival. Is the car therefore justified in choosing to hit the man? A utilitarian would say the car is indeed justified, unless this decision is found to turn consumers away from purchasing and using self-driving cars. Prima facie, this would not seem to be the case, but as far as we could find there is no major literature on this topic that gives any definitive or exploratory answer. Mercedes did announce that their self-driving cars would prioritize passengers over bystanders, but this was met with heavy backlash, causing Mercedes to retract the statement (Nyholm, 2018). In some countries, such as Germany, this type of discrimination by self-driving cars based on age, gender or type of road user has already been made illegal (Adee, 2016). Once again, as in the helmet example, it might be the case that self-driving cars will not be equipped with such precise recognition software, in which case the ethical problem described above is not relevant. Still, it is good to be prepared for the case that it is.

The deontological ethical setting would not allow a choice to be made that explicitly harms or kills a person, no matter the potential number of lives saved. Therefore, when faced with an unavoidable (possibly) deadly collision, the car would simply not make a decision at all, and events would play out "naturally". In essence, this makes the actual "chosen" collision somewhat random. As in the original trolley problem, the moral entity, in this case the car (or more accurately, the programmer who programs the ethics into the car), would simply not intervene at all. Deontologists are of the opinion that there is a difference between doing and allowing harm, and by not letting the car intervene in an unavoidable accident, both the passenger(s) and the programmer(s) are absolved of any moral responsibility. Some people might be happy with such a setting, since many people could not fathom being (morally) responsible for the deaths of others. Passengers who enter a self-driving car with a utilitarian ethical setting cannot be fully absolved of moral responsibility in the case of an accident, since they made a conscious decision to buy a car that has been programmed to make explicit decisions. The same cannot be said of passengers who enter a deontological self-driving car. Prima facie it seems likely that people do not want to be morally responsible in the case of an accident, and implementing a deontological ethical setting might therefore help acceptance of the technology.

A virtue ethics response to the adapted trolley problem is very hard to come up with. An ethical setting based on virtue ethics would want the car to make a decision that improves the virtues of the moral entity. The decision that the car makes therefore depends on which virtue we want to improve. Take bravery, for instance. One could posit that it is brave to accept danger to yourself if it means that other people will be safer for it. If we assume the moral entity or entities to be the passenger(s), then the self-driving car would always choose to put the passengers in danger, since this would improve their bravery. There are two problems with this approach. Firstly, it is hard to optimize any decision the car makes, since it is impossible to find a decision that always improves all virtues. Also, what are those virtues in the first place? Is it, for instance, virtuous to sacrifice yourself if you leave behind a family? Secondly, since the car is not actually a moral agent, whose virtues should the car's decision improve: the programmers’ or the passengers’? This is unclear. If the programmers’ virtues should be improved, then it seems prima facie extremely unlikely that people would be willing to buy cars that might sacrifice them to improve the virtue(s) of a programmer they have never met. If the passengers’ virtues should be improved, then people might be slightly more sympathetic, but even then we assume most people do not want to sacrifice their lives to improve upon an abstract notion of virtue and morality.

If we take the perspective of self-driving car buyers and users, the ethical egoist response is to prioritize the lives of the passengers above all else. As said in the utilitarian part of this section, people who buy and use the car seem to prefer a self-driving car that always puts their own lives above those of others. This setting could possibly also be regarded as the setting of a "true" utilitarian. There is another possible benefit to this ethical setting, namely that cars using it are more predictable. If self-driving cars become very prevalent, any self-driving car must always account for the decisions other self-driving cars are making. Therefore, if all self-driving cars prioritize themselves, their road behaviour becomes more predictable to other self-driving cars. However, this argument is theoretical in nature, and there are some game theorists who do not agree (Tay, 2000). The moral argument against ethical egoism is that it seems, and indeed is, incredibly selfish. An ethical egoist might sacrifice hundreds of lives to save themselves. However, a "true" ethical egoist is not always extremely selfish, since extremely selfish behaviour is not tolerated by others. A "true" ethical egoist would therefore also consider the feelings of other people, since their thoughts and decisions may influence the reward the egoist gets out of any given situation. In the case of unavoidable (deadly) accidents, however, an egoist who values their own life above all else will not care for the feelings of others, since there can be nothing more important now or in the future than their own life.

Up until now we have considered only the perspective of buyers and users of self-driving cars, but the actual moral agent is the programmer (or a collection of people in the company that employs the programmer). Their egoist response would be based on how often they plan to use the self-driving car for which they design the software. If they do not plan to use it at all, then the ethical egoist response of the programmer would be to implement a utilitarian ethical setting, since the programmer will on average be safer. If, however, they plan to use the self-driving car a lot, then the ethical egoist response is to implement an ethical setting that prioritizes the passenger. However, since this report is mostly concerned with the perspective of the end-user, the perspective described above is ultimately not very relevant.

A contractualist ethical setting is one that is agreed upon by all relevant parties. Unanimous consent seems impossible to get, so in practice this would probably be a simple democratic vote, in which a range of ethical settings, or combinations of ethical settings, is proposed. Each possibly affected person can vote on these settings, and the democratic winner(s) will be implemented. The tough question is: who is affected by the decisions of a self-driving car? Self-driving cars can potentially drive across whole continents, from Portugal to China, or from Canada to Argentina. If the decisions of these self-driving cars can influence events in multiple countries, should people in all these countries be part of the decision-making process? If so, should there be a global vote on the specific ethical settings that can be implemented? Or, if the vote is held nationally, does that mean that the ethical setting of a car must be changed when the self-driving car enters a country where citizens voted for a different ethical setting? In practice this seems very difficult to implement. If any or all of these contractualist ethical settings are practically possible, then this setting almost completely solves the responsibility aspect of self-driving cars: if all relevant parties can vote, then society as a whole can be held ethically and legally responsible. Since responsibility might be one of the factors that contribute to the acceptance of self-driving cars, having a realistic solution to the issue of responsibility will likely have a positive impact on the public perception of self-driving cars.
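
As an illustration of the simple democratic vote mentioned above, the sketch below tallies ballots and returns the setting or settings with the most votes. It assumes a plurality rule and one ballot per affected person; both are assumptions made for this example, and the function name and ballot labels are hypothetical. Who counts as an affected person is exactly the open question raised in the text and is not modelled here.

```python
from collections import Counter


def winning_settings(ballots):
    """Return the ethical setting(s) with the most votes (plurality rule).

    A list is returned because ties are possible, matching the
    "democratic winner(s)" wording. An empty ballot box has no winner.
    """
    if not ballots:
        return []
    tally = Counter(ballots)
    top = max(tally.values())
    return sorted(setting for setting, votes in tally.items() if votes == top)


# Hypothetical ballots, one per voter.
ballots = ["utilitarian", "egoistic", "utilitarian", "deontological"]
print(winning_settings(ballots))
```

Holding the vote per country, as discussed above, would amount to calling such a function once per national set of ballots, which is where the problem of cars crossing borders arises.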

'''Should the user decide the ethical setting, and should all cars have the same setting?'''

It is clear that there is no ethical setting that is perfect for every scenario. For various reasons, some authors advocate that people should be able to choose their own ethical settings. One can imagine an "ethical knob" with different programmable ethical settings. An ethical knob might be on a scale from altruistic to egoistic, with an impartial setting in the middle. Maybe there will even be a deontological setting which does not intervene in unavoidable accidents. There are several reasons to implement such an ethical knob. People might want to be able to buy cars that mirror their own moral mindset. Millar (2014) observes that self-driving cars can be regarded as moral proxies, which implement moral choices. Implementing an ethical knob also makes it easier to assign responsibility to someone in the case of an unavoidable accident (Sandberg & Bradshaw‐Martin, 2013; Lin, 2014), since the passengers of the car have explicitly chosen the decision of the car. This might impact acceptance of the technology both positively and negatively. Prima facie it seems that people who want to buy self-driving cars might want to be able to choose their own ethical setting, but it is unknown whether people would still want to choose their preferred ethical setting if this makes them legally and/or morally responsible. Also, other relevant road users might not accept that self-driving car passengers choose their own ethical setting, since passengers are likely to choose an egoistic setting, which negatively impacts the road experience of others. This is especially true if the car is equipped with an "extremely egoistic" setting in which the passengers' lives are valued considerably more than other people's lives. It seems likely that people will not accept a self-driving car making such decisions, so perhaps manufacturers will limit how far towards egoistic the ethical knob can be turned.
These kinds of ethical settings would likely be very unpopular, perhaps even with people who might benefit from an extremely egoistic setting. Indeed, surveys have already shown that people generally want other self-driving cars to reduce overall harm (Bonnefon et al., 2016). An (extremely) egoistic ethical setting is the direct opposite of such a utilitarian setting.
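
One way to picture the ethical knob described above is as a single weight between harm to passengers and harm to others, with a manufacturer-imposed cap on the egoistic end. The sketch below is only an illustration under that assumption. The 0 to 1 scale, the cap of 0.8, the function names and the harm numbers are all hypothetical, and the deontological "do not intervene" setting is not modelled.

```python
def weighted_harm(passenger_harm, other_harm, knob):
    """Combine the two harm estimates according to the knob position.

    knob = 0.0 is fully altruistic (only harm to others counts),
    knob = 0.5 is impartial, knob = 1.0 is fully egoistic
    (only harm to passengers counts).
    """
    return knob * passenger_harm + (1.0 - knob) * other_harm


def choose_with_knob(options, knob, max_knob=0.8):
    """Pick the option with the lowest weighted harm.

    `options` is a list of (name, passenger_harm, other_harm) tuples.
    `max_knob` is a hypothetical manufacturer limit on egoism.
    """
    if not 0.0 <= knob <= 1.0:
        raise ValueError("knob must be between 0.0 and 1.0")
    capped = min(knob, max_knob)
    return min(options, key=lambda o: weighted_harm(o[1], o[2], capped))[0]


# Illustrative harm estimates on an arbitrary 0..1 scale.
options = [
    ("brake", 0.6, 0.1),   # risky for passengers, safe for others
    ("swerve", 0.1, 0.7),  # safe for passengers, risky for others
]

for knob in (0.0, 0.5, 1.0):
    print(knob, choose_with_knob(options, knob))
```

With these numbers the altruistic and impartial positions both choose to brake, while the egoistic position chooses to swerve even after the cap is applied, which shows that a cap limits but does not remove the effect of an egoistic setting.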

The same can be said for an ethical knob that can not only be turned by the user to fit their moral convictions, but can even be modified by the user to fit other kinds of preferences. An ethical knob that is able to discriminate on gender or race might be technologically possible to make, but users should not be allowed to let their self-driving cars be racist or sexist. Discrimination based on race or sex is illegal in many countries, so these ethical settings, if even possible to implement, will likely be outlawed anyway, as Germany has already done. A contractualist might propose a democratic vote to gauge which kinds of settings are regarded as unacceptable. The free choice of people to configure their own self-driving car will then be limited by the democratic choice of all relevant road users. Such an arrangement might prove to be an acceptable middle ground between no ethical knob and a completely customizable ethical knob. However, whether this would actually lead to increased acceptance of the technology compared with the other two options has not been settled or much explored in an academic context.

== Responsibility ==

Although automated vehicles seemed a distant future a mere twenty years ago, they are becoming a reality right now. For some years, companies such as Google have run trials with automated vehicles in actual traffic situations and have driven millions of kilometers autonomously. Between December 2016 and November 2017, for example, Waymo's self-driving cars drove about 350,000 miles and human drivers retook the wheel 63 times. This is an average of about 5,600 miles between disengagements. Uber has not been testing its self-driving cars long enough in California to be required to release its disengagement numbers (Wakabayashi, 2018). Though this research has been ground-breaking, there have also been some incidents in the past years. In 2016 a Tesla driver was killed while using the car’s autopilot because the vehicle failed to recognize a white truck (Yadron & Tynon, 2016). In 2018 a self-driving Volvo in Arizona collided with a pedestrian, who did not survive the accident. It was believed to be the first pedestrian death associated with self-driving technology. When an Uber self-driving car and a conventional vehicle collided in Tempe in March 2017, city police said that extra safety regulations were not necessary, as the conventional car was at fault, not the self-driving vehicle (Wakabayashi, 2018).
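
The disengagement average quoted above follows directly from the two reported figures. As a quick check, using only those figures (the variable names are chosen for this example):

```python
miles_driven = 350_000   # approximate miles reported for Waymo
disengagements = 63      # times a human driver retook the wheel

miles_per_disengagement = miles_driven / disengagements

print(round(miles_per_disengagement))      # 5556
print(round(miles_per_disengagement, -2))  # 5600.0
```

The exact quotient is roughly 5,556 miles, which rounds to the figure of about 5,600 miles given in the text.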

One very important factor in the development and sale of automated vehicles is the question of who is responsible when things go wrong. In this section we will look in detail at all factors involved and come up with some solutions. As brought up by Marchant and Lindor (2012), there are three questions that need to be analysed. Firstly, who will be liable in the case of an accident? Secondly, how much weight should be given to the fact that autonomous vehicles are supposed to be safer than conventional vehicles in determining which of the people involved should be held responsible? Lastly, will a higher percentage of crashes be attributed to a manufacturing ‘defect’, compared to crashes with conventional vehicles, where driver error is usually identified as the cause (Marchant & Lindor, 2012)? | |||

'''Current legislation''' | |||

If we take a look at how responsibility works for conventional vehicles, we find that responsibility is usually assigned to the driver for failing to obey traffic regulations (Pöllänen, Read, Lane, Thompson, & Salmon, 2020). This can be as small and common as driving too fast or losing attention for a fraction of a moment, something nearly everyone is guilty of doing at some point. While this usually does not matter, sometimes it can lead to catastrophic results. The driver is still held responsible for this moment of misfortune. As Nagel (1982) theorized, the only difference between driving a little too fast and killing a child who crosses the street unexpectedly, and driving a little too fast when there is no child, is bad luck. The consequence, however, is vast for the child, but also for the driver (Nagel, 1982). This reasoning could also be applied to automated vehicles. If an accident happens it is just bad luck for the driver, and he will without doubt be liable. However, given that this depends on luck, and that most autonomous vehicles allow for restricted or no control, this option is not considered a plausible one (Hevelke & Nida-Rümelin, 2015). | |||

'''Blame attribution''' | |||

A couple of studies have shown that the level of control is crucial in blame attribution. McManus and Rutchick (2018) showed that people attribute less blame to a driver in a fully automated vehicle in comparison to a situation where the driver selected a different algorithm (e.g. to behave selfishly) or drove manually (McManus & Rutchick, 2018). Another study (Li, Zhao, Cho, Ju, & Malle, 2016) investigated blame attribution between the manufacturer, government agencies, the driver and pedestrians. They found that blame is reduced for drivers when the vehicle is fully autonomous, whereas the blame for the manufacturer or government agencies increased. | |||

'''The manufacturer''' | |||

It would be obvious to say the manufacturer of the car is responsible. They designed the car, so if it makes a mistake, they are to blame. However, there are different types of defects in the manufacturing process. Firstly, there is a defect in manufacturing itself, where the product did not end up as it was supposed to, even though the rules were followed with care. This error is very rare, since manufacturing these days is done with a very low error rate (Marchant & Lindor, 2012). A second defect lies in the instructions. A failure to adequately instruct and warn the consumer could also count as a defect. A third defect, and the most significant for autonomous vehicles, is that of design. This holds that the risks of harm could have been prevented or reduced with an alternative design (Marchant & Lindor, 2012). | |||

If the manufacturer knew, or could have known, beforehand about a flaw in the system that might cause the car to crash, and sold the car anyway, there is no question that they are responsible. However, holding the manufacturer responsible in every case would immensely discourage anyone from producing these autonomous cars. Especially with technology as complex as autonomous driving systems, it would be nearly impossible to make it flawless (Marchant & Lindor, 2012). In order to encourage people to manufacture autonomous vehicles and still hold them responsible, a balance needs to be found between the two. This is necessary, because removing all liability would also result in undesirable effects (Hevelke & Nida-Rümelin, 2015). In short, a way needs to be found to hold the manufacturer liable enough that they will keep improving their technology. | |||

In order to encourage people to manufacture autonomous vehicles and still hold them responsible, a balance needs to be found between the two. This is necessary, because removing all liability would also result in undesirable effects (Hevelke & Nida-Rümelin, 2015). | '''Semi-autonomous vehicles''' | ||

As stated above, there have been studies on blame attribution in fully autonomous vehicles, and those with certain pre-selected algorithms. A semi-autonomous vehicle (with a duty to intervene) has not been discussed yet. A good analogy for a semi-autonomous vehicle would be that of an auto-piloted airplane. The plane flies itself, though it is the responsibility of the pilot to intervene when something goes wrong (Marchant & Lindor, 2012). So, it could be suggested that, in case of an accident, the driver of the vehicle should be held responsible. If the car is designed in such a way that the driver has the ability to take over and intervene, this could be used as an argument against the driver. This raises the question of what the utility of automated vehicles will be if they are designed like this. After all, when the driver has a duty to intervene, the vehicle can no longer be summoned when needed, and it can no longer be used as a safe ride home when drunk or tired (Howard, 2013). However, as long as the vehicles still reduce accidents overall, they would be a better option than conventional vehicles, whether or not the driver has a duty to intervene (Hevelke & Nida-Rümelin, 2015). The accident rate could even drop further when the driver does have a duty to intervene, because the driver can then intervene when they, for example, see something the car does not. It would also mean that there is more of a transitioning phase when introducing automated vehicles, instead of them suddenly being fully automatic. | |||

On the other hand, asking the driver to intervene in a fully automated vehicle is questionable. It would assume that the driver can intervene at all times, and this is not always the case due to human error in reaction time or danger anticipation (Hevelke & Nida-Rümelin, 2015). It would be difficult to recognize whether or not the automated vehicle will fail to respond correctly, and thus unclear when the driver needs to intervene. In this case it would be unrealistic to expect the driver to predict a dangerous situation. When implementing this reasoning, another problem may arise: the driver might intervene when they should not have, resulting in an accident (Douma & Palodichuk, 2012). Next to that, as argued by Hevelke & Nida-Rümelin (2015), it seems impossible to ask a driver to pay attention all the time in order to be able to intervene, while an actual accident is quite rare. All in all, it would be unreasonable to put responsibility on a driver that did not – or could not – intervene. | |||

'''Shared liability''' | |||

As previously discussed, the responsibility for an accident can be placed on the individual driving the autonomous vehicle. For a number of reasons this was not ideal. An alternative would be to create a shared liability. People that drive cars every day (especially when not necessary) take the risk of possibly causing an accident. They still make the choice to drive the car (Husak, 2004). You can extrapolate this thinking to the use of automated vehicles. If people choose to drive an automated vehicle, they in turn participate in the risk of an accident happening due to the autonomous vehicle. The responsibility for an accident is therefore shared with everyone else in the country also using an automated vehicle. In that sense the driver did not do anything wrong and did not intervene too late; they simply shoulder the burden with everyone else. A system that could work with this line of thinking is the introduction of a tax or mandatory insurance (Hevelke & Nida-Rümelin, 2015). | |||

So, it seems there are a couple of options. The manufacturer can be fully responsible; however, this could bring autonomous vehicle manufacturing to a halt. On the other hand, it is desirable that the manufacturer does have some sort of liability, so they keep investing to improve the vehicle. At the same time, giving the driver full responsibility only seems workable in the beginning phase of autonomous vehicles, when they are still in development and drivers really do have a duty to intervene. When the vehicles are more sophisticated and able to drive fully autonomously, the responsibility can be shared with all people through a tax or insurance. | |||

== Safety == | == Safety == | ||

One of the main factors deciding whether self-driving cars will be accepted is the safety of them. Because who would leave their life in the hands of another entity, knowing it is not completely safe. Though almost everyone gets into buses and planes without doubt or fear. Would we be able to do the same with self-driving cars? Cars have become more and more autonomous over the last decades. Furthermore, self-driving cars will operate in unstructured environments, this adds a lot of unexpected situations | One of the main factors deciding whether self-driving cars will be accepted is their safety. After all, who would leave their life in the hands of another entity, knowing it is not completely safe? Yet almost everyone gets into buses and planes without doubt or fear. Would we be able to do the same with self-driving cars? Cars have become more and more autonomous over the last decades. Furthermore, self-driving cars will operate in unstructured environments, which adds a lot of unexpected situations (Wagner et al., 2015). | ||

'''Traffic behavior''' | |||

The car's safety will be determined by the way it is programmed to act in traffic. Will it stop for every pedestrian? If it does, pedestrians will know and might cross roads wherever they want. Furthermore, will it adopt the driving style of humans? And how does the driving style of automated vehicles influence trust and acceptance? | |||

According to the research of Elbanhawi, Simic and Jazar (2015), two factors are relevant for driving comfort: naturalness and apparent safety. The relationship between these two factors can be seen as operating between so-called safety margins (Summala, 2007). | |||

In one study, two different designs were presented to a group of participants. One was programmed to simulate a human driver, whilst the other one was communicating with its surroundings in a way that it could drive without stopping or slowing down. The research showed no significant difference in trust between the two automated vehicles. However, it did show that the longer the research continued, the more trust grew (Oliveira et al., 2019). It can therefore be said that the driving behavior itself does not necessarily influence trust, but that the overall safety of the driving behavior determines it. | |||

A driving style similar to that of humans may, however, still be beneficial to acceptance. For example, the car should be able to mimic a human driving the car (Elbanhawi, Simic & Jazar, 2015). This may reduce the hesitation towards self-driving cars and lead to more people driving one (Hartwich, Beggiato & Krems, 2018). However, research conducted by Liu, Wang & Vincent (2020) concluded that people want self-driving vehicles to be at least four to five times as safe as human-driven vehicles. So, although people would like them to drive human-like, the risks shouldn’t be human-like. This could be explained by the fact that legal problems would be more complicated when an accident occurs, and safety is a major advantage of self-driving cars. If people don’t have that advantage, they may rather enjoy the pleasures of driving themselves. | |||

''Errors'' | '''Errors''' | ||

Despite what we think, humans are quite capable of avoiding car crashes. It is inevitable that a computer | Despite what we might think, humans are quite capable of avoiding car crashes. It is inevitable that a computer can crash; think, for example, about how often your laptop freezes. A response that is delayed by even a millisecond can have disastrous consequences. Software for self-driving vehicles must therefore be made fundamentally differently. This is one of the major challenges currently holding back the development of fully automated cars. By contrast, automated air vehicles are already in use. However, software on automated aircraft is much less complex since they have to deal with fewer obstacles and almost no other vehicles (Shladover, 2016). | ||

'''Cybersecurity''' | |||

The software driving fully automated vehicles will have more than a hundred million lines of code, so it is impossible to predict the security problems. Windows 10 is made of fifty million lines of code and there have been lots of bugs. Doubling the amount of code will result in an even higher probability of unknown vulnerabilities (Parkinson et al., 2017). This complicated code is due to the fact that all self-driving cars have to be interconnected to make use of the most beneficial features of self-driving cars. Self-driving cars will be much better able to react to each other and plan movements ahead if they receive data from other cars through a network. Straub et al. (2017) presented a plan to protect against attacks. | |||

Self-driving cars hold the potential of eliminating all accidents, or at least those caused by inattentive drivers | To let cars react to each other appropriately and most efficiently, CACC (Cooperative Adaptive Cruise Control) must be used (Amoozadehi et al., 2015). This technology lets cars send information to other cars so that they can adapt to movements and speed changes of other cars. As already mentioned above, this technology comes with security risks. There exist multiple kinds of attacks: application layer attacks, network layer attacks, system level attacks and privacy leakage attacks. Application layer attacks can influence applications such as CACC beaconing. This could degrade the efficiency of cars reacting to each other, or messages could be falsified, which could result in rear-end collisions. Network layer attacks could make using the network impossible for cars, so that CACC doesn’t work at all anymore. DDoS attacks are an example. System level attacks, on the other hand, don’t use CACC or vehicle-to-vehicle communication. These could be carried out when a person installs malicious software. Privacy leakage attacks are of course well known and topical. This is theft of data which should only be available to the user and maybe the manufacturer (Amoozadehi et al., 2015). | ||

'''Versus humans''' | |||

Self-driving cars hold the potential of eliminating all accidents, or at least those caused by inattentive drivers (Wagner et al., 2015). Research done by Google suggests that the Google self-driving cars are safer than conventional human-driven vehicles. However, there is insufficient information to draw a firm conclusion on this. Still, the results lead us to believe that highly autonomous vehicles will be safer than humans in certain conditions. This does not mean that there will be no car crashes in the future, since these cars will keep on being involved in crashes with human drivers (Teoh et al., 2017). | |||

'''The city''' | '''The city''' | ||

The city is probably one of the most complicated locations for a self-driving car to operate in. It is filled with vulnerable road users, such as pedestrians and cyclists, who are relatively hard to track. Therefore, freeways are likely to be the first spaces in which automated cars will be able to operate. This is a much more structured environment with simple rules and fewer unexpected situations. However, this will not solve the issue of traffic jams at popular destinations. Some might say the ambition is to allow cars, bikes and pedestrians to share road space much more safely, with the effect that more people will choose not to drive. However, an interesting question regarding this is raised by Duranton (2016): "If a driverless car or bus will never hit a jaywalker, what will stop pedestrians and cyclists from simply using the street as they please?" (Duranton, 2016). | |||

Image tracking information could be used to predict the movements of a pedestrian or a cyclist for example. This way, a car doesn’t have to stop for every pedestrian on the sidewalk (Sarcinelli et al., 2019). But still, it doesn’t fix the above-mentioned problem. | |||

Millard-Ball (2016) suggests pedestrian supremacy in cities. He agrees that autonomous vehicles will drive cautiously and therefore slowly in cities. That’s why people will walk more often in cities, because it will become the faster alternative. Travelling between cities will be done by autonomous vehicles, but people will exit the vehicle on a peripheral part of town, before walking to the center. This is not necessarily negative, just a change of culture. Google acknowledges this problem and states that when Google cars cannot operate in existing cities, perhaps new cities need to be created. And the truth is, it has happened in the past. The first suburb of America was developed by rail entrepreneurs who realized that developing suburbs was much more profitable than operating railways (Cox, 2016). | |||

We might need to look at alternative technologies that we need in urban transport. Rather than developing individualist self-driving cars, let’s look at the ‘technology of the network’. How can we connect more people without consuming the space we live in (Duranton, 2016)? | |||

'''Trust''' | '''Trust''' | ||

Questions of whether | For decades, we have trusted the safe operation of automated mechanisms around and even inside us. However, in the last few years the autonomy of these mechanisms has drastically increased. As mentioned before, this brings along quite a few safety risks. Questions of whether to trust a new technology are often answered by testing (Wagner et al., 2015). | ||

There has been a survey about trust in fully automated vehicles. Trust was defined as “the attitude that an agent will help achieve an individual’s goal in a situation characterized by uncertainty and vulnerability” (Lee & See, 2004). Within this survey sixty percent of the respondents reported having difficulty trusting automated vehicles. Trust in this context can be seen as the driver’s belief that the computer drives at least as well as a human. | |||

The trust needed to fully implement these technologies is not yet where it is supposed to be. We know that trust can build up over time and this is also the case with trusting self-driving cars. The hesitation is greatest amongst the elderly, who are also the generation that stands to gain a lot of benefits. The good news from this research is that half of the older adults reported that they are comfortable with the concept of tools that can help the driver. The number of tools can grow, whilst the driver/passenger can get used to the idea of a completely self-driving car (Abraham et al., 2016). | |||

== Privacy == | == Privacy == | ||

Self-driving cars rely on an arrangement of new technologies in order to traverse traffic. Some of these technologies have to take data from their environment and/or the people in the car, which can have a big effect on the privacy of both the users of the car and the people around the car. Since fully autonomous cars are not yet on the market, and have not even been built yet, it is unclear how significant the privacy issues associated with self-driving cars might be. At minimum, the use of data that tracks locations seems like a necessary implication for self-driving cars, and thus necessary for a self-driving car to function correctly (Boeglin, 2015). This kind of location tracking is already prevalent in mobile phones, and the privacy issues that accompany it are very well known (Minch, 2004). In fact, car GPS that is currently in use already suffers from this problem. The car can save specific locations, has to plan routes based on current location, and has to access current traffic data. If anyone were to access this information, they would essentially access a record of the movements of a person, and also of activities associated with the destinations. If one knows that the user of the self-driving car visited a psychiatrist, or an abortion clinic, then one can also make an educated guess on the things the user has been going through in their lives. | |||

Besides these personal concerns that come from location tracking, there are also commercial concerns. The company that tracks location data might use the location data of the car to infer personal information of the users, and use this personal information for marketing purposes. We already know that this is possible, since this often happens with tracking mobile phone locations. If a mobile phone user visits a store that sells some product, then Google might use this data to send personalized advertisements to the user. The same could happen with self-driving cars. | |||

According to a paper by Boeglin (2016), whether a vehicle is likely to impose on its passengers' privacy can largely be reduced to whether or not that vehicle is communicative (Boeglin, 2016). A communicative vehicle relays vehicle information to third parties or receives information from external sources. A vehicle that is more communicative will be likely to collect information. Communicative vehicles could take a number of forms, therefore it is hard to gauge how severe the associated privacy risks will be. One kind of communicative self-driving car is a car that exchanges data between itself and other self-driving cars. Both cars can use this data for risk mitigation or crash avoidance. Wireless networks are particularly vulnerable, according to Boeglin (2016). When self-driving cars become more prevalent, they might also be able to communicate with roads or road infrastructure (traffic lights or road sensors) to exchange data that will make both parties more effective. As a result, the traffic authority (e.g. the municipality) will also have access to the records of each self-driving car. Whether people will accept this remains to be seen, and not a lot of research has been done on this subject. One such paper that does explore the general public's opinion on privacy in self-driving cars finds that a majority of people would want to opt out of identifiable data collection, and secondary use collections such as recognition, identification, and tracking of individuals were associated with low likelihood ratings and high discomfort (Bloom et al., 2017). | |||

Self-driving cars that are currently in development are not all communicative types of cars, partly because the infrastructure to support such cars does not yet exist. Privacy risks for non-communicative cars are less prevalent, but not nonexistent. Location tracking will always be an issue, and uncommunicative self-driving cars will still be heavily reliant on sensory data in order to get to the desired destination. This sensory data might still be hacked, but hacking is almost always a negative possibility that infringes on the right of privacy. Self-driving cars are hardly a special case in that regard. | |||

It is largely unclear how users will react to the potential risks to their privacy, since this is a newly emerging technology, and issues such as safety, decision-making and autonomy are usually more pressing issues. We expect that people will not rate privacy as a large concern, and instead will be more concerned with the aforementioned issues. This is especially the case when talking about uncommunicative self-driving cars, which seem to be more prevalent than communicative cars in today's world. We also expect that people largely think of uncommunicative self-driving cars instead of communicative self-driving cars, since communicative cars are a step further into the future than uncommunicative self-driving cars. This probably lowers the perceived level of risk associated with privacy issues among users even more. | |||

== Perspective of private end-user == | == Perspective of private end-user == | ||

The potential revolutionary change that self-driving cars could stir up would affect many areas of life. Apart from improving safety, efficiency and general mobility, it would change current infrastructure and the relationship between humans and machines (Silberg et al., 2012). This section will focus primarily on the user’s attitude towards self-driving cars, specifically perceived benefits and concerns. | |||

According to the National Highway Traffic Safety Administration, cars are currently in ‘level 3 automation’, in which new cars have automated features, but still require an alert driver to intervene when necessary. ‘Level 4 automation’ would mean that a driver is no longer permitted to intervene (Cox, 2016). Before this level can be reached, the general public would need to feel comfortable with letting go of the steering wheel. | |||

'''General attitude''' | |||

Research by König & Neumayr (2017) showed that older people are generally more worried about self-driving cars. They also showed that females have more concerns than males, and that rural citizens are less interested in self-driving cars than urban citizens (König & Neumayr, 2017). Surprisingly, people who used their car more often seemed less open to the idea of a self-driving car, possibly because the change to self-driving cars would be too radical. Furthermore, the most common desire of people is to have the ability to manually take control of the car when desired. It allows them to still enjoy the pleasures of manually driving and they don’t lose the sense of freedom (Rupp & King, 2010). | |||

Another interesting finding by König & Neumayr (2017) was that people who had no car as well as people who already had a car with more advanced automated features showed a more positive attitude towards self-driving cars. This is possibly because people without a car see it as an opportunity to be able to take part in traffic, and people with advanced cars are more familiar with the technology (König & Neumayr, 2017). Lee et al. (2017) also found that people without a driver’s licence were more likely to use a self-driving car (Lee et al., 2017). | |||

'''Benefits and concerns''' | |||

It is common knowledge that many cars crash due to human error. The World Health Organization (2016) reported that road traffic injuries are the leading cause of death among people between the ages of 15 and 29 (World Health Organization, 2016). Raue et al. (2019) argue that removing human error from driving is one of the biggest potential benefits of self-driving cars. They also pose that driverless cars could potentially decrease congestion, increase mobility for non-drivers and create more efficient use of commuting time. Next to that, there are also environmental benefits; when vehicles no longer need to be built with a tank-like safety, they are lighter and consume less fuel (Bamonte, 2013; Parida et al., 2018; Raue et al., 2019). | |||

König & Neumayr (2017) used a survey to judge people’s attitude towards potential benefits and concerns. They found that people mostly value the fact that a self-driving car could solve transport issues older and disabled people face. This is in accordance with Cox (2016) and Parida et al. (2018), who said the driverless car has the potential to expand opportunity and that it can improve the lives of disabled people and others who are unable to drive (Cox, 2016; Parida et al., 2018). From the survey König & Neumayr (2017) also found that people value the fact that they can engage in other things than driving. Participants did not feel that self-driving cars would give them social recognition, and they did not feel like it would lead to shorter travel times (König & Neumayr, 2017). | |||

On the other hand, there are also some concerns indicated by König & Neumayr (2017). Their participants were mostly concerned with legal issues, followed by concerns about hackers. Lee et al. (2017) also found that especially older adults are concerned with self-driving cars being more expensive. Surprisingly, they found that across all sub-groups people did not trust the functioning of the technology (König & Neumayr, 2017; Raue et al., 2019). | |||

'''Sharing cars''' | |||

While many people look positively towards the implementation of self-driving cars, fewer people are willing to buy one. Many people don’t want to invest more money in self-driving cars than they do in conventional cars right now (Schoettle & Sivak, 2014). Therefore, a car sharing scheme (e.g. a whole fleet provided by a mobility service company, or a ride sharing scheme) is an option to make self-driving cars more popular. This way people would not have to spend a large sum of money, and they could gradually learn to trust the technology by using the shared self-driving cars first (König & Neumayr, 2017). According to Cox (2016), this is not necessarily true. Since corporate mobility companies will then provide the cars, they have to cover the costs of, for example, vehicle operation, which will increase the fees for the user (Cox, 2016). | |||

So, how would it work when automated vehicles are being used as shared vehicles? Cox (2016) assumes that companies will be providing cars the same way they do now, renting them in short-term or long-term. Especially in large metropolitan areas automated vehicles could substantially shorten a trip, or solve current transportation problems (Cox, 2016; Parida et al., 2018). While cars are being shared, private ownership would still be possible, and people would be able to rent out their own personal cars short-term. | |||

One option of sharing cars is to let people share a single ride. This could decrease the number of cars in an urban area and address issues like congestion, pollution or the problem of finding a parking spot (Parida et al., 2018). However, there are certain issues with ridesharing. Because not every person starts and stops in the same place, trips could actually increase in time, making ridesharing less attractive. Lowering the price of ridesharing might not even be enough to attract travellers. Ridesharing does raise another important question: do people want to share a car with strangers? As stated by Cox (2016), personal security concerns will probably only increase and therefore people will not be willing to share a ride with someone they don’t know. | |||

An important notion is that vehicles are parked on average more than ninety percent of the time (Burgess, 2012). A driverless car fleet provided by a mobility company could possibly reduce the number of cars in a metropolitan city since the urban area is so densely packed. However, these cars would not be attractive to users living in a more rural area, or people that need to travel outside the urban area (Cox, 2016). | |||

In the present day, many people use transit (e.g. train, metro, bus, etc.) in metropolitan areas, though this is not the fastest possible commute. Owen and Levinson (2014) found that many jobs can be reached by car in about half the time it takes by transit. This is mostly because of the “last mile” problem, the fact that many destinations are beyond walking distance of a transit stop (Owen & Levinson, 2014). Driverless cars can be used to overcome this “last mile” problem, by stationing more of them at transit stops. However, a fleet of driverless cars can have two consequences for transit. On the one hand it can cause transit users to refrain from using transit because of the improved travel times and door-to-door access. On the other hand, many transit riders have a low income and will probably not be able to pay for a driverless car alternative (Cox, 2016). However, if the charges for driverless cars are too low this might reduce the attractiveness of transit even more, causing people to use the driverless vehicle for the entire trip (Cox, 2016). | |||

'''Acceptance'''

Many studies have examined technology acceptance across various domains, and they propose many different determinants of the acceptance of self-driving cars. Lee et al. (2017) found that across all ages, perceived usefulness, affordability, social support, lifestyle fit and conceptual compatibility are significant determinants (Lee et al., 2017; Raue et al., 2019). Raue et al. (2019) found that people’s risk and benefit perceptions as well as trust in the technology relate to the acceptance of self-driving cars. According to Rogers (1995), to increase the probability of widespread adoption of an innovation, the following factors need to be taken into account: the relative advantage, the compatibility (steering wheel with a disengage button), the trialability (test-drives), the observability (car-sharing fleets), and the complexity (introduction to automation) (König & Neumayr, 2017; Rogers, 1995).

As found by Lee et al. (2017), older adults may not yet be ready to let go of the steering wheel. They found that older generations have a lower overall interest in self-driving cars and different behavioural intentions to use them. However, people with more experience with technology seemed to be more accepting (Lee et al., 2017). Other supporting studies did find that older adults are more likely to accept new in-vehicle technologies (Son, Park, & Park, 2015; Yannis, Antoniou, Vardaki, & Kanellaidis, 2010). Lee et al. (2017) also found that across all ages, people would be more likely to use a self-driving car if they were no longer able to drive themselves due to aging or illness.

As for the general public, Raue et al. (2019) looked into common psychological theories to assess people’s willingness to accept the self-driving car. They found that people who are familiar with actions or activities often perceive them to be less risky, and that people’s level of knowledge about a certain technology can affect how they understand its risks and benefits (Hengstler, Enkel, & Duelli, 2016; Raue et al., 2019). In that sense, affect is used as a decision heuristic (i.e. a mental shortcut) in which people rely on the positive or negative feelings associated with a risk (Visschers & Siegrist, 2018). Because negative emotions weigh more heavily than positive emotions, and people are more likely to recall a negative event, negative affect may lead people to judge self-driving cars to be of higher risk and lower benefit. This negative affect can have many causes, for example the loss of control from removing the steering wheel, or knowledge of accidents involving self-driving cars (Raue et al., 2019). Parida et al. (2018) stress the importance of public attitude and user acceptance of self-driving cars, as global market acceptance relies heavily on them.

= Method =

'''Research design'''

For this questionnaire, a non-probability convenience sampling method was applied that leveraged the group’s broad networks. Even though convenience sampling means that the sample is not representative, it was a feasible way to reach the relevant audience and to collect data that provide first evidence. As the questionnaire was conducted with the general public, there was no strict geographical scope, in order to reach as many different people as possible. This yields first indications of drivers’ attitudes towards self-driving vehicles that are not tied to particular regions. The survey was conducted in the Netherlands.

'''Data collection'''

Data were collected over a one-week time frame in March 2021 with an online questionnaire built in Microsoft Forms (see appendix), a web-based survey tool. This method was chosen for several reasons. The information assessed was widely available among the public. Due to Covid-19, an online approach made it easier to reach people while maintaining physical distancing. By not requiring an interviewer to be present, it also reduced potential bias as well as cost and time. Microsoft Forms was used because it offers a safe environment and meets EU privacy standards.

Respondents were reached by sending out emails and private messages on social media (e.g. WhatsApp). These included both personalized invitation letters, explicitly stating self-driving vehicles as the topic of the research, and a direct link to the online questionnaire. A consent form was included on the cover page of the questionnaire, where respondents were assured of anonymity and confidentiality. Given the study’s exploratory nature, reaching a large number of respondents was prioritized. The target was a minimum of 100 respondents. Completed surveys were eventually received from 115 respondents.

'''Measures'''

In the questionnaire, several relevant factors related to self-driving vehicles were examined. The main topics addressed in the questionnaire were about general knowledge and taken from our hypothesis, namely:

- Familiarity with self-driving vehicles

- Expected benefits of self-driving vehicles

- Concerns about different implementations of self-driving vehicles

- Favored ethical settings in self-driving vehicles

- Acceptance of legal responsibility in unavoidable crashes with self-driving vehicles

''Personal car use and demographics''

In the first part of the questionnaire, participants were asked whether they have a driver's license. Additionally, the respondents were asked how often they drive a car, with the answer options ‘(almost) every day’, ‘weekly’, ‘monthly’, ‘annually’ and ‘never’. Furthermore, demographic questions regarding age and education were asked.

''Familiarity with self-driving vehicles''

Participants’ existing knowledge about self-driving vehicles was assessed. Respondents were presented with a set of rating questions using an even-numbered Likert scale of four points, ranging from ‘unfamiliar’ (1) to ‘familiar’ (4).

''Expected benefits of self-driving vehicles''

Participants were further asked to rate their agreement with statements reflecting presumed benefits of the use of self-driving vehicles. To allow for a ‘neutral’ opinion, the statements were combined with a 5-point scale ranging from ‘very unlikely’ (1) to ‘very likely’ (5). A 5-point Likert scale was used because in forced-choice designs, such as a 4-point Likert scale, choices are contaminated by random guesses.

''Concerns about different implementations of self-driving vehicles''

After the expected benefits of self-driving vehicles, respondents were asked to rate their concerns about statements regarding self-driving vehicles on a 4-point Likert scale ranging from ‘not concerned’ (1) to ‘very concerned’ (4).

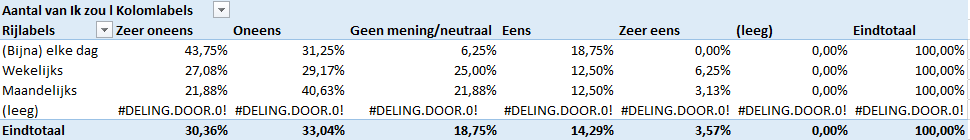

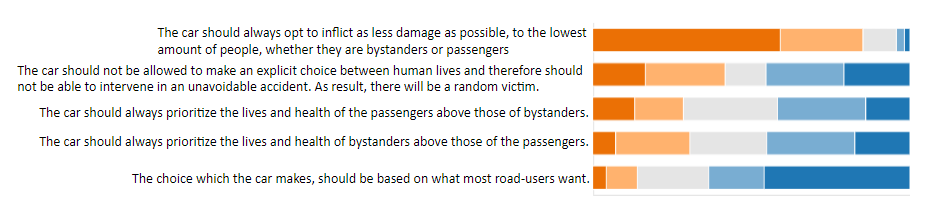

''Favored ethical settings in self-driving vehicles''

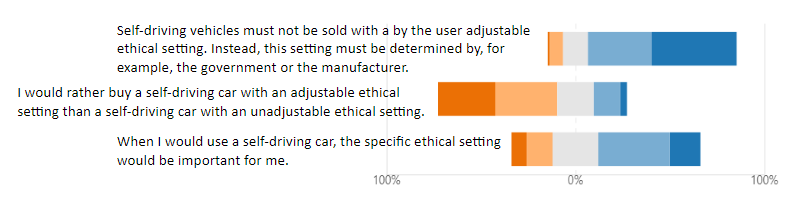

The ethical setting that participants would prefer to see in self-driving vehicles on the road was assessed by ranking 5 options from first choice to last choice. Furthermore, statements regarding ethical settings used in self-driving vehicles were assessed with a 5-point Likert scale, to allow for a neutral opinion, ranging from ‘strongly disagree’ (1) to ‘strongly agree’ (5).

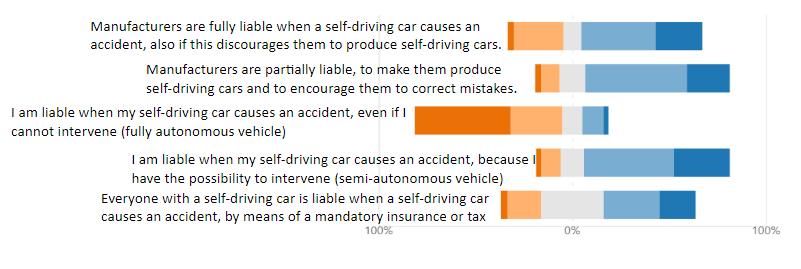

''Acceptance of legal responsibility in unavoidable crashes with self-driving vehicles''

Lastly, participants were asked to rate their agreement with statements about the legal responsibility in unavoidable crashes with self-driving vehicles. Again, a 5-point Likert scale was used to allow for a neutral opinion, ranging from ‘very unlikely’ (1) to ‘very likely’ (5).

The full text of the questionnaire is included in the appendix.

= Results =

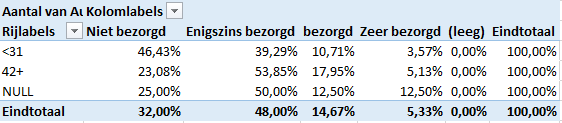

Completed surveys were received from 115 respondents. First, demographic questions were asked. Question 2, about the respondent's age, received 104 responses. Since this was an open question, appropriate intervals were constructed. The youngest person who filled in the survey is 17 years old and the oldest is 80 years old. The respondents fall into two groups: one between 17 and 30 years old and one between 42 and 80 years old. There were no respondents aged 31 to 41. Since this points to an obvious dichotomy, the following two intervals are used:

- 51.3% <31

- 39.1% >41

- 9.6% no answer
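
The binning described above can be sketched as follows. This is a minimal illustration, not the analysis script used for the survey: the function names are hypothetical, and the individual ages are placeholders. Only the 11 missing answers follow directly from the text (115 respondents, 104 answers); the group sizes of 59 and 45 are an assumption, chosen because they reproduce the reported percentages.

```python
from collections import Counter


def age_group(age):
    """Map an open-ended age answer to the intervals used in the text."""
    if age is None:
        return "no answer"
    if age < 31:
        return "<31"
    if age > 41:
        return ">41"
    return "31-41"  # empty in this survey, kept for completeness


def group_shares(ages):
    """Return the percentage of all respondents per age group, rounded to 0.1."""
    counts = Counter(age_group(a) for a in ages)
    total = len(ages)
    return {group: round(100 * n / total, 1) for group, n in counts.items()}


# Placeholder data (assumption): 59 respondents under 31, 45 over 41, 11 missing.
ages = [25] * 59 + [55] * 45 + [None] * 11
shares = group_shares(ages)
print(shares)
```

Note that the percentages are taken over all 115 respondents, which is why the missing answers appear as their own category.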

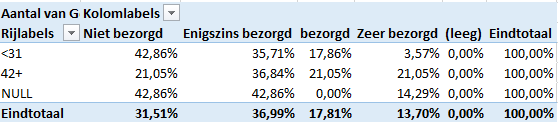

Question 3, about the respondent's education, received 115 responses. 2.6% of the respondents have no education or incomplete primary education, 6.1% have a high school diploma, 7.8% are currently studying MBO, 32.2% are currently studying HBO or WO and do not have a diploma yet, 29.6% have an HBO or WO Bachelor diploma, 20.0% have an HBO or WO Master diploma and 1.7% have a PhD. Question 4, about having a driver's license, received 114 responses. 89.5% of the respondents have a driver's license and 10.5% do not. Question 5, about the regularity of car use, received 114 responses. Of the respondents, 28.1% use their car (nearly) every day, 43.0% weekly, 24.5% monthly, 4.4% annually and 0.0% never.

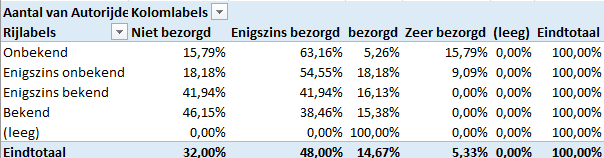

Question 6, about how familiar respondents are with self-driving vehicles, received 114 responses. 22.8% of the respondents are unfamiliar with self-driving vehicles, 16.7% are somewhat unfamiliar, 42.1% are somewhat familiar and 18.4% are familiar.

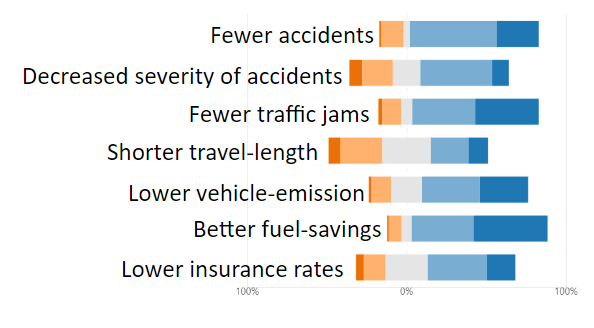

Question 7, about the benefits of using a self-driving car, received 112 responses, in which 1 respondent did not answer subquestions 1, 2 and 7, 1 respondent did not answer subquestion 2, and 1 respondent did not answer subquestions 3 up to 7. For all the subquestions of question 7, the possible answers are very unlikely, somewhat unlikely, no opinion/neutral, somewhat likely and very likely. The percentages per answer, in that order, are listed below and shown in figure 1 in the results section of the appendix:

- Fewer accidents: 0.9%, 14.0%, 4.4%, 54.4%, 26.3%

- Decreased severity of accidents: 8.0%, 19.5%, 16.8%, 45.1%, 10.6%

- Fewer traffic jams: 2.6%, 11.4%, 7.0%, 39.5%, 39.5%

- Shorter travel-length: 7.9%, 26.3%, 29.8%, 23.7%, 12.3%

- Lower vehicle emission: 1.8%, 12.3%, 19.3%, 36.0%, 30.7%

- Better fuel-savings: 0.9%, 7.9%, 6.1%, 38.6%, 46.5%

- Lower insurance rates: 5.3%, 13.3%, 26.5%, 37.2%, 17.7%
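
Because some respondents skipped individual subquestions, each row of percentages is presumably computed over the valid answers to that subquestion only. The sketch below illustrates that calculation under this assumption. The function name and the answer counts are hypothetical: the counts are not the survey data, but were chosen so that they reproduce the "Fewer accidents" row.

```python
LABELS = [
    "very unlikely",
    "somewhat unlikely",
    "no opinion/neutral",
    "somewhat likely",
    "very likely",
]


def likert_percentages(answers, n_points=5):
    """Percentage per scale point, ignoring skipped (None) answers."""
    valid = [a for a in answers if a is not None]
    return [
        round(100 * valid.count(point) / len(valid), 1)
        for point in range(1, n_points + 1)
    ]


# Hypothetical answers (assumption): 114 valid answers plus one skipped answer.
answers = [1] * 1 + [2] * 16 + [3] * 5 + [4] * 62 + [5] * 30 + [None]
percentages = likert_percentages(answers)

for label, pct in zip(LABELS, percentages):
    print(f"{label}: {pct}%")
```

Excluding skipped answers from the denominator keeps each row summing to roughly 100%, up to rounding.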

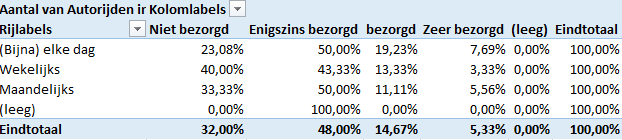

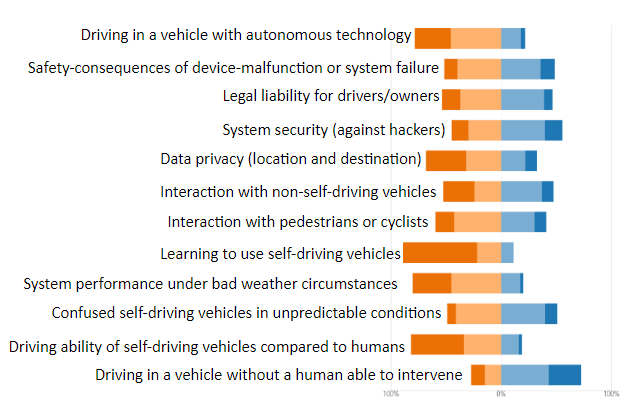

In question 8, about the concerns related to self-driving vehicles, the first 39 responses were omitted, leaving 76 valid responses in total. Among these, 1 respondent did not answer subquestion 3, 1 respondent subquestion 4, 1 respondent subquestion 6, 3 respondents subquestion 12, 1 respondent subquestions 2 up to 6, 8 up to 10 and 12, 1 respondent subquestions 1 up to 12, and 1 respondent subquestions 2 up to 12. For all the subquestions of question 8, the possible answers are not concerned, slightly concerned, concerned and very concerned. The percentages per answer, in that order, are listed below and shown in figure 2 in the appendix:

- Driving in a vehicle with autonomous technology: 32.0%, 48.0%, 14.7%, 5.3%

- Safety-consequences of device-malfunction or system failure: 9.6%, 43.8%, 41.5%, 15.1%

- Legal liability for drivers/owners: 13.9%, 43.1%, 36.1%, 6.9%

- System security (against hackers): 12.5%, 33.3%, 40.3%, 13.9%

- Data privacy (location and destination): 31.5%, 36.9%, 17.8%, 13.7%

- Interaction with non-self-driving vehicles: 26.4%, 22.2%, 38.9%, 12.5%

- Interaction with pedestrians or cyclists: 13.5%, 39.2%, 33.8%, 13.5%

- Learning to use self-driving cars: 64.4%, 24.6%, 9.6%, 1.4%

- System performance under bad weather conditions: 36.1%, 50.0%, 9.7%, 4.2%

- Confused self-driving vehicles in unpredictable conditions: 8.2%, 43.9%, 35.6%, 12.3%

- Driving ability of self-driving vehicles compared to humans: 46.0%, 39.2%, 13.5%, 1.3%

- Driving in a vehicle without a human able to intervene: 12.9%, 15.7%, 44.3%, 27.1%