Mobile Robot Control 2020 Group 10: Difference between revisions

| (62 intermediate revisions by 5 users not shown) | |||

| Line 88: | Line 88: | ||

The control component makes sure PICO moves along the planned path. | The control component makes sure PICO moves along the planned path. | ||

*'''moveToPoint()''' <br> The move to point function will be used to move PICO to the set point generated by the path planner. To prevent that PICO will hit the wall, the world model will be used to determine the trajectory from the current position of PICO to the set point. | *'''moveToPoint()''' <br> The move to point function will be used to move PICO to the set point generated by the path planner. To prevent that PICO will hit the wall, the world model will be used to determine the trajectory from the current position of PICO to the set point. | ||

=====Monitoring===== | =====Monitoring===== | ||

| Line 159: | Line 159: | ||

The hospital challenge is based upon a real-life scenario where a PICO robot can assist with tasks in the hospital. The goal of the robot is to visit different cabinets to gather or deliver medicenes for example. This process should be executed as smooth, fast and safe as possible. The main challenges which arise are the planning and the path of the robot, which is unknown before the start. The robot should be able to determine its path’s on the run. The robot should also be able to robustly navigate through the hospital. Imperfect walls, static and dynamic clutter, such as garbage bins or people, and wheelspin are all present in practice, while these are not necessary present in a perfect simulated environment. | The hospital challenge is based upon a real-life scenario where a PICO robot can assist with tasks in the hospital. The goal of the robot is to visit different cabinets to gather or deliver medicenes for example. This process should be executed as smooth, fast and safe as possible. The main challenges which arise are the planning and the path of the robot, which is unknown before the start. The robot should be able to determine its path’s on the run. The robot should also be able to robustly navigate through the hospital. Imperfect walls, static and dynamic clutter, such as garbage bins or people, and wheelspin are all present in practice, while these are not necessary present in a perfect simulated environment. | ||

=== | ===Software-structure and interaction=== | ||

In order to | In order to successfully complete the given task, the structure of the code which runs the robot must be well designed, structured and intuitive. The code is divided into multiple components, which all serve a different task. | ||

'''World model''' is used to store the information of the map and the sensors in two maps, a global map and a live map. The information is read from and written to this world model. | |||

'''Planning''' is used in order to plan an optimal path through the map, which can change when clutter or closed doors are encountered. | |||

'''Control''' focuses on the actuation of the robot itself. | |||

'''Monitoring''' is a big component which takes all the perception data, and uses it to determine the position of the robot, the live map, and clutter detection based on a gridmap. | |||

'''Perception''' is the component which translates the raw sensor data to useful information. | |||

'''Statemachine''' is the brain component of the robot, and decides which steps need to be undertaken in order for the robot to complete its task. | |||

'''User interface''' is used in order to visualize the maps, the robot and its behavior, and can give tremendous insights during testing and execution of the tasks at hand. | |||

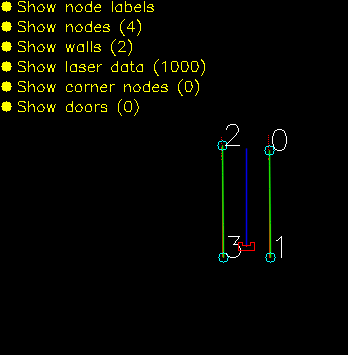

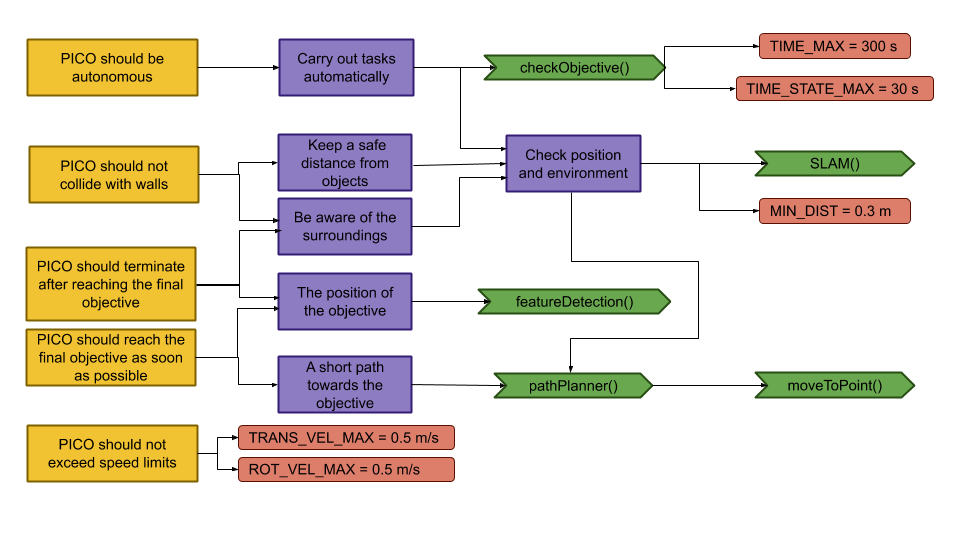

The program written has been designed in a way such that the semi-independent components all work together. Some of these even work in parallel to improve qualitative performance. In the following sections, these different components and their interactions will be dissected and explained. Below, a UML class diagram can be seen showing the different components and their most important member attributes and methods. | The program written has been designed in a way such that the semi-independent components all work together. Some of these even work in parallel to improve qualitative performance. In the following sections, these different components and their interactions will be dissected and explained. Below, a UML class diagram can be seen showing the different components and their most important member attributes and methods. | ||

Clicking the thumbnail at the right will show the full sized version of the UML Class Diagram. | |||

Clicking | |||

[[File:UML Diagram.png|thumb|right|UML Class diagram of the program]] | |||

The different components and functions run in an important specified order. The sequence of execution is shown in the sequence diagram below. | The different components and functions run in an important specified order. The sequence of execution is shown in the sequence diagram below. | ||

| Line 233: | Line 208: | ||

|- | |- | ||

| potentialField | | potentialField | ||

| A vector containing all the potential vectors | | A vector containing all the potential vectors, used in controlling the robot | ||

|- | |- | ||

| laserPoints | | laserPoints | ||

| Processed laserData. Vector containing all | | Processed laserData. Vector containing all the laserpoints and their local coordinates (w.r.t. the robot) | ||

|- | |- | ||

| laserData | | laserData | ||

| Line 242: | Line 217: | ||

|- | |- | ||

| odomDataPair | | odomDataPair | ||

| Contains two sets of | | Contains two sets of odometry data to predict the new position. | ||

|- | |- | ||

| target | | target | ||

| | | Current robot target | ||

|- | |- | ||

| prev_target | | prev_target | ||

| Line 263: | Line 238: | ||

|- | |- | ||

| destinations | | destinations | ||

| | | Ordered list of all the cabinets which have yet to be visited | ||

|- | |- | ||

| currentPath | | currentPath | ||

| | | Ordered list of points on the global map drawing the current path of the robot | ||

|- | |- | ||

| FailCount | | FailCount | ||

| Line 275: | Line 250: | ||

|- | |- | ||

| monteCarloPositions | | monteCarloPositions | ||

| Vector of possible robot positions. | | Vector of possible robot positions, used by Monte Carlo localization. | ||

|} | |} | ||

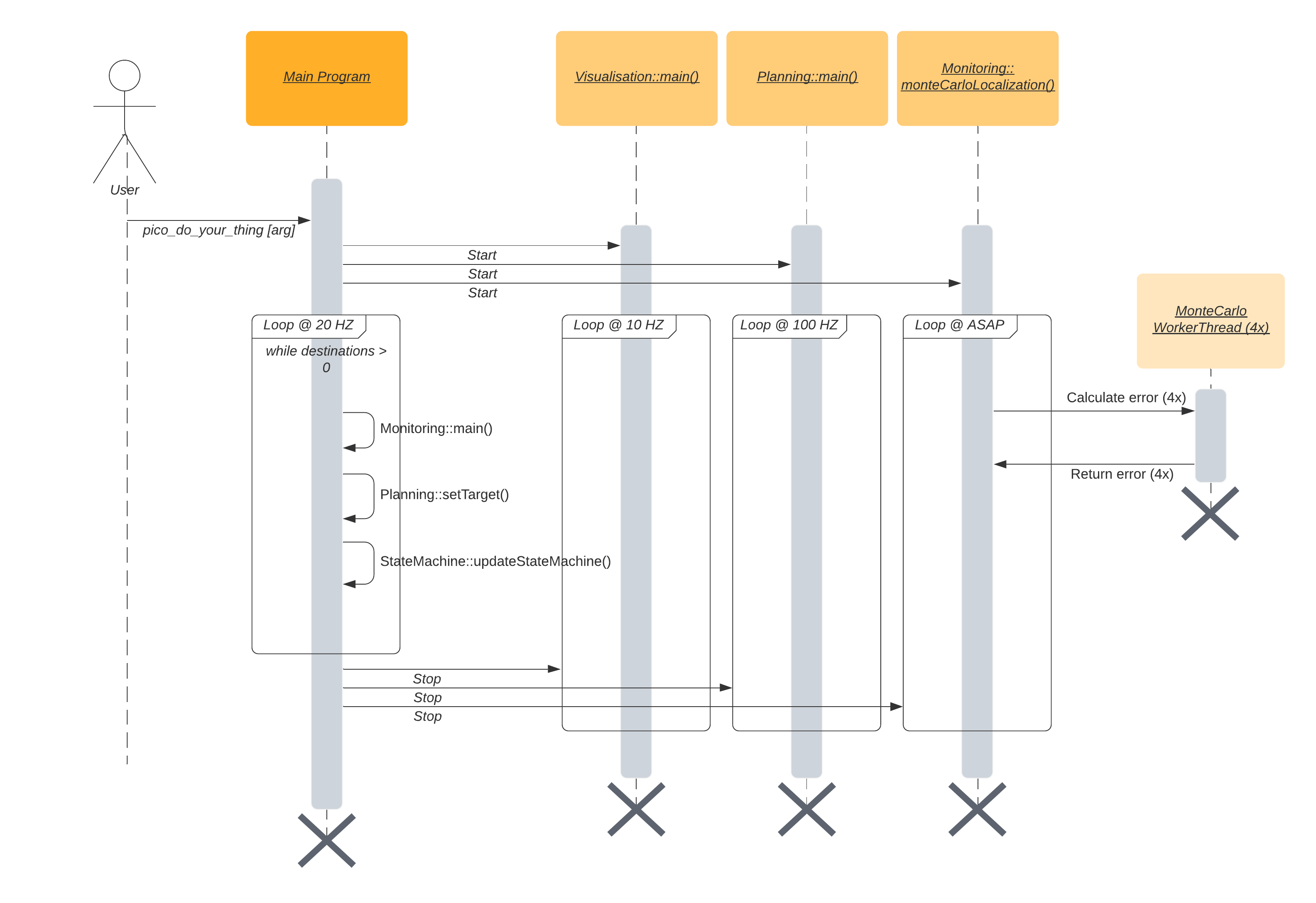

The data structures that are shown below are an addition of the UML diagram. The main data structure is the Map. This Map contains all the information from the provided JSON file and is used to create both maps. The basic building block of the map is the node. The more complex objects are made by pointing to the nodes. | The data structures that are shown below are an addition of the UML diagram. The main data structure is the Map. This Map contains all the information from the provided JSON file and is used to create both maps. The basic building block of the map is the node. The more complex objects are made by pointing to the nodes. | ||

[[File: | [[File:4SC020_2020_Group10_WorldModel_datastructs_v2.PNG]] | ||

''Node'': | ''Node'': | ||

| Line 288: | Line 263: | ||

''Waypoint'': | ''Waypoint'': | ||

A waypoint consits of an id, it coordinates on the global map | A waypoint consits of an id, it coordinates on the global map and the angle at which the robot is facing the front of the cabinet | ||

''Map'': | ''Map'': | ||

A map consits of all the nodes, wall that are made from those nodes | A map consits of all the nodes, wall that are made from those nodes and waypoints | ||

''Velocity'': | ''Velocity'': | ||

| Line 297: | Line 271: | ||

''Target'': | ''Target'': | ||

A target contains and id, its position in the global map, it angle compared to the front of the robot and force translate | A target contains and id, its position in the global map, it angle compared to the front of the robot and force translate | ||

''RobotPosition'': | ''RobotPosition'': | ||

| Line 317: | Line 291: | ||

=====Path Planning===== | =====Path Planning===== | ||

[[File:4SC020_2020_group10_PathPlanning.gif|frame|right|A* Path Planning. A closed door is detected, the path is redirected.]] | [[File:4SC020_2020_group10_PathPlanning.gif|frame|right|A* Path Planning. A closed door is detected, the path is redirected.]] | ||

For the path planning the A* algorithm is used, ([https://www.geeksforgeeks.org/a-search-algorithm/ A* algorithm]; [http://theory.stanford.edu/~amitp/GameProgramming/ Amit Patel, Standford University] ). The A* algorithm is an informed search algorithm, meaning that it calculates the path based on weighted graphs or gridmaps. A* determines which of its paths to extend based on the cost of the path and an estimate of the cost required to extend the path all the way to the goal. In mathematical terms, A* selects the path that minimizes: | For the path planning the A* algorithm is used, ([https://www.geeksforgeeks.org/a-search-algorithm/ A* algorithm]; [http://theory.stanford.edu/~amitp/GameProgramming/ Amit Patel, Standford University] ). | ||

The A* algorithm is an informed search algorithm, meaning that it calculates the path based on weighted graphs or gridmaps. A* determines which of its paths to extend based on the cost of the path and an estimate of the cost required to extend the path all the way to the goal. In mathematical terms, A* selects the path that minimizes: | |||

:<math>f(x)</math> = <math>g</math>(<math>x</math>) + <math>h</math>(<math>x</math>) | :<math>f(x)</math> = <math>g</math>(<math>x</math>) + <math>h</math>(<math>x</math>) | ||

| Line 382: | Line 357: | ||

The path planning already accounts for a large deal of the obstacle avoidance, but it is not perfect on its own. Furthermore, the path planning is limited to planning a path around static objects. Any dynamic object is not picked accounted for in the gridmap used for the path planning. To solve these issues it is opted to use an artificial potential field, APF in short. The philosophy of the artificial potential field approach can be described as follows: | The path planning already accounts for a large deal of the obstacle avoidance, but it is not perfect on its own. Furthermore, the path planning is limited to planning a path around static objects. Any dynamic object is not picked accounted for in the gridmap used for the path planning. To solve these issues it is opted to use an artificial potential field, APF in short. The philosophy of the artificial potential field approach can be described as follows: | ||

''The manipulator moves in a field of forces. The position to be reached is an attractive pole for the end effector and obstacles are repulsive surfaces for the manipulator parts.'' | ''The manipulator moves in a field of forces. The position to be reached is an attractive pole for the end effector and obstacles are repulsive surfaces for the manipulator parts.''<ref>[Ingemar J. Cox, Gordon T. Wilfong, Autonomous Robot Vehicles, Springer Science & Business Media, 1990, 1461389976, 9781461389972] Artificial Potential Field </ref> | ||

In this case, the position to be reached is the next path point. Since the APF is only used as a last resort for obstacle avoidance, we do not want the full effect of it at all times. To this end, the effect of the APF will only be exploited when Pico sees an obstacle within a certain range. At this particular range, the repulsive potential vector starts to increase from zero. | In this case, the position to be reached is the next path point. Since the APF is only used as a last resort for obstacle avoidance, we do not want the full effect of it at all times. To this end, the effect of the APF will only be exploited when Pico sees an obstacle within a certain range. At this particular range, the repulsive potential vector starts to increase from zero. | ||

| Line 403: | Line 380: | ||

The velocity which is send to the Pico base is determined by an based on a few conditions. In the "APPROACH_CABINET" state Pico is forced to translate, whilst in the "ORIENT_TO_CABINET" state Pico is forced to rotate. In the other states Pico will purely rotate if the angle <math>\theta</math> between the robot and the target is too large, purely translate in the longitudinal direction if <math>\theta</math> is small enough, and have a combined movement of rotating and translating in both directions if <math>\theta</math> is in between the two boundaries. If there is a repulsion force, the minimum angle is ignored for the combined movement. | The velocity which is send to the Pico base is determined by an based on a few conditions. In the "APPROACH_CABINET" state Pico is forced to translate, whilst in the "ORIENT_TO_CABINET" state Pico is forced to rotate. In the other states Pico will purely rotate if the angle <math>\theta</math> between the robot and the target is too large, purely translate in the longitudinal direction if <math>\theta</math> is small enough, and have a combined movement of rotating and translating in both directions if <math>\theta</math> is in between the two boundaries. If there is a repulsion force, the minimum angle is ignored for the combined movement. | ||

====Monitoring | ====Monitoring==== | ||

The monitoring component has the job of tracking and updating the robot's current position and it's surroundings. This class consists of two main parts: mapping of the robot's actual surroundings (incl. static clutter) and determining the exact position of the robot within the given global map using laser data. Mapping the surroundings is done using 'Occupancy Grid Mapping'<ref> [[Cyrill Stachniss, [http://ais.informatik.uni-freiburg.de/teaching/ws13/mapping/pdf/slam10-gridmaps.pdf "Robot Mapping - Grid Maps"], Uni Freiburg]] ''Autonomous Intelligent Systems'' </ref> and localization using laser data has been accomplished by implementing so called Monte Carlo localization. | The monitoring component has the job of tracking and updating the robot's current position and it's surroundings. This class consists of two main parts: mapping of the robot's actual surroundings (incl. static clutter) and determining the exact position of the robot within the given global map using laser data. Mapping the surroundings is done using 'Occupancy Grid Mapping'<ref> [[Cyrill Stachniss, [http://ais.informatik.uni-freiburg.de/teaching/ws13/mapping/pdf/slam10-gridmaps.pdf "Robot Mapping - Grid Maps"], Uni Freiburg]] ''Autonomous Intelligent Systems'' </ref> and localization using laser data has been accomplished by implementing so called Monte Carlo localization. | ||

=====Occupancy grid mapping (static clutter detection) | =====Occupancy grid mapping (static clutter detection)===== | ||

To be able to create correct and efficient paths through the hospital, the robot has to be in possession of a complete and actual map of it's surroundings. This is done using Occupancy Grid Mapping. In this technique, a gridmap is drawn over the (given) global map, with each grid-square containing a single value indicating whether or not that grid-square is occupied (traversable) or not. | To be able to create correct and efficient paths through the hospital, the robot has to be in possession of a complete and actual map of it's surroundings. This is done using Occupancy Grid Mapping. In this technique, a gridmap is drawn over the (given) global map, with each grid-square containing a single value indicating whether or not that grid-square is occupied (traversable) or not. | ||

| Line 437: | Line 414: | ||

[[File:GridMap-formula.png]] | [[File:GridMap-formula.png]] | ||

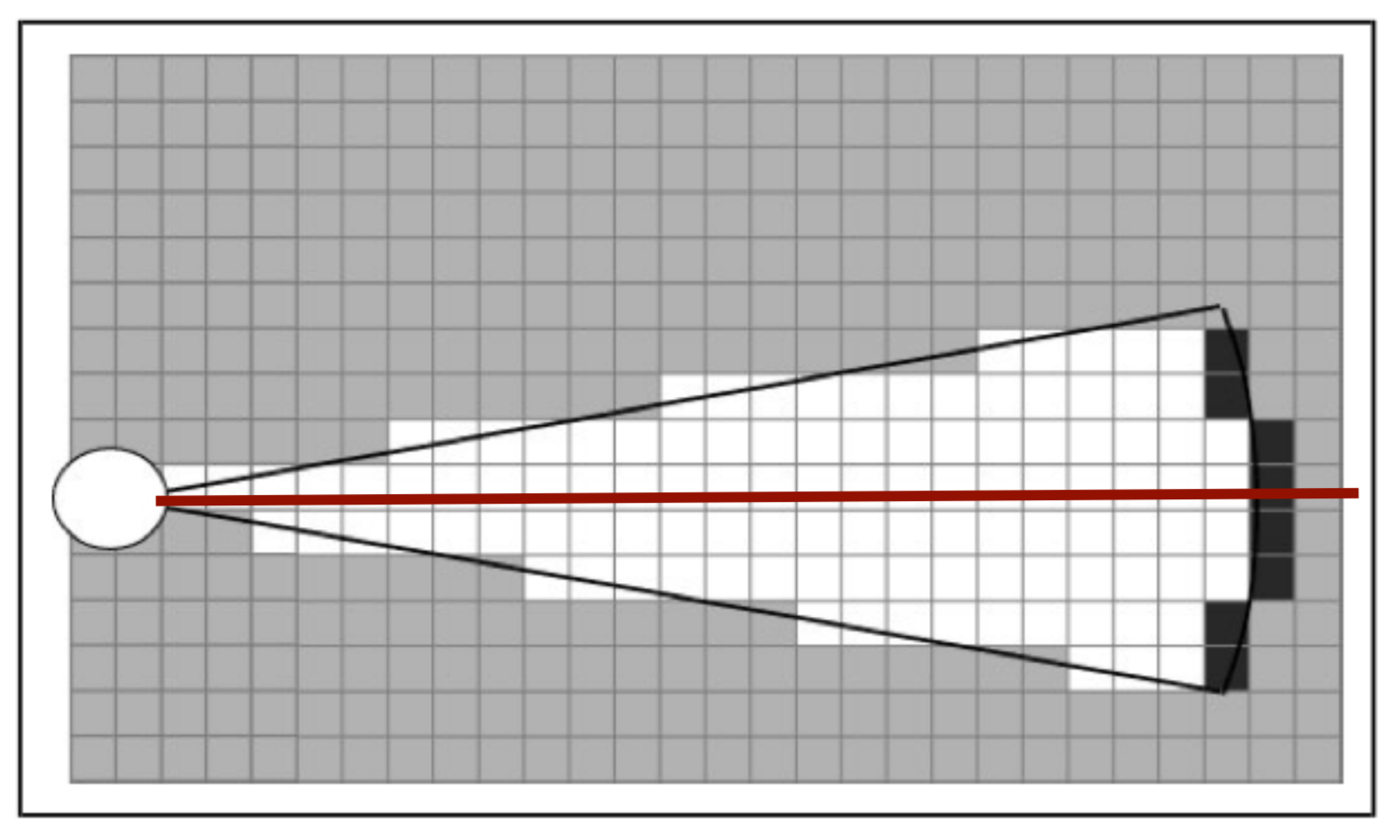

=====Monte Carlo localization | =====Monte Carlo localization===== | ||

[https://en.wikipedia.org/wiki/Monte_Carlo_localization Monte Carlo localization] is used in order to determine the position of the robot within the map. Due to the initial position of the robot being roughly known and the ability to track the (rough) position of the robot using odometry data, this method is a relatively easy way to get an exact position whilst being relatively robust. | [https://en.wikipedia.org/wiki/Monte_Carlo_localization Monte Carlo localization] is used in order to determine the position of the robot within the map. Due to the initial position of the robot being roughly known and the ability to track the (rough) position of the robot using odometry data, this method is a relatively easy way to get an exact position whilst being relatively robust. | ||

| Line 445: | Line 422: | ||

'''Generation of Monte Carlo positions''' | '''Generation of Monte Carlo positions''' | ||

[[File:MonteCarlo demonstration - cropped.gif|frame|Monte Carlo localization determining the initial position of the robot using a rough initial estimate. The green arrow represent the Monte Carlo positions tried.]] | |||

Possible new positions of the robot (Monte Carlo positions) consist of a position (x- and y- coordinates) and an orientation (angle). These positions are generated in a radius around the current robot position. The orientation of these positions are taken from a normal distribution around the current robot position's orientation. The exact way of calculating these values is related to the 'uncertainty' value of the current robot position. The higher this value, the greater the radius of the circle in which the positions are generated, the standard deviation of the normal distribution used and the amount of positions generated. This has two reasons: | Possible new positions of the robot (Monte Carlo positions) consist of a position (x- and y- coordinates) and an orientation (angle). These positions are generated in a radius around the current robot position. The orientation of these positions are taken from a normal distribution around the current robot position's orientation. The exact way of calculating these values is related to the 'uncertainty' value of the current robot position. The higher this value, the greater the radius of the circle in which the positions are generated, the standard deviation of the normal distribution used and the amount of positions generated. This has two reasons: | ||

| Line 453: | Line 433: | ||

This uncertainty value gets increases over time and is reset once a new position is determined using Monte Carlo. The new value is related to how much the robot has moved since the previous time the position has been determined using Monte Carlo. This uncertainty value is used in the generation of the Monte Carlo positions which are going to be checked. | This uncertainty value gets increases over time and is reset once a new position is determined using Monte Carlo. The new value is related to how much the robot has moved since the previous time the position has been determined using Monte Carlo. This uncertainty value is used in the generation of the Monte Carlo positions which are going to be checked. | ||

'''Selecting the best Monte Carlo position''' | '''Selecting the best Monte Carlo position''' | ||

| Line 459: | Line 438: | ||

For each position, the likelihood of the position being the actual robot position has to be determined. This is done by calculating an 'error' value for each position. This is done by going through the currently seen laserpoints (with a position w.r.t. the robot), placing these points within the global map using the Monte Carlo position and determining the distance to the closest wall or cabinet. These distances are summed for all laserpoints and together make up the error value. The position with the lowest error value (in effect, the position in which the seen laserpoints line up with the given .json-map the best) is selected as a new robot position candidate. A last check is done to verify the found position is within reason (it's not unreasonably far away from the current position) and if so, it's set as the new robot position and the new position uncertainty is set. | For each position, the likelihood of the position being the actual robot position has to be determined. This is done by calculating an 'error' value for each position. This is done by going through the currently seen laserpoints (with a position w.r.t. the robot), placing these points within the global map using the Monte Carlo position and determining the distance to the closest wall or cabinet. These distances are summed for all laserpoints and together make up the error value. The position with the lowest error value (in effect, the position in which the seen laserpoints line up with the given .json-map the best) is selected as a new robot position candidate. A last check is done to verify the found position is within reason (it's not unreasonably far away from the current position) and if so, it's set as the new robot position and the new position uncertainty is set. | ||

====Perception==== | |||

====Perception | |||

[[File:Laserdata jitter.gif|frame|Jitter in the laser-data due to odometry and laser data being out of sync]] | [[File:Laserdata jitter.gif|frame|Jitter in the laser-data due to odometry and laser data being out of sync]] | ||

The perception module is in essence quite straightforward: it reads out the laser and odometry sensor data from the robot sensors and writes it to the world model. Some problems where observed however in laserdata moving erratically w.r.t. the robot's position, as seen in the image to the right. | The perception module is in essence quite straightforward: it reads out the laser and odometry sensor data from the robot sensors and writes it to the world model. Some problems where observed however in laserdata moving erratically w.r.t. the robot's position, as seen in the image to the right. | ||

| Line 492: | Line 444: | ||

This problem has been traced back to the fact odometry and laser data is not retrieved from pico's API at the exact same time. Even when both lines of code retrieving the data where direct after eachother, differences in the timestamps of the returned data could be observed. Instead of writing complex code interpolating data to match timings (or other solution), the laser and odometry data is only updated if the received data has the exact same timestamps. Although quite some data updates get rejected due to mismatches in timestamps (experimentation showed rejection rates of up to 50%), this approach did not seem to have a negative impact on performance of the program. | This problem has been traced back to the fact odometry and laser data is not retrieved from pico's API at the exact same time. Even when both lines of code retrieving the data where direct after eachother, differences in the timestamps of the returned data could be observed. Instead of writing complex code interpolating data to match timings (or other solution), the laser and odometry data is only updated if the received data has the exact same timestamps. Although quite some data updates get rejected due to mismatches in timestamps (experimentation showed rejection rates of up to 50%), this approach did not seem to have a negative impact on performance of the program. | ||

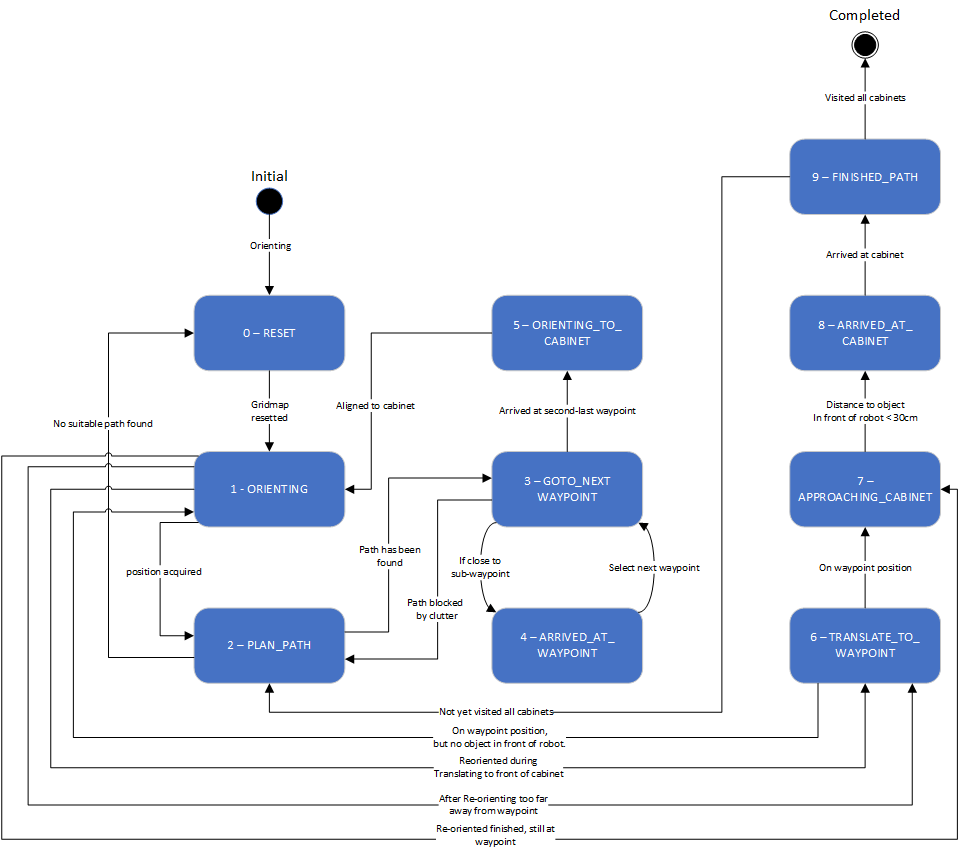

===Statemachine | ===Statemachine=== | ||

[[File:State_Machine_Hospital_challenge.png|frame|schematic representation of the state machine]] | [[File:State_Machine_Hospital_challenge.png|frame|schematic representation of the state machine]] | ||

The state machine designed for the hospital challenge is described in this paragraph. | The state machine designed for the hospital challenge is described in this paragraph. | ||

| Line 555: | Line 507: | ||

This state is reached when all cabinets have been visited. The robot shuts down when this state is reached. | This state is reached when all cabinets have been visited. The robot shuts down when this state is reached. | ||

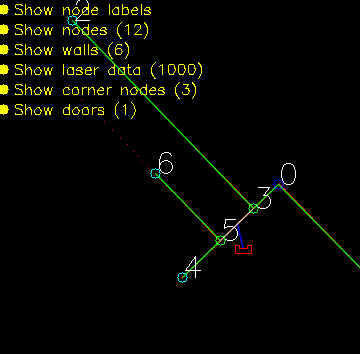

====User interface | ====User interface==== | ||

[[File:4SC020_2020_Group10_Interface.png|frame|Global map interface. With on the top left the menu to toggle the visualization of different parts of the map. On the top right are the runtimes shown of the different threads. On the bottom left is the toggle to turn on manual control]] | [[File:4SC020_2020_Group10_Interface.png|frame|Global map interface. With on the top left the menu to toggle the visualization of different parts of the map. On the top right are the runtimes shown of the different threads. On the bottom left is the toggle to turn on manual control]] | ||

| Line 566: | Line 518: | ||

On the bottom left it is possible to toggle manual control of the robot. Turning manual control on will stop the statemachine and control loop. With these turned of it is possible to control the robot using the numpad just like in the simulator. This save time if specific scenarios need to be looked at. A couple example scenarios could be: Approaching objects on a certain angle or see what the robot registers when it is looking at objects from a certain angle. This was what went wrong with the maze challenge so we wanted something that could test that. Another scenario was with the dynamic objects, with manual control we could easily test how our gridmap was updated when the robot moved along dynamic objects. | On the bottom left it is possible to toggle manual control of the robot. Turning manual control on will stop the statemachine and control loop. With these turned of it is possible to control the robot using the numpad just like in the simulator. This save time if specific scenarios need to be looked at. A couple example scenarios could be: Approaching objects on a certain angle or see what the robot registers when it is looking at objects from a certain angle. This was what went wrong with the maze challenge so we wanted something that could test that. Another scenario was with the dynamic objects, with manual control we could easily test how our gridmap was updated when the robot moved along dynamic objects. | ||

===Testing | ===Testing=== | ||

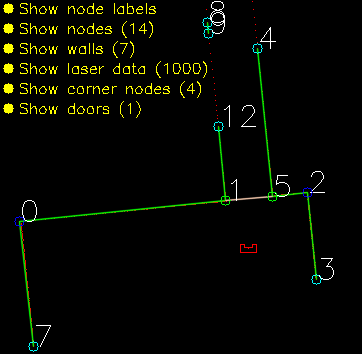

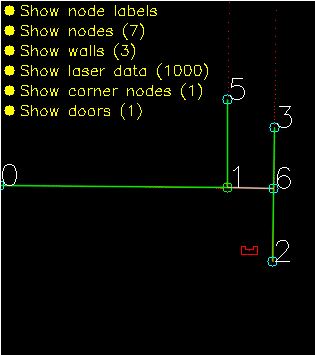

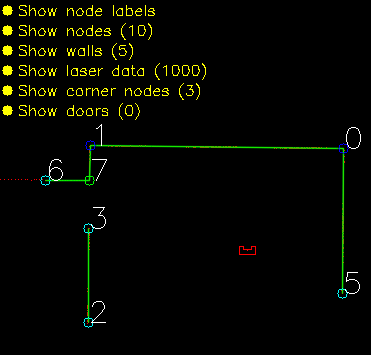

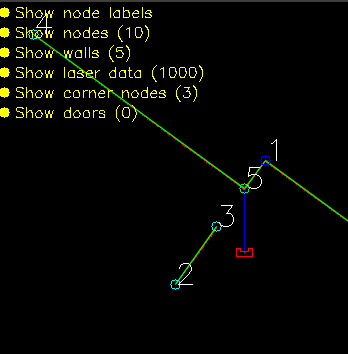

[[File:2020_group10_total_gif_maps.gif|frame|Different test maps used during testing. 1) The perfect .json map. 2) Uneven walls with gaps and misplaced wall segments. 3) Multiple extra pieces of clutter. 4) Skewed map by over 3 degrees with respect to the vertical.]] | [[File:2020_group10_total_gif_maps.gif|frame|Different test maps used during testing. 1) The perfect .json map. 2) Uneven walls with gaps and misplaced wall segments. 3) Multiple extra pieces of clutter. 4) Skewed map by over 3 degrees with respect to the vertical.]] | ||

| Line 608: | Line 560: | ||

''Path planning'' | ''Path planning'' | ||

[[File:2020_Group_10_Dijkstra.gif|frame|Dijkstra Algorithm]] | |||

A different path planning strategy was used in the early stages of the project. This strategy used manually set waypoints throughout the map, and [https://en.wikipedia.org/wiki/Dijkstra%27s_algorithm Dijkstra's algorithm] to navigate through these waypoints. The links between the waypoints can be made inactive if an obstruction on the path of the robot is observed. Though this strategy was promising, it was eventually discarded in favor of the gridmap and A* approach. This choice was made based upon robustness. A lot of manual waypoints must be placed in order to ensure that the robot can drive through the hospital with all kinds of different clutter configurations. This way of 'hard coding' the path also seemed like a rather messy solution. | A different path planning strategy was used in the early stages of the project. This strategy used manually set waypoints throughout the map, and [https://en.wikipedia.org/wiki/Dijkstra%27s_algorithm Dijkstra's algorithm] to navigate through these waypoints. The links between the waypoints can be made inactive if an obstruction on the path of the robot is observed. Though this strategy was promising, it was eventually discarded in favor of the gridmap and A* approach. This choice was made based upon robustness. A lot of manual waypoints must be placed in order to ensure that the robot can drive through the hospital with all kinds of different clutter configurations. This way of 'hard coding' the path also seemed like a rather messy solution. | ||

This animation shows how Dijkstra's algorithm was implemented. This particular case has an added obstruction between waypoint 8 and 9. The robot wants to go through this closed door, but is stopped by the potential field. After some time, the robot realizes that this link is invalid, and calculates a new path around the obstacle. | This animation shows how Dijkstra's algorithm was implemented. This particular case has an added obstruction between waypoint 8 and 9. The robot wants to go through this closed door, but is stopped by the potential field. After some time, the robot realizes that this link is invalid, and calculates a new path around the obstacle. | ||

| Line 617: | Line 569: | ||

''Dynamic clutter detection'' | ''Dynamic clutter detection'' | ||

A seperate dynamic clutter detection implementation was written. This implementation first scans the LRF data for any objects which do not coincide with known wall positions. All unknown found data is then labeled as clutter. The clutter is then grouped. Clutter objects which lay within 15 centimeters of each other are combined into one bigger clutter object. These clutter objects are then checked with the previous iteration of the clutter detection. If the location of the clutter object with the respect to the global map does not change, the clutter object is labeled as static clutter. If the clutter group has moved a bit however, it is assumed that this is dynamic clutter. This strategy could allow for better dynamic clutter avoidance, by means of estimation of the dynamic objects for example. | |||

This implementation was not realized eventually however. The noisy sensor data proved to be problematic with the recognition of dynamic objects, and the fine-tuning of the parameters was more troublesome than expected. | |||

====Evaluation of design process==== | ====Evaluation of design process==== | ||

| Line 631: | Line 585: | ||

====Evaluation of challenge==== | ====Evaluation of challenge==== | ||

[[File:Final Challenge fail edit.gif|frame|Before and after re-orientation, incl. a visualization on how the error values are calculated in the different situations]] | [[File:Final Challenge fail edit.gif|frame|Before and after re-orientation, incl. a visualization on how the error values are calculated in the different situations. The errors are calculated as the sum of all distances between the laserpoints (red) and the walls (green) and cabinets (orange) as defined by the given input .JSON, blue arrows give an impression of some of these distances.]] | ||

In [https://www.youtube.com/watch?v=-tlRSVm-kIM this video], a run of the final program of the final map can be seen. Since no video of the actual (live) challenge is available, this run has been recreated at a later time. The behavior is exactly the same however. One thing has been changed in the visualization w.r.t. the program ran during the challenge. Instead of only showing the grid with the actual clutter, the ranges which are placed around this clutter and used by the path planning algorithm are shown aswell. This way, it's easier seen when/why certain behavior happens. The enabling of this visualization was not done during the live challenge, due to the extra resources this requires. The visual hiccups which seem to appear in the video (drop in framerate) where not visible during actual recording and are most probably an artifact of the recording software used. | In [https://www.youtube.com/watch?v=-tlRSVm-kIM this video], a run of the final program of the final map can be seen. Since no video of the actual (live) challenge is available, this run has been recreated at a later time. The behavior is exactly the same however. One thing has been changed in the visualization w.r.t. the program ran during the challenge. Instead of only showing the grid with the actual clutter, the ranges which are placed around this clutter and used by the path planning algorithm are shown aswell. This way, it's easier seen when/why certain behavior happens. The enabling of this visualization was not done during the live challenge, due to the extra resources this requires. The visual hiccups which seem to appear in the video (drop in framerate) where not visible during actual recording and are most probably an artifact of the recording software used. | ||

| Line 641: | Line 595: | ||

Arrival at the cabinet is determined by it's global position w.r.t. the waypoint in front of the cabinet. Due to position errors which can occur during travel, a 're-orienting' state has been added before actually approaching the cabinet it should be in front of. In this state, the localization algorithm is ran three times and the regular limits w.r.t. maximum allowable 'jump' in position are removed. In this room however, this results in problems. In the shown video, it's visible what the situation is when arriving at the cabinet, and the new position which the robot thinks is the correct one. A human can clearly see the initial position is off by a few centimeters, but more or less correct. The new position however, is completely tilted and wrong. The algorithm disagrees however. Also shown in this video, is the way the error of the different positions is calculated. This error is the sum of all distances between a subset of the laserpoints and it's closest wall or cabinet. This indicated by the blue arrows. Now, it's easily seen why the algorithm chooses this position: the sum off all distances is lower, even though a lot of individual distances are actually increased. | Arrival at the cabinet is determined by it's global position w.r.t. the waypoint in front of the cabinet. Due to position errors which can occur during travel, a 're-orienting' state has been added before actually approaching the cabinet it should be in front of. In this state, the localization algorithm is ran three times and the regular limits w.r.t. maximum allowable 'jump' in position are removed. In this room however, this results in problems. In the shown video, it's visible what the situation is when arriving at the cabinet, and the new position which the robot thinks is the correct one. A human can clearly see the initial position is off by a few centimeters, but more or less correct. The new position however, is completely tilted and wrong. The algorithm disagrees however. Also shown in this video, is the way the error of the different positions is calculated. This error is the sum of all distances between a subset of the laserpoints and it's closest wall or cabinet. This indicated by the blue arrows. Now, it's easily seen why the algorithm chooses this position: the sum off all distances is lower, even though a lot of individual distances are actually increased. | ||

In [https://www.youtube.com/watch?v=kbSCrFAvAJw this video], it's clearly seen what the impact of the added re-orienting state is and why it has been added. In the video, a run is shown without the re-orienting state when arriving at cabinet. It shows nicely how cabinet number six is reached successfully due to the omitting of this state, but the accumulative error position being to large at the next cabinet and resulting in missing the cabinet. | |||

| Line 649: | Line 605: | ||

# Instead of looking at all geometry in the map, only check distances of the laserpoints w.r.t. geometry the robot is expected to see from it's current (approximate) location. This not only removes the option of using the distance between a laserpoint and the back-side of a wall, but (if implemented efficiently) could also reduce computational costs by reducing the amount of maximum distance calculations required. | # Instead of looking at all geometry in the map, only check distances of the laserpoints w.r.t. geometry the robot is expected to see from it's current (approximate) location. This not only removes the option of using the distance between a laserpoint and the back-side of a wall, but (if implemented efficiently) could also reduce computational costs by reducing the amount of maximum distance calculations required. | ||

# A preference for positions in which most points have a negligible distance to a wall or cabinet (e.g. 5 cm) instead of only looking at the average distance of all laserpoints w.r.t. a wall or cabinet. | # A preference for positions in which most points have a negligible distance to a wall or cabinet (e.g. 5 cm) instead of only looking at the average distance of all laserpoints w.r.t. a wall or cabinet. | ||

# Further tuning of the 'acceptance' limits. Currently, the robot determines how large it's jump in position can be by using time since last successful localization and a certain multiplier. Changing this multiplier and/or capping it at different limits could result in more (correct) positions being accepted during movement, reducing or even removing the 'stacking' position error which in turn could remove the need for a re-orienting state. | |||

====Code snippets==== | ====Code snippets==== | ||

| Line 654: | Line 611: | ||

Excerpt showing multithreading of the Monte Carlo localization using a easily configured threadcount (''MONTE_CARLO_THREADCOUNT''): [https://gitlab.tue.nl/MRC2020/group10/snippets/634 Multithreading of Monte Carlo localization] | Excerpt showing multithreading of the Monte Carlo localization using a easily configured threadcount (''MONTE_CARLO_THREADCOUNT''): [https://gitlab.tue.nl/MRC2020/group10/snippets/634 Multithreading of Monte Carlo localization] | ||

Smoothening of path generated by A*: [https://gitlab.tue.nl/MRC2020/group10/snippets/653 Smoothening of path generated by A*] | |||

Detection of path obstruction: [https://gitlab.tue.nl/MRC2020/group10/snippets/654 Detection of path obstruction] | |||

Indication on how complicated it is to calculate the distance between a point and a wall: [https://gitlab.tue.nl/MRC2020/group10/snippets/636 Determining distance to wall] | Indication on how complicated it is to calculate the distance between a point and a wall: [https://gitlab.tue.nl/MRC2020/group10/snippets/636 Determining distance to wall] | ||

Latest revision as of 20:46, 24 June 2020

Team members:

Arend-Jan van Noorden (1378147)

Sjors Boutkan (0938234)

Jeroen van Meurs (0946114)

Koen van Gorkom (0954964)

Friso Buurman (0906665)

Escape Room

Design Document

The design document of group 10 can be found here.

Strategy

Solving the escape room is quite simple: find the corridor and drive through it. This document givesan overview of the system being build to solve this task.Finding the corridor is done by detecting the two outside corners marking the start of the corridor.Since the room is square, no other outside corners will be present in the environment. If these cornerscan’t be found from PICO’s initial location, it’ll follow the walls of the rooms clockwise, until thecorridor (outside corners) are encountered. The corresponding state diagram is shown in Figure1.1. The movement and navigation will be done using set-points and a potential-field algorithmpreventing PICO from hitting any obstacles or walls.

Requirements and Specifications

| Requirements | Specifications |

|---|---|

| PICO should not bump into walls | PICO's measurements are 0.41 m in width by 0.35 m in length |

| The free radius around PICO should be at least 0.21 m | |

| PICO cannot exceed speed limits | Maximum translational velocity is 0.5 m/s |

| Maximum rotational velocity is 1.2 rad/s | |

| Since PICO will be autonomous, it should be aware of its surroundings. | PICO should have an accurate map of its surroundings. |

| LRF range is between 0.01 m and 10 m. | |

| LRF FOV is from -2 to 2 rad, measured over 1000 points of equal increments. | |

| PICO should terminate when the final objective is reached. | PICO should come to a stop after it has crossed the finish line. |

| PICO should reach the final objective as soon as possible. | PICO should cross the finish line within 5 minutes. |

| PICO should not end up in a live lock state. | PICO should not keep repeating one action for over 30 seconds. |

| PICO should not end up in a dead lock state. | Careful coding has to be done to have no dead lock states. |

Components and Functions

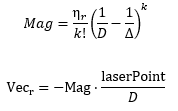

Basic architecture of robot code:

All functions need to be run based on a Finite State Machine. As with sensors, all data has noise so these data streams need to be filtered.

The tasks of each component can be divided in several functions each with a specific subroutine.

World model

The world model stores the information gathered by the components and distributes this information to the other components. The information consists of:

- A map of the surrounding area.

- A list of found features.

- The current and previous state.

- The next position to travel to.

The world model contains no functions because the world model is only used to store the world data, so there are no active subroutines present.

Plan

The plan component uses data from the world model to determine the next position PICO needs to go to. It thereby also check if the objective is met.

- checkObjective()

The checkObjective function is the supervisor of the complete system. It controls/executes the states as defined in figure \ref{fig:state-machine} and executes the strategy as described in \ref{sec:strategy}. It will also check for failure of the system by identifying incorrect states, e.g. not moving for a set amount of time, following a wall for too long or having made a complete loop of the room without finding the corridor.

- pathPlanner()

The plan component contains the path-planner function, this functions is responsible to define the setpoints for PICO. These setpoints will be determined based on the state machine and the world model.

Control

The control component makes sure PICO moves along the planned path.

- moveToPoint()

The move to point function will be used to move PICO to the set point generated by the path planner. To prevent that PICO will hit the wall, the world model will be used to determine the trajectory from the current position of PICO to the set point.

Monitoring

The monitor component will try to identify features from the world model data.

- featureDetection()

To determine the corners and walls of the maze the feature detection function will be used based on the world model data.

Perception

The perception component will gather the sensor data, clean it and update the world model with it.

- getLaserData()

To acquire the data points of the laser sensor, the get laser data function will be used. The gathered data will be preprocessed to filter out the disturbances. The processed data will be written to the world model.

- getOdomData()

The odometry date will be read out with the getOdomData function. This date will be written to the world model.

- SLAM()

The gathered data from the laser and odometry sensors will be used to generate a map of the environment using Simultaneous Localization and Mapping (SLAM)

- UpdateVisuals()

A window will be opened that shows all the detected features and the path that PICO is going. This function will update that window

Interfaces

All components interface with the world model, getting data required for their functions and setting data created to be used by other components. The world mode is the central data store. This can also be seen in figure Figure Boven. Differences between the the proposed system and the system originally shown in [1], is that there are no interfaces between the 'resources' and 'capabilities' and the world model. The world model will not be able to get it's own data or control the motors of the system. The interface to the sensors will be through the perception component and the controlling of capabilities will be done by the control component.

Challenge result

Sadly we did not manage to get out of the escape room, below we will discuss why and how we solved it.

Main

After the Maze challenge we analyzed which part of the main program caused the failure which lead to the the wall collision. The problem occurred in the corner detection function, which resulted in the interpretation of a inside corner as an outside corner. The planning algorithm used this corner as target position to search a second outside corner, and let the robot drive to this corner. With the absence of a potential field algorithm, the robot was able to drive through the wall.

The main program for the maze challenge is using the state machine as shown in the Components and functions paragraph, in the following paragraphs is the robot behavior at each state described.

- ORIENTING

In the first state the robot will turn to check if it can see a door or a outside corner. A door is defined if there are two outside corners within 0,5 m and 1,5 m from each other, or a outside corner and a wall within the same range as with the outside corners. If the robot is not able to find a door from its initial position, then there will be searched for a single outside corner. When there is no door or outside corner found then the robot will search for the closest wall to follow.

- SEARCH_FORWARD

If the robot cannot detect any features in the orienting state, then the robot will drive straight forward in the same direction as the initial orientation.

- ALIGN_TO_WALL

When multiple walls are detected the closest wall is determined, the angle between the wall and the robot will be computed to determine the rotation direction. When the robot is aligned within +/- 3 degrees the state will be set to FOLLOW_WALL.

- FOLLOW_WALL

The robot will follow the wall at 20 cm distance till a door or outside corner is found, if this is the case the state will be set to GOTO_DOOR or SEARCH_DOOR. Otherwise the robot will follow the wall if a second wall in front of the is found, when this second wall is closer by then the first wall the ALIGN_TO_WALL state will be called again.

- SEARCH_DOOR

If one outside corner is found a way point will be set 25 cm from the x and y direction with respect of the corner, with this way point there can be guaranteed that the robot will not collide with the wall. When the robot is at this position and the second outside is still not found it will to ORIENTING again, otherwise the robot will be set GOTO_DOOR.

- GOTO_DOOR

When the door is found the planning script will place a way point 25 cm in front of the midpoint of the door, if the robot is at this location the robot will be set to the DRIVE_THROUGH_DOOR state.

- DRIVE_THROUGH_DOOR

In this state the robot will drive to the way point 25 cm in the middle the door, when the robot loses the door the DRIVE_THROUGH_CORRIDOR state is set.

- DRIVE_THROUGH_CORRIDOR

To complete the maze a way point is set in the middle of the furthest corridor walls nodes. To determine the corridor walls there is checked if the walls are parallel to each other, if the perpendicular distance is between the 0,5 m and 1,5 m and if at least two wall nodes can be projected on the other wall to check if the walls are not after each other. When the robot is at this way point the robot has exited the maze and the state will be set to COMPLETED. Below there is shown that the robot is executing SEARCH_DOOR, GOTO_DOOR and DRIVE_THROUGH_CORRIDOR.

Backup

The backup was a simple wall following algorithm. Initially, Pico will drive forwards until it encounters an object in front. Next, Pico will turn 90 degrees to the right. Then, as long as Pico's left side is obstructed, the wall will be followed while making adjustments to align with the wall and not strafe too close to the wall or too far away from the wall. Again, when the front is obstructed as well, Pico will turn 90 degrees to the right and the next wall will be followed. When a gap at the left side is detected, Pico will move forward a little bit, turn left and drive forward into the corridor. If the senses a object in front, Pico will know the opening was only a small gap and not the corridor, in this case Pico will rotate right and continue following the wall.

Unfortunately, the backup attempt failed as well. The problem Pico encountered during the challenge was an edge case which unfortunately did not result in a correct execution of the algorithm. After finding a wall at the front, Pico turned 90 degrees to the right. After the turn all sides were 'free', meaning that Pico thought he was next to a corridor. After turning left it noticed that its front was free and its left side was not. This triggered the state machine to believe it was in the corridor. When the state machine enters this state, the only possible state is the finished state. So after driving through the wall, all sides of Pico were 'free' again thus entering the finished state. Pico drove through the when it thought it was in the corridor. This is because of the specific laser beams that Pico uses for the collision detection. Pico checks three sides, using ten laser beams per side. The front side is the actual front of Pico. The right and left side however, are about 45 degrees from the front instead of 90. Since the actual side of Pico was not monitored, Pico thought he was still some distance away from the wall.

After the challenge a little time was spend to improve the behavior of the wall following algorithm. To this end, the hard 90 degree turns have been replaced by an alignment check and the collision detection was changed that the actual right and left side of Pico are measured. When an obstructing in front is detected, Pico rotates until the obstruction is not visible anymore and the left side is aligned to a wall. This is checked by comparing the ranges of a subset of the of the LRF to the left of Pico. When the ranges are about equal, Pico is aligned. With this improvement, Pico was able to reach the actual finish of the Maze Challenge.

Hospital challenge

The hospital challenge is based upon a real-life scenario where a PICO robot can assist with tasks in the hospital. The goal of the robot is to visit different cabinets to gather or deliver medicenes for example. This process should be executed as smooth, fast and safe as possible. The main challenges which arise are the planning and the path of the robot, which is unknown before the start. The robot should be able to determine its path’s on the run. The robot should also be able to robustly navigate through the hospital. Imperfect walls, static and dynamic clutter, such as garbage bins or people, and wheelspin are all present in practice, while these are not necessary present in a perfect simulated environment.

Software-structure and interaction

In order to successfully complete the given task, the structure of the code which runs the robot must be well designed, structured and intuitive. The code is divided into multiple components, which all serve a different task.

World model is used to store the information of the map and the sensors in two maps, a global map and a live map. The information is read from and written to this world model.

Planning is used in order to plan an optimal path through the map, which can change when clutter or closed doors are encountered.

Control focuses on the actuation of the robot itself.

Monitoring is a big component which takes all the perception data, and uses it to determine the position of the robot, the live map, and clutter detection based on a gridmap.

Perception is the component which translates the raw sensor data to useful information.

Statemachine is the brain component of the robot, and decides which steps need to be undertaken in order for the robot to complete its task.

User interface is used in order to visualize the maps, the robot and its behavior, and can give tremendous insights during testing and execution of the tasks at hand.

The program written has been designed in a way such that the semi-independent components all work together. Some of these even work in parallel to improve qualitative performance. In the following sections, these different components and their interactions will be dissected and explained. Below, a UML class diagram can be seen showing the different components and their most important member attributes and methods.

Clicking the thumbnail at the right will show the full sized version of the UML Class Diagram.

The different components and functions run in an important specified order. The sequence of execution is shown in the sequence diagram below.

The program is started by the argument ‘pico_do_your_thing’. Using this command, the amount of cabinets and the order of the cabinets is specified to the robot. The main loop of the robot keeps on looping until there are no more destinations to be reached. This loop excecutes the MONITORING, PLANNING SET TARGET, and STATEMACHINE code. Three processes run in parralel with this code; the VISIUALISATION, the main planning loop, and the MONTE CARLO LOCALIZATION.

The visualization is run independently from the main code. The visualization is not essential for the main code of the robot, but does give tremendous insights in the behavior of the robot. In order to reduce the computational power required to run the program, the refresh rate of the visualization is reduced compared to the main loop

The main planning loop does not contain the code which determines the target and the path of the robot, but does contain the nessecary control components of the robot. The potential field algorithm, as well as the drive control functions are present in the main planning loop. These functions should be placed in a different loop, but during the testing of the code, this structure ‘snuck in’ to our code, and proved not to be problematic.

The Monte Carlo Localization is also run seperately from the main loop. The main reason for this choice is the very long run time of the localization compared to all other threads. While the goal is to run the localization as soon as possible (ASAP), the realistic runtime approaches 6 seconds per iteration, even when the localization is run on 4 threads.

World model

The world model is used as a database. This database is separated into two maps: A global map and a grid map. All gathered data is stored in the world model and then can be used by other processes. Besides the data structures, the world model also has a couple of functions to update the model and extract data from it. The main function is "initGlobalMap", this is the initialization of the global map. Then the function "addWallToGridMap" is used to update the gridmap with all the statics walls. These functions will be described further below. Next to those main functions there are some smaller functions to update or extract odometry data,laser data, robot position and more.

Data structures

The data structures used by the world model are briefly shown in the UML diagram above. They will be further explained here:

| Variable | Explanation |

|---|---|

| potentialField | A vector containing all the potential vectors, used in controlling the robot |

| laserPoints | Processed laserData. Vector containing all the laserpoints and their local coordinates (w.r.t. the robot) |

| laserData | Data retrieved from the LFR sensor |

| odomDataPair | Contains two sets of odometry data to predict the new position. |

| target | Current robot target |

| prev_target | Previous target in the path |

| gridMap | Defines the the gridmap as a matrix |

| gridMapRanges | Same as the gridMap, but used for placing the distance ranges from objects |

| globalMap | Map created from the provided JSON file. Contains variuos structures that are shown below |

| globalRobotPosition | Position of the robot on the global map |

| destinations | Ordered list of all the cabinets which have yet to be visited |

| currentPath | Ordered list of points on the global map drawing the current path of the robot |

| FailCount | Counter for failed attempts of path planning. |

| initialMonteCarloSearch | Boolean to check if it is the first time orienting |

| monteCarloPositions | Vector of possible robot positions, used by Monte Carlo localization. |

The data structures that are shown below are an addition of the UML diagram. The main data structure is the Map. This Map contains all the information from the provided JSON file and is used to create both maps. The basic building block of the map is the node. The more complex objects are made by pointing to the nodes.

Node: A Node consits of an id and various coordinates. The coords_img is the place of the node on an image. The coords_cart is the coordinates with respect to the robot. The coords_glob is the place of the node on the global map.

Wall: A wall consist of an id, pointers to the two nodes that make up the wall, a typ that defines if it is a horizontal or vertical wall and the lineEquation

Waypoint: A waypoint consits of an id, it coordinates on the global map and the angle at which the robot is facing the front of the cabinet Map: A map consits of all the nodes, wall that are made from those nodes and waypoints

Velocity: The velocity contains values for velocity in x, y and theta direction.

Target: A target contains and id, its position in the global map, it angle compared to the front of the robot and force translate

RobotPosition: The RobotPosition contains it position inx and y direction, its angle compared to the x axis and the amount of uncertainty this position has.

PotentialVector: The PotentialVector contains the gradient and a type to signify if it is a attractive or repulsive vector.

Functions

initGlobalMap: This function loads a modified json and turns into into a global map as seen in picture **. Th json file consists of nodes, walls, cabinets and waypoints in front of the cabinets. Every item in this list will be visualized, this is done to make debugging easier. This global map is used as a reference base of the grid map and used to make a clearer visualization.

addWallToGridMap: This function is used to create the basic grid map. It will use the walls that are supplied by the global map to fill in the grid map. This gives a solid base for a initial path planning.

Planning

The planning is made up of two major components: Target determination and path planning. First the path planning and path smoothing algorithms are discussed. Afterwards the target determination is elaborated on.

Path Planning

For the path planning the A* algorithm is used, (A* algorithm; Amit Patel, Standford University ). The A* algorithm is an informed search algorithm, meaning that it calculates the path based on weighted graphs or gridmaps. A* determines which of its paths to extend based on the cost of the path and an estimate of the cost required to extend the path all the way to the goal. In mathematical terms, A* selects the path that minimizes:

- [math]\displaystyle{ f(x) }[/math] = [math]\displaystyle{ g }[/math]([math]\displaystyle{ x }[/math]) + [math]\displaystyle{ h }[/math]([math]\displaystyle{ x }[/math])

Where [math]\displaystyle{ x }[/math] is the next node on the path, [math]\displaystyle{ g }[/math]([math]\displaystyle{ x }[/math]) is the cost of the path from the start node to [math]\displaystyle{ x }[/math], and [math]\displaystyle{ h }[/math]([math]\displaystyle{ x }[/math]) is a heuristic function that estimates the cost of the cheapest path from [math]\displaystyle{ x }[/math] to the goal.

The heuristic function is the Euclidian distance between [math]\displaystyle{ x }[/math] and the goal, this means that the heuristic function is admissible. In other words: A* is guaranteed to return the least-cost path from start to goal.

The cost function [math]\displaystyle{ g }[/math]([math]\displaystyle{ x }[/math]) is based on the grid map generated from laser data. If a laser can is reflected at a grid point, the grid point is eventually flagged as blocked. A blocked grid point has a high integer value and a unblocked grid point has a value of zero if it is not close to a blocked grid point. The closer a unblocked grid point is to a blocked grid point, the higher the integer value of the grid point, thus also the higher the cost. To end up with the shortest path, the grid point values are raised by one and multiplied by one if [math]\displaystyle{ x }[/math] is a direct neighbor of the current node and multiplied by √2.

A* has some conditions which determine whether it is possible for a path to be planned. The simplest two are that the starting node and the goal node have to be reachable, meaning that their grid points are not flagged as blocked. The other condition is that there is a free path between the start node and the goal node. This free path in this implementation is a dynamic factor. To ensure that Pico does not collide with static objects, the grid map contains a certain range around blocked grid point where the values of the grid points are higher than zero. For the first iteration of the definition of a free path is that there is a unbroken line of grid points (diagonal counts as well) with a value of zero. This implies that Pico stays well away from all objects. If Pico cannot find a free path after the first iteration, the threshold of accepted grid point values is raised to incorporate the first row of grid points which is effected by a blocked grid point. The threshold keeps decreasing if no path is found until all grid points, except for the blocked grid points, are used by A*. If a path is found, the threshold is reset to its initial value.

The path which is generated by the A* algorithm is not the ideal path. This is due to the fact that the path is generated from the mid points of the grid, but Pico is able to drive anywhere on a free grid point. To increase smoothness and speed, a shorter and more direct path is created. Basically, from the first node of the path it is checked until what node a straight line can be drawn without intersecting with obstacles. Once the line cannot be extended further, the line is drawn between the starting node and the current node and the cycle repeats from the current node. This is repeated until the final node is reached. Since the state machine is optimized to have an waypoint at least each five centimes, the lines which are to long are split in multiple lines to ensure the right waypoint distance.

Set Target

The determination of the next target is done using the statemachine, therefor the statemachine is used here and in the actual statemachine section.

RESET

The target is set to the current robot position, in effect stopping it from moving. Furthermore, the gridmap in the world model is reset to its original state (only the walls and cabinets from the .json-map are present).

ORIENTING

The target is set to the current robot position, stopping all movement. To allow for (re-)orienting in the Monte Carlo algorithm the uncertainty of the global robot position is increased to increase the search area and the limits on which positions to accept are lifted.

PLAN_PATH

The target is set to the current robot position, stopping all movement. A path is generated from the A* search algorithm from the current robot position to the next destination. The raw path from the A* algorithm is then adjusted where possible to allow for a smoother and shorter path. Finally the new path (a series of target positions) is saved in the world model.

GOTO_NEXT_WAYPOINT

The target is set to the next waypoint on the current path.

ARRIVED_AT_WAYPOINT

Once Pico has arrived at its target, the waypoint is removed from the current path in the world model.

FINISHED_PATH

If the previous state was not FINISHED_PATH, it means that a destination was reached. This destination is removed from the list of destinations. Furthermore, the target is set to the current robot position to stop Pico from moving.

ORIENTING_TO_CABINET

Translating movement is set to zero and the robot rotates to face the direction the current target prescribes. Rotating movement is controlled by the difference in the robot angle and the prescribed angle.

TRANSLATE_TO_WAYPOINT

The robot moves to the waypoint, without rotating and with movement in all directions allowed. This is different from normal movement, in which the robot is not allowed to move backwards.

APPROACHING_CABINET

The robot moves forward slowely.

ARRIVED_AT_CABINET

According to the statemachine the cabinet has been reached. The robot is stopped and IO.speak is used to communicate with the outside world which cabinet has been reached.

Control

The control of Pico is made up of two components: An artificial potential field and a function which determines the different velocities that are fed into Pico's base.

Artificial potential field

The path planning already accounts for a large deal of the obstacle avoidance, but it is not perfect on its own. Furthermore, the path planning is limited to planning a path around static objects. Any dynamic object is not picked accounted for in the gridmap used for the path planning. To solve these issues it is opted to use an artificial potential field, APF in short. The philosophy of the artificial potential field approach can be described as follows:

The manipulator moves in a field of forces. The position to be reached is an attractive pole for the end effector and obstacles are repulsive surfaces for the manipulator parts.[2]

In this case, the position to be reached is the next path point. Since the APF is only used as a last resort for obstacle avoidance, we do not want the full effect of it at all times. To this end, the effect of the APF will only be exploited when Pico sees an obstacle within a certain range. At this particular range, the repulsive potential vector starts to increase from zero.

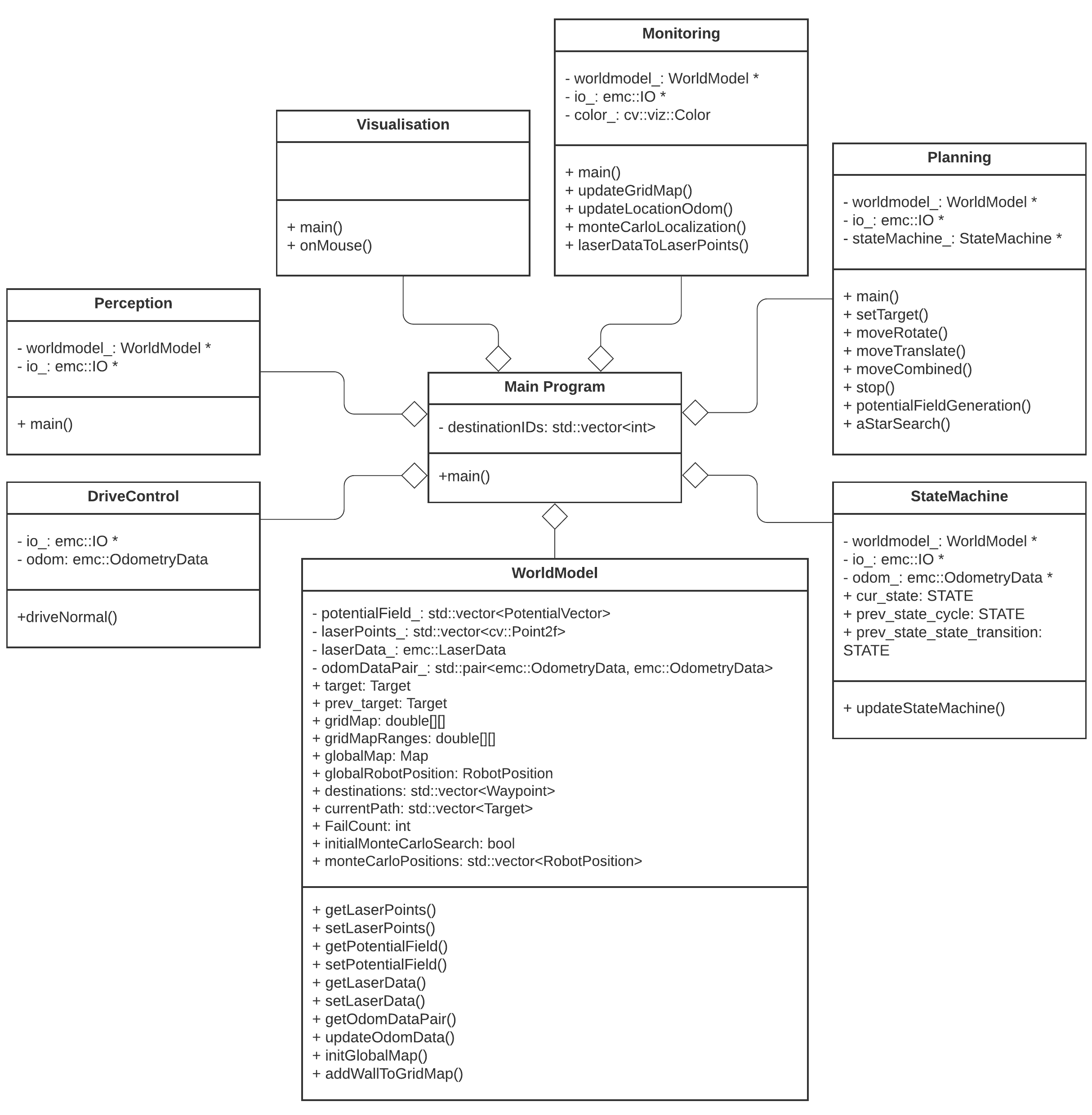

The repulsive potential vector is created from the live laser data Pico gathers at all times. If a laser beam has a range between the minimal range of the LRF and a maximum free distance around Pico, a vector is calculated in the following manner:

Here [math]\displaystyle{ \eta }[/math][math]\displaystyle{ r }[/math] is a constant to scale the magnitude. [math]\displaystyle{ k }[/math] is a value to change the slope of the magnitude, higher values for [math]\displaystyle{ k }[/math] give a steeper slope. [math]\displaystyle{ D }[/math] is the distance between the robot and the laser point, or effectively the range of the laser beam. [math]\displaystyle{ \Delta }[/math] is the safe distance around Pico, for values of [math]\displaystyle{ D }[/math] higher than [math]\displaystyle{ \Delta }[/math] nothing will happen. The other way around the magnitude will increase. Finally laserPoint is the point in the local robot coordinate frame where the laser beam is reflected by an object.

Every laser beam results in an Vecr. To final repulsive vector used in the actual control of the robot is the summation of all Vecr. In this way vectors from adjacent sides are able to cancel each other.

For the final implementation of the APF only the repulsive vector is used. The vector to the next path point is taken as the attractive vector. Only when there is a repulsive vector with a magnitude greater than zero, so when there is an object within a distance [math]\displaystyle{ \Delta }[/math] from Pico, the repulsive vector is added to the attractive vector to act as a collision avoidance measure. To ensure that Pico will never run into any object, the APF is updated at an increases rate of 100 Hz. It is chosen to do this at a higher rate, since lower rates like 20 Hz would induce a oscillation if Pico was blocked by objects on two different sides, eventually leading to a collision.

A downside of the APF approach is the existence of local minima in which one could get stuck. For this implementation the local minimum does not really cause any problems, since the solutions are accounted for by other parts of the code. For instance, when a new object is detected on the current path of Pico, a new path is calculated. If Pico does find itself in a narrow situation, the path planning is optimized to find the best path possible within the boundaries provided by Pico.

Velocity determination

The velocity and direction of Pico depends on the location of the next target with respect to the location and orientation of Pico and on the repulsive force of the APF. The location of the next target and the repulsive vector are given as vectors in the local Cartesian coordinate frame of the robot. If there is a repulsive force generated by the APF, the target vector and the repulsive vector are summed. This resulting vector is the basis of the lateral and longitudinal velocity of Pico. The angular velocity is solely determined by the angle of the target vector.

The velocity which is send to the Pico base is determined by an based on a few conditions. In the "APPROACH_CABINET" state Pico is forced to translate, whilst in the "ORIENT_TO_CABINET" state Pico is forced to rotate. In the other states Pico will purely rotate if the angle [math]\displaystyle{ \theta }[/math] between the robot and the target is too large, purely translate in the longitudinal direction if [math]\displaystyle{ \theta }[/math] is small enough, and have a combined movement of rotating and translating in both directions if [math]\displaystyle{ \theta }[/math] is in between the two boundaries. If there is a repulsion force, the minimum angle is ignored for the combined movement.

Monitoring

The monitoring component has the job of tracking and updating the robot's current position and it's surroundings. This class consists of two main parts: mapping of the robot's actual surroundings (incl. static clutter) and determining the exact position of the robot within the given global map using laser data. Mapping the surroundings is done using 'Occupancy Grid Mapping'[3] and localization using laser data has been accomplished by implementing so called Monte Carlo localization.

Occupancy grid mapping (static clutter detection)

To be able to create correct and efficient paths through the hospital, the robot has to be in possession of a complete and actual map of it's surroundings. This is done using Occupancy Grid Mapping. In this technique, a gridmap is drawn over the (given) global map, with each grid-square containing a single value indicating whether or not that grid-square is occupied (traversable) or not.

Populating the gridmap

Populating the gridmap is done once at program startup and then updated every main-program cycle. During initialization and interpretation of the given .json-map, the each grid-square being crossed by a wall or cabinet is assigned a value of GRID_WALL_VALUE (100.0).

During the execution of the program, the grid-squares' values are updated using the currently seen laserpoints. For each laserpoint, the corresponding grid-square in which it's located is determined and this grid-square's value is increased by GRID_LASER_BLOCKED_VALUE (0.5). Even more, the grid-squares positioned in between the robot's current location and the laserpoint's position are determined as being empty and have their values subtracted by GRID_LASER_FREE_VALUE (0.5). This uses the fact the robot wouldn't have been able to see an object if the grid-squares in between the object and the robot are not empty. Determination of which grid-squares are in between the robot and the laserpoint is done using a Bresenham algorithm implementation from tech-algorithm.com.

The ability to 'open up' grid-squares after they have been determined as being occupied, either by the initializing sequence or object detection, is crucial in creating a robust system being able to handle the imperfect world. E.g. dynamic clutter, doors or walls being in a slightly different position w.r.t. the given .json-map, being able to fix the gridmap after populating it with incorrect data due to an error in localization, etc.

Interpreting the grid-map

Since the gridmap consists of a collection of grid-squares, all possessing a value, this value has to be translated to a portion of the map being occupied or free. This is done by checking if the grid-square's value is above GRID_LASER_FREE_VALUE (0.5), if so, the grid-square should be interpreted as being occupied. This is done e.g. in the visualizer and in the planning of the path of the robot.

Preparing the gridmap for path planning use

The path planning algorithm (A*) does not use the 'raw' gridmap as described above. Instead, it uses a processed copy (gridMapRanges) which adds a region of obstruction around the detected objects with values tapering off the further away from this object. It normalizes all grid-square values to the range [0, 255]. These values are used by A* in a way it'll prefer staying away from objects but still being able to do come close if absolutely necessary. The exact workings of A* and it's using of these grid-square values can be seen at the 'Planning & Control' section on this page.

For each grid-square which has been identified as occupied (grid-square value > GRID_LASER_FREE_VALUE), the grid-squares present in a circle of GRIDMAP_RESOLUTION * 6 (0.5 * 6) around the occupied grid-square are affected and have a value between 0 and 255 assigned to them. This is done using the following formula, in which [math]\displaystyle{ x }[/math] is the distance of the grid-squarew.r.t. the occupied grid-square diveded by GRIDMAP_RESOLUTION.

Monte Carlo localization

Monte Carlo localization is used in order to determine the position of the robot within the map. Due to the initial position of the robot being roughly known and the ability to track the (rough) position of the robot using odometry data, this method is a relatively easy way to get an exact position whilst being relatively robust.

In this approach, a group of different robot positions is generated and a estimation is made on how well the current observations (laser points) fit each possible position. The one most likely to be the correct position is then chosen. The different steps of this process are explained below.

Generation of Monte Carlo positions

Possible new positions of the robot (Monte Carlo positions) consist of a position (x- and y- coordinates) and an orientation (angle). These positions are generated in a radius around the current robot position. The orientation of these positions are taken from a normal distribution around the current robot position's orientation. The exact way of calculating these values is related to the 'uncertainty' value of the current robot position. The higher this value, the greater the radius of the circle in which the positions are generated, the standard deviation of the normal distribution used and the amount of positions generated. This has two reasons:

- If uncertainty is low, the amount of Monte Carlo positions generated can be minimized to reduce computational intensity and, in turn, runtime

- If uncertainty is low, the same amount of positions can be distributed along a smaller area and orientation range. This can improve accuracy of the found position

This uncertainty value gets increases over time and is reset once a new position is determined using Monte Carlo. The new value is related to how much the robot has moved since the previous time the position has been determined using Monte Carlo. This uncertainty value is used in the generation of the Monte Carlo positions which are going to be checked.

Selecting the best Monte Carlo position

For each position, the likelihood of the position being the actual robot position has to be determined. This is done by calculating an 'error' value for each position. This is done by going through the currently seen laserpoints (with a position w.r.t. the robot), placing these points within the global map using the Monte Carlo position and determining the distance to the closest wall or cabinet. These distances are summed for all laserpoints and together make up the error value. The position with the lowest error value (in effect, the position in which the seen laserpoints line up with the given .json-map the best) is selected as a new robot position candidate. A last check is done to verify the found position is within reason (it's not unreasonably far away from the current position) and if so, it's set as the new robot position and the new position uncertainty is set.

Perception

The perception module is in essence quite straightforward: it reads out the laser and odometry sensor data from the robot sensors and writes it to the world model. Some problems where observed however in laserdata moving erratically w.r.t. the robot's position, as seen in the image to the right.

This problem has been traced back to the fact odometry and laser data is not retrieved from pico's API at the exact same time. Even when both lines of code retrieving the data where direct after eachother, differences in the timestamps of the returned data could be observed. Instead of writing complex code interpolating data to match timings (or other solution), the laser and odometry data is only updated if the received data has the exact same timestamps. Although quite some data updates get rejected due to mismatches in timestamps (experimentation showed rejection rates of up to 50%), this approach did not seem to have a negative impact on performance of the program.

Statemachine

The state machine designed for the hospital challenge is described in this paragraph.

RESET

The first state is the RESET state, this state will be executed when the program is initially started. This state will also be executed when the path planning is not able to find a suitable path to the current target. The task of this state is to clear the grid map of all previous detected clutter, when the gridmap is resetted the ORIENTING state will be executed.

ORIENTING

The localization of the robot within the world model will be done in the orienting state based on the Monte Carlo algorithm. When the localization is successfully executed and the position uncertainty is low enough, then the state machine will continue based on these three statements: